Abstract

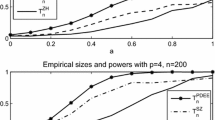

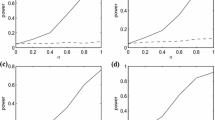

Comparison of two-sample heteroscedastic single-index models, where both the scale and location functions are modeled as single-index models, is studied in this paper. We propose a test for checking the equality of single-index parameters when dimensions of covariates of the two samples are equal. Further, we propose two test statistics based on Kolmogorov–Smirnov and Cramér–von Mises type functionals. These statistics evaluate the difference of the empirical residual processes to test the equality of mean functions of two single-index models. Asymptotic distributions of estimators and test statistics are derived. The Kolmogorov–Smirnov and Cramér–von Mises test statistics can detect local alternatives that converge to the null hypothesis at a parametric convergence rate. To calculate the critical values of Kolmogorov–Smirnov and Cramér–von Mises test statistics, a bootstrap procedure is proposed. Simulation studies and an empirical study demonstrate the performance of the proposed procedures.

References

Akritas, M. G., Van Keilegom, I. (2001). Non-parametric estimation of the residual distribution. Scandinavian Journal of Statistics, 28(3), 549–567.

Carroll, R. J., Fan, J., Gijbels, I., Wand, M. P. (1997). Generalized partially linear single-index models. Journal of the American Statistical Association, 92(438), 477–489.

Cui, X., Härdle, W. K., Zhu, L. (2011). The EFM approach for single-index models. The Annals of Statistics, 39(3), 1658–1688.

Dette, H., Neumeyer, N., Van Keilegom, I. (2007). A new test for the parametric form of the variance function in non-parametric regression. Journal of the Royal Statistical Society: Series B (Statistical Methodology), 69(5), 903–917.

Fan, J., Gijbels, I. (1996). Local Polynomial Modelling and Its Applications. London: Chapman & Hall.

Feng, L., Zou, C., Wang, Z., Zhu, L. (2015). Robust comparison of regression curves. TEST, 24(1), 185–204.

Feng, Z., Zhu, L. (2012). An alternating determination-optimization approach for an additive multi-index model. Computational Statistics & Data Analysis, 56(6), 1981–1993.

Feng, Z., Wen, X. M., Yu, Z., Zhu, L. (2013). On partial sufficient dimension reduction with applications to partially linear multi-index models. Journal of the American Statistical Association, 108(501), 237–246.

Gørgens, T. (2002). Nonparametric comparison of regression curves by local linear fitting. Statistics & Probability Letters, 60(1), 81–89.

Härdle, W., Hall, P., Ichimura, H. (1993). Optimal smoothing in single-index models. The Annals of Statistics, 21, 157–178.

Koul, H. L., Schick, A. (1997). Testing for the equality of two nonparametric regression curves. Journal of Statistical Planning and Inference, 65(2), 293–314.

Kulasekera, K. (1995). Comparison of regression curves using quasi-residuals. Journal of the American Statistical Association, 90(431), 1085–1093.

Kulasekera, K., Wang, J. (1997). Smoothing parameter selection for power optimality in testing of regression curves. Journal of the American Statistical Association, 92(438), 500–511.

Li, G., Zhu, L., Xue, L., Feng, S. (2010). Empirical likelihood inference in partially linear single-index models for longitudinal data. Journal of Multivariate Analysis, 101(5), 718–732.

Li, G., Peng, H., Dong, K., Tong, T. (2014). Simultaneous confidence bands and hypothesis testing for single-index models. Statistica Sinica, 24(2), 937–955.

Liang, H., Liu, X., Li, R., Tsai, C. L. (2010). Estimation and testing for partially linear single-index models. The Annals of Statistics, 38, 3811–3836.

Lin, W., Kulasekera, K. (2010). Testing the equality of linear single-index models. Journal of Multivariate Analysis, 101(5), 1156–1167.

Neumeyer, N. (2009). Smooth residual bootstrap for empirical processes of nonparametric regression residuals. Scandinavian Journal of Statistics, 36(2), 204–228.

Neumeyer, N., Dette, H. (2003). Nonparametric comparison of regression curves: An empirical process approach. The Annals of Statistics, 31(3), 880–920.

Neumeyer, N., Van Keilegom, I. (2010). Estimating the error distribution in nonparametric multiple regression with applications to model testing. Journal of Multivariate Analysis, 101(5), 1067–1078.

Peng, H., Huang, T. (2011). Penalized least squares for single index models. Journal of Statistical Planning and Inference, 141(4), 1362–1379.

Pollard, D. (1984). Convergence of Stochastic Processes. New York: Springer.

Stute, W., Zhu, L. X. (2005). Nonparametric checks for single-index models. The Annals of Statistics, 33, 1048–1083.

Stute, W., Xu, W. L., Zhu, L. X. (2008). Model diagnosis for parametric regression in high-dimensional spaces. Biometrika, 95(2), 451–467.

van der Vaart, A. W., Wellner, J. A. (1996). Weak Convergence and Empirical Processes: With Applications to Statistics. Springer Series in Statistics. New York: Springer.

Van Keilegom, I., González Manteiga, W., Sánchez Sellero, C. (2008). Goodness-of-fit tests in parametric regression based on the estimation of the error distribution. TEST, 17(2), 401–415.

Wang, J. L., Xue, L., Zhu, L., Chong, Y. S. (2010). Estimation for a partial-linear single-index model. The Annals of Statistics, 38, 246–274.

Wang, T., Xu, P., Zhu, L. (2015). Variable selection and estimation for semi-parametric multiple-index models. Bernoulli, 21(1), 242–275.

Xia, Y. (2006). Asymptotic distributions for two estimators of the single-index model. Econometric Theory, 22, 1112–1137.

Xia, Y., Tong, H., Li, W. K., Zhu, L. X. (2002). An adaptive estimation of dimension reduction space. Journal of the Royal Statistical Society: Series B (Statistical Methodology), 64, 363–410.

Xu, P., Zhu, L. (2012). Estimation for a marginal generalized single-index longitudinal model. Journal of Multivariate Analysis, 105, 285–299.

Yu, Y., Ruppert, D. (2002). Penalized spline estimation for partially linear single-index models. Journal of the American Statistical Association, 97, 1042–1054.

Zhang, C., Peng, H., Zhang, J. T. (2010). Two samples tests for functional data. Communications in Statistics—Theory and Methods, 39(4), 559–578.

Zhang, J., Wang, X., Yu, Y., Gai, Y. (2014). Estimation and variable selection in partial linear single index models with error-prone linear covariates. Statistics, 48, 1048–1070.

Acknowledgements

The authors thank the editor, the associate editor and two referees for their constructive suggestions that helped them to improve the early manuscript. Jun Zhang’s research is supported by the National Natural Science Foundation of China (NSFC) (Grant No. 11401391). Zhenghui Feng’s research is supported by the Fundamental Research Funds for the Central Universities in China (Grant No. 20720171025). Xiaoguang Wang’s research is supported by the NSFC (Grant Nos. 11471065 and 11371077), and the Fundamental Research Funds for the Central Universities in China (Grant No. DUT15LK28).

Appendix

1.1 Proofs of Theorems 1 and 2

Lemma 1

Suppose that \(\varvec{X}_{i}\), \(i=1, \ldots , n\), are i.i.d. random vectors. Let \(m(\varvec{x})\) be a continuous function whose derivatives up to second order are bounded and which satisfies \(E[m^{2}(\varvec{X})]<\infty \). Suppose that \(E[m(\varvec{X})|\varvec{\beta }^{\tau }\varvec{X}=u]\) has a continuous, bounded second derivative in \(u\). Let \(K(u)\) be a bounded positive function with bounded support satisfying the Lipschitz condition: there exist a neighborhood of the origin, say \(\Upsilon \), and a constant \(c>0\) such that, for any \(\epsilon \in \Upsilon \), \(|K(u+\epsilon )-K(u)|<c|\epsilon |\). Given that \(h=n^{-d}\) for some \(d<1\), we have, for \(s_{0}>0\) and \(j=0,1,2\),

where \(\Delta = \{\varvec{\beta }\in \Theta , \Vert \varvec{\beta }-\varvec{\beta }_{0}\Vert \le Cn^{-1/2}\}\) for some positive constant C, \(\Theta =\{\varvec{\beta }, \Vert \varvec{\beta }\Vert =1, \beta _{1}>0\}\), \(\mu _{K,l}=\int t^{l}K(t)dt\), \(S(\varvec{\beta }_{0}^{\tau }\varvec{x})=\frac{d }{du}\left\{ f_{{\varvec{\beta }}_{0}^{\tau }{\varvec{X}}}(u) E\big [m(\varvec{X})|\varvec{\beta }_{0}^{\tau }\varvec{X}=u\big ]\right\} |_{u={\varvec{\beta }}_{0}^{\tau }{\varvec{x}}}\), and \(c_{n}=\left\{ \dfrac{(\log n)^{1+s_{0}}}{nh}\right\} ^{1/2}+h^{2}\).

Proof

The proof can be completed by an argument similar to that of Lemma A.4 in Wang et al. (2010). See also Lemma A6.1 in Xia (2006). \(\square \)
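As a concrete illustration of the kernel constants \(\mu _{K,l}=\int t^{l}K(t)dt\) appearing in Lemma 1, consider the Epanechnikov kernel. This kernel is an example chosen here for illustration, not one prescribed by the paper; it is bounded, nonnegative, supported on \([-1,1]\), and Lipschitz.

$$\begin{aligned} K(t)&=\tfrac{3}{4}(1-t^{2})I\{|t|\le 1\},\\ \mu _{K,0}&=\int _{-1}^{1}K(t)dt=1,\qquad \mu _{K,1}=\int _{-1}^{1}tK(t)dt=0,\\ \mu _{K,2}&=\int _{-1}^{1}t^{2}K(t)dt=\tfrac{3}{4}\left( \tfrac{2}{3}-\tfrac{2}{5}\right) =\tfrac{1}{5}. \end{aligned}$$

For any symmetric kernel the odd moments vanish, which is why only even-order moments contribute to leading terms in expansions of this type.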

Proofs of Theorems 1 and 2

We present the proof of Theorem 2; the proof of Theorem 1 is similar, and we omit the details. For simplicity, we define \(c_{n_{s}}=\left\{ \dfrac{(\log n_{s})^{1+s_{0}}}{n_{s}h_{s}}\right\} ^{1/2}+h_{s}^{2}\) for \(s=1, 2\) in what follows.
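To get a feel for the rate \(c_{n_{s}}\), the following worked calculation takes a power-law bandwidth \(h_{s}=n_{s}^{-d}\), as in Lemma 1. It also checks which exponents \(d\) are compatible with the two bandwidth conditions invoked in Step 1 below. The specific value \(d=1/5\) is an illustrative assumption, not a choice made in the paper.

$$\begin{aligned} c_{n_{s}}&=(\log n_{s})^{(1+s_{0})/2}\,n_{s}^{-(1-d)/2}+n_{s}^{-2d},\\ n_{s}h_{s}^{8}&=n_{s}^{1-8d}\rightarrow 0 \iff d>1/8,\\ \frac{(\log n_{s})^{2+2s_{0}}}{n_{s}h_{s}^{2}}&=(\log n_{s})^{2+2s_{0}}\,n_{s}^{-(1-2d)}\rightarrow 0 \iff d<1/2. \end{aligned}$$

Hence any \(d\in (1/8, 1/2)\) satisfies both conditions, and \(d=1/5\) balances the two terms of \(c_{n_{s}}\), giving \(c_{n_{s}}=O\left( n_{s}^{-2/5}(\log n_{s})^{(1+s_{0})/2}\right) \). Taken together, the two conditions are equivalent to \(\sqrt{n_{s}}\,c_{n_{s}}^{2}\rightarrow 0\), since \(\sqrt{n_{s}}\,c_{n_{s}}^{2}\) is of the same order as \(\frac{(\log n_{s})^{1+s_{0}}}{\sqrt{n_{s}}h_{s}}+\sqrt{n_{s}}h_{s}^{4}\).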

Proof

Note that \(\mathcal {W}_{n_{1}n_{2}}\left( \hat{\varvec{\beta }}_{\mathcal {H}_{0}}^{(1)}\right) =\mathbf{0}\). Taylor expansion entails that

where \(\tilde{\varvec{\beta }}^{(1)}_{0}\) is between \(\hat{\varvec{\beta }}_{\mathcal {H}_{0}}^{(1)}\) and \(\varvec{\beta }_{0}^{(1)}\).
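The superscript \((1)\) denotes the sub-vector of \(\varvec{\beta }\) without its first component, so that on \(\Theta \) one has \(\varvec{\beta }=\left( \sqrt{1-\varvec{\beta }^{(1)\tau }\varvec{\beta }^{(1)}}, \varvec{\beta }^{(1)\tau }\right) ^{\tau }\), as written out for \(\tilde{\varvec{\beta }}_{0}\) in Step 2. The sketch below assumes that \(J_{\varvec{\beta }}\) is the Jacobian \(\partial \varvec{\beta }/\partial \varvec{\beta }^{(1)}\) of this map, which is the usual convention in the single-index literature; the definition itself is not restated in this appendix. The function names are hypothetical, and the closed form is checked against central finite differences.

```python
import numpy as np

def full_beta(beta1):
    """Map the free sub-vector beta^(1) (length p-1) to the unit vector beta."""
    beta1 = np.asarray(beta1, dtype=float)
    first = np.sqrt(1.0 - beta1 @ beta1)  # requires ||beta^(1)|| < 1
    return np.concatenate(([first], beta1))

def jacobian(beta1):
    """Assumed J_beta = d beta / d beta^(1), a p x (p-1) matrix."""
    beta1 = np.asarray(beta1, dtype=float)
    first = np.sqrt(1.0 - beta1 @ beta1)
    top_row = -beta1 / first               # derivative of the first component
    return np.vstack((top_row, np.eye(beta1.size)))

def numeric_jacobian(beta1, eps=1e-6):
    """Central finite-difference approximation used only as a check."""
    beta1 = np.asarray(beta1, dtype=float)
    cols = []
    for k in range(beta1.size):
        step = np.zeros_like(beta1)
        step[k] = eps
        cols.append((full_beta(beta1 + step) - full_beta(beta1 - step)) / (2 * eps))
    return np.column_stack(cols)

beta1 = np.array([0.3, -0.5])
beta = full_beta(beta1)
J = jacobian(beta1)
```

Under this parameterization the columns of \(J_{\varvec{\beta }}\) are orthogonal to \(\varvec{\beta }\), which reflects the fact that perturbations of \(\varvec{\beta }^{(1)}\) move \(\varvec{\beta }\) along the unit sphere.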

Step 1.

In the following, we define \(N=n_{1}+n_{2}\) for simplicity. In this step, we deal with \({{N}^{-1/2}}\mathcal {W}_{n_{1}n_{2}}\left( {\varvec{\beta }}_{0}^{(1)}\right) \). Using Lemma 1 and the detailed proofs of Lemma A.4 in Zhang et al. (2014), we have \(\hat{g}_{s}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{si}, \varvec{\beta }_{0})=g_{s}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{si})+O_{P}(c_{ns})\), \(\hat{V}_{s}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{si}, \varvec{\beta }_{0})=V_{s, {\varvec{\beta }}_{0}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{si})+O_{P}(c_{ns})\), for \(s=1,2\). Moreover,

$$\begin{aligned}&S_{n_{1},l_{1}1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i},\varvec{\beta }_{0})\nonumber \\&\quad =\, \frac{1}{n_{1}}\sum _{j=1}^{n_{1}}K_{h_{1}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j}-\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}) (\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j}-\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})^{l_{1}} \sigma _{1}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j})\epsilon _{1j}^{2}\nonumber \\&\qquad +\,\frac{2}{n_{1}}\sum _{j=1}^{n_{1}}K_{h_{1}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j}-\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}) (\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j}-\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})^{l_{1}} \nonumber \\&\qquad \times \,\left[ g_{1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j})-\hat{g}_{1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j}, \varvec{\beta }_{0})\right] \sigma _{1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j})\epsilon _{1j}\nonumber \\&\qquad +\,\frac{1}{n_{1}}\sum _{j=1}^{n_{1}}K_{h_{1}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j}-\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}) (\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j}-\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})^{l_{1}}\nonumber \\&\qquad \times \,\left[ g_{1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j})-\hat{g}_{1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1j}, \varvec{\beta }_{0})\right] ^{2}\nonumber \\&\quad =\,h_{1}^{l_{1}}f_{{\varvec{\beta }}_{0}^{\tau }{\varvec{X}}_{1}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}) \sigma _{1}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})\mu _{Kl_{1}}+O_{P}(h_{1}^{l_{1}}c_{n1}+h_{1}^{l_{1}}c_{n1}^{2}), \end{aligned}\tag{19}$$

for \(l_{1}=0,1,2\). Using (19), we obtain \(\hat{\sigma }_{1}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i},\varvec{\beta }_{0})=\sigma _{1}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})+O_{P}(c_{n1})\). Similarly, \(\hat{\sigma }_{2}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i},\varvec{\beta }_{0})=\sigma _{2}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i})+O_{P}(c_{n2})\).

Let \(G^{{\varvec{x}}}_{1,w}(u,\varvec{\beta })= E\left[ Y^{w}_{1}\{\varvec{X}_{1}-\varvec{x}\}|\varvec{\beta }^{\tau }\varvec{X}_{1}=u\right] f_{{\varvec{\beta }}^{\tau }{\varvec{X}}}(u)\), \(K'_{h_{1}}(u)=\frac{1}{h_{1}}K'(u/h_{1})\). Using condition (C3), we have

$$\begin{aligned}&E\left[ \frac{\partial }{\partial \varvec{\beta }}T_{n_{1},l_{1}l_{2}}(\varvec{\beta }^{\tau }\varvec{x},\varvec{\beta })\right] \nonumber \\&\quad =\,\frac{1}{n_{1}}\sum _{i=1}^{n_{1}}E\left[ K'_{h_{1}} (\varvec{\beta }^{\tau }\varvec{X}_{1i}-\varvec{\beta }^{\tau }\varvec{x})J_{{\varvec{\beta }}}^{\tau }\left( \frac{\varvec{X}_{1i}-\varvec{x}}{h_{1}}\right) (\varvec{\beta }^{\tau }\varvec{X}_{1i}-\varvec{\beta }^{\tau }\varvec{x})^{l_{1}} Y_{1i}^{l_{2}}\right] \nonumber \\&\qquad +\,\frac{1}{n_{1}}\sum _{i=1}^{n_{1}}E\left[ K_{h_{1}}(\varvec{\beta }^{\tau }\varvec{X}_{1i}-\varvec{\beta }^{\tau }\varvec{x}) J_{{\varvec{\beta }}}^{\tau }\left( {\varvec{X}_{1i}-\varvec{x}}\right) l_{1}(\varvec{\beta }^{\tau }\varvec{X}_{1i}-\varvec{\beta }^{\tau }\varvec{x})^{l_{1}-1}I\{l_{1}\ge 1\} Y_{1i}^{l_{2}}\right] \nonumber \\&\quad =\,-\sum _{v=0}^{2}\frac{l_{1}+v}{v!}J_{{\varvec{\beta }}}^{\tau }G^{{\varvec{x}}(v)}_{1,l_{2}}(\varvec{\beta }^{\tau }\varvec{x},\varvec{\beta })h_{1}^{l_{1}-1+v} \mu _{K,l_{1}-1+v}I\{l_{1}+v\ge 1\}\nonumber \\&\qquad ~~~+\,\sum _{v=0}^{3}\frac{l_{1}}{v!}J_{{\varvec{\beta }}}^{\tau }G^{{\varvec{x}}(v)}_{1,l_{2}}(\varvec{\beta }^{\tau }\varvec{x},\varvec{\beta })h_{1}^{l_{1}-1+v}\mu _{K,l_{1}-1+v}I\{l_{1}\ge 1\} +O(h_{1}^{l_{1}+2}), \end{aligned}\tag{20}$$

where \(G^{{\varvec{x}}(v)}_{1,l_{2}}(u,\varvec{\beta })=\frac{\partial ^{v}}{\partial u^{v}}G^{{\varvec{x}}}_{1,l_{2}}(u,\varvec{\beta })\), and \(I\{u\}\) is the indicator function. Similar to the proof of Theorem 3.1 in Fan and Gijbels (1996) and Lemma A.5 in Zhang et al. (2014), together with (20) and Lemma 1, we have

$$\begin{aligned}&\frac{\partial \hat{g}_{1}(\varvec{\beta }^{\tau }\varvec{X}_{1i}, \varvec{\beta })}{\partial \varvec{\beta }^{(1)}}\Big |_{\varvec{\beta }^{(1)}=\tilde{\varvec{\beta }}^{(1)}_{0}}\nonumber \\&\quad =\,J^{\tau }_{{\varvec{\beta }}_{0}} \left[ \varvec{X}_{1i}-V_{1,{\varvec{\beta }}_{0}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})\right] g_{1}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}) +O_{P}\left( h_{1}^{2}+\sqrt{\frac{(\log n_{1})^{1+s_{0}}}{n_{1}h_{1}^{3}}}\right) .\nonumber \\ \end{aligned}\tag{21}$$

Under the null hypothesis \(\mathcal {H}_{0}\),

$$\begin{aligned}&\frac{\partial \hat{g}_{2}(\varvec{\beta }^{\tau }\varvec{X}_{2i}, \varvec{\beta })}{\partial \varvec{\beta }^{(1)}}\Big |_{\varvec{\beta }^{(1)}=\tilde{\varvec{\beta }}^{(1)}_{0}}\nonumber \\&\quad =\,J^{\tau }_{{\varvec{\beta }}_{0}} \left[ \varvec{X}_{2i}-V_{2,{\varvec{\beta }}_{0}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i})\right] g_{2}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i}) +O_{P}\left( h_{2}^{2}+\sqrt{\frac{(\log n_{2})^{1+s_{0}}}{n_{2}h_{2}^{3}}}\right) .\nonumber \\ \end{aligned}\tag{22}$$

Define \(\mathcal {Q}_{n_{1}}(u,\varvec{\beta }_{0})=\frac{1}{n_{1}h_{1}^{2}}T_{n_{1},20}(u, \varvec{\beta }_{0})\frac{1}{n_{1}}T_{n_{1},00}(u, \varvec{\beta }_{0})-\frac{1}{n_{1}^{2}h_{1}^{2}}T_{n_{1},10}^{2}(u, \varvec{\beta }_{0})\) and \(\mathcal {L}_{n_{1}}(u,\varvec{\beta }_{0})=\frac{1}{n^{2}_{1}h_{1}^{2}}T_{n_{1},20}(u, \varvec{\beta }_{0})T_{n_{1},01}(u, \varvec{\beta }_{0})-\frac{1}{n^{2}_{1}h^{2}_{1}}T_{n_{1},10}(u, \varvec{\beta }_{0})T_{n_{1},11}(u, \varvec{\beta }_{0})\). Then, \(\hat{g}_{1}(u, \varvec{\beta }_{0})=\frac{\mathcal {L}_{n_{1}}(u,{\varvec{\beta }}_{0})}{\mathcal {Q}_{n_{1}}(u,{\varvec{\beta }}_{0})}\) and \(\hat{g}_{1}'(u, \varvec{\beta }_{0})=\frac{\partial \mathcal {L}_{n_{1}}(u,{\varvec{\beta }}_{0})/\partial u}{\mathcal {Q}_{n_{1}}(u,{\varvec{\beta }}_{0})}-\frac{\mathcal {L}_{n_{1}}(u,{\varvec{\beta }}_{0})\partial \mathcal {Q}_{n_{1}}(u,{\varvec{\beta }}_{0})/\partial u}{\mathcal {Q}^{2}_{n_{1}}(u,{\varvec{\beta }}_{0})}\). Following the proof of Lemma A.5 in Zhang et al. (2014), together with Lemma 1 and (20), we have \(\hat{g}_{1}'(u, \varvec{\beta }_{0})=g_{1}'(u)+O_{P}\left( h_{1}^{2}+\sqrt{\frac{(\log n_{1})^{1+s_{0}}}{n_{1}h_{1}^{3}}}\right) \) and \(\hat{g}_{1}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}, \varvec{\beta }_{0})=g_{1}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})+O_{P}\left( h_{1}^{2}+\sqrt{\frac{(\log n_{1})^{1+s_{0}}}{n_{1}h_{1}^{3}}}\right) \).
Similarly, \(\hat{g}_{2}'(u, \varvec{\beta }_{0})=g_{2}'(u)+O_{P}\left( h_{2}^{2}+\sqrt{\frac{(\log n_{2})^{1+s_{0}}}{n_{2}h_{2}^{3}}}\right) \) and \(\hat{g}_{2}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i}, \varvec{\beta }_{0})=g_{2}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i})+O_{P}\left( h_{2}^{2}+\sqrt{\frac{(\log n_{2})^{1+s_{0}}}{n_{2}h_{2}^{3}}}\right) \). Using the asymptotic results (21) and (22) and the condition that \(\frac{n_{1}}{n_{1}+n_{2}}=\frac{n_{1}}{N}\rightarrow \lambda \in (0, 1)\), as \(\max \left\{ \frac{(\log n_{1})^{2+2s_{0}}}{n_{1}h_{1}^{2}}, \frac{(\log n_{2})^{2+2s_{0}}}{n_{2}h_{2}^{2}}\right\} \rightarrow 0\) and \(\max \{n_{1}h_{1}^{8},n_{2}h_{2}^{8}\}\rightarrow 0\), we have

$$\begin{aligned}&{({n_{1}+n_{2})}^{-1/2}}\mathcal {W}_{n_{1}n_{2}}\left( {\varvec{\beta }}_{0}^{(1)}\right) \nonumber \\&\quad =\,\sqrt{\frac{n_{1}}{n_{1}+n_{2}}}n_{1}^{-1/2} \sum _{i=1}^{n_{1}}J_{{\varvec{\beta }}_{0}}^{\tau }\frac{\hat{g}_{1}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}, \varvec{\beta }_{0})}{\hat{\sigma }_{1}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}, \varvec{\beta }_{0})}\left[ \varvec{X}_{1i}-\hat{V}_{1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}, \varvec{\beta }_{0})\right] \nonumber \\&\qquad \times \left[ Y_{1i}-\hat{g}_{1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i}, \varvec{\beta }_{0})\right] \nonumber \\&\qquad +\,\sqrt{\frac{n_{2}}{n_{1}+n_{2}}}n_{2}^{-1/2} \sum _{i=1}^{n_{2}}J_{{\varvec{\beta }}_{0}}^{\tau }\frac{\hat{g}_{2}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i}, \varvec{\beta }_{0})}{\hat{\sigma }_{2}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i}, \varvec{\beta }_{0})}\left[ \varvec{X}_{2i}-\hat{V}_{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i}, \varvec{\beta }_{0})\right] \nonumber \\&\qquad \times \left[ Y_{2i}-\hat{g}_{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i}, \varvec{\beta }_{0})\right] \nonumber \\&\quad =\sqrt{\frac{n_{1}}{n_{1}+n_{2}}} n_{1}^{-1/2} \sum _{i=1}^{n_{1}}J_{{\varvec{\beta }}_{0}}^{\tau }{g}_{1}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})\left[ \varvec{X}_{1i}-{V}_{1, {\varvec{\beta }}_{0}} (\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})\right] {\sigma }_{1}^{-1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1i})\epsilon _{1i}\nonumber \\&\qquad +\,\sqrt{\frac{n_{2}}{n_{1}+n_{2}}} n_{2}^{-1/2} \sum _{i=1}^{n_{2}}J_{{\varvec{\beta }}_{0}}^{\tau }{g}_{2}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i})\left[ \varvec{X}_{2i}-{V}_{2, {\varvec{\beta }}_{0}} (\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i})\right] {\sigma }_{2}^{-1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2i})\epsilon _{2i}\nonumber \\&\qquad +\,o_{P}(1), \end{aligned}\tag{23}$$

where \(V_{2, {\varvec{\beta }}_{0}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2})=E[\varvec{X}_{2}|\varvec{\beta }^{\tau }_{0}\varvec{X}_{2}]\).
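The ratio \(\hat{g}_{1}=\mathcal {L}_{n_{1}}/\mathcal {Q}_{n_{1}}\) used in Step 1 is the local linear estimator in closed form. A minimal sketch follows. It assumes that \(T_{n,l_{1}l_{2}}(u,\varvec{\beta })=\sum _{i}K_{h}(\varvec{\beta }^{\tau }\varvec{X}_{i}-u)(\varvec{\beta }^{\tau }\varvec{X}_{i}-u)^{l_{1}}Y_{i}^{l_{2}}\), which is the form suggested by (20); the definition itself is given in the main text, not in this appendix. The Epanechnikov kernel, the toy data, and the function names are illustrative choices. The common factor \(1/(n^{2}h^{2})\) cancels in the ratio and is omitted.

```python
import numpy as np

def epanechnikov(t):
    """Epanechnikov kernel, supported on [-1, 1] (an illustrative choice)."""
    return 0.75 * np.clip(1.0 - t**2, 0.0, None)

def kernel_sum(u, index, y, h, l1, l2):
    """Assumed form of T_{n,l1 l2}(u): sum_i K_h(z_i - u) (z_i - u)^l1 y_i^l2."""
    d = index - u
    return np.sum(epanechnikov(d / h) / h * d**l1 * y**l2)

def local_linear(u, index, y, h):
    """Local linear estimate of g(u), written as the ratio L_n / Q_n."""
    t00 = kernel_sum(u, index, y, h, 0, 0)
    t10 = kernel_sum(u, index, y, h, 1, 0)
    t20 = kernel_sum(u, index, y, h, 2, 0)
    t01 = kernel_sum(u, index, y, h, 0, 1)
    t11 = kernel_sum(u, index, y, h, 1, 1)
    return (t20 * t01 - t10 * t11) / (t20 * t00 - t10**2)

# Toy data: the index z = beta^T x with a known unit-norm beta.
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=(200, 2))
beta = np.array([0.6, 0.8])
z = x @ beta
y_linear = 2.0 + 3.0 * z  # noiseless linear link, g(u) = 2 + 3u
g_hat = local_linear(0.1, z, y_linear, h=0.3)
```

A useful sanity check is that a local linear fit reproduces a linear link exactly, whatever the kernel and bandwidth, so `g_hat` equals \(2+3\times 0.1\) up to floating-point error.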

Step 2.

In this step, we deal with \(\frac{1}{n_{1}+n_{2}}\frac{\partial \mathcal {W}_{n_{1}n_{2}}\left( {\varvec{\beta }}^{(1)}\right) }{\partial {\varvec{\beta }}^{(1)}}\big |_{{\varvec{\beta }}^{(1)}=\tilde{{\varvec{\beta }}}^{(1)}_{0}}\). Define

$$\begin{aligned}&\mathcal {S}_{n_{1}n_{2}}(\tilde{\varvec{\beta }}^{(1)}_{0})\mathop {=}\limits ^\mathrm{def}\frac{1}{n_{1}+n_{2}}\sum _{s=1}^{2}\sum _{i=1}^{n_{s}} \left[ Y_{si}-\hat{g}_{s}(\tilde{\varvec{\beta }}_{0}^{\tau }\varvec{X}_{si}, \tilde{\varvec{\beta }}_{0})\right] \\&\times \frac{\partial }{\partial \varvec{\beta }^{(1)}}\left\{ J_{{\varvec{\beta }}}^{\tau }\hat{g}_{s}'(\varvec{\beta }^{\tau }\varvec{X}_{si}, \varvec{\beta })\left[ \varvec{X}_{si}-\hat{V}_{s}(\varvec{\beta }^{\tau }\varvec{X}_{si}, \varvec{\beta })\right] \hat{\sigma }_{s}^{-2}(\varvec{\beta }^{\tau }\varvec{X}_{si}, \varvec{\beta })\right\} \Big |_{\varvec{\beta }^{(1)}=\tilde{\varvec{\beta }}^{(1)}_{0}}, \end{aligned}$$

and

$$\begin{aligned}&\mathcal {L}_{n_{1}n_{2}}(\tilde{\varvec{\beta }}^{(1)}_{0})\\&\mathop {=}\limits ^\mathrm{def}\frac{1}{n_{1}+n_{2}}\sum _{s=1}^{2}\sum _{i=1}^{n_{s}}\Big \{J_{\tilde{{\varvec{\beta }}}_{0}}^{\tau } \hat{g}_{s}'(\tilde{\varvec{\beta }}_{0}^{\tau }\varvec{X}_{si}, \tilde{\varvec{\beta }}_{0})\left[ \varvec{X}_{si}-\hat{V}_{s}(\tilde{\varvec{\beta }}_{0}^{\tau }\varvec{X}_{si}, \tilde{\varvec{\beta }}_{0})\right] \\&\quad \times \hat{\sigma }_{s}^{-2}(\tilde{\varvec{\beta }}_{0}^{\tau }\varvec{X}_{si}, \tilde{\varvec{\beta }}_{0})\Big \}\frac{\partial \hat{g}_{s}(\varvec{\beta }^{\tau }\varvec{X}_{si}, \varvec{\beta })}{\partial \varvec{\beta }^{(1)}}\Bigg |_{\varvec{\beta }^{(1)}=\tilde{\varvec{\beta }}^{(1)}_{0}}. \end{aligned}$$

Then,

$$\begin{aligned} \frac{1}{n_{1}+n_{2}}\frac{\partial \mathcal {W}_{n_{1}n_{2}}\left( {\varvec{\beta }}^{(1)}\right) }{\partial \varvec{\beta }^{(1)}} \Bigg |_{\varvec{\beta }^{(1)}=\tilde{\varvec{\beta }}^{(1)}_{0}} =\mathcal {S}_{n_{1}n_{2}}(\tilde{\varvec{\beta }}^{(1)}_{0})+\mathcal {L}_{n_{1}n_{2}}(\tilde{\varvec{\beta }}^{(1)}_{0}), \end{aligned}\tag{24}$$

where \(\tilde{\varvec{\beta }}_{0}=\left( \sqrt{1-\tilde{\varvec{\beta }}_{0}^{(1)\tau }\tilde{\varvec{\beta }}_{0}^{(1)}}, \tilde{\varvec{\beta }}_{0}^{(1)\tau }\right) ^{\tau }\). Note that \(\tilde{\varvec{\beta }}^{(1)}_{0}\) is between \(\hat{\varvec{\beta }}_{\mathcal {H}_{0}}^{(1)}\) and \(\varvec{\beta }_{0}^{(1)}\). By using (18), we have \(\hat{\varvec{\beta }}_{\mathcal {H}_{0}}^{(1)}=\varvec{\beta }_{0}^{(1)}+O_P((n_{1}+n_{2})^{-1/2})\). Note that \(\tilde{\varvec{\beta }}^{(1)}_{0}\mathop {\longrightarrow }\limits ^{P}\varvec{\beta }_{0}^{(1)}\), \(\tilde{\varvec{\gamma }}^{(1)}_{0}\mathop {\longrightarrow }\limits ^{P}\varvec{\beta }_{0}^{(1)}\) and \(\tilde{\varvec{\beta }}^{}_{0}\mathop {\longrightarrow }\limits ^{P}\varvec{\gamma }_{0}^{}\), \(\tilde{\varvec{\gamma }}^{}_{0}\mathop {\longrightarrow }\limits ^{P}\varvec{\gamma }_{0}^{}\). Together with (21)–(22) and the condition that \(\frac{n_{1}}{n_{1}+n_{2}}\rightarrow \lambda \in (0, 1)\), we have

$$\begin{aligned}&\mathcal {L}_{n_{1}n_{2}}(\tilde{\varvec{\beta }}^{(1)}_{0})\mathop {\longrightarrow }\limits ^{P} \lambda J_{{{\varvec{\beta }}}_{0}}^{\tau }E\left[ \frac{g_{1}^{'2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1})}{ {\sigma }_{1}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1})}\left[ \varvec{X}_{1}-V_{1,{\varvec{\beta }}_{0}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{1})\right] ^{\otimes 2}\right] J_{{{\varvec{\beta }}}_{0}}\nonumber \\&\quad +\, (1-\lambda ) J_{{{\varvec{\beta }}}_{0}}^{\tau }E\left[ \frac{g^{'2}_{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2})}{{\sigma }_{2}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2})} \left[ \varvec{X}_{2}-V_{2, {\varvec{\beta }}_{0}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{2})\right] ^{\otimes 2}\right] J_{{{\varvec{\beta }}}_{0}}. \end{aligned}\tag{25}$$

Moreover, a direct calculation for \(\mathcal {S}_{n_{1}n_{2}}(\tilde{\varvec{\beta }}^{(1)}_{0})\) and Lemma 1 entail that \(\mathcal {S}_{n_{1}n_{2}}(\tilde{\varvec{\beta }}^{(1)}_{0})=o_{P}(1)\). Together with (23) and (25), we complete the proof of Theorem 2. \(\square \)
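To see how (23) and (25) fit together, a short calculation may help. It assumes, as is standard for the model \(Y_{s}=g_{s}+\sigma _{s}\epsilon _{s}\), that \(\epsilon _{s}\) is independent of \(\varvec{X}_{s}\) with \(E[\epsilon _{s}]=0\) and \(E[\epsilon _{s}^{2}]=1\), and that the two samples are independent. The symbol \(\Omega \) for the limit matrix in (25) is introduced here for convenience only.

$$\begin{aligned}&\mathrm {Cov}\left\{ n_{s}^{-1/2}\sum _{i=1}^{n_{s}}J_{{\varvec{\beta }}_{0}}^{\tau }g_{s}'(\varvec{\beta }_{0}^{\tau }\varvec{X}_{si})\left[ \varvec{X}_{si}-V_{s,{\varvec{\beta }}_{0}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{si})\right] \sigma _{s}^{-1}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{si})\epsilon _{si}\right\} \\&\quad =\,J_{{\varvec{\beta }}_{0}}^{\tau }E\left[ \frac{g_{s}^{'2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{s})}{\sigma _{s}^{2}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{s})}\left[ \varvec{X}_{s}-V_{s,{\varvec{\beta }}_{0}}(\varvec{\beta }_{0}^{\tau }\varvec{X}_{s})\right] ^{\otimes 2}\right] J_{{\varvec{\beta }}_{0}}E[\epsilon _{s}^{2}],\qquad s=1,2. \end{aligned}$$

With \(E[\epsilon _{s}^{2}]=1\) and the weights \(n_{1}/(n_{1}+n_{2})\rightarrow \lambda \) and \(n_{2}/(n_{1}+n_{2})\rightarrow 1-\lambda \), the covariance of the leading term in (23) converges to the same matrix \(\Omega \) that appears as the limit in (25). Under these assumptions the usual sandwich form \(\Omega ^{-1}\Omega \Omega ^{-1}\) reduces to \(\Omega ^{-1}\) whenever \(\Omega \) is invertible; the precise limiting distribution is the one stated in the theorem in the main text.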

1.2 Proof of Theorem 3

Proof

From the proof of Theorem 2, we have

Under the null hypothesis \(\mathcal {H}_{0}: \varvec{\beta }_{0}=\varvec{\gamma }_{0}\), we can have

Moreover,

Then, the Slutsky Theorem and continuous mapping theorem entail that

We complete the proof of Theorem 3. \(\square \)

1.3 Proof of Theorem 4

Lemma 2

Suppose that conditions (C1)–(C5) hold. Let \(F_{\hat{\epsilon }_{s}}(t|\mathscr {Q}_{n_{s}})\) be the distribution function of \(\hat{\epsilon }_{s}=\frac{Y_{s}-\hat{g}_{s}(\hat{\omega }_{s,0}^{\tau }{\varvec{X}}_{s}, \hat{\omega }_{s,0})}{\hat{\sigma }_{s}(\hat{\omega }_{s,0}^{\tau }{\varvec{X}}_{s},\hat{\omega }_{s,0})}\) conditional on the data \(\mathscr {Q}_{n_{s}}=\{\varvec{X}_{si}, Y_{si}\}_{i=1}^{n_{s}}\) (i.e., considering \(\hat{g}_{s}\left( \hat{\omega }_{s,0}^{\tau }\varvec{x}_{s}, \hat{\omega }_{s,0}\right) , \hat{\sigma }_{s}\left( \hat{\omega }_{s,0}^{\tau }\varvec{x}_{s},\hat{\omega }_{s,0}\right) \) as fixed functions on \(\varvec{x}_{s}\)) for \(s=1,2\) respectively. Here, \(\hat{\omega }_{1,0}=\hat{\varvec{\beta }}_{0}\) and \(\hat{\omega }_{2,0}=\hat{\varvec{\gamma }}_{0}\). Then, we have

Proof

In the following, we only prove (28); the proof of (29) is similar, and we omit the details. Let

where \(M_{1}^{1+\delta }( \mathfrak {R}_{c}^{p})\) is the class of all differentiable functions \(f(u)\) defined on the domain \(\mathfrak {R}_{c}^{p}\) of \(\varvec{x}_{1}\) with \(\Vert f\Vert _{1+\delta }\le 1\). Here \( \mathfrak {R}_{c}^{p}\) is a compact subset of \(\mathbb {R}^{p}\) and

Using Lemma 1 and \(\Vert \hat{\varvec{\beta }}_{0}-\varvec{\beta }_{0}\Vert =O_{P}(n_{1}^{-1/2})\), and similar to the proofs of (21) and (22), we have that

uniformly in \(\varvec{x}_{1}\in \mathfrak {R}_{c}^{p}\). Let \(A_{n_{1}}(\varvec{x}_{1})=\frac{\hat{g}_{1}(\hat{{\varvec{\beta }}}_{0}^{\tau }{\varvec{x}}_{1}, \hat{{\varvec{\beta }}}_{0})-{g}_{1}({{\varvec{\beta }}}_{0}^{\tau } {\varvec{x}}_{1})}{\sigma _{1}({{\varvec{\beta }}}_{0}^{\tau } {\varvec{x}}_{1})}\) and \(B_{n_{1}}(\varvec{x}_{1})=\frac{\hat{\sigma }_{1}(\hat{{\varvec{\beta }}}_{0}^{\tau }{\varvec{x}}_{1}, \hat{{\varvec{\beta }}}_{0})}{\sigma _{1}({{\varvec{\beta }}}_{0}^{\tau }{\varvec{x}}_{1})}\). Then (30) and (31) entail \(P\left( A_{n_{1}}\in M_{1}^{1+\delta }(\mathfrak {R}_{c}^{p}) \right) \rightarrow 1\) and \(P\left( B_{n_{1}}\in M_{1}^{1+\delta }(\mathfrak {R}_{c}^{p})\right) \rightarrow 1\) as \(n_{1}\rightarrow \infty \), \(h_{1}\rightarrow 0\) and \(\frac{n_{1}h_{1}}{(\log n_{1})^{1+s_{0}}}\rightarrow \infty \).

By Corollary 2.7.2 of van der Vaart and Wellner (1996), the bracketing number \(N_{[~]}\left( \upsilon ^{2}, M_{1}^{1+\delta }(\mathfrak {R}_{c}^{p}), L_{2}(P)\right) \) is at most \(\exp \left( c_{0}\upsilon ^{-\frac{2p}{1+\delta }}\right) \) for some positive constant \(c_{0}\). Following the proof of Lemma 1 in Appendix B of Akritas and Van Keilegom (2001), the class \(\mathscr {O}\) defined above is then a Donsker class, i.e., \({\displaystyle \int }_{0}^{\infty }\sqrt{\log N_{[~]}(\bar{\upsilon }, \mathscr {O}, L_{2}(P))}d\bar{\upsilon }<\infty \). This completes the proof of (28). \(\square \)

Proof of Theorem 4

We can have that

where \(R_{n_{1},1}(t)=o_{P}(n_{1}^{-1/2})\) uniformly in \(t\in \mathbb {R}\) by using Lemma 2. Taylor expansion entails that

where \(v_{n_{1}}^{*}(t,\varvec{x}_{1})\) is between 0 and \(t[B_{n_{1}}(\varvec{x}_{1})-1]+A_{n_{1}}(\varvec{x}_{1})\). Note that

Recalling the definition of \(\hat{g}_{1}(u,\varvec{\beta }_{0})\) and using Lemma 1, we have

Similar to (21), we can also have

Together with (34), (35) and (36), we have

Similarly,

Then, Taylor expansion for \(\sqrt{\hat{\sigma }^{2}_{1}\left( \hat{{\varvec{\beta }}}_{0}^{\tau }{\varvec{x}}, \hat{{\varvec{\beta }}}_{0}\right) }-\sqrt{\sigma ^{2}_{1}({\varvec{\beta }}_{0}^{\tau }\varvec{x})}\) and asymptotic expression (38) entail that

Moreover, (34), (39) and Condition (C5) entail that \(R_{n_{1},4}(t)=o_{P}(n_{1}^{-1/2})\) uniformly in t. Together with (32), (26) and (37)–(39), we complete the proof of Theorem 4.

\(\square \)

1.4 Proof of Theorems 5 and 6

Proof

Recalling the definition \(F^{*}_{\widetilde{\mathcal {H}}_{0},\epsilon _{1}}(t)=E\left[ F_{\epsilon _{1}}\left( t+\frac{ g_{2}({\varvec{\beta }}_{0}^{\tau }{\varvec{X}}_{1})-g_{1}({\varvec{\beta }}_{0}^{\tau }{\varvec{X}}_{1})}{\sigma ({\varvec{\beta }}_{0}^{\tau }{\varvec{X}})}\right) \right] \), we have

where \(F_{\widetilde{\mathcal {H}}_{0}, \hat{\epsilon }_{1}}(t|\mathscr {V}_{n_{1}n_{2}})\) is the distribution function of \(\hat{\epsilon }_{\widetilde{\mathcal {H}}_{0},1}=\frac{Y-\hat{g}_{2} (\hat{{\varvec{\beta }}}_{0}^{\tau } {\varvec{X}},\hat{{\varvec{\gamma }}}_{0})}{\hat{\sigma }_{1}(\hat{{\varvec{\beta }}}_{0}^{\tau }{\varvec{X}},\hat{{\varvec{\beta }}}_{0})} \) conditional on the data \(\mathscr {V}_{n_{1}n_{2}}=\{\varvec{X}_{1i}, Y_{1i},\varvec{X}_{2j}, Y_{2j}, 1\le i\le n_{1}, 1\le j \le n_{2} \}\). Similar to the analysis of Lemma 2, we have \(\displaystyle {\sup _{t\in \mathbb {R}}}|S_{n_{1},1}(t)|=o_{P}(n_{1}^{-1/2})\). Taylor expansion entails that

Similar to the analysis of (21), we can have that

Recalling the definition of \(\hat{g}_{2}(u,\varvec{\gamma }_{0})\) and using Lemma 1, we have

We can also show that \(R_{n_{1}n_{2}}(t)\) defined in (41) is \(o_{P}(n^{-1/2}_{1}+n_{2}^{-1/2})\) uniformly in \(t\in \mathbb {R}\). Together with (39), (42) and (43), we have

Recalling the definitions of \(D_{1}(u)\) and \(\rho _{f,\sigma }(u)\), we complete the proof of Theorem 5. The proof of Theorem 6 follows from the asymptotic result of Theorem 5 on recalling that \(D_{1}(u)\equiv D_{2}(u)\equiv 0\) under the null hypothesis; we omit the details. \(\square \)

1.5 Proof of Theorem 7

Proof

By using the detailed proof of Theorem 1 in Stute et al. (2008), the class of functions

is a Vapnik–Chervonenkis class with envelope function 4 (Pollard 1984, Ch. 2). Then, we have

Moreover, Taylor expansion entails that

If the local alternative hypothesis \(\mathcal {H}_{1n_{1}n_{2}}\) is true, together with (44) and (45), we have

We can also obtain a similar expression for \(\hat{F}_{\widetilde{\mathcal {H}}_{0},\epsilon _{2}}(t)-\hat{F}_{\epsilon _{2}}(t)\) and we omit the details. Using the continuous mapping theorem, we complete the proof of Theorem 7. \(\square \)
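The continuous mapping step applies because both test statistics are continuous functionals of the difference between two empirical distribution functions of residuals. The sketch below computes Kolmogorov–Smirnov and Cramér–von Mises type functionals of such a difference. The scaling by \(\sqrt{n}\) and \(n\), the evaluation at the pooled residuals, and the choice of integrating measure (the empirical distribution of the second residual sample) are assumptions made for illustration; the paper's exact definitions are given in the main text, and the function names are hypothetical.

```python
import numpy as np

def ecdf(sample, t):
    """Empirical distribution function of `sample` evaluated at the points t."""
    sample = np.sort(np.asarray(sample, dtype=float))
    return np.searchsorted(sample, t, side="right") / sample.size

def ks_cvm(res_null, res_free):
    """KS- and CvM-type functionals of the difference of two residual ECDFs.

    res_null: residuals computed under the null-hypothesis fit (assumed input)
    res_free: residuals computed from the sample's own fit (assumed input)
    """
    res_null = np.asarray(res_null, dtype=float)
    res_free = np.asarray(res_free, dtype=float)
    n = res_free.size
    # The difference of two right-continuous step functions attains its
    # supremum at a jump point, so the pooled sample is a sufficient grid.
    grid = np.concatenate((res_null, res_free))
    ks = np.sqrt(n) * np.max(np.abs(ecdf(res_null, grid) - ecdf(res_free, grid)))
    # Integrate the squared difference against the ECDF of res_free.
    diff = ecdf(res_null, res_free) - ecdf(res_free, res_free)
    cvm = n * np.mean(diff**2)
    return ks, cvm

same = np.array([0.0, 1.0, 2.0, 3.0])
ks_same, cvm_same = ks_cvm(same, same)            # no difference at all
ks_shift, cvm_shift = ks_cvm(same + 10.0, same)   # completely separated samples
```

In practice the critical values would come from the bootstrap procedure described in the paper rather than from a fixed table, since the limiting process depends on unknown quantities.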

About this article

Cite this article

Zhang, J., Feng, Z. & Wang, X. A constructive hypothesis test for the single-index models with two groups. Ann Inst Stat Math 70, 1077–1114 (2018). https://doi.org/10.1007/s10463-017-0616-y

DOI: https://doi.org/10.1007/s10463-017-0616-y