Abstract

Purpose

To (1) develop a deep learning system (DLS) using a deep convolutional neural network (DCNN) for identification of pneumothorax, (2) compare its performance to that of first-year radiology residents, and (3) evaluate the ability of a DLS to augment radiology residents by detecting missed pneumothoraces.

Methods

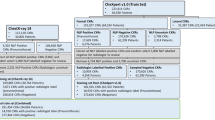

This was a retrospective study performed in September 2018. We obtained 112,120 chest radiographs (CXRs) from the NIH ChestXray14 database, of which 4360 cases (4%) were labeled as pneumothorax by natural language processing. We utilized 111,518 CXRs to train and validate the ResNet-152 DCNN pretrained on ImageNet to identify pneumothorax. DCNN testing was performed on a hold-out set of 602 CXRs, whose ground truth was determined by a cardiothoracic radiologist. Two first-year radiology residents evaluated the test CXRs for presence of pneumothorax. Receiver operating characteristic (ROC) curves were generated for each evaluator, with area under the curve (AUC) compared using the DeLong nonparametric method.
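The AUC comparison described above can be illustrated with a minimal sketch of the nonparametric DeLong test for two correlated ROC curves. This is not the authors' code, which is not public. The function names (`delong_test` and its helpers) are hypothetical, and the sketch assumes binary labels (1 = pneumothorax, 0 = none), one numeric score per image from each evaluator, and at least two positive and two negative cases.

```python
import math

def _psi(x, y):
    # Mann-Whitney kernel: 1 if the positive case outscores the negative, 0.5 on ties.
    if x > y:
        return 1.0
    if x == y:
        return 0.5
    return 0.0

def _components(labels, scores):
    # Split scores by label and compute the per-case "placement" values.
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    v10 = [sum(_psi(x, y) for y in neg) / len(neg) for x in pos]
    v01 = [sum(_psi(x, y) for x in pos) / len(pos) for y in neg]
    auc = sum(v10) / len(pos)
    return auc, v10, v01

def _cov(a, b):
    # Sample covariance (denominator len - 1); requires len(a) >= 2.
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    return sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / (len(a) - 1)

def delong_test(labels, scores_a, scores_b):
    """Return (auc_a, auc_b, z, two_sided_p) for two evaluators scored on the same cases."""
    auc_a, v10_a, v01_a = _components(labels, scores_a)
    auc_b, v10_b, v01_b = _components(labels, scores_b)
    m, n = len(v10_a), len(v01_a)
    # Variance of the AUC difference, accounting for correlation between evaluators.
    var = ((_cov(v10_a, v10_a) + _cov(v10_b, v10_b) - 2 * _cov(v10_a, v10_b)) / m
           + (_cov(v01_a, v01_a) + _cov(v01_b, v01_b) - 2 * _cov(v01_a, v01_b)) / n)
    diff = auc_a - auc_b
    if var <= 0.0:
        # Degenerate case: no variability in the difference.
        z = 0.0 if diff == 0 else math.copysign(math.inf, diff)
    else:
        z = diff / math.sqrt(var)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
    return auc_a, auc_b, z, p

# Toy data, invented for illustration only (not from the study).
labels   = [1, 1, 1, 0, 0, 0]
reader   = [0.9, 0.8, 0.7, 0.3, 0.2, 0.1]
model    = [0.9, 0.6, 0.3, 0.8, 0.5, 0.2]
auc_r, auc_m, z, p = delong_test(labels, reader, model)
print(f"reader AUC={auc_r:.3f}, model AUC={auc_m:.3f}, z={z:.3f}, p={p:.3f}")
```

The pairwise loops are quadratic in the number of cases, which is acceptable for a test set of a few hundred images; a rank-based formulation would be preferable for much larger sets.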

Results

The DCNN achieved AUC of 0.841 for identification of pneumothorax at a rate of 1980 images/min. In contrast, both first-year residents achieved significantly higher AUCs of 0.942 and 0.905 (p < 0.01 for both compared to DCNN), but at a slower rate of two images/min. The DCNN identified 3 of 31 (9.7%) additional pneumothoraces missed by at least one of the residents.
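The throughput and detection figures above can be checked with simple arithmetic. The sketch below uses only the numbers reported in this paragraph; the helper names are hypothetical, and the resident rate of two images/min is presumably rounded, so the resulting ratio should be read as approximate.

```python
def speed_ratio(dcnn_images_per_min, resident_images_per_min):
    # How many times faster the DCNN reads than a resident.
    return dcnn_images_per_min / resident_images_per_min

def percent(part, whole):
    # Percentage rounded to one decimal place, as reported in the text.
    return round(100.0 * part / whole, 1)

ratio = speed_ratio(1980, 2)   # reported DCNN and resident reading rates
extra = percent(3, 31)         # pneumothoraces caught by the DCNN among those missed by a resident

print(f"DCNN is about {ratio:.0f}x as fast")           # prints "DCNN is about 990x as fast"
print(f"Additional detections: {extra}% of missed cases")  # prints "Additional detections: 9.7% of missed cases"
```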

Conclusion

A DLS for pneumothorax identification had lower AUC than first-year radiology residents, but interpreted images approximately 1000× as fast and identified 3 additional pneumothoraces missed by the residents. Our findings suggest that a DLS could augment radiologists-in-training to identify potential urgent findings.

Funding

This work was supported by a Medical Student Research Grant from the Radiological Society of North America R&E Foundation (RMS1816 to TK Kim).

Ethics declarations

Conflict of interest

This work was awarded the Trainee Research Prize in Emergency Radiology (Resident Award) at the 2019 Annual Meeting of the Radiological Society of North America (RSNA).

Ethics approval

This work utilized publicly available data and was considered not human subjects research by our institutional review board.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Availability of data and material

The source data utilized in this study is publicly available.

Code availability

Our code is not publicly available, but it utilizes standard methodologies and software packages that are described in the manuscript to allow independent replication.

About this article

Cite this article

Yi, P.H., Kim, T.K., Yu, A.C. et al. Can AI outperform a junior resident? Comparison of deep neural network to first-year radiology residents for identification of pneumothorax. Emerg Radiol 27, 367–375 (2020). https://doi.org/10.1007/s10140-020-01767-4

DOI: https://doi.org/10.1007/s10140-020-01767-4