Abstract

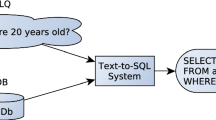

In recent years, Community Question Answering (CQA) has become increasingly prevalent because it provides platforms for users to collect information and share knowledge. However, a question in a CQA system often has many candidate answers, and it is almost impossible for users to read them one by one and select the most relevant. Hence, answer selection has become an important task in CQA. In this paper, we propose a novel solution, BertHANK: a hierarchical attention network with enhanced knowledge and a pre-trained model for answer selection. Specifically, in the encoding stage, knowledge enhancement and a pre-trained model are used for questions and answers, respectively. Further, we adopt a multi-attention mechanism, comprising cross-attention on question-answer pairs, inner attention on questions at the word level, and hierarchical inner attention on answers at both the word and sentence levels, to capture more subtle semantic features. In more detail, the cross-attention captures interactive information between encoded questions and answers, while the hierarchical inner attention assigns different weights to words in sentences and to sentences in answers, thereby obtaining both global and local information about question-answer pairs. The hierarchical inner attention helps select the best-matched answers for specific questions. Finally, we integrate the attention-weighted question and answer representations to make a prediction. The results show that our model achieves state-of-the-art performance on two corpora, the SemEval-2015 and SemEval-2017 CQA datasets, outperforming advanced baselines by a large margin.
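The attention components named above can be illustrated with a minimal, dependency-free sketch. It is not the BertHANK implementation: the abstract does not give the scoring functions, so plain dot-product scoring is assumed, the context vectors `word_ctx` and `sent_ctx` stand in for parameters that would be learned, and all function names are hypothetical. The BERT encoder and the knowledge enhancement are omitted; the inputs are assumed to be already-encoded word vectors.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def weighted_sum(vectors, weights):
    """Component-wise sum of the vectors, each scaled by its weight."""
    dim = len(vectors[0])
    return [sum(w * vec[i] for vec, w in zip(vectors, weights)) for i in range(dim)]

def inner_attention(vectors, context):
    """Pool vectors into one, weighting each by softmax(dot(vector, context)).

    Assumption: dot-product scoring against a context vector.
    """
    weights = softmax([dot(v, context) for v in vectors])
    return weighted_sum(vectors, weights), weights

def cross_attention(queries, keys):
    """For each query vector, return a softmax-weighted mix of the key vectors."""
    return [weighted_sum(keys, softmax([dot(q, k) for k in keys])) for q in queries]

def hierarchical_answer_vector(answer, word_ctx, sent_ctx):
    """Word-level pooling per sentence, then sentence-level pooling per answer.

    answer: list of sentences, each a list of word vectors.
    """
    sentence_vecs = [inner_attention(sentence, word_ctx)[0] for sentence in answer]
    return inner_attention(sentence_vecs, sent_ctx)

# Toy 2-dimensional "encodings" (made-up values, for illustration only).
question = [[1.0, 0.0], [0.0, 1.0]]                   # two question words
answer = [[[1.0, 0.0], [0.5, 0.5]], [[0.0, 1.0]]]     # two sentences
word_ctx = [1.0, 1.0]   # hypothetical word-level context vector
sent_ctx = [1.0, 0.0]   # hypothetical sentence-level context vector

answer_vec, sent_weights = hierarchical_answer_vector(answer, word_ctx, sent_ctx)
answer_words = [w for sentence in answer for w in sentence]
question_attended = cross_attention(question, answer_words)
print(sent_weights, answer_vec)
```

In this toy input the first sentence scores higher against `sent_ctx`, so it receives the larger sentence-level weight; a trained model would learn the context vectors rather than fix them by hand.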

Cite this article

Yang, H., Zhao, X., Wang, Y. et al. BertHANK: hierarchical attention networks with enhanced knowledge and pre-trained model for answer selection. Knowl Inf Syst 64, 2189–2213 (2022). https://doi.org/10.1007/s10115-022-01703-7

DOI: https://doi.org/10.1007/s10115-022-01703-7