Abstract

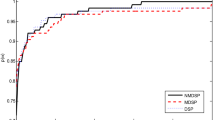

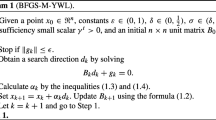

Following the modification scheme of Dong et al. made on the Hestenes–Stiefel method, we suggest a modified Polak–Ribiére–Polyak technique which satisfies the sufficient descent condition. We show that the method is globally convergent with the Wolfe line search conditions as well as the backtracking Armijo-type line search strategy proposed by Grippo and Lucidi, without convexity assumption on the objective function. Numerical experiments on some test functions of the CUTEr collection show that the method performs promisingly.

Similar content being viewed by others

References

Andrei, N.: Numerical comparison of conjugate gradient algorithms for unconstrained optimization. Stud. Inform. Control 16(4), 333–352 (2007)

Andrei, N.: A modified Polak–Ribière–Polyak conjugate gradient algorithm for unconstrained optimization. Optimization 60(12), 1457–1471 (2011)

Babaie-Kafaki, S., Ghanbari, R.: A descent extension of the Polak–Ribière–Polyak conjugate gradient method. Comput. Math. Appl. 68(12), 2005–2011 (2014)

Babaie-Kafaki, S., Ghanbari, R.: An optimal extension of the Polak–Ribière–Polyak conjugate gradient method. Numer. Funct. Anal. Optim. 38(9), 1115–1124 (2017)

Dai, Y.H.: Analyses of conjugate gradient methods. In: Ph.D. Thesis. Mathematics and Scientific/Engineering Computing, Chinese Academy of Sciences (1997)

Dai, Y.H., Han, J.Y., Liu, G.H., Sun, D.F., Yin, H.X., Yuan, Y.X.: Convergence properties of nonlinear conjugate gradient methods. SIAM J. Optim. 10(2), 348–358 (1999)

Dai, Y.H., Liao, L.Z.: New conjugacy conditions and related nonlinear conjugate gradient methods. Appl. Math. Optim. 43(1), 87–101 (2001)

Dai, Z.: Two modified Polak–Ribière–Polyak-type nonlinear conjugate methods with sufficient descent property. Numer. Funct. Anal. Optim. 31(8), 892–906 (2010)

Dai, Z.F., Wen, F.: Some improved sparse and stable portfolio optimization problems. Financ. Res. Lett. 27, 46–52 (2018)

Dai, Z.F., Wen, F.: Two nonparametric approaches to mean absolute deviation portfolio selection model. J. Ind. Manag. Optim. (2019), (Accepted)

Dolan, E.D., Moré, J.J.: Benchmarking optimization software with performance profiles. Math. Program. 91(2, Ser. A), 201–213 (2002)

Dong, X.L., Liu, H.W., He, Y.B., Babaie-Kafaki, S., Ghanbari, R.: A new three-term conjugate gradient method with descent direction for unconstrained optimization. Math. Model. Anal. 21(3), 399–411 (2016)

Dong, X.L., Liu, H.W., He, Y.B., Yang, X.M.: A modified Hestenes–Stiefel conjugate gradient method with sufficient descent condition and conjugacy condition. J. Comput. Appl. Math. 281(1), 239–249 (2015)

Gilbert, J.C., Nocedal, J.: Global convergence properties of conjugate gradient methods for optimization. SIAM J. Optim. 2(1), 21–42 (1992)

Gould, N.I.M., Orban, D., Toint, PhL: CUTEr: a constrained and unconstrained testing environment, revisited. ACM Trans. Math. Software 29(4), 373–394 (2003)

Grippo, L., Lucidi, S.: A globally convergent version of the Polak–Ribière gradient method. Math. Program. 78(3), 375–391 (1997)

Hager, W.W., Zhang, H.: Algorithm 851: \(\text{ CG }_{-}\)Descent, a conjugate gradient method with guaranteed descent. ACM Trans. Math. Software 32(1), 113–137 (2006)

Hager, W.W., Zhang, H.: A survey of nonlinear conjugate gradient methods. Pac. J. Optim. 2(1), 35–58 (2006)

Hestenes, M.R., Stiefel, E.: Methods of conjugate gradients for solving linear systems. J. Res. Nat. Bur. Stand. 49(6), 409–436 (1952)

Nocedal, J., Wright, S.J.: Numerical Optimization. Springer, New York (2006)

Polak, E., Ribière, G.: Note sur la convergence de méthodes de directions conjuguées. Rev. Française Informat. Recherche Opérationnelle 3(16), 35–43 (1969)

Polyak, B.T.: The conjugate gradient method in extreme problems. USSR Comput. Math. Math. Phys. 9(4), 94–112 (1969)

Sun, W., Yuan, Y.X.: Optimization Theory and Methods: Nonlinear Programming. Springer, New York (2006)

Yu, G., Guan, L., Li, G.: Global convergence of modified Polak–Ribière–Polyak conjugate gradient methods with sufficient descent property. J. Ind. Manag. Optim. 4(3), 565–579 (2008)

Yu, G.H.: Nonlinear Self-Scaling Conjugate Gradient Methods for Large-Scale Optimization Problems, Ph.D. Thesis. Sun Yat–Sen University (2007)

Yuan, G.L.: Modified nonlinear conjugate gradient methods with sufficient descent property for large-scale optimization problems. Optim. Lett. 3(1), 11–21 (2009)

Zhang, L., Zhou, W., Li, D.H.: A descent modified Polak–Ribière–Polyak conjugate gradient method and its global convergence. IMA J. Numer. Anal. 26(4), 629–640 (2006)

Zoutendijk, G.: Nonlinear programming, computational methods. In: Abadie, J. (ed.) Integer and Nonlinear Programming, pp. 37–86. North-Holland, Amsterdam (1970)

Acknowledgements

This research was in part supported by the grant 97022259 from Iran National Science Foundation (INSF), and in part by the Research Council of Semnan University. The authors thank the anonymous reviewers for their valuable comments and suggestions helped to improve the quality of this work. They are also grateful to Professor Michael Navon for providing the line search code.

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

About this article

Cite this article

Aminifard, Z., Babaie-Kafaki, S. A modified descent Polak–Ribiére–Polyak conjugate gradient method with global convergence property for nonconvex functions. Calcolo 56, 16 (2019). https://doi.org/10.1007/s10092-019-0312-9

Received:

Accepted:

Published:

DOI: https://doi.org/10.1007/s10092-019-0312-9

Keywords

- Unconstrained optimization

- Conjugate gradient method

- Sufficient descent condition

- Line search

- Global convergence