Abstract

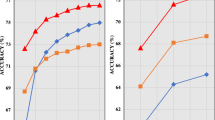

Recently, the vision transformer (ViT) has achieved remarkable results on computer vision tasks. However, ViT lacks the inductive biases present in CNNs, such as locality and translation equivariance. Overcoming this deficiency usually comes at a high cost: networks with hundreds of millions of parameters, trained with extensive training routines on large-scale datasets. One common way to mitigate this limitation is to combine self-attention layers with convolution layers, thus introducing some of the inductive biases of CNNs, but large volumes of data are still necessary to attain state-of-the-art performance on benchmark classification tasks. To tackle the vision transformer’s lack of inductive biases without increasing the model’s capacity or requiring large volumes of training data, we propose a self-attention regularization mechanism based on two-dimensional distance information in the image, with a new loss function, denoted Distance Loss, formulated specifically for the transformer encoder. Furthermore, we propose ARViT, an architecture marginally smaller than state-of-the-art vision transformers, in which the self-attention regularization method is deployed. Experimental results indicate that ARViT, pre-trained with a self-supervised pretext task on the ILSVRC-2012 ImageNet dataset, outperforms a similar-capacity vision transformer by large margins on all tasks (up to 24%). Compared with large-scale self-supervised vision transformers, ARViT also outperforms SiT (Atito et al. in SiT: self-supervised vision transformer, 2021), but still underperforms MoCo (Chen et al. in: 2021 IEEE/CVF international conference on computer vision (ICCV), Montreal, 2021) and DINO (Caron et al. in: 2021 IEEE/CVF international conference on computer vision (ICCV), Montreal, 2021).
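The abstract does not state the Distance Loss formula, so the following is only a minimal sketch of the general idea of penalizing attention by two-dimensional patch distance, not the authors' formulation. The function names `patch_distance_matrix` and `distance_penalty`, the choice of Euclidean distance between patch grid positions, and the attention-weighted mean are all assumptions made for illustration.

```python
import math


def patch_distance_matrix(grid_h, grid_w):
    """Euclidean distance between every pair of patch positions on a grid.

    Assumption: patches are indexed row-major and their position is the
    (row, column) index on the patch grid.
    """
    coords = [(r, c) for r in range(grid_h) for c in range(grid_w)]
    return [
        [math.hypot(r1 - r2, c1 - c2) for (r2, c2) in coords]
        for (r1, c1) in coords
    ]


def distance_penalty(attention, distances):
    """Mean attention-weighted distance over all query patches.

    `attention` is a row-stochastic matrix (each row sums to 1), as produced
    by a softmax over keys. The penalty grows when queries attend to far-away
    patches, which is one hypothetical way to encourage locality.
    """
    total = 0.0
    for a_row, d_row in zip(attention, distances):
        total += sum(a * d for a, d in zip(a_row, d_row))
    return total / len(attention)


# Toy example on a 2 x 2 patch grid (4 patches).
D = patch_distance_matrix(2, 2)
uniform = [[0.25] * 4 for _ in range(4)]  # every patch attends everywhere
local = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]  # self only
print(distance_penalty(uniform, D), distance_penalty(local, D))
```

In this toy setting the purely local attention map incurs no penalty, while the uniform map is penalized in proportion to the average distance between patches. A term of this kind could be added to a training objective with a weighting coefficient, which is likewise an assumption here.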

Notes

Imagenette and Imagewoof are available at: https://github.com/fastai.

References

Vaswani A, Shazeer N, Parmar N, Uszkoreit J, Jones L, Gomez AN, Kaiser Ł, Polosukhin I (2017) Attention is all you need. In: Advances in neural information processing systems, vol 30

Dosovitskiy A, Beyer L, Kolesnikov A, Weissenborn D, Zhai X, Unterthiner T, Dehghani M, Minderer M, Heigold G, Gelly S, Uszkoreit J, Houlsby N (2020) An image is worth 16×16 words: transformers for image recognition at scale. arXiv:2010.11929 [cs]

Zhao H, Jia J, Koltun V (2020) Exploring self-attention for image recognition. In: 2020 IEEE/CVF conference on computer vision and pattern recognition (CVPR), (Seattle, WA, USA). IEEE, pp 10073–10082

Ramachandran P, Parmar N, Vaswani A, Bello I, Levskaya A, Shlens J (2019) Stand-alone self-attention in vision models. In: Advances in neural information processing systems, vol 32

Chen Z, Xie L, Niu J, Liu X, Wei L, Tian Q (2021) Visformer: the vision-friendly transformer. In: Proceedings of the IEEE/CVF international conference on computer vision, pp 589–598

Han K, Wang Y, Chen H, Chen X, Guo J, Liu Z, Tang Y, Xiao A, Xu C, Xu Y (2022) A survey on vision transformer. IEEE Trans Pattern Anal Mach Intell

Xu Y, Zhang Q, Zhang J, Tao D (2021) Vitae: vision transformer advanced by exploring intrinsic inductive bias. Adv Neural Inf Process Syst 34:28522–28535

Deng J, Dong W, Socher R, Li L-J, Li K, Fei-Fei L (2009) Imagenet: a large-scale hierarchical image database. In: 2009 IEEE conference on computer vision and pattern recognition. IEEE, pp 248–255

Sun C, Shrivastava A, Singh S, Gupta A (2017) Revisiting unreasonable effectiveness of data in deep learning era. In: 2017 IEEE international conference on computer vision (ICCV), (Venice). IEEE, pp 843–852

Gidaris S, Singh P, Komodakis N (2018) Unsupervised representation learning by predicting image rotations. arXiv preprint arXiv:1803.07728

Chen C-FR, Fan Q, Panda R (2021) Crossvit: cross-attention multi-scale vision transformer for image classification. In: Proceedings of the IEEE/CVF international conference on computer vision, pp 357–366

Chu X, Tian Z, Zhang B, Wang X, Wei X, Xia H, Shen C (2021) Conditional positional encodings for vision transformers. arXiv:2102.10882 [cs]

Han K, Xiao A, Wu E, Guo J, Xu C, Wang Y (2021) Transformer in transformer. Adv Neural Inf Process Syst 34:15908–15919

Graham B, El-Nouby A, Touvron H, Stock P, Joulin A, Jégou H, Douze M (2021) Levit: a vision transformer in convnet’s clothing for faster inference. In: Proceedings of the IEEE/CVF international conference on computer vision, pp 12259–12269

Li Y, Zhang K, Cao J, Timofte R, Van Gool L (2021) LocalViT: bringing locality to vision transformers. arXiv:2104.05707 [cs]

Liu Z, Lin Y, Cao Y, Hu H, Wei Y, Zhang Z, Lin S, Guo B (2021) Swin transformer: hierarchical vision transformer using shifted windows. In: Proceedings of the IEEE/CVF international conference on computer vision, pp 10012–10022

Peng Z, Huang W, Gu S, Xie L, Wang Y, Jiao J, Ye Q (2021) Conformer: local features coupling global representations for visual recognition. In: Proceedings of the IEEE/CVF international conference on computer vision, pp 367–376

Touvron H, Cord M, Douze M, Massa F, Sablayrolles A, Jégou H (2021) Training data-efficient image transformers & distillation through attention. In: International conference on machine learning, PMLR, pp 10347–10357

Parmar N, Vaswani A, Uszkoreit J, Kaiser L, Shazeer N, Ku A, Tran D (2018) Image transformer. In: International conference on machine learning, PMLR, pp 4055–4064

Cordonnier JB, Loukas A, Jaggi M (2020) On the relationship between self-attention and convolutional layers. arXiv:1911.03584 [cs, stat]

Wang W, Xie E, Li X, Fan D-P, Song K, Liang D, Lu T, Luo P, Shao L (2021) Pyramid vision transformer: a versatile backbone for dense prediction without convolutions. In: Proceedings of the IEEE/CVF international conference on computer vision, pp 568–578

Chu X, Tian Z, Wang Y, Zhang B, Ren H, Wei X, Xia H, Shen C (2021) Twins: revisiting the design of spatial attention in vision transformers. Adv Neural Inf Process Syst 34:9355–9366

Xie Z, Lin Y, Yao Z, Zhang Z, Dai Q, Cao Y, Hu H (2021) Self-supervised learning with swin transformers. arXiv preprint arXiv:2105.04553

Liu Z, Ning J, Cao Y, Wei Y, Zhang Z, Lin S, Hu H (2022) Video swin transformer. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pp 3202–3211

Krizhevsky A, Sutskever I, Hinton GE (2017) ImageNet classification with deep convolutional neural networks. Commun ACM 60:84–90

Caron M, Touvron H, Misra I, Jegou H, Mairal J, Bojanowski P, Joulin A (2021) Emerging properties in self-supervised vision transformers. In: 2021 IEEE/CVF international conference on computer vision (ICCV), (Montreal, QC, Canada). IEEE, pp 9630–9640

Caron M, Misra I, Mairal J, Goyal P, Bojanowski P, Joulin A (2020) Unsupervised learning of visual features by contrasting cluster assignments. Adv Neural Inf Process Syst 33:9912–9924

Nilsback ME, Zisserman A (2008) Automated flower classification over a large number of classes. In: 2008 Sixth Indian conference on computer vision, graphics & image processing, (Bhubaneswar, India). IEEE, pp 722–729

Atito S, Awais M, Kittler J (2021) SiT: self-supervised vision transformer. arXiv:2104.03602 [cs]

Chen X, Xie S, He K (2021) An empirical study of training self-supervised vision transformers. In: 2021 IEEE/CVF international conference on computer vision (ICCV), (Montreal, QC, Canada). IEEE, pp 9620–9629

Additional information

This work was presented in part at the joint symposium of the 27th International Symposium on Artificial Life and Robotics, the 7th International Symposium on BioComplexity, and the 5th International Symposium on Swarm Behavior and Bio-Inspired Robotics.

About this article

Cite this article

Mormille, L.H., Broni-Bediako, C. & Atsumi, M. Regularizing self-attention on vision transformers with 2D spatial distance loss. Artif Life Robotics 27, 586–593 (2022). https://doi.org/10.1007/s10015-022-00774-7

DOI: https://doi.org/10.1007/s10015-022-00774-7