Abstract

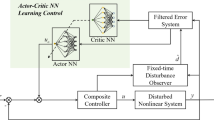

In this article, we propose a new control method that combines reinforcement learning (RL) with the concept of sliding mode control (SMC). SMC is notable for its robustness and stability under deviations from control conditions. RL, on the other hand, can be applied to complex systems that are difficult to model, but applying it to a real system poses a serious problem: many trials are required for learning. We aim to develop a control method that retains the strengths of both approaches. To this end, we combine the actor-critic method, a kind of RL, with SMC. We verify the effectiveness of the proposed method through a computer simulation of inverted pendulum control that does not use the dynamics of the inverted pendulum. In particular, the results show that the proposed method learns in fewer trials than RL alone.

Additional information

This work was presented in part at the 13th International Symposium on Artificial Life and Robotics, Oita, Japan, January 31–February 2, 2008

About this article

Cite this article

Obayashi, M., Nakahara, N., Kuremoto, T. et al. A robust reinforcement learning using the concept of sliding mode control. Artif Life Robotics 13, 526–530 (2009). https://doi.org/10.1007/s10015-008-0608-3

DOI: https://doi.org/10.1007/s10015-008-0608-3