Abstract

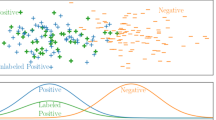

For multi-label learning, extracting label-specific features from instances under the supervision of class labels is meaningful, and the "purified" feature representation can also be shared with other features during the learning process. Besides, it is essential to distinguish the inter-instance relations in the input space from the inter-label correlations in the output space of multi-label datasets, which helps improve the performance of multi-label algorithms. However, most current multi-label algorithms aim to capture the mapping between instances and labels while ignoring the information about instance relations and label correlations in the multi-label data structure. Motivated by these issues, we leverage a deep network to learn specific feature representations for the multi-label components without abandoning overlapping features that may belong to other multi-label components. Meanwhile, Euclidean distance matrices are used to construct the diagonal matrix for the diffusion function, and a graph-based diffusion method yields a new class latent representation that preserves the inter-instance relations; this ensures that similar features have similar label sets. Further, considering that these feature representations contribute differently and have distinct influences on the final multi-label prediction, a self-attention mechanism is introduced to fuse the other label-specific instance features into a new joint feature representation, which derives dynamic weights for multi-label prediction. Finally, experimental results on real data sets show that our approach is promising and widely applicable.
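The graph-based diffusion idea in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes a Gaussian affinity built from pairwise Euclidean distances, a symmetric normalization by the diagonal degree matrix, and the standard iterative update `F <- alpha * S F + (1 - alpha) * Y` used in classic label propagation. The function names, the parameters `sigma`, `alpha`, and `steps`, and the toy data are all hypothetical.

```python
import math


def euclidean_distances(X):
    """Pairwise Euclidean distance matrix for a list of feature vectors."""
    return [[math.dist(a, b) for b in X] for a in X]


def normalized_affinity(D, sigma=1.0):
    """Gaussian affinity W from distances, normalized as Deg^-1/2 W Deg^-1/2.

    Assumption: every instance has at least one neighbour with non-zero
    affinity, so no degree is zero.
    """
    n = len(D)
    W = [[0.0 if i == j else math.exp(-D[i][j] ** 2 / (2 * sigma ** 2))
          for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in W]  # diagonal degree matrix stored as a vector
    return [[W[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]


def diffuse(S, Y, alpha=0.8, steps=50):
    """Iterate F <- alpha * S F + (1 - alpha) * Y for a fixed number of steps."""
    n, q = len(Y), len(Y[0])
    F = [row[:] for row in Y]
    for _ in range(steps):
        F = [[alpha * sum(S[i][k] * F[k][c] for k in range(n))
              + (1 - alpha) * Y[i][c] for c in range(q)]
             for i in range(n)]
    return F


# Toy data (hypothetical): two tight clusters, one labelled instance in each.
X = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [5.1, 5.0]]
Y = [[1.0, 0.0], [0.0, 0.0], [0.0, 1.0], [0.0, 0.0]]

S = normalized_affinity(euclidean_distances(X))
F = diffuse(S, Y)
for features, scores in zip(X, F):
    print(features, [round(s, 3) for s in scores])
```

In this toy setting, each unlabelled instance receives most of its score from the labelled instance in its own cluster, which is the sense in which similar features end up with similar label sets.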

Ethics declarations

Conflict of interest

We declare that we have no financial or personal relationships with other people or organizations that could inappropriately influence our work, and no professional or other personal interest of any nature or kind in any product, service, and/or company that could be construed as influencing the position presented in, or the review of, the manuscript entitled “Partially Disentangled Latent Relations for Multi-Label Deep Learning”.

About this article

Cite this article

Lian, Sm., Liu, Jw., Lu, Rk. et al. Partially disentangled latent relations for multi-label deep learning. Neural Comput & Applic 33, 6039–6064 (2021). https://doi.org/10.1007/s00521-020-05381-w

DOI: https://doi.org/10.1007/s00521-020-05381-w