Abstract

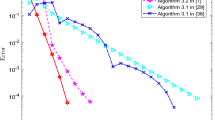

Many iterative algorithms, such as the Picard, Mann, and Ishikawa iterations, are useful for solving fixed point problems of nonlinear operators in real Hilbert spaces. A recent trend is to accelerate their convergence by adding inertial terms. The purpose of this paper is to investigate a new inertial iterative algorithm for finding fixed points of nonexpansive operators in Hilbert spaces. We study the weak convergence of the proposed algorithm under mild assumptions and use it to design a new accelerated proximal gradient method, which we then apply to regression problems. Numerical experiments on regression problems with several publicly available high-dimensional datasets compare the proposed algorithm with existing algorithms in terms of accuracy and objective function values. The results show that the proposed algorithm outperforms the other algorithms with the iteration parameters kept unchanged.
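To make the idea of an inertial fixed point iteration concrete, the following is a minimal sketch of a generic inertial Mann (Krasnosel'skii-Mann) scheme applied to the forward-backward operator of a small Lasso problem. It is not the algorithm proposed in the paper, whose update rule is not given in the abstract. The function names, the constant parameters `theta` and `alpha`, the step size, and the toy data are all assumptions chosen for illustration.

```python
def soft_threshold(v, tau):
    """Proximal map of tau * ||.||_1, applied componentwise."""
    return [max(abs(vi) - tau, 0.0) * (1.0 if vi > 0 else -1.0) for vi in v]


def forward_backward(A, b, mu, step):
    """Build T(y) = prox_{step*mu*||.||_1}(y - step * A^T (A y - b)).

    T is nonexpansive when 0 < step < 2 / L, with L the largest
    eigenvalue of A^T A; its fixed points are the Lasso minimizers.
    """
    def T(y):
        residual = [sum(aij * yj for aij, yj in zip(row, y)) - bi
                    for row, bi in zip(A, b)]
        grad = [sum(A[i][j] * residual[i] for i in range(len(A)))
                for j in range(len(y))]
        return soft_threshold([yj - step * gj for yj, gj in zip(y, grad)],
                              step * mu)
    return T


def inertial_mann(T, x0, theta=0.3, alpha=0.5, iters=200):
    """Generic inertial Mann iteration (illustrative, not the paper's scheme).

    y_n     = x_n + theta * (x_n - x_{n-1})      # inertial extrapolation
    x_{n+1} = (1 - alpha) * y_n + alpha * T(y_n)  # Mann averaging step
    """
    x_prev, x = list(x0), list(x0)
    for _ in range(iters):
        y = [xi + theta * (xi - pi) for xi, pi in zip(x, x_prev)]
        Ty = T(y)
        x_prev, x = x, [(1 - alpha) * yi + alpha * ti for yi, ti in zip(y, Ty)]
    return x


# Toy separable problem (hypothetical data): minimize
# 0.5 * ||A x - b||^2 + mu * ||x||_1 with A = diag(2, 1).
A = [[2.0, 0.0], [0.0, 1.0]]
b = [4.0, 0.5]
T = forward_backward(A, b, mu=1.0, step=0.25)  # step = 1 / L with L = 4
x_star = inertial_mann(T, x0=[5.0, 5.0])
```

Because this toy problem is separable, its minimizer can be worked out by hand as (1.75, 0), which gives a simple check on the iterate `x_star`. Constant values of `theta` and `alpha` are used only for simplicity; convergence results for inertial schemes generally impose conditions on these sequences.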

Acknowledgements

The first author acknowledges the financial support of the Indian Institute of Technology (Banaras Hindu University) in the form of a teaching assistantship. The third author gratefully acknowledges the Council of Scientific and Industrial Research (CSIR), New Delhi, India, through the University Grants Commission (UGC), for financial assistance in the form of a Junior Research Fellowship (grant Ref. No. 19/06/2016 (i) EU-V).

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical approval

This article does not contain any studies with human participants or animals performed by any of the authors.

Additional information

Communicated by V. Loia.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Dixit, A., Sahu, D.R., Singh, A.K. et al. Application of a new accelerated algorithm to regression problems. Soft Comput 24, 1539–1552 (2020). https://doi.org/10.1007/s00500-019-03984-7

DOI: https://doi.org/10.1007/s00500-019-03984-7