Abstract

We describe a new data structure for dynamic nearest neighbor queries in the plane with respect to a general family of distance functions. These include \(L_p\)-norms and additively weighted Euclidean distances. Our data structure supports general (convex, pairwise disjoint) sites that have constant description complexity (e.g., points, line segments, disks, etc.). Our structure uses \(O(n \log ^3 n)\) storage, and requires polylogarithmic update and query time, improving an earlier data structure of Agarwal, Efrat, and Sharir which required \(O(n^{\varepsilon })\) time for an update and \(O(\log n)\) time for a query [SICOMP 1999]. Our data structure has numerous applications. In all of them, it gives faster algorithms, typically reducing an \(O(n^{\varepsilon })\) factor in the previous bounds to polylogarithmic. In addition, we give here two new applications: an efficient construction of a spanner in a disk intersection graph, and a data structure for efficient connectivity queries in a dynamic disk graph. To obtain this data structure, we combine and extend various techniques from the literature. Along the way, we obtain several side results that are of independent interest. Our data structure depends on the existence and an efficient construction of “vertical” shallow cuttings in arrangements of bivariate algebraic functions. We prove that an appropriate level in an arrangement of a random sample of a suitable size provides such a cutting. To compute it efficiently, we develop a randomized incremental construction algorithm for computing the lowest k levels in an arrangement of bivariate algebraic functions (we mostly consider here collections of functions whose lower envelope has linear complexity, as is the case in the dynamic nearest-neighbor context, under both types of norm). To analyze this algorithm, we also improve a longstanding bound on the combinatorial complexity of the vertical decomposition of these levels. 
Finally, to obtain our structure, we combine our vertical shallow cutting construction with Chan’s algorithm for efficiently maintaining the lower envelope of a dynamic set of planes in \({{\mathbb {R}}}^3\). Along the way, we also revisit Chan’s technique and present a variant that uses a single binary counter, with a simpler analysis and improved amortized deletion time (by a logarithmic factor; the insertion and query costs remain asymptotically the same).

1 Introduction

Nearest neighbor searching in the plane is one of the most fundamental problems in computational geometry [8]. Given a finite set S of sites in \({{\mathbb {R}}}^2\), the goal is to construct a data structure that can find the “closest” site for any given query object. If S is fixed, Voronoi diagrams and their many variants provide a simple and well-understood solution [5, 8], with linear storage and logarithmic query time. However, in many applications, the set S may change dynamically as sites get inserted and deleted. Now, we want to answer nearest neighbor queries interleaved with the updates. This setting is much less understood.

If S consists of singleton points and distances are measured in the Euclidean metric, we can achieve polylogarithmic update and query time [13, 14], with \(O(n\log ^3n)\) storage. However, we are often confronted with more general distance functions (e.g., \(L_p\)-norms or additively weighted Euclidean distances). Applications in which such distance functions arise include the dynamic maintenance of a bichromatic closest pair of sites, constructing a Euclidean minimum-weight red-blue matching, constructing a Euclidean minimum spanning tree, computing the intersection of unit balls in three dimensions, computing the smallest stabbing disk of a family of simply shaped compact strictly-convex sets in the plane, and computing a single-source shortest-path tree in a unit-disk graph (see Sect. 9 for details and references). Despite the numerous motivating applications, there has been virtually no progress on the basic problem since the 1990s. The state of the art is work by Agarwal et al. from 1999 [3]. It provides \(O(n^{\varepsilon })\) update and \(O(\log n)\) query time, for any fixed \({\varepsilon }>0\), while using \(O(n^{1+{\varepsilon }})\) storage (see Footnote 1). We present a new solution that gives polylogarithmic update and query time, while using \(O(n \log ^3n)\) storage, for a wide range of distance functions. We assemble a broad set of techniques, such as randomized incremental construction, relative \((p, {\varepsilon })\)-approximations, shallow cuttings for xy-monotone surfaces in \({{\mathbb {R}}}^3\), and several advanced data structuring techniques.

We now describe our notions more thoroughly. Let S be a set of n pairwise disjoint sites. Each site is a simply-shaped compact convex region in the plane (points, line segments, disks, etc.). Let \(\delta :{{\mathbb {R}}}^2 \times {{\mathbb {R}}}^2 \rightarrow {{\mathbb {R}}}_{\ge 0}\) be a continuous distance function between points in the plane. For a site \(s \in S\), define the distance to s, \(f_s:{{\mathbb {R}}}^2 \rightarrow {{\mathbb {R}}}_{\ge 0}\), as \(f_s(x,y) = \delta ((x,y),s)= \min _{p\in s}\delta ((x,y),p)\) (the minimum exists since s is compact and \(\delta \) is continuous). We assume that \(\delta \) and the sites in S have constant description complexity. This means that they are defined by a constant number of polynomial equations and inequalities of constant maximum degree. Set \(F = \{f_s \mid s\in S\}\). The lower envelope \({{\mathcal {E}}}_F\) of F is the pointwise minimum \({{\mathcal {E}}}_F(x,y) = \min _{f \in F} f(x,y)\), and its xy-projection is called the minimization diagram of F, denoted by \({{\mathcal {M}}}_F\). The combinatorial complexity of \({{\mathcal {E}}}_F\) or of \({{\mathcal {M}}}_F\) is the total number of their vertices, edges, and faces. The book by Sharir and Agarwal [49] provides a comprehensive treatment of these concepts.

Now, given a query point \(q \in {{\mathbb {R}}}^2\), in order to find a \(\delta \)-nearest neighbor for q in S, we must identify a site s with \({{\mathcal {E}}}_F(q) = f_s(q)\). This translates to a vertical ray shooting query in \({{\mathcal {E}}}_F\): find the intersection of \({{\mathcal {E}}}_F\) and the z-vertical line through q, or, alternatively, locate q in the planar map \({{\mathcal {M}}}_F\), where each two-dimensional face \(\varphi \in {{\mathcal {M}}}_F\) is labeled with the site s for which \(f_s\) attains the minimum over \(\varphi \). (Edges and vertices can be labeled by the set of labels of their adjacent faces.)
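As a point of reference for the query semantics, the following minimal Python sketch evaluates the lower envelope at a query point by brute force, scanning all sites in O(n) time. It is only a baseline and not the data structure of this paper; the names `nearest_site` and `euclidean` are hypothetical and chosen for illustration.

```python
import math
from typing import Callable, Sequence, Tuple

Point = Tuple[float, float]


def nearest_site(q: Point, sites: Sequence, dist: Callable) -> Tuple[int, float]:
    """Return (index, distance) of a site s minimizing dist(q, s).

    This is vertical ray shooting into the lower envelope done naively:
    evaluate every f_s at q and keep the minimum.
    """
    best = min(range(len(sites)), key=lambda i: dist(q, sites[i]))
    return best, dist(q, sites[best])


def euclidean(q: Point, p: Point) -> float:
    """Euclidean distance between a query point and a point site."""
    return math.hypot(q[0] - p[0], q[1] - p[1])


sites = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0)]
index, d = nearest_site((3.0, 1.0), sites, euclidean)
```

Any distance function of the form `dist(q, site)` can be passed in, which mirrors the role of \(\delta \) in the text.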

The structure and the complexity of \({{\mathcal {E}}}_F\) and of \({{\mathcal {M}}}_F\), as well as algorithms for their construction and manipulation, have been studied for several decades (again, see [49]). To summarize, under the above assumptions, the combinatorial complexity of \({{\mathcal {E}}}_F\) (or of \({{\mathcal {M}}}_F\)) is \(O(n^{2+{\varepsilon }})\), for any fixed \({\varepsilon }>0\) (see Footnote 2). However, in many interesting cases, including the case where the functions \(f_s\) are linear (i.e., their graphs are non-vertical planes), the complexity of \({{\mathcal {E}}}_F\) is O(n). The case of planes arises, after simple algebraic manipulations, for point sites under the Euclidean distance. Then \({{\mathcal {M}}}_F\) is the Euclidean Voronoi diagram of S. There are many variants of Voronoi diagrams, for other classes of sites and distance functions, for which the complexity of \({{\mathcal {E}}}_F\) remains linear; see, e.g., the book by Aurenhammer et al. [5].
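The algebraic manipulation for Euclidean point sites can be illustrated as follows. For a site \((a,b)\), the squared distance is \((x-a)^2+(y-b)^2 = x^2+y^2-2ax-2by+a^2+b^2\); since \(x^2+y^2\) is the same for all sites, the minimizing site is the one whose plane \(z=-2ax-2by+a^2+b^2\) is lowest at \((x,y)\). The Python sketch below checks this standard lifting on a small example; the function names are hypothetical.

```python
def plane_of(site):
    """Coefficients (A, B, C) of the plane z = A*x + B*y + C for a point site (a, b)."""
    a, b = site
    return (-2.0 * a, -2.0 * b, a * a + b * b)


def eval_plane(h, q):
    """Height of plane h above the query point q = (x, y)."""
    return h[0] * q[0] + h[1] * q[1] + h[2]


def nearest_by_planes(q, sites):
    """Index of the site whose plane is lowest above q."""
    planes = [plane_of(s) for s in sites]
    return min(range(len(sites)), key=lambda i: eval_plane(planes[i], q))


def nearest_by_distance(q, sites):
    """Index of the site with the smallest squared Euclidean distance to q."""
    return min(
        range(len(sites)),
        key=lambda i: (q[0] - sites[i][0]) ** 2 + (q[1] - sites[i][1]) ** 2,
    )


sites = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0)]
queries = [(3.0, 1.0), (-1.0, -1.0), (0.5, 2.5), (2.2, 0.1)]
answers = [nearest_by_planes(q, sites) for q in queries]
```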

Coming back to nearest neighbor search, if we assume that \({{\mathcal {E}}}_F\) has linear complexity and can be constructed efficiently, all we need to do, in the so-called “static” case, is to preprocess \({{\mathcal {M}}}_F\) for fast planar point location. Then, a query takes \(O(\log n)\) time. If sites in S can be inserted or deleted, i.e., if F changes dynamically, then \({{\mathcal {E}}}_F\) may change rather drastically after an update. Maintaining an explicit representation of \({{\mathcal {M}}}_F\) thus becomes overwhelmingly expensive. Hence, the goal, in this paper and in earlier work, is to store an implicit representation of \({{\mathcal {E}}}_F\) that still supports efficient vertical ray shooting in the current envelope \({{\mathcal {E}}}_F\) (or point location in the current \({{\mathcal {M}}}_F\)).

In all the applications of dynamic nearest neighbor search that are studied in this paper, the lower envelope \({{\mathcal {E}}}_F\) has linear complexity. This is typically the case when S consists of point sites, and the distance functions are typically \(L_p\)-metrics, for \(1 \le p \le \infty \), or additively weighted Euclidean metrics, where each point site \(s \in S\) has a weight \(w_s \in {{\mathbb {R}}}\), and \(\delta (q,s) = |qs| + w_s\), where |qs| is the Euclidean distance between q and s. See, e.g., [5, 38] for details concerning the linear complexity of \({{\mathcal {E}}}_F\) in these cases. The lower envelope also has linear complexity for general classes of pairwise disjoint compact convex sites of constant description complexity, and for fairly general metrics.
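To make the two families of distance functions concrete, here is a small Python sketch of the \(L_p\)-distance and the additively weighted Euclidean distance for point sites. The helper names and the sample coordinates are assumptions made for illustration; the example only shows that the nearest site depends on the chosen distance.

```python
import math


def lp_distance(q, s, p):
    """L_p distance between points q and s, for 1 <= p <= infinity."""
    dx, dy = abs(q[0] - s[0]), abs(q[1] - s[1])
    if math.isinf(p):
        return max(dx, dy)
    return (dx ** p + dy ** p) ** (1.0 / p)


def additively_weighted(q, s, w):
    """Additively weighted Euclidean distance |qs| + w_s (w may be negative)."""
    return math.hypot(q[0] - s[0], q[1] - s[1]) + w


q = (0.0, 0.0)
a, b = (3.0, 3.0), (4.0, 0.0)

# Under L_1 the site b is closer (4 < 6); under L_infinity the site a is closer (3 < 4).
nearest_l1 = min((a, b), key=lambda s: lp_distance(q, s, 1))
nearest_linf = min((a, b), key=lambda s: lp_distance(q, s, math.inf))

# With weights w_a = -1 and w_b = 0.5, site a wins although b is closer in L_2.
weights = {a: -1.0, b: 0.5}
nearest_weighted = min((a, b), key=lambda s: additively_weighted(q, s, weights[s]))
```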

Our main result is an efficient data structure that dynamically maintains a set F of bivariate functions as above, under insertions and deletions of functions, and supports efficient vertical ray shooting queries into \({{\mathcal {E}}}_F\). Assuming, as above, that the complexity of \({{\mathcal {E}}}_F\) is linear, the worst-case cost of a query, as well as the amortized expected cost of an update, is polylogarithmic, and the storage used by the structure is \(O(n \log ^3n)\). As a consequence, we obtain faster solutions to all the applications mentioned above, and many more, essentially reducing an \(O(n^{\varepsilon })\) factor in the complexity to polylogarithmic. Our results also generalize to the case where the lower envelope complexity is not linear.

A brief context. Suppose first that all functions in F are linear. (As noted, this applies to point sites in the Euclidean metric.) A classical solution for this case is due to Agarwal and Matoušek [4]. They show how to maintain dynamically an implicit representation of \({{\mathcal {E}}}_F\), with amortized update time \(O(n^{\varepsilon })\), for any fixed \({\varepsilon }> 0\); vertical ray shooting queries take \(O(\log n)\) worst-case time. Here, n denotes an upper bound on |F|. The case of more general bivariate functions, as described above, was studied by Agarwal et al. [3]. If \({{\mathcal {E}}}_F\) has linear complexity, their technique has amortized update time \(O(n^{\varepsilon })\), for any fixed \({\varepsilon }> 0\), and worst-case query time \(O(\log n)\), matching (asymptotically) the result for planes [4].

For more than ten years after the work of Agarwal and Matoušek [4], it was open whether the \(O(n^{\varepsilon })\) update time can be improved. In SODA 2006, Chan [13] presented an ingenious construction for the case of planes where both the (amortized) update time and the (worst-case) query time are polylogarithmic. More precisely, Chan’s structure (combined with the recent deterministic construction of shallow cuttings by Chan and Tsakalidis [16]) supports insertions in \(O(\log ^3n)\) amortized time, deletions in \(O(\log ^6n)\) amortized time, and queries in \(O(\log ^2n)\) worst-case time. However, before our work, it remained unknown whether a similar result (with polylogarithmic update and query time) is possible for arbitrary bivariate functions with constant description complexity and linear envelope complexity. We provide an algorithm that meets all these performance goals. Along the way, we also improve the deletion time for Chan’s data structure for planes by a factor of \(\log n\), and the bound of Agarwal et al. [3] for the complexity of the vertical decomposition of the \((\le k)\)-level in an arrangement of surfaces in \({{\mathbb {R}}}^3\) by a factor of \(k^{\varepsilon }\). Very recently, after the original submission of our paper, by combining a faster cutting construction with our observations, Chan [14] further improved the amortized deletion time for the case of planes to \(O(\log ^4n)\) and the amortized insertion time to \(O(\log ^2n)\).

2 Our Results and Techniques

Our data structure combines a multitude of techniques. We first give a broad overview of how these techniques play together; see Fig. 1. The terms that appear in the figure are explained below.

Maybe the most crucial observation is that the whole geometric part in Chan’s data structure [13, 14] is in the construction of small shallow cuttings for planes. Thus, once we have an analogous result for surfaces, we can maintain their dynamic lower envelope, or equivalently, solve the generalized dynamic planar nearest neighbor problem. It turns out that random sampling and the theory of relative \((p, {\varepsilon })\)-approximations easily yield a construction for the required cuttings. However, we must also find the corresponding conflict lists quickly. For this, we present an algorithm that uses randomized incremental construction (RIC) for the \((\le k)\)-level in an arrangement of surfaces. Together with an improved variant of Chan’s result, this gives the generalized nearest neighbor data structure. We show the impact of this structure by presenting numerous applications thereof, both old and new. In what follows, we describe the specific parts in more detail.

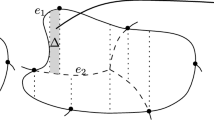

The geometric core of Chan’s data structure consists of an efficient construction of small-sized vertical shallow cuttings [12, 16]. Let F be a set of n functions in \({{\mathbb {R}}}^3\), identified in this paper with their graphs, and let \({{\mathcal {A}}}(F)\) denote the arrangement of F. We recall the notion of a k-level in \({{\mathcal {A}}}(F)\), for a parameter \(0 \le k \le n - 1\). It is the closure of the set of points q such that q lies on some function graph and exactly k graphs pass strictly below q.
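The notion of a level can be stated operationally: count the graphs that pass strictly below the point. The following Python sketch does this by brute force for functions given as callables; the three sample functions are hypothetical and depend only on x for readability.

```python
def level(q, functions):
    """Number of function graphs passing strictly below the point q = (x, y, z)."""
    x, y, z = q
    return sum(1 for f in functions if f(x, y) < z)


# Three sample graphs (planes, for simplicity).
F = [
    lambda x, y: 0.0,       # f0
    lambda x, y: x,         # f1
    lambda x, y: 1.0 - x,   # f2
]

# Points on the graphs of f0, f1, and f2, respectively.
on_f0 = (0.5, 0.0, F[0](0.5, 0.0))
on_f1 = (0.25, 0.0, F[1](0.25, 0.0))
on_f2 = (0.25, 0.0, F[2](0.25, 0.0))
levels = [level(p, F) for p in (on_f0, on_f1, on_f2)]
```

Here the point on f0 lies on the lower envelope (level 0), while the points on f1 and f2 lie on the 1-level and the 2-level.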

Roughly speaking (more details follow below), for suitable parameters k and \(r \approx n/k\), a vertical k-shallow \(r^{-1}\)-cutting is a collection of pairwise openly disjoint semi-unbounded vertical prisms, where each prism consists of all points that lie vertically below some triangle. Furthermore, (i) these top triangles form a polyhedral terrain that is sandwiched between the k-level and the \(k'\)-level of the arrangement, for a suitable parameter \(k'\) close to k; (ii) the number of prisms is close to O(r); and (iii) each prism is crossed by approximately k function graphs.

Once a fast construction of vertical shallow cuttings of sufficiently small size is available, we can plug it into Chan’s machinery for planes [13], in almost black-box fashion. This gives a fast data structure for dynamic maintenance of the lower envelope in the general setting. Agarwal et al. [3] prove the existence of shallow cuttings of optimal size for general functions, but their cuttings are not “vertical”, in the above sense, and a direct algorithmic implementation of their ideas yields an additional \(O(n^{\varepsilon })\) factor for both the size and the construction time of the cutting. When applied to the dynamic maintenance problem, this gives (amortized) update cost \(O(n^{\varepsilon })\) rather than polylogarithmic. Refining this bound is one of the main goals of the present paper.

Thus, we design a different algorithm for computing a vertical shallow cutting. For this, we develop several technical results that we believe to be of independent interest. We use relative approximations [30] to show that, with high probability, we get an \({\varepsilon }\)-approximation of the k-level of \({{\mathcal {A}}}(F)\) by choosing a random sample \(S_k\) of \(cn{\varepsilon }^{-2}k^{-1}\log n\) functions from F and by taking the t-level of \({{\mathcal {A}}}(S_k)\), for \(t \in [(1+{{\varepsilon }}/{3})\,\lambda ,(1+{{\varepsilon }}/{2})\,\lambda ]\), \(\lambda = c{\varepsilon }^{-2}\log n\), and c a suitable constant. This means that any such t-level of \({{\mathcal {A}}}(S_k)\) lies between levels k and \((1+{\varepsilon })\,k\) of \({{\mathcal {A}}}(F)\). We show that for random t, the expected complexity of the t-level is \(O(n{\varepsilon }^{-5}k^{-1}\log ^2n)\).
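The parameters of this sampling step are simple arithmetic, which the Python sketch below spells out. The text only says that c is "a suitable constant", so the default `c=1.0` is a placeholder; the use of the natural logarithm and the cap of the sample size at n are likewise assumptions of this sketch.

```python
import math


def level_sampling_parameters(n, k, eps, c=1.0):
    """Return (sample_size, lam, t_low, t_high) for approximating the k-level.

    lam         = c * eps^-2 * log n
    sample_size = c * n * eps^-2 * k^-1 * log n = n * lam / k  (capped at n)
    t ranges over [(1 + eps/3) * lam, (1 + eps/2) * lam].
    """
    lam = c * math.log(n) / eps ** 2
    sample_size = min(n, math.ceil(n * lam / k))
    t_low = (1.0 + eps / 3.0) * lam
    t_high = (1.0 + eps / 2.0) * lam
    return sample_size, lam, t_low, t_high


size, lam, t_low, t_high = level_sampling_parameters(n=10**6, k=10**4, eps=0.5)
```

Note that the sample size shrinks as k grows, whereas the target level t of the sample depends only on n and \({\varepsilon }\).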

Having computed such a t-level, we project it onto the xy-plane, construct the standard planar vertical decomposition of the faces of the projection, lift each resulting trapezoid \(\varphi \) back to a trapezoidal subface \(\varphi ^*\) embedded in a surface on the original level, and associate it with the semi-unbounded vertical prism that extends below \(\varphi ^*\). We show that this collection of prisms is a vertical k-shallow \(r^{-1}\)-cutting in \({{\mathcal {A}}}(F)\) (with k and \(r \approx n/k\) as above). We denote it by \(\Lambda _k\).

The last hurdle is to efficiently compute \(\Lambda _k\), together with the conflict lists of its prisms. The conflict list \({{\,\mathrm{CL}\,}}(\tau )\) of a prism \(\tau \in \Lambda _k\) is the set of all functions \(f \in F\) whose graphs cross the interior of \(\tau \). (Although the construction of \(\Lambda _k\) is performed with respect to the sample \(S_k\), the conflict lists are defined with respect to the whole set F).
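For intuition, the conflict list of a single prism is easy to compute by brute force when the functions are planes: a plane crosses the interior of the semi-unbounded prism below a triangle exactly when it lies strictly below at least one of the three triangle vertices, because the difference of two linear functions attains its extremes over a triangle at the vertices. The Python sketch below uses this test. It is restricted to planes and is not the construction of this paper; for general algebraic functions the vertex test is not sufficient.

```python
def conflict_list(triangle, planes):
    """Indices of planes z = a*x + b*y + c crossing the interior of the prism
    that consists of all points vertically below `triangle`.

    triangle: three (x, y, z) vertices of the prism's top face.
    planes:   list of (a, b, c) coefficient triples.
    """
    return [
        i
        for i, (a, b, c) in enumerate(planes)
        if any(a * x + b * y + c < z for (x, y, z) in triangle)
    ]


top = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0), (0.0, 1.0, 1.0)]
planes = [
    (0.0, 0.0, 0.0),  # z = 0: entirely below the top face
    (0.0, 0.0, 2.0),  # z = 2: entirely above
    (2.0, 0.0, 0.0),  # z = 2x: below the top face near x = 0
    (1.0, 1.0, 1.0),  # z = 1 + x + y: touches the top only at one vertex
]
conflicts = conflict_list(top, planes)
```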

This leads us to the classical problem of computing the t lowest levels in an arrangement of n bivariate functions of constant description complexity. A standard approach for this goes via randomized incremental construction (RIC), see, e.g., [8, 42]. Here, one adds the functions one by one, in random order, while maintaining some representation of the first t levels on the functions inserted so far. Following previous work, we maintain a cell decomposition of the region below the t-level of the function graphs inserted so far, and we associate with each cell a conflict list consisting of all the remaining functions that cross it. If we run this process to completion, we get a suitable decomposition of the t shallowest levels of the “final” \({{\mathcal {A}}}(F)\). If we stop after inserting the first \(cn{\varepsilon }^{-2}k^{-1}\log n\) functions, which serve as the desired random sample \(S_k\), we obtain, in addition to (a suitable decomposition of) the t shallowest levels of \({{\mathcal {A}}}(S_k)\), the conflict lists of its cells (with respect to the whole F).

Our decomposition of choice is (a suitable shallow portion of) the standard vertical decomposition of an arrangement of surfaces in \({{\mathbb {R}}}^3\) (see [19, 49] for details). Each prism extends between two consecutive levels of the current arrangement, so this decomposition differs from the vertical shallow cutting that we are after (see Footnote 3). Nevertheless, we show how to transform this decomposition into a vertical shallow cutting, including the construction of the desired conflict lists of its semi-unbounded prisms. A fairly intricate analysis shows that the shallow cutting has expected complexity \(O(nk^{-1}\log ^2 n )\).

The implementation of such a RIC for the shallowest t levels of \({{\mathcal {A}}}(F)\) is far from trivial. It has been considered before for the case of planes. Mulmuley [41] described a RIC of the first t levels, when the lower envelope of the planes corresponds to the Voronoi diagram of a set of points in the xy-plane (under the standard algebraic manipulations alluded to above). Mulmuley’s procedure needs \(O(nt^2\log (n/t))\) expected time (see Footnote 4). Agarwal et al. [2] used a somewhat less standard randomized incremental algorithm and obtained a bound of \(O(n\log ^3n + nt^2)\) expected time. Their algorithm works for any set of planes. It maintains a point p in each prism, such that the level of p in \({{\mathcal {A}}}(F)\) is known, and it uses this information to prune away prisms that can be ascertained not to intersect the shallowest t levels of \({{\mathcal {A}}}(F)\). Finally, Chan [11] obtained a bound of \(O(n\log n + nt^2)\) expected time with an algorithm that can be viewed as a batched randomized incremental construction. Unfortunately, it is not clear how to apply some crucial components of these algorithms when F is a set of nonlinear functions.

We present and analyze a standard randomized incremental construction algorithm for the shallowest t levels of an arrangement \({{\mathcal {A}}}(F)\) of a set F of n bivariate functions with constant description complexity and linear envelope complexity. Our algorithm runs in \(O( nt \lambda _s(t)\log (n/t) \log n)\) expected time, where s is a constant that depends on the algebraic complexity of the functions of F (see a precise definition below) and \(\lambda _s(t)\) is the maximum length of a Davenport–Schinzel sequence on t symbols of order s [49] (see Footnote 5). To get this result, we improve a bound of Agarwal et al. [3] on the complexity of the vertical decomposition of the t shallowest levels in \({{\mathcal {A}}}(F)\). Agarwal et al. proved that this complexity is \(O(nt^{2+{\varepsilon }})\), for any fixed \({\varepsilon }> 0\), via a fairly complicated charging scheme. We improve this to \(O(nt\lambda _s(t))\), with a simpler argument.
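As a reminder of the standard definition behind \(\lambda _s(t)\): a Davenport–Schinzel sequence of order s has no two equal adjacent symbols and contains no alternating subsequence \(a \ldots b \ldots a \ldots b \ldots \) of length \(s+2\) for distinct symbols a and b. The Python sketch below checks this property by brute force; the function name is hypothetical.

```python
from itertools import combinations


def is_davenport_schinzel(seq, s):
    """Check whether `seq` is a Davenport-Schinzel sequence of order s."""
    # No two adjacent symbols may be equal.
    if any(x == y for x, y in zip(seq, seq[1:])):
        return False
    for a, b in combinations(set(seq), 2):
        # The longest a/b alternation equals the number of maximal runs
        # after restricting the sequence to the symbols a and b.
        runs, prev = 0, None
        for x in seq:
            if x in (a, b) and x != prev:
                runs += 1
                prev = x
        if runs >= s + 2:
            return False
    return True


checks = [
    is_davenport_schinzel("abab", 2),   # alternation of length 4 = s + 2: forbidden
    is_davenport_schinzel("abab", 3),   # allowed for order 3
    is_davenport_schinzel("abcba", 2),  # valid, of length 2*3 - 1
    is_davenport_schinzel("aab", 3),    # adjacent repetition: forbidden
]
```

For orders 1 and 2 the maximum lengths are known exactly, \(\lambda _1(t)=t\) and \(\lambda _2(t)=2t-1\); the sequence `abcba` attains the latter for \(t=3\).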

Using our randomized incremental algorithm, we construct a vertical shallow cutting of the first k levels in \({{\mathcal {A}}}(F)\), consisting of \(O(nk^{-1}\log ^2 n)\) prisms, each with a conflict list of size O(k). The construction time is \(O(n\lambda _s(\log n)\log ^3n)\).

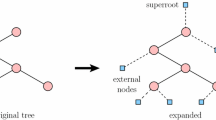

Once we have an efficient mechanism for constructing vertical shallow cuttings, we apply it, following and adapting the technique of Chan for the case of planes, to obtain our dynamic data structure. Before that, we re-examine Chan’s data structure, and we present it in a way that is easier to understand (in our opinion) and, at the same time, slightly faster than the original version. Our variant follows a standard route: we begin with a static data structure and extend it for insertions, using a (somewhat non-standard) variant of the well-known Bentley–Saxe binary counter technique [7]. Then, we show how to perform deletions via re-insertions of planes, using a lookahead deletion mechanism, the major innovation in Chan’s work. We believe that our analysis sheds additional light on the inner workings of Chan’s structure. We improve the amortized deletion time to \(O(\log ^5n)\), i.e., by a logarithmic factor. Deletions are the costliest operations in Chan’s structure and constitute the bottleneck in most of its applications. As mentioned, in recent work, Chan [14] achieved a further improvement, building upon our analysis, reducing the amortized deletion time to \(O(\log ^4n)\) and the amortized insertion time to \(O(\log ^2n)\).
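The textbook Bentley–Saxe binary counter technique can be sketched in a few lines of Python for insertion-only nearest neighbor search. This is the standard logarithmic method, not the variant developed in this paper, and it does not handle deletions; the class names are hypothetical, and `StaticNN` is a stand-in that answers queries by scanning rather than a real static structure.

```python
import math


class StaticNN:
    """Stand-in static structure: stores points and answers queries by scanning."""

    def __init__(self, points):
        self.points = list(points)

    def query(self, q):
        return min(self.points, key=lambda p: math.dist(p, q))


class BentleySaxeNN:
    """Insertion-only nearest neighbor search via the logarithmic method."""

    def __init__(self):
        # levels[i] is None or a StaticNN built on exactly 2**i points.
        self.levels = []

    def insert(self, p):
        # Like incrementing a binary counter: merge occupied levels upwards.
        carry = [p]
        i = 0
        while i < len(self.levels) and self.levels[i] is not None:
            carry.extend(self.levels[i].points)
            self.levels[i] = None
            i += 1
        if i == len(self.levels):
            self.levels.append(None)
        self.levels[i] = StaticNN(carry)

    def query(self, q):
        # Nearest neighbor is decomposable: combine the answers of all levels.
        # Raises ValueError if no point has been inserted yet.
        candidates = [lvl.query(q) for lvl in self.levels if lvl is not None]
        return min(candidates, key=lambda p: math.dist(p, q))


ds = BentleySaxeNN()
for point in [(0, 0), (10, 0), (0, 10), (10, 10), (5, 5)]:
    ds.insert(point)
occupied = [None if lvl is None else len(lvl.points) for lvl in ds.levels]
```

After five insertions the occupied levels mirror the binary representation 101 of the number 5: one structure of size 1 and one of size 4.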

We finally combine our shallow cutting construction with our improved version of Chan’s data structure, extended to more general functions, to obtain a dynamic data structure for vertical ray shooting into the lower envelope of a dynamically changing set of bivariate functions, as above. Our (worst-case, deterministic) query time is \(O(\log ^2n)\), the (amortized, expected) time for an insertion is \(O(\lambda _s(\log n)\log ^5n)\), and the (amortized, expected) time for a deletion is \(O(\lambda _s(\log n)\log ^9n)\). The larger polylogarithmic factors are a consequence of slightly weaker bounds on the complexity of an approximating level.

Plugging our new bounds into the applications in Agarwal et al. [3] and in Chan [13], we immediately improve several running times, replacing a factor of \(n^{\varepsilon }\) by a polylogarithmic factor. Some prominent examples are shown in the following table; details follow in Sect. 9. (Constants of proportionality are suppressed in the table.) The parameter s depends on the precise metric, and is defined in more detail later in the paper. Concrete values of s are given in the table for the specific respective applications.

| Problem | Old bound | New bound |
|---|---|---|
| Dynamic bichromatic closest pair in general planar metric | \(n^{\varepsilon }\) update [3] | \(\lambda _s(\log n)\log ^{5}n\) insertion, \(\lambda _s(\log n)\log ^{9}n\) deletion |
| Minimum planar bichromatic Euclidean matching | \(n^{2+{\varepsilon }}\) [3] | \(n^2\lambda _6(\log n) \log ^{9} n\) |
| Dynamic minimum spanning tree in \(L_p\)-metric | \(n^{\varepsilon }\) update [3] | \(\lambda _s(\log n)\log ^{11}n\) update |
| Dynamic intersection of unit balls in \({{\mathbb {R}}}^3\) | \(n^{\varepsilon }\) update [3], queries in \(\log n\) and \(\log ^4n\) (depending on the precise query) | \(\lambda _6(\log n)\log ^5 n\) insertion, \(\lambda _6(\log n)\log ^{9}n\) deletion, queries in \(\log ^{2} n\) and \(\log ^5 n\) (depending on the precise query) |

A particularly fruitful application domain for our data structure can be found in disk intersection graphs. These are defined as follows: Let \(S \subset {{\mathbb {R}}}^2\) be a finite set of point sites, each with an associated weight \(w_p > 0\), \(p \in S\); a site p with weight \(w_p\) represents the disk of radius \(w_p\) centered at p. The disk intersection graph for S, denoted D(S), has the sites in S as vertices, and there is an edge pq between two sites p, q in S if and only if \(|pq| \le w_p + w_q\), i.e., if the disk around p with radius \(w_p\) intersects the disk around q with radius \(w_q\). If all weights are 1, we call D(S) the unit disk graph for S. Disk intersection graphs are a popular model for geometrically defined graphs and networks, and enjoy an increasing interest in the research community, in particular due to applications in wireless sensor networks [10, 15, 27, 32, 34, 46]. The following table gives an overview of our results on disk graphs.
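The definition of D(S) translates directly into a quadratic-time construction, shown in the Python sketch below. It only illustrates the definition and is unrelated to the efficient algorithms discussed in this paper; the function name and the sample sites are hypothetical.

```python
import math
from itertools import combinations


def disk_graph_edges(sites):
    """Edges of the disk intersection graph D(S).

    sites: list of (x, y, w) triples, a point site with weight (radius) w > 0.
    Returns all index pairs (i, j), i < j, with |pq| <= w_p + w_q.
    """
    edges = []
    for (i, (xi, yi, wi)), (j, (xj, yj, wj)) in combinations(enumerate(sites), 2):
        if math.hypot(xi - xj, yi - yj) <= wi + wj:
            edges.append((i, j))
    return edges


sites = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0), (5.0, 0.0, 1.0), (7.5, 0.0, 2.0)]
edges = disk_graph_edges(sites)
```

In this example the four disks form two connected components, {0, 1} and {2, 3}.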

| Problem | Old bound | New bound |
|---|---|---|
| Shortest-path tree in a unit disk graph | \(n^{1+{\varepsilon }}\) [10] | \(n \lambda _6(\log n)\log ^{9}n\) |
| Dynamic connectivity in disk intersection graphs with radii in \([1,\Psi ]\) | \(n^{20/21}\) update, \(n^{1/7}\) query [15] | \(\Psi ^2 \lambda _6(\log n)\log ^{9}n\) update, \(\log n/{\log \log n}\) query |
| BFS tree in disk intersection graphs | \(n^{1+{\varepsilon }}\) [46] | \(n\lambda _6(\log n)\log ^9n \) |
| \((1+\rho )\)-spanners for disk intersection graphs | \(n^{4/3+{\varepsilon }}\rho ^{-4/3}\log ^{2/3}\Psi \) [27] | \(n\rho ^{-2}\lambda _6(\log n)\log ^{9}n\) |

Two of the applications listed above concern finding shortest-path trees in unit disk graphs, and BFS-trees in disk intersection graphs. Our new structures give improved bounds almost in a black-box fashion, using the respective techniques of Cabello and Jejčič [10] and of Roditty and Segal [46]. Very recently, Wang and Xue [51] presented a deterministic algorithm to find the shortest-path tree in a unit disk graph in \(O(n \log ^2n)\) time. The other two applications are a bit more involved. First, we give a data structure for the dynamic maintenance of the connected components in a disk intersection graph, as disks are inserted or deleted, where we assume that all disks have radii from the interval \([1, \Psi ]\). Then, we can apply our data structure in a grid-based approach that gives an update time that depends on \(\Psi \) and is polylogarithmic if \(\Psi \) is constant. The previous bound of Chan, Pǎtraşcu, and Roditty [15] is only slightly sublinear (albeit independent of \(\Psi \)). In both approaches, queries are faster than updates, but the query bound in [15] is a power of n, whereas here it is sub-logarithmic. Very recently, Kauer and Mulzer [36] presented a method that improves the dependence on \(\Psi \). Finally, we give an algorithm for computing a \((1 + \rho )\)-spanner in a disk intersection graph, for any \(\rho > 0\). A \((1 + \rho )\)-spanner for D(S) is a subgraph H of D(S) such that the shortest path distances in H approximate the shortest path distances in D(S) up to a factor of \(1 + \rho \). The previous construction by Fürer and Kasiviswanathan [27] has a running time that depends on the radius ratio \(\Psi \), as defined above. Our new algorithm is independent of \(\Psi \) and achieves almost linear running time, improving the previous algorithm by a factor of at least \(n^{1/3}\).

Paper outline. Section 3 gives further background and precise definitions. In Sect. 4, we describe how to obtain a terrain that approximates the k-level of \({{\mathcal {A}}}(F)\) by random sampling, via relative \((p,{\varepsilon })\)-approximations (see [30] and below). In Sect. 5, we define a vertical shallow cutting, based on our level approximation, and show how to compute it with a randomized incremental construction of the shallowest t levels in \({{\mathcal {A}}}(F)\). In Sect. 6, we describe in detail the randomized incremental construction and analyze it. Section 7 gives our improved variant of Chan’s structure for maintaining the lower envelope of planes. Combining our cuttings with Chan’s machinery as presented in Sect. 7, we obtain, in Sect. 8, an efficient procedure for dynamically maintaining the lower envelope of a collection of algebraic surfaces of constant description complexity and linear lower envelope complexity. Finally, in Sect. 9, we present several known applications, for which we obtain better bounds, and our new applications for disk graphs. Along the way, we also consider the case where the lower envelope complexity of F is superlinear, and extend our analysis to this more general setup.

3 Preliminaries

Let \({{\mathcal {F}}}\) be a family of bivariate functions \(f:{{\mathbb {R}}}^2\rightarrow {{\mathbb {R}}}\), and let F be a finite subset of \({{\mathcal {F}}}\). Throughout the paper, we assume that the functions in \({{\mathcal {F}}}\) are continuous, totally defined, and algebraic, and that they have constant description complexity. This means that the graph of each function is a semialgebraic set, defined by a constant number of polynomial equalities and inequalities of constant maximum degree. We will generally make no distinction between a function \(f \in {{\mathcal {F}}}\) and its graph \(\{(x,y,f(x,y)) \mid x, y \in {{\mathbb {R}}}\}\), which is a continuous xy-monotone surface, also called a terrain.

The lower envelope \({{\mathcal {E}}}_F\) of F is the graph of the pointwise minimum of the functions of F. The xy-projection of \({{\mathcal {E}}}_F\) yields a subdivision of the xy-plane called the minimization diagram \({{\mathcal {M}}}_F\) of F. It can be represented by a standard doubly connected edge list (DCEL, see, e.g., [8]). Each (two-dimensional) face f of \({{\mathcal {M}}}_F\) is labeled by the function in F that attains the pointwise minimum over f.

When \({{\mathcal {M}}}_F\) consists of O(|F|) faces, vertices, and edges, for any finite \(F \subseteq {{\mathcal {F}}}\), we say that \({{\mathcal {F}}}\) has lower envelopes of linear complexity. We will mostly assume this to be the case for the families \({{\mathcal {F}}}\) considered here. In particular, this assumption holds when \({{\mathcal {F}}}\) is the family of all nonvertical planes, or when \({{\mathcal {F}}}\) is a family of distance functions under some metric (or under some so-called convex distance function [20]), each of which measures the distance of a point in the xy-plane to some given site (cf. Sect. 9), where the sites are assumed to be pairwise disjoint closed convex sets.

For simplicity, we also assume that F is in general position, i.e., no more than three functions meet at a common point, no more than two functions meet in a one-dimensional curve, no pair of functions are tangent to each other, and no function is tangent to the intersection curve of two other functions. For example, this holds if the coefficients of the polynomials defining the functions in F are algebraically independent over \({{\mathbb {R}}}\) [49]. Furthermore, we assume that the coordinate frame is generic, so that the xy-projections of the intersection curves of any pair of functions in F are also in general position, defined in an analogous sense.

Model of computation. We assume a (by now fairly standard) algebraic model of computation, in which primitive operations that involve a constant number of functions of \({{\mathcal {F}}}\) take constant time. Such operations include: computing the intersection points of any three functions, computing (a suitable representation of) the intersection curve of any two functions, decomposing it into connected components, finding a representative point on each such component, computing the points of intersection between the xy-projections of two intersection curves, testing whether a point lies below, on, or above a function graph, and so on. This model is reasonable, because there are standard techniques in computational algebra (see, e.g., [6, 47]), and actual packages (such as the one described by Boissonnat and Teillaud [9]), that perform such operations exactly in constant time. Technically, these methods and packages determine the truth value of any Boolean predicate of constant description complexity. That is, they are not expected to provide precise values of roots of polynomial equations, but they can determine, exactly and in constant time, any algebraic relation between such roots and/or similar entities, expressed by a constant number of polynomial equations and inequalities of constant maximum degree.

Shallow cuttings. Let \({{\mathcal {A}}}(F)\) be the arrangement of a set F of n bivariate functions from \({{\mathcal {F}}}\) in \({{\mathbb {R}}}^3\). The level of a point \(q\in {{\mathbb {R}}}^3\) in \({{\mathcal {A}}}(F)\) is the number of functions of F that pass strictly below q. For \(0\le k \le n-1\), the k-level \(L_k(F)\) of \({{\mathcal {A}}}(F)\) is the closure of the set of all points at level k that lie on a function in F. We denote by \(L_{\le k}(F)\) the union of the first k levels of \({{\mathcal {A}}}(F)\). For given parameters \(k \in \{0, \dots ,n-1\}\), \(r \in \{1, \dots , n\}\), a k-shallow \(r^{-1}\)-cutting in \({{\mathcal {A}}}(F)\) is a collection \(\Lambda \) of pairwise openly disjoint regions \(\tau \), each of constant description complexity, such that the union of all \(\tau \in \Lambda \) covers \(L_{\le k}(F)\), and such that the interior of each \(\tau \in \Lambda \) is intersected by at most n/r functions in F. The size of \(\Lambda \) is the number of regions in \(\Lambda \).
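The definition of the level of a point can be made concrete with a minimal sketch. Functions are modeled here as Python callables of (x, y); the helper `plane` and the function name `level` are illustrative assumptions, not notation from the paper.

```python
def plane(a, b, c):
    """The bivariate function (x, y) -> a*x + b*y + c (a nonvertical plane)."""
    return lambda x, y: a * x + b * y + c

def level(functions, q):
    """Number of functions whose graph passes strictly below q = (x, y, z)."""
    x, y, z = q
    return sum(1 for f in functions if f(x, y) < z)

F = [plane(0, 0, 0), plane(0, 0, 1), plane(0, 0, 2)]
on_middle_plane = level(F, (0.0, 0.0, 1.0))   # lies on z = 1, only z = 0 is below
above_all = level(F, (0.0, 0.0, 2.5))
```

A point that lies on a function and has level k belongs to the k-level; a region of a k-shallow cutting only needs to cover points of level at most k.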

In addition, we call \(\Lambda \) vertical if every region \(\tau \in \Lambda \) is a (semi-unbounded) pseudo-prism. A pseudo-prism \(\tau \) of this kind consists of all points that lie vertically below some pseudo-trapezoid \({\overline{\tau }}\) on a function \(f \in F\). Such a pseudo-trapezoid is defined as the set

\[{\overline{\tau }} = \bigl \{(x,y,f(x,y)) \mid x^- \le x \le x^+,\ \psi ^-(x) \le y \le \psi ^+(x)\bigr \},\]

for real numbers \(x^- < x^+\) and (semi-)algebraic functions \(\psi ^-\), \(\psi ^+:{{\mathbb {R}}}\rightarrow {{\mathbb {R}}}\) of constant description complexity; some of these boundary constraints might be absent. For planes, \({\overline{\tau }}\) will simply be a planar y-vertical trapezoid, and we do not insist that \({\overline{\tau }}\) be contained in one of the input planes. Since the interior of a pseudo-prism in a vertical k-shallow \(r^{-1}\)-cutting is intersected by at least k functions, we must have \(k \le n/r\). In our setting, we will set \(r = \Theta (n/k)\), with a sufficiently small constant of proportionality, which is the case most relevant for all our applications.

Matoušek [40] proved that for any n hyperplanes in \({{\mathbb {R}}}^d\), there is a k-shallow \(r^{-1}\)-cutting of size \(O\bigl ({q^{\lceil d/2\rceil }r^{\lfloor d/2\rfloor }}\bigr )\), for \(q = kr/n+1\). For the most relevant case \(k=\Theta (n/r)\), we get \(q=O(1)\) and a cutting of size \(O\bigl (r^{\lfloor d/2\rfloor }\bigr )\), a significant improvement over the general bound \(O(r^d)\) for a cutting that covers all of \({{\mathcal {A}}}(F)\), rather than just \(L_{\le k}(F)\) [17] (Footnote 6). For example, for planes in three dimensions, we get cuttings of size O(r) instead of \(O(r^3)\). This has led to improved solutions of many range searching and related problems (see, e.g., [16] and the references therein). Matoušek [40] presented a deterministic algorithm to construct a shallow cutting in polynomial time. If \(r < n^\delta \), the running time improves to \(O(n \log r)\), where \(\delta > 0\) is a sufficiently small constant depending on d. Later, Ramos [45] presented a (rather complicated) randomized algorithm for \(d = 2,3\) that constructs a hierarchy of shallow cuttings for a geometric sequence of \(O(\log n)\) values of r (and \(k=\Theta (n/r)\)), in \(O(n\log n)\) overall expected time. Recently, Chan and Tsakalidis [16] gave a deterministic algorithm for the same task. Their algorithm can be stopped early, to obtain an O(n/r)-shallow \(r^{-1}\)-cutting in \(O(n \log r)\) time. Interestingly, the analysis of Chan and Tsakalidis uses Matoušek’s theorem on the existence of an O(n/r)-shallow \(r^{-1}\)-cutting of size O(r) as a black box.
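To get a feeling for the gap between the shallow and the general bound, the following sketch evaluates the expression inside Matoušek's bound, ignoring the hidden constant. The function name is hypothetical, and the numbers are only an illustration of the formula quoted above, not a statement about any concrete construction.

```python
import math

def shallow_cutting_size_bound(n, k, r, d):
    """Value of q^ceil(d/2) * r^floor(d/2) with q = k*r/n + 1,
    i.e. the bound on the cutting size up to a constant factor."""
    q = k * r / n + 1
    return q ** math.ceil(d / 2) * r ** math.floor(d / 2)

# Planes in three dimensions with k = n/r, so that q = 2.
shallow = shallow_cutting_size_bound(n=1000, k=10, r=100, d=3)
general = 100 ** 3  # the general bound r^d, again without constants
```

With these parameters the shallow bound is linear in r, while the general bound is cubic.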

Chan [12] was the first to point out the existence of vertical shallow cuttings for planes in \({{\mathbb {R}}}^3\). Such a cutting originates from a polyhedral triangulated xy-monotone terrain that lies entirely above \(L_{\le k}(F)\), so that each triangle \({\overline{\tau }}\) of the terrain generates a semi-unbounded triangular prism \(\tau \) with \({\overline{\tau }}\) as its top face. These shallow cuttings have many applications, in particular in Chan’s data structure for dynamic maintenance of lower envelopes [13, 14], as reviewed above. The deterministic construction of Chan and Tsakalidis [16] yields vertical shallow cuttings. Recently, Har-Peled et al. [29] gave an alternative construction with additional favorable properties (Footnote 7).

Things become technically more involved when we allow more general algebraic functions. For example, decomposing cells of the arrangement into subcells of constant description complexity is easy for hyperplanes (where the subcells are simplices), using, e.g., a bottom-vertex triangulation [18, 22]. For general curves or surfaces, the only known general-purpose cell decomposition technique is vertical decomposition [19, 49]. In the plane, the complexity of such a decomposition is proportional to the complexity of the original arrangement, and in three and four dimensions, near (but not quite) optimal upper bounds are known [19, 37]. However, in dimension five and higher, there are significant gaps between the known upper and lower bounds [19]. Regarding shallow cuttings for general surfaces, we are aware only of the aforementioned result of Agarwal et al. [3], and of no work that considers vertical shallow cuttings for this general setup.

4 Approximate k-Levels

Here and in the following section, we show the existence of vertical shallow cuttings for surfaces. Later, we address the issue of how to efficiently compute these cuttings and the conflict lists of their pseudo-prisms.

Let \({{\mathcal {F}}}\) be a family of functions as in Sect. 3, and let F be a collection of n functions from \({{\mathcal {F}}}\). Recall that we assume that the lower envelope complexity of \({{\mathcal {F}}}\) is linear. Agarwal et al. [3] provide a shallow cutting for \({{\mathcal {A}}}(F)\), and show that, for any fixed \({\varepsilon }>0\), \(0\le k \le n-1\), and \(1\le r\le n\), there is a k-shallow (1/r)-cutting of size \(O(q^{2+{\varepsilon }}r)\), where \(q=kr/n+1\) (and the constant of proportionality depends on \({\varepsilon }\)). This is slightly sub-optimal when q is large. However, we are interested in the case \(r\approx n/k\), so \(q=O(1)\) and the bound becomes O(r), which is optimal. Nonetheless, the cutting of Agarwal et al. [3] is not vertical, and is therefore useless for our purposes.

Known techniques for computing vertical shallow cuttings for planes, and the conflict lists of their prisms [16, 29], crucially rely on the fact that if a plane intersects a semi-unbounded prism \(\tau \), it must also intersect a vertical edge of \(\tau \). This is not true for general functions. Thus, we use a somewhat different approach that results in cuttings whose size is a (small) polylogarithmic factor off optimal. It is an interesting challenge to tighten the bound. For the time being, though, we are not aware of any alternative construction that meets our specific needs.

Let \(0 < {\varepsilon }\le 1/2\) be a specified error parameter (Footnote 8), and let \(0\le k \le n-1\). We will approximate the level \(L_{k}(F)\) of \({{\mathcal {A}}}(F)\) by a terrain \({\overline{T}}_{k}\) (which will actually be a level in the arrangement of some sample of F), with the following properties (for simplicity, we ignore in this paper the trivial issue of rounding).

1. \({\overline{T}}_{k}\) fully lies above \(L_k(F)\) and below \(L_{(1+{\varepsilon }) k}(F)\).

2. The complexity \(|{\overline{T}}_{k}|\) of \({\overline{T}}_{k}\) is \(O(n{\varepsilon }^{-5}k^{-1}\log ^2n)\).

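To construct \({\overline{T}}_{k}\), we use the notion of relative \((p,{\varepsilon })\)-approximation (see Har-Peled and Sharir [30] for more details): for a range space \((X,{{\mathcal {R}}})\) of finite VC-dimension, and for given parameters \(p,{\varepsilon }\in (0,1)\), a set \(A\subseteq X\) is called a relative \((p,{\varepsilon })\)-approximation, if, for each range \(R\in {{\mathcal {R}}}\), we have

\[\left| \frac{|R\cap A|}{|A|} - \frac{|R|}{|X|}\right| \le \begin{cases} {\varepsilon }\,\dfrac{|R|}{|X|}, &amp; \text {if } |R| \ge p\,|X|,\\[2mm] {\varepsilon }\,p, &amp; \text {if } |R| \le p\,|X|. \end{cases} \tag{1}\]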

As shown by Har-Peled and Sharir [30] (following Li et al. [39], see also Har-Peled’s book [28]), for any \(q \in (0,1)\), a random sample of size

\[O\Bigl (\frac{1}{{\varepsilon }^2 p}\Bigl (\log \frac{1}{p} + \log \frac{1}{q}\Bigr )\Bigr )\]

is a relative \((p, {\varepsilon })\)-approximation with probability at least \(1-q\), where the constant of proportionality depends linearly on the VC-dimension, but is independent of \({\varepsilon }\) and p.
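For a finite ground set and an explicit list of ranges, the defining condition of a relative \((p,{\varepsilon })\)-approximation can be checked directly. The sketch below assumes the standard two-case definition from Har-Peled and Sharir [30] (relative error \({\varepsilon }\) for ranges of measure at least p, additive error \({\varepsilon }p\) for lighter ranges); the function name and the set-based encoding are illustrative choices only.

```python
def is_relative_approximation(X, A, ranges, p, eps):
    """Check whether A (a subset of X) is a relative (p, eps)-approximation
    for the given ranges, each of which is a subset of the finite set X."""
    for R in ranges:
        full = len(R & X) / len(X)      # measure of R in the ground set
        sampled = len(R & A) / len(A)   # measure of R in the sample
        # Heavy ranges get relative error eps, light ranges additive error eps*p.
        tolerance = eps * full if full >= p else eps * p
        if abs(sampled - full) > tolerance:
            return False
    return True

X = set(range(100))
ranges = [set(range(40)), set(range(10)), set(range(2))]
even_sample = set(range(0, 100, 2))   # hits every range proportionally
biased_sample = set(range(50))        # over-represents small elements
good = is_relative_approximation(X, even_sample, ranges, p=0.05, eps=0.1)
bad = is_relative_approximation(X, biased_sample, ranges, p=0.05, eps=0.1)
```

In the setting of this section, the ground set is F and the ranges are the sets of functions that intersect a given object, so an explicit check of this kind is not feasible; the sketch is only meant to clarify the definition.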

We apply this general machinery to the range space \(({{\mathcal {F}}},{{\mathcal {R}}})\) defined as follows. An object o can be either a straight line, a segment, a ray, or an edge in the arrangement of a constant number of functions of \({{\mathcal {F}}}\), a face in such an arrangement, a connected portion of such a face cut off by vertical planes orthogonal to the x-axis, or a connected component of the intersection of such a face with a plane orthogonal to the x-axis. Each range \(R \in {{\mathcal {R}}}\) corresponds to an object o as above, and is the set of functions of \({{\mathcal {F}}}\) that intersect o. The fact that \(({{\mathcal {F}}},{{\mathcal {R}}})\) has finite VC-dimension follows by standard arguments (see, e.g., [28, 49]). Thus, let \(S_k\subseteq F\) be a random sample of size

\[r_k = \frac{c\,n}{{\varepsilon }^2 k}\log n,\]

where \(c > 0\) is a suitable constant, proportional to the VC-dimension of \(({{\mathcal {F}}},{{\mathcal {R}}})\). By the previous discussion, we can choose c such that \(S_k\) is a relative \(({k}/({2n}),{{\varepsilon }}/{3})\)-approximation for \(({{\mathcal {F}}},{{\mathcal {R}}})\), with probability at least \(1-1/n^b\), for some sufficiently large constant \(b \ge 1\). Note that for this choice of \(r_k\) to make sense, we need \(k = \Omega ( {\varepsilon }^{-2}\log n)\). The case of smaller k is simpler and will be treated below.

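Set \({\overline{T}}_k\) to a random level \(L_t(S_k)\), where t is chosen uniformly in the range

\[\bigl [(1+{\varepsilon }/3)\,\lambda ,\ (1+{\varepsilon }/2)\,\lambda \bigr ], \qquad \text {where } \lambda = \frac{k\,r_k}{n} = \frac{c}{{\varepsilon }^2}\log n.\]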

We refer to \({\overline{T}}_k\) as an \({\varepsilon }\)-approximation to level \(L_k(F)\). This terminology is justified in the following lemmas. From now on, we will assume that \(S_k\) is indeed a relative \(({k}/({2n}), {{\varepsilon }}/{3})\)-approximation. The bounds in Lemmas 4.2 and 4.3 hold notwithstanding, since the assumption fails with probability at most \(1/n^b\), so that, by making b sufficiently large (as we can), the event of failure contributes a negligible amount to the relevant expectation.
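The choice of parameters can be sketched as follows. This is a minimal sketch under stated assumptions: the sample size is taken to be \(r_k = (cn/({\varepsilon }^2k))\log n\) and \(\lambda = k r_k/n\), which is consistent with the requirement \(k = \Omega ({\varepsilon }^{-2}\log n)\) and with the bounds in the lemmas below; the natural logarithm, the default value of `c`, and both function names are illustrative choices rather than values prescribed by the paper.

```python
import math
import random

def sample_parameters(n, k, eps, c=1.0):
    """Return (r_k, lam): the assumed sample size and the sample level that
    corresponds to level k of the full arrangement."""
    r_k = c * n * math.log(n) / (eps ** 2 * k)
    lam = k * r_k / n
    return r_k, lam

def random_level_index(lam, eps, rng=random):
    """Pick t uniformly among the integers in [(1 + eps/3)*lam, (1 + eps/2)*lam]."""
    lo = math.ceil((1 + eps / 3) * lam)
    hi = math.floor((1 + eps / 2) * lam)
    if lo > hi:
        raise ValueError("range of admissible levels contains no integer")
    return rng.randint(lo, hi)

n, k, eps, c = 10 ** 6, 10 ** 4, 0.5, 3.0
r_k, lam = sample_parameters(n, k, eps, c)
t = random_level_index(lam, eps, random.Random(0))
```

Note that \(\lambda \) does not depend on k: every approximate level is a level of index \(\Theta ({\varepsilon }^{-2}\log n)\) in its own sample, and only the sample size changes with k.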

Lemma 4.1

The terrain \({\overline{T}}_k\) lies between levels k and \((1+{\varepsilon })\,k\) of \({{\mathcal {A}}}(F)\).

Proof

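Let p be a point of level k in \({{\mathcal {A}}}(F)\), and let \(R^{(p)}\) denote the range of those functions that pass below p. By assumption, \(S_k\) is a relative \(({k}/{(2n)},{{\varepsilon }}/{3})\)-approximation for a range space that includes \(R^{(p)}\). Since

\[|R^{(p)}| = k \ge \frac{k}{2n}\cdot n,\]

the first case in (1) implies that

\[|R^{(p)}\cap S_k| \le \Bigl (1+\frac{{\varepsilon }}{3}\Bigr )\frac{k}{n}\,|S_k| = \Bigl (1+\frac{{\varepsilon }}{3}\Bigr )\lambda .\]

Thus, at most \((1+{{\varepsilon }}/{3})\,\lambda \) functions of \(S_k\) pass below p, i.e., p lies on or below \({\overline{T}}_k\). Similarly, let q be a point of level \((1+{\varepsilon })\,k\) in \({{\mathcal {A}}}(F)\). By a symmetric argument, at least

\[\Bigl (1-\frac{{\varepsilon }}{3}\Bigr )(1+{\varepsilon })\,\lambda \ge \Bigl (1+\frac{{\varepsilon }}{2}\Bigr )\lambda \]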

functions of \(S_k\) pass below q (using \({\varepsilon }\le 1/2\)). Hence, q lies on or above \({\overline{T}}_k\), and the lemma follows. \(\square \)
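The role of the assumption \({\varepsilon }\le 1/2\) in the last step can be checked directly. Assuming, as the first case of (1) suggests, that the lower bound on the number of sampled functions below q has the form \((1-{\varepsilon }/3)(1+{\varepsilon })\,\lambda \), we have

\[\Bigl (1-\frac{{\varepsilon }}{3}\Bigr )(1+{\varepsilon }) = 1 + \frac{2{\varepsilon }}{3} - \frac{{\varepsilon }^2}{3} \ge 1 + \frac{{\varepsilon }}{2} \iff \frac{{\varepsilon }}{6} \ge \frac{{\varepsilon }^2}{3} \iff {\varepsilon }\le \frac{1}{2}.\]

Hence the number of functions of \(S_k\) below q is at least \((1+{\varepsilon }/2)\,\lambda \), the largest index that t can take.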

Lemma 4.2

The expected number of vertices p of \({{\mathcal {A}}}(S_k)\) whose level in \({{\mathcal {A}}}(S_k)\) is between \((1+{{\varepsilon }}/{3})\,\lambda \) and \((1+{{\varepsilon }}/{2})\,\lambda \) is \(O({n}{\varepsilon }^{-6}k^{-1}\log ^3n)\).

Proof

Let p be a vertex of \({{\mathcal {A}}}(F)\), and let \(\ell _{S_k}(p)\) denote the level of p in \({{\mathcal {A}}}(S_k)\). As follows from the proof of Lemma 4.1, the vertex p can satisfy \((1+{{\varepsilon }}/{3})\,\lambda \le \ell _{S_k}(p) \le (1+{{\varepsilon }}/{2})\,\lambda \) only if the level of p in \({{\mathcal {A}}}(F)\) lies between k and \((1+{\varepsilon })\,k\). The probability that p shows up in \({{\mathcal {A}}}(S_k)\) is (Footnote 9)

\[O\bigl ((r_k/n)^3\bigr ) = O\bigl ({\varepsilon }^{-6}k^{-3}\log ^3n\bigr ).\]

As shown by Clarkson and Shor [23], there are \(O(n((1+{\varepsilon })\,k)^2) = O(nk^2)\) vertices in \(L_{\le (1+{\varepsilon })k}(F)\). Hence, the expected number of vertices p of \({{\mathcal {A}}}(S_k)\) with \((1+{{\varepsilon }}/{3})\,\lambda \le \ell _{S_k}(p) \le (1+{{\varepsilon }}/{2})\,\lambda \) is \(O({n}{\varepsilon }^{-6}k^{-1}\log ^3n)\), as claimed. \(\square \)

Lemma 4.3

The expected complexity of \({\overline{T}}_k\), over the random choices of \(S_k\) and of the level in \([(1+{{\varepsilon }}/{3})\,\lambda ,(1+{{\varepsilon }}/{2})\,\lambda ]\), is

\[O\bigl (n\,{\varepsilon }^{-5}k^{-1}\log ^2n\bigr ).\]

Proof

Since a level in \({{\mathcal {A}}}(S_k)\) is an xy-monotone terrain, and since each vertex of \({{\mathcal {A}}}(S_k)\) appears in only three (consecutive) levels, the sum of the complexities of all the \(L_j(S_k)\), for \(j\in [ (1+{{\varepsilon }}/{3})\,\lambda ,(1+{{\varepsilon }}/{2})\,\lambda ]\), is proportional to the number of vertices in \({{\mathcal {A}}}(S_k)\) with level between \((1+{{\varepsilon }}/{3})\,\lambda \) and \((1+{{\varepsilon }}/{2})\,\lambda \). Thus, by Lemma 4.2, the expected complexity of a random level in this range is

\[O\Bigl (\frac{n\,{\varepsilon }^{-6}k^{-1}\log ^3n}{{\varepsilon }\lambda /6}\Bigr ) = O\bigl (n\,{\varepsilon }^{-5}k^{-1}\log ^2n\bigr ),\]

as claimed. \(\square \)

Finally, we discuss the case \(k = O({\varepsilon }^{-2} \log n)\). In this case, we pick t uniformly at random in the interval \([k,(1+{\varepsilon })k]\), and we set \({\overline{T}}_k\) to \(L_t(F)\). By construction, it is clear that \({\overline{T}}_k\) approximates the k-level in \({{\mathcal {A}}}(F)\). Furthermore, the same Clarkson–Shor bound used in the proofs of Lemmas 4.2 and 4.3 shows that \({\overline{T}}_k\) has expected complexity \(O({nk}/{{\varepsilon }}) = O({n}{\varepsilon }^{-5}k^{-1}\log ^2n)\), using our assumption on k.
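The two bounds in the last sentence can be spelled out as follows. The first \((1+{\varepsilon })k\) levels have \(O(nk^2)\) vertices in total, and t is chosen among roughly \({\varepsilon }k\) levels, which gives the expected complexity \(O(nk^2/({\varepsilon }k)) = O(nk/{\varepsilon })\). Writing the assumption on k as \(k \le C{\varepsilon }^{-2}\log n\), for a constant C that is introduced here only for illustration, we get

\[\frac{nk}{{\varepsilon }} = \frac{nk^2}{{\varepsilon }k} \le \frac{n\,(C{\varepsilon }^{-2}\log n)^2}{{\varepsilon }k} = C^2\,n\,{\varepsilon }^{-5}k^{-1}\log ^2n.\]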

Remark

The same result holds for general lower envelope complexity. Suppose that every set of m functions in \({{\mathcal {F}}}\) has lower envelope complexity at most \(\psi (m)\), where we assume (or require) that \(m \mapsto \psi (m)/m\) is monotonically increasing. Then, given a set F of n functions from \({{\mathcal {F}}}\), for every \({\varepsilon }\in (0, 1/2]\) and for every \(0\le k \le n-1\), we can find a terrain \({\overline{T}}_k\) that lies fully between \(L_k(F)\) and \(L_{(1+{\varepsilon })\,k}(F)\) and that has complexity \(O(\psi (n/k)\,{\varepsilon }^{-5}\log ^2n)\). Indeed, the argument proceeds as above, with slightly adjusted bounds. The Clarkson–Shor bound in the proof of Lemma 4.2 now shows that there are \(O\bigl ((1+{\varepsilon })^3 k^3 \psi (n/(1+{\varepsilon })k) \bigr ) =O(k^3\psi (n/k))\) vertices in \(L_{\le (1+{\varepsilon })k}(F)\), so the expected number of vertices in \({{\mathcal {A}}}(S_k)\) with level between \((1+{\varepsilon }/3)\,\lambda \) and \((1+{\varepsilon }/2)\,\lambda \) is \(O(\psi (n/k)\,{\varepsilon }^{-6}\log ^3n)\). Dividing by \({\varepsilon }\lambda /6\), we obtain the claimed bound.

5 From Approximate Levels to Shallow Cuttings

Having obtained an approximate level \({\overline{T}}_k\) as in Sect. 4, we would like to turn \({\overline{T}}_k\) into a shallow cutting for \(L_{\le k}(F)\) by creating for each face \({\overline{\varphi }}\) of \({\overline{T}}_k\) a semi-unbounded vertical pseudo-prism \(\varphi \) that consists of the points vertically below \({\overline{\varphi }}\). For brevity, we will refer to these pseudo-prisms simply as prisms, and we denote them by \(T_k\). The only issue is that the faces \({\overline{\varphi }}\) need not have constant complexity, so that the corresponding prisms might be crossed by too many functions in F.

Thus, we decompose each face \({\overline{\varphi }}\) of \({\overline{T}}_k\) into sub-faces of constant complexity, using two-dimensional vertical decomposition. More precisely, we project each face \({\overline{\varphi }}\) onto the xy-plane, and we decompose the resulting projection \({\overline{\varphi }}^*\) into y-vertical pseudo-trapezoids by erecting y-vertical segments from each vertex of \({\overline{\varphi }}^*\) and from each point of vertical tangency on its boundary, extending them either to infinity or until they hit another edge of \({\overline{\varphi }}^*\). By planarity, the number of pseudo-trapezoids is proportional to the complexity of \({\overline{\varphi }}\). We lift each resulting pseudo-trapezoid \(\tau ^*\) into a prism \(\tau \), consisting of all the points vertically below \({\overline{\varphi }}\) that project to \(\tau ^*\) (Footnote 10). Our cutting \(\Lambda _k\) consists of all these prisms \(\tau \), and we denote by \({\overline{\Lambda }}_k\) the terrain formed by the ceilings \({\overline{\tau }}\), for \(\tau \in \Lambda _k\). Then, \({\overline{\Lambda }}_k\) is a refinement of \({\overline{T}}_k\). As we will shortly show, \(\Lambda _k\) is indeed a shallow cutting for \(L_{\le k}(F)\). For each prism \(\tau \in \Lambda _k\), the conflict list \({{\,\mathrm{CL}\,}}(\tau )\) is the set of functions of F that intersect \(\tau \).

Lemma 5.1

\(\Lambda _k\) is a shallow cutting of the first k levels of \({{\mathcal {A}}}(F)\). It consists (in expectation) of

\[O\bigl (n\,{\varepsilon }^{-5}k^{-1}\log ^2n\bigr )\]

prisms, and each prism in \(\Lambda _k\) intersects at least k and at most \((1+2{\varepsilon })\,k\) graphs of functions of F.

Proof

Let \(\tau \in \Lambda _k\) and let p be a vertex of the ceiling \({\overline{\tau }}\) of \(\tau \). By Lemma 4.1, the level of p in \({{\mathcal {A}}}(F)\) is in \([k,(1+{\varepsilon })\,k]\). Thus, at most \((1+{\varepsilon })\,k\) functions in F pass below all vertices of \({\overline{\tau }}\). Furthermore, since \(\overline{\tau }\) does not intersect any function in \(S_k\), since \(S_k\) is a relative \(({k}/({2n}),{{\varepsilon }}/{3})\)-approximation for \(({{\mathcal {F}}},{{\mathcal {R}}})\), and since \({\overline{\tau }}\) induces a range in \({{\mathcal {R}}}\), by the second bound in (1), it follows that at most

\[\frac{{\varepsilon }}{3}\cdot \frac{k}{2n}\cdot n = \frac{{\varepsilon }k}{6}\]

functions of F cross \({\overline{\tau }}\). Since any function \(f\in F\) that intersects \(\tau \) either passes below all vertices of \({\overline{\tau }}\) or crosses \({\overline{\tau }}\), we get

\[|{{\,\mathrm{CL}\,}}(\tau )| \le (1+{\varepsilon })\,k + \frac{{\varepsilon }k}{6} \le (1+2{\varepsilon })\,k.\]

The construction of \(\Lambda _k\) ensures that \(|\Lambda _k|\) is proportional to the complexity of \({\overline{T}}_k\), so, by Lemma 4.3, it satisfies (in expectation) the bound asserted in the lemma. \(\square \)

Remark

More generally, Agarwal et al. [3] show the following: let \(\psi :\mathbb {N} \rightarrow \mathbb {N}\) be such that any m functions in \({{\mathcal {F}}}\) have a lower envelope of complexity at most \(\psi (m)\). Let \(F \subset {{\mathcal {F}}}\) be a set of n functions. Then, for any \(k \in \{1, \dots , n-1\}\), there exists a k-shallow \(\Theta (k/n)\)-cutting for F of size \(O(\psi (n/k))\). Our techniques also generalize to this case. In particular, we obtain the following result.

Lemma 5.2

For any \(k \in \{1, \dots , n-1\}\), our sampling procedure yields a shallow cutting \(\Lambda _k\) of the first k levels of \({{\mathcal {A}}}(F)\). It consists (in expectation) of \(O({\varepsilon }^{-5}\psi (n/k) \log ^2n)\) prisms, and each prism in \(\Lambda _k\) intersects at least k and at most \((1+2{\varepsilon })\,k\) graphs of functions of F.

Proof

We only need a more general bound on the complexity of \({\overline{T}}_k\). By Clarkson–Shor, in general, there are \(O(\psi (n/k)\,k^3)\) vertices in \(L_{\le (1+{\varepsilon })k}(F)\), so we get the bound \(O({\varepsilon }^{-6}\psi (n/k)\log ^3n)\) in Lemma 4.2 and \(O({\varepsilon }^{-5}\psi (n/k)\log ^2n)\) in Lemma 4.3. Now, the result follows as before. \(\square \)

6 Randomized Incremental Construction of the \(\le t\) Level

Again, let \({{\mathcal {F}}}\) be a family of bivariate functions in \({{\mathbb {R}}}^3\) with constant description complexity and with linear lower envelope complexity. Let F be a subset of n members of \({{\mathcal {F}}}\), which we assume to be in general position, and let \(0\le t \le n-1\). Our goal is to construct the first t levels of \({{\mathcal {A}}}(F)\). We describe an algorithm with expected running time \(O(nt\lambda _s(t)\log (n/t)\log n)\) and with expected storage \(O(nt\lambda _s(t))\), where s is a constant that depends on \({{\mathcal {F}}}\), and \(\lambda _s(t)\) is the familiar Davenport–Schinzel bound [49]. Our algorithm can be used to compute a vertical shallow cutting as prescribed in Sect. 5, together with the conflict lists of its prisms (Footnote 11).

We follow the standard randomized incremental construction (RIC) paradigm: we insert the surfaces of F one at a time, in random order, and maintain, after each insertion, the first t levels in the arrangement of the surfaces inserted so far (t stays fixed during the process). Number the elements of F in the random insertion order as \(f_1,\dots , f_n\), and put \(F_i=\{f_1,\dots ,f_i\}\), for \(i=1,\dots ,n\). As is standard in the RIC approach, the algorithm maintains a decomposition (the standard vertical decomposition in our case) of \(L_{\le t}(F_i)\) into cells of constant description complexity (these are not necessarily the semi-unbounded prisms of the vertical shallow cutting that we are after—see below), and keeps the conflict list for each cell \(\tau \), i.e., the set of all functions in F that cross \(\tau \). When the next function \(f_{i+1}\) is inserted, the conflict lists can be used to retrieve the cells that are crossed by \(f_{i+1}\). These cells are “destroyed”, as they no longer appear in the new decomposition, and are partitioned by \(f_{i+1}\) into fragments. These fragments are not necessarily valid prisms for the vertical decomposition of \(L_{\le t}(F_{i+1})\), and may need to be merged and refined into the correct new cells. In addition, we have to construct the conflict lists of the new cells, which are obtained from the conflict lists of the destroyed cells.

6.1 Computing the First t Levels

After each insertion, we maintain the vertical decomposition \({{\,\mathrm{VD}\,}}_{\le t}(F_i)\) of \(L_{\le t}(F_i)\), the first t levels of \({{\mathcal {A}}}(F_i)\). We obtain \({{\,\mathrm{VD}\,}}_{\le t}(F_i)\) by applying two decomposition stages to each cell of \(L_{\le t}(F_i)\). (We reiterate that this decomposition differs from the vertical shallow decomposition used above, in the sense that its prisms are in general not semi-unbounded; see below.)

We call a cell C of \(L_{\le t}(F_i)\) a stage-0 cell. In the first stage, we erect a vertical wall within C through each edge e of \(L_{\le t}(F_i)\) on \({\partial }C\). Each such wall is the union of all maximal vertical segments that lie in (the closure of) C and pass through the points of e. These walls partition C into stage-1 cells. Every stage-1 cell \(C'\) has a unique ceiling (“top” surface) and a unique floor (“bottom” surface). The ceiling and/or floor may be undefined if \(C'\) is unbounded. All other facets of \(C'\) lie on the vertical walls. The complexity of \(C'\) may however still be arbitrarily large. Thus, in the second stage, we take each stage-1 cell \(C'\), project it onto the xy-plane, and apply a two-dimensional vertical decomposition to the projection \((C')^*\). That is, as in Sect. 5, we erect a y-vertical segment through each vertex of \((C')^*\) and through each locally x-extremal point on its boundary. This partitions \((C')^*\) into y-vertical pseudo-trapezoids, and we lift each such pseudo-trapezoid \(\tau \) back into \({{\mathbb {R}}}^3\) by forming the intersection \((\tau \times {{\mathbb {R}}})\cap C'\). This yields a decomposition of \(C'\) into prism-like stage-2 cells of constant description complexity, referred to, for simplicity, just as prisms (Footnote 12). More details can be found in [19, 49]. Collectively, all the stage-2 cells, over all cells C and all subcells \(C'\), constitute the vertical decomposition \({{\,\mathrm{VD}\,}}_{\le t}(F_i)\).

6.1.1 Complexity of the Vertical Decomposition

As is well known, the complexity of \({{\,\mathrm{VD}\,}}_{\le t}(F_i)\) is proportional to the number of triples \((q, e, e')\), for e, \(e'\) edges of \(L_{\le t}(F_i)\) and for \(q\in {{\mathbb {R}}}^2\), so that q belongs to the xy-projections of e and \(e'\), and the z-vertical line \(\ell _q\) through q meets no other surface of \(F_i\) between e and \(e'\). We call \((e,e')\) a vertically visible pair, and refer to \((q,e,e')\) as a triple of vertical visibility. We assume that the pair \((e,e')\) is ordered so that e lies above \(e'\), i.e., we encounter e before \(e'\) as we travel along \(\ell _q\) from \(z = \infty \) to \(z = -\infty \).

The following crucial lemma, which we regard as one of the main contributions of the paper, bounds the complexity of \({{\,\mathrm{VD}\,}}_{\le t}(F_i)\). It improves an earlier bound of \(O(nt^{2+{\varepsilon }})\) by Agarwal et al. [3]. We define a parameter s as follows: For any \(f_1, f_2, f_3, f_4 \in F\), we let \(s(f_1, f_2, f_3, f_4)\) denote the number of co-vertical pairs of points \(q \in f_1\cap f_2\), \(q'\in f_3\cap f_4\). Then \(s=s_0+2\), where \(s_0\) is the maximum of \(s(f_1,f_2,f_3,f_4)\), over all quadruples \(f_1,f_2,f_3,f_4 \in F\). By our assumptions on \({{\mathcal {F}}}\) (including general position), we have \(s=O(1)\), where the constant depends (Footnote 13) on the complexity of the family \({{\mathcal {F}}}\). We use \(\lambda _s(t)\) to denote the maximum length of a Davenport–Schinzel sequence of order s on t symbols [49].

Lemma 6.1

Let F be a set of n functions of \({{\mathcal {F}}}\), and let \(1\le t \le n-1\). The complexity of \({{\,\mathrm{VD}\,}}_{\le t}(F)\) is \(O(nt\lambda _s(t))\).

Proof

Let e be an edge of \(L_{\le t}(F)\), and let \(F_e \subseteq F\) be the functions in F that pass vertically below some point on e. Since e is not crossed by any function of \(F_e\), each \(f \in F_e\) appears below every point of e, implying that \(|F_e| \le t\). Let \(V_e\) be the vertical wall erected downward from e, all the way to \(z=-\infty \). Then, the complexity of the upper envelope of \(F_e\), clipped to \(V_e\), is at most \(\lambda _{s_0}(t)\). Indeed, using a suitable parametrization of e, the cross-sections of the functions in \(F_e\) with \(V_e\) are totally defined univariate continuous functions, each pair of which intersect at most \(s_0\) times. This follows from the definition of \(s_0\), since the vertices of the arrangement of these functions are exactly the intersection points of \(V_e\) with edges \(e'\) of \(L_{\le t}(F)\) that form co-vertical pairs \((e,e')\) with e. Since the vertices of the upper envelope of these functions stand in a 1-1 correspondence with the triples of vertical visibility pairs with e as the top edge, the number of these pairs is at most \(\lambda _{s_0}(t)\), as claimed.

A standard application of the Clarkson–Shor technique implies that \(L_{\le t}(F)\) has \(O(t^3\cdot n/t)=O(nt^2)\) edges. This follows by charging the edges to their endpoints and by using the fact that there are O(m) vertices on the lower envelope of any m functions of F. This already gives a (weak) bound of \(O(nt^2\lambda _{s_0}(t)) \approx nt^3\) on the complexity of \({{\,\mathrm{VD}\,}}_{\le t}(F)\).

The arguments so far follow the initial part of the analysis of Agarwal et al. [3], but the next part is new and gives a sharper bound. Fix two functions \(f, f'\in F\), and let \(\gamma = f \cap f'\) be their intersection curve. We cut \(\gamma \) at each singular and locally x-extremal point. This decomposes \(\gamma \) into O(1) connected x-monotone Jordan subarcs. Recall that, in addition to general position of F, we also assume a generic coordinate frame, so that no resulting piece lies within some yz-parallel plane.

We cut these arcs further at their intersections with the level \(L_{t}(F)\), and we keep those portions that lie in \(L_{\le t}(F)\). To control the number of such portions, we relax the problem a bit, replacing the level t by a larger level \(t'\) with \(t\le t'\le 2t\), for which the complexity of \(L_{t'}(F)\) is O(nt). Since the overall complexity of \(L_{\le 2t}(F)\) is \(O(nt^2)\) (as just noted), the average complexity of a level between t and 2t is indeed O(nt). Thus, there is a level \(t'\) with the above properties. We will establish the asserted upper bound for \({{\,\mathrm{VD}\,}}_{\le t'}(F)\), which then also applies to \({{\,\mathrm{VD}\,}}_{\le t}(F)\). To keep the notation simple, we continue to denote the top level \(t'\) as t.
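The averaging argument for the choice of \(t'\) can be sketched as follows, assuming that the complexities of the individual levels were available as a list (which is a hypothetical input used only for illustration; the proof needs only the existence of such a level). The function name is likewise an assumption of this sketch.

```python
def pick_light_level(level_complexity, t):
    """Return an index t' with t <= t' <= 2t whose level has minimum complexity.
    The minimum is at most the average over the levels t, ..., 2t."""
    last = min(2 * t, len(level_complexity) - 1)
    return min(range(t, last + 1), key=lambda j: level_complexity[j])

# Hypothetical per-level complexities of an arrangement with 7 levels.
complexities = [5, 9, 30, 12, 40, 8, 50]
t_prime = pick_light_level(complexities, t=2)
average = sum(complexities[2:5]) / 3
```

Since the levels between t and 2t have total complexity \(O(nt^2)\) and there are \(t+1\) of them, the selected level has complexity O(nt).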

Let \(\Gamma \) be the set of all Jordan subarcs of some intersection curve that lies in \(L_{\le t}(F)\) (now with the new, potentially larger, index t). If \(\gamma \in \Gamma \) does not fully lie below \(L_{t}(F)\), it ends in at least one vertex of \(L_{t}(F)\), so the number of these \(\gamma \in \Gamma \) is O(nt). Any other \(\gamma \in \Gamma \) is charged either to one of its endpoints, or, if it is unbounded (and x-monotone), to its intersection with a plane at infinity, say \(V_\infty \): \(x = +\infty \). If \(\gamma \) reaches \(V_\infty \), it appears there as a vertex of the first t levels of the cross-sections of the functions in F with \(V_\infty \). An application of the Clarkson–Shor technique to this planar arrangement shows that there are O(nt) such vertices, so this also bounds the number of these arcs in \(\Gamma \). Finally, we bound the number of \(\gamma \in \Gamma \) with a singular or locally x-extremal endpoint by charging \(\gamma \) to this endpoint. The number of these points lying in \(L_{\le t}(F)\) is bounded by yet another application of the Clarkson–Shor technique. Noting that each such point is now defined by only two functions of F, this leads to the upper bound O(nt). Thus, \(|\Gamma |=O(nt)\).

Fix an arc \(\gamma \in \Gamma \), and let \(\mu (\gamma )\) be the number of edges in \(L_{\le t}(F)\) on \(\gamma \). In general, \(\mu (\gamma ) \ge 1\). We decompose \(\gamma \) into \(\xi (\gamma ) := \lceil \mu (\gamma )/t\rceil \) pieces, each consisting of at most t consecutive edges. By general position, if \(e_1\) and \(e_2\) are consecutive edges along \(\gamma \), the set of functions of F that appear below \(e_1\) and the set of functions that appear below \(e_2\) differ exactly by the third function incident to the common endpoint of \(e_1\) and \(e_2\). This implies that, for a piece \(\delta \) of \(\gamma \), the overall number of functions that appear below \(\delta \) is at most 2t. Some of these functions are now only partially defined. Arguing as above, the number of vertically visible pairs whose top edge lies on \(\delta \) is at most \(\lambda _{s_0 + 2}(2t) = O(\lambda _s(t))\). Hence, the overall number of triples of vertical visibility in \(L_{\le t}(F)\) is

\[O\Bigl (\sum _{\gamma \in \Gamma }\xi (\gamma )\,\lambda _s(t)\Bigr ) = O\Bigl (\sum _{\gamma \in \Gamma }\Bigl (1+\frac{\mu (\gamma )}{t}\Bigr )\lambda _s(t)\Bigr ) = O\Bigl (\Bigl (|\Gamma | + \frac{1}{t}\sum _{\gamma \in \Gamma }\mu (\gamma )\Bigr )\lambda _s(t)\Bigr ).\]

We have already seen that \(|\Gamma |=O(nt)\). Furthermore, \(\sum _{\gamma \in \Gamma }\mu (\gamma )\) is simply the number of edges in \(L_{\le t}(F)\), which, as already argued, is \(O(nt^2)\). It follows that the number of vertically visible pairs in \(L_{\le t}(F)\) is \(O( nt\lambda _s(t))\). \(\square \)
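The counting step behind this bound is elementary and can be checked numerically. The sketch below takes a hypothetical list of edge counts \(\mu (\gamma )\), one per arc, computes the number of pieces \(\lceil \mu (\gamma )/t\rceil \) for each arc, and compares the total with \(|\Gamma | + \frac{1}{t}\sum _{\gamma }\mu (\gamma )\); the function name and the sample values are illustrative only.

```python
import math

def piece_counts(mu, t):
    """Number of pieces per arc when an arc with m edges is cut into
    pieces of at most t consecutive edges each."""
    return [math.ceil(m / t) for m in mu]

mu = [1, 5, 12]   # hypothetical edge counts of three arcs
t = 5
pieces = piece_counts(mu, t)
total_pieces = sum(pieces)
upper_bound = len(mu) + sum(mu) / t
```

Each piece contributes \(O(\lambda _s(t))\) vertically visible pairs, so with \(|\Gamma | = O(nt)\) and \(O(nt^2)\) edges in total, the number of pieces is O(nt) and the overall count is \(O(nt\lambda _s(t))\).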

Remark

Our analysis also works if the lower envelope complexity is not necessarily linear. Let \(\psi :\mathbb {N} \rightarrow \mathbb {N}\) be such that any m functions in \({{\mathcal {F}}}\) have a lower envelope of complexity at most \(\psi (m)\). Then we obtain the following bound.

Lemma 6.2

Let \({{\mathcal {F}}}\) be a family of functions with lower envelope complexity bounded by \(\psi (m)\), let F be a set of n functions of \({{\mathcal {F}}}\), and let \(1\le t \le n-1\). The complexity of \({{\,\mathrm{VD}\,}}_{\le t}(F)\) is \(O(t^2\psi (n/t)\,\lambda _s(t))\).

Proof

The proof proceeds exactly as the proof of Lemma 6.1, but with more general bounds for the various structures associated with \({{\mathcal {A}}}(F)\). In particular, by the Clarkson–Shor technique, the overall complexity of \(L_{\le 2t }(F)\) now is \(O(t^3\psi (n/t))\), and hence we can find a level \(t\le t'\le 2t\) such that \(L_{t'}\) has complexity \(O(t^2\psi (n/t))\). The arguments for bounding \(|\Gamma |\) remain valid, but now the complexity of the first t levels of the planar arrangement on \(V_\infty \), and the number of singular or locally x-extremal points in \(L_{\le t}\), are both \(O(t^2\psi (n/t))\) (see Footnote 14). Proceeding with these bounds as before, we obtain the claimed result. \(\square \)

The randomized incremental construction. Although the high-level description of the randomized incremental construction is fairly routine, the finer details are somewhat intricate, and their description is rather lengthy. We present the construction, with full details, in Appendix A. As we will show, the expected running time of the procedure is proportional to the expectation of

where \(\Pi ^*\) is the set of all prisms that are generated during the incremental process, and where \({{\,\mathrm{CL}\,}}(\tau )\) is the conflict list of prism \(\tau \).

6.2 Analysis

We now bound the expected value of (4). Let \(\Pi \) be the set of all possible pseudo-prisms. That is, we consider all possible sets \(F_0\) of at most six surfaces in F, and for each such \(F_0\), we construct the entire vertical decomposition \({{\,\mathrm{VD}\,}}(F_0)\) and add the resulting prisms to \(\Pi \).

We associate two weights with each prism \(\tau \in \Pi \). The first weight \(w_0(\tau )\) is the size of its conflict list, that is \(w_0(\tau )=|{{{\,\mathrm{CL}\,}}(\tau )}|\). The second weight \(w^-(\tau )\) equals the number of surfaces that pass fully below \(\tau \). For simplicity, we focus on prisms that are defined by exactly six functions; the treatment of prisms defined by fewer functions is fully analogous. Let \(\Xi (\tau )\) denote the set of defining functions of \(\tau \). As just said, we only consider the case \(|\Xi (\tau )| = 6\).

Following a standard approach to the analysis of RICs, we proceed in two steps. First, we estimate the probability that a prism with given weights ever appears in \(L_{\le t}(F_i)\). Then, we estimate the number of prisms \(\tau \) with weights \(w^-(\tau ) \le a\) and \(w_0(\tau ) \le b\), using the Clarkson–Shor technique and several other considerations. Finally, we combine the bounds to get the desired estimate on the expected running time and storage of the algorithm.

Estimating the probability of a prism to appear. For the first step, let \(\tau \in \Pi \) be a prism with six defining functions and with \(w^-(\tau )=a\), \(w_0(\tau )=b\). That is, \(\tau \) has \(w^-(\tau ) = a\) lower surfaces and \(w_0(\tau ) = b\) crossing surfaces. Neither of \(w^-(\tau )\), \(w_0(\tau )\) counts any of the defining functions of \(\tau \), although some of these functions might pass below \(\tau \). The number of such ‘lower’ defining functions is always between 1 and 5, because the floor is always such a lower function, and the ceiling is always excluded; see Fig. 2.

The prism \(\tau \) appears in some \({{\,\mathrm{VD}\,}}_{\le t}(F_i)\) if and only if (i) the last function in \(\Xi (\tau )\), denoted as \(f_6\), is inserted before any of the b crossing surfaces; and (ii) at most \(t'=t-\xi \) of the a lower non-defining surfaces are inserted before \(f_6\), where \(\xi \ge 1\) is the number of defining functions of \(\tau \) that pass below \(\tau \), one of which is the floor of \(\tau \).

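The probability \(p_\tau \) of this event can be calculated as follows. Restrict the random insertion permutation to the \(a+b+6\) relevant surfaces (the a surfaces below \(\tau \), the b surfaces crossing \(\tau \), and the six surfaces in \(\Xi (\tau )\)). To get a restricted permutation that satisfies (i) and (ii), we first choose which function in \(\Xi (\tau )\) is \(f_6\), then we choose some \(j\le \min {\{a,t'\}}\) of the a lower surfaces to precede \(f_6\), then we mix these j surfaces with the five in \(\Xi (\tau ) {\setminus } \{f_6\}\), and finally we place the remaining \(a-j\) lower surfaces and all b crossing surfaces after \(f_6\). We thus get

\[ p_\tau = \sum _{j=0}^{\min \{a,t'\}} \frac{6\left( {\begin{array}{c}a\\ j\end{array}}\right) (j+5)!\,(a+b-j)!}{(a+b+6)!}. \tag{5} \]

We rewrite and bound each summand in (5) as follows.

\[ \frac{6\left( {\begin{array}{c}a\\ j\end{array}}\right) (j+5)!\,(a+b-j)!}{(a+b+6)!} = 6\cdot \frac{(j+5)!}{j!}\cdot \frac{a!\,(a+b-j)!}{(a-j)!\,(a+b)!}\cdot \frac{(a+b)!}{(a+b+6)!} \le \frac{6\,(j+5)^5}{(a+b)^6} \Bigl (\frac{a}{a+b}\Bigr )^{j} \le \frac{6\,(j+5)^5}{(a+b)^6}\, e^{-bj/(a+b)}. \]

Let

\[ \varphi _{a,b}(x) = \frac{6\,(x+5)^5}{(a+b)^6}\, e^{-bx/(a+b)} \]

be our bound on the jth summand of (5). Note that with a, b fixed, \(\varphi _{a,b}(x)\) peaks at \(x = 5\,({a + b})/{b}-5 = {5a}/{b}\) (the positive root of the derivative of \(\varphi _{a,b}(x)\) satisfies \(5\,(x+5)^4 - {b\,(x+5)^5 }/{(a + b)} = 0\)). We estimate \(p_\tau \) by replacing the sum by an integral. That is,

\[ p_\tau \le \sum _{j=0}^{\min \{a,t'\}} \varphi _{a,b}(j) \le e \int _0^{\min \{a,t'\}+1} \varphi _{a,b}(x)\,dx; \tag{6} \]

to justify the inequality between the sum and the integral in (6), it suffices to note that, for \(x\in [j,j+1]\),

\[ \varphi _{a,b}(j) \le e^{b/(a+b)}\,\varphi _{a,b}(x) \le e\,\varphi _{a,b}(x), \]

for every \(j\ge 0\). To estimate the integral, we substitute \(y=xb/(a+b)\). The upper limit in the integral becomes

\[ c := (\min \{a,t'\}+1)\,\frac{b}{a+b}, \]

and we get

\[ p_\tau \le \frac{6e}{b^6} \int _0^{c} \Bigl (y+\frac{5b}{a+b}\Bigr )^5 e^{-y}\,dy. \tag{7} \]

The integral in (7) is at most

\[ \int _0^{\infty } (y+5)^5 e^{-y}\,dy = O(1). \]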

Thus, \(p_\tau = O(1/b^6)\) (see Footnote 15). For large c, we cannot improve this bound. However, if c is sufficiently small, bounding the integral in (7) by an absolute constant may be wasteful. For \(a\le t\) we will not refine the bound and use \(p_\tau = O(1/b^6)\). Consider now the case \(a> t > t'\), so \(c=(\min {\{a,t'\}} + 1)\,b/(a+b)={b\,(t' + 1)}/({a+b})\). As is easily checked, the function \(\varphi (y) = (y+5b/(a+b))^5 e^{-y}\) is increasing on \([0, 5a/(a+b)]\), so when

\[ c \le \frac{5a}{a+b}, \quad \text{that is,} \quad b\,(t'+1) \le 5a, \]

we bound the integral in (7) by \(c\varphi (c)\), and get (see Footnote 16)

\[ p_\tau = O\Bigl (\frac{c\,\varphi (c)}{b^6}\Bigr ) = O\Bigl (\frac{(t'+1)(t'+6)^5}{(a+b)^6}\Bigr ) = O\Bigl (\frac{t^6}{(a+b)^6}\Bigr ). \]

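Thus, we can bound \(p_\tau \) in terms of a and b. Denoting this bound by p(a, b), we have

\[ p(a,b) = {\left\{ \begin{array}{ll} O(1/b^6) &{} \text{for } a\le t \text{ or } a\le bt/5,\\ O\bigl (t^6/(a+b)^6\bigr ) &{} \text{for } a> t \text{ and } a> bt/5. \end{array}\right. } \]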

(Unless b is very small, the constraint \(a\le t\) or \(a>t\) is subsumed by the other respective constraint.)
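The estimate of \(p_\tau \) can be sanity-checked numerically. The sketch below counts the favourable restricted permutations exactly as described above (choice of \(f_6\), choice of the j preceding lower surfaces, and the two internal orders), compares the result with a brute-force enumeration for tiny instances, and confirms that \(b^6 p_\tau \) stays bounded. The function names are hypothetical, and the constant 720 is only an illustrative bound: \(p_\tau \) is at most the probability \(6!\,b!/(b+6)!\) that all b crossing surfaces follow the six defining ones.

```python
from fractions import Fraction
from itertools import permutations
from math import comb, factorial


def p_by_counting(a, b, t_prime):
    """Favourable restricted permutations divided by (a + b + 6)!."""
    good = sum(
        6 * comb(a, j) * factorial(j + 5) * factorial(a + b - j)
        for j in range(min(a, t_prime) + 1)
    )
    return Fraction(good, factorial(a + b + 6))


def p_by_enumeration(a, b, t_prime):
    """Brute force over all insertion orders; feasible only for a + b <= 2 or so."""
    labels = "D" * 6 + "L" * a + "C" * b  # defining, lower, crossing surfaces
    good = total = 0
    for perm in permutations(labels):
        total += 1
        last_def = max(i for i, lab in enumerate(perm) if lab == "D")
        prefix = perm[:last_def]  # everything inserted before f_6
        if "C" not in prefix and prefix.count("L") <= t_prime:
            good += 1
    return Fraction(good, total)


if __name__ == "__main__":
    for a, b, tp in [(1, 1, 0), (1, 1, 1), (2, 0, 1)]:
        assert p_by_counting(a, b, tp) == p_by_enumeration(a, b, tp)
    worst = max(
        b**6 * p_by_counting(a, b, tp)
        for a in range(40)
        for b in range(1, 40)
        for tp in (0, 3, 10)
    )
    assert worst <= 720
    print(float(worst))
```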

Bounding the number of prisms of small weights. We next estimate the number of prisms \(\tau \in \Pi \) with \(w^-(\tau )\le a\) and \(w_0(\tau )\le b\). We denote this quantity as \(N_{\le a,\le b}\). We also use the notation \(N_{a,b}\) for the number of prisms \(\tau \in \Pi \) with \(w^-(\tau ) = a\) and \(w_0(\tau ) = b\).

Lemma 6.3

The number of prisms \(\tau \) with \(w^-(\tau )\le a\) and \(w_0(\tau )\le b\) is \(O(nb^5)\), for \(a\le b\), and \(O(nab^4\lambda _s(a/b))\), for \(a>b\).

Proof

Set \(p = 1/b\), and let \(R \subseteq F\) be a random sample of size pn.

Case 1: \(a \le b\). We assume that \(b \le n/10\), so \(p = 1/b \ge 10/n\). Fix a prism \(\tau \in \Pi \), defined by six functions, with \(w^-(\tau )=i\) and \(w_0(\tau ) = j\), with \(i\le a\), \(j\le b\). Let \(q_\tau \) be the probability that R contains (i) the defining set \(\Xi (\tau )\); (ii) none of the j crossing functions; and (iii) none of the i lower functions. By elementary probability,

this follows since we set \(p = 1/b\) and assumed that \(a \le b\) and \(b \le n/10\), so that \(n - k \ge n - a - b \ge n - 2b \ge n/2\) and \(np - l \ge np - 5 \ge np/2\). If the event holds, \(\tau \) becomes a prism in \({{\,\mathrm{VD}\,}}_{\le 6}(R)\). By Lemma 6.1, the number of such prisms is \(O(|R|) = O(n/b)\). This yields, as a variant of the Clarkson–Shor theory, \(N_{\le a,\le b} = O(nb^5)\). This bound also holds trivially if \( b > n/10\), since there are at most \(O(n^6)\) prisms in total.

Case 2: \(a > b\). Again, we assume that \(b \le n/10\), so we have \(p = 1/b \ge 10/n\). Also, we require that n is more than a large enough constant. We put \(\xi _0 = 2a/b\) and \(\xi = \xi _0 + \nu \), where \(\nu \) is the number of defining functions of \(\tau \) that pass below \(\tau \). As before, fix a prism \(\tau \in \Pi \), defined by six functions, with \(w^-(\tau ) = i\) and \(w_0(\tau ) = j\), \(i\le a\), \(j\le b\). The probability \(q_\tau \) that \(\tau \) appears in \({{\,\mathrm{VD}\,}}_{\le \xi }(R)\) is the probability that R contains (i) the defining set \(\Xi (\tau )\); (ii) none of the j crossing functions; and (iii) at most \(\xi _0\) of the i lower functions. Similarly to Case 1, the probability that (i) and (ii) hold is at least \((p/2)^6(1-p/2)^b = \Omega (1/b^6)\). Conditioned on (i) and (ii) holding, (iii) is the event that when choosing \(np -6\) out of \(n - 6 - j\) functions, we obtain at most \(\xi _0\) of the i lower functions. The number of the lower functions in the sample follows a hypergeometric distribution, with expected value

using our assumption that \(b \le n/10\) and that n is large enough. Now, the Chernoff bound for the hypergeometric distribution (see [21] and [43, Thm. 5.2 and Cor. 4.4]) implies that the failure probability, of choosing more than \(\xi _0 = 2a/b\) lower functions, is at most \(e^{-a/(10b)} \le e^{-1/10}\). Hence, we have \(q_\tau = \Omega (1/b^6)\). To complete the Clarkson–Shor analysis, we need an upper bound on the number of prisms in \({{\,\mathrm{VD}\,}}_{\le \xi }(R)\). By Lemma 6.1, this is \(O(\xi \lambda _s(\xi )|R|)\). The analysis thus yields

\[ N_{\le a,\le b} = O\bigl (b^6\cdot \xi \,\lambda _s(\xi )\cdot |R|\bigr ) = O\Bigl (b^6\cdot \frac{a}{b}\,\lambda _s\Bigl (\frac{a}{b}\Bigr )\cdot \frac{n}{b}\Bigr ) = O\bigl (nab^4\lambda _s(a/b)\bigr ), \]

as asserted. Again, the bound holds trivially if \(b > n/10\) or if n is constant. \(\square \)
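The two probabilistic ingredients of Case 2 can be evaluated exactly for concrete parameters. The sketch below computes the probability of conditions (i) and (ii) for a uniform sample, and the hypergeometric tail corresponding to the failure of (iii), and compares them with the bounds quoted in the proof. The parameter values and function names are arbitrary illustrative choices; the check shows one instance and proves nothing in general.

```python
from math import comb, exp


def prob_conditions_i_ii(n, r, j):
    """P[a uniform r-subset of n items contains 6 fixed items and avoids j others]."""
    return comb(n - 6 - j, r - 6) / comb(n, r)


def hypergeom_upper_tail(pop, succ, draws, k):
    """P[X > k], where X counts successes among `draws` items drawn without replacement."""
    head = sum(
        comb(succ, x) * comb(pop - succ, draws - x)
        for x in range(min(k, succ, draws) + 1)
    )
    return 1.0 - head / comb(pop, draws)


if __name__ == "__main__":
    n, b, a = 2000, 20, 40  # hypothetical values with b <= n/10 and a > b
    p = 1 / b
    r = n // b  # sample size pn
    q12 = prob_conditions_i_ii(n, r, b)
    assert q12 >= (p / 2) ** 6 * (1 - p / 2) ** b
    # Failure of (iii): more than xi_0 = 2a/b of the a lower functions are sampled.
    tail = hypergeom_upper_tail(n - 6 - b, a, r - 6, 2 * a // b)
    assert tail <= exp(-a / (10 * b))
    print(q12 * b**6, tail)
```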

Remark

As usual, the bounds generalize to superlinear lower envelope complexity. Let \(\psi :\mathbb {N}\rightarrow \mathbb {N}\) be such that any m functions in \({{\mathcal {F}}}\) have a lower envelope of complexity at most \(\psi (m)\), and suppose that \(m \mapsto \psi (m)/m\) is monotonically increasing. Then we obtain the following bound.

Lemma 6.4

The number of prisms \(\tau \) with \(w^-(\tau )\le a\) and \(w_0(\tau )\le b\) is \(O(b^6\psi (n/b))\) for \(a\le b\), and \(O(a^2b^4\psi (n/a)\,\lambda _s(a/b))\) for \(a>b\).

Proof

The reasoning is analogous to that in the proof of Lemma 6.3, but we replace the bounds from Lemma 6.1 with the bounds from Lemma 6.2. In particular, in Case 1, the vertical decomposition \({{\,\mathrm{VD}\,}}_{\le 6}(R)\) has \(O(\psi (n/b))\) prisms, and in Case 2, the vertical decomposition \({{\,\mathrm{VD}\,}}_{\le \xi }(R)\) has

\[ O\bigl (\xi ^2\,\psi (|R|/\xi )\,\lambda _s(\xi )\bigr ) = O\Bigl (\frac{a^2}{b^2}\,\psi \Bigl (\frac{n}{2a}\Bigr )\,\lambda _s\Bigl (\frac{a}{b}\Bigr )\Bigr ) \]

prisms. (Since we assumed that \(\psi (m)/m\) is increasing, we have \(\psi (n/2a) \le \psi (n/a)\).) \(\square \)
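As a quick consistency check, the bounds of Lemma 6.4 should reduce to those of Lemma 6.3 when the lower envelope complexity is linear, that is, \(\psi (m) = m\). The sketch below evaluates both expressions with constants dropped; `lam` is a hypothetical stand-in for \(\lambda _s\), chosen only so that the formulas can be evaluated.

```python
import math


def lam(m):
    """Hypothetical stand-in for lambda_s(m); any near-linear function would do."""
    return m * (1.0 + math.log2(max(m, 1.0)))


def bound_linear(n, a, b):
    """Lemma 6.3 with constants dropped."""
    return n * b**5 if a <= b else n * a * b**4 * lam(a / b)


def bound_general(n, a, b, psi):
    """Lemma 6.4 with constants dropped."""
    if a <= b:
        return b**6 * psi(n / b)
    return a**2 * b**4 * psi(n / a) * lam(a / b)


if __name__ == "__main__":
    for n, a, b in [(1000, 3, 7), (1000, 50, 7), (10**6, 400, 20)]:
        general = bound_general(n, a, b, psi=lambda m: m)
        assert math.isclose(general, bound_linear(n, a, b))
    print("psi(m) = m recovers the bounds of Lemma 6.3")
```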

We can now combine all the bounds derived so far, and bound (i) the expected number of prisms that are ever generated in the RIC, and (ii) the expected overall size of their conflict lists, which, as explained above, dominates the running time of the algorithm (with an additional logarithmic factor). The expected number of prisms is simply

\[ \sum _{\tau \in \Pi } p_\tau \le \sum _{a,b\ge 0} N_{a,b}\; p(a,b). \tag{9} \]

Similarly, the expected overall size of the conflict lists is

\[ \sum _{\tau \in \Pi } w_0(\tau )\, p_\tau \le \sum _{a,b\ge 0} b\, N_{a,b}\; p(a,b). \tag{10} \]

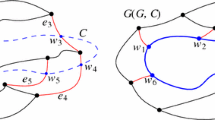

We bound these sums separately for pairs (a, b) within each of the six regions depicted in Fig. 3. Together, these regions cover the entire range \(a,b\ge 0\), \(a+b\le n-6\). As will follow from the forthcoming analysis, the most expensive prisms are those for which (a, b) lies in region (I) or region (IV).

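Region (I) In this region, \(5\le b\le 5n/t\) and \(0\le a\le bt/5\). We cover the region by vertical slabs of the form \(S_j := \{(a,b) \mid b_{j-1} \le b\le b_j\}\), for \(j\ge 1\), where \(b_j=5\cdot 2^j\), for \(j=0,\dots ,\lceil \log (n/t) \rceil \). Within each slab \(S_j\), the maximum value of \(p_\tau \) is \(O(1/b_{j-1}^6)= O(1/2^{6j})\), and we bound \(\sum _{(a,b)\in S_j} N_{a,b}\) by \(N_{\le b_jt/5,\le b_j}\) which, by Lemma 6.3, is

\[ O\bigl (n\cdot (b_jt/5)\cdot b_j^4\cdot \lambda _s(t/5)\bigr ) = O\bigl (nt\,2^{5j}\lambda _s(t)\bigr ). \]

Hence, the contribution of \(S_j\) to (9) is at most

\[ O\Bigl (\frac{1}{2^{6j}}\Bigr )\cdot O\bigl (nt\,2^{5j}\lambda _s(t)\bigr ) = O\Bigl (\frac{nt\,\lambda _s(t)}{2^{j}}\Bigr ), \]

and, summing this over j, we get that the contribution of region (I) to (9) is \(O(nt\lambda _s(t))\). Similarly, the contribution of \(S_j\) to (10) is at most

\[ O\Bigl (\frac{b_j}{2^{6j}}\Bigr )\cdot O\bigl (nt\,2^{5j}\lambda _s(t)\bigr ) = O\bigl (nt\,\lambda _s(t)\bigr ). \]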

We need to multiply this bound by the number of slabs, which, as is easily checked, is \(O(\log (n/t))\). Hence, the contribution of region (I) to (10) is \(O(nt\,\lambda _s(t)\log (n/t))\).
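The slab argument for region (I) can be illustrated numerically. The sketch below builds the boundaries \(b_j = 5\cdot 2^j\) and evaluates the per-slab contributions to (9) and (10) after dividing out the common factor \(nt\lambda _s(t)\). It assumes, as in the text, the probability bound \(1/b_{j-1}^6\) and a prism count proportional to \(b_j^5\); all constants are dropped and the function names are hypothetical.

```python
import math


def region_one_boundaries(n, t):
    """Slab boundaries b_j = 5 * 2**j for j = 0, ..., ceil(log2(n / t)); assumes n > t."""
    j_max = math.ceil(math.log2(n / t))
    return [5 * 2**j for j in range(j_max + 1)]


def normalized_contributions(boundaries):
    """Per-slab contributions to (9) and (10), divided by n * t * lambda_s(t)."""
    pairs = list(zip(boundaries, boundaries[1:]))
    to_9 = [b_j**5 / b_prev**6 for b_prev, b_j in pairs]
    to_10 = [b_j * c for (_, b_j), c in zip(pairs, to_9)]
    return to_9, to_10


if __name__ == "__main__":
    n, t = 1024, 4  # hypothetical sizes
    bs = region_one_boundaries(n, t)
    to_9, to_10 = normalized_contributions(bs)
    # The contributions to (9) decay geometrically, so their sum is O(1) ...
    print(len(bs) - 1, "slabs; sum for (9):", sum(to_9))
    # ... while each slab contributes a constant to (10), giving the log(n/t) factor.
    print("sum for (10):", sum(to_10))
```

The geometric decay in the first list is what removes the logarithmic factor from the prism count, whereas the constant per-slab terms in the second list are what produce the \(\log (n/t)\) factor in the conflict-list bound.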

Region (II) In this region, \(5n/t \le b \le n/2\) and \(0\le a\le n-6-b\). Here too we cover the region by vertical slabs of the form \(S'_j := \{(a,b) \mid b'_{j-1} \le b\le b'_j\}\), for \(j\ge 1\), where \(b'_j=(5n/t)\cdot 2^j\), for \(j=0,\dots ,\lceil \log (t/10)\rceil \). Within each \(S'_j\), the maximum value of \(p_\tau \) is \(O(1/(b'_{j-1})^6) = O(t^6/(n^62^{6j}))\), and we bound \(\sum _{(a,b)\in S'_j} N_{a,b}\) by \(N_{\le n-b'_{j-1}-6,\le b'_j}\) which, by Lemma 6.3, is (upper-bounding \(n-b'_{j-1}-6\) by n)

\[ O\bigl (n^2\,(b'_j)^4\,\lambda _s(n/b'_j)\bigr ) = O\Bigl (\frac{n^6\,2^{4j}}{t^4}\,\lambda _s\Bigl (\frac{t}{2^j}\Bigr )\Bigr ) = O\Bigl (\frac{n^6\,2^{3j}}{t^4}\,\lambda _s(t)\Bigr ). \]

Hence, the contribution of \(S'_j\) to (9) is at most \(O( t^2\lambda _s(t)/2^{3j})\), and, summing this over j, we get that the contribution of region (II) to (9) is \(O(t^2\lambda _s(t)) = O(nt\lambda _s(t))\). Similarly, the contribution of \(S'_j\) to (10) is at most

\[ O\Bigl (b'_j\cdot \frac{t^2\lambda _s(t)}{2^{3j}}\Bigr ) = O\Bigl (\frac{nt\,\lambda _s(t)}{2^{2j}}\Bigr ), \]

and, summing this over j, we get that the contribution of region (II) to (10) is \(O(nt\,\lambda _s(t))\).

Region (III) In this region, \(n/2 \le b \le n\) and \(0\le a\le n-6-b\). We treat this region as a single entity. The maximum value of \(p_\tau \) here is \(O(1/n^6)\), and we bound \(\sum _{(a,b)\in \mathrm{(III)}} N_{a,b}\) by the overall number of prisms, which is \(O(n^6)\), getting a negligible contribution to (9) of only O(1). A similar argument shows that the contribution of this region to (10) is O(n), again negligible compared with the other regions.

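Region (IV) In this region, \(t\le a\le a_0\approx n\) and \(0\le b\le 5a/t\). We cover the region by horizontal slabs of the form \(S''_j := \{(a,b) \mid a_{j-1} \le a\le a_j\}\), for \(j\ge 1\), where \(a_j=t\cdot 2^j\), for \(j=0,\dots ,\lceil \log (a_0/t)\rceil = O(\log (n/t))\). Within each slab \(S''_j\), the maximum value of \(p_\tau \) is \(O(t^6/a_{j-1}^6) = O(1/2^{6j})\), and we bound \(\sum _{(a,b)\in S''_j} N_{a,b}\) by \(N_{\le a_j,\le 5a_j/t}\) which, by Lemma 6.3, is

\[ O\bigl (n\,a_j\,(5a_j/t)^4\,\lambda _s(t/5)\bigr ) = O\bigl (nt\,2^{5j}\lambda _s(t)\bigr ). \]

Hence, the contribution of \(S''_j\) to (9) is at most

\[ O\Bigl (\frac{1}{2^{6j}}\Bigr )\cdot O\bigl (nt\,2^{5j}\lambda _s(t)\bigr ) = O\Bigl (\frac{nt\,\lambda _s(t)}{2^{j}}\Bigr ), \]

and, summing this over j, we get that the contribution of region (IV) to (9) is \(O(nt\lambda _s(t))\). Similarly, the contribution of \(S''_j\) to (10) is at most

\[ O\Bigl (\frac{5a_j/t}{2^{6j}}\Bigr )\cdot O\bigl (nt\,2^{5j}\lambda _s(t)\bigr ) = O\bigl (nt\,\lambda _s(t)\bigr ). \]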

Since the number of slabs is \(O(\log (n/t))\), region (IV) contributes \(O(nt\,\lambda _s(t)\log (n/t))\) to (10).

Region (V) In this region, \(a_0\le a\le n\) and \(0\le b\le n-a-6\). We treat this region as a single entity. The maximum value of \(p_\tau \) in this region is \(O(t^6/n^6)\), and we bound \(\sum _{(a,b)\in \mathrm{(V)}}N_{a,b}\) by