Abstract

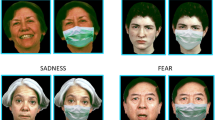

The use of signs as a major means of communication affects other functions such as spatial processing. Intriguingly, this is true even for functions that are less obviously linked to language processing. Signers outperform non-signers in face recognition tasks, potentially as a result of a lifelong focus on the mouth region for speechreading. Against this background, we hypothesized that the processing of emotional faces is altered in persons who mostly use signs to communicate (henceforth referred to as deaf signers). While the mouth region is more crucial for recognizing happiness, the eye region matters more for recognizing anger. Using morphed faces, we created facial composites in which either the upper or the lower half of an emotional face was kept neutral while the other half varied in the intensity of the expressed emotion, which was either happiness or anger. As expected, deaf signers were more accurate than non-signers at recognizing happy faces. The reverse effect was found for angry faces. These group differences were most pronounced for facial expressions of low intensity. We conclude that the lifelong focus on the mouth region in deaf signers leads to more sensitive processing of happy faces, especially when expressions are relatively subtle.
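
The half-face composite design described above can be illustrated with a deliberately simplified Python sketch. It assumes grayscale images stored as equally sized nested lists of pixel values, approximates morphing by a plain pixel-wise cross-fade between a neutral and an emotional photograph of the same person (real morphing usually also warps facial landmarks, and the software used in the study is not described here), and splits the face into upper and lower halves at the middle row. All function names are hypothetical.

```python
def cross_fade(neutral, emotional, intensity):
    """Pixel-wise linear blend (a simplification of morphing).

    intensity 0.0 returns the neutral face, 1.0 the full expression.
    """
    return [
        [(1 - intensity) * n + intensity * e for n, e in zip(row_n, row_e)]
        for row_n, row_e in zip(neutral, emotional)
    ]


def half_face_composite(neutral, emotional, intensity, emotional_half):
    """Keep one half of the face neutral and vary the other half in intensity.

    emotional_half is "upper" (eye region varies) or "lower" (mouth region varies).
    The split at the middle row is an assumption made for illustration.
    """
    blended = cross_fade(neutral, emotional, intensity)
    split = len(neutral) // 2
    if emotional_half == "upper":
        return blended[:split] + [row[:] for row in neutral[split:]]
    if emotional_half == "lower":
        return [row[:] for row in neutral[:split]] + blended[split:]
    raise ValueError("emotional_half must be 'upper' or 'lower'")


# Toy 4 x 2 "images": a uniformly neutral face and a uniformly emotional face.
neutral = [[0.0] * 2 for _ in range(4)]
emotional = [[1.0] * 2 for _ in range(4)]
mouth_varies = half_face_composite(neutral, emotional, 0.25, "lower")
eyes_varies = half_face_composite(neutral, emotional, 0.25, "upper")
print(mouth_varies)
print(eyes_varies)
```

Stepping `intensity` through a series of values yields the graded low-to-high intensity conditions, with the neutral half left untouched in every step.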

Notes

There was also a smaller but significant quadratic trend (F = 115.144; p < 0.001) and cubic trend (F = 4.398; p = 0.043).

There was also a smaller but significant quadratic trend (F = 13.506; p = 0.001).

We performed two additional ANOVAs on accuracies and latencies, comparing two sub-groups of deaf signers, i.e. those using DGS and SSS (N = 14) with those using only SSS (N = 6). For accuracies, the only significant effect involving the factor GROUP was an interaction of STIMULUS TYPE × INTENSITY × GROUP (F(6, 108) = 2.579, p = 0.026, ηp² = 0.125). Running the ANOVA for each group separately revealed a significant interaction of STIMULUS TYPE × INTENSITY in both groups (F(6, 78) = 3.617, p = 0.007, ηp² = 0.218 for the signers using DGS and SSS; F(6, 30) = 10.154, p < 0.001, ηp² = 0.670 for the signers using SSS only). With increasing intensity, both groups displayed the effect of STIMULUS TYPE, with the highest accuracy for O stimuli, followed by MEmo and Eemo. However, in the group using DGS and SSS, the effect of STIMULUS TYPE already appeared at the lowest morphing intensity (F(2, 26) = 9.560, p = 0.001, ηp² = 0.424), whereas this was not the case in participants using SSS only (F(2, 10) = 1.258, p = 0.326, ηp² = 0.201). For latencies, there were no effects involving GROUP.
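
The partial eta squared values reported in this note can be cross-checked against the F statistics, because for an effect tested against its own error term ηp² = F·df_effect / (F·df_effect + df_error). The Python sketch below applies that identity to the values copied from the note; the function name `partial_eta_squared` is our own illustrative choice and not part of any statistics package.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Recover partial eta squared from an F statistic and its degrees of freedom.

    Uses eta_p^2 = (F * df_effect) / (F * df_effect + df_error), which holds
    when the effect is tested against its own error term.
    """
    numerator = f_value * df_effect
    return numerator / (numerator + df_error)


# (F, df_effect, df_error, reported partial eta squared), copied from the note.
reported = {
    "STIMULUS TYPE x INTENSITY x GROUP": (2.579, 6, 108, 0.125),
    "STIMULUS TYPE x INTENSITY, DGS and SSS": (3.617, 6, 78, 0.218),
    "STIMULUS TYPE x INTENSITY, SSS only": (10.154, 6, 30, 0.670),
    "STIMULUS TYPE at lowest intensity, DGS and SSS": (9.560, 2, 26, 0.424),
    "STIMULUS TYPE at lowest intensity, SSS only": (1.258, 2, 10, 0.201),
}

for label, (f, df1, df2, eta_reported) in reported.items():
    eta = partial_eta_squared(f, df1, df2)
    print(f"{label}: recomputed {eta:.3f}, reported {eta_reported:.3f}")
```

Each recomputed value rounds to the reported one at three decimals, which indicates that the effect sizes and F statistics in the note are mutually consistent.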

Acknowledgements

We cordially thank both the deaf and hearing participants for their willingness to take part in our study. We are grateful to Christine Wulf for technical assistance.

Author information

Contributions

CD and RZ designed the study, analyzed the data, and wrote the paper. BNK designed the study and collected and analyzed the data. SRS and OGL wrote the paper.

Ethics declarations

Conflict of interest

The authors declare no conflict of interest.

About this article

Cite this article

Dobel, C., Nestler-Collatz, B., Guntinas-Lichius, O. et al. Deaf signers outperform hearing non-signers in recognizing happy facial expressions. Psychological Research 84, 1485–1494 (2020). https://doi.org/10.1007/s00426-019-01160-y

DOI: https://doi.org/10.1007/s00426-019-01160-y