Abstract

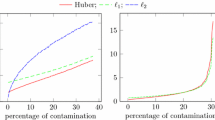

Robust estimation of a mean vector, a topic regarded as obsolete in the traditional robust statistics community, has seen a surge of interest in the machine learning literature over the last decade. The latest focus is on the sub-Gaussian performance and computability of the estimators in a non-asymptotic setting. Numerous traditional robust estimators are computationally intractable, which partly explains the renewed interest in robust mean estimation. Classical robust centrality estimators, however, include the trimmed mean and the sample median. The latter has the best possible robustness but suffers from low efficiency. The trimmed mean and the median of means, both achieving sub-Gaussian performance, have been proposed and studied in the literature. This article investigates the robustness of leading sub-Gaussian estimators of the mean and reveals that none of them can resist more than \(25\%\) contamination in the data. It therefore introduces an outlyingness induced winsorized mean that has the best possible robustness (it can resist up to \(50\%\) contamination without breakdown) while achieving high efficiency. Furthermore, it has sub-Gaussian performance for uncontaminated samples and a bounded estimation error for contaminated samples at a given confidence level in a finite sample setting. It can be computed in linear time.
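To make the estimators named above concrete, the following is a minimal univariate sketch in Python. It is an illustration under stated assumptions, not the article's definition: the abstract does not specify the outlyingness function or the winsorizing threshold, so the sketch assumes outlyingness is measured as the distance from the sample median in units of the median absolute deviation (MAD). The function names and the threshold `beta` are hypothetical.

```python
import numpy as np


def median_of_means(x, k):
    """Standard median of means: split x into k blocks, average each
    block, and return the median of the block means."""
    x = np.asarray(x, dtype=float)
    blocks = np.array_split(x, k)
    return float(np.median([b.mean() for b in blocks]))


def winsorized_mean_by_outlyingness(x, beta=3.0):
    """Winsorized mean driven by a scaled-deviation outlyingness.

    Assumption (not taken from the abstract): the outlyingness of a point
    is |x_i - median| / MAD, and points whose outlyingness exceeds `beta`
    are pulled back to median +/- beta * MAD before averaging.
    """
    x = np.asarray(x, dtype=float)
    med = np.median(x)
    mad = np.median(np.abs(x - med))
    if mad == 0.0:
        # Degenerate case: at least half of the points equal the median.
        return float(med)
    lower, upper = med - beta * mad, med + beta * mad
    return float(np.clip(x, lower, upper).mean())


# Hypothetical sample: six clean points plus four gross outliers
# (40% contamination).
clean = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
contaminated = clean + [1e6] * 4
plain_mean = float(np.mean(contaminated))
robust_mean = winsorized_mean_by_outlyingness(contaminated)
```

In this toy sample the median and MAD are still determined by the clean points, so the winsorized value stays near the clean data while the ordinary mean is dragged toward the outliers. The sketch says nothing about the sub-Gaussian guarantees claimed in the abstract.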

Acknowledgements

The author thanks Hanshi Zuo and Profs. Wei Shao, Yimin Xiao, and Haolei Weng for insightful comments and stimulating discussions. Helpful comments and suggestions from two anonymous reviewers are highly appreciated.

About this article

Cite this article

Zuo, Y. Non-asymptotic analysis and inference for an outlyingness induced winsorized mean. Stat Papers 64, 1465–1481 (2023). https://doi.org/10.1007/s00362-022-01353-5

DOI: https://doi.org/10.1007/s00362-022-01353-5

Keywords

- Non-asymptotic analysis

- Centrality estimation

- Sub-Gaussian performance

- Computability

- Finite sample breakdown point