Abstract

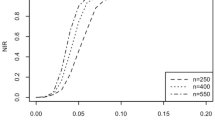

In this paper, we consider the problem of variable selection in a higher-order partially linear spatial autoregressive model with a diverging number of parameters. By combining the series approximation method, the two-stage least squares method and a class of non-convex penalty functions, we propose a variable selection method that simultaneously selects the significant spatial lags of the response variable and the significant explanatory variables in the parametric component, and estimates the corresponding nonzero parameters. Unlike existing variable selection methods for spatial autoregressive models, the proposed method can simultaneously select significant explanatory variables and spatial lags of the response variable. Under appropriate conditions, we establish the rate of convergence of the penalized estimator of the parameter vector in the parametric component and the uniform rate of convergence of the series estimator of the nonparametric component, and show that the proposed method enjoys the oracle property. That is, it estimates the zero parameters as exactly zero with probability approaching one, and estimates the nonzero parameters as efficiently as if the true model were known in advance. Simulation studies show that the proposed method has satisfactory finite-sample properties. In particular, when the sample size is moderate, the method works well even when the correlation among the explanatory variables in the parametric component is strong. An application to the Boston house price data serves as a practical illustration.

References

Ai CR, Zhang YQ (2017) Estimation of partially specified spatial panel data models with fixed-effects. Econom Rev 36:6–22

Badinger H, Egger P (2011) Estimation of higher-order spatial autoregressive cross-section models with heteroscedastic disturbances. Pap Reg Sci 90:213–235

Cai ZW, Xu XP (2008) Nonparametric quantile estimations for dynamic smooth coefficient models. J Am Stat Assoc 103:1595–1608

Du J, Sun XQ, Cao RY, Zhang ZZ (2018) Statistical inference for partially linear additive spatial autoregressive models. Spat Stat 25:52–67

Fan JQ, Huang T (2005) Profile likelihood inferences on semiparametric varying-coefficient partially linear models. Bernoulli 11:1031–1057

Fan JQ, Li RZ (2001) Variable selection via nonconcave penalized likelihood and its oracle properties. J Am Stat Assoc 96:1348–1360

Gupta A, Robinson PM (2015) Inference on higher-order spatial autoregressive models with increasingly many parameters. J Econom 186:19–31

Harrison D, Rubinfeld DL (1978) Hedonic housing prices and the demand for clean air. J Environ Econ Manage 5:81–102

Horn R, Johnson C (1985) Matrix analysis. Cambridge University Press, Cambridge

Hoshino T (2018) Semiparametric spatial autoregressive models with endogenous regressors: with an application to crime data. J Bus Econ Stat 36:160–172

Kelejian HH, Prucha IR (1999) A generalized moments estimator for the autoregressive parameter in a spatial model. Int Econom Rev 40:509–533

Kong E, Xia YC (2012) A single-index quantile regression model and its estimation. Econ Theory 28:730–768

Lee LF (2004) Asymptotic distributions of quasi-maximum likelihood estimators for spatial autoregressive models. Econometrica 72:1899–1925

Lee LF, Liu XD (2010) Efficient GMM estimation of high order spatial autoregressive models. Econ Theory 26:187–230

Lesage JP, Pace RK (2009) Introduction to spatial econometrics. CRC Press, Boca Raton

Li DK, Mei CL, Wang N (2019) Tests for spatial dependence and heterogeneity in spatially autoregressive varying coefficient models with application to Boston house price analysis. Reg Sci Urban Econ 79:103470

Li TZ, Mei CL (2013) Testing a polynomial relationship of the non-parametric component in partially linear spatial autoregressive models. Pap Reg Sci 92:633–649

Li TZ, Mei CL (2016) Statistical inference on the parametric component in partially linear spatial autoregressive models. Commun Stat Simul Comput 45:1991–2006

Lin X, Lee LF (2010) GMM estimation of spatial autoregressive models with unknown heteroskedasticity. J Econom 157:34–52

Lin X, Weinberg B (2014) Unrequited friendship? How reciprocity mediates adolescent peer effects. Reg Sci Urban Econ 48:144–153

Liu X, Chen JB, Cheng SL (2018) A penalized quasi-maximum likelihood method for variable selection in the spatial autoregressive model. Spat Stat 25:86–104

Luo GW, Wu MX (2019) Variable selection for semiparametric varying-coefficient spatial autoregressive models with a diverging number of parameters. Commun Stat Theory Methods 50(9):2062–2079. https://doi.org/10.1080/03610926.2019.1659367

Newey WK (1997) Convergence rates and asymptotic normality for series estimators. J Econom 79:147–168

Neyman J, Scott EL (1948) Consistent estimates based on partially consistent observations. Econometrica 16:1–32

Pace RK, Gilley OW (1997) Using the spatial configuration of the data to improve estimation. J Real Estate Financ Econ 14:333–340

Su LJ (2012) Semiparametric GMM estimation of spatial autoregressive models. J Econom 167:543–560

Su LJ, Jin SN (2010) Profile quasi-maximum likelihood estimation of partially linear spatial autoregressive models. J Econom 157:18–33

Sun Y, Yan HJ, Zhang WY, Lu ZD (2014) A semiparametric spatial dynamic model. Ann Stat 42:700–727

Tao J (2005) Spatial econometrics: models, methods and applications. Dissertation, Ohio State University

Wang HS, Li RZ, Tsai C-L (2007) Tuning parameter selectors for the smoothly clipped absolute deviation method. Biometrika 94:553–568

Wu YQ, Sun Y (2017) Shrinkage estimation of the linear model with spatial interaction. Metrika 80:51–68

Xie HL, Huang J (2009) SCAD-penalized regression in high-dimensional partially linear models. Ann Stat 37:673–696

Xie TF, Cao RY, Du J (2020) Variable selection for spatial autoregressive models with a diverging number of parameters. Stat Pap 61:1125–1145

Yang ZL (2018) Bootstrap LM tests for higher-order spatial effects in spatial linear regression models. Empir Econ 55:35–68

Zhang YQ, Sun YQ (2015) Estimation of partially specified dynamic spatial panel data models with fixed-effects. Reg Sci Urban Econ 51:37–46

Zhang YQ, Yang GR (2015a) Statistical inference of partially specified spatial autoregressive model. Acta Math Appl Sin E 31:1–16

Zhang YQ, Yang GR (2015b) Estimation of partially specified spatial panel data models with random-effects. Acta Math Sin 31:456–478

Zhang ZY (2013) A pairwise difference estimator for partially linear spatial autoregressive models. Spatial Econom Anal 8:176–194

Zou H (2006) The adaptive lasso and its oracle properties. J Am Stat Assoc 101:1418–1429

Acknowledgements

The authors are grateful to the editor Christine H. Müller and the reviewers for their constructive comments and suggestions, which led to an improved version of this paper. This research was supported by the Natural Science Foundation of Shaanxi Province [Grant Number 2021JM349], the National Statistical Science Project [Grant Number 2019LY36] and the National Natural Science Foundation of China [Grant Number 11972273].

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix: Proofs

In this appendix, we give the technical proofs of Theorems 1–3. In our proofs, we will frequently use the following three facts.

Fact 1. If the row and column sums of \(n{\times }n\) matrices \({\mathbf{A}}_{n1}\) and \({\mathbf{A}}_{n2}\) are uniformly bounded in absolute value, then the row and column sums of \({\mathbf{A}}_{n1}{\mathbf{A}}_{n2}\) and \({\mathbf{A}}_{n2}{\mathbf{A}}_{n1}\) are also uniformly bounded in absolute value.

Fact 2. The largest eigenvalue of an idempotent matrix is at most one.

Fact 3. For any \(n{\times }n\) matrix \({\mathbf{A}}_{n}\), its spectral radius is bounded by \(\mathrm{{max}}_{1{\le }i{\le }n} \sum _{j=1}^{n}|a_{n,ij}|\), where \(a_{n,ij}\) is the (i, j)th element of \({\mathbf{A}}_{n}\).
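The three facts can be checked numerically on small examples. The sketch below is an illustration only, not part of the proofs: the ring-shaped, row-normalised weights matrices, the random matrix `h` and all function names are hypothetical choices made for this example, and NumPy is assumed to be available.

```python
import numpy as np


def max_abs_row_sum(a):
    """Largest absolute row sum of a square matrix."""
    return np.abs(a).sum(axis=1).max()


def max_abs_col_sum(a):
    """Largest absolute column sum of a square matrix."""
    return np.abs(a).sum(axis=0).max()


def spectral_radius(a):
    """Largest modulus among the eigenvalues of a square matrix."""
    return np.abs(np.linalg.eigvals(a)).max()


def row_normalised_ring(n, k):
    """Hypothetical weights matrix: units sit on a circle and each unit's
    neighbours are the k nearest units on either side; rows sum to one."""
    w = np.zeros((n, n))
    for i in range(n):
        for d in range(1, k + 1):
            w[i, (i + d) % n] = 1.0
            w[i, (i - d) % n] = 1.0
    return w / w.sum(axis=1, keepdims=True)


n = 50
a1 = row_normalised_ring(n, 1)
a2 = row_normalised_ring(n, 3)

# Fact 1: row and column sums of the product are bounded by the
# products of the corresponding bounds for the two factors.
prod = a1 @ a2
fact1_row_ok = max_abs_row_sum(prod) <= max_abs_row_sum(a1) * max_abs_row_sum(a2) + 1e-12
fact1_col_ok = max_abs_col_sum(prod) <= max_abs_col_sum(a1) * max_abs_col_sum(a2) + 1e-12

# Fact 2: an orthogonal projection matrix is idempotent and its
# largest eigenvalue does not exceed one (up to rounding error).
rng = np.random.default_rng(0)
h = rng.standard_normal((n, 5))
m = h @ np.linalg.solve(h.T @ h, h.T)
idempotent_ok = np.allclose(m @ m, m)
largest_eig = np.linalg.eigvalsh((m + m.T) / 2).max()

# Fact 3: the spectral radius is bounded by the largest absolute row sum.
a3 = rng.standard_normal((n, n))
fact3_ok = spectral_radius(a3) <= max_abs_row_sum(a3) + 1e-9

print(fact1_row_ok, fact1_col_ok, idempotent_ok, largest_eig, fact3_ok)
```

Fact 1 is the reason products such as \({\mathbf{G}}_{nj}{\mathbf{G}}_{nj}^{{\mathrm{T}}}\) inherit bounded row sums, and Fact 3 then turns that bound into a bound on the largest eigenvalue.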

Proof of Theorem 1

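Let \({\alpha }_{n}={\sqrt{p_{n}/n}}+K^{-{\delta }}\) and \({\varvec{\theta }}={\varvec{\theta }}_{0}+{\alpha }_{n}{\mathbf{u}}\). It suffices to prove that for any given \({\eta }>0\), there exists a sufficiently large positive constant C such that

\[P\left\{ \inf _{\Vert {\mathbf{u}}\Vert =C}Q({\varvec{\theta }}_{0}+{\alpha }_{n}{\mathbf{u}})>Q({\varvec{\theta }}_{0})\right\} {\ge }1-{\eta }. \tag{14}\]
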
This implies that with probability at least \(1-{\eta }\), there exists a local minimizer \(\widehat{\varvec{\theta }}\) in the ball \(\{{\varvec{\theta }}_{0}+{\alpha }_{n}{\mathbf{u}}:{\Vert {\mathbf{u}}\Vert {\le }C}\}\) such that \(\Vert \widehat{\varvec{\theta }}-{\varvec{\theta }}_{0}\Vert = \mathrm{{O}}_{P}({\alpha }_{n})\).

Let

and

It follows from the assumptions about the penalty function that \(p_{{\lambda }_{j}}(0)=0\) and \(p_{{\lambda }_{j}}(\cdot )\) is increasing on \([0,{\infty })\). Thus, we have

For \({C}_{n1}\), we have

Let \({\mathbf{V}}_{n}={\mathbf{m}}_{0}({\mathbf{Z}}_{n})-{\mathbf{P}}_{n}{\varvec{\nu }}_{0}\) and \({\overline{\varvec{\varepsilon }}}_{n}=({\mathbf{G}}_{n1}{\varvec{\varepsilon }}_{n}, \ldots ,{\mathbf{G}}_{nr}{\varvec{\varepsilon }}_{n},{\mathbf{0}}_{n{\times }p_{n}})\), then it is easy to show \({\mathbf{D}}_{n}={\overline{\mathbf{D}}}_{n}+{\overline{\varvec{\varepsilon }}}_{n}\). Thus, \({D}_{n1}\) can be decomposed into

where \({D}_{n11}={\varvec{\varepsilon }}_{n}^{{\mathrm{T}}}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n}){\mathbf{M}}_{n}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n})\overline{\mathbf{D}}_{n}{\mathbf{u}}\), \({D}_{n12}={\varvec{\varepsilon }}_{n}^{{\mathrm{T}}}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n}){\mathbf{M}}_{n}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n})\overline{\varvec{\varepsilon }}_{n}{\mathbf{u}}\), \({D}_{n13}={\mathbf{V}}_{n}^{{\mathrm{T}}}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n}){\mathbf{M}}_{n}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n})\overline{\mathbf{D}}_{n}{\mathbf{u}}\) and \({D}_{n14}={\mathbf{V}}_{n}^{{\mathrm{T}}}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n}){\mathbf{M}}_{n}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n})\overline{\varvec{\varepsilon }}_{n}{\mathbf{u}}\).

By Assumption 2 and Fact 2, we have

This together with Markov’s inequality implies that

It follows from Assumption 3.4 and Fact 2 that

By (15), (16) and the Cauchy–Schwarz inequality, we obtain \({D}_{n11}=\mathrm{{O}}_{P}({\sqrt{np_{n}}}\Vert {\mathbf{u}}\Vert )\).

For \(j=1,\ldots ,r\), it follows from Assumption 1.3 and Fact 1 that the row sums of \({\mathbf{G}}_{nj}{\mathbf{G}}_{nj}^{{\mathrm{T}}}\) are uniformly bounded in absolute value. Hence, we obtain \({\eta }_{\max }({\mathbf{G}}_{nj}{\mathbf{G}}_{nj}^{{\mathrm{T}}})=\mathrm{{O}}(1)\) by Fact 3. Using this result and a similar proof to that of (15), we have

This together with (15) and Cauchy–Schwarz inequality yields

It follows from (17) and Cauchy–Schwarz inequality that

By Fact 2 and Assumption 4.1, we obtain

Using (16), (18) and Cauchy–Schwarz inequality, we get

Similarly, we can prove \(D_{n14}=\mathrm{{O}}_{P}({\sqrt{np_{n}}}K^{-{\delta }}\Vert {\mathbf{u}}\Vert )\) by using (17), (18) and Cauchy–Schwarz inequality. Combining the orders of \(D_{n11}\), \(D_{n12}\), \(D_{n13}\) and \(D_{n14}\), we obtain

For \(D_{n2}\), we have

where \(D_{n21}={\mathbf{u}}^{{\mathrm{T}}}\overline{\mathbf{D}}_{n}^{{\mathrm{T}}}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n}) {\mathbf{M}}_{n}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n})\overline{\mathbf{D}}_{n}{\mathbf{u}}\), \(D_{n22}={\mathbf{u}}^{{\mathrm{T}}}\overline{\varvec{\varepsilon }}_{n}^{{\mathrm{T}}}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n}) {\mathbf{M}}_{n}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n})\overline{\varvec{\varepsilon }}_{n}{\mathbf{u}}\) and \(D_{n23}={\mathbf{u}}^{{\mathrm{T}}}\overline{\mathbf{D}}_{n}^{{\mathrm{T}}}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n}) {\mathbf{M}}_{n}({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n})\overline{\varvec{\varepsilon }}_{n}{\mathbf{u}}\). Similar to the proof of \(D_{n1}\), we can obtain \(D_{n21}=\mathrm{{O}}(n\Vert {\mathbf{u}}\Vert ^{2})\), \(D_{n22}=\mathrm{{O}}_{P}(p_{n}\Vert {\mathbf{u}}\Vert ^{2})\) and \(D_{n23}=\mathrm{{O}}_{P}({\sqrt{np_{n}}}\Vert {\mathbf{u}}\Vert ^{2})\). Thus, we have \(D_{n2}=\mathrm{{O}}_{P}(n\Vert {\mathbf{u}}\Vert ^{2})\).

Next, we consider \({C}_{n2}\). Let \(a_{n}=\max \{|p_{{\lambda }_{j}}^{\prime }(|{\theta }_{j0}|)|, {\theta }_{j0}{\ne }0\}\) and \(b_{n}=\max \{|p_{{\lambda }_{j}}^{\prime \prime }(|{\theta }_{j0}|)|, {\theta }_{j0}{\ne }0\}\). Then, it follows from \({\lambda }_{{\mathrm{max}}}{\rightarrow }0\) as \(n{\rightarrow }{\infty }\) and Condition (6) on the penalty function that \(a_{n}=o(1)\) and \(b_{n}=o(1)\). By using a Taylor expansion of the penalty function and the Cauchy–Schwarz inequality, we have

By comparing the orders of \({\alpha }_{n}{D}_{n1}\), \({\alpha }_{n}^{2}{D}_{n2}\) and \({C}_{n2}\) and noting that \({a_{n}}=\mathrm{{o}}(1)\) and \({b_{n}}=\mathrm{{o}}(1)\), we can conclude that \({\alpha }_{n}^{2}{D}_{n2}\) dominates both \({\alpha }_{n}{D}_{n1}\) and \({C}_{n2}\) provided C is sufficiently large. Thus, (14) holds for sufficiently large C. This completes the proof of Theorem 1. \(\square \)
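The order comparison in this last step can be made explicit. The following is a sketch under two assumptions that are labeled as such because the corresponding displays are not restated here: (i) the bounds on \(D_{n11},\ldots ,D_{n14}\) combine to \(D_{n1}=\mathrm{O}_{P}(n{\alpha }_{n}\Vert {\mathbf{u}}\Vert )\), which is consistent with \(\sqrt{np_{n}}=n\sqrt{p_{n}/n}\le n{\alpha }_{n}\); and (ii) there is a constant \(c>0\), introduced here for illustration, such that \(D_{n2}\ge cn\Vert {\mathbf{u}}\Vert ^{2}\) with probability approaching one.

```latex
% Sketch under assumptions (i) and (ii) stated in the lead-in; \|u\| = C.
\begin{align*}
|\alpha_n D_{n1}| &= \mathrm{O}_P\!\left(n\alpha_n^{2}\,\|\mathbf{u}\|\right)
                   = \mathrm{O}_P\!\left(n\alpha_n^{2}\,C\right), \\
\alpha_n^{2} D_{n2} &\ge c\,n\alpha_n^{2}\,\|\mathbf{u}\|^{2}
                   = c\,n\alpha_n^{2}\,C^{2}
                   \quad\text{with probability approaching one}, \\
\frac{|\alpha_n D_{n1}|}{\alpha_n^{2} D_{n2}} &= \mathrm{O}_P\!\left(\frac{1}{C}\right).
\end{align*}
```

Under these assumptions the linear term is of order \(C\) and the quadratic term of order \(C^{2}\) on the sphere \(\Vert {\mathbf{u}}\Vert =C\), so the ratio can be made arbitrarily small by taking C large; this is the sense in which the quadratic term dominates.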

Proof of Theorem 2

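We first prove part (a). It is sufficient to prove that, for any \({\varvec{\theta }}\) satisfying \(\Vert {\varvec{\theta }}-{\varvec{\theta }}_{0}\Vert = \mathrm{{O}}_{P}({\sqrt{p_{n}/n}}+K^{-{\delta }})\) and some small \({{\delta }}_{n}=C({\sqrt{p_{n}/n}}+K^{-{\delta }})\), with probability tending to 1 as \(n \rightarrow \infty \),

\[\frac{\partial {Q({\varvec{\theta }})}}{\partial {{\theta }_{j}}}>0 \quad \text{for } 0<{\theta }_{j}<{\delta }_{n},\quad j=t+l+1,\ldots ,p_{n}+r, \tag{19}\]

and

\[\frac{\partial {Q({\varvec{\theta }})}}{\partial {{\theta }_{j}}}<0 \quad \text{for } -{\delta }_{n}<{\theta }_{j}<0,\quad j=t+l+1,\ldots ,p_{n}+r. \tag{20}\]
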
Hence, (19) and (20) imply that the minimum of \(Q({\varvec{\theta }})\) is attained at \({\theta }_{j}=0,j=t+l+1,\ldots ,p_{n}+r\).

For \(j=t+l+1,\ldots ,p_{n}+r\), we have

By using the same arguments as those used in the proof of Theorem 1 and noting that \({\varvec{\theta }}-{\varvec{\theta }}_{0}= \mathrm{{O}}_{P}({\sqrt{p_{n}/n}}+K^{-{\delta }})\), we can conclude that

and

Combining the above results, we get

Since \(p_{{\lambda }_{j}}^{\prime }(0+)={\lambda }_{j}\), we have \({\lambda }_{j}^{-1}p_{{\lambda }_{j}}^{\prime }(|{\theta }_{j}|) {\ge }\mathop {\lim \inf }_{n \rightarrow \infty }\mathop {\lim \inf }_{{\theta }_{j} \rightarrow 0}{\lambda }_{j}^{-1}p_{{\lambda }_{j}}^{\prime }(|{\theta }_{j}|)=1\). This together with \({\lambda }_{j}\left( {\sqrt{p_{n}/n}}+K^{-{\delta }}\right) ^{-1} {\ge }{\lambda }_{{\mathrm{min}}}\left( {\sqrt{p_{n}/n}}+K^{-{\delta }}\right) ^{-1}{\rightarrow }{\infty }\) as \(n{\rightarrow }{\infty }\) implies that the sign of \(\frac{\partial {Q({\varvec{\theta }})}}{\partial {{\theta }_{j}}}\) is completely determined by that of \({\theta }_{j}\). As a result, (19) and (20) hold.
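The two properties of the penalty derivative that drive this argument can be seen concretely for the SCAD and MCP penalties. The sketch below uses the standard derivative formulas of these penalties; the function names, the defaults `a=3.7` and `gamma=3.0`, and the numerical values are assumptions made for this illustration and are not taken from the paper.

```python
def scad_derivative(theta, lam, a=3.7):
    """Derivative of the SCAD penalty at |theta| (right limit at zero).

    Equals lam on [0, lam], decreases linearly on (lam, a*lam],
    and is zero beyond a*lam. Requires a > 2.
    """
    t = abs(theta)
    if t <= lam:
        return lam
    return max(a * lam - t, 0.0) / (a - 1.0)


def mcp_derivative(theta, lam, gamma=3.0):
    """Derivative of the MCP penalty at |theta| (right limit at zero).

    Decreases linearly from lam at zero to zero at gamma*lam.
    Requires gamma > 1.
    """
    return max(lam - abs(theta) / gamma, 0.0)


lam = 0.1

# Near zero the derivative is (approximately) lam, so lam**(-1) * p'(|theta|)
# stays close to one: this is the property p'(0+) = lam used above.
near_zero = (scad_derivative(1e-9, lam), mcp_derivative(1e-9, lam))

# For a fixed nonzero coefficient and small lam the derivative vanishes,
# so the penalty does not bias large coefficients.
far_from_zero = (scad_derivative(0.5, lam), mcp_derivative(0.5, lam))

print(near_zero, far_from_zero)
```

The first pair shows why a small coefficient is pushed to exactly zero when \({\lambda }_{j}\) is large relative to \({\sqrt{p_{n}/n}}+K^{-{\delta }}\); the second pair shows the flat tail of both penalties.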

Next, we prove part (b). Since \({\widehat{\varvec{\theta }}}=(({\widehat{\varvec{\theta }}}^{*})^{{\mathrm{T}}},{\mathbf{0}})^{{\mathrm{T}}}\) minimizes \(Q({\varvec{\theta }})\), \({\widehat{\varvec{\theta }}}\) must satisfy the following system of equations

That is

By straightforward derivation, we have

where \(\widetilde{\mathbf{D}}_{n}^{*}=({\mathbf{I}}_{n}-{\varvec{\varPi }}_{n}){\mathbf{D}}_{n}^{*}\). By applying Taylor expansion to \(p_{{\lambda }_{j}}^{\prime }(|{\widehat{\theta }}_{j}^{*}|)\), we obtain

For both SCAD and MCP penalty functions, as \({\lambda }_{\max }{\rightarrow }0\), \(p_{{\lambda }_{j}}^{\prime }(|{\theta }_{j0}^{*}|)=0\) and \(p_{{\lambda }_{j}}^{\prime \prime }(|{\theta }_{j0}^{*}|)=0\). Thus, we have

This together with (21) and (22) yields

It follows from (23) that

Note that \({\mathbf{M}}_{n}^{*}\) is an \(n{\times }n\) idempotent matrix. Thus, by using the same arguments as those in the proof of Theorem 1, we can obtain

and

Let \({\overline{\varvec{\varepsilon }}}_{n}^{*}=({\mathbf{G}}_{n1}{\varvec{\varepsilon }}_{n}, \ldots ,{\mathbf{G}}_{nt}{\varvec{\varepsilon }}_{n},{\mathbf{0}}_{n{\times }l})\); then \({\mathbf{D}}_{n}^{*}=\overline{\mathbf{D}}_{n}^{*}+\overline{\varvec{\varepsilon }}_{n}^{*}\). Using this together with the Cauchy–Schwarz inequality and the above four results, we have

and

Combining (24)–(27), we obtain

By using the central limit theorem and Slutsky's lemma, we have

\(\square \)

Proof of Theorem 3

First, we prove part (a). By the definition of \({\widehat{\varvec{\nu }}}\), we have

where \(C_{n6}=({\mathbf{P}}_{n}^{{\mathrm{T}}}{\mathbf{P}}_{n})^{-1}{\mathbf{P}}_{n}^{{\mathrm{T}}}{\mathbf{D}}_{n} ({\varvec{\theta }}_{0}-\widehat{\varvec{\theta }})\), \(C_{n7}=({\mathbf{P}}_{n}^{{\mathrm{T}}}{\mathbf{P}}_{n})^{-1}{\mathbf{P}}_{n}^{{\mathrm{T}}}{\mathbf{V}}_{n}\) and \(C_{n8}=({\mathbf{P}}_{n}^{{\mathrm{T}}}{\mathbf{P}}_{n})^{-1}{\mathbf{P}}_{n}^{{\mathrm{T}}}{\varvec{\varepsilon }}_{n}\).

By Assumption 3.4, Fact 2 and \({\mathbf{D}}_{n}=\overline{\mathbf{D}}_{n}+\overline{\varvec{\varepsilon }}_{n}\), we obtain

where \(C_{n61}={{\underline{c}}}_{P}^{-1}(\widehat{\varvec{\theta }}-{\varvec{\theta }}_{0})^{{\mathrm{T}}} (n^{-1}\overline{\mathbf{D}}_{n}^{{\mathrm{T}}}\overline{\mathbf{D}}_{n}) (\widehat{\varvec{\theta }}-{\varvec{\theta }}_{0})\), \(C_{n62}={{\underline{c}}}_{P}^{-1}(\widehat{\varvec{\theta }}-{\varvec{\theta }}_{0})^{{\mathrm{T}}} (n^{-1}\overline{\varvec{\varepsilon }}_{n}^{{\mathrm{T}}}\overline{\varvec{\varepsilon }}_{n}) (\widehat{\varvec{\theta }}-{\varvec{\theta }}_{0})\) and \(C_{n63}=2{{\underline{c}}}_{P}^{-1}(\widehat{\varvec{\theta }}-{\varvec{\theta }}_{0})^{{\mathrm{T}}} (n^{-1}\overline{\mathbf{D}}_{n}^{{\mathrm{T}}}\overline{\varvec{\varepsilon }}_{n}) (\widehat{\varvec{\theta }}-{\varvec{\theta }}_{0})\).

It follows from Theorem 1 that \(\Vert \widehat{\varvec{\theta }}-{\varvec{\theta }}_{0}\Vert ^{2}= \mathrm{{O}}_{P}({{p_{n}/n}}+K^{-2{\delta }})\). This together with Assumption 3.4 yields

For \(i,j=1,\ldots ,r\), by Assumption 1.3 and Facts 1 and 3, we have

This implies that \(n^{-1}{\varvec{\varepsilon }}_{n}^{{\mathrm{T}}}{\mathbf{G}}_{ni}^{{\mathrm{T}}}{\mathbf{G}}_{nj}{\varvec{\varepsilon }}_{n} =\mathrm{{O}}_{P}(1)\) (\(i,j=1,\ldots ,r\)). Thus, we get

By using Cauchy–Schwarz inequality and the orders of \(C_{n61}\) and \(C_{n62}\), we can show that

Combining the orders of \(C_{n61}\), \(C_{n62}\) and \(C_{n63}\), we obtain

From the proof of (18), we have \(\Vert {\mathbf{V}}_{n}\Vert ^{2}=\mathrm{{O}}(nK^{-2{\delta }})\). This together with Assumption 4.4 and Fact 2 yields

This means that \(\Vert C_{n7}\Vert =\mathrm{{O}}(K^{-{\delta }})\).

Under Assumption 4.4, we have

This implies that \(\Vert C_{n8}\Vert =\mathrm{{O}}_{P}({\sqrt{K/n}})\).
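The \({\sqrt{K/n}}\) rate for \(C_{n8}\) can be illustrated numerically. The sketch below is an illustration under assumptions that are not taken from the paper: the errors are independent with common variance `sigma2`, and the basis is a hypothetical cosine basis evaluated on an equally spaced grid, for which \({\mathbf{P}}_{n}^{{\mathrm{T}}}{\mathbf{P}}_{n}=n{\mathbf{I}}_{K}\). Under independent homoskedastic errors, the expected squared norm of \(({\mathbf{P}}_{n}^{{\mathrm{T}}}{\mathbf{P}}_{n})^{-1}{\mathbf{P}}_{n}^{{\mathrm{T}}}{\varvec{\varepsilon }}_{n}\) equals `sigma2` times the trace of \(({\mathbf{P}}_{n}^{{\mathrm{T}}}{\mathbf{P}}_{n})^{-1}\), which is \(K/n\) times `sigma2` for this basis.

```python
import numpy as np


def cosine_basis(n, k):
    """n-by-k matrix with entries sqrt(2)*cos(j*pi*z_i), j = 1..k,
    evaluated at the grid midpoints z_i = (i - 0.5)/n (hypothetical basis)."""
    z = (np.arange(1, n + 1) - 0.5) / n
    j = np.arange(1, k + 1)
    return np.sqrt(2.0) * np.cos(np.pi * np.outer(z, j))


def expected_sq_norm(p, sigma2=1.0):
    """E||(P'P)^{-1} P' eps||^2 for independent errors with variance sigma2."""
    return sigma2 * np.trace(np.linalg.inv(p.T @ p))


n, k = 400, 8
p = cosine_basis(n, k)

# Exact expectation: equals k/n for this basis because P'P = n * I.
exact = expected_sq_norm(p)

# Monte Carlo check with standard normal errors (one column per replication).
rng = np.random.default_rng(1)
draws = rng.standard_normal((n, 2000))
coef = np.linalg.solve(p.T @ p, p.T @ draws)
monte_carlo = (coef ** 2).sum(axis=0).mean()

print(exact, k / n, monte_carlo)
```

Taking square roots, the norm is of order \({\sqrt{K/n}}\) in probability, matching the stated order of \(\Vert C_{n8}\Vert \).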

Combining the orders of \(C_{n6}\), \(C_{n7}\) and \(C_{n8}\) with triangle inequality, we obtain

This together with Assumptions 4.1 and 4.3 yields

Thus, we complete the proof of part (b). \(\square \)

Cite this article

Li, T., Kang, X. Variable selection of higher-order partially linear spatial autoregressive model with a diverging number of parameters. Stat Papers 63, 243–285 (2022). https://doi.org/10.1007/s00362-021-01241-4

DOI: https://doi.org/10.1007/s00362-021-01241-4