Abstract

Objective

To develop a U-Net for fully automated localization and segmentation of cervical tumors in magnetic resonance (MR) images, to evaluate its performance, and to assess the robustness of the apparent diffusion coefficient (ADC) radiomics features extracted from its segmentations.

Methods

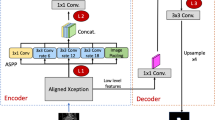

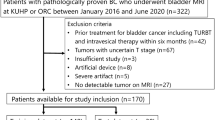

This retrospective study analyzed MR images from 169 patients with stage IB–IVA cervical cancer. Diffusion-weighted (DW) images from 144 patients were used for training, and those from the remaining 25 patients were used for testing. A U-Net convolutional network was developed to perform automated tumor segmentation, with the manually delineated tumor region serving as the ground truth. Segmentation performance was assessed for various combinations of input sources used for training. ADC radiomics features were extracted from the manual and automated segmentations, and their agreement was assessed using Pearson correlation. The reproducibility of the results across training iterations was also assessed.
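To make the "combinations of input sources" concrete, the sketch below derives an ADC map from the b0 and b1000 signals with the standard two-point mono-exponential model, ADC = ln(S0 / S1000) / (b1000 - b0), and stacks b0, b1000, and ADC into a triple-channel input for each voxel. It is a minimal illustration under stated assumptions, not the authors' pipeline: b-values of 0 and 1000 s/mm² are assumed, images are flattened into plain Python lists, the helper name `make_triple_channel` is hypothetical, and per-channel z-score normalization is an illustrative choice that the abstract does not report. The study may equally have used scanner-generated ADC maps.

```python
import math
from statistics import mean, pstdev

B0, B1000 = 0.0, 1000.0  # assumed b-values in s/mm^2


def adc_map(s0, s1000, eps=1e-6):
    """Two-point mono-exponential ADC, ln(S0 / S1000) / (b1000 - b0), in mm^2/s."""
    adc = []
    for a, b in zip(s0, s1000):
        # Guard against zero or negative signal (e.g. background voxels).
        if a <= eps or b <= eps:
            adc.append(0.0)
        else:
            adc.append(math.log(a / b) / (B1000 - B0))
    return adc


def zscore(values):
    """Per-channel z-score normalization (illustrative assumption)."""
    mu, sd = mean(values), pstdev(values)
    return [(v - mu) / sd if sd > 0 else 0.0 for v in values]


def make_triple_channel(s0, s1000):
    """Hypothetical helper: stack b0, b1000 and ADC into a 3-channel input per voxel."""
    adc = adc_map(s0, s1000)
    channels = [zscore(s0), zscore(s1000), zscore(adc)]
    # Transpose to voxel-major order: [[b0, b1000, adc], ...]
    return [list(v) for v in zip(*channels)], adc


if __name__ == "__main__":
    # Toy signal intensities, not study data.
    x, adc = make_triple_channel([1000.0, 800.0, 1200.0, 0.0], [367.9, 400.0, 300.0, 0.0])
    print([round(a * 1e3, 3) for a in adc])  # ADC in units of 10^-3 mm^2/s
    print(len(x), len(x[0]))
```

Single-channel or dual-channel variants follow by keeping only the corresponding entries of `channels`, which is one simple way to compare input combinations.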

Results

Combining b0, b1000, and ADC images as a triple-channel input showed the highest learning efficacy during training and the highest accuracy on the testing dataset, with a Dice similarity coefficient (DSC) of 0.82, a sensitivity of 0.89, and a positive predictive value (PPV) of 0.92. First-order ADC radiomics parameters were significantly correlated between the manually contoured and fully automated segmentations (p < 0.05). Reproducibility between the first and second training iterations was high for the first-order radiomics parameters (intraclass correlation coefficient [ICC] = 0.70–0.99).
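The three segmentation metrics reported above follow from voxel-wise counts of true positives (TP), false positives (FP), and false negatives (FN) against the manual ground truth: DSC = 2TP / (2TP + FP + FN), sensitivity = TP / (TP + FN), and PPV = TP / (TP + FP). The sketch below computes them for one pair of flattened binary masks; how the study aggregated values across test patients is not stated in the abstract, and the convention of DSC = 1 for two empty masks is an assumption.

```python
def overlap_metrics(manual, auto):
    """Voxel-wise DSC, sensitivity and PPV for two flattened binary masks.

    `manual` is the ground-truth (manually delineated) mask and `auto` the
    U-Net prediction; both are sequences of 0/1 values of equal length.
    """
    if len(manual) != len(auto):
        raise ValueError("masks must contain the same number of voxels")
    tp = sum(1 for m, a in zip(manual, auto) if m and a)      # tumor in both
    fp = sum(1 for m, a in zip(manual, auto) if a and not m)  # predicted only
    fn = sum(1 for m, a in zip(manual, auto) if m and not a)  # missed tumor
    # Assumed convention: two empty masks agree perfectly.
    dsc = 2 * tp / (2 * tp + fp + fn) if (tp + fp + fn) else 1.0
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    ppv = tp / (tp + fp) if (tp + fp) else float("nan")
    return {"dsc": dsc, "sensitivity": sensitivity, "ppv": ppv}


if __name__ == "__main__":
    # Toy masks: the prediction misses one tumor voxel and adds none.
    print(overlap_metrics([1, 1, 1, 1, 0, 0], [1, 1, 1, 0, 0, 0]))
```

In this toy case the prediction is entirely inside the tumor, so PPV is 1.0 while sensitivity drops to 0.75; DSC balances both error types.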

Conclusion

U-Net-based deep learning can perform accurate localization and segmentation of cervical cancer in DW MR images. First-order radiomics features extracted from the whole tumor volume show potential robustness for longitudinal monitoring of tumor responses in broad clinical settings.
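The abstract does not list which first-order features were extracted. The sketch below computes a few common whole-tumor histogram features (mean, median, 10th and 90th percentiles, skewness, excess kurtosis) from the ADC values inside a binary mask, plus a Pearson correlation for comparing a feature between manual and automated segmentations across patients. It is an illustrative assumption using textbook formulas; dedicated radiomics packages may define these features slightly differently, and the p-value reported in the study is not computed here.

```python
import math
from statistics import mean, median, pstdev


def percentile(sorted_vals, q):
    """Linear-interpolation percentile (q in [0, 100]) of a pre-sorted list."""
    pos = (len(sorted_vals) - 1) * q / 100.0
    lo, hi = math.floor(pos), math.ceil(pos)
    return sorted_vals[lo] + (sorted_vals[hi] - sorted_vals[lo]) * (pos - lo)


def first_order_features(adc, mask):
    """A few first-order ADC features over the voxels inside a binary tumor mask."""
    vals = sorted(v for v, m in zip(adc, mask) if m)
    if not vals:
        raise ValueError("empty mask")
    mu, sd = mean(vals), pstdev(vals)
    # Population skewness and excess kurtosis; both set to 0 for a uniform ROI.
    skew = mean(((v - mu) / sd) ** 3 for v in vals) if sd > 0 else 0.0
    kurt = mean(((v - mu) / sd) ** 4 for v in vals) - 3.0 if sd > 0 else 0.0
    return {
        "mean": mu,
        "median": median(vals),
        "p10": percentile(vals, 10),
        "p90": percentile(vals, 90),
        "skewness": skew,
        "kurtosis": kurt,
    }


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    # Toy per-patient mean ADC values (10^-3 mm^2/s), not study data.
    manual_means = [0.82, 0.95, 0.71, 1.10]
    auto_means = [0.85, 0.93, 0.74, 1.05]
    print(round(pearson_r(manual_means, auto_means), 3))
```

In practice, `first_order_features` would be run once per patient on the manual mask and once on the U-Net mask, and `pearson_r` applied to each feature across patients.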

Summary

U-Net-based deep learning can perform accurate localization and segmentation of cervical cancer in DW MR images.

Key Points

• U-Net-based deep learning can perform accurate fully automated localization and segmentation of cervical cancer in diffusion-weighted MR images.

• Combining b0, b1000, and apparent diffusion coefficient (ADC) images as a triple-channel input yielded the highest accuracy in fully automated localization.

• First-order radiomics feature extraction from the whole tumor volume was robust and could therefore potentially be used for longitudinal monitoring of treatment responses.
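The robustness claim rests on the ICC between feature values from the first and second training iterations. The abstract does not state which ICC form was used, so the sketch below, an assumption rather than the study's analysis, computes two common Shrout-Fleiss single-measure forms from a two-way ANOVA: ICC(2,1) (two-way random effects, absolute agreement) and ICC(3,1) (two-way mixed effects, consistency). Rows are patients and columns are training runs.

```python
from statistics import mean


def icc(ratings):
    """Shrout-Fleiss ICC(2,1) and ICC(3,1) from an n x k table.

    ratings[i][j] is the feature value for patient i from training run j
    (n >= 2 patients, k >= 2 runs).
    """
    n, k = len(ratings), len(ratings[0])
    grand = mean(v for row in ratings for v in row)
    row_means = [mean(row) for row in ratings]
    col_means = [mean(row[j] for row in ratings) for j in range(k)]
    # Two-way ANOVA sums of squares: total = rows + columns + residual.
    ss_total = sum((v - grand) ** 2 for row in ratings for v in row)
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_err = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))
    icc3 = (ms_rows - ms_err) / (ms_rows + (k - 1) * ms_err)
    icc2 = (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )
    return {"ICC(2,1)": icc2, "ICC(3,1)": icc3}


if __name__ == "__main__":
    # Toy values: run 2 is run 1 shifted by a constant offset.
    print(icc([[1, 2], [2, 3], [3, 4]]))
```

The toy example shows why the choice matters: a constant offset between runs leaves ICC(3,1) at 1.0 but lowers ICC(2,1), because only the absolute-agreement form penalizes systematic bias.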


Abbreviations

- ADC: Apparent diffusion coefficient
- DSC: Dice similarity coefficient
- DW: Diffusion-weighted
- MR: Magnetic resonance
- PPV: Positive predictive value
- ROI: Region of interest
- T2W: T2-weighted


Acknowledgments

The authors acknowledge the assistance provided by the Cancer Center and Clinical Trial Center (Statistician Dr. Lan-Yan Yang), Chang Gung Memorial Hospital, Linkou, Taiwan, which was funded by the Ministry of Health and Welfare of Taiwan (MOHW106-TDU-B-212-113005).

Funding

This study was supported by the Chang Gung Medical Foundation grant nos. CPRPG3G0021-3, CIRPG3H0011, CIRPG3D0163, and CMRPG3I0141; Ministry of Science and Technology (Taiwan) grant no. MOST 106-2314-B-182A-016-MY2; and Chang Gung IRB97-2366B, IRB102-0620A3, IRB201800412B0, and IRB201702204B0.


Ethics declarations

Guarantor

The scientific guarantor of this publication is Gigin Lin, MD, PhD.

Conflict of interest

The authors of this manuscript declare no relationships with any companies whose products or services may be related to the subject matter of the article.

Statistics and biometry

Dr. Lan-Yan Yang kindly provided statistical advice for this manuscript.

Informed consent

Written informed consent was obtained from all subjects (patients) in this study.

Ethical approval

Institutional Review Board approval was obtained.

Methodology

• retrospective

• performed at one institution

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Electronic supplementary material

ESM 1 (DOCX 10317 kb)


About this article

Cite this article

Lin, YC., Lin, CH., Lu, HY. et al. Deep learning for fully automated tumor segmentation and extraction of magnetic resonance radiomics features in cervical cancer. Eur Radiol 30, 1297–1305 (2020). https://doi.org/10.1007/s00330-019-06467-3

Received:

Revised:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s00330-019-06467-3