Abstract

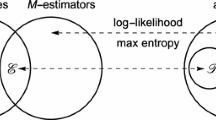

The aim of this paper is to present a new estimation procedure that can be applied in various statistical frameworks, including density estimation and regression, and that leads to estimators which are both robust and optimal (or nearly optimal). In density estimation, these estimators asymptotically coincide with the celebrated maximum likelihood estimators, at least when the statistical model is regular enough and contains the true density. For very general models of densities, including non-compact ones, they are robust with respect to the Hellinger distance and converge at the optimal rate (up to a possible logarithmic factor) in all cases we know of. In the regression setting, our approach improves upon classical least squares in many respects. In simple linear regression, for example, it provides estimators of the coefficients that are both robust to outliers and rate-optimal (or nearly rate-optimal) for a large class of error distributions including Gaussian, Laplace, Cauchy and uniform, among others.

Acknowledgments

One of the authors is grateful to Vladimir Koltchinskii for stimulating discussions, and especially for letting him know about the nice properties of VC-subgraph classes. All authors would like to thank the referee for his/her many useful comments.

Cite this article

Baraud, Y., Birgé, L. & Sart, M. A new method for estimation and model selection: \(\rho\)-estimation. Invent. math. 207, 425–517 (2017). https://doi.org/10.1007/s00222-016-0673-5

DOI: https://doi.org/10.1007/s00222-016-0673-5