Abstract

We consider a queueing system with N heterogeneous service facilities, in which admission and routing decisions are made when customers arrive and the objective is to maximize long-run average net rewards. For this type of problem, it is well-known that structural properties of optimal policies are difficult to prove in general and dynamic programming methods are computationally infeasible unless N is small. In the absence of an optimal policy to refer to, the Whittle index heuristic (originating from the literature on multi-armed bandit problems) is one approach which might be used for decision-making. After establishing the required indexability property, we show that the Whittle heuristic possesses certain structural properties which do not extend to optimal policies, except in some special cases. We also present results from numerical experiments which demonstrate that, in addition to being consistently strong over all parameter sets, the Whittle heuristic tends to be more robust than other heuristics with respect to the number of service facilities and the amount of heterogeneity between the facilities.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Many routing problems involving multiple queueing facilities can be mathematically formulated as Markov decision processes (MDPs) and solved exactly using well-known dynamic programming (DP) techniques such as value iteration and policy improvement (Bellman 1957; Howard 1960; Puterman 1994). Unfortunately, these techniques are usually of no practical use for solving problems which are modelled on real-world applications. The size and complexity of the state space that one must consider has been cited as one of the curses of dimensionality which usually impede exact solution attempts (Sutton and Barto 1998; Powell 2007).

To address this problem, there are various strategies that one might employ. Depending on the particular features of the problem under consideration, it may be possible to simplify the search by proving that optimal policies must have certain characteristics. However, even if this is possible, one may need to resort to the use of heuristics which can be relied upon to find near-optimal policies in a time-efficient manner. In this paper we discuss the use of ‘index-based’ heuristics and consider their application to a problem involving a Markovian queueing system with heterogeneous service facilities arranged in parallel, each with its own queue and multiple servers available.

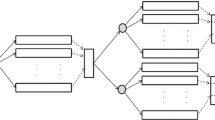

We consider systems which can be modelled as shown in Fig. 1. Customers arriving at the ‘routing point’ must either be sent to one of the N service facilities, in which case they wait in the queue for that facility until a server becomes available, or rejected without receiving service. A fixed, facility-dependent reward is earned each time a customer is served, but holding costs are also incurred and depend linearly on the waiting times of customers. A detailed formulation is provided in Sect. 2.

The fact that we consider heterogeneous facilities, each with the ability to serve multiple customers at once, makes public service systems a natural application area for our work. In the context of healthcare systems, for example, a patient seeking an elective knee operation might be faced with a choice of different treatment providers which differ from each other with respect to the quality of treatment provided, the number of beds available, the expected length of stay and other factors. It is well-known that for systems such as these, the performance of the system (measured, for example, by the long-run average net reward per unit time after deducting holding costs) is not optimized by allowing customers to make ‘selfish’ decisions based only on their own interests (Bell and Stidham 1983; Hassin and Haviv 2003; Haviv and Roughgarden 2007; Knight and Harper 2013; Shone et al. 2016; Knight et al. 2017). Instead, customers must be directed to follow an optimal (sometimes referred to as a ‘socially optimal’) policy which takes into account the effects of decisions on customers who have yet to arrive. It is the need to find, or approximate, a socially optimal policy that we focus on in this paper.

For routing problems involving multiple service facilities in parallel, optimal routing policies do not necessarily have simple characterizations. “Join the Shortest Queue” (JSQ) rules often apply to systems in which all facilities are identical (see Winston 1977; Weber 1978; Hordijk and Koole 1990; Menich and Serfozo 1991; Koole et al. 1999), but even in these cases an optimal policy may have counter-intuitive properties (Whitt 1986). In the case of heterogeneous facilities, some compelling results have been obtained for single-server queues (Hordijk and Koole 1992; Ha 1997), but in general the analysis is much more difficult. Consequently, previous research has tended to focus on developing heuristic (sub-optimal) routing policies. For example, policies based on applying one step of policy iteration to a ‘Bernoulli splitting’ policy have been shown to perform strongly in various contexts (Krishnan 1990; Sassen et al. 1997; Ansell et al. 2003a; Bhulai and Koole 2003; Argon et al. 2009; Hyytia et al. 2012).

The heuristic policy to be developed in this paper has its origins in the work of Gittins (1979) and Whittle (1988) on deriving ‘dynamic allocation indices’ for multi-armed bandit processes. Informally speaking, an index-based policy is a policy which associates a certain, easily-computable score or index to the various possible decision options in any given system state, and then chooses the option with the highest index. In order for an index-based policy to be applicable to a particular problem, the property of indexability must first be established. Although this property is not trivial to prove in general, it has been proven to hold in various problems involving queueing or inventory control (see Ansell et al. 2003b; Archibald et al. 2009; Glazebrook et al. 2009, 2011, 2014; Hodge and Glazebrook 2011; Nino-Mora 2012; Larranaga et al. 2016).

The modus operandi of the particular type of index policy that we focus on in this paper, which we shall refer to as the Whittle index heuristic, was first described in general terms by Whittle (1988) and has been shown to yield strong-performing policies in various types of problems involving dynamic resource allocation. Nino-Mora (2002) studied a similar problem to ours, with the evolution of ‘service facilities’ described by birth–death processes, and derived general expressions for the indices upon which decisions are based. Argon et al. (2009) also considered a routing problem in which customers are routed to single-server facilities. Their model does not include admission control, but does include more general cost structures and different customer types. Some recent applications for the Whittle heuristic in the literature include egalitarian processor sharing systems (Borkar and Pattathil 2017), scheduling for time-varying channels (Aalto et al. 2016), partially observed binary Markov chains (Borkar 2017), scheduling of information transmissions (Hsu 2018), allocation of scarce resources to a large number of jobs (Li et al. 2020) and allocation of assets prone to failure (Ford et al. 2020).

In the context of the routing problem that we consider in this paper, an advantage of using a Whittle index heuristic is that we are able to show that the resulting index policies possess certain intuitively ‘nice’ structural properties which usually cannot be proven to hold for optimal policies. Since the heuristic policies appear to perform very strongly in numerical experiments, this provides justification for searching for optimal policies within a reduced class of solutions which possess these ‘nice’ properties (this is a topic of ongoing work). The main contributions of our paper are as follows:

-

We confirm (Sect. 3) that the service facilities in our problem are indexable and derive expressions for the Whittle indices in terms of the system parameters.

-

We show (Sect. 4) that the positive recurrent state space under an arbitrary optimal stationary policy is bounded between two finite sets, one of which is derived using the Whittle indices.

-

We show (Sect. 4) that the Whittle heuristic policy becomes asymptotically optimal in light-traffic and heavy-traffic limits.

-

We prove (Sect. 4) that the Whittle heuristic policy possesses various other ‘intuitive’ structural properties and provide counter-examples to show that these may not hold in general for optimal policies.

-

We introduce (Sect. 5) an alternative index-based heuristic based on one-step policy improvement, and show that it possesses asymptotic light-traffic (but not heavy-traffic) optimality.

-

We present (Sect. 6) results from numerical experiments to compare the performance of the Whittle heuristic policy with those of optimal policies (where feasible) and alternative heuristic policies.

In the next section we provide a detailed formulation of the multiple-facility routing problem considered throughout this paper.

2 Problem formulation

We consider a queueing system with N service facilities, each of which has its own queue and a first-come–first-served (FCFS) queue discipline. Customers arrive from outside the system according to a Poisson process with demand rate \(\lambda >0\). Newly-arrived customers may proceed to any one of the N service facilities or, alternatively, exit the system immediately without incurring any cost or reward (referred to as balking). Thus, there are \(N+1\) possible destinations for any individual customer. An individual facility \(i\in \{1,2,\ldots ,N\}\) possesses \(c_i\) identical service channels, and service times at any channel of facility i are exponentially distributed with mean \(\mu _i^{-1}>0\).

The system earns a fixed reward \(\alpha _i>0\) for each customer who completes service at facility \(i\in \{1,2,\ldots ,N\}\), but also incurs a linear holding cost \(\beta _i>0\) per unit time for each customer waiting at the facility (whether in the queue or in service). We also assume that \(\alpha _i>\beta _i/\mu _i\) for each \(i\in \{1,2,\ldots ,N\}\) in order to ensure that rewards adequately compensate customers for their own expected service costs (otherwise we would have redundancy in the system).

The system is fully observable, in the sense that the number of customers present at each facility is always known and can be used to inform decision-making. We do not make any assumptions as to whether decisions are made by customers themselves or whether they are directed by a central controller, since this depends on the physical context of the problem and does not affect our results in this paper. If customers make their own decisions, then an optimal policy can be regarded as that which arises from customers co-operating with each other to maximize their collective welfare.

Let \(S=\{(x_1,x_2,\ldots ,x_N):x_i\in {\mathbb {N}}_0\text { for each }i\in \{1,2,\ldots ,N\}\}\) denote the state space of the system, where \(x_i\) is the number of customers present at facility i (including those being served). A system state is denoted by a vector \({\mathbf {x}}\in S\). We also use \({\mathbf {x}}^{i+}\) (resp. \({\mathbf {x}}^{i-}\)) to denote the state which is identical to \({\mathbf {x}}\) except that one extra customer (resp. one fewer customer) is present at facility i. That is:

where \({\mathbf {e}}_i\) is the ith vector in the standard orthonormal basis of \({\mathbb {R}}^N\).

The sum of the infinitesimal transition rates under any state \({\mathbf {x}}\in S\) is bounded above by \(\lambda +\sum _{i=1}^N c_i\mu _i\). We can therefore apply the process of uniformization (Lippman 1975; Serfozo 1979) to formulate the system as a discrete-time Markov Decision Process (MDP) in which the action space, transition probabilities and single-step reward function are defined as follows:

-

Under any state \({\mathbf {x}}\in S\), the action space is

$$\begin{aligned} A=\{0,1,2,\ldots ,N\}. \end{aligned}$$An action \(a\in A\) represents the decision of the next customer to arrive in the system. If \(a=0\) then the customer balks, and if \(a=i\) for some \(i\in \{1,2,\ldots ,N\}\) then the customer goes to facility i.

-

The transition probability of going from state \({\mathbf {x}}\in S\) to state \({\mathbf {y}}\in S\setminus \{{\mathbf {x}}\}\) in a single discrete time step, given that action \(a\in A\) has been chosen, is denoted by \(p({\mathbf {x}},a,{\mathbf {y}})\), where

$$\begin{aligned} p({\mathbf {x}},a,{\mathbf {y}})={\left\{ \begin{array}{ll} \lambda \varDelta ,&{}\text {if }a=i\text { for some }i \in \{1,2,\ldots ,N\}\text { and }{\mathbf {y}}={\mathbf {x}}^{i+},\\ \min (x_i,c_i)\mu _i\varDelta ,&{}\text {if }{\mathbf {y}}={\mathbf {x}}^{i-}\text {for some }i\in \{1,2,\ldots ,N\},\\ 0,&{}\text {otherwise},\end{array}\right. }\nonumber \\ \end{aligned}$$(1)and \(\varDelta =\left( \lambda +\sum _{i=1}^N c_i\mu _i\right) ^{-1}\) is the discrete-time step size. Note that, following uniformization, we assume that at most one random event (either an arrival or a service completion) may occur in a single discrete time step. A ‘self-transition’ from state \({\mathbf {x}}\in S\) to itself may occur if no random event takes place, and the relevant transition probability is

$$\begin{aligned} p({\mathbf {x}},a,{\mathbf {x}})=1-I(a\ne 0)\lambda \varDelta -\sum \limits _{i=1}^N \min (x_i,c_i)\mu _i\varDelta , \end{aligned}$$where I denotes the indicator function.

-

Under state \({\mathbf {x}}\in S\), rewards are being earned at a total rate of \(\sum _{i=1}^N \alpha _i\min (x_i,c_i)\mu _i\) and holding costs are being incurred at a rate \(\sum _{i=1}^N \beta _i x_i\). We therefore formulate the single-step reward function as

$$\begin{aligned} r({\mathbf {x}})=\sum \limits _{i=1}^N \big (\alpha _i\min (x_i,c_i)\mu _i-\beta _i x_i\big ).\end{aligned}$$(2)

For simplicity we will assume throughout this paper that \(\varDelta =\left( \lambda +\sum _{i=1}^N c_i\mu _i\right) ^{-1}=1\), since the units of time are arbitrary.

We define an optimal policy as a decision-making rule \(\theta \) which maximizes the long-run average net reward per unit time, defined as

where \({\mathbf {x}}_n\) denotes the state of the system at the nth discrete time step (with \({\mathbf {x}}_0\) as the initial state).

Let \(w_a({\mathbf {x}})\) denote an individual customer’s expected net reward for choosing action \(a\in \{0,1,\ldots ,N\}\) under state \({\mathbf {x}}\in S\). If the action chosen is to join some facility \(i\in \{1,\ldots ,N\}\) at which \(x_i\) customers are already present, then the expected waiting time is \(1/\mu _i\) if \(x_i<c_i\), and \((x_i-c_i+1)/(c_i\mu _i)+1/\mu _i=(x_i+1)/(c_i\mu _i)\) if \(x_i\ge c_i\). Hence:

Also, let \({\tilde{\theta }}\) denote the ‘selfish’ (myopic) policy which operates in such a way that any customer arriving in the system chooses the action \(a\in \{0,1,\ldots ,N\}\) which maximizes \(w_a({\mathbf {x}})\), with ties broken arbitrarily except that we assume balking (\(a=0\)) is chosen only if \(w_i({\mathbf {x}})<0\) for all \(i\in \{1,2,\ldots ,N\}\). By considering the inequalities

we can show that the maximum number of customers at facility i under policy \({\tilde{\theta }}\) is \(\lfloor \beta _i/(\alpha _ic_i\mu _i)\rfloor \), where \(\lfloor \cdot \rfloor \) denotes the floor function. Hence, the set of positive recurrent states under \({\tilde{\theta }}\) is \({\tilde{S}}\), where

It is proved in (Shone et al. 2016) (Theorem 2) that there always exists a stationary policy \(\theta ^*:S\rightarrow A\) which maximizes (3). Furthermore, if \(S_{\theta ^*}\) denotes the set of positive recurrent states under a particular optimal stationary policy \(\theta ^*\), then

This may be described as the ‘containment property’ of socially optimal policies.

3 The Whittle index heuristic

The main theoretical contributions of our paper (to follow in Sect. 4) rely upon the service facilities having a property referred to as indexability, and the resulting development of a heuristic routing policy based on optimal admission policies for individual facilities. Indexability is not necessarily a trivial property to prove in general settings, and sufficient conditions for this property to hold have been well-studied in the literature; see, for example, Bertsimas and Nino-Mora (1996) and Nino-Mora (2001, (2002) for the development of polyhedral methods. As noted by Glazebrook et al. (2009), however, it is often possible to provide simple, direct proofs of indexability in specific problems where the index for resource i (or, in our case, facility i) has a natural interpretation as a fair charge for utilization. Fortunately, in our model it is straightforward to establish the indexability property using a simple geometrical argument. Full details follow later in this section, but first we introduce the Lagrangian relaxation upon which the index heuristic is based.

Let \(\varTheta \) denote the class of stationary policies under which our N-facility queueing system is stable and has a stationary distribution; that is, if \(\theta \in \varTheta \) then the distribution \(\left\{ \pi _\theta ({\mathbf {x}})\right\} _{{\mathbf {x}}\in S}\) exists, where \(\pi _\theta ({\mathbf {x}})\) is the steady-state probability of the system being in state \({\mathbf {x}}\in S\) under \(\theta \) and \(\sum _{{\mathbf {x}}\in S}\pi _\theta ({\mathbf {x}})=1\). We note that although \(\lambda \) can be arbitrarily large, \(\varTheta \) is always non-empty since it includes the trivial policy which chooses to balk at all states in S.

For each policy \(\theta \in \varTheta \) and facility \(i\in \{1,2,\ldots ,N\}\), let \(\eta _i(\theta )\) denote the effective queue-joining rate per unit time at facility i under \(\theta \) (i.e. the long-run average number of customers joining facility i per unit time), and let \(L_i(\theta )\) denote the long-run average number of customers present at facility i under \(\theta \). Then the long-run average reward \(g^\theta \) under policy \(\theta \) is independent of the initial state \({\mathbf {x}}_0\) and may be expressed in the form

Closed-form expressions for \(\eta _i(\theta )\) and \(L_i(\theta )\) are unattainable in general when \(N\ge 2\), but the expression on the right-hand side of (7) will nevertheless prove useful. We note that under any policy \(\theta \in \varTheta \), the sum of the effective queue-joining rates \(\eta _i(\theta )\) at the various facilities must be bounded above by the system demand rate. That is:

Following Whittle (1988), we consider a Lagrangian relaxation of our original problem involving an expanded class of stationary policies \(\varTheta '\) which are at liberty to ‘break’ the natural physical restrictions of the system by sending a newly-arrived customer to any subset of the set of facilities \(\{1,2,\ldots ,N\}\). That is, for each state \({\mathbf {x}}\in S\), the action \(\theta ({\mathbf {x}})\) chosen by a policy \(\theta \in \varTheta '\) satisfies

where \({\mathcal {P}} \left( \{1,2,\ldots ,N\}\right) \) is the power set (i.e. the set of all subsets, including the empty set) of \(\{1,2,\ldots ,N\}\). Conceptually, one now considers a new optimization problem in which the option is available to produce ‘copies’ of each customer who arrives, and send these copies to any number of facilities (at most one copy per facility). For each state \({\mathbf {x}}\in S\), \(\theta ({\mathbf {x}})\) is the set of facilities which, under the policy \(\theta \in \varTheta '\), receive (a copy of) a new customer if an arrival occurs under state \({\mathbf {x}}\).

We incorporate the constraint (8) in a Lagrangian fashion by letting \({\hat{g}}(W)\) denote the optimal expected long-run average reward for the new (relaxed) problem, defined as

where \(W\in {\mathbb {R}}\) is a Lagrange multiplier. Clearly, any policy \(\theta \) belonging to the class of policies \(\varTheta \) for the original problem may be represented by a policy \(\theta '\) in the new class \(\varTheta '\) for which the cardinality of \(\theta '({\mathbf {x}})\) is either 1 or 0 at all states \({\mathbf {x}}\in S\). Hence, for \(W\ge 0\),

where \(g^*=\sup _{\theta \in \varTheta }g^\theta \) is the optimal expected long-run average reward in the original problem. One can re-write (9) in an equivalent form:

Then, as in Glazebrook et al. (2009) (for example), one obtains a facility-wise decomposition:

where, for each facility \(i\in \{1,2,\ldots ,N\}\),

Here, \({\varTheta _i}'\) (for \(i=1,2,\ldots ,N\)) is a class of stationary policies which choose either to accept a customer (denoted by 1) or reject (denoted by 0) at any given state. Since the relaxation of the problem allows newly-arrived customers to be sent to any subset of the N facilities, the decision of whether or not to admit a customer at some facility \(i\in \{1,2,\ldots ,N\}\) can be made independently of the decisions made in regard to the other facilities \(j\ne i\). It follows that an optimal solution to the relaxed N-facility problem can be found by solving N independent single-facility admission control problems. For each facility \(i\in \{1,2,\ldots ,N\}\), the corresponding single-facility problem involves customers arriving according to a Poisson process with a demand rate \(\lambda \) (the same demand rate as for the N-facility problem), \(c_i\) service channels, and exponentially-distributed service times with mean \(\mu _i^{-1}\). The holding cost is \(\beta _i\) per customer per unit time, but importantly the reward for service is now \(\alpha _i-W\) as opposed to \(\alpha _i\). Hence, it is natural to interpret W as an extra charge for admitting a customer; see Fig. 2.

The single-facility problem described above exactly fits the formulation of Sect. 2 (with \(N=1\)), except that the reward \(\alpha _i-W\) may not be positive. Interpreting Theorem 2 from Shone et al. (2016) in the context of a single-facility problem, we can be assured that there exists an average reward optimal threshold policy. This means that a customer arriving at the facility balks if and only if the system state x equals or exceeds the integer threshold n, i.e. \(x\ge n\). We will refer to such a policy as an n-threshold policy.

Let \(\theta _i^*\) denote an optimal threshold policy at facility i, given an entry charge W. This means that \(\theta _i^*\) chooses an action \(a\in \{0,1\}\) in response to an input \((x,W)\in {\mathbb {N}}_0\times {\mathbb {R}}\), where x is the observed state and W is the entry charge. Re-interpreting the summary measures \(\eta _i(\cdot )\) and \(L_i(\cdot )\) so that they are now functions of policies \(\theta _i\) belonging to the set \({\varTheta _i}'\) associated with the single-facility problem, it follows that \((\alpha _i-W)\eta _i(\theta _i^*)-\beta _i L_i(\theta _i^*)\) is a valid expression for \({\hat{g}}_i(W)\), the optimal average reward for facility i.

Next, we recall that the facility \(i\in \{1,2,\ldots ,N\}\) was arbitrary in this discussion and let \(\theta _1^*,\theta _2^*,\ldots ,\theta _N^*\) be optimal threshold policies at the various facilities. Also, let \(\theta ^*\) be a stationary policy belonging to the expanded class \(\varTheta '\) which operates in such a way that, for each state \({\mathbf {x}}\in S\),

That is, each time a new customer arrives, they are sent to all of the facilities \(i\in \{1,2,\ldots ,N\}\) at which the optimal threshold policy \(\theta _i^*\) would choose to accept a customer. By the previous arguments, \(\theta ^*\) attains average reward optimality in the relaxed version of the problem.

In order to derive the Whittle heuristic for the original N-facility problem, it remains to establish the connection between this heuristic and the optimal solutions for the relaxed version of the problem discussed thus far. The Whittle heuristic relies upon the notion of indexability of a service facility, which we define [in line with Whittle (1988)] as follows:

Definition 1

(Indexability) Facility \(i\in \{1,2,\ldots ,N\}\) is said to be indexable if, given any state \(x\in {\mathbb {N}}_0\), there exists \(W_i(x)\in {\mathbb {R}}\) such that \(\theta _i^*\) chooses to accept a new customer if and only if \(W<W_i(x)\).

For each facility \(i\in \{1,2,\ldots ,N\}\), let \(T_i^*(W)\) denote the smallest integer n such that an n-threshold policy achieves average reward optimality in a single-facility problem with demand rate \(\lambda \), \(c_i\) service channels, service rate \(\mu _i\), holding cost \(\beta _i\) and reward for service \(\alpha _i-W\). Then we can show that the indexability property holds for facility i if and only if \(T_i^*(W)\) satisfies the following properties:

-

1.

\(T_i^*(W)\) is monotonically decreasing with W.

-

2.

For any \(x\in {\mathbb {N}}_0\), there exists \(W_i(x)\in {\mathbb {R}}\) such that \(T_i^*(W)> x\) if and only if \(W<W_i(x)\).

In the event that the indexability property holds, we refer to the critical value \(W_i(x)\) as the Whittle index for facility i and state x. Although indexability is not trivial to prove in general, the property has been shown to hold in various problems involving queueing or inventory control (see Nino-Mora 2002; Ansell et al. 2003b; Archibald et al. 2009; Glazebrook et al. 2009; Argon et al. 2009; Hodge and Glazebrook 2011 and references therein). The next result confirms that the facilities in our problem are indexable, and also provides an expression for the Whittle index \(W_i(x)\) in terms of the system parameters. Proof of the lemma can be found in Appendix A.

Lemma 1

Each facility \(i\in \{1,2,\ldots ,N\}\) is indexable. Furthermore, a valid expression for the Whittle index \(W_i(x)\) is

where \(\pi _i(y,T)\) denotes the steady-state probability of facility i being in state \(y\in {\mathbb {N}}_0\), given that a threshold of T is applied.

We note that convenient formulae for \(\pi _i(y,T)\) are available from finite-buffer M/M/c queueing theory (see, for example, Gross and Harris 1998):

where

Several remarks should be made at this point. Firstly, equation (13) can be found in a more general form in Corollary 7.1 of Nino-Mora (2012). Secondly, the expression \(\sum _{y=0}^{x+1}y\pi _i(y,x+1)-\sum _{y=0}^x y\pi _i(y,x)\) which appears on the right-hand side of (13) is simply \(L_i(x+1)-L_i(x)\), where \(L_i(x)\) is the expected number of customers present at facility i given a threshold of x. In the special case where \(x<c_i\), Little’s formula yields \(L_i(x+1)-L_i(x)=(\lambda /\mu _i)(\pi _i(x,x)-\pi _i(x+1,x+1))\), and hence we obtain

Thus, the Whittle index at states \(x<c_i\) is equal to a customer’s expected net reward for joining facility i. As a further remark, suppose we have a single-server facility (\(c_i=1\)). Then it is straightforward to apply results for finite-buffer M/M/1 queues in order to obtain

where \(\rho _i=\lambda /\mu _i\). This is analogous to the equation (7.3) given in Nino-Mora (2002), except that their result is given in the context of minimizing holding costs (without a reward for service). A similar result can also be found by considering equation (18) in Argon et al. (2009) and setting (in their notation) \(\alpha =0\), \(\beta =\lambda /\mu =\rho \) and \(c(i)=(i+1)h/\mu \).

In the light of Definition 1, we can obtain an optimal policy \(\theta ^*\) for the relaxed N-facility problem by specifying its decision at state \({\mathbf {x}}\in S\) as follows:

As observed in Argon et al. (2009) and Glazebrook et al. (2009), the fact that an optimal solution to the relaxed problem may be described using the Whittle indices makes it logical to propose a heuristic policy for the original N-facility problem, which involves sending any new customer who arrives under state \({\mathbf {x}}\in S\) to a facility i which maximizes \(W_i(x_i)\), or choosing to balk if none of the \(W_i(x_i)\) values are positive. The optimality of such a policy cannot be guaranteed, but its intuitive justification lies in the fact that \(W_i(x_i)\), when positive, is a measure of the amount by which the ‘charge for admission’ W would need to be increased before the optimal policy \(\theta ^*\) for the relaxed problem would choose not to admit a customer to facility i. Thus, \(W_i(x_i)\) may be regarded somewhat crudely as a measure of the margin by which one would be ‘in favor’ of having an extra customer present at facility i. A similar interpretation is that \(W_i(x_i)\) is a ‘fair charge’ for admitting a customer to facility i when there are \(x_i\) customers already present.

The Whittle index heuristic policy \(\theta ^{[W]}\) (hereafter referred to as the Whittle policy) for our original N-facility routing problem is defined below.

Definition 2

(Whittle index policy) At any given state \({\mathbf {x}}\in S\), the Whittle index policy \(\theta ^{[W]}\) chooses an action as follows:

where \(W_i(x)\) is defined in (13). In cases where two or more facilities attain the maximum in (15), it will be assumed that a decision is made according to some pre-determined ranking order of the N facilities.

We note that, for any state \({\mathbf {x}}=(x_1,\ldots ,x_N)\in S\), there is an equivalence between the following three statements:

-

1.

\(W_i(x_i)>0\) for some \(i\in \{1,2,\ldots ,N\}\),

-

2.

\(\theta ^{[W]}({\mathbf {x}})\ne 0\),

-

3.

Any optimal stationary policy for a single-facility problem with parameters corresponding to those of facility \(i\in \{1,2,\ldots ,N\}\), is a threshold policy with threshold greater than \(x_i\).

We will make use of this equivalence in several of our later proofs.

In the next section we investigate the similarities and differences between the Whittle index policy and an optimal policy which maximizes (3).

4 Structural and asymptotic properties of the index heuristic

Suppose we have a stationary policy, \(\theta ^*\), which is optimal under the average reward criterion. In this section we will present several counter-examples to show that \(\theta ^*\) may possess surprising and counter-intuitive structural properties. Indeed, there is little that can be proved about \(\theta ^*\) in general. However, it is possible to show that the positive recurrent state space under \(\theta ^*\) may be bounded by two finite sets. Let the sets \(S^\circ \) and \(S_{\theta ^*}\) be defined as follows:

Also, let \({\tilde{S}}\) be the ‘selfish’ state space defined in (5). The following relationship may be proved to hold for any optimal stationary policy \(\theta ^*\):

Indeed, the fact that \(S_{\theta ^*}\subseteq {\tilde{S}}\) has been proved in Shone et al. (2016) (Lemma 6). By using this result and also showing that optimal policies never choose to balk if there is an idle server available at one of the N facilities, the lower bound \(S^\circ \subseteq S_{\theta ^*}\) can be established. A full proof can be found in Appendix B. We refer to the property \(S^\circ \subseteq S_{\theta ^*}\) as the non-idling property of optimal policies.

We have not stated (17) as a theorem because it can be regarded as a corollary of a stronger result, which follows next. It is possible to use the structural properties of the Whittle index policy to obtain an improved lower bound for \(S_{\theta ^*}\). Throughout the rest of this section we will use \(\theta ^{[W]}({\mathbf {x}})\in \{0,1,\ldots ,N\}\) to denote the action chosen by the Whittle policy \(\theta ^{[W]}\) in response to an observed state \({\mathbf {x}}\in S\), and we will also use \(S_W\) to denote the set of states in S which are positive recurrent under \(\theta ^{[W]}\). The following lemma is needed:

Lemma 2

Let \(\theta ^*\) be an optimal stationary policy. Then, for any \({\mathbf {x}}\in S_{\theta ^*}\),

That is, the Whittle policy \(\theta ^{[W]}\) chooses to balk at any state \({\mathbf {x}}\in S_{\theta ^*}\) where \(\theta ^*\) chooses to balk.

Proof of the lemma is established using dynamic programming recursions and can also be achieved via a sample path argument. The details can be found in Appendix C. Essentially, one can show that if balking is chosen at some state \({\mathbf {x}}\in S_{\theta ^*}\) by the optimal policy \(\theta ^*\), then balking would also be chosen by an optimal threshold policy in a single-facility problem involving any of the facilities \(i\in \{1,2,\ldots ,N\}\) at the state with \(x_i\) customers present (where \(x_i\) is the \(i^{\text {th}}\) component of the state \({\mathbf {x}}\) in the N-facility problem). Since the Whittle policy \(\theta ^{[W]}\) makes decisions by considering each of the N facilities operating in isolation, this is sufficient to establish the result.

Our next theorem states that the Whittle index policy \(\theta ^{[W]}\) is conservative in comparison to an optimal stationary policy \(\theta ^*\).

Theorem 1

(Conservativity of the Whittle policy) For any optimal stationary policy \(\theta ^*\), we have

Proof

The containment property \(S_{\theta ^*}\subseteq {\tilde{S}}\) is already known. It follows that there must exist some state \({\mathbf {x}}\in S_{\theta ^*}\) at which \(\theta ^*\) chooses to balk; otherwise, an unbroken sequence of customer arrivals (without any service completions) would cause the process to pass outside \({\tilde{S}}\) under \(\theta ^*\). Let \({\mathbf {z}}\in S_{\theta ^*}\) be a state at which \(\theta ^*\) chooses to balk, and let

That is, \(S_{{\mathbf {z}}}\) is the set of states in \({\tilde{S}}\) which satisfy the componentwise inequality \({\mathbf {x}}\le {\mathbf {z}}\). Since \({\mathbf {z}}\in S_{\theta ^*}\), it follows that all states in \(S_{{\mathbf {z}}}\) are also included in \(S_{\theta ^*}\), since they are accessible from \({\mathbf {z}}\) via service completions. Hence, \(S_{{\mathbf {z}}}\subseteq S_{\theta ^*}\). On the other hand, since balking is chosen by \(\theta ^*\) at \({\mathbf {z}}\), it follows from Lemma 2 that balking is also chosen at \({\mathbf {z}}\) by the Whittle policy \(\theta ^{[W]}\). By definition of the Whittle policy, this implies that \(W_i(z_i)\le 0\) for all \(i\in \{1,2,\ldots ,N\}\). Therefore it is impossible for any state \({\mathbf {x}}\notin S_{{\mathbf {z}}}\) to be accessible from state \({\mathbf {0}}\) (the empty system state) under the Whittle policy, since this would require joining some facility \(i\in \{1,2,\ldots ,N\}\) to be chosen at a state \({\mathbf {y}}\in S_{{\mathbf {z}}}\) with \(y_i=z_i\) and hence \(W_i(y_i)\le 0\). It follows that \(S_W\subseteq S_{{\mathbf {z}}}\subseteq S_{\theta ^*}\).

To complete the proof, it remains only to show that \(S^\circ \subseteq S_W\). In Sect. 3 it was shown that, for any facility \(i\in \{1,\ldots ,N\}\), we have \(W_i(x)=\alpha _i-\beta _i/\mu _i\) for all states \(x<c_i\) (see (14)). Recall that our model assumes \(\alpha _i-\beta _i/\mu _i>0\); otherwise, facility i would be redundant. Hence, \(\theta ^{[W]}\) cannot choose to balk at any state with \(x_i<c_i\) for some \(i\in \{1,2,\ldots ,N\}\). Since \(S_W\) is contained in \(S_{\theta ^*}\) (and hence finite), it then follows that there exists a state \({\mathbf {x}}\) with \(x_i\ge c_i\) for all \(i\in \{1,2,\ldots ,N\}\) which is positive recurrent under \(\theta ^{[W]}\) (indeed, such a state must be accessible from \({\mathbf {0}}\) via an unbroken sequence of customer arrivals). Hence, all states in \(S^\circ \) are also positive recurrent under \(\theta ^{[W]}\). \(\square \)

Next, we turn our attention to the asymptotic properties of the Whittle index policy as \(\lambda \) becomes either very small or very large. Since \(\theta ^{[W]}\) is a heuristic policy, its optimality cannot be proved in general, but the next theorem establishes that the Whittle policy achieves (asymptotic) optimality in a light-traffic limit, and also in a heavy-traffic limit.

Theorem 2

(Optimality of the Whittle policy in light-traffic and heavy-traffic limits)

Let \(g^{[W]}(\lambda )\) be the long-run average reward attained by the Whittle policy \(\theta ^{[W]}(\lambda )\) given a demand rate \(\lambda >0\), and let \(g^*(\lambda )\) be the corresponding value under an optimal policy. Then:

-

1.

\(\theta ^{[W]}\) is asymptotically optimal in a light-traffic limit. That is:

$$\begin{aligned} \lim _{\lambda \rightarrow 0}\frac{g^*(\lambda )-g^{[W]}(\lambda )}{g^*(\lambda )}=0. \end{aligned}$$ -

2.

\(\theta ^{[W]}\) is optimal in a heavy-traffic limit. That is:

$$\begin{aligned} \lim _{\lambda \rightarrow \infty } \left( g^*(\lambda )-g^{[W]}(\lambda )\right) =0. \end{aligned}$$

Proof of Theorem 2 can be found in Appendix D. In the light-traffic case, it suffices to show that the Whittle policy makes optimal decisions at the state with no customers present, since the decisions chosen at other states essentially become unimportant in the limiting scenario. In the heavy-traffic case, the proof is accomplished by showing that the Whittle heuristic directs customers to balk if and only if all servers are busy at all facilities, and that this results in the system residing continuously in a state which maximizes the single-step reward function \(r({\mathbf {x}})\).

Theorem 2 relies upon the fact that optimal policies become quite simplistic in the limiting cases as \(\lambda \rightarrow 0\) and \(\lambda \rightarrow \infty \). For general values of \(\lambda \), however, optimal policies can be quite intricate. The remaining results in this section identify structural properties of the Whittle policy \(\theta ^{[W]}\) which do not necessarily hold under an optimal policy.

First, we consider monotonicity. We will generalize our previous notation and use \(S_\theta \) to denote the set of states which are positive recurrent under an arbitrary stationary policy \(\theta \).

Theorem 3

(Monotonicity) Suppose \(N\ge 2\), and let \(\varTheta ^{[M]}\) denote the class of all stationary policies \(\theta \) which satisfy the following three monotonicity properties:

-

(a)

If \(\theta ({\mathbf {x}})=0\) for some \({\mathbf {x}}\in S_\theta \), then \(\theta ({\mathbf {x}}^{i+})=0\) for all \(i\in \{1,2,\ldots ,N\}\) with \({\mathbf {x}}^{i+}\in S_\theta \).

-

(b)

If \(\theta ({\mathbf {x}})=i\) for some \({\mathbf {x}}\in S_\theta \) and \(i\in \{1,2,\ldots ,N\}\) with \(x_i\ge 1\), then \(\theta ({\mathbf {x}}^{i-})=i\).

-

(c)

If \(\theta ({\mathbf {x}})=i\) for some \({\mathbf {x}}\in S_\theta \) and \(i\in \{1,2,\ldots ,N\}\), then \(\theta ({\mathbf {x}}^{j+})=i\) for any \(j\in \{1,2,\ldots ,N\}\setminus \{i\}\) such that \({\mathbf {x}}^{j+}\in S_\theta \).

Then:

-

1.

\(\theta ^{[W]}\in \varTheta ^{[M]}\),

-

2.

If \(N=2\) and \(c_1=c_2=1\) then there exists an optimal policy in \(\varTheta ^{[M]}\),

-

3.

In general, \(\varTheta ^{[M]}\) is not guaranteed to include an optimal policy.

Proof

It is trivial to show that the Whittle policy \(\theta ^{[W]}\) possesses the monotonicity properties (a)–(c), since this is a direct consequence of the index-based nature of the policy. We therefore begin with Statement 2, which relates to a special case of our model with only two facilities and a single server at each. In general, any optimal policy must be associated with a constant \(g^*\) and a function h satisfying the well-known average reward optimality equations:

Importantly, the function h satisfying (19) is unique up to an additive constant (see Puterman 1994). The proof of Statement 2 depends on showing that, in the special case under consideration, h satisfies three properties defined as follows:

-

\(h(({\mathbf {x}}^{j+})^{j+})-h({\mathbf {x}}^{j+})\le h({\mathbf {x}}^{j+})-h({\mathbf {x}})\) for all \({\mathbf {x}}\in S\) and \(j\in \{1,2\}\) (concavity);

-

\(h(({\mathbf {x}}^{i+})^{j+})-h({\mathbf {x}}^{i+})\le h({\mathbf {x}}^{j+})-h({\mathbf {x}}^{j+})\) for all \({\mathbf {x}}\in S\) and \(i,j\in \{1,2\}\) with \(i\ne j\) (submodularity);

-

\(h(({\mathbf {x}}^{j+})^{j+})-h(({\mathbf {x}}^{i+})^{j+})\le h({\mathbf {x}}^{j+})-h({\mathbf {x}}^{i+})\) for all \({\mathbf {x}}\in S\) and \(i,j\in \{1,2\}\) with \(i\ne j\) (diagonal submissiveness).

These properties can be established using inductive arguments based on value iteration, and the existence of an optimal policy in \(\varTheta ^{[M]}\) then follows. For full details, please refer to Appendix E.

Unfortunately, the properties of concavity, submodularity and diagonal submissiveness cannot be proven to hold in the full generality of our model. We prove Statement 3 of the theorem using a counter-example. Consider a two-facility system with demand rate \(\lambda =12\) and the following parameters for the two facilities:

We can use value iteration to confirm the existence of a unique optimal stationary policy \(\theta ^*\) for this system. The positive recurrent state space under this policy is \(S_{\theta ^*}=\{(x_1,x_2)\in {\mathbb {N}}_0^2:x_1\le 2\text { and }x_2\le 2\}\), i.e. it includes 9 states. However, the decision of \(\theta ^*\) at state (0, 0) is to join Facility 2, whereas the decision at (1, 0) is to join Facility 1. This contravenes monotonicity property (b) stated in the theorem, so the proof is complete. \(\square \)

Fellow researchers may be interested to know that we have been unable to find either a proof or a counter-example to show whether or not monotonicity property (a) is guaranteed to hold under an optimal policy \(\theta ^*\) (indeed, this property may be meaningless if it can be shown that \(\theta ^*({\mathbf {x}})=0\Rightarrow {\mathbf {x}}^{i+}\notin S_{\theta ^*}\)). In addition, we have been unable to find either a proof or a counter-example to show whether or not an optimal policy satisfying all three properties (a)–(c) is guaranteed to exist if \(N\ge 3\) and \(c_i=1\) for all \(i\in \{1,2,\ldots ,N\}\).

Next, we consider how the size of the positive recurrent state space changes as the demand rate \(\lambda \) varies. Intuitively, one might suppose that strong-performing policies should become more conservative as \(\lambda \) increases. The next theorem shows that this is indeed the case for the Whittle policy \(\theta ^{[W]}\), but not for optimal policies in general.

Theorem 4

(Conservativity with demand) Let \(S_W(\lambda )\) and \(S^*(\lambda )\) be defined as follows:

Then, given any two demand rates \(\lambda _1,\lambda _2\) with \(\lambda _1>\lambda _2>0\):

-

1.

\(S_W(\lambda _1)\subseteq S_W(\lambda _2)\),

-

2.

If \(N=1\), then \(S^*(\lambda _1)\subseteq S^*(\lambda _2)\),

-

3.

In general, \(S^*(\lambda _1)\not \subseteq S^*(\lambda _2)\).

Proof

Since the Whittle index policy is derived from the properties of optimal admission policies for single-facility problems, Statement 1 is actually implied by Statement 2. However, Statement 2 is somewhat non-trivial to prove. We have used a dynamic programming argument to establish this result, and the details can be found in Appendix F.

We provide a counter-example to establish Statement 3. Consider a two-facility system in which both facilities have a single server available. The parameters for the facilities are:

Consider two different demand rates, \(\lambda _1=10\) and \(\lambda _2=9.8\). Under the larger demand rate \(\lambda _1\), the unique optimal policy \(\theta _1^*\) found by value iteration has positive recurrent state space \(S^*(\lambda _1)=\{(x_1,x_2)\in {\mathbb {N}}_0^2:x_1\le 10\text { and }x_2\le 14\}\), with a unique balking state (10, 14). However, under the smaller demand rate \(\lambda _2\), value iteration yields a unique optimal policy with \(S^*(\lambda _2)=\{(x_1,x_2)\in {\mathbb {N}}_0^2:x_1\le 11\text { and }x_2\le 13\}\), with a unique balking state (11, 13). Since \(S^*(\lambda _1)\) includes states with \(x_2=14\), the ‘conservativity with demand’ property does not hold. \(\square \)

Next, we examine a property related to the distribution of balking states under a stationary policy. It is natural to suppose that, under a strong-performing policy \(\theta \), the positive recurrent state space \(S_\theta \) should take the form of a cuboid in N dimensions. Indeed, if \(S_\theta \) is finite, then the cuboid property is implied by the existence of a unique state in \(S_\theta \) at which balking is chosen. The next result states that the Whittle policy \(\theta ^{[W]}\) must have a unique recurrent balking state, but this is not necessarily true for an optimal stationary policy.

Theorem 5

(Unique recurrent balking state) Suppose \(N\ge 2\), and let \(\varTheta ^{[B]}\) denote the set of all stationary policies \(\theta \) for which the set of positive recurrent states \(S_\theta \) includes a unique state at which balking is chosen. Then:

-

1.

\(\theta ^{[W]}\in \varTheta ^{[B]}\),

-

2.

If \(N=2\) and \(c_1=c_2=1\) then there exists an optimal policy in \(\varTheta ^{[B]}\),

-

3.

In general, \(\varTheta ^{[B]}\) is not guaranteed to include an optimal policy.

Proof

The proof of Statement 1 is trivial, since the only state \({\mathbf {x}}\in S_W\) at which \(\theta ^{[W]}\) chooses to balk is the state with \(x_i=\min \{x\ge 0:W_i(x)\le 0\}\) for \(i\in \{1,2,\ldots ,N\}\).

The proof of Statement 2 relies upon the properties of concavity, submodularity and diagonal submissiveness for the function h satisfying the Eq. 19 in a system with two single-server facilities. These properties were established (for the \(N=2\), \(c_1=c_2=1\) case) in the proof of Theorem 3. Details of how these properties imply a unique balking state can be found in Ha (1997) (Theorem 3). Ha’s results are given in the context of a make-to-stock production system with two products and a single server. He defines a ‘base stock policy’ as a policy for which production is stopped if and only if all products have inventory at or above their specified base stock levels; this is analogous to a policy with a unique balking state in our model. We also note that Ha considers a minimization problem as opposed to a maximization problem, and the value function in his model has the converse properties of convexity, supermodularity and diagonal dominance. However, the arguments in his proof can be translated to our setting in an obvious way.

To prove Statement 3, we provide a counter-example which was found by a numerical search. Consider a system with 3 facilities and a demand rate \(\lambda =21.57\). The parameters for the facilities are:

For this system, the unique optimal policy \(\theta ^*\) found using value iteration chooses to balk at the states (13, 10, 14) and (12, 11, 14), both of which are positive recurrent under \(\theta ^*\). Thus, the process operating under \(\theta ^*\) is able to access two different ‘balking states’, implying that \(\theta ^*\notin \varTheta ^{[B]}\). \(\square \)

Our final result in this section concerns a special case in which all of the N facilities share the same parameters (\(c_i\), \(\mu _i\), \(\alpha _i\) and \(\beta _i\)). We refer to this as the ‘homogeneous facilities’ case. Like the previous three results, it highlights an intuitively ‘sensible’ structural property which is possessed by the Whittle policy \(\theta ^{[W]}\), but not by optimal policies in general.

Theorem 6

(Cube property for a homogeneous system) Suppose \(N\ge 2\) and the facilities are homogeneous, i.e. we have \(c_i=c\), \(\mu _i=\mu \), \(\alpha _i=\alpha \), \(\beta _i=\beta \) for \(i\in \{1,2,\ldots ,N\}\). Let \(\varTheta ^{[C]}\) denote the set of all stationary policies \(\theta \) for which the set of positive recurrent states \(S_\theta \) is of the form \(\{{\mathbf {x}}\in S:x_i\le M\text { for all }i\in \{1,2,\ldots ,N\}\}\) for some \(M\in {\mathbb {N}}_0\). Then:

-

1.

\(\theta ^{[W]}\in \varTheta ^{[C]}\),

-

2.

In general, \(\varTheta ^{[C]}\) is not guaranteed to include an optimal policy.

Proof

As in Theorems 3 and 5, the proof of Statement 1 is trivial, since it is a direct consequence of the index-based nature of the Whittle index policy.

We provide a counter-example to establish Statement 2. Consider a system with demand rate \(\lambda =15\) and two single-server facilities which share an identical set of parameters as follows:

With these parameters, it transpires that the set of positive recurrent states \(S_{\theta ^*}\) associated with any optimal stationary policy must be either of dimension \(3\times 4\) or \(4\times 3\). The optimal decision-making structure is shown in Table 1.

If we restrict attention to stationary policies, then it can be seen from Table 1 that there must be a unique balking state at either (2, 3) or (3, 2). Therefore an optimal stationary policy will allow one of the two facilities to have up to three customers present, but not both. The state (3, 3) is not accessible from \({\mathbf {0}}\) (i.e. positive recurrent) under any optimal stationary policy. Since Table 1 accounts for all 8 optimal stationary policies in this system, we conclude that none of these are included in \(\varTheta ^{[C]}\). \(\square \)

The results in this section have shown that the Whittle policy \(\theta ^{[W]}\) belongs to a class of policies which possess certain intuitively ‘sensible’ structural properties. However, the counter-examples have shown that an optimal policy need not necessarily be included in the same class, and therefore \(\theta ^{[W]}\) must be sub-optimal in some cases. In Sect. 6 we present the results of numerical experiments to evaluate the performance of the Whittle policy. These numerical results include comparisons with an alternative heuristic policy, which is developed in the next section.

5 An alternative heuristic policy

In this section we describe an alternative heuristic policy which is derived from the application of a single step of policy iteration to a ‘static routing’ or ‘Bernoulli splitting’ policy. Similar approaches have been used for other routing problems in the literature; see Krishnan (1990), Ansell et al. (2003b) and Argon et al. (2009) and references therein. The heuristic shares some similarities with the Whittle heuristic, in the sense that it requires the calculation of indices for the N individual facilities; however, the indices themselves are calculated in a completely different way from those derived in Sect. 3.

To begin, consider a randomized policy under which routing decisions are made according to a fixed probability distribution \(\{\sigma _a\}_{a=0}^N\), where a belongs to the same action set A described in Sect. 2; hence, \(\sum _{a=0}^N \sigma _a=1\). We refer to this type of policy as a static policy, since it does not have the ability to make decisions dynamically according to the system state. We will commit a slight abuse of notation and represent an arbitrary static policy by a vector \(\varLambda =(\lambda _1,\ldots ,\lambda _N)\), where \(\lambda _i=\lambda \sigma _i\) is the arrival rate for facility i (\(i\in \{1,\ldots ,N\}\)) and \(\lambda _0=\lambda \sigma _0\) is the rate at which customers balk. We can then write the expected long-run average reward under this policy as

where \(L_i(\lambda _i)\) is the expected number of customers present at facility i, given that arrivals occur according to a Poisson process with rate \(\lambda _i\) (here we are making use of the well-known ‘Poisson splitting’ property). It should be noted that \(L_i(\lambda _i)\) is finite if and only if \(\lambda _i<c_i\mu _i\), so we will define \(g^\varLambda =-\infty \) for any policy \(\varLambda \) with \(\lambda _i\ge c_i\mu _i\) for at least one facility i. Known results for M/M/c queues imply that \(L_i(\cdot )\) is a strictly convex function (see Grassmann 1983; Lee and Cohen 1983); hence, \(g^\varLambda \) is strictly concave and there must be a unique policy \(\varLambda \) which maximizes \(g^\varLambda \) over all static policies.

We will use \(\varLambda ^*:=(\lambda _1^*,\ldots ,\lambda _N^*)\) to denote the unique optimal static policy. Here, ‘optimal’ means ‘optimal among all static policies’, not ‘optimal over all policies’. In fact, it is easy to show that all static routing policies are sub-optimal if non-static policies which make state-dependent routing decisions are included as candidates. Nevertheless, it transpires that a strong-performing (heuristic) dynamic routing policy can be obtained by applying a single step of DP-style policy iteration to the optimal static policy \(\varLambda ^*\).

To assist our development, let us define \(V^{(n)}({\mathbf {x}},\varLambda ^*)\) as the expected total reward over n discrete time steps given that policy \(\varLambda ^*\) is followed and the initial state is \({\mathbf {x}}\in S\). Note that, in our uniformized MDP described in Sect. 2, \(\lambda _i^*\) can be interpreted as the probability that a customer arrives and is sent to facility i at an arbitrary discrete time step under policy \(\varLambda ^*\). Under the usual paradigm of policy iteration, we aim to choose an action a under state \({\mathbf {x}}\) which maximizes

That is, we aim to maximize the improvement in the long-run expected total net reward that would result from choosing action a under state \({\mathbf {x}}\) at an arbitrary time step and then following the optimal static policy \(\varLambda ^*\) at all time steps thereafter, as opposed to simply following the policy \(\varLambda ^*\) at all times. Given that the implementation of policy \(\varLambda ^*\) results in individual facilities operating independently with their own Poisson arrival rates, it will be useful to write

where \(V_i^{(n)}(x,\lambda _i^*)\) is an expected finite-stage reward for facility i only, given x customers initially present and a Poisson demand rate \(\lambda _i^*\). We will also define

for each facility i and state \(x\in {\mathbb {N}}_0\). Then, after some manipulations using (21)–(24), it can be shown that

Hence, in order to obtain a dynamic routing policy via the application of a policy iteration step to an optimal static policy, we should make decisions in such a way that customers who arrive under a given state \({\mathbf {x}}=(x_1,\ldots ,x_N)\) are directed to join the facility i which maximizes \(D_i(x_i,\lambda _i^*)\) if this value is positive; otherwise, they should balk.

It can be seen from (23) that \(h_i(x_i,\lambda _i^*)\) is equivalent to the well-known ‘relative value function’ which appears in the optimality equations and policy evaluation equations for average reward MDPs (see Puterman 1994). In our context, \(h_i(x_i,\lambda _i^*)\) applies to facility i only and we can interpret the demand rate \(\lambda _i^*\) as the ‘policy’ for this facility. We have also defined state zero as the ‘reference state’ which the other states’ values are compared against. The policy evaluation equations for facility i can be written

where \(r_i(x)\) and \(p_i(x,y,\lambda _i^*)\) are the obvious single-facility analogues of the rewards and transition probabilities defined in Sect. 2 and \(g_i(\lambda _i^*)\) is the long-run average reward for facility i. We can then calculate the \(D_i(x,\lambda _i^*)\) values by using the Eq. (26). Before proceeding, we note that if \(\lambda _i^*=0\) for a particular facility i then \(h_i(x,\lambda _i^*)\) and \(D_i(x,\lambda _i^*)\) are trivially equal to zero for all \(x\in {\mathbb {N}}_0\), so we will only consider facilities i for which \(\lambda _i^*>0\).

By setting \(x=0\) in the Eq. (26) and noting that \(r_i(0)=0\) and \(h_i(0,\lambda _i^*)=0\), we obtain:

In general, for integers \(x\in \{1,\ldots ,c_i-1\}\), we have:

Following simple manipulations, we obtain the recurrence relationship:

By making recursive substitutions in (27) we then obtain, for \(x\in \{0,1,\ldots ,c_i-1\}\):

Similarly, for integers \(x\ge c_i\), the recurrence relationship is:

By using (28) and (29) and applying a simple inductive argument, one can then show that for \(x\ge c_i\), we have:

Let \(\theta ^{[B]}\) denote the ‘Bernoulli improvement’ heuristic which is obtained by applying a step of policy iteration to the optimal static policy \(\varLambda ^*\). The conclusion of this section is that \(\theta ^{[B]}\) chooses actions as follows:

where \(D_i(x_i,\lambda _i^*)\) is defined in (28) (for \(x_i\in \{0,1,\ldots ,c_i-1\}\)) and (30) (for \(x\ge c_i\)). We note that, from a practical point of view, implementation of \(\theta ^{[B]}\) requires the initial solution of a convex optimization problem in order to obtain the optimal static policy \(\varLambda ^*\). The values \(g_i(\lambda _i^*)\) (required for the computation of \(D_i(x,\lambda _i^*)\)) are then readily obtained as functions of the \(\lambda _i^*\).

For the special case \(c_i=1\), we note that (28) and (30) reduce to

This implies that \(\theta ^{[B]}\) is more conservative than the selfish policy \({\tilde{\theta }}\) in a system with single servers at all facilities, since \({\tilde{\theta }}\) prefers joining facility i to balking at state \(\mathbf{x }\) if and only if \(\alpha _i-\beta _i(x_i+1)/\mu _i\ge 0\) (see Sect. 2).

The next theorem states that the Bernoulli improvement policy possesses the property of asymptotic light-traffic optimality which (according to Theorem 2) is also a feature of the Whittle policy \(\theta ^{[W]}\).

Theorem 7

(Optimality of the Bernoulli improvement policy in a light-traffic limit) Let \(g^{\varLambda ^*}(\lambda )\) and \(g^{[B]}(\lambda )\) be the long-run average rewards attained by the optimal static policy \(\varLambda ^*\) and the Bernoulli improvement policy \(\theta ^{[B]}\) respectively given a demand rate \(\lambda >0\), and let \(g^*(\lambda )\) be the corresponding value under an optimal policy. Then \(\varLambda ^*\) and \(\theta ^{[B]}\) are both asymptotically optimal in a light-traffic limit. That is:

On the other hand, it is easy to find counter-examples to show that \(\theta ^{[B]}\) does not possess the property of heavy-traffic optimality described (in the context of the Whittle policy \(\theta ^{[W]}\)) in Theorem 2. In Appendix G we have provided a proof of Theorem 7 and also a counter-example to show the lack of heavy-traffic optimality for \(\theta ^{[B]}\).

6 Numerical results

In this section we report the results of a series of experiments involving more than 37,000 randomly-generated sets of system parameters. In order to evaluate the exact sub-optimality of a heuristic policy, it is necessary to evaluate the expected long-run average reward earned by the relevant policy and compare this with the optimal value \(g^*\) associated with an average reward optimal policy. Usually, one would wish to carry out these tasks using dynamic programming algorithms, but this is only practical if the finite state space \({\tilde{S}}\) is of relatively modest size. Of course, the Whittle policy described in Sect. 3 can easily be applied to systems in which \({\tilde{S}}\) is extremely large, but it is generally not feasible to evaluate the optimal value \(g^*\) in such systems, and therefore the only comparisons of interest that can be made in ‘large’ systems are between the Whittle policy (whose performance must be approximated, using simulation) and with alternative heuristics such as the selfish policy \({\tilde{\theta }}\) and the Bernoulli improvement policy \(\theta ^{[B]}\) described in Sects. 2 and 5 respectively. As such, this section is divided into two subsections:

-

In Sect. 6.1, systems of relatively modest size are considered. These are systems in which the size of \(|{\tilde{S}}|\) facilitates the efficient computation of the optimal average reward \(g^*\) using DP algorithms, and also enables similar evaluations of the average rewards earned by the Whittle policy \(\theta ^{[W]}\), the Bernoulli improvement policy \(\theta ^{[B]}\) and the selfish policy \({\tilde{\theta }}\).

-

In Sect. 6.2, ‘large’ systems are considered. These are systems in which the exact computation of \(g^*\) is assumed to be infeasible, and the average rewards earned by \(\theta ^{[W]}\) are compared with those associated with alternative heuristic policies via simulation experiments.

For purposes of distinction, a ‘modest-sized’ system is defined in this section as a system in which \(2\le N\le 4\) and the cardinality of \({\tilde{S}}\) is between 100 and 100,000. Although it is certainly possible to apply DP algorithms to systems of greater size than this, it is desirable to impose a relatively strict restriction on \(|{\tilde{S}}|\) in order to allow a large number of experiments to be carried out in a reasonable amount of time. The remainder of this section will proceed to present the results obtained from numerical experiments.

6.1 ‘Modest-sized’ systems with \(2\le N\le 4\)

We conducted a series of numerical experiments involving 32,934 randomly-generated sets of system parameters. Details of the methods used to generate the parameters can be found in Appendix H.

Table 2 shows 95% confidence intervals for the percentage suboptimality values recorded for each of the three heuristic policies \(\theta ^{[W]}\), \(\theta ^{[B]}\) and \({\tilde{\theta }}\), (columns 3-5). The first row shows summary results for all 32,934 systems, and the next three rows show results for particular values of N. Both \(\theta ^{[W]}\) and \(\theta ^{[B]}\) are consistently strong, with \(\theta ^{[W]}\) tending to be slightly stronger overall (within 1% of optimality on average). Indeed, \(\theta ^{[W]}\) was the best-performing of the three heuristics in about 65% of experiments. Noticeably, all of the heuristics tend to do better in the \(N=2\) case than in the \(N=3\) and \(N=4\) cases. In Sect. 6.2, we will present results for larger values of N.

Next, let \(\rho :=\lambda \left( \sum _{i=1}^N c_i\mu _i\right) ^{-1}\) be a measure of the relative traffic intensity for a particular system. In Table 3 we have presented results for \(\theta ^{[W]}\), \(\theta ^{[B]}\) and \({\tilde{\theta }}\) in a similar format to that of Table 2, except with results categorized according to \(\rho \) rather than N. Our results indicate that \(\theta ^{[W]}\) tends to be strongest for very small (i.e. close to zero) or very large (i.e. significantly larger than 1) values of \(\rho \) - which is unsurprising, since it is known to be asymptotically optimal in light-traffic and heavy-traffic limits due to Theorem 2. Of greater interest, perhaps, are the comparisons with the alternative heuristic policies \(\theta ^{[W]}\) and \({\tilde{\theta }}\), and how these are affected by the value of \(\rho \).

Table 3 shows that the suboptimality of \(\theta ^{[W]}\) is greatest when \(\rho \) is close to 1, although it remains within \(1.6\%\) of optimality (on average) in such cases. The Bernoulli improvement policy \(\theta ^{[B]}\) may be slightly stronger than \(\theta ^{[W]}\) when \(\rho \) is close to 1, but (unlike \(\theta ^{[W]}\)) it performs worse as \(\rho \) increases beyond 1. This is consistent with the result of Theorem 7. The selfish policy \({\tilde{\theta }}\) performs well when \(\rho \) is very small, but is very poor in other cases.

We also investigated the effect of heterogeneity between service facilities on our results. Recall that a particular facility i in our model has four parameters: \(c_i\), \(\mu _i\), \(\alpha _i\) and \(\beta _i\). For each of our 32,934 randomly-generated parameter sets we calculated the coefficient of variation (i.e. the ratio of the standard deviation to the mean) of the values \(c_1,\ldots ,c_N\) in order to obtain a measure, denoted by \(\phi _c\), of the variation between \(c_i\) values. We then repeated this process for the other parameter types in order to obtain the analogous statistics \(\phi _{\mu }\), \(\phi _{\alpha }\) and \(\phi _{\beta }\), and calculated the average coefficient of variation as \({\bar{\phi }}:=(\phi _c+\phi _{\mu }+\phi _{\alpha }+\phi _{\beta })/4\). Table 4 shows comparisons between the performances and suboptimality values of the three heuristic policies \(\theta ^{[W]}\), \(\theta ^{[B]}\) and \({\tilde{\theta }}\), with results categorized according to the value of \({\bar{\phi }}\).

Table 4 indicates that \(\theta ^{[W]}\) remains very strong for all values of \({\bar{\phi }}\). More interestingly, however, there is a clear trend for the other two heuristics (\(\theta ^{[B]}\) and \({\tilde{\theta }}\)) to perform worse as \({\bar{\phi }}\) increases. Consequently, the improvements given by \(\theta ^{[W]}\) as opposed to \(\theta ^{[B]}\) and \({\tilde{\theta }}\) tend to increase with \({\bar{\phi }}\). Indeed, the Spearman’s rank correlation coefficient between \({\bar{\phi }}\) and \((g^{[W]}-g^{[B]})/g^{[B]}\) is 0.178 when all 32,934 trials are considered, and between \({\bar{\phi }}\) and \((g^{[W]}-{\tilde{g}})/{\tilde{g}}\) (where \({\tilde{g}}\) is the selfish policy’s performance) it is 0.102. These values are statistically highly significant, and it is not obvious why \(\theta ^{[W]}\) should be more robust to heterogeneity between facilities than the other heuristics. We will return to this subject in our conclusions.

6.2 ‘Large’ systems with \(N\ge 4\)

We performed a further series of experiments, involving another 4660 randomly-generated sets of system parameters. The intention in this part was to investigate ‘large’ systems, in which the size of the selfish state space \({\tilde{S}}\) would preclude the use of dynamic programming algorithms. The median of \(|{\tilde{S}}|\) over all of the 4660 trials performed was approximately 6.25 billion states, and the maximum was approximately \(6.03\times 10^{20}\) (0.6 sextillion). Please see Appendix H for details of how the parameter sets were generated.

Without the use of DP algorithms, one cannot evaluate the optimal average reward \(g^*\) for a given system, nor is it possible to evaluate the performances of the heuristic policies \(\theta ^{[W]}\), \(\theta ^{[B]}\) and \({\tilde{\theta }}\) exactly. However, as discussed in previous sections, the indices used for decision-making by these policies are simple to obtain (regardless of the size of \(|{\tilde{S}}|\)), since they are determined by considering facilities individually. One can then use simulation to estimate the average rewards earned by the respective heuristic policies.

As Sect. 6.1, our interest lies mainly in assessing the strength of the Whittle policy \(\theta ^{[W]}\). Given that we cannot evaluate the exact suboptimality of \(\theta ^{[W]}\) in larger systems, we decided to compensate by expanding our set of alternative heuristic policies to be used for comparison purposes. The results in Sect. 6.1 have already shown that the selfish policy \({\tilde{\theta }}\) performs poorly in many cases, especially if the demand rate is high. However, we can derive other (possibly stronger) heuristics by considering a simple generalization of the selfish decision-making rule. For a given state \({\mathbf {x}}\in S\), action \(a\in \{0,1,\ldots ,N\}\) and parameter \(p\in [0,1]\), let \(w_a({\mathbf {x}},p)\) be defined as follows:

Thus, \(w_a({\mathbf {x}},p)\) is equivalent to the expected net reward for an individual customer defined in (4) except that the rewards \(\alpha _i\) for the various facilities are scaled by a multiplier p.

Let \({\tilde{\theta }}^p\) denote the policy which operates in such a way that the action chosen under state \({\mathbf {x}}\in S\) is the action which maximizes \(w_a({\mathbf {x}},p)\), with ties broken arbitrarily (except that \(a=0\) is chosen only if \(w_i({\mathbf {x}},p)<0\) for all \(i\in \{1,2,\ldots ,N\}\)). Also, let \({\tilde{g}}^p\) denote the average reward under policy \({\tilde{\theta }}^p\). If \(p=1\) then \({\tilde{\theta }}^p\) is equivalent to the usual selfish policy, \({\tilde{\theta }}\). However, the value of p which maximizes \({\tilde{g}}^p\) is likely to be smaller than 1, especially if the demand rate is high.

Let \({\mathcal {D}}\) be a discretization of the interval [0, 1] and let us define \({\tilde{g}}^{{\mathcal {D}}}\) by

i.e. \({\tilde{g}}^{{\mathcal {D}}}\) is the maximum average reward attained over all possible policies \({\tilde{\theta }}^p\), subject to the constraint \(p\in {\mathcal {D}}\). We will also use \({\tilde{\theta }}^{{\mathcal {D}}}\) to denote a policy in \(\{{\tilde{\theta }}^p\}_{p\in {\mathcal {D}}}\) which attains the average reward \({\tilde{g}}^{{\mathcal {D}}}\). It should be noted that \({\tilde{\theta }}^{{\mathcal {D}}}\) is not an admissible policy itself; instead, it represents the strongest-performing of a set of policies for a particular system. We intend to use \({\tilde{g}}^{{\mathcal {D}}}\) as a benchmark in order to evaluate the strength of \(\theta ^{[W]}\) in larger systems.

In each of our 4660 randomly-generated scenarios we simulated the performances of all 100 policies in the set \(\{{\tilde{\theta }}^p\}_{p\in {\mathcal {D}}}\), with the discretized set \({\mathcal {D}}\) given by \({\mathcal {D}}=\{0.01,0.02,\ldots ,0.99,1\}\), and estimated \({\tilde{g}}^{{\mathcal {D}}}\) by taking the maximum of these. We also simulated the performances of \(\theta ^{[W]}\) and \(\theta ^{[B]}\) using the same random number seed used to simulate the 100 policies in \(\{{\tilde{\theta }}^p\}_{p\in {\mathcal {D}}}\). We note here that simulating the performances of 102 different stationary policies is a computationally intensive task, and this is why we have considered fewer random scenarios in Sect. 6.2 than in Sect. 6.1. The implementation and simulation of the Whittle policy itself is extremely fast even in very large systems, and does not pose any computational difficulty.

Table 5 summarizes the performance of \(\theta ^{[W]}\) against \(\theta ^{[B]}\) and \({\tilde{\theta }}^{{\mathcal {D}}}\) in these 4460 experiments, with results categorized according to the value of N (the number of facilities). Columns 3–4 show the percentage improvements achieved by \(\theta ^{[W]}\) against the other heuristics for different N values. Column 5 (resp. 6) shows the percentages of experiments in which the (estimated) long-run average reward achieved by the Whittle policy, \(g^{[W]}\), was at least as great as \(g^{[B]}\) (resp. \({\tilde{g}}^{{\mathcal {D}}}\)). There is a general trend for \(\theta ^{[W]}\) to increase its advantages against \(\theta ^{[B]}\) and \({\tilde{\theta }}^{{\mathcal {D}}}\) as N increases. Also, by comparing Tables 2 and 5, we may observe that the relative strength of \(\theta ^{[W]}\) versus the other heuristics appears to have increased significantly in these ‘large system’ experiments.

Table 6 shows additional comparisons between the three heuristic policies with results categorized according to the value of \(\rho =\lambda \left( \sum _{i=1}^N c_i\mu _i\right) ^{-1}\). As in Sect. 6.1 (see Table 3), \(\theta ^{[W]}\) becomes stronger relative to the alternative heuristics as \(\rho \) increases beyond 1. The Bernoulli improvement policy, \(\theta ^{[B]}\), also tends to be stronger than \({\tilde{\theta }}^{{\mathcal {D}}}\) (this can be seen by comparing columns 3 and 4, for example).

Finally, Table 7 shows the results of our experiments categorized according to the value of \({\bar{\phi }}\), where \({\bar{\phi }}\) is defined the same way as in Sect. 6.1; i.e. it is the average of the coefficients of variation for the four parameter types (\(c_i,\mu _i,\alpha _i,\beta _i\)). As in Section 6.1, we observe that \(\theta ^{[W]}\) tends to increase its advantage over other heuristics when the heterogeneity between facilities is increased.

It seems clear from our results in Sect. 6.2 that, if we want to find an alternative heuristic policy which rivals the performance of the Whittle policy \(\theta ^{[W]}\), it is not sufficient to simply modify the selfish decision rule so that rewards are given relatively less importance compared to expected waiting costs (and hence the system becomes less busy). Indeed, the Whittle policy is based on socially optimal (i.e. average reward optimal) decisions at individual facilities, and this enables it to make smarter decisions than the policies in the set \(\{{\tilde{\theta }}_p\}_{p\in {\mathcal {D}}}\). To illustrate this point, suppose we have two facilities i and j such that \(w_i({\mathbf {x}},p)=w_j({\mathbf {x}},p)\) under a particular state \({\mathbf {x}}\in S\). Suppose also that the reward for service \(\alpha _i\) is substantially larger than \(\alpha _j\), but the expected waiting costs at facility i are also larger (this may be due to a longer expected waiting time, for example). In this situation, the generalized selfish policy \({\tilde{\theta }}_p\) is unable to distinguish between facilities i and j, but one might imagine that joining facility j should be a better choice in the context of average reward maximization, since it has a smaller impact on future congestion levels in the system. The index (13) employed by the Whittle policy is better-suited to taking such considerations into account.

The randomly-generated parameter sets and results of the numerical experiments reported in this paper have been archived and are available at http://doi.org/10.5281/zenodo.3775332.

7 Conclusions

Theorem 2 in Shone et al. (2016) has established that it is theoretically possible to find an average reward optimal policy for the MDP formulated in Sect. 2 by truncating the state space S, and applying a dynamic programming algorithm to an MDP with the finite state space \({\tilde{S}}\). Unfortunately, the finite set \({\tilde{S}}\) might itself be very large in many problem instances, and for this reason it is necessary to look for heuristic approaches which can be relied upon to yield near-optimal policies in a short amount of time.

As discussed in the introduction, the Whittle index heuristic is now well-established in the field of stochastic dynamic programming and we have shown (Lemma 1) that the indexability property, which can be difficult to prove in other settings, can be applied in our problem. A key finding of our paper is that the positive recurrent state space under an optimal stationary policy is not only bounded above by the selfish state space \({\tilde{S}}\), but also bounded below by the ‘Whittle state space’ \(S_W\) (Theorem 1). We have also proved certain structural properties of the Whittle policy, including its asymptotic optimality in light-traffic and heavy-traffic limits (Theorems 2–6). These results are useful since, in general, structural properties of optimal policies are difficult to prove for routing problems involving heterogeneous service facilities.

The empirical results in Sect. 6 have shown that the Whittle policy \(\theta ^{[W]}\) is very close to optimality in systems which are ‘small enough’ to allow the computation of an optimal policy. In larger systems, we have verified that it performs strongly against alternative heuristics, including the policy \(\theta ^{[B]}\) obtained by applying one step of policy improvement to a ‘Bernoulli splitting’ policy. Notably, its superiority over other heuristics appears to increase as

-

(i)

the traffic intensity increases beyond 1;

-

(ii)

the number of facilities increases;

-

(iii)

the heterogeneity between service facilities increases.

The first of the above characteristics is implied by Theorem 2, but the reasons for the second and third characteristics are less obvious. Indeed, all of the heuristics that we have considered share some broad methodological similarities, in that they require the computation of indices for individual facilities—so it is not immediately clear why the Whittle policy’s indices should be more robust than others with respect to dimensionality or heterogeneity. We intend to investigate this further in future work.

For any given set of system parameters, the indices which characterize the Whittle policy \(\theta ^{[W]}\) are calculated in a completely deterministic way. Thus, this heuristic does not rely on any iterative algorithm, nor does it involve any type of simulation or random sampling. One might regard the deterministic nature of this heuristic as both a strength and a weakness. On one hand, the simplicity makes it extremely easy to implement; on the other hand, if the heuristic is found to perform poorly in a particular system, then it is not necessarily easy to see how the decision-making indices might be adjusted in order to achieve closer proximity to an optimal policy. In future work, we intend to test the performance of the Whittle heuristic against policies obtained by approximate dynamic programming (ADP) methods, including those which have achieved popularity in the fields of neuro-dynamic programming and reinforcement learning (Bertsekas and Tsitsiklis 1996; Powell 2007; Sutton and Barto 1998). We also intend to use the Whittle policy in conjunction with ADP methods, by allowing it to act as a reference point within broader search algorithms.

References

Aalto S, Lassila P, Osti P (2016) Whittle index approach to size-aware scheduling for time-varying channels with multiple states. Queueing Syst 83:195–225

Ansell PS, Glazebrook KD, Kirkbride C (2003a) Generalised “join the shortest queue” policies for the dynamic routing of jobs to multi-class queues. J Oper Res Soc 54:379–389

Ansell PS, Glazebrook KD, Nino-Mora J, O’Keeffe M (2003b) Whittle’s index policy for a multi-class queueing system with convex holding costs. Math Methods Oper Res 57:21–39

Archibald TW, Black DP, Glazebrook KD (2009) Indexability and index heuristics for a simple class of inventory routing problems. Oper Res 57:314–326

Argon NT, Ding L, Glazebrook KD, Ziya S (2009) Dynamic routing of customers with general delay costs in a multiserver queuing system. Probab Eng Inf Sci 23(2):175–203

Bell CE, Stidham S (1983) Individual versus social optimization in the allocation of customers to alternative servers. Manag Sci 29(7):831–839

Bellman RE (1957) Dynamic programming. Princeton University Press, Princeton

Bertsekas DP, Tsitsiklis JN (1996) Neuro-dynamic programming. Athena Scientific, Nashua

Bertsimas D, Nino-Mora J (1996) Conservation laws, extended polymatroids and multi-armed bandit problems: a polyhedral approach to indexable systems. Math Oper Res 21:257–306