Abstract

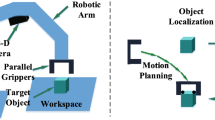

Applying deep neural network models to robot-arm grasping tasks requires the laborious and time-consuming annotation of a large number of representative training examples. Accordingly, this work proposes a two-stage grasping model. The first stage employs a learning-based template matching (LTM) algorithm to estimate the object position, and the second stage uses a self-rotation learning (SRL) network to estimate the rotation angle of the object to be grasped. The LTM algorithm measures the similarity between the feature maps of the search and template images, which are extracted by a pre-trained model, while the SRL network automatically rotates and labels the input data for training purposes. The proposed model therefore does not require an expensive human-annotation process. The experimental results show that the proposed model obtains 92.6% when tested on 2400 pairs of template and target images. Moreover, in performing practical grasping tasks on an NVIDIA Jetson TX2 developer kit, the proposed model achieves a higher accuracy (88.5%) than other grasping approaches on a split of the Cornell grasp dataset.
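The two ideas summarised in the abstract can be illustrated with a minimal, dependency-free sketch. It is not the authors' implementation. It assumes single-channel 2-D grids as stand-ins for the feature maps of a pre-trained model, cosine similarity as the matching score, and rotations restricted to multiples of 90 degrees. The function names (`rotate90`, `make_rotation_samples`, `match_template`) are hypothetical and chosen only for illustration.

```python
import math
import random


def rotate90(grid, k):
    """Return a copy of a 2-D grid (list of rows) rotated clockwise by k * 90 degrees."""
    grid = [list(row) for row in grid]
    for _ in range(k % 4):
        # Reversing the rows and transposing gives one clockwise quarter turn.
        grid = [list(row) for row in zip(*grid[::-1])]
    return grid


def make_rotation_samples(image, rng, n=4):
    """Self-labelling: rotate the input and keep the rotation index as the label.

    Assumption: labels are quarter-turn indices 0..3; the source does not
    state how the SRL network discretises the angle.
    """
    samples = []
    for _ in range(n):
        k = rng.randrange(4)  # the label is generated, not human-annotated
        samples.append((rotate90(image, k), k))
    return samples


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def match_template(search, template):
    """Slide the template over the search map and return (best_score, (row, col)).

    Assumption: both inputs are single-channel 2-D grids standing in for
    feature maps; the score is cosine similarity of the flattened windows.
    """
    th, tw = len(template), len(template[0])
    sh, sw = len(search), len(search[0])
    flat_template = [v for row in template for v in row]
    best_score, best_pos = -2.0, (0, 0)
    for r in range(sh - th + 1):
        for c in range(sw - tw + 1):
            window = [search[r + i][c + j] for i in range(th) for j in range(tw)]
            score = cosine(window, flat_template)
            if score > best_score:
                best_score, best_pos = score, (r, c)
    return best_score, best_pos
```

In this sketch, `match_template` plays the role of the position-estimation stage and `make_rotation_samples` shows how rotation labels can be produced without manual annotation. A real system would replace the scalar grids with multi-channel features and the quarter-turn labels with whatever angle resolution the grasping task needs.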

Data availability

Not applicable.

Code availability

Not applicable.

Funding

This study was supported in part by the Ministry of Science and Technology (MOST) of Taiwan, R.O.C., under Grant No. MOST 110-2221-E-006-179. The additional support provided by Tongtai Machine & Tool Co., Ltd. (Taiwan) and Contrel Technology Co., Ltd. (Taiwan) is also gratefully acknowledged.

Author information

Contributions

All authors contributed to the study conception and design. Material preparation, data collection, analysis, and writing—original draft preparation were performed by Minh-Tri Le; supervision, project administration, writing—review and editing were performed by Jenn-Jier James Lien. All authors read and approved the final manuscript.

Ethics declarations

Ethics approval

The authors state that the present work is in compliance with the ethical standards.

Consent to participate

No consent to participate is needed in the present study.

Consent for publication

No consent to publish is needed in the present study.

Conflicts of interest

The authors declare no competing interests.

Supplementary information

Below is the link to the electronic supplementary material.

Supplementary file23 (MP4 23266 KB)

About this article

Cite this article

Le, MT., Lien, JJ.J. Robot arm grasping using learning-based template matching and self-rotation learning network. Int J Adv Manuf Technol 121, 1915–1926 (2022). https://doi.org/10.1007/s00170-022-09374-y

DOI: https://doi.org/10.1007/s00170-022-09374-y