Abstract

We elicit adolescent girls’ attitudes towards intimate partner violence and child marriage using purposefully collected data from rural Bangladesh. Alongside direct survey questions, we conduct list experiments to elicit true preferences for intimate partner violence and marriage before age 18. Responses to direct survey questions suggest that very few adolescent girls in the study accept the practices of intimate partner violence and child marriage (5% and 2%, respectively). However, our list experiments reveal significantly higher support for both intimate partner violence and child marriage (30% and 24%, respectively). We further investigate how a range of respondent characteristics relate to preferences for egalitarian gender norms in rural Bangladesh.



1 Introduction

Gender disparities in outcomes favouring men are generally larger in developing countries than in the developed world (Jayachandran 2015). Similar disparities often exist in attitudes towards gender equality (see, for instance, Asadullah and Wahhaj 2019; Borrell-Porta et al. 2019). Such attitudes, including attitudes supporting domestic violence and child marriage, can shape behaviour and may play a prominent role in explaining observed gender disparities in outcomes. For instance, Dhar et al. (2016) find that parents’ discriminatory attitudes reduce daughters’ aspirations to pursue schooling beyond secondary school in India. Maertens (2013) finds that parents’ perceptions of the ideal age at marriage have an adverse impact on daughters’ schooling. Despite the important role that attitudes play in explaining behaviours and outcomes, much of the existing empirical literature relies on direct survey questions to elicit attitudes; these are likely to suffer from measurement error, leading to biased estimates. Our study addresses this limitation by using list experiments, which allow us to elicit attitudes regarding gender roles and behaviours more accurately.

Violence is one domain in which women are particularly disadvantaged. Women in South Asia face various forms of violence throughout their lifetimes, from early childhood to old age (Solotaroff and Pande 2014). Surprisingly, some of the global hotspots for child marriage and violence against women are also places that have seen considerable progress in poverty reduction and women’s economic participation. One such setting is Bangladesh, where labour force participation among women aged 15 and over increased from 23% in 1990 to 33% in 2017.Footnote 1 While the incidence of child marriage has fallen in recent decades, in 2014 approximately 38% of women in Bangladesh had been married by age 15 and 74.7% by age 18. Support for intimate partner violence is relatively high among both young and old women in Bangladesh and has remained unchanged in recent decades despite improvements in female labour force participation (see Appendix 1 for more details). In 2014, 28.3% of Bangladeshi women agreed that a husband is justified in beating his wife for at least one of the following reasons: if she burns the food, argues with him, goes out without telling him, neglects the children or refuses to have sexual intercourse with him.Footnote 2 Finally, 22.4% of ever-married Bangladeshi women report having been a victim of physical or sexual violence by their husband/partner in the last 12 months.Footnote 3 Such disparities, which put women at a disadvantage within the household relative to men, are likely to have serious implications for women’s welfare.

The objective of this paper is to measure attitudes towards intimate partner violence and child marriage among adolescent girls in rural Bangladesh. We do this using standard survey questions (direct questions) as well as a method designed to elicit responses to sensitive survey questions (list experiments; see “Section 2” for a description and examples). We find that the method of measurement matters when eliciting responses to sensitive questions. Standard direct survey questions under-estimate support for socially harmful practices: few adolescent girls in the study accept intimate partner violence (5%) or child marriage (2%) when asked directly, whereas list experiments reveal substantially higher support for both intimate partner violence (30%) and child marriage (24%). Adolescent girls with lower levels of education under-report their support for child marriage by 16 percentage points more than adolescent girls with higher education. We also find that girls randomly exposed to village-level adolescent clubs set up by the Bangladeshi non-government organization BRAC, which educated them on marital rights and laws, under-report their support for intimate partner violence more than non-exposed adolescent girls. To our knowledge, ours is the first study to use list experiments to elicit attitudes towards child marriage as well as domestic violence, and its geographically broad sample gives it greater external validity than many similar empirical investigations.

The rest of the paper is organised as follows. “Section 2” reviews the literature and sets out the contributions this paper makes to it. “Section 3” describes the background of our study and the list experiments that generated the data we use in this paper. “Section 4” lays out our empirical analysis and discusses our findings, while “Section 5” concludes.

2 Literature review and contribution

An important concern when eliciting gender attitudes in surveys is measurement error.Footnote 4 Suppose a survey respondent is asked whether they consider domestic violence to be acceptable. They may well either choose not to respond (leading to systematic item non-response) or state that they do not consider domestic violence to be acceptable (misreporting, which might arise from social desirability). In either case, the resulting measurement error will lead to biased estimates when investigating the relationship between gender attitudes and other outcome variables of interest (Bound et al. 2001).
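To make the bias argument concrete, the following Python sketch simulates a hypothetical population in which true support for a sensitive practice differs by a binary characteristic (labelled `low_educ` here), and supporters deny their support with assumed, group-specific probabilities when asked directly. All numbers are illustrative assumptions, not estimates from this study; the point is only that differential misreporting can distort both the level of support and its relationship with a covariate.

```python
import numpy as np

# Hypothetical population: all parameters below are illustrative assumptions.
rng = np.random.default_rng(0)
n = 100_000
low_educ = rng.random(n) < 0.4                                  # binary covariate
true_support = rng.random(n) < np.where(low_educ, 0.35, 0.25)   # true attitude
deny_prob = np.where(low_educ, 0.9, 0.8)   # share of supporters who deny when asked directly
reported = true_support & (rng.random(n) >= deny_prob)          # direct answer

def gap(outcome):
    """Difference in mean outcome between low- and high-education respondents."""
    return outcome[low_educ].mean() - outcome[~low_educ].mean()

print(f"prevalence: true {true_support.mean():.3f}, reported {reported.mean():.3f}")
print(f"education gap: true {gap(true_support):+.3f}, reported {gap(reported):+.3f}")
```

Under these assumed misreporting rates, direct answers understate prevalence, and the reported education gap even takes the opposite sign to the true gap.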

Different strategies have been employed to deal with measurement error when eliciting responses to sensitive questions. One is to rely on administrative data rather than self-reports, although in developing countries such data are usually neither systematically collected nor well recorded.Footnote 5 Another is intensive qualitative fieldwork, as in Blattman et al. (2016), where local researchers spend several days with a random sub-sample of survey respondents after a survey has taken place. They obtain verbal confirmation of sensitive behaviours, which allows them to validate the survey responses and examine the nature of measurement error in them. Alternatively, one may use quantitative survey methods designed for sensitive questions, such as randomized response techniques, endorsement experiments or list experiments.Footnote 6

In this paper, we use a list experiment, also known as the item count or unmatched count technique. In a list experiment, survey respondents are asked how many items on a list they agree with, where the list (randomly) either includes or excludes a sensitive item (Miller 1984; Imai 2011). Because respondents report only a count, their answer to the sensitive item is generally not revealed to the enumerator. We use list experiments to deal with measurement error in elicited gender attitudes in Bangladesh.

Several recent empirical studies make use of list experiments. Karlan and Zinman (2012) use a list experiment to indirectly elicit how borrowers from microfinance institutions (MFIs) in Peru and the Philippines use their loan proceeds. Comparing the list experiment results with responses to direct survey questions, they find that direct elicitation under-reports the non-enterprise use of loan proceeds by MFI borrowers. List experiments have also been employed to elicit truthful responses about sexual behaviours, such as condom use, number of partners and unfaithfulness, in Uganda (Jamison et al. 2013), Colombia (Chong et al. 2013) and Côte d’Ivoire (Chuang et al. 2019); about harmful traditional practices against women in Ethiopia (De Cao et al. 2017; De Cao and Lutz 2018; Gibson et al. 2018); and about anti-gay sentiment in the USA (Coffman et al. 2016).

A recent study examining gender attitudes (specifically those related to female genital mutilation/cutting) in Ethiopia finds under-reporting in direct attitude questions of 10 percentage points (De Cao and Lutz 2018). This study also provides suggestive evidence that under-reporting is more pronounced among uneducated women and among women who were targeted by a non-government organization (NGO) intervention to strengthen the health system as well as sexual and reproductive health knowledge.

A few recent studies have also used list experiments to examine measurement error in domestic violence reporting and prevalence. Peterman et al. (2017) combine a list experiment with an unconditional cash transfer given to female caregivers of children younger than 5 in rural Zambia. They find that 15% of the women had experienced physical intimate partner violence in the last 12 months, and find no effect of the cash transfer on intimate partner violence 4 years after the program. Since they do not ask direct questions, they cannot examine the direction or magnitude of measurement error in such questions. Joseph et al. (2017) use a list experiment in Kerala, India, and find that under-reporting of domestic violence exceeds 9 percentage points, while under-reporting of physical harassment on buses is negligible. They analyse the list experiment using differences-in-means across sub-groups of the population. Unlike Peterman et al. (2017) and Joseph et al. (2017), Aguero and Frisancho (2018) follow WHO guidelines and protocol to ask female respondents direct questions on violence (comparable to the widely used domestic violence questions in the Demographic and Health Surveys) and compare these with a list experiment eliciting experiences of physical and sexual intimate partner violence. Their sample consists of female clients of a microcredit organisation operating in urban areas of Lima, Peru. Aguero and Frisancho (2018) find that more educated women systematically under-report violence, whereas there is no under-reporting among less educated women. They also describe a low-cost way to correct for bias in a setting where the dependent variable is subject to non-classical measurement error (for instance, intimate partner violence, as in Aguero and Frisancho 2018), the independent variable is measured without error, and endogeneity is present. This solution uses estimates from list experiments carried out alongside other survey instruments.

Our work contributes to this literature in several important ways. It is the first study to use a list experiment to elicit attitudes towards child marriage, while also being among the first to develop a list experiment for domestic violence, alongside Peterman et al. (2017), Joseph et al. (2017) and Aguero and Frisancho (2018). Since our sample covers a third of all districts of Bangladesh, it has greater external validity than many other empirical investigations in this area (e.g., urban Lima in Aguero and Frisancho 2018). Ours is also the first study to combine an RCT (a non-formal education intervention) with a list experiment to analyse how support for domestic violence and child marriage changes with the intervention. We analyse our list experiments using regression techniques that allow us to investigate how the probability of supporting the sensitive item varies with respondents’ characteristics (as in Coffman et al. 2016; Aguero and Frisancho 2018; De Cao and Lutz 2018), improving on earlier papers that only compute differences-in-means across sub-groups of the population (e.g., Karlan and Zinman 2012; Joseph et al. 2017). Finally, we discuss the validity of our list experiments in light of recent criticisms raised by Chuang et al. (2019).

3 Data and study design

3.1 Survey design

The data on list experiments eliciting attitudes towards domestic violence and child marriage used in this study were collected in February 2017 as part of an end-line survey to evaluate the Adolescent Development Programme (ADP), a randomized controlled trial (RCT) intervention implemented by BRAC, the largest NGO in Bangladesh. The ADP intervention introduced village-level random variation in adolescent girls’ exposure to non-formal education on marital rights and laws. The program design is described in detail in Appendix 2. The baseline survey design covered 27 BRAC branch offices located in the 19 poorest districtsFootnote 7 where BRAC was about to implement and scale up the ADP scheme by the end of 2012. Of the 216 sample villages under these 27 BRAC branches, half were assigned to the program and the remainder served as non-program villages. Randomisation was done at the village level, with BRAC branches treated as clusters (i.e., a clustered RCT). Within the catchment area of each sample village, 20 adolescents aged 11–16 (15 girls and five boys) were interviewed, giving a total of 4320 adolescents (3240 females) in the baseline survey in June 2012.Footnote 8 The same adolescents were sought for re-interview in the end-line survey in February 2017; 2732 of them (63% of the baseline respondents) were successfully re-interviewed, of whom 2020 are female.Footnote 9 Appendix Table 8 compares characteristics across the full sample of 3240 adolescent girls and the sub-sample of 2020 who completed the end-line survey. An important concern is potentially selective attrition between baseline and end-line. However, the girls’ baseline observable characteristics (age, religion and education), as well as their mothers’ age, education and empowerment measures, are very similar for the complete sample and for the sub-sample successfully re-interviewed at end-line, suggesting that selective attrition is unlikely to be a major concern in this setting.

3.2 List experiments to elicit gender attitudes

The list experiment question used to elicit attitudes towards domestic violence included the following items: (1) If the father is too busy with outside work, this has a negative impact on children’s education; (2) it is not acceptable to use contraceptives to avoid pregnancy; (3) in a marriage both husband and wife should decide on how many children to have; (4) a wife can be hit, slapped, kicked or physically hurt by the husband under any circumstances.Footnote 10

The list experiment question used to elicit attitudes towards child marriage included the following items: (1) It is important for girls to attend school; (2) birth of a girl brings as much happiness to a family as birth of a boy does; (3) literate mothers can take care of their children better than illiterate mothers; (4) a girl should be married off before 18.

We randomly divided our respondents into two groups, A and B, each of which acted as the control group for one list experiment and as the treatment group for the other. Since each respondent was therefore shown only one list containing a sensitive item, this design reduces bias in the answers. In both list experiments, the sensitive item is the last item.Footnote 11 For each list experiment, the control group was shown only items (1)–(3). We selected the non-sensitive items carefully after discussions with BRAC: although items (1)–(3) in both list experiments may also seem sensitive, in the local setting they serve as non-sensitive items given that the sensitive item refers to an illegal practice.Footnote 12 Moreover, recent research shows that non-sensitive items more closely related to the sensitive one perform better because they make the sensitive item less salient (Chuang et al. 2019).

In early January 2017, BRAC researchers piloted the list experiment questions in the Karail slums in Dhaka district with 10 female adolescents. The primary objective was to verify the adequacy of the list experiment statements and to assess the appropriateness of having interviewees use stones (marbles) to indicate their responses. Stones were used to avoid numeracy-related bias in responses (as in De Cao and Lutz 2018). The survey work was conducted by a team of 50 enumerators who received an intensive week-long training at the BRAC head office, directly supervised by study team members as well as field management trainers from BRAC’s Research and Evaluation Division (RED). Given the study population (75% of adolescent respondents were female), the majority of enumerators (38 out of 50) were female. The enumerators were organised into 15 teams so that each team in a sample site had enough female enumerators available to interview female adolescents.

The list experiment questions were asked on the last page of a long questionnaire (about 40 pages). Direct questions phrased in the same way as the sensitive items in the list experiments were asked of all respondents, but at around page 20 of the questionnaire. We have no reason to believe that respondents were aware of this design or that the format influenced the list experiment results, as so many different issues were covered during the interview. Nevertheless, when we analyse the direct questions, we focus on the sample corresponding to the list experiment control group, so that each respondent answered the sensitive question only once. See also “Section 4.4” for further discussion on the validity of our list experiments.

3.3 Estimation sample

Given that the NGO intervention primarily targeted adolescent girls, we restrict our estimation sample to adolescent girls who responded to the list experiment questions in the end-line survey. This gives an estimation sample of 2020 adolescent girls. Table 1 reports descriptive statistics for this sample. Half of the sample was exposed to the ADP program.Footnote 13 Of the respondents, 42.5% had less than 9 years of schooling (i.e., had at most completed junior secondary education), while the rest had secondary or tertiary education. The average age at the end-line survey was 17.5 years. By the end-line survey, 28% of the adolescent girls were married, and 71% of these had married before age 18.

When asked directly about gender attitudes, only 2% of the adolescent girls agreed that a girl should be married off before age 18. Similarly, only 5% agreed that a wife can be hit, slapped, kicked or physically hurt by the husband under any circumstances. This is striking given the high prevalence of early marriage and violence against women in the study area. For example, 15% of respondents’ mothers reported being beaten at least once by their husband in the last 12 months, and about 46% of the girls’ mothers in the estimation sample were pregnant before age 18. Female respondents in rural Bangladesh are also subject to patriarchal social norms: 89% of the mothers of adolescent respondents reportedly practised PurdahFootnote 14 when they went out.

4 Empirical strategy and results

4.1 Empirical strategy

In a standard list experiment design, a sample of N respondents is randomly divided into two groups: control and treatment. Each respondent in the control group (Ti = 0, where i indexes individuals) receives a list of J non-sensitive yes/no items and is asked to report the total number of items he/she agrees with. Each respondent in the treatment group (Ti = 1) receives the same list extended by the sensitive item (J + 1 items). Following Imai (2011), let \( Z_{ij}^{\ast} \) denote respondent i’s truthful preference for the jth item, j = 1, …, J + 1, and let \( Z_{ij}(t) \) denote respondent i’s potential answer to the jth item under treatment status t, which equals one if the answer is affirmative and zero otherwise. The econometrician only observes \( Y_i=Y_i(T_i) \), where \( Y_i(0)=\sum_{j=1}^{J}Z_{ij}(0) \) and \( Y_i(1)=\sum_{j=1}^{J+1}Z_{ij}(1) \).
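A minimal Python sketch of this data-generating process may help fix the notation. It assumes, for illustration only, that the J non-sensitive items and the sensitive item are independent Bernoulli draws with made-up probabilities, that exactly half of the sample is treated, and that the validity assumptions (a)–(c) in the next paragraph hold; the function name `simulate_list_experiment` is hypothetical.

```python
import numpy as np

def simulate_list_experiment(n, p_items, p_sensitive, seed=0):
    """Simulate (T_i, Y_i) for one list experiment (illustrative only).

    p_items     : probabilities of agreeing with each of the J non-sensitive items
    p_sensitive : probability of truthful support for the sensitive item J + 1
    Assumes no design effects and no liars, so Y_i(1) = Y_i(0) + Z*_{i,J+1}.
    """
    rng = np.random.default_rng(seed)
    p_items = np.asarray(p_items, dtype=float)
    T = rng.permutation(np.arange(n) < n // 2).astype(int)      # exactly n // 2 treated
    Z = (rng.random((n, p_items.size)) < p_items).astype(int)   # Z_ij, j = 1..J
    Z_sens = (rng.random(n) < p_sensitive).astype(int)          # Z*_{i,J+1}
    Y0 = Z.sum(axis=1)                 # Y_i(0): count over the J control items
    Y = Y0 + T * Z_sens                # observed Y_i = Y_i(T_i)
    return T, Y

T, Y = simulate_list_experiment(2000, [0.9, 0.3, 0.8], p_sensitive=0.3)
print(Y[T == 1].mean() - Y[T == 0].mean())   # near 0.3, up to sampling noise
```

Control respondents can report at most J = 3, while treated respondents can report up to J + 1 = 4, which is exactly the asymmetry the difference-in-means estimator exploits.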

A list experiment is valid (Imai 2011; Blair and Imai 2012) if: (a) randomisation is successful, meaning that for each respondent \( \left(\{Z_{ij}(0),Z_{ij}(1)\}_{j=1}^{J},Z_{i,J+1}(1)\right)\perp T_i \); (b) there are no design effects, meaning that the inclusion of the sensitive item does not change the sum of affirmative answers to the non-sensitive items (\( \sum_{j=1}^{J}Z_{ij}(0)=\sum_{j=1}^{J}Z_{ij}(1) \)); and (c) there are no liars, meaning that respondents answer the sensitive item truthfully (\( Z_{i,J+1}(1)=Z_{i,J+1}^{\ast} \)). Violations of assumption (c) include ceiling and floor effects. A ceiling effect occurs when a respondent in the treatment group answers Yi = J even though his/her truthful answer would be Yi = J + 1. A floor effect occurs when a respondent in the treatment group answers Yi = 0 even though his/her truthful answer would be Yi = 1.

If the list experiment satisfies (a), (b) and (c), support for the sensitive item can be estimated with a simple difference-in-means estimator:

\( \hat{\tau}=\frac{1}{N_1}\sum_{i=1}^{N}T_iY_i-\frac{1}{N_0}\sum_{i=1}^{N}(1-T_i)Y_i \)   (1)

where \( N_1=\sum_{i=1}^{N}T_i \) is the treatment group size and \( N_0=N-N_1 \) the control group size. To investigate how preferences over the sensitive item vary with respondents’ characteristics, a multivariate regression model can be used.Footnote 15 In particular, the following equation can be estimated:

\( Y_i=X_i^{\top}\gamma+T_iX_i^{\top}\delta+\varepsilon_i \)   (2)

where Xi are the respondent’s characteristics (including a constant) and (γ, δ) are the parameters to estimate, so that \( X_i^{\top}\delta \) is the proportion supporting the sensitive item among respondents with characteristics Xi. We can estimate (γ, δ) using ordinary least squares (OLS).
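The sketch below estimates the linear model in Eq. (2) by OLS with NumPy, stacking the covariates and their interactions with the treatment indicator. It assumes that X includes a constant column and uses HC1 heteroskedasticity-robust standard errors; the paper does not state its software or small-sample correction, and the function name `list_experiment_ols` and the simulated data are hypothetical.

```python
import numpy as np

def list_experiment_ols(Y, T, X):
    """OLS for Y_i = X_i'gamma + T_i X_i'delta + e_i (X must include a constant).

    Returns (gamma, delta, se_delta), with HC1 robust standard errors for delta.
    """
    n, k = X.shape
    D = np.hstack([X, T[:, None] * X])             # regressors [X, T*X]
    beta, *_ = np.linalg.lstsq(D, Y, rcond=None)
    resid = Y - D @ beta
    bread = np.linalg.inv(D.T @ D)
    meat = (D * resid[:, None] ** 2).T @ D         # sum_i e_i^2 d_i d_i'
    V = bread @ meat @ bread * n / (n - 2 * k)     # HC1 degrees-of-freedom scaling
    return beta[:k], beta[k:], np.sqrt(np.diag(V))[k:]

# Hypothetical data: support for the sensitive item is 0.20 when x = 0
# and 0.35 when x = 1.
rng = np.random.default_rng(1)
n = 40_000
x = (rng.random(n) < 0.4).astype(float)
T = (rng.random(n) < 0.5).astype(float)
controls = (rng.random((n, 3)) < [0.9, 0.3, 0.8]).sum(axis=1)
sensitive = rng.random(n) < 0.20 + 0.15 * x
Y = controls + T * sensitive
X = np.column_stack([np.ones(n), x])
gamma, delta, se_delta = list_experiment_ols(Y, T, X)
print(delta, se_delta)   # delta[0] near 0.20, delta[1] near 0.15
```

With a constant as the only covariate, the coefficient on Ti reproduces the difference-in-means estimate exactly, which is why the regression-only-on-Ti columns coincide with Eq. (1).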

4.2 Estimation results

In Table 2, we present the distribution of responses to our two list experiments (LE). The proportions of girls in favour of domestic violence (DV) and child marriage (CM), computed using the difference-in-means estimator, are 30% (SE = 0.028) and 24% (SE = 0.026), respectively.Footnote 16
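For reference, a minimal Python version of the difference-in-means estimator in Eq. (1), together with the usual unequal-variance standard error, is sketched below. The paper does not state exactly how its standard errors were computed, so this formula is an assumption, and the toy counts are hypothetical.

```python
import numpy as np

def diff_in_means(Y, T):
    """Difference-in-means estimate of support for the sensitive item and its
    unequal-variance (Neyman) standard error."""
    Y, T = np.asarray(Y, dtype=float), np.asarray(T, dtype=bool)
    y1, y0 = Y[T], Y[~T]
    tau = y1.mean() - y0.mean()
    se = np.sqrt(y1.var(ddof=1) / y1.size + y0.var(ddof=1) / y0.size)
    return tau, se

# Toy counts: four control answers (3-item list), four treated answers (4-item list).
tau, se = diff_in_means([2, 3, 1, 2, 3, 3, 2, 4], [0, 0, 0, 0, 1, 1, 1, 1])
print(tau, se)   # 1.0 and about 0.577
```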

Table 3 reports the results of the linear regression model in Eq. (2). The first four columns use the list experiment outcome for domestic violence (LE DV) as the dependent variable, while the remaining four use the list experiment outcome for child marriage (LE CM). Columns (1) and (5) report regressions of the list experiment outcomes on the list experiment indicator (Ti) only; these correspond to the difference-in-means estimates from Eq. (1). The subsequent columns add the most important individual characteristics. The coefficients of interest are those on the interactions with the list experiment indicator (δ). Column (2) shows the effect of ADP exposure on LE DV; surprisingly, the effect is positive, indicating higher support for domestic violence among exposed girls. Column (4) also controls for age, primary education and marital status, and shows that ADP-exposed adolescent girls are 11.4 percentage points (p value = 0.005) more likely to be in favour of domestic violence than girls not exposed to the ADP intervention. For child marriage, by contrast, the list experiment results reveal an interesting effect of education: column (8) shows that less educated girls, who have at most completed primary education, are 16.2 percentage points (p value = 0.012) more likely to support child marriage than more educated girls.

We report robust standard errors and use them when interpreting the results above. Because there are only 27 NGO branches, conventional cluster-robust inference at the branch level may be unreliable, so we also compute and report p values from the wild cluster bootstrap, clustering at the NGO branch level. Our results remain robust to clustering standard errors at the NGO branch level.
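The Python sketch below illustrates one common variant of this procedure, the restricted wild cluster bootstrap-t with Rademacher weights, for a single OLS coefficient. The paper does not report which variant or software it used, so this is an illustration under that assumption; the function name `wild_cluster_bootstrap_p`, the number of replications and the simulated data are hypothetical.

```python
import numpy as np

def wild_cluster_bootstrap_p(y, X, cluster, j, B=999, seed=0):
    """Restricted wild cluster bootstrap-t p value for H0: beta_j = 0 (a sketch).

    X must include a constant; cluster holds one cluster id per observation.
    Rademacher weights (+1/-1) are drawn once per cluster in each replication.
    """
    rng = np.random.default_rng(seed)
    _, g = np.unique(cluster, return_inverse=True)   # cluster index 0..G-1
    G = g.max() + 1
    bread = np.linalg.inv(X.T @ X)

    def cluster_t(y_):
        beta = bread @ (X.T @ y_)
        e = y_ - X @ beta
        scores = np.zeros((G, X.shape[1]))
        np.add.at(scores, g, X * e[:, None])          # sum of x_i * e_i by cluster
        V = bread @ scores.T @ scores @ bread         # CR0 cluster-robust variance
        return beta[j] / np.sqrt(V[j, j])

    t_obs = cluster_t(y)
    Xr = np.delete(X, j, axis=1)                      # restricted model imposes beta_j = 0
    br, *_ = np.linalg.lstsq(Xr, y, rcond=None)
    fitted_r, resid_r = Xr @ br, y - Xr @ br
    t_boot = np.empty(B)
    for b in range(B):
        w = rng.choice([-1.0, 1.0], size=G)           # one weight per cluster
        t_boot[b] = cluster_t(fitted_r + w[g] * resid_r)
    return float(np.mean(np.abs(t_boot) >= np.abs(t_obs)))

# Hypothetical data: 27 clusters of 20 observations with a cluster-level shock.
rng = np.random.default_rng(2)
cluster = np.repeat(np.arange(27), 20)
x = rng.normal(size=cluster.size)
y = 0.5 * x + rng.normal(size=27)[cluster] + rng.normal(size=cluster.size)
X = np.column_stack([np.ones(cluster.size), x])
p = wild_cluster_bootstrap_p(y, X, cluster, j=1)
print(p)   # a strong effect, so p should be close to zero
```

Imposing the null when generating bootstrap samples is the variant usually recommended when clusters are few, which is the situation with 27 branches.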

4.3 Social desirability bias

In this section, we examine social desirability bias by comparing attitudes towards domestic violence and child marriage measured via a list experiment with the same attitudes measured via a standard direct survey question (DQ). Table 1 reports that only 5% and 2% of respondents support domestic violence and child marriage, respectively, when asked directly. When considering the direct question on domestic violence (DQ DV), we restrict our sample to the control group of the list experiment for domestic violence. Similarly, when considering the direct question on child marriage (DQ CM), we restrict our sample to the control group of the list experiment for child marriage. As a result, when we compare the direct question response with the list experiment, each respondent has answered the sensitive question only once. In Table 4, we estimate linear probability models in which the dependent variable is an indicator taking the value one if a girl supports domestic violence (columns (1)–(4)) or child marriage (columns (5)–(8)).Footnote 17 Explanatory variables include a girl’s main characteristics (ADP exposure, age, marital status and education). While ADP exposure has no effect on adolescent girls’ directly reported attitudes, having at most primary education is positively associated with the probability of supporting domestic violence, and age is negatively associated with the probability of supporting child marriage. Less educated girls (with at most primary education) are about 3 percentage points more likely to support domestic violence, while being a year older decreases the probability of supporting child marriage by 0.5 percentage points.Footnote 18

Next, we test whether there are statistically significant differences between the estimates obtained using list experiments and those obtained using direct questions. This difference tells us by how much true support for domestic violence or child marriage is under-reported. The underlying assumption is that the list experiment measures true support for domestic violence or child marriage. A second assumption is that the measurement error in the direct questions and list experiments has the same sign. Formally, let \( Z_{i,J+1}(0) \) denote respondent i’s potential answer to the sensitive item when asked directly (Blair and Imai 2012). Then, social desirability bias for respondents with characteristics x is defined as:

\( S(x)=\Pr\left(Z_{i,J+1}^{\ast}=1\mid X_i=x\right)-\Pr\left(Z_{i,J+1}(0)=1\mid X_i=x\right) \)   (3)

The first term can be estimated as in Eq. (2), while the second can be estimated with a linear probability model regressing the observed value of \( Z_{i,J+1}(0) \) on Xi. Because the LE only identifies the total number of items the respondent agrees with, and not which ones (i.e., \( Z_{i,J+1}^{\ast} \) is not identified for individual respondents), we cannot study social desirability bias at the individual level, only at the aggregate level.
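Under the linear specification, both terms can be computed as fitted values at a chosen covariate profile x. The sketch below does this with NumPy: the list experiment term is x′δ from the interacted regression on the full sample, and the direct-question term is x′β from a linear probability model fitted on the list experiment control group only, mirroring the sample restriction described in this section. The function name and the simulated misreporting rates are hypothetical, and standard errors (for example, from a bootstrap) are omitted for brevity.

```python
import numpy as np

def social_desirability_bias(Y, T, dq, X, x0):
    """Estimate S(x0) = Pr(Z*_{i,J+1} = 1 | x0) - Pr(Z_{i,J+1}(0) = 1 | x0).

    Y: list experiment counts, T: list experiment treatment indicator,
    dq: direct-question answers (0/1), X: covariates incl. a constant,
    x0: covariate profile at which to evaluate the bias.
    """
    k = X.shape[1]
    D = np.hstack([X, T[:, None] * X])
    coef, *_ = np.linalg.lstsq(D, Y, rcond=None)
    delta = coef[k:]                                   # list experiment term
    ctrl = T == 0                                      # direct question: control group only
    beta_dq, *_ = np.linalg.lstsq(X[ctrl], dq[ctrl], rcond=None)
    return x0 @ delta - x0 @ beta_dq

# Hypothetical data: true support 0.25 (x = 0) or 0.35 (x = 1); supporters admit
# it when asked directly with probability 0.2 (x = 0) or 0.1 (x = 1).
rng = np.random.default_rng(4)
n = 40_000
x = (rng.random(n) < 0.5).astype(float)
T = (rng.random(n) < 0.5).astype(float)
support = rng.random(n) < 0.25 + 0.10 * x
dq = (support & (rng.random(n) < np.where(x == 1, 0.1, 0.2))).astype(float)
Y = (rng.random((n, 3)) < [0.9, 0.3, 0.8]).sum(axis=1) + T * support
X = np.column_stack([np.ones(n), x])
s0 = social_desirability_bias(Y, T, dq, X, np.array([1.0, 0.0]))
s1 = social_desirability_bias(Y, T, dq, X, np.array([1.0, 1.0]))
print(s0, s1, s1 - s0)   # roughly 0.20, 0.315 and their difference
```

Differences such as `s1 - s0` correspond to the between-group comparisons of under-reporting discussed next.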

Table 5 reports the differences in the estimated proportion of girls answering the sensitive item in the affirmative under the list experiment and under the direct question, by socio-demographic characteristics.Footnote 19 The direct question estimates correspond to the list experiment control group sub-samples. The first row of Table 5 shows the unconditional results, which reveal a large difference of 24 percentage points in support for domestic violence and 22 percentage points in support for child marriage. The following rows of Table 5 report differences between list experiment and direct question estimates for different groups, holding all other characteristics constant. All differences are highly statistically significant and lie between 15 and 30 percentage points. When questioned indirectly, girls appear much more in favour of both domestic violence and child marriage than when asked directly. Which girls under-report their support the most? Taking differences between groups (e.g., married versus non-married, and primary educated versus secondary/tertiary educated) in columns (5) and (6), we find two interesting results. First, girls exposed to the ADP intervention under-report their support for domestic violence by 12 percentage points more than girls not exposed to the intervention (p value = 0.044). Second, less educated girls (i.e., primary schooling or below) under-report their support for child marriage by 15 percentage points more than more educated girls (p value = 0.008).

We also estimate the proportions for the DQ using probit models. Table 13 shows the social desirability bias when the DQ predictions and their standard errors (columns (3)–(4)) come from the probit models used in Table 12. Reassuringly, the results are very similar to Table 5.

4.4 Validity of the list experiments

In “Section 4.1”, we discussed the conditions for list experiments to be valid. Here, we assess whether each of them holds for the list experiments we implemented. Balance tests for the randomisation of the list experiments are reported in Table 6. Column (5) reports the p value of a t test comparing the mean of each main variable between the control and treatment groups. None of the differences are statistically significant, consistent with successful randomisation of our list experiments.
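A balance check of this kind can be sketched in a few lines of Python. The version below computes Welch t statistics and, as a simplifying assumption, normal-approximation p values, which are reasonable with roughly 1000 respondents per arm (`scipy.stats.ttest_ind(a, b, equal_var=False)` would give the t-distribution version). The paper does not state whether it used the equal- or unequal-variance test, and the covariate names and simulated data are hypothetical.

```python
import math
import numpy as np

def balance_table(covariates, T):
    """For each covariate, return (treated mean, control mean, two-sided p value)
    from a Welch t statistic with a normal approximation."""
    T = np.asarray(T, dtype=bool)
    table = {}
    for name, values in covariates.items():
        v = np.asarray(values, dtype=float)
        a, b = v[T], v[~T]
        se = math.sqrt(a.var(ddof=1) / a.size + b.var(ddof=1) / b.size)
        t = (a.mean() - b.mean()) / se
        table[name] = (a.mean(), b.mean(), math.erfc(abs(t) / math.sqrt(2)))
    return table

rng = np.random.default_rng(5)
n = 2020
T = rng.random(n) < 0.5                              # list experiment assignment
covs = {
    "age": rng.normal(17.5, 1.5, n),                 # hypothetical covariates
    "adp": (rng.random(n) < 0.5).astype(float),
    "imbalanced": T + rng.normal(0, 1, n),           # deliberately unbalanced
}
for name, (m1, m0, p) in balance_table(covs, T).items():
    print(f"{name:>10}: {m1:.3f} vs {m0:.3f}, p = {p:.3f}")
```

The deliberately unbalanced variable shows what a failed check looks like; randomly assigned covariates should produce p values spread over the unit interval.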

To test for violations of the no-design-effects assumption, we use the statistical test developed by Blair and Imai (2012), whose null hypothesis is that there are no design effects; we fail to reject this null.Footnote 20 This suggests that the inclusion of the sensitive item did not change the responses to the non-sensitive items.
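The idea behind the Blair and Imai (2012) test can be illustrated with a simplified Python sketch. Under no design effects (and no liars), the joint proportions π(y, z) of respondents with control-item count y and sensitive-item answer z are identified from the two empirical distributions of Yi and must all be non-negative; a significantly negative estimate signals a design effect. This sketch uses normal-approximation one-sided tests and a single Bonferroni correction; it is not a reimplementation of the authors' software (such as `ict.test` in the R package `list`), and the function name and simulated data are hypothetical.

```python
import math
import numpy as np

def design_effect_check(Y, T, J):
    """Simplified check in the spirit of Blair and Imai (2012).

    Under no design effects and no liars, for y = 0..J:
        pi(y, 1) = Pr(Y <= y | T = 0) - Pr(Y <= y | T = 1)      >= 0
        pi(y, 0) = Pr(Y <= y | T = 1) - Pr(Y <= y - 1 | T = 0)  >= 0
    Returns the estimated pi values and a Bonferroni-adjusted p value for the
    most negative one (one-sided normal-approximation tests of pi < 0).
    """
    Y, T = np.asarray(Y), np.asarray(T, dtype=bool)
    y1, y0 = Y[T], Y[~T]
    n1, n0 = y1.size, y0.size
    F1 = lambda y: np.mean(y1 <= y)          # empirical CDF, treatment group
    F0 = lambda y: np.mean(y0 <= y)          # empirical CDF, control group
    estimates, pvals = {}, []
    for y in range(J + 1):
        # (first CDF, its sample size, second CDF, its sample size) for each z
        terms = {1: (F0(y), n0, F1(y), n1), 0: (F1(y), n1, F0(y - 1), n0)}
        for z, (a, na, b, nb) in terms.items():
            pi = a - b
            se = math.sqrt(a * (1 - a) / na + b * (1 - b) / nb)
            estimates[(y, z)] = pi
            if se > 0:
                pvals.append(0.5 * math.erfc(-pi / (se * math.sqrt(2))))  # Phi(pi / se)
            else:
                pvals.append(0.0 if pi < 0 else 1.0)
    return estimates, min(1.0, len(pvals) * min(pvals))

# Hypothetical data with a built-in design effect: half of the treated
# respondents answer 0 regardless of their true count.
rng = np.random.default_rng(6)
n = 4000
T = rng.random(n) < 0.5
Y0 = rng.binomial(3, 0.6, size=n)                        # control-item count
Y = np.where(T, (Y0 + (rng.random(n) < 0.3)) * (rng.random(n) < 0.5), Y0)
estimates, p = design_effect_check(Y, T, J=3)
print(min(estimates.values()), p)   # a clearly negative pi and p near zero
```

The estimated π(y, z) always sum to one, so they can be read as an estimated joint distribution; only their signs carry the test.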

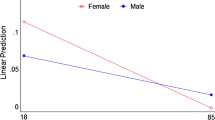

The third requirement for a valid list experiment is the absence of ceiling or floor effects. This no-liars assumption cannot be tested statistically with the linear model used in this paper (Blair and Imai 2012), but we can analyse the distribution of responses to our list experiments (see Table 2 and Fig. 1). Responses to the list experiments are well distributed and concentrated mainly around 2 and 3. None of the respondents answered zero to either list experiment, so floor effects are expected to play a minor role. Table 2 shows that 4% and 5% of the respondents had no problem revealing their support for domestic violence and child marriage, respectively (by answering “4”), but quite a few girls replied “3” to both list experiments, particularly the one on child marriage. In Table 7, we run different regressions to analyse floor and ceiling effects. We create an outcome floor LE DV (floor LE CM) that takes the value one if LE DV (LE CM) equals one and zero otherwise, and an outcome ceiling LE DV (ceiling LE CM) that takes the value one if LE DV (LE CM) equals three and zero otherwise. We regress these outcomes on the main respondent characteristics in the list experiment control group. This shows which respondents are most likely to be at the floor or the ceiling, and who may thus be over- or under-reporting their support for domestic violence or child marriage by avoiding the answers “0” or “4”. We find no statistically significant effect of any of these characteristics on these outcomes, except for primary education on ceiling effects in the list experiment on child marriage, which could indicate a ceiling effect among less educated girls. This limitation should be borne in mind; however, Blair and Imai (2012) show that ceiling (or floor) effects lead to an underestimate of true support for the sensitive item, which would bias our estimates of support downwards rather than upwards.
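The direction of this bias can be seen in a small Python simulation. It assumes, hypothetically, that every treated respondent who agrees with all J control items and the sensitive item answers J instead of J + 1 to conceal her support. Because this only lowers treated answers, the difference-in-means estimate falls below the truthful benchmark. All parameters are illustrative.

```python
import numpy as np

# Hypothetical simulation of a ceiling effect; parameters are illustrative.
rng = np.random.default_rng(3)
n, J, p_true = 40_000, 3, 0.25
T = rng.random(n) < 0.5
controls = (rng.random((n, J)) < [0.9, 0.7, 0.8]).sum(axis=1)
sensitive = rng.random(n) < p_true
Y_truthful = controls + (T & sensitive)
# Treated respondents at J + 1 report J instead (only possible when T is True).
Y_ceiling = np.where(Y_truthful == J + 1, J, Y_truthful)

def diff_in_means(Y):
    return Y[T].mean() - Y[~T].mean()

print(diff_in_means(Y_truthful), diff_in_means(Y_ceiling))   # roughly 0.25 vs 0.12
```

The size of the downward bias depends on how many respondents agree with all control items, which is why control lists where many respondents answer “3” raise concern.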

Given that our list experiments show heterogeneous effects by ADP exposure for domestic violence, and by education for child marriage, we examine the distribution of responses to the list experiments by these characteristics in Figs. 2 and 3. This is not a formal test; rather, these figures are meant to help us understand whether these girls (less educated and ADP-exposed) understood the mechanism behind the list experiment and manipulated their answers. For comparison, we also report the distribution of the list experiments for highly educated girls and girls not exposed to ADP. Figure 2 shows that responses to the list experiment on domestic violence are well distributed in all groups, with only a small number of cases at the extremes. In Fig. 3, the distribution of responses to the list experiment on child marriage shows that some girls gave the response 3, which might indicate the presence of a ceiling effect. In this case, we might underestimate the true support for child marriage. Tests for design effects run on the subsamples of less educated, highly educated, ADP-exposed and non-exposed adolescent girls always fail to reject the null hypothesis of no design effects (results available upon request).
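The subgroup distributions in Figs. 2 and 3 amount to tabulating the share of each reported count within each group; a concentration of mass at the top of the range is what flags a possible ceiling effect. A minimal Python sketch, using hypothetical field names (`adp`, `le_dv`) and made-up data:

```python
from collections import Counter


def response_distribution(rows, group_key, count_key):
    """Return {group: {count: share}} for the reported list experiment counts."""
    by_group = {}
    for row in rows:
        by_group.setdefault(row[group_key], Counter())[row[count_key]] += 1
    return {
        group: {k: n / sum(counts.values()) for k, n in sorted(counts.items())}
        for group, counts in by_group.items()
    }


# Made-up responses, for illustration only.
rows = [
    {"adp": 1, "le_dv": 2}, {"adp": 1, "le_dv": 3},
    {"adp": 1, "le_dv": 2}, {"adp": 1, "le_dv": 1},
    {"adp": 0, "le_dv": 2}, {"adp": 0, "le_dv": 3},
]
dist = response_distribution(rows, "adp", "le_dv")
print(dist[1])  # {1: 0.25, 2: 0.5, 3: 0.25}
```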

In a recent paper, Chuang et al. (2019) critically examine the usefulness of indirect survey methods such as list experiments and randomized response techniques. They implement a large number of double list experiments within a single survey of respondents in Côte d’Ivoire, in which groups A and B each act as the treatment group for one list and the control group for the other, for the same sensitive sexual or reproductive health behaviour; in this design, the non-sensitive items for groups A and B must differ by construction. Double list experiments generate two difference-in-means estimators that can be compared with each other; until then, the two estimators had been used only to reduce variance relative to a single list experiment (Droitcour et al. 1991; Glynn 2013). For most sensitive behaviours, Chuang et al. (2019) find statistically significant differences between the two difference-in-means estimates obtained from each list experiment, and they suggest that such comparisons (which can only be carried out with double list experiments) be used to check the internal consistency of the list experiment technique.
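In a double list experiment, each group is treated on one list and serves as the control on the other, so two difference-in-means estimates are available. The Python sketch below computes both, their average (the variance-reducing combination) and their difference (the consistency check proposed by Chuang et al. 2019). The data are made up, and a proper test of the difference would need standard errors that account for the same respondents contributing to both estimates, which the sketch omits.

```python
from statistics import mean


def double_list_estimates(a_list1, a_list2, b_list1, b_list2):
    """Group A sees the sensitive item in list 1; group B sees it in list 2."""
    est1 = mean(a_list1) - mean(b_list1)  # list 1: A treated, B control
    est2 = mean(b_list2) - mean(a_list2)  # list 2: B treated, A control
    return {
        "est1": est1,
        "est2": est2,
        "combined": (est1 + est2) / 2,
        "difference": est1 - est2,
    }


# Made-up item counts, for illustration only.
res = double_list_estimates(
    a_list1=[2, 3, 3, 2], a_list2=[2, 2, 2, 2],
    b_list1=[2, 2, 2, 2], b_list2=[3, 2, 2, 2],
)
print(res)  # {'est1': 0.5, 'est2': 0.25, 'combined': 0.375, 'difference': 0.25}
```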

Since we did not implement double list experiments, we cannot carry out the tests proposed by Chuang et al. (2019). We preferred to ask our respondents direct questions on attitudes and to compare their answers with those to the indirect list experiment questions. We believe this method is better suited to our objective of examining social desirability bias, since in a double list experiment every respondent receives the sensitive item in one of the two lists, so adding a direct question would mean asking everyone about the sensitive item twice, both directly and via the list experiment.Footnote 21 An important feature of the work of Chuang et al. (2019) is the variation in the type of non-sensitive items, ranging from innocuous items to items related to the sensitive item. The authors find that non-sensitive items more closely related to the sensitive one perform better. None of the non-sensitive items in our list experiments is innocuous; this makes the sensitive item less salient and supports the validity of our design.

Tables 13 and 14 replicate the analyses in Tables 3 and 4, respectively, with additional controls. We include controls for the following measures of maternal empowerment: whether the respondent’s mother has been beaten by her husband, was married early, became pregnant early, and practises purdah. None of these additional variables is statistically significant, except for the mother practising purdah, which increases the likelihood of supporting child marriage when asked directly. Nonetheless, adding these variables does not change our main findings.
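The list experiment results with controls come from regressions. A minimal sketch of the linear estimator of Imai (2011), assuming a specification of this kind, regresses the reported count on covariates X and on X interacted with the treatment indicator, Y = Xγ + T·Xδ + ε, so that Xδ estimates the prevalence of the sensitive item for each covariate profile. The simulation below uses NumPy with made-up parameters (a hypothetical binary education dummy and three non-sensitive items); it is not the paper's data or exact specification.

```python
import numpy as np


def linear_list_regression(y, treat, X):
    """OLS of y on [X, treat * X]; returns (gamma, delta).

    X should include a column of ones. X @ delta estimates the share
    agreeing with the sensitive item for each covariate profile.
    """
    design = np.column_stack([X, treat[:, None] * X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    k = X.shape[1]
    return coef[:k], coef[k:]


# Simulated data with made-up parameters, for illustration only.
rng = np.random.default_rng(0)
n = 20_000
educ = rng.integers(0, 2, size=n)              # hypothetical education dummy
treat = rng.integers(0, 2, size=n)             # random assignment to the longer list
control_items = rng.binomial(3, 0.7, size=n)   # three non-sensitive items
prevalence = np.where(educ == 1, 0.15, 0.35)   # assumed true support
sensitive = (rng.random(n) < prevalence).astype(int)
y = control_items + treat * sensitive

X = np.column_stack([np.ones(n), educ])
gamma, delta = linear_list_regression(y, treat, X)
print(np.round(delta, 2))  # estimated baseline prevalence and education shift
```

Adding further controls, such as the maternal empowerment measures, amounts to appending columns to X; each added column enters both the baseline and the interaction part of the design.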

5 Discussion

Our findings show that measurement error is important when examining attitudes towards sensitive issues such as domestic violence or child marriage, and under-reporting can be substantial. Only 5.4% of adolescent girls support domestic violence when questioned directly, but 29.7% do so when questioned indirectly via a list experiment. We find similar results for child marriage: 2.1% of the respondents think a girl should be married off before age 18 when asked a direct question, but support increases to 23.9% when asked via a list experiment.
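The size of the under-reporting implied by these figures can be made explicit as the gap between the list experiment estimate and the share answering the direct question affirmatively (pp denotes percentage points). This is a simple point comparison that ignores sampling error in both estimates:

```latex
\begin{aligned}
\text{DV: } 29.7\% - 5.4\% &= 24.3 \text{ pp}, & 29.7 / 5.4 &= 5.5,\\
\text{CM: } 23.9\% - 2.1\% &= 21.8 \text{ pp}, & 23.9 / 2.1 &\approx 11.4.
\end{aligned}
```

Measured this way, estimated support is about five times the direct-question share for domestic violence and about eleven times for child marriage.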

Interestingly, we find that less educated girls under-report their support for child marriage more than girls with higher education. To the best of our knowledge, this is the first study to implement a list experiment to examine attitudes towards child marriage; therefore, we cannot compare this result with existing studies. There are no heterogeneous effects by education for attitudes towards domestic violence. In contrast, Aguero and Frisancho (2018) use a list experiment to study experiences of domestic violence in urban Lima (Peru) and find high under-reporting among the most educated respondents. This difference could be related to the different contexts or to the fact that we measure attitudes, while Aguero and Frisancho focus on behaviours. Our survey asks girls if “a wife can be hit, slapped, kicked or physically hurt by the husband under any circumstances”. In our context, girls with at most primary education might have more to lose if they do not support domestic violence, while educated girls might have better outside options (e.g., better jobs) and depend less on their husband. De Cao and Lutz (2018) examine attitudes towards female genital cutting in Ethiopia and find, as we do, that uneducated women are less willing to share their support for the practice.

Finally, we find suggestive evidence that the social desirability bias for domestic violence is larger among adolescent girls exposed to ADP. Because ADP placement was randomised, we can interpret this effect as causal, even though it is only marginally statistically significant.Footnote 22 The intervention aims to change traditional attitudes through non-formal training and dissemination of information regarding sexual health, gender rights and legal provisions for violence against women, including child marriage. It is certainly possible that respondents in ADP-exposed areas conform to the expectations of those delivering the program. The ADP campaign aims at changing local customs, and this may increase social pressure around gender attitudes, giving respondents a stronger incentive to give a biased answer. We provide a more detailed comparison of the ADP program with other similar programs in developing countries in Appendix 2.

6 Conclusion

Traditional “gender attitudes”, or beliefs regarding the appropriateness and/or acceptability of gender-specific roles and behaviour in society, are considered important drivers of women’s well-being. While measures of gender attitudes are now included in many representative international and national surveys, they suffer from potential measurement error, limiting their usefulness in empirical research. Using a unique data set from Bangladesh, we confirm that subjective responses to sensitive direct questions underestimate support for regressive social practices such as wife beating and child marriage. We find that girls with higher education are more supportive of egalitarian gender norms pertaining to child marriage. While we do not claim this to be a causal relationship, our finding supports expanding access to education for young girls in developing country settings. We also find that exposure to a program that disseminated knowledge on gender empowerment led girls to hide their true support for domestic violence. This indicates that (at least in the short term) programs like the ADP might not have the desired effects on gender attitudes. We also find that different individual characteristics are associated with under-reporting of different aspects of gender attitudes. For instance, education matters for under-reporting of attitudes pertaining to child marriage, while ADP exposure matters for under-reporting of attitudes regarding domestic violence. This indicates that there are no simple prescriptions or general rules that apply across all aspects of gender attitudes. Our research suggests that survey methods matter in eliciting attitudes towards gendered violence and child marriage. The evidence presented in this paper also highlights the difficulty of permanently shifting gender attitudes exclusively through social empowerment programs, even in a setting where girls’ schooling and economic opportunities have improved considerably in recent decades.

Our results confirm the relevance of potential bias in responses to standard direct questions when the outcome of interest is sensitive. We suggest that practitioners measure each sensitive outcome using different survey methodologies to test for under- or over-reporting. We believe this is particularly important in policy impact evaluations, where gathering complementary evidence about the effectiveness of a program or intervention is crucial when the attitudes or behaviours of interest concern sensitive topics.

Notes

World Bank Indicators, https://data.worldbank.org/indicator/SL.TLF.CACT.FE.ZS?view=chart.

These estimates are based on data from the 2014 Demographic and Health Survey (DHS), https://dhsprogram.com/topics/gender/index.cfm.

These estimates are based on data from the 2007 Demographic and Health Survey which included the Domestic Violence module, https://dhsprogram.com/topics/gender/index.cfm.

A related literature explores the formation of attitudes regarding gender equality in developing country contexts. Beaman et al. (2009) find that prior exposure to female leaders increased the chances of women’s electoral success in India. They find that changes in voters’ gender attitudes (measured using Implicit Association Tests) arising from exposure to female leaders are an important channel through which this change happens. Jensen and Oster (2009) use panel data to find that the introduction of cable television in rural India reduced the reported acceptability of domestic violence and son preference. They also find accompanying increases in female autonomy, decreases in fertility and increased school enrolment of young children. Dhar et al. (2016) examine the role of intergenerational transmission in the formation of gender attitudes in India.

In a randomised response technique, respondents are asked to use a randomisation device such as a die or coin whose outcome is unknown to the enumerator (Warner 1965). In an endorsement experiment, randomly selected survey respondents are asked for their support of policies which have been endorsed by a socially sensitive actor, whilst other survey respondents are asked for their support for the same policies without the endorsement. If endorsement increases support for the policies, then this is taken as evidence of support for the socially sensitive actor (Bullock et al. 2011).

These were selected based on national poverty ranking.

The adolescent sample size was considered sufficient with 80% power and 95% confidence level for a 20% effect size (Khatoon et al. 2018).
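The note does not spell out the power calculation. Purely as an illustration, if the 20% effect size is read as a standardized (Cohen's d) effect of 0.2 in a two-sided comparison of two equal-sized groups, the textbook formula n = 2 (z_(1-alpha/2) + z_(1-beta))^2 / d^2 gives the per-arm sample size computed below. This interpretation is an assumption, and the sketch ignores the clustering induced by village-level randomisation, which would raise the required sample size.

```python
from math import ceil
from statistics import NormalDist


def n_per_arm(effect_size, alpha=0.05, power=0.80):
    """Per-arm sample size for a two-sided, two-sample test of means with a
    standardized effect size d, equal arms and no clustering (assumptions)."""
    z = NormalDist()  # standard normal
    z_alpha = z.inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = z.inv_cdf(power)           # about 0.84 for 80% power
    return ceil(2 * (z_alpha + z_beta) ** 2 / effect_size ** 2)


print(n_per_arm(0.20))  # 393
```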

Given random program placement at the village level, all adolescent girls in the program area had an equal probability of participating in the ADP intervention. As such, a comparison of mean outcomes across ADP program and control villages yields an intention-to-treat (ITT) estimate of the program effect.
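One simple way to compute the ITT comparison described in this note, respecting the village-level assignment, is to average outcomes within villages first and then compare program and control villages. This is a hedged sketch with a hypothetical record layout and made-up data. It weights villages equally, whereas an individual-level regression with village-clustered standard errors would weight respondents equally; the note does not say which approach the paper uses.

```python
from collections import defaultdict
from statistics import mean


def itt_village_means(records):
    """records: (village_id, adp_village, outcome) tuples.

    Returns the mean of village-level means in ADP villages minus
    the same quantity in control villages.
    """
    outcomes = defaultdict(list)
    arm = {}
    for village, adp, y in records:
        outcomes[village].append(y)
        arm[village] = adp
    village_mean = {v: mean(ys) for v, ys in outcomes.items()}
    treated = [m for v, m in village_mean.items() if arm[v] == 1]
    control = [m for v, m in village_mean.items() if arm[v] == 0]
    return mean(treated) - mean(control)


# Made-up records, for illustration only.
records = [
    ("v1", 1, 1), ("v1", 1, 0), ("v2", 1, 1),
    ("v3", 0, 0), ("v3", 0, 0), ("v4", 0, 1), ("v4", 0, 0),
]
print(itt_village_means(records))  # 0.5
```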

The instructions given to the respondent before the list experiment module are as follows: “Now I will read out a number of statements to you and request you to anonymously give me your answer indicating how many statements you agree with. I’ll give you 4 stones which I’ll request you to keep in your right hand. Both of your hands will have to be kept behind you so that they are not visible to me. If you agree with the statement I read out, transfer one stone from your right to your left hand. Please do not show this to me or tell me verbally your answer or whether you transferred any stone from right to left hand. If you do not agree with a sentence, do not transfer any stone from your right hand. At the end of this exercise, tell me the total number of stones in your left hand.”
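With the stone procedure, the enumerator only ever records the total number of statements the respondent agrees with. The Python sketch below enumerates every agreement pattern for a list whose last statement is the sensitive one (assuming four statements, matching the four stones) and reports which totals would nevertheless reveal the sensitive answer. Only the extreme totals do, which is why ceiling and floor effects matter.

```python
from itertools import product


def revealing_totals(n_items):
    """For a list whose last statement is the sensitive one, return the totals
    (stones in the left hand) that pin down the sensitive answer exactly."""
    answers_by_total = {}
    for pattern in product((0, 1), repeat=n_items):
        total = sum(pattern)  # one stone moved per statement agreed with
        answers_by_total.setdefault(total, set()).add(pattern[-1])
    return {t: a.pop() for t, a in answers_by_total.items() if len(a) == 1}


print(revealing_totals(4))  # {0: 0, 4: 1}
```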

List experiments should not be too short, in order to avoid ceiling and floor effects, and usually include a 3-item or 4-item list (Kuklinski et al. 1997). We did not have enough power to randomize the order of the items, but it is common practice to place the sensitive item at the very end.

There is a variety of punishments against perpetrators under the following relevant laws: the Domestic Violence Prevention and Protection Act of 2010; the Prevention of Oppression against Women and Children Act of 2000; the Child Marriage Restraint Act of 1929 (later revised as “the Child Marriage Restraint Act of 2017”).

Balance tests on the ADP intervention are given in Appendix Table 9.

The word “Purdah” refers to female seclusion or dressing conservatively in the presence of a non-related male member or when venturing outside the house. Among our female adolescent respondents (i.e. the main study population), 91% practise Purdah.

The average answer in the control group for the LE DV question is 2.19 (SE = 0.019), while in the treated it is 2.48 (SE = 0.020); the difference-in-means is then 0.297 (SE = 0.028). The average answer in the control group for the LE CM question is 2.48 (SE = 0.019), while in the treated it is 2.71 (SE = 0.019); the difference-in-means is then 0.238 (SE = 0.026).
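Because the control and treatment groups are independent random samples, the standard error of each difference-in-means is the square root of the sum of the two squared group standard errors. The short Python check below recomputes the figures from the rounded numbers in this note; the small discrepancies (0.29 vs 0.297, 0.23 vs 0.238, and 0.027 vs 0.026) are consistent with rounding of the group means and standard errors.

```python
from math import sqrt


def diff_in_means(mean_c, se_c, mean_t, se_t):
    # Independent groups: the variance of the difference is the sum of the variances.
    return mean_t - mean_c, sqrt(se_c ** 2 + se_t ** 2)


dv = diff_in_means(2.19, 0.019, 2.48, 0.020)
cm = diff_in_means(2.48, 0.019, 2.71, 0.019)
print([round(x, 3) for x in dv])  # [0.29, 0.028]
print([round(x, 3) for x in cm])  # [0.23, 0.027]
```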

Results are very similar if we instead compute average marginal effects from a non-linear probit model (see Table 10).

The R-squared is low for DQ in specifications that include an intercept only or an intercept and ADP exposure only (columns (1)–(2) and (5)–(6), Table 4); these specifications were estimated to facilitate comparison with the LE results (Table 3). We also do not find a good model fit for these specifications when using a non-linear probit model (Appendix Table 10). Including additional variables (such as education, age and whether married) improves model fit by increasing the R-squared. We interpret this as showing that it is difficult to predict individual responses to direct questions with much accuracy using the models at hand (either linear OLS or non-linear probit), at least in specifications where a full set of controls is not used.

Ideally, the direct question should only be asked of the list experiment control group to avoid potential under-reporting (see our discussion of how we deal with this in our list experiment, “Section 4.1”).

De Cao and Lutz (2018), who study attitudes towards female genital cutting in Ethiopia, report similar findings. The intervention they consider, however, is not random, which prevents them from claiming causal effects.

Note that there are no questions that explicitly ask women about their attitudes towards child marriage, so we cannot examine how attitudes towards child marriage vary across cohorts and over time.

We find a lower fraction of respondents supporting intimate partner violence when asked directly in our survey, but our direct question is very different: it asks whether wife beating is justified under any circumstances rather than in specific ones.

These activities involve various initiatives such as interactive popular theatre, adolescent fairs, cultural competitions and sports for development.

Another recent study is Dhar et al. (2018), which examines a school-based randomized intervention in a north Indian state (Haryana) where gender discrimination is entrenched. In contrast to the club-based safe space interventions in Bangladesh and Uganda, the intervention in Dhar et al. (2018) is integrated within regular classrooms/schools and conditional on school attendance and government school enrolment. The sample includes both rural and urban locations. Although the total dosage of the secondary school-based program in Haryana (India) was only 20 h, it is a multi-year, school-based rather than community-level intervention. This study finds a positive effect of the intervention on adolescents’ support for gender equality. The evaluation (Dhar et al. 2018) relies on aggregate indices of gender attitudes and does not report treatment effects for attitude questions specific to the appropriate age of marriage for girls.

This is also true for Dhar et al. (2018), who report positive impacts on gender attitudes but do not report results separately for attitudes towards domestic violence and child marriage.

References

Aguero J, Frisancho V (2018) Misreporting of intimate partner violence in developing countries: measurement error and new strategies to minimize it. Working paper

Amin S, Saha J, Ahmed J (2018) Skills-building programs to reduce child marriage in Bangladesh: a randomized controlled trial. J Adolesc Health 63(3):293–300

Asadullah MN, Wahhaj Z (2019) Early marriage, social networks and the transmission of norms. Economica 86(344):801–831

Bandiera O, Buehren N, Burgess R, Goldstein M, Gulesci S, Rasul I, Sulaiman M (2018) Women’s empowerment in action: evidence from a randomized control trial in Africa, CEPR Discussion Papers 13386, C.E.P.R. Discussion Papers

Beaman L, Chattopadhyay R, Duflo E, Pande R, Topalova P (2009) Powerful women: does exposure reduce bias? Q J Econ 124(4):1497–1540

Blair G, Imai K (2012) Statistical analysis of list experiments. Polit Anal 20(1):47–77

Blattman C, Jamison J, Koroknay-Palicz T, Rodrigues K, Sheridan M (2016) Measuring the measurement error: a method to qualitatively validate survey data. J Dev Econ 120:99–112

Borrell-Porta M, Costa-Font J, Philipp J (2019) The ‘mighty girl’ effect: does parenting daughters alter attitudes towards gender norms? Oxf Econ Pap 71(1):25–46

Bound J, Brown C, Mathiowetz N (2001) Measurement error in survey data. Handb Econ 2:3705–3843

Buchmann N, Field E, Glennerster R, Nazneen S, Pimkina S, Sen I (2018) Power vs. money: alternative approaches to reducing child marriage in Bangladesh, a randomized control trial. Working paper. https://www.povertyactionlab.org/sites/default/files/publications/Power-vs-Money-Working-Paper.pdf

Bullock W, Imai K, Shapiro JN (2011) Statistical analysis of endorsement experiments: measuring support for militant groups in Pakistan. Polit Anal 19(4):363–384

Chong A, Gonzales-Navarro M, Karlan D, Valdivia M (2013) Effectiveness and spillovers of online sex education: evidence from a randomized evaluation in Colombian public schools. NBER Working Paper 18776

Chuang E, Dupas P, Huillery E, Seban J (2019) Sex, lies and measurement: do indirect response survey methods work? Mimeo

Coffman KB, Coffman LC, Ericson KMM (2016) The size of the LGBT population and the magnitude of antigay sentiment are substantially underestimated. Manag Sci 63(10):3168–3186

De Cao E, Lutz C (2018) Sensitive survey questions: measuring attitudes regarding female genital mutilation through a list experiment. Oxford Bull Econ Stat. https://doi.org/10.1111/obes.12228

De Cao E, Huis M, Jemaneh S, Lensink R (2017) Community conversations as a strategy to change harmful traditional practices against women. Appl Econ Lett 24:72–74

Dhar D, Jain T, Jayachandran S (2016) Intergenerational transmission of gender attitudes: evidence from India. Working paper

Dhar D, Jain T, Jayachandran S (2018) Reshaping adolescents’ gender attitudes: evidence from a school-based experiment in India

Droitcour J, Caspar RA, Hubbard ML, Parsley TL, Visscher W, Ezzati TM (1991) The item-count technique as a method of indirect questioning: a review of its development and a case study application. In: Biemer PP, Groves RM, Lyberg LE, Mathiowetz NA, Sudman S (eds) Measurement errors in surveys. Wiley, New York, pp 185–210

Gibson MA, Gurmu E, Cobo B, Rueda MM, Scott IM (2018) Indirect questioning method reveals hidden support for female genital cutting in South Central Ethiopia. PLoS One 13:e0193985. https://doi.org/10.1371/journal.pone.0193985

Glynn AN (2013) What can we learn with statistical truth serum? Design and analysis of the list experiment. Public Opin Q 77(S1):159–172

Imai K (2011) Multivariate regression analysis for the item count technique. J Am Stat Assoc 106(494):407–416

Jamison JC, Karlan D, Raffler P (2013) Mixed-method evaluation of a passive mHealth sexual information texting Service in Uganda. Inf Technol Int Dev 9(3):1–28

Jayachandran S (2015) The root causes of gender inequality in developing countries. Annu Rev Econ 7:63–88

Jensen R, Oster E (2009) The power of TV: cable television and women’s status in India. Q J Econ 124(3):1057–1094

Joseph G, Usman Javaid S, Andres LA, Chellaraj G, Solotaroff JL, Rajan SI (2017) Underreporting of gender-based violence in Kerala, India: an application of the list randomization method. Technical report. Policy Research Working Paper N. 8044, World Bank

Karlan DS, Zinman J (2012) List randomization for sensitive behavior: an application for measuring use of loan proceeds. J Dev Econ 98(1):71–75

Khatoon FZ, Khan S, Khan TN, Alim MA (2018) The changing status of adolescents' knowledge, attitude and practice regarding reproductive and health and social issues: an impact assessment of APON intervention of adolescent development Programme of BRAC BEP. RED working paper

Kuklinski JH, Cobb MD, Gilens M (1997) Racial attitudes and the “New South”. J Polit 59:323–349

Maertens A (2013) Social norms and aspirations: age of marriage and education in rural India. World Dev 47:1–15

Miller JD (1984) A new survey technique for studying deviant behavior, Ph.D. thesis, The George Washington University

Moseson H, Massaquoi M, Dehlendorf C, Bawo L, Dahn B, Zolia Y, Vittinghoff E, Hiatt RA, Gerdts C (2015) Reducing under-reporting of stigmatized health events using the List Experiment: results from a randomized, population-based study of abortion in Liberia. Int J Epidemiol 44(6):1951–1958

Palermo T, Bleck J, Peterman A (2014) Tip of the iceberg: reporting and gender-based violence in developing countries. Am J Epidemiol 179(5):602–612

Peterman A, Palermo T, Handa S, Seidenfeld D (2017) List randomization for soliciting experience of intimate partner violence: application to the evaluation of Zambia’s unconditional child grant program. Health Econ Lett:1–7

Solotaroff JL, Pande RP (2014) Violence against women and girls: lessons from South Asia. World Bank Publications, The World Bank, number 20153

Warner SL (1965) Randomized response: a survey technique for eliminating evasive answer bias. J Am Stat Assoc 60:63–69

Acknowledgements

We are indebted to Andrew Jenkins and Erum Mariam for their encouragement and support. We thank Julian Jamison and Giulia La Mattina for useful comments on an earlier version of the paper. In addition, we are grateful to three anonymous referees and the Editor, Klaus F. Zimmermann, for their help and guidance in the review process. The usual disclaimers apply.

Funding

This study has been funded by BRAC (Research and Evaluation Department) and Institute of Education and Development (IED) of BRAC University.


Ethics declarations

Conflict of interest

Author A has received research grants from BRAC. Author C is an employee of BRAC.

Additional information

Responsible editor: Klaus F. Zimmermann

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

Appendix 1: Female labor force participation and gender attitudes using the Bangladesh Demographic and Health Surveys (DHS)

To examine differences in female labor force participation, as well as the incidence of and attitudes towards child marriage and domestic violence across cohorts and over time, we use data from the 2007 and 2014 Demographic and Health Surveys (DHS) for Bangladesh. These are nationally representative surveys that interview repeated cross-sections of Bangladeshi households. For the following discussion, we use responses to the women’s questionnaire from the 2007 and 2014 surveys, in which the respondents were ever-married women aged 15 to 49 from these households.

Figure 4 shows, by age group, the fraction of women who report that they are currently working. While the fraction of women currently working is below 40% in all age groups in both the 2007 and 2014 surveys, these fractions increased between the 2007 and 2014 surveys. Among the 2014 respondents, the fraction of currently working women has increased particularly within the older age groups of 35–39, 40–44 and 45–49 compared with the 2007 respondents.
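The age-group shares in Fig. 4 are group means of a binary indicator. The following Python sketch shows the computation under stated assumptions: the record layout is hypothetical (it does not use DHS variable names), respondents are grouped into 5-year bands starting at 15, and the shares are unweighted, whereas nationally representative DHS estimates would normally apply the survey's sampling weights.

```python
from collections import defaultdict


def share_working(records):
    """records: (survey_year, age, currently_working) for women aged 15-49.

    Returns {(survey_year, age_band): unweighted share currently working}.
    """
    tally = defaultdict(lambda: [0, 0])  # [number working, number of respondents]
    for year, age, working in records:
        lo = 15 + 5 * ((age - 15) // 5)  # lower bound of the 5-year band
        key = (year, f"{lo}-{lo + 4}")
        tally[key][0] += int(working)
        tally[key][1] += 1
    return {key: w / n for key, (w, n) in tally.items()}


# Made-up records, for illustration only.
records = [(2007, 16, 0), (2007, 19, 1), (2014, 47, 1), (2014, 45, 0)]
print(share_working(records))  # {(2007, '15-19'): 0.5, (2014, '45-49'): 0.5}
```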

Figure 5 provides the average age at first cohabitation (or marriage) within different age groups for both the 2007 and 2014 respondents. This provides us with information on the incidence of child marriage.Footnote 23 As may be seen in Fig. 5, there is an increase in average age at first cohabitation over time within all age groups, and particularly among the oldest age groups of 40–44- and 45–49-year-old women. For both the 2007 and 2014 respondents, average age at first cohabitation is lowest for the youngest age group 15–19 (largely because the average is over the few women who are already cohabiting at this age), and then for older women belonging to age groups 30–34, 40–44 and 45–49.

Questions on incidence of domestic violence were only asked in the 2007 DHS. Figure 6 shows the fraction of women within different age groups who experienced either less severe violence (left panel) or severe violence (right panel). While relatively high fractions of women experience less severe violence (> 40% for all age groups) and severe violence (> 10% for all age groups), there does not seem to be much variation in incidence across age groups. In other words, younger and older women seem to be equally likely to experience either less severe or severe violence from an intimate partner.

Next, we turn to attitudes towards domestic violence as given in Figs. 7 and 8. These are constructed from a set of questions asking women whether they agree that wife beating is justified in the following situations (for 2007 respondents):

1. If the wife goes out without telling husband
2. If the wife neglects the children
3. If the wife argues with the husband
4. If the wife refuses to have sex with the husband

In the 2014 survey, female respondents are asked whether they agree with the above four statements and, in addition, whether they agree that wife beating is justified:

5. If the wife burns the food

Figure 7 provides a summary of responses for the 2007 respondents by age group, and Fig. 8 provides this summary for the 2014 respondents. First, despite potential measurement error in these responses, a relatively large fraction of women agree that wife beating is justified in these situations: approximately 20% of women support wife beating in situations 1–3 and approximately 10% in situations 4–5.Footnote 24 Second, there is very little variation in support for wife beating across age groups within the 2007 respondents or within the 2014 respondents. In other words, younger women seem as likely to support wife beating as older women, in both 2007 and 2014. Finally, a comparison of Figs. 7 and 8 suggests that attitudes towards wife beating have not changed over time, since approximately the same fraction of women support wife beating in 2007 and 2014. This is despite the improvements in labor force participation over this period discussed earlier (Fig. 4).

Over the recent past, Bangladesh has seen an improvement in female labor force participation, particularly among older women, and a reduction in the incidence of child marriage. However, gender attitudes, specifically towards domestic violence, remain unchanged. While we have not ruled out potential confounders in this descriptive discussion, the patterns we have shown suggest that improvements in female labor force participation could have driven reductions in the incidence of child marriage, but that changes in female labor force participation are unlikely to have led to changes in gender attitudes (at least those related to domestic violence) in Bangladesh.

Appendix 2: The ADP program

BRAC has developed a range of club-based adolescent development programmes which expose adolescents to a variety of activities such as (i) livelihood training courses, (ii) a special network for (female) adolescent photographers, (iii) communication, awareness and advocacy through dialogues among adolescents, their parents and influential persons in the community,Footnote 25 and (iv) the Adolescent Peer Organised Network (APON). All educational activities are organized in adolescent clubs (aka “Kishori clubs”), where lessons are delivered in structured courses. These clubs offer a safe space where adolescent girls can read, socialise, play games, take part in cultural activities and have open discussions on personal and social issues with their peers. These clubs are set up at the village level, using a former BRAC school building as the venue. In 2016, there were around 8100 adolescent clubs across Bangladesh. There are also different versions of the ADP in terms of the combination of activities conducted at the club. Our study involves a simpler version of the ADP intervention where the only activity component (or intervention) is APON. This focuses on life skill–based education on different social and health-related issues (such as reproductive health, sexual abuse, children’s rights, gender, sexually transmitted infections, sexual harassment, child trafficking, substance abuse, violence, family planning, child marriage, dowry and acid attacks) facilitated by adolescents’ peers. Apart from club-based lessons on life skills, APON activities also include book exchange, reading, playing indoor and outdoor games, performing cultural programmes and observing different international and national days.

In terms of pedagogic structure, there is one 2-hour session per week, held every Thursday at the club. In total, four sessions take place each month, giving 48 sessions in 1 year. The APON/life skill–based education offers 12 subjects on different social and health-related issues, in which 31 learning stories are articulated. Clubs are managed by an adolescent leader, chosen on the basis of leadership abilities, who is responsible for implementing all club activities.
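The annual dosage implied by this schedule is straightforward to verify, and it matches the 96 hours used for comparison with other programs in this appendix:

```latex
4~\tfrac{\text{sessions}}{\text{month}} \times 12~\text{months} = 48~\text{sessions},
\qquad 48~\text{sessions} \times 2~\tfrac{\text{hours}}{\text{session}} = 96~\text{hours}.
```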

Each club consists of 25–35 adolescent members aged 10–19 years, of whom 75% are girls and the rest boys. Participation in the club depends on socio-economic status: adolescents who have dropped out of school and come from a poor socio-economic background are given priority. While individual adolescents from the eligible groups self-selected into an ADP club (i.e. participation is non-random), all eligible adolescents in ADP program villages were equally exposed (i.e. intervention exposure is random), because the study design randomly assigned treatment (i.e. the ADP program placement) at the village level. Moreover, both program and non-program villages have benefited from BRAC’s non-formal education in the past.

1.1 Comparison with similar interventions in developing countries

There are also a number of other developing country studies that have evaluated related programs and their impact on gender attitudes and outcomes. These include the “empowerment and livelihoods for adolescents” (ELA) training scheme in Uganda (Bandiera et al. 2018), BALIKA (Bangladeshi Association for Life Skills, Income, and Knowledge for Adolescents) in Bangladesh (Amin et al. 2018) and Kishori Kendra (KK) scheme of training-based gender empowerment and financial incentives to delay marriage in Bangladesh (Buchmann et al. 2018). Both ELA and KK include safe space components where, in clubs, adolescents receive life skill lessons about gender rights and sexual education. However, they differ in other aspects. For instance, ELA simultaneously provides a vocational training component for income generating activities while KK includes a financial incentives component to delay marriage (in addition to a 6-month empowerment program) as well as an additional treatment arm offering empowerment plus incentive.

Existing developing country interventions differ considerably in treatment intensity, target population, design and geographic coverage. For example, the ADP scheme’s dosage was 96 hours in total over 1 year. In contrast, girls in the KK safe space groups in Bangladesh received about 200 hours of training over 6 months (Buchmann et al. 2018), participants in the BALIKA scheme in Bangladesh received 144 hours in total (Amin et al. 2018), and participants in the ELA project in Uganda received over 500 hours, in five sessions per week for 2 years (Bandiera et al. 2018).Footnote 26
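The dosage figures can be put on a common footing with a little arithmetic. The sketch below derives the ADP total from the session structure described earlier (2 hours per session, four sessions a month, 12 months) and compares it with the totals quoted for the other programs. It treats “about 200” (KK) and “over 500” (ELA) hours as point values, so the ELA figures are lower bounds; BALIKA's duration is not stated here, so no monthly rate is computed for it. The variable and function names are illustrative only.

```python
# Figures as quoted in the text; "about 200" (KK) and "over 500" (ELA)
# are treated as point values, so the ELA numbers are lower bounds.
HOURS_PER_SESSION = 2   # one 2-hour session per week
SESSIONS_PER_MONTH = 4  # four sessions a month
MONTHS_ADP = 12         # ADP runs for 1 year

adp_sessions = SESSIONS_PER_MONTH * MONTHS_ADP  # 48 sessions
adp_hours = HOURS_PER_SESSION * adp_sessions    # 96 hours

# (total hours, duration in months); BALIKA's duration is not given,
# so its monthly rate is left undefined (None).
programs = {
    "ADP (Bangladesh)": (adp_hours, MONTHS_ADP),
    "KK safe space groups (Bangladesh)": (200, 6),
    "BALIKA (Bangladesh)": (144, None),
    "ELA (Uganda)": (500, 24),
}


def hours_per_month(total_hours, months):
    """Average contact hours per month, or None if the duration is unknown."""
    return None if months is None else total_hours / months


for name, (hours, months) in programs.items():
    rate = hours_per_month(hours, months)
    rate_txt = "n/a" if rate is None else f"{rate:.1f} h/month"
    print(f"{name}: {hours} h total, {hours / adp_hours:.1f}x ADP, {rate_txt}")
```

On these figures ADP delivers 8.0 hours a month, against roughly 33.3 for the KK safe space groups and at least 20.8 for ELA; in total hours, KK is about twice the ADP dosage, BALIKA 1.5 times and ELA more than five times.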

In this context, ELA and KK are both variants of the scheme to which our sample respondents are exposed. However, in contrast to the Bangladesh ADP scheme, ELA and KK are multifaceted programs, and their evaluations cover a longer window (4 years post-intervention). Moreover, these interventions did not have a conclusive impact on attitudes towards child marriage and do not report an impact on domestic violence.Footnote 27 Buchmann et al. (2018) report a rich set of outcomes on age at marriage as well as indices of gender attitudes, but the estimated impact of the standard empowerment component is insignificant. While ELA is reported to be effective in improving girls’ expectations regarding the age at first marriage for women and the most suitable age to start childbearing, and in delaying pregnancy, it is not known what its impact would have been in the absence of livelihood training. Compared to KK, BALIKA and ELA, BRAC’s ADP scheme studied in this paper focuses only on the standard empowerment component. While these differences may reduce the size of the program impact, they do not necessarily explain the greater support for domestic violence among ADP participants, which remains a puzzle.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Asadullah, M.N., De Cao, E., Khatoon, F.Z. et al. Measuring gender attitudes using list experiments. J Popul Econ 34, 367–400 (2021). https://doi.org/10.1007/s00148-020-00805-2

DOI: https://doi.org/10.1007/s00148-020-00805-2