Abstract

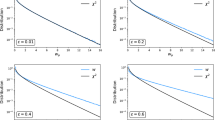

The posterior probabilities of K given models, when improper priors are used, depend on the proportionality constants assigned to the prior densities corresponding to each of the models. It is shown that this assignment can be done using natural geometric priors in multiple regression problems if the normal distribution of the residual errors is truncated. This truncation is a realistic modification of the regression models, and since it is made far away from the mean, it has no effect beyond determining the proportionality constants, provided that the sample size is not too large. In the case K = 2, the posterior odds ratio is related to the usual F statistic of “classical” statistics. Assuming zero-one losses, the optimal selection of a regression model is achieved by maximizing the posterior probability of a submodel. It is shown that the geometric criterion obtained in this way is asymptotically equivalent to Schwarz’s asymptotic Bayesian criterion, sometimes called the BIC criterion. An example of polynomial regression is used to provide numerical comparisons between the new geometric criterion, the BIC criterion and the Akaike information criterion.
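
For K = 2 nested models, one concrete way to see how a posterior odds ratio can depend on the data only through the F statistic is Schwarz’s approximation (the BIC criterion), to which the abstract states the geometric criterion is asymptotically equivalent. The derivation below is a sketch under assumptions not taken from the paper: Gaussian errors, equal prior model probabilities, and notation introduced here (models M_0 ⊂ M_1 with p_0 and p_1 = p_0 + q regression coefficients, residual sums of squares RSS_0 and RSS_1, sample size n). It is not the paper’s exact geometric odds ratio.

```latex
\begin{align*}
F &= \frac{(\mathrm{RSS}_0 - \mathrm{RSS}_1)/q}{\mathrm{RSS}_1/(n - p_1)},
\qquad
\mathrm{BIC}_j = n \ln\frac{\mathrm{RSS}_j}{n} + p_j \ln n \quad (j = 0, 1),\\[4pt]
\frac{\mathrm{RSS}_0}{\mathrm{RSS}_1} &= 1 + \frac{\mathrm{RSS}_0 - \mathrm{RSS}_1}{\mathrm{RSS}_1}
  = 1 + \frac{qF}{n - p_1},\\[4pt]
\mathrm{BIC}_0 - \mathrm{BIC}_1
  &= n \ln\frac{\mathrm{RSS}_0}{\mathrm{RSS}_1} - q \ln n
   = n \ln\Bigl(1 + \frac{qF}{n - p_1}\Bigr) - q \ln n,\\[4pt]
\frac{P(M_1 \mid y)}{P(M_0 \mid y)}
  &\approx \exp\Bigl\{\tfrac{1}{2}\bigl(\mathrm{BIC}_0 - \mathrm{BIC}_1\bigr)\Bigr\}
   = n^{-q/2}\Bigl(1 + \frac{qF}{n - p_1}\Bigr)^{n/2}.
\end{align*}
```

The approximate odds increase monotonically in F, so favouring M_1 amounts to F exceeding a threshold; for large n that threshold behaves roughly like ln n, rather than staying fixed as in a fixed-level F test.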
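
The comparison described in the abstract (polynomial regression, BIC versus AIC, and selection under zero-one loss by maximizing a model’s posterior probability) can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper’s geometric criterion: it uses the standard least-squares forms AIC = n ln(RSS/n) + 2k and BIC = n ln(RSS/n) + k ln n (constants common to all models dropped), and it assumes equal prior model probabilities so that Schwarz’s approximation gives P(M_d | y) ∝ exp(−BIC_d / 2). The helper names (`solve_linear`, `poly_rss`, `compare_degrees`, `select_zero_one`) and the data are hypothetical.

```python
import math


def solve_linear(a, rhs):
    """Solve the small square system a b = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [list(row) + [r] for row, r in zip(a, rhs)]  # augmented matrix [a | rhs]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    b = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        b[r] = (m[r][n] - sum(m[r][c] * b[c] for c in range(r + 1, n))) / m[r][r]
    return b


def poly_rss(x, y, degree):
    """Residual sum of squares of the least-squares polynomial fit of the given degree."""
    p = degree + 1
    # Normal equations (X^T X) b = X^T y for the design matrix with columns 1, x, ..., x^degree.
    xtx = [[sum(xi ** (i + j) for xi in x) for j in range(p)] for i in range(p)]
    xty = [sum(yi * xi ** i for xi, yi in zip(x, y)) for i in range(p)]
    b = solve_linear(xtx, xty)
    return sum((yi - sum(b[i] * xi ** i for i in range(p))) ** 2 for xi, yi in zip(x, y))


def compare_degrees(x, y, max_degree):
    """AIC, BIC and BIC-approximate posterior probability for polynomial degrees 0..max_degree."""
    n = len(x)
    rows = []
    for d in range(max_degree + 1):
        rss = poly_rss(x, y, d)
        # k counts regression coefficients; counting sigma as well would add the
        # same constant to every model and leave all comparisons unchanged.
        k = d + 1
        # -2 * (maximized Gaussian log-likelihood) = n ln(RSS/n) + terms shared by all models.
        aic = n * math.log(rss / n) + 2 * k
        bic = n * math.log(rss / n) + k * math.log(n)
        rows.append({"degree": d, "rss": rss, "aic": aic, "bic": bic})
    # Equal prior model probabilities: P(M_d | y) is approximately proportional to exp(-BIC_d / 2).
    best = min(r["bic"] for r in rows)
    weights = [math.exp(-(r["bic"] - best) / 2) for r in rows]
    total = sum(weights)
    for r, w in zip(rows, weights):
        r["post"] = w / total
    return rows


def select_zero_one(rows):
    """Under zero-one loss the Bayes choice is the model with the largest posterior probability."""
    return max(rows, key=lambda r: r["post"])["degree"]


if __name__ == "__main__":
    # Hypothetical data: a quadratic trend plus a small deterministic perturbation.
    x = [i / 10 for i in range(-10, 11)]
    y = [1 + 2 * xi - 1.5 * xi ** 2 + 0.05 * math.sin(3.7 * i) for i, xi in zip(range(-10, 11), x)]
    rows = compare_degrees(x, y, 4)
    for r in rows:
        print(f"degree {r['degree']}: RSS={r['rss']:.4f} AIC={r['aic']:.2f} "
              f"BIC={r['bic']:.2f} P={r['post']:.3f}")
    print("zero-one loss choice:", select_zero_one(rows))
```

Forming the normal equations is adequate only for a few low-degree terms on a centred grid like this one; for real data, a QR- or SVD-based least-squares solver such as `numpy.linalg.lstsq` avoids squaring the condition number.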

References

Akaike, H. (1973). Information theory and an extension of the maximum likelihood principle. In B. Petrov and F. Csaki, eds., 2nd International Symposium on Information Theory. Akademiai Kiado, Budapest. Reprinted in Breakthroughs in Statistics, vol. 1 (1992), S. Kotz and N. L. Johnson, eds. Springer-Verlag, New York.

Akaike, H. (1977). On entropy maximization principle. In Applications of Statistics, pp. 27–42. North-Holland, Amsterdam.

Bartlett, M. S. (1957). A comment on D. V. Lindley’s statistical paradox. Biometrika, 44:533–534.

Berger, J. O. and Pericchi, L. R. (1996). The intrinsic Bayes factor for model selection and prediction. Journal of the American Statistical Association, 91:109–122.

Bhansali, R. J. (1986). Asymptotically efficient selection of the order by the criterion autoregressive transfer function. Annals of Statistics, 14:315–325.

Bickel, P. J. and Doksum, K. A. (1977). Mathematical Statistics: Basic Ideas and Selected Topics. Holden-Day, San Francisco.

Chipman, H., George, E. I., and McCulloch, R. E. (2002). The practical implementation of Bayesian model selection. In P. Lahiri, ed., Model Selection, Institute of Mathematical Statistics Lecture Notes–Monograph Series, vol. 38, pp. 65–134.

Dempster, A. P. (1971). Model searching and estimation in the logic of inference. In Foundations of Statistical Inference, pp. 56–81. Holt, Rinehart and Winston of Canada, Toronto.

Edgeworth, F. Y. (1883). The method of least squares. The London, Edinburgh and Dublin Philosophical Magazine and Journal of Science Series, 16:360–375.

Gauss, K. F. (1809). Theoria Motus Corporum Coelestium. Hamburg. English translation by C. H. Davis (1963), Dover, New York.

Geisser, S. and Eddy, W. F. (1979). A predictive approach to model selection. Journal of the American Statistical Association, 74:153–160.

Gelfand, A. E. and Dey, D. K. (1994). Bayesian model choice: asymptotics and exact calculations. Journal of the Royal Statistical Society Series B, 56:501–513.

George, E. I. and McCulloch, R. (1993). On obtaining invariant prior distributions. Journal of Statistical Planning and Inference, 37:169–179.

Guinness (1996). Guinness Book of Records.

Guttman, I. (1967). The use of the concept of a future observation in goodness-of-fit problems. Journal of the Royal Statistical Society Series B, 29:83–100.

Hager, H. and Antle, C. (1968). The choice of the degree of a polynomial. Journal of the Royal Statistical Society Series B, 30:469–471.

Halpern, E. F. (1973). Polynomial regression from a Bayesian approach. Journal of the American Statistical Association, 68:137–143.

Jeffreys, H. (1961). Theory of Probability. Oxford University Press, Oxford, 3rd ed.

Kass, R. E. and Wasserman, L. (1995). A reference test for nested hypotheses and its relationship to the Schwarz criterion. Journal of the American Statistical Association, 90:928–934.

Keynes, J. M. (1921). A Treatise on Probability. Macmillan, London.

Macdonell, W. R. (1901). On criminal anthropometry and the identification of criminals. Biometrika, 1:177–227.

O’Hagan, A. (1995). Fractional Bayes factors for model comparison (with discussion). Journal of the Royal Statistical Society Series B, 57:99–138.

Pearson, E. S. and Hartley, H. O. (1954). Biometrika Tables for Statisticians, vol. 1. University Press, Cambridge, 3rd ed.

Rueda, R. (1992). A Bayesian alternative to parametric hypothesis testing. Test, 1:61–68.

Schwarz, G. (1978). Estimating the dimension of a model. Annals of Statistics, 6:461–464.

Smith, A. F. M. and Spiegelhalter, D. J. (1980). Bayes factors and choice criteria for linear models. Journal of the Royal Statistical Society Series B, 42:768–776.

Villegas, C. (1981). Inner statistical inference II. Annals of Statistics, 9:768–776.

Villegas, C. (1990). Bayesian inference in models with Euclidean structures. Journal of the American Statistical Association, 85:1159–1164.

Villegas, C. and Martinez, C. J. (1999). On the concepts of coherence and admissibility. Test, 8:319–338.

Additional information

Villegas and Swartz were partially supported by grants from the Natural Sciences and Engineering Research Council of Canada.

About this article

Cite this article

Villegas, C., Swartz, T. & Martínez, C. On the probability of a model. Test 11, 413–438 (2002). https://doi.org/10.1007/BF02595715

Key Words

- Bayesian testing

- geometric Bayesian inference

- geometric priors, model selection

- probability of a model

- sharp hypotheses

- variable selection