Abstract

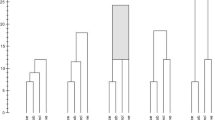

Whenevern objects are characterized by a matrix of pairwise dissimilarities, they may be clustered by any of a number of sequential, agglomerative, hierarchical, nonoverlapping (SAHN) clustering methods. These SAHN clustering methods are defined by a paradigmatic algorithm that usually requires 0(n 3) time, in the worst case, to cluster the objects. An improved algorithm (Anderberg 1973), while still requiring 0(n 3) worst-case time, can reasonably be expected to exhibit 0(n 2) expected behavior. By contrast, we describe a SAHN clustering algorithm that requires 0(n 2 logn) time in the worst case. When SAHN clustering methods exhibit reasonable space distortion properties, further improvements are possible. We adapt a SAHN clustering algorithm, based on the efficient construction of nearest neighbor chains, to obtain a reasonably general SAHN clustering algorithm that requires in the worst case 0(n 2) time and space.

Whenevern objects are characterized byk-tuples of real numbers, they may be clustered by any of a family of centroid SAHN clustering methods. These methods are based on a geometric model in which clusters are represented by points ink-dimensional real space and points being agglomerated are replaced by a single (centroid) point. For this model, we have solved a class of special packing problems involving point-symmetric convex objects and have exploited it to design an efficient centroid clustering algorithm. Specifically, we describe a centroid SAHN clustering algorithm that requires 0(n 2) time, in the worst case, for fixedk and for a family of dissimilarity measures including the Manhattan, Euclidean, Chebychev and all other Minkowski metrics.

Similar content being viewed by others

References

AHO, A.V., HOPCROFT, J.E., and ULLMAN, J.D. (1974),The Design and Analysis of Computer Algorithms, Reading, Massachusetts: Addison-Wesley.

ANDERBERG, M.R. (1973),Cluster Analysis for Applications, New York: Academic.

BATAGELJ, V. (1981), “Note on Ultrametric Hierarchical Clustering Algorithms,”Psychometrika, 46, 351–352.

BENZECRI, J.P. (1982), “Construction d'une Classification Ascendante Hierarchique par la Recherche en Chaîne des Voisins Réciproques,”Les Cahiers de l'Analyse des Données, VII, 209–218.

BRUYNOOGHE, M. (1978), “Classification Ascendante Hiérarchique des Grands Ensembles de Données: un Algorithme Rapide Fondé sur la Construction des Voisinages Réductibles,”Les Cahiers de l'Analyse des Données, III, 7–33.

CORMACK, R.M. (1971), “A Review of Classification,”Journal of the Royal Statistical Society, Series A, 134, 321–367.

COXETER, H.S.M. (1963), “An Upper Bound for the Number of Equal Nonoverlapping Spheres that can Touch Another of the Same Size,” inProceedings of the Symposium in Pure Mathematics VII, Convexity, ed. V. Klee, Providence, Rhode Island: American Mathematical Society, 53–71.

DEFAYS, D. (1977), “An Efficient Algorithm for a Complete Link Method,”Computer Journal, 20, 364–366.

DUBIEN, J.L., and WARDE, W.D. (1979), “A Mathematical Comparison of the Members of an Infinite Family of Agglomerative Clustering Algorithms,”Canadian Journal of Statistics, 7, 29–38.

EDELSBRUNNER, H., and VAN LEEUWEN, J. (1983), “Multidimensional Data Structures and Algorithms — a Bibliography,” Report F 104, Institute für Informationsverarbeitung, Technische Universität Graz, Graz, Austria.

EVERITT, B. (1980).Cluster Analysis (Second Edition), London: Heinemann.

FLORIAN, A. (1980), “Newtonsche und Hadwigersche Zahlen,” Report 145, Institut für Mathematik, Universität Salzburg, Salzburg, Austria.

GOWER, J.C., and ROSS, G.J.S. (1969), “Minimum Spanning Trees and Single Linkage Cluster Analysis,”Applied Statistics, 18, 54–64.

GROEMER, H. (1961), “Abschätzungen für die Anzahl der konvexen Körper, die einen konvexen Körper berühren,”Monatshefte für Mathematik, 65, 74–81.

GRUNBAUM, B. (1961), “On a Conjecture of H. Hadwiger,”Pacific Journal of Mathematics, 11, 215–219.

HADWIGER, H. (1957), “Uber Treffanzahlen bei translationsgleichen Eikörpern,”Archiv der Mathematik, 8, 212–213.

HADWIGER, H., and DEBRUNNER, H. (1955),Kombinatorische Geometrie in der Ebene, Genève: Monographies de l'Enseignement Mathématique.

HARTIGAN, J.A. (1975),Clustering Algorithms, New York: John Wiley.

HUBERT, L., and SCHULTZ, J. (1975), “Hierarchical Clustering and the Concept of Space Distortion,”British Journal of Mathematical and Statistical Psychology, 28, 121–133.

HWANG, F.K. (1979), “An 0(n log n) Algorithm for Rectilinear Minimal Spanning Tree,”Journal of the Association for Computing Machinery, 26, 177–182.

JARDINE, N., and SIBSON, R. (1971),Mathematical Taxonomy, London: John Wiley.

JOHNSON, S.C. (1967), “Hierarchical Clustering Schemes,”Psychometrika, 32, 241–254.

JUAN, J. (1982), “Programme de Classification Hiérarchique par l'Algorithme de la Recherche en Chaîne des Voisins Réciproques,”Les Cahiers de l'Analyse des Données, VII, 219–225.

LANCE, G.N., and WILLIAMS, W.T. (1966), “A Generalized Sorting Strategy for Computer Classifications,”Nature, 212, 218.

LANCE, G.N., and WILLIAMS, W.T. (1967), “A General Theory of Classificatory Sorting Strategies. 1. Hierarchical Systems,”Computer Journal, 9, 373–380.

MILLIGAN, G.W. (1979), “Ultrametric Hierarchical Clustering Algorithms,”Psychometrika, 44, 343–346.

MURTAGH, F. (1983), “A Survey of Recent Advances in Hierarchical Clustering Algorithms,”Computer Journal, 26, 354–359.

ROHLF, F.J. (1973), “Algorithm 76. Hierarchical Clustering Using the Minimum Spanning Tree,”Computer Journal, 16, 93–95.

ROHLF, F.J. (1977), “Computational Efficiency of Agglomerative Clustering Algorithms,” Report RC 6831, IBM T.J. Watson Research Center, Yorktown Heights, New York.

ROHLF, F.J. (1978), “A Probabilistic Minimum Spanning Tree Algorithm,”Information Processing Letters, 7, 44–48.

ROHLF, F.J. (1982), “Single-link Clustering Algorithms,” inHandbook of Statistics, Vol. 2, eds. P.R. Krishnaiah and L.N. Kanal, Amsterdam and New York: North Holland, 267–284.

ROSS, G.J.S. (1969), “Algorithm AS 15. Single Linkage Cluster Analysis,”Applied Statistics, 18, 106–110.

SHAMOS, M.I., and HOEY, D. (1975), “Closest-point Problems,”Sixteenth Symposium on Foundations of Computer Science, New York: Institute of Electrical and Electronics Engineers, 151–162.

SIBSON, R. (1973), “SLINK: an Optimally Efficient Algorithm for the Single-link Cluster Method,”Computer Journal, 16, 30–34.

SNEATH, P.H.A., and SOKAL, R.R. (1973),Numerical Taxonomy, San Francisco: W.H. Freeman.

WARD, Jr., J.H. (1963), “Hierarchical Grouping to Optimize an Objective Function,”Journal of the American Statistical Association, 58, 236–244.

WEIDE, B. (1977), “A Survey of Analysis Techniques for Discrete Algorithms,”Computing Surveys, 9, 291–313.

WISHART, D. (1969), “256 Note: An Algorithm for Hierarchical Classifications,”Biometrics, 25, 165–170.

Author information

Authors and Affiliations

Additional information

This work was partially supported by the Natural Sciences and Engineering Research Council of Canada and by the Austrian Fonds zur Förderung der wissenschaftlichen Forschung.

Rights and permissions

About this article

Cite this article

Day, W.H.E., Edelsbrunner, H. Efficient algorithms for agglomerative hierarchical clustering methods. Journal of Classification 1, 7–24 (1984). https://doi.org/10.1007/BF01890115

Issue Date:

DOI: https://doi.org/10.1007/BF01890115