Abstract

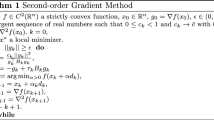

The gradient path of a real-valued differentiable function is given by the solution of a system of differential equations. For a quadratic function these equations are linear, resulting in a closed-form solution. A quasi-Newton type algorithm for minimizing an n-dimensional differentiable function is presented. Each stage of the algorithm consists of a search along an arc corresponding to some local quadratic approximation of the function being minimized. The algorithm uses a matrix approximating the Hessian in order to represent the arc. This matrix is updated at each stage and is stored in its Cholesky product form, which simplifies both the representation of the arc and the updating process. Quadratic termination properties of the algorithm are discussed, as well as its global convergence for a general continuously differentiable function. Numerical experiments indicating the efficiency of the algorithm are presented.
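The closed-form gradient path for a quadratic can be illustrated with a short sketch. It is not the paper's algorithm (which works with a Cholesky-factored Hessian approximation and searches along the arc); it only evaluates the exact solution of dx/dt = -∇f(x) for f(x) = ½ xᵀAx - bᵀx, assuming A is symmetric positive definite. The function name `quadratic_gradient_path` and the sample data `A`, `b`, `x0` are hypothetical, chosen for illustration.

```python
import numpy as np

def quadratic_gradient_path(A, b, x0, t):
    """Point at parameter t >= 0 on the gradient path of
    f(x) = 0.5 * x^T A x - b^T x, starting from x0.

    Assumes A is symmetric positive definite, so the path is
    x(t) = x* + exp(-t A) (x0 - x*), with x* = A^{-1} b.
    """
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float)
    x0 = np.asarray(x0, dtype=float)
    x_star = np.linalg.solve(A, b)        # minimizer of the quadratic
    lam, Q = np.linalg.eigh(A)            # A = Q diag(lam) Q^T
    decay = np.exp(-t * lam)              # eigenvalues of exp(-t A)
    return x_star + Q @ (decay * (Q.T @ (x0 - x_star)))

# Illustrative data (assumed, not taken from the paper).
A = np.array([[4.0, 1.0], [1.0, 3.0]])
b = np.array([1.0, 2.0])
x0 = np.array([2.0, -1.0])

for t in (0.0, 0.5, 5.0):
    print(t, quadratic_gradient_path(A, b, x0, t))
```

At t = 0 the path starts at `x0`, and as t grows it approaches the minimizer A⁻¹b, which is why a search along such an arc can reach the minimum of a quadratic model without a separate Newton step.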




Cite this article

Zang, I. A new arc algorithm for unconstrained optimization. Mathematical Programming 15, 36–52 (1978). https://doi.org/10.1007/BF01608998


DOI: https://doi.org/10.1007/BF01608998