Abstract

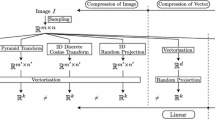

The Self-Organising Map (SOM) is an Artificial Neural Network (ANN) model consisting of a regular grid of processing units. A model of some multidimensional observation, e.g. a class of digital images, is associated with each unit. The map attempts to represent all the available observations using a restricted set of models. In unsupervised learning, the models become ordered on the grid so that similar models are close to each other. We review here the objective functions and learning rules related to the SOM, starting from vector coding based on a Euclidean metric and extending the theory to arbitrary metrics and to a subspace formalism, in which each SOM unit represents a subspace of the observation space. It is shown that this Adaptive-Subspace SOM (ASSOM) is able to create sets of wavelet- and Gabor-type filters when randomly displaced or moving input patterns are used as training data. No analytical functional form for these filters is thereby postulated. The same kind of adaptive system can create many other kinds of invariant visual filters, such as rotation- or scale-invariant filters, if the corresponding transformations are present in the training data. The ASSOM system can act as a learning feature-extraction stage for pattern recognisers, being able to adapt to arbitrary sensory environments. We then show that the invariant Gabor features can be used effectively in face recognition, whereby the sets of Gabor filter outputs are coded with the SOM and a face is represented by the histogram over the SOM units.
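To make the Euclidean vector-coding case concrete, the following is a minimal sketch, not the authors' implementation. It assumes a rectangular grid, a Gaussian neighbourhood, linearly decaying learning rate and neighbourhood width, and initialisation from random training samples; none of these choices is specified in the abstract. The function names `train_som`, `best_matching_unit` and `unit_histogram` are hypothetical. The last function illustrates the idea of representing a set of feature vectors (for example, Gabor filter outputs from one face) by a histogram over the SOM units.

```python
import math
import random


def best_matching_unit(models, x):
    """Index of the model closest to x in squared Euclidean distance."""
    return min(range(len(models)),
               key=lambda k: sum((a - b) ** 2 for a, b in zip(models[k], x)))


def train_som(data, rows, cols, epochs=20, lr0=0.5, sigma0=None, seed=0):
    """Train a rows x cols SOM with an online Euclidean update rule.

    Assumed (not from the source): Gaussian neighbourhood, linear decay
    of learning rate and neighbourhood width, sample-based initialisation.
    """
    rng = random.Random(seed)
    dim = len(data[0])
    if sigma0 is None:
        sigma0 = max(rows, cols) / 2.0
    # Grid coordinates of the units, and one model vector per unit.
    grid = [(r, c) for r in range(rows) for c in range(cols)]
    models = [list(rng.choice(data)) for _ in grid]
    total_steps = epochs * len(data)
    step = 0
    for _ in range(epochs):
        order = list(range(len(data)))
        rng.shuffle(order)
        for i in order:
            x = data[i]
            frac = step / total_steps
            lr = lr0 * (1.0 - frac)
            sigma = max(sigma0 * (1.0 - frac), 0.5)
            wr, wc = grid[best_matching_unit(models, x)]
            # Move the winner and its grid neighbours towards the input.
            for k, (r, c) in enumerate(grid):
                d2 = (r - wr) ** 2 + (c - wc) ** 2
                h = lr * math.exp(-d2 / (2.0 * sigma ** 2))
                m = models[k]
                for j in range(dim):
                    m[j] += h * (x[j] - m[j])
            step += 1
    return models


def unit_histogram(models, vectors):
    """Normalised histogram of winning units over a set of feature vectors."""
    counts = [0] * len(models)
    for v in vectors:
        counts[best_matching_unit(models, v)] += 1
    n = float(len(vectors))
    return [c / n for c in counts]
```

Calling `train_som(data, rows=4, cols=4)` on a list of equal-length numeric vectors returns 16 model vectors, and `unit_histogram(models, vectors)` then gives a 16-bin descriptor for any set of vectors of the same dimension. Because neighbouring units are updated together, nearby grid positions tend to end up with similar models, which is the ordering property described above.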

Cite this article

Kohonen, T., Oja, E. Visual feature analysis by the self-organising maps. Neural Comput & Applic 7, 273–286 (1998). https://doi.org/10.1007/BF01414888