Abstract

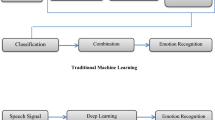

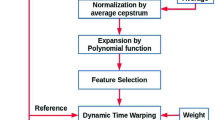

In this paper, a person's hum (instead of normal speech) is used to design a voice biometric system for person recognition. A recently proposed static feature set, viz., Variable length Teager energy based Mel Frequency Cepstral Coefficients (VTMFCC), is found to capture source-like information of a hum signal. For hum signals, VTMFCC is shown to be more effective than the linear prediction (LP) residual at capturing information complementary to MFCC. Person recognition performance is better when score-level fusion combines evidence from static and dynamic features of both MFCC (system) and VTMFCC (source-like) than when MFCC is used alone. Experiments are carried out with two types of dynamic features, viz., delta cepstrum and shifted delta cepstrum. With score-level fusion of static and dynamic features, the identification rate and the Equal Error Rate (EER) improve by 7.9 % and 0.27 %, respectively, over MFCC alone. Furthermore, the person recognition system performs better with a larger frame duration of 69.6 ms than with the traditional 10–30 ms frame duration.
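To make the two central ideas of the abstract concrete, the following is a minimal sketch rather than the authors' implementation. It assumes the commonly stated form of the variable length Teager energy operator, x(n)² − x(n−i)·x(n+i), where the dependency index `i` is a parameter name chosen here (with `i = 1` it reduces to Kaiser's Teager energy operator), and it assumes that score-level fusion is a simple weighted sum controlled by a hypothetical weight `alpha`. The function names `vteo` and `fuse_scores` are illustrative only.

```python
import numpy as np


def vteo(x, i=1):
    """Teager-style energy x[n]^2 - x[n-i] * x[n+i] for interior samples.

    Assumption: `i` is the dependency index; i=1 gives the classic
    Teager energy operator. The output has len(x) - 2*i samples because
    the first and last i samples lack a neighbour on one side.
    """
    x = np.asarray(x, dtype=float)
    if i < 1 or 2 * i >= len(x):
        raise ValueError("need 1 <= i and len(x) > 2*i")
    # Output index k corresponds to n = k + i in the original signal.
    return x[i:-i] ** 2 - x[:-2 * i] * x[2 * i:]


def fuse_scores(system_scores, source_scores, alpha=0.5):
    """Weighted-sum score-level fusion (assumed form, not from the paper).

    Both inputs hold one score per enrolled person, on comparable scales.
    alpha weights the system (e.g. MFCC) scores and 1 - alpha weights the
    source-like (e.g. VTMFCC) scores.
    """
    system_scores = np.asarray(system_scores, dtype=float)
    source_scores = np.asarray(source_scores, dtype=float)
    if system_scores.shape != source_scores.shape:
        raise ValueError("score arrays must have the same shape")
    return alpha * system_scores + (1.0 - alpha) * source_scores
```

For a pure sinusoid A·cos(Ωn + φ), this operator returns the constant A²·sin²(iΩ), which is a convenient sanity check. In an identification setting, the recognised person would be the index of the largest fused score, for example `np.argmax(fuse_scores(a, b, alpha))`, assuming higher scores mean a better match.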

Cite this article

Patil, H.A., Madhavi, M.C. & Parhi, K.K. Static and dynamic information derived from source and system features for person recognition from humming. Int J Speech Technol 15, 393–406 (2012). https://doi.org/10.1007/s10772-012-9161-5

DOI: https://doi.org/10.1007/s10772-012-9161-5