Abstract

One of the scientific objectives of NASA’s Fermi Gamma-ray Space Telescope is the study of Gamma-Ray Bursts (GRBs). The Fermi Gamma-Ray Burst Monitor (GBM) was designed to detect and localize bursts for the Fermi mission. By means of an array of 12 NaI(Tl) (8 keV to 1 MeV) and two BGO (0.2 to 40 MeV) scintillation detectors, GBM extends the energy range (20 MeV to > 300 GeV) of Fermi’s main instrument, the Large Area Telescope, into the traditional range of current GRB databases. The physical detector response of the GBM instrument to GRBs is determined with the help of Monte Carlo simulations, which are supported and verified by on-ground individual detector calibration measurements. We present the principal instrument properties, which have been determined as a function of energy and angle, including the channel-energy relation, the energy resolution, the effective area and the spatial homogeneity.

1 Introduction

The Fermi Gamma-ray Space Telescope (formerly known as GLAST), which was successfully launched on June 11, 2008, is an international and multi-agency space observatory [2, 26] that studies the cosmos in the photon energy range of 8 keV to greater than 300 GeV. The scientific motivations for the Fermi mission comprise a wide range of non-thermal processes and phenomena that can best be studied in high-energy gamma rays, from solar flares to pulsars and cosmic rays in our Galaxy, to blazars and Gamma-Ray Bursts (GRBs) at cosmological distances [11]. Particularly in GRB science, the detection of energy emission beyond 50 MeV [6, 17] still represents a puzzling topic, mainly because only a few observations by the Energetic Gamma-Ray Experiment Telescope (EGRET) [33] on-board the Compton Gamma-Ray Observatory (CGRO) [12, 15] and more recently by AGILE [10] are presently available above this energy. Fermi’s detection range, extending approximately an order of magnitude beyond EGRET’s upper energy limit of 30 GeV, will hopefully expand the catalogue of high-energy burst detections. A greater number of detailed observations of burst emission at MeV and GeV energies should provide a better understanding of bursts, thus testing GRB high-energy emission models [5, 25, 30, 35]. Fermi was specifically designed to avoid some of the limitations of EGRET, and it incorporates new technology and advanced on-board software that will allow it to achieve scientific goals greater than previous space experiments.

The main instrument on board the Fermi observatory is the Large Area Telescope (LAT), a pair conversion telescope, like EGRET, operating in the energy range between 20 MeV and 300 GeV. This detector is based on solid-state technology, obviating the need for consumables (as was the case for EGRET’s spark chambers, whose detector gas needed to be periodically replenished) and greatly decreasing (<10 μs) dead time (EGRET’s high dead time was due to the length of time required to re-charge the HV power supplies after event detection). These features, combined with the large effective area and excellent background rejection, allow the LAT to detect both faint sources and transient signals in the gamma-ray sky. Aside from the main instrument, the Fermi Gamma-Ray Burst Monitor (GBM) extends the Fermi energy range to lower energies (from 8 keV to 40 MeV). The GBM helps the LAT with the discovery of transient events within a larger FoV and performs time-resolved spectroscopy of the measured burst emission. In case of very strong and hard bursts, the GRB position, which is usually communicated by the GBM to the LAT, allows a repointing of the main instrument, in order to search for higher energy prompt or delayed emission.

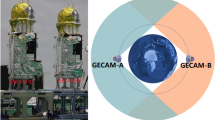

The GBM is composed of unshielded and uncollimated scintillation detectors (12 NaI (Tl) and two BGO) which are distributed around the Fermi spacecraft with different viewing angles, as shown in Fig. 1, in order to determine the direction to a burst by comparing the count rates of different detectors. The reconstruction of source locations and the determination of spectral and temporal properties from GBM data requires very detailed knowledge of the full GBM detectors’ response. This is mainly derived from computer modeling and Monte Carlo simulations [14, 20], which are supported and verified by experimental calibration measurements.

On the left: Schematic representation of the Fermi spacecraft, showing the placement of the 14 GBM detectors: 12 NaI detectors (from n0 to nb) are located in groups of three on the spacecraft edges, while two BGOs (b0 and b1) are positioned on opposite sides of the spacecraft. On the right: Picture of Fermi taken at Cape Canaveral a few days before launch. Here, six NaIs and one BGO are visible on the spacecraft’s side. Photo credit: NASA/Kim Shiflett (http://mediaarchive.ksc.nasa.gov)

In order to perform the above validations, several calibration campaigns were carried out in the years 2005 to 2008. The calibration of each individual detector (or detector-level calibration) comprises three distinct campaigns: a main campaign with radioactive sources (from 14.4 keV to 4.4 MeV), which was performed in the laboratory of the Max-Planck-Institut für extraterrestrische Physik (MPE, Munich, Germany), and two additional campaigns focusing on the low energy calibration of the NaI detectors (from 10 to 60 keV) and on the high energy calibration of the BGO detectors (from 4.4 to 17.6 MeV), respectively. The first one was performed at the synchrotron radiation facility of the Berliner Elektronenspeicherring-Gesellschaft für Synchrotronstrahlung (BESSY, Berlin, Germany), with the support and collaboration of the German Physikalisch-Technische Bundesanstalt (PTB), while the second was carried out at the SLAC National Accelerator Laboratory (Stanford, CA, USA).

Subsequent calibration campaigns of the GBM instrument were performed at system level, which comprises all flight detectors, the flight Data Processing Unit (DPU) and the Power Supply Box (PSB). These were carried out in the laboratories of the National Space Science and Technology Center (NSSTC) and of the Marshall Space Flight Center (MSFC) at Huntsville (AL, USA) and include measurements for the determination of the channel-energy relation of the flight DPU and checks of the detectors’ performance before and after environmental tests. After the integration of GBM onto the spacecraft, a radioactive source survey was performed in order to verify the spacecraft backscattering in the modeling of the instrument response. These later measurements are summarized in internal NASA reports and will not be discussed further.

This paper focuses on the detector-level calibration campaigns of the GBM instrument, and in particular on the analysis methods and results, which crucially support the development of a consistent GBM instrument response. It is organized as follows: Section 2 outlines the technical characteristics of the GBM detectors; Section 3 describes the various calibration campaigns which have been done, highlighting simulations of the calibration in the laboratory environment performed at MPE (see Section 3.4); Section 4 discusses the analysis system for the calibration data and shows the calibration results. In Section 5, final comments about the scientific capabilities of GBM are given and the synergy of GBM with present space missions is outlined.

2 The GBM detectors

The GBM flight hardware comprises a set of 12 Thallium activated Sodium Iodide crystals (NaI(Tl), hereafter NaI), two Bismuth Germanate crystals (Bi₄Ge₃O₁₂, commonly abbreviated as BGO), a DPU, and a PSB. In total, 17 scintillation detectors were built: 12 flight module (FM) NaI detectors, two FM BGO detectors, one spare NaI detector and two engineering qualification models (EQM), one for each detector type. Since detector NaI FM 06 showed poor performance from the outset, it was decided to replace it with the spare detector, which was consequently numbered FM 13. Note that the detector numbering scheme used in the calibration and adopted throughout this paper is different from the one used for in-flight analysis, as indicated in Table 4 (columns 2 and 3) in the Appendix.

The cylindrical NaI crystals (see Fig. 2) have a diameter of 12.7 cm (5”) and a thickness of 1.27 cm (0.5”). For light tightness and for sealing the crystals against atmospheric moisture (NaI(Tl) is very hygroscopic) each crystal is packed light-tight in a hermetically sealed Al-housing (with the exception of the glass window to which the PMT is attached). In order to allow measurements of X-rays down to 5 keV (original project goal [8]) the radiation entrance window is made of a 0.2 mm thick Beryllium sheet. However, for reasons of mechanical stability, an additional 0.7 mm thick Silicone layer had to be mounted between the Be window and the crystal, causing a slight increase of the low-energy detection threshold. Moreover, a 0.5 mm thick Tetratex layer was placed in front of the NaI crystal, in order to improve its reflectivity. The transmission probability as a function of energy for all components of the detector window system is shown in Fig. 3. Consequently, NaI detectors are able to detect gamma-rays in the energy range between ~ 8 keV and ~ 1 MeV. The individual detectors are mounted around the spacecraft and are oriented as shown schematically in Fig. 1 (left panel). This arrangement results in an exposure of the whole sky unocculted by the Earth in orbit.

The Hamamatsu R877 photomultiplier tube (PMT) is used for all the GBM detectors. This is a 10-stage 5-inch phototube made from borosilicate glass with a bialkali (CsSb) photocathode, which has been modified (R877RG-105) in order to fulfill the GBM mechanical load-requirements.

With their energy range extending between ~ 0.2 and ~ 40 MeV, two BGO detectors provide the overlap in energy with the LAT instrument and are crucial for in-flight inter-instrument calibration. The two cylindrical BGO crystals (see Fig. 4) have a diameter and a length of 12.7 cm (5”) and are mounted on opposite sides of the Fermi spacecraft (see Fig. 1), providing nearly a 4 π sr FoV. The BGO housings are made of CFRP (Carbon Fibre Reinforced Plastic), which provides the light tightness and improves the mechanical stability of the BGO unit. For thermal reasons, the interface parts are fabricated of Titanium. On each end, the circular side windows of the crystal are polished in mirror quality and are viewed by a PMT (same type as used for the NaI detectors). Viewing the crystal by two PMTs guarantees a better light collection and a higher level of redundancy.

On the top: Schematic cross-section of a GBM BGO detector. The right hand side of the schematic is a cut away view, whereas the left hand side is an external view. The central portion contains the BGO crystal (light blue), which is partially covered by the outer surface of the detector’s assembly. A picture of a BGO detector flight unit taken in the laboratory during system level calibration measurements is shown in the bottom panel

The output signals of all PMTs (for both NaIs and BGOs) are first amplified via linear charge-sensitive amplifiers. The preamplifier gains and the HVs are adjusted so that they produce a ~5 V signal for a 1 MeV gamma-ray incident on a NaI detector and for a 30 MeV gamma-ray incident on a BGO detector. Due to a change of the BGO HV settings after launch, this value changed to 40 MeV, thus extending the original BGO energy range [8]. Signals are then sent through pulse shaping stages to an output amplifier supplying differential signals to the input stage of the DPU, which are combined by a unity gain operational amplifier in the DPU before digitizing. In the particular case of BGO detectors, outputs from the two PMTs are divided by two and then added at the preamplifier stage in the DPU.

In the DPU, the detector pulses are continuously digitized by a separate flash ADC at intervals of 0.1 μs. The pulse peak is measured by separate software running in a Field Programmable Gate Array (FPGA). This scheme allows a fixed, energy-independent, commandable dead time for digitization. The signal processor digitizes the amplified PMT anode signals into 4096 linear channels. Due to telemetry limitations, these channels are mapped on-board (pseudo-logarithmic compression), by means of uploaded look-up tables, into (1) 128-channel resolution spectra, with a nominal temporal resolution of 4.096 s (Continuous High SPECtral resolution or CSPEC data) and (2) spectra with a poorer spectral resolution of eight channels and a better temporal resolution of 0.256 s (Continuous high TIME resolution or CTIME data). The look-up tables were defined with the help of the on-ground channel-energy relations (see Section 4.2). Moreover, time-tagged event (TTE) data are continuously stored by the DPU. These data consist of individually digitized pulse height events from the GBM detectors which have the same channel boundaries as CSPEC and 2 μs resolution. TTE data are transmitted only when a burst trigger occurs or by command. More details on the GBM data types as well as a block diagram of the GBM flight hardware can be found in [24].
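The channel compression described above can be illustrated with a minimal sketch. The actual flight look-up tables are not given in this paper, so the edge rule below (geometric spacing, forced to advance by at least one input channel) and the names `build_lookup_table` and `compress` are purely hypothetical; the sketch only shows how 4096 linear channels might be rebinned into 128 (CSPEC-like) or 8 (CTIME-like) channels.

```python
def build_lookup_table(n_in=4096, n_out=128):
    """Return n_out + 1 ascending channel edges covering [0, n_in].

    Hypothetical rule: edges follow a geometric progression, but each
    edge must advance by at least one input channel, which makes the
    mapping linear at low channels and logarithmic at high channels.
    """
    edges = [0]
    for i in range(1, n_out + 1):
        target = round(n_in ** (i / n_out))
        edges.append(max(target, edges[-1] + 1))
    edges[-1] = n_in  # make sure the last bin closes the full range
    return edges


def compress(spectrum, edges):
    """Sum the counts of a linear spectrum into the bins defined by edges."""
    return [sum(spectrum[lo:hi]) for lo, hi in zip(edges[:-1], edges[1:])]


cspec_edges = build_lookup_table(4096, 128)   # CSPEC-like binning
ctime_edges = build_lookup_table(4096, 8)     # CTIME-like binning
flat = [1] * 4096                             # toy spectrum: one count per channel
cspec = compress(flat, cspec_edges)
ctime = compress(flat, ctime_edges)
```

Whatever rule is chosen, the compression conserves the total number of counts; only the channel boundaries change.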

Besides processing signals from the detectors, the DPU processes commands, formats data for transmission to the spacecraft and controls high and low voltage (HV and LV) to the detectors. Changes in the detector gains can be due to several effects, such as temperature changes of the detectors and of the HV power supply, variations in the magnetic field at the PMT, and PMT aging. GBM adopts a technique previously employed on BATSE, that is Automatic Gain Control (AGC). In this way, long timescale gain changes are compensated by the GBM flight software by adjusting the PMT HV to keep the background 511 keV line at a specified energy channel.

3 Calibration campaigns

To enable the location of a GRB and to derive its spectrum, a detailed knowledge of the GBM detector response is necessary. The information regarding the detected energy of an infalling gamma-ray photon, which is dependent on the direction from where it entered the detector, is stored into a response matrix. This must be generated for each detector using computer simulations. The actual detector response at discrete incidence angles and energies has to be measured to verify the validity of the simulated responses. The complete response matrix of the whole instrument system (including LAT and the spacecraft structure) is finally created by simulation of a dense grid of energies and infalling photon directions using the verified simulation tool [21].
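As a rough illustration of how a response matrix is used, the sketch below folds a photon spectrum through a toy matrix for a single incidence direction. The matrix values, the binning and the function name `fold` are assumptions made for this example only and do not come from the GBM response.

```python
def fold(response, photon_flux):
    """Predict counts per channel: counts[c] = sum over e of R[c][e] * flux[e].

    response[c][e] is the effective area (cm^2) for a photon in energy
    bin e to be recorded in channel c; photon_flux[e] is the number of
    photons per cm^2 in energy bin e.
    """
    return [sum(r * f for r, f in zip(row, photon_flux)) for row in response]


# Toy 3-channel x 2-energy-bin response (hypothetical numbers).
# Off-diagonal terms stand for partial energy deposition.
toy_response = [
    [10.0, 2.0],   # channel 0: full-energy peak of bin 0, Compton tail of bin 1
    [0.0, 5.0],    # channel 1
    [0.0, 3.0],    # channel 2
]
counts = fold(toy_response, [1.0, 2.0])
```

In practice one such matrix is needed for every incidence direction, which is why the simulated grid of energies and directions has to be dense.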

The following subsections are dedicated to the descriptions of the three calibration campaigns at detector level. The most complete calibration of all flight and engineering qualification models was performed at the MPE laboratory using a set of calibrated radioactive sources whose type and properties are listed in Table 1.

Due to the lack of radioactive sources producing lines below 60 keV and in order to study spatial homogeneity properties of NaI detectors, a dedicated calibration campaign was performed at PTB/BESSY. Here, four NaI detectors (FM 01, FM 02, FM 03 and FM 04) were exposed to a monochromatic X-ray beam with energy ranging from 10 to 60 keV, and the whole detector’s surface was additionally raster-scanned at different energies with a pencil beam perpendicular to the detector’s surface.

In order to extend the BGO calibration range, another dedicated calibration campaign was carried out at the SLAC laboratory. Here, the BGO EQM detector was exposed to three gamma-ray lines (up to 17.6 MeV) produced by the interaction of a proton beam of ~340 keV, generated with a small Van-de-Graaff accelerator, with a LiF-target. A checklist showing which detectors were employed at each detector-level calibration campaign is given in Table 4 (columns 4 to 6).

3.1 Laboratory setup and calibration instrumentation at MPE

The measurements performed at MPE resulted in an energy calibration with various radioactive sources and, in addition, a calibration of the angular response of the detectors at different incidence angles of the radiation. The detectors and the radioactive sources were fixed on special holders which were placed on wooden stands above the laboratory floor to reduce scattering from objects close to them (see Fig. 5). The radioactive sources were placed almost always at the same distance (d) from the detector. The position of the detector’s wooden stand with respect to the laboratory was never changed during measurements. Due to the unavailability of the flight DPU and PSB, commercial HV and LV power supplies were used and the data were read out by a Breadboard DPU.

The determination of the angular response of the detectors was achieved in the following way. The center of the NaI detector calibration coordinate system was chosen at the center of the external surface of the Be-window of the detector unit, with the X axis pointing toward the radioactive source, the Y axis pointing to the left, and the Z axis pointing up (see Fig. 8, left panel). The detectors were mounted on a specially developed holder in such a way that the front of the Be-window was parallel to the Y/Z plane (if the detector is pointed to the source; i.e. 0° position) and so that detectors could be rotated around two axes in order to achieve all incidence angles of the radiation. The detector rotation axes were the Z-axis (azimuth) and the X-axis (roll). For BGO detectors, the mounting was such that the very center of the detector (center of crystal) was coincident with the origin of the coordinate system and the 0° position was defined as the long detector axis coincident with the Y-axis. The BGO detectors were only rotated around the Z-axis, and no roll angles were measured in this case.

3.2 NaI low-energy calibration at PTB/BESSY

The calibration of the NaI detectors in the low photon energy range down to 10 keV was performed with monochromatic synchrotron radiation with the support of the PTB. A pencil beam of about 0.2 × 0.2 mm² was extracted from a wavelength-shifter beamline, the “BAMline” [29], at the electron storage ring BESSY II, which is equipped with a double-multilayer monochromator (DMM) and a double-crystal monochromator (DCM) [13]. In the photon energy range from 10 keV to 30 keV DCM and DMM were operated in series to combine the high resolving power of the DCM with the high spectral purity of the DMM. Above 30 keV, a high spectral purity with higher order contributions below \(10^{-4}\) was already achieved by the DCM alone. The tunability of the photon energy was also used to investigate the detectors in the vicinity of the Iodine K-edge at 33.17 keV.

The absolute number of photons in the pencil beam was independently determined by two different methods: firstly by taking at each photon energy a spectrum with a high-purity germanium detector (HPGe) for which a quantum detection efficiency (QDE) of unity had been determined earlier, and secondly by using silicon photodiodes which in turn had been calibrated against PTB primary detector standards such as a cryogenic radiometer and a free-air ionization chamber [23]. As these photodiodes are operated in the photovoltaic mode, the photon fluxes had to be about four orders of magnitude higher than for the counting detectors. Different pairs of Cu and Al filters were designed for different photon energy ranges so that the transmittance of one filter was in the order of 1% which can easily be measured. Two identical filters were used in series to achieve the required reduction in flux by four orders of magnitude. A picture of the calibration setup is shown in Fig. 6.
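The filter arithmetic can be written out explicitly. Assuming that each filter of a pair has a transmittance \(T \approx 10^{-2}\) at the energy of interest and that the two filters attenuate the beam independently, the combined transmittance is

\[
T_{\mathrm{pair}} = T \cdot T \approx \left(10^{-2}\right)^{2} = 10^{-4},
\]

which corresponds to the required flux reduction of four orders of magnitude, while each single factor of \(10^{-2}\) remains large enough to be measured directly.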

NaI FM 04 detector photographed inside the measurement cave of the BAMline during the low-energy calibration campaign at the electron storage ring BESSY II in Berlin. The HPGe detector is located left of the GBM detector. Both are mounted on the XZ table which was moved by step motors during the scans. The beam exit window of the BAMline is located below the red box visible at the top right corner. The Cu and Al filters holders are placed horizontally between the window and the detectors

The effective area of the detectors as a function of the photon energy was determined by scanning the detectors at discrete locations in x- and y-direction over the active area while the pencil beam was fixed in space. During the scan, the intensity was monitored with a photodiode operated in transmission. The effective area is just the product of the average QDE and the active area. In addition, the spatial homogeneity of the QDE was determined by these measurements (see Section 4.4).
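The relation between effective area, QDE and active area can be sketched as follows. This is only an illustration: the raster points are assumed to sample the crystal face uniformly (so that a plain mean is the area-weighted average), the active area is assumed to be the full 12.7 cm diameter crystal face, and the QDE values and the function name `effective_area` are hypothetical.

```python
import math


def effective_area(qde_samples, diameter_cm=12.7):
    """Effective area (cm^2) = mean QDE over the scan x active area.

    Assumes equally weighted raster points and a circular active area
    equal to the crystal face (both are assumptions of this sketch).
    """
    active_area = math.pi * (diameter_cm / 2.0) ** 2
    mean_qde = sum(qde_samples) / len(qde_samples)
    return mean_qde * active_area


geometric_area = effective_area([1.0])          # ideal detector, QDE = 1 everywhere
example_area = effective_area([0.5, 0.7])       # hypothetical QDE samples
```

With a QDE of unity the function simply returns the geometric area of the crystal face, about 126.7 cm².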

The measurements presented in this paper were recorded at 18 different energies, namely from 10 to 20 keV in 2 keV steps, from 30 to 37 keV in 1 keV steps and at 32.8, 40, 50 and 60 keV. These accurate measurements allowed the low-energy behavior of the channel-energy relation of the NaI detectors to be determined precisely (see Section 4.2.1) and the relation to be fine-tuned in the energy range around the Iodine K-edge at 33.17 keV (see Section 4.2.2). Moreover, three raster scans of the detector’s surface were performed at 10, 36 and 60 keV in order to study the detectors’ spatial homogeneity (see Section 4.5 for more details).

3.3 BGO high-energy calibration at SLAC

In order to better constrain the channel-energy relation and the energy resolution at energies higher than 4.4 MeV, an additional high-energy calibration of the BGO EQM detector was performed at SLAC with a small electrostatic Van-de-Graaff accelerator [19]. This produces a proton beam up to ~350 keV and was already used to verify the LAT photon effective area at the low end of the Fermi energy range (20 MeV). When the proton beam produced by the Van-de-Graaff accelerator strikes a LiF target, which terminates the end of the vacuum pipe (see Fig. 7, left panel), gammas with energies of 6.1 MeV, 14.6 MeV, and 17.5 MeV are produced via the reactions

\[
p + {}^{7}\mathrm{Li} \rightarrow {}^{8}\mathrm{Be}^{*} \rightarrow {}^{8}\mathrm{Be}^{(*)} + \gamma \quad (17.5\ \mathrm{MeV\ or\ } 14.6\ \mathrm{MeV}) \tag{1}
\]

\[
p + {}^{19}\mathrm{F} \rightarrow {}^{16}\mathrm{O}^{*} + \alpha \rightarrow {}^{16}\mathrm{O} + \alpha + \gamma \quad (6.1\ \mathrm{MeV}) \tag{2}
\]

The highly excited 17.5 MeV state of ⁸Be is created by protons in a resonance capture process at 340 keV on ⁷Li (see Eq. 1). At lower energies, photons are still produced from the Breit-Wigner tail (Γ = 12 keV) of the ⁸Be* resonance. The narrow gamma-ray line at 17.5 MeV is produced by the transition to the ⁸Be ground state, in which the quantum energy is determined by \(h\nu = Q + \tfrac{7}{8} E_p\), where \(Q\) = 17.2 MeV is the energy available from the mass change and \(E_p\) = 340 keV is the proton beam energy. The gamma-ray line observed at 14.6 MeV, which corresponds to transitions to the first excited state of ⁸Be, is broadened with respect to the experimental resolution, because of the short lifetime of the state against decay into two alpha-particles. Finally, Eq. 2 shows that 6.1 MeV gamma-rays are generated when the narrow (Γ = 3.2 keV) ¹⁶O resonance at 340 keV is hit.
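Inserting the quoted values into the expression for the quantum energy gives a quick consistency check of the 17.5 MeV line:

\[
h\nu = Q + \tfrac{7}{8}\,E_p = 17.2\ \mathrm{MeV} + \tfrac{7}{8} \times 0.340\ \mathrm{MeV} \approx 17.2\ \mathrm{MeV} + 0.30\ \mathrm{MeV} \approx 17.5\ \mathrm{MeV}.
\]

The factor 7/8 is the target-to-total mass ratio of the p + ⁷Li system, i.e. the fraction of the proton's laboratory energy that is available in the center-of-mass frame.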

BGO EQM detector photographed in the SLAC laboratory during the high-energy calibration campaign (left panel). The grey box on the left is the end of the proton beam, inside which the LiF target was placed in order to react and produce the desired gamma lines (see Eq. 1 and 2). The right panel shows a simulation of the gamma-ray interaction with the detector. Only gamma-rays whose first interaction is within the detector crystal are shown for clarity

For performing the measurements, the EQM detector was placed as close as possible to the LiF-target at an angle of ~45° with respect to the proton-beam line, in order to guarantee a maximized flux of the generated gamma-rays. Unfortunately, measurements for the determination of the detector’s effective area could not be obtained, since the gamma-ray flux was not closely monitored.

3.4 Simulation of the laboratory and the calibration setup at MPE

In order to simulate the spectra recorded during the calibration campaign at MPE, and thereby gain confidence in the simulation software used, a very detailed model of the environment in which the calibration took place had to be created. The detailed modeling of the laboratory was necessary as all scattered radiation from the surrounding material near and far had to be included to realistically simulate all the radiation reaching the detector. Background measurements with no radioactive sources present were taken to subtract the ever-present natural background radiation in the laboratory. However, the source-induced “background” radiation created by scattered radiation of the non-collimated radioactive sources had to be included in the simulation to enable a detailed comparison with the measured spectra.

The detailed modeling of the calibration setup of the MPE laboratory was performed using the GEANT4-based GRESS simulation software provided by the collaboration team based at the Los Alamos National Laboratory (LANL, USA), who also provided the software model of the detectors [14]. The modeling of the whole laboratory included laboratory walls (concrete), windows (aluminum, glass), doors (steel), tables (wood, aluminum), cupboards (wood), a shelf (wood), the electricity distributor closet (steel), the optical bench (aluminum, granite) and the floor (PVC). Moreover, detector and source stands (wood), source holder (PVC, acrylic) and detector holder (aluminum) were modeled in great detail (see Fig. 8, left panel). A summary of the comparison of the measurements and the simulation, with respect to the influence of the various components of the calibration environment, is given in Table 2 and a sample plot of the scattered radiation is given in the right panel of Fig. 8.

Simulation of the laboratory environment. The left panel shows a top view of the laboratory with all objects of the simulation model that were present during the calibration campaign. Also shown is the coordinate system adopted (X and Y axis of the right-handed system; +Z axis pointing upward). The right panel shows an example of the simulated scattering of the radiation in the laboratory. In a view from the -Y axis, the paths of the first 100 photons interacting with the detector are shown. The radioactive source emitted 1.275 MeV radiation isotropically. The major part of the detected photons is directly incident, but a significant fraction is radiation scattered by the laboratory environment (see Table 2)

Additional simulations of the other calibration campaigns, in particular for the PTB/BESSY one, are planned. In the case of SLAC measurements, the simulation tools were only used to determine the ratios between full-energy peaks and escape peaks (see Fig. 7, right panel): no further simulation of the calibration setup is foreseen.

4 Calibration data analysis and results

4.1 Processing of calibration runs

During each calibration campaign, all spectra measured by the GBM detectors were recorded together with the information necessary for the analysis. Shortly before or after the collection of data runs, additional background measurements were recorded for longer periods. Every run was then normalized to an exposure time of 1 h, and the background was subsequently subtracted from the data. In the case of measurements performed at PTB/BESSY, the natural background contribution could be neglected due to the very high beam intensities and the short measurement times.
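The normalization and background subtraction described above can be sketched in a few lines. The function names and the toy numbers are hypothetical; the sketch only assumes that source and background runs share the same channel binning and that exposure times are known in seconds.

```python
def rate_per_hour(counts, exposure_s):
    """Scale a spectrum (counts per channel) to a 1 h exposure."""
    scale = 3600.0 / exposure_s
    return [c * scale for c in counts]


def background_subtract(src_counts, src_exposure_s, bkg_counts, bkg_exposure_s):
    """Normalize both runs to 1 h, then subtract channel by channel."""
    src = rate_per_hour(src_counts, src_exposure_s)
    bkg = rate_per_hour(bkg_counts, bkg_exposure_s)
    return [s - b for s, b in zip(src, bkg)]


# Toy example: a 30 min source run and a 2 h background run, two channels.
net = background_subtract([100, 50], 1800.0, [40, 20], 7200.0)
```

Normalizing before subtracting matters because the background runs were recorded for longer periods than the data runs.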

Figures 9, 10, 11 and 12 show a series of sample spectra collected with detectors NaI FM 04, BGO FM 02 and BGO EQM. These particular detectors were chosen arbitrarily to present the whole analysis, since it was checked that all other detectors behave in an identical way (see Section 4.3 and Section 4.4). In Fig. 9, four calibration runs recorded at PTB/BESSY at different photon energies (10, 33, 34 and 60 keV) highlight the appearance of an important feature of the NaI spectra. Below the characteristic Iodine (I) K-shell binding energy of 33.17 keV (or “K-edge” energy), spectra display only the full-energy peak, which moves toward higher channel numbers with increasing photon energy (see panels a and b). For energies higher than the K-edge energy, a second peak appears to the left of the full-energy peak (see panels c and d), which is caused by the escape of characteristic X-rays resulting from K-shell transitions (fluorescence of Iodine). The energy of this fluorescence escape peak equals that of the full-energy peak minus the X-ray line energy [4]. The contributions of the different Iodine Kα and Kβ fluorescence lines cannot be resolved by the detector.
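The position of the escape peak follows from a simple subtraction, sketched below. The K-edge energy is taken from the text; the X-ray line energy of 28.6 keV (roughly the Iodine Kα energy) is an assumed value that is not quoted in this paper, and the function name is hypothetical.

```python
IODINE_K_EDGE_KEV = 33.17    # K-shell binding energy, from the text
IODINE_K_ALPHA_KEV = 28.6    # assumed X-ray line energy, NOT from the text


def escape_peak_energy(photon_kev, xray_kev=IODINE_K_ALPHA_KEV):
    """Energy of the fluorescence escape peak, or None below the K-edge.

    Below the K-edge no K-shell vacancy can be created, so no escape
    peak is expected and only the full-energy peak appears.
    """
    if photon_kev <= IODINE_K_EDGE_KEV:
        return None
    return photon_kev - xray_kev


below_edge = escape_peak_energy(33.0)   # no escape peak expected
at_34_kev = escape_peak_energy(34.0)
at_60_kev = escape_peak_energy(60.0)
```

Because the Kα and Kβ lines are not resolved by the detector, a single effective X-ray energy is used here; a more careful treatment would weight the individual lines.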

Spectra measured with monochromatic synchrotron radiation (SR) at PTB/BESSY with detector NaI FM 04. Results for four different photon energies are shown: a 10 keV, b 33 keV, c 34 keV, and d 60 keV. The two top panels (a and b) display spectra collected below the Iodine K-edge energy (i.e. <33.17 keV). Above this energy (panels c and d), the characteristic Iodine escape peak is clearly visible to the left of the full-energy peak

NaI spectra from radioactive sources recorded at MPE are shown in Fig. 10. A detailed description of the full-energy peaks characterizing every source is given in Section 4.1.1. Besides full-energy and Iodine escape peaks, spectra from high-energy radioactive lines show more features (see e.g. the ¹³⁷Cs spectrum in panel f), such as the low-energy X radiation (due to internal scattering of gamma-rays very close to the radioactive material) at the very left of the spectrum, the Compton distribution, which is a continuous distribution due to primary gamma-rays undergoing Compton scattering within the crystal, and a backscatter peak at the low-energy end of the Compton distribution.

Similarly, BGO spectra from radioactive sources collected at MPE and SLAC with detector FM 02 and BGO EQM are presented in Figs. 11 and 12. The spectrum produced by the Van-de-Graaff proton beam at SLAC, which was measured by the spare detector BGO EQM, is shown in panel c of Fig. 12.

4.1.1 Analysis of the full-energy peak

Radioactive lines emerge from the measured spectra as peaks of various shapes and with multiple underlying contributions. Depending on the specific spectrum, one or more Gaussians in the form

\[
G(x) = \frac{A}{w \sqrt{\pi / (4 \ln 2)}} \, \exp\!\left( -\frac{4 \ln 2 \, (x - x_c)^2}{w^2} \right)
\]

were added in order to fit the data. The three free parameters are (1) the peak area \(A\), (2) the peak center \(x_c\), and (3) the full width at half maximum \(w\), which is related to the standard deviation (σ) of the distribution through the relation \(w = 2\sqrt{2 \ln 2} \cdot \sigma \approx 2.35 \cdot \sigma\). For the analysis of the measured full-energy peaks, further background components (linear, quadratic or exponential) and Gaussian components had to be modeled in addition to the main Gaussian(s), in order to account for non-photo-peak contributions, such as the overlapping Compton distributions, the backscattered radiation caused by the presence of the uncollimated radioactive source in the laboratory or other unknown background features. In the case of PTB/BESSY spectra, asymmetries appearing at the low energy tail of the full-energy peak were neglected by choosing a smaller region of interest and fitting only the right side of the peak. As already mentioned, no background was modeled under these spectra.
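A Gaussian parametrized by area, center and FWHM can be written down directly, as in the sketch below. It assumes the standard area-normalized form implied by the three parameters \(A\), \(x_c\) and \(w\); the function names and the numerical values are illustrative only and are not taken from the calibration analysis.

```python
import math

LN2 = math.log(2.0)


def gaussian(x, area, center, fwhm):
    """Area-normalized Gaussian expressed through its FWHM."""
    norm = area / (fwhm * math.sqrt(math.pi / (4.0 * LN2)))
    return norm * math.exp(-4.0 * LN2 * (x - center) ** 2 / fwhm ** 2)


def fwhm_to_sigma(fwhm):
    """Convert FWHM to standard deviation: w = 2 * sqrt(2 ln 2) * sigma."""
    return fwhm / (2.0 * math.sqrt(2.0 * LN2))


# Illustrative peak: area 500 counts, center at channel 100, FWHM 10 channels.
peak = gaussian(100.0, 500.0, 100.0, 10.0)
half = gaussian(105.0, 500.0, 100.0, 10.0)   # half a FWHM away from the center

# Crude Riemann sum over channels 0..200 to check the normalization.
step = 0.01
total = sum(gaussian(i * step, 500.0, 100.0, 10.0) * step for i in range(20001))
```

The value at a distance of half the FWHM from the center is exactly half the peak height, and the integral recovers the area parameter, which is what makes \(A\) and \(w\) convenient fit parameters.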

Moreover, since NaI and BGO detectors are not always able to fully separate two lines lying close to each other, which then appear as a single broadened peak, particular constraints between the line parameters of the individual peak components were fixed before running the fitting routines. The relation between two line areas (\(A_1\) and \(A_2\)) arises from the transition probability \(P\) of the single line energies (see Table 1, column 4). A ratio between areas, \(K_{\mathrm{area}} = P_1/P_2\), was obtained by considering those probabilities together with the transmission probability for the detector entrance window and the relative transmission of the photons between source and detector. Finally, the ratios were cross-checked and determined through detailed simulations performed for seven double lines measured with NaI and BGO detectors, which are listed in Table 3.
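One possible way to build and apply such an area constraint is sketched below. The text does not state how the transmission factors enter, so the multiplicative combination used here is an assumption, as are the function names and all numerical values.

```python
def area_ratio(p1, p2, t_window1=1.0, t_window2=1.0, t_path1=1.0, t_path2=1.0):
    """Expected ratio A1/A2 of two unresolved lines.

    p1, p2: transition probabilities of the two lines.
    t_window*: transmission of the entrance window at each line energy.
    t_path*: transmission between source and detector at each line energy.
    Multiplying the factors is an assumption of this sketch.
    """
    return (p1 * t_window1 * t_path1) / (p2 * t_window2 * t_path2)


def constrained_areas(total_area, k_area):
    """Split a total peak area into (A1, A2) with A1 / A2 = k_area."""
    a2 = total_area / (1.0 + k_area)
    return k_area * a2, a2


k = area_ratio(0.6, 0.3)                 # hypothetical probabilities
a1, a2 = constrained_areas(300.0, k)     # hypothetical total area
```

Fixing the ratio removes one free parameter per double line, which helps to stabilize fits of peaks that the detector cannot resolve.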

An important consideration when fitting mathematical functions to these data is that the calculated statistical errors of the fit parameters are always within 0.1% in the case of line areas and FWHM, or even 0.01% in the case of line centers. Such extreme precision causes very high chi-square values in subsequent analysis, as in the determination of the channel-energy relation, which extends over an entire energy decade in the case of NaI detectors. Moreover, it was noticed that slightly changing the initial fitting conditions, such as the region of interest around the peak or the type of background, changed the parameter values substantially with respect to a preceding analysis. This effect is particularly strong in the analysis of multiple peaks, where more Gaussians and background functions are added and the number of free parameters increases. In order to account for these effects and to obtain a more realistic evaluation of the fit parameter errors, we analysed one spectrum per source (measured at normal incidence by detectors NaI FM 04 and BGO FM 02) several times, each time with different initial fitting conditions. The procedure was usually repeated ~10–20 times, i.e. until the systematic contribution no longer increased and a good chi-square value of the individual fit was produced, thus obtaining a dataset of fit parameters and respective errors. For each such dataset, standard deviations (σ) were calculated, resulting in values of the order of 1% for line areas and FWHMs and of 0.1% for line centers; these were finally added to the fit error, thus obtaining realistic errors.
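The error estimate described above can be sketched as follows. This is a minimal illustration under stated assumptions: the systematic term is taken to be the sample standard deviation of the best-fit values over the repeated fits, and since the text does not say whether it was added to the fit error linearly or in quadrature, both options are exposed. The function name and the numbers in the usage lines are hypothetical.

```python
import math
import statistics


def realistic_error(fit_values, fit_errors, in_quadrature=True):
    """Combine the statistical fit error with the scatter between repeated fits.

    fit_values: best-fit value of one parameter from each repeated fit
    fit_errors: statistical error reported by each of those fits
    """
    scatter = statistics.stdev(fit_values)      # systematic term (assumption)
    statistical = statistics.mean(fit_errors)   # typical statistical fit error
    if in_quadrature:
        total = math.hypot(statistical, scatter)
    else:
        total = statistical + scatter
    return statistics.mean(fit_values), total


# Hypothetical line-center values (channels) from five refits of one spectrum
centers = [100.0, 101.0, 99.0, 100.5, 99.5]
errors = [0.1] * 5
mean_center, center_error = realistic_error(centers, errors)
```

In this hypothetical example the scatter between refits dominates the statistical error, which is the situation the text describes.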

The fitting results for 17 lines measured by NaI FM 04 are presented graphically in Figs. 13 and 14. In each panel the fitted line energies are given in the top right corner. Fits to the data are shown in red. Gaussian components, describing the full-energy peaks, and background components are shown as solid blue and dotted green curves, respectively. Dashed blue curves represent either background (Fig. 13, panels b, c, d, and f) or Iodine escape peaks (Fig. 14, panels b and c). For energies above 279.2 keV (Fig. 14, panels d to h), the Iodine escape peak is no longer fitted as an extra component but is absorbed by the full-energy peak. The tails observed at energies lower than 20 keV (Fig. 13, panels a and b) are presumably due to scattering from the entrance window materials or to some L-shell escape X-rays.

Full-energy peak analysis of NaI lines. (a-f) Data points (in black) are plotted with statistical errors. Line fits (solid red curves) arise from the superposition of different components: (i) one (or more) Gaussian functions describing the full-energy peak(s) (solid blue curves); (ii) secondary Gaussian functions modeling the Iodine escape peaks or other unknown background features (dashed blue curves); (iii) a constant, linear, quadratic or exponential function accounting for background contributions (dotted green curves). For PTB/BESSY line analysis (panels a and e), the background contributions could be neglected and only the fit to the full-energy peak was performed starting from 4 to 10 channels before the maximum

Full-energy peak analysis of NaI lines. (a-h) Data points (in black) are plotted with statistical errors. Line fits (solid red curves) arise from the superposition of different components: (i) one (or more) Gaussian functions describing the full-energy peak(s) (solid blue curves); (ii) secondary Gaussian functions modeling the Iodine escape peaks or other unknown background features (dashed blue curves); (iii) a constant, linear, quadratic or exponential function accounting for background contributions (dotted green curves). For PTB/BESSY line analysis (panel a), the background contributions could be neglected and only the fit to the full-energy peak was performed starting from 4 to 10 channels before the maximum

Results for 16 fitted BGO lines are shown in Figs. 15 and 16. Each full-energy peak was modeled with a single Gaussian (solid blue curves) over an exponential background (dotted green curves). The line from 57Co at 124.59 keV (Fig. 15, panel a) lies outside the nominal BGO energy range (200 keV–40 MeV) and shows a strong asymmetric broadening on the left of the full-energy peak, which can be described by an additional Gaussian component (dashed blue curve). Panels a, c, e, f and g of Fig. 16 show fitted lines from spectra taken at SLAC with BGO EQM. For some spectra, energies of the first and second pair production escape peaks, which lie ~ 511 keV and ~ 1 MeV below the full-energy peak, respectively, are reported in the top right of each plot. Some of these secondary lines were included in the determination of the BGO channel-energy relation (see Section 4.2.4).

Full-energy peak analysis of BGO lines. Data points (in black) are plotted with statistical errors. Line fits (solid red curves) arise from the superposition of different components: (i) one (or more) Gaussian functions describing the full-energy peak(s) and the pair production escape peaks (solid blue curves); (ii) a constant, linear, quadratic or exponential function accounting for background contributions (dotted green curves) (a–h)

Full-energy peak analysis of BGO lines. Data points (in black) are plotted with statistical errors. Line fits (solid red curves) arise from the superposition of different components: (i) one (or more) Gaussian functions describing the full-energy peak(s) and the pair production escape peaks (solid blue curves); (ii) a constant, linear, quadratic or exponential function accounting for background contributions (dotted green curves) (a–g)

4.2 Channel-energy relation

4.2.1 NaI nonlinear response

Several decades of experimental studies of the response of NaI(Tl) to gamma-rays have indicated that the scintillation efficiency mildly varies with the deposited energy [7, 16, 27, 28]. Such nonlinearity must be correctly taken into account when relating the pulse-height scale (i.e. the channel numbers) to gamma-ray energies. Figure 17 shows the pulse height per unit energy (normalized to a value of unity at 661.66 keV) versus incident photon energy E γ as measured by detector NaI FM 04. The data points include radioactive source measurements performed at MPE (triangles) together with additional low-energy measurements taken at PTB/BESSY between 10 and 60 keV (squares). In this case, nonlinearity clearly appears as a dip in the plot at a characteristic energy corresponding to the K-shell binding energy in Iodine, i.e. 33.17 keV (as previously mentioned in Section 4.1). Photoelectrons ejected by incident gamma-rays just above the K-shell absorption edge have very little kinetic energy, so that the response drops. Just below this energy, however, K-shell ionization is not possible and L-shell ionization takes place. Since the binding energy is lower, the ejected photoelectrons are more energetic, which causes a rise in the response.

The measurements taken at PTB/BESSY with four NaI detectors (see Section 3.2) are particularly necessary for computing the NaI response in the region around the K-edge energy, since the radioactive sources used at MPE sample this region with only four lines. Three of these (22.1, 25 and 32.06 keV) belong to double peaks, and the fourth, the 57Co line at 14.41 keV, shows asymmetries and broadening (see Fig. 13, panel a). From the collected PTB/BESSY data, line fitting results were obtained for 19 spectra collected at energies between 10 and 60 keV. Differences in gain settings between the detectors during the two calibration campaigns were carefully taken into account.

In order to compute a reliable NaI channel-energy relation, the energy range was initially split into two regions, one below and one above the K-edge energy. For E < 33.17 keV, data were fitted with a second degree polynomial (parabola), while for E > 33.17 keV the following empirical function was adopted:

\[ E(x_c) = a + b\,\sqrt{x_c} + c\,x_c + d\,\ln x_c \qquad (4) \]

where E is the line energy in keV and \(x_c\) is the line-center position in channels.

Figure 18 shows an example of the channel-energy relation calculated for detector FM 04 in the low-energy (left panel) and in the high-energy range (right panel). Radioactive sources data (triangles) and PTB/BESSY data (squares) are fitted together with a second degree polynomial (left panel, red curve) and with the empirical function of Eq. 4 (right panel, blue curve). Analysis of all detectors shows similar results. In particular, all calculated relations give fit residuals below 1%, as required. The obtained fit parameters are reported in the figure’s caption.

Channel-energy relation calculated below (left panel) and above (right panel) the K-edge energy for detector NaI FM 04. MPE data points (triangles) and PTB/BESSY data points (squares) are fitted together with a second degree polynomial below 33.17 keV (left panel, red curve) and with the empirical function (see Eq. 4) above 33.17 keV (right panel, blue curve). Residuals to the fits are given in the panel under the respective plots. For the quadratic fit below the K-edge, the following fit parameters were obtained: a = 1.08 ± 0.05, b = 0.1495 ± 0.0009, c = (2.64 ± 0.04) · 10⁻⁴, with a reduced χ² of 23. In the case of the empirical fit above the K-edge, the fit parameters are a = 94.7 ± 1.4, b = 2.73 ± 0.09, c = 0.2306 ± 0.0008, d = −26.2 ± 0.4, with a reduced χ² of 60

4.2.2 The iodine K-edge region

A closer look at the region around the K-edge energy (see Fig. 19, left panel) makes the discrepancy between the low- and high-energy fits clearly visible. Assigning a unique energy to every channel is therefore a more delicate issue. A possible way to resolve this ambiguity is to divide the channel domain into three parts, as shown in Fig. 19, right panel. Here \(x_e\) and \(x_q\) represent the channels where the empirical and quadratic fit, respectively, assume the value of \(E_K\) = 33.17 keV (red and blue diamonds). For all channels in the interval \(x_e < x < x_q\), a linear relation was calculated in order to assign an average energy to each channel. The green triangles represent the averages of the two energies calculated with both relations (diamonds and circles). For the analysis of laboratory calibrations, we calculated a K-edge region (region 2) of about five to seven channels for every NaI detector. On orbit, however, due to a much smaller number of channels (128), the flight DPU groups these channels into one “transition” channel (per detector), which is calculated through lookup tables.

On the left: Channel-energy relation around the Iodine K-edge for detector NaI FM 04. Data points from radioactive source lines (triangles) and from synchrotron radiation (squares) are fitted together with a quadratic function below the K-edge energy (red curve) and with the empirical function above the K-edge energy (blue curve). Residuals to the fits are given in the panel under the plot. On the right: Schematic representation of the channel-energy relation around the Iodine K-edge energy. In region 1, for x < x e , the quadratic relation is adopted (red curve). In region 3, for x > x q , the empirical function is adopted (blue curve). The ambiguity arises at channel x e (region 2), which could in principle be equally described by both relations, i.e. we could assign to it either an energy E q (x e ) (red circle) or the K-edge energy E K (blue diamond). In order to avoid this ambiguity, a linear relation (green curve) has been derived in order to assign an average energy value to each channel in region 2 (green triangles)
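The three-region scheme can be illustrated with a short Python sketch using the NaI FM 04 fit parameters quoted in the caption of Fig. 18. Several points are assumptions rather than statements from the text: the empirical function is taken to have the form a + b·√x + c·x + d·ln x, the linear relation in region 2 is built by joining the averaged energies at \(x_e\) and \(x_q\), and all function names are illustrative.

```python
import math

E_K = 33.17  # Iodine K-edge energy in keV

# Fit parameters quoted for NaI FM 04 (caption of Fig. 18)
QUAD = (1.08, 0.1495, 2.64e-4)       # a, b, c of the quadratic fit (E < E_K)
EMP = (94.7, 2.73, 0.2306, -26.2)    # a, b, c, d of the empirical fit (E > E_K)


def e_quadratic(x):
    a, b, c = QUAD
    return a + b * x + c * x * x


def e_empirical(x):
    # Assumed functional form: a + b*sqrt(x) + c*x + d*ln(x)
    a, b, c, d = EMP
    return a + b * math.sqrt(x) + c * x + d * math.log(x)


def solve_channel(func, target, lo, hi, tol=1e-9):
    """Bisection for func(x) = target; func must increase on [lo, hi]."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if func(mid) < target:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


X_E = solve_channel(e_empirical, E_K, 100.0, 300.0)  # empirical fit reaches E_K
X_Q = solve_channel(e_quadratic, E_K, 100.0, 300.0)  # quadratic fit reaches E_K


def channel_to_energy(x):
    """Energy in keV assigned to channel x using the three-region scheme."""
    if x < X_E:                      # region 1: quadratic relation
        return e_quadratic(x)
    if x > X_Q:                      # region 3: empirical relation
        return e_empirical(x)
    # Region 2: straight line through the averaged energies at X_E and X_Q
    e_lo = 0.5 * (e_quadratic(X_E) + E_K)
    e_hi = 0.5 * (E_K + e_empirical(X_Q))
    return e_lo + (e_hi - e_lo) * (x - X_E) / (X_Q - X_E)
```

With these parameters the two fits reach the K-edge energy near channels 160 and 166, so region 2 comes out roughly six channels wide, in line with the five to seven channels quoted in the text.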

4.2.3 Simulation validation

In order to check the accuracy of the obtained channel-energy relation and to validate simulations presented in Section 3.4, radioactive source spectra were compared with simulated data. Figures 20 and 21 show the 14 previously analysed NaI lines (measured at normal incidence with detector FM 12; see footnote 6) as a function of energy, that is after applying the channel-energy conversion. Simulated unbroadened and broadened spectra are overplotted as green and red histograms, respectively. Sample spectra over the full NaI energy range comparing simulation and measurements can be found in [14].

Comparison between spectra collected with NaI FM 12, after channel-energy conversion, and simulated spectra for 6 radioactive source lines. Here, line fits and various components, previously described in Figs. 13 and 14, are shown as solid and dashed grey curves, respectively. Simulated broadened spectra are overplotted in red. Unbroadened spectra (green histograms) can be considered as guidelines for the exact energy positions of lines and secondary escape peaks (see text). The height of the unbroadened peaks is truncated in most plots to better show the comparison between real and broadened spectra (a–f)

Comparison between spectra collected with NaI FM 12, after channel-energy conversion, and simulated spectra for 5 radioactive source lines. Here, line fits and various components, previously described in Figs. 13 and 14, are shown as solid and dashed grey curves, respectively. Simulated broadened spectra are overplotted in red. Unbroadened spectra (green histograms) can be considered as guidelines for the exact energy positions of lines and secondary escape peaks (see text). The height of the unbroadened peaks is truncated in most plots to better show the comparison between real and broadened spectra (a–e)

Unbroadened lines are good guides for checking the exact position of the full-energy and escape peaks. A good example is the high-energy double peak of 57Co (Fig. 20, panel f), where simulations confirm the position of both radioactive lines at 122.06 and 136.47 keV, and the presence of the Iodine escape peak around ~90 keV. Still, some discrepancies are evident, e.g. at lower energies. Below 60 keV, the simulated data mostly show a higher number of counts than the real data, which is particularly visible for the 57Co line at 14.41 keV (Fig. 20, panel a). One likely cause is the uncertainty about the detailed composition of the radioactive source: the radioactive isotopes are contained in a small (1 mm) sphere of “salt”. Simulations including this salt sphere were performed and a factor of 3.8 difference in the perceived 14.41 keV line strength was found. The true answer lies somewhere between this and the simulation with no source material, as salt and radioactive isotope are mixed. For the general calibration simulation, a point-like sphere of radioactive material not surrounded by salt was used. Another possible explanation is the leakage of secondary electrons from the surface of the detector, leading to a lower absorbed energy. Further discrepancies at higher energies, which are visible e.g. in panels d and e of Fig. 21, are smaller than 1%.

4.2.4 BGO response

The determination of a channel-energy relation for the BGO detectors required the additional analysis of the high-energy data taken with the EQM module at SLAC to cover the energy domain between 5 MeV and 20 MeV. In order to combine the radioactive source measurements made with the BGO FMs at MPE and the proton beam induced radiation measurements made with the BGO EQM at SLAC (see Section 3.3), it was necessary to take into account the different gain settings by applying a scaling factor. The scaling factor was derived by comparing 22Na and Am/Be measurements (at 511, 1274, and 4430 keV), which were made at both sites. Due to the very low statistics in the measurements from the high-energy reaction of the Van de Graaff beam on the LiF target (Eq. 2), first and second electron escape peaks from pair annihilation of the 14.6 MeV line could not be considered in this analysis (see also Fig. 16, panel f). They were mainly used as background reference points in order to help find the exact position of the 17.5 MeV line. In this way, a dataset of 23 detected lines was available for determining the BGO EQM channel-energy relation.

For the BGO flight modules analysis (FM 01 and FM 02), a smaller line sample between 125 keV and 4.4 MeV was adopted, still leading to similar results. Differences are due to different gains, caused by the setup constraints, which are described in more detail in Section 4.3. Similarly to the NaI analysis, BGO datasets were fitted with an empirical function (Eq. 4). Figure 22 shows the BGO EQM channel-energy relation between 0.2 and 20 MeV. Fit parameters are given in the caption. In this case, fit residuals are smaller than 4%.

BGO channel-energy relation and corresponding residuals calculated for EQM detector applying the empirical fit (blue curve) of Eq. 4 over the full energy domain between 0.2 and 20 MeV. Data taken at MPE and at SLAC are marked with triangles and squares, respectively. Fit parameters for this fit are a = −428 ± 23, b = −54 ± 3, c = 8.29 ± 0.06 and d = 167 ± 11, with a reduced χ 2 of 7

4.3 Energy resolution

The energy resolution R of a detector is conventionally defined as the full width at half maximum (w) of the differential pulse height distribution divided by the location of the peak centroid \(H_0\) [22]. This quantity mainly reflects the statistical fluctuations recorded from pulse to pulse. In the case of an approximately linear response, the average pulse amplitude is given by \(H_0 = K N\), where K is a proportionality constant, and the limiting resolution of a detector can be calculated as

\[ R = \frac{w}{H_0} = \frac{2.35\,K\sqrt{N}}{K\,N} = \frac{2.35}{\sqrt{N}} \qquad (5) \]

where N represents the average number of charge carriers (in our case, it represents the number of photoelectrons emitted from the PMT photocathode), and the standard deviation of the peak in the pulse height spectrum is given by σ = \(K\,\sqrt{N}\). However, in real detectors the resolution is not only determined by photoelectron statistics, but can be affected by other effects, such as (1) local fluctuations in the scintillation efficiency; (2) nonuniform light collection; (3) variance of the photoelectron collection over the photocathode; (4) contribution from the nonlinearity of the NaI scintillation response; (5) contributions from PMT gain drifts; and (6) temperature drift (e.g. see [22]). In order to take all these effects into account, a nonlinear dependence of the energy resolution was assumed:

\[ w(E) = \sqrt{a^2 + b^2\,E + c^2\,E^2} \qquad (6) \]

This formula is mainly based on the traditional physical understanding of scintillation detectors and produces a physically motivated behavior outside the range of measurements. It consists of (1) a constant term, a, which describes the limiting electronic resolution (typically not a noticeable effect in scintillators); (2) a term proportional to the square root of the energy, accounting for statistical fluctuations in the numbers of scintillation photons and photoelectrons; and (3) a term proportional to the energy, which accounts for the non-ideal “transfer efficiency” of transporting scintillation photons from their creation sites to the PMT photocathode. For the actual fits the first parameter a was set to zero, since no significant electronic broadening was observed. Spatial non-uniformity of the energy resolution will be discussed in Section 4.5.
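As a worked illustration of the three terms, the sketch below evaluates the FWHM and the percentage resolution from the b and c parameters quoted in the caption of Fig. 23. It assumes that the terms are combined in quadrature, w(E) = √(a² + b²E + c²E²), with w and E in keV. That combination is an assumption of this sketch; it gives about 15% at 59.4 keV for NaI FM 04, the value the text quotes for the 241Am line. Function and variable names are illustrative.

```python
import math


def fwhm_kev(energy_kev, b, c, a=0.0):
    """FWHM in keV, assuming the three terms add in quadrature."""
    return math.sqrt(a * a + b * b * energy_kev + c * c * energy_kev ** 2)


def resolution_percent(energy_kev, b, c, a=0.0):
    """Energy resolution R = w / E, expressed in percent."""
    return 100.0 * fwhm_kev(energy_kev, b, c, a) / energy_kev


# Fit parameters quoted in the caption of Fig. 23 (a was fixed to zero)
NAI_FM04 = {"b": 0.916, "c": 0.0994}
BGO_FM02 = {"b": 3.050, "c": 0.0361}

for energy in (59.4, 661.66):
    print(f"NaI FM 04 at {energy} keV: {resolution_percent(energy, **NAI_FM04):.1f} %")
```

At low energies the √E term dominates and the resolution improves quickly with energy; at high energies the linear term takes over and R tends towards a constant of 100·c percent.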

In order to fit the energy resolution, it was necessary to convert the measured widths (in channels) to energies in keV by applying to each detector the corresponding channel-energy relation previously obtained. Fit results for detector NaI FM 04 and for the three BGO detectors (EQM, FM 01 and FM 02) are displayed in Fig. 23. In the case of NaI (left panel), we noticed that similar results could also be obtained by excluding those calibration lines which are affected by greater uncertainties of w, namely the 14.4 keV line from 57Co and all secondary lines, i.e. the 25 keV line from 109Cd, the 36.6 keV from 137Cs and the 136.6 keV line from 57Co. As regards the BGO energy resolution (right panel), EQM results (blue triangles) show poorer energy resolution compared to FM 01 (red squares) and FM 02 (yellow dots), which could be explained by minor differences in the detector design (optical coupling). Finally, a common plot of all NaI and BGO flight module detectors is shown in Fig. 24.

Energy resolution R in percentage calculated for detector NaI FM 04 (left panel) and for all BGO detectors (right panel), i.e. BGO EQM (blue curve), BGO FM 01 (red curve) and BGO FM 02 (yellow curve). Residuals to Eq. 6 are given in the panel under the plot. NaI FM 04 fit parameters to Eq. 6 are b = 0.916 ± 0.014 and c = 0.0994 ± 0.0010, with a reduced χ 2 of 5. In the case of the BGO detectors, the fit parameters for EQM are, b = 3.54 ± 0.05 and c = 0.0506 ± 0.0013, with a reduced χ 2 of 2.2; for FM 01, b = 3.09 ± 0.03 and c = 0.027 ± 0.003, with a reduced χ 2 of 1.3; for FM 02, b = 3.050 ± 0.021 and c = 0.0361 ± 0.0012, with a reduced χ 2 of 2.4

FWHM (in keV) as a function of Energy for the 12 NaI FM detectors (blue squares) and for the two BGO FM detectors (green triangles). For both detector types, the standard fit to Eq. 6 is plotted

4.4 Full-energy peak effective area and angular response

The full-energy peak effective area in cm² for both NaI and BGO detectors was computed as:

\[ A_{\mathrm{eff}} = \frac{4\pi\,d_{sX}^{\,2}\,A}{a_c\,P_T} \qquad (7) \]

where (1) A = Line area (count/s); (2) \(a_c\) = Current source activity (1/s); (3) \(P_T\) = Line transition probability; (4) \(d_{sX}\) = Distance between source and detector (cm). No additional factor to account for flux attenuation between the source and the detector was needed, since its effect above 20 keV is less than 1%. The line-transition probabilities applied in this analysis for each radioactive nuclide can be found in Table 1 (column 4). The reference activities were provided in a calibration certificate by the supplier of the radioactive sources (see footnote 7). The radioactive source activities on the day of measurement were calculated by taking into account the time elapsed since the calibration reference day. The relative measurement uncertainty of the given activities for all sources is 3%, with the exception of the Mercury source (203Hg) and the Cadmium source (109Cd), which have an uncertainty of 4%.
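The calculation can be sketched in a few lines of Python: the effective area is the measured line rate divided by the photon flux that an isotropic point source produces at the detector distance, with the activity corrected for decay since the reference day. The exponential decay law is the standard one; the function names and the numbers in the usage lines are hypothetical and are not taken from the calibration records.

```python
import math


def current_activity(ref_activity_bq, elapsed_days, half_life_days):
    """Activity on the measurement day from the certified reference activity."""
    return ref_activity_bq * 0.5 ** (elapsed_days / half_life_days)


def effective_area_cm2(line_rate_cps, activity_bq, transition_prob, distance_cm):
    """Full-energy peak effective area for an isotropic point source."""
    photons_per_s = activity_bq * transition_prob
    flux = photons_per_s / (4.0 * math.pi * distance_cm ** 2)  # photons / cm^2 / s
    return line_rate_cps / flux


# Hypothetical example: a source certified at 400 kBq, measured 365 days later
# at a distance of 100 cm, with a half-life of about 30 years
activity = current_activity(4.0e5, 365.0, 30.0 * 365.25)
area = effective_area_cm2(line_rate_cps=250.0, activity_bq=activity,
                          transition_prob=0.85, distance_cm=100.0)
```

Because the activity enters linearly in the denominator, its 3–4% certificate uncertainty translates directly into a 3–4% systematic uncertainty on the effective area.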

Results for the on-axis effective area as a function of the energy for all NaI and BGO FM detectors are shown in Fig. 25. The initial drop below 20 keV is due to a Silicone rubber layer placed between the NaI crystal and the entrance window, which absorbs low-energy gamma-rays. At energies higher than 300 keV, NaI detectors become more transparent to radiation and a decrease in the response is observed. The BGO on-axis effective area is constant over the energy range 150 keV–2 MeV, with a mean value of 120 ± 6 cm 2. Unfortunately, the effective area at 4.4 MeV could not be determined, since the activity of the Am/Be source was not known. SLAC measurements could not be used for this purpose either. At 33.17 keV, the effect of the Iodine K-edge is clearly visible as a drop. This energy region was extensively investigated during the PTB/BESSY calibration campaign and is further described in Section 4.5.

For several radioactive sources, off-axis measurements of the NaI and BGO response have been performed. These are extremely important for the interpretation of scattered photon flux both from the spacecraft and from the atmosphere. Figure 26 shows results for the NaI and the BGO effective area as a function of the irradiation angle over the full 360°. The left panel presents NaI FM 04 measurements from (i) the 32.89 keV line (see footnote 8) from 137Cs (top green curve); (ii) the 279.2 keV line from 203Hg (middle red curve); and (iii) the 661.66 keV line from 137Cs (bottom yellow curve). It is worth noting that all curves, especially the middle one, trace the detector’s structure (crystal, housing, and PMT). Furthermore, the bottom curve (661.66 keV) varies very little with the inclination angle because of the high penetration capability of gamma-rays at those energies. In the case of BGO, measurements performed with 88Y at 898.04 and 1836.06 keV highlight the two drops in the response due to the presence of the PMTs on both sides of the crystal. Although the BGO detectors are symmetrical, an asymmetry in the curves is caused by the Titanium bracket on one side of the crystal housing, which is necessary for mounting the detectors onto the spacecraft (Fig. 5, right panel). Comparisons of the effective area for NaI and BGO with simulations can be found in [14].

Off-axis effective area as a function of the irradiation angle (from –90° to 270°) for NaI FM 04 (left panel) and BGO EQM (right panel). Different curves represent different line-energies. In the case of NaI, results for three radioactive lines are shown, namely: 32.06 keV from 137Cs (top green curve), 279.2 keV from 203Hg (middle red curve), and 661.66 keV from 137Cs (bottom yellow curve). For BGO, two lines from 88Y are shown: 898.04 keV (blue curve) and 1836.06 keV (red curve)

4.5 Quantum detection efficiency (QDE) and spatial uniformity of NaI detectors

As already mentioned in Section 3.2, the QDE for detector NaI FM 04 could be determined through detailed measurements performed at PTB/BESSY. First, the fitted full-energy peak area of the NaI spectrum was related to the sum of counts detected by the HPGe detector (for which QDE = 1) in the same region of interest around the full-energy peak (which includes the small Ge escape peak appearing above 11 keV); the different beam fluxes were then accounted for. The QDE was determined at all energies by analyzing a spectrum taken in the center of the detector’s surface, and at three energies (rasterscans at 10, 36 and 60 keV) by analyzing spectra taken over the whole surface area (see Fig. 28, top panels, for results obtained at 10 and 60 keV). The effective area (in cm²) can then be calculated as the integral of the QDE over the detector’s active area (126.7 cm²). Results for 19 lines measured between 10 and 60 keV are shown in Fig. 27. This figure can be considered a low-energy zoom of the NaI effective area in Fig. 25.
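The integral of the QDE over the active area can be approximated from a rasterscan by summing the QDE at each grid point times the area of one grid cell. The following sketch assumes a square grid with 5 mm spacing and a circular active area of 126.7 cm²; the spatially uniform QDE value of 0.8 is purely hypothetical and only serves to check the numerical integration, and the function names are illustrative.

```python
import math

ACTIVE_AREA_CM2 = 126.7
RADIUS_CM = math.sqrt(ACTIVE_AREA_CM2 / math.pi)  # about 6.35 cm


def uniform_disc_map(radius_cm, spacing_cm, qde):
    """Hypothetical raster map: the same QDE at every grid point inside the disc."""
    n = int(radius_cm / spacing_cm) + 1
    points = {}
    for i in range(-n, n + 1):
        for j in range(-n, n + 1):
            x, y = i * spacing_cm, j * spacing_cm
            if x * x + y * y <= radius_cm ** 2:
                points[(x, y)] = qde
    return points


def effective_area_from_raster(qde_map, spacing_cm):
    """Riemann-sum approximation of the integral of QDE over the surface."""
    return sum(qde_map.values()) * spacing_cm ** 2


raster = uniform_disc_map(RADIUS_CM, 0.5, qde=0.8)   # 5 mm grid spacing
a_eff = effective_area_from_raster(raster, 0.5)       # close to 0.8 * 126.7 cm^2
```

With measured data, the dictionary would simply hold the QDE obtained at each beam position, so that border effects near the crystal edge lower the integrated area relative to the on-axis QDE times the geometric area.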

Contour plots showing results for the PTB/BESSY rasterscans of detector NaI FM 04. At the top: QDE calculated over the active area (126 cm²) at 10 and 60 keV (left and right panel, respectively). At the bottom: Calculated fit parameters of the full-energy peak as a function of the beam position (in mm) for the 10 keV rasterscan: (1) line-center (in channel #, left panel); (2) line resolution (in %, right panel). The color code is indicated in the colorbars above each plot

The detector’s spatial homogeneity was investigated at PTB/BESSY by means of rasterscans of detector NaI FM 04 at three distinct energies, namely 10, 36 and 60 keV. During each rasterscan, ~700 runs per detector were recorded with a spacing of 5 mm, and for each one the full-energy peak was analysed as previously described in Section 4.1.1. For the rasterscan at 10 keV, the dependence of line-center (in channel #) and line resolution (in %) on the rasterscan position in mm (DET_X and DET_Y) is shown in Fig. 28, bottom panels. Results for the line area are not shown separately, since the QDE plots previously discussed (Fig. 28, top panels) already reflect the surface behavior of this parameter.

From the line center spatial dependence one can notice that some border effects appear toward the edge of the NaI crystal, shifting the full-energy peak to lower channel numbers i.e. energies. This effect is of the order of 12% at 10 and 60 keV and of 7% at 36 keV. The line resolution is homogeneous over the whole detector’s area, with a mean value of 25%, 15% and 10% at 10, 36 and 60 keV, respectively. While the first two resolutions are comparable to the results obtained with radioactive sources at 14.4 and 36.6 keV, the 60 keV rasterscan gives an improved resolution when compared to the result of 15% obtained with the 241Am source at 59.4 keV (see Fig. 13, panel f).

5 Conclusions

After the successful launch of the Fermi mission and the proper activation of its instruments, the 14 GBM detectors started to collect scientific data. The spectral overlap of the two BGO detectors (0.2 to 40 MeV) with the LAT lower limit of ~ 20 MeV opens a promising epoch of investigation of the high-energy prompt and afterglow GRB emission in the yet poorly explored MeV-GeV energy region.

On ground, the angular and energy response of each GBM detector was calibrated using various radioactive sources between 14.4 keV and 4.4 MeV. The channel-energy relations, energy resolutions, on- and off-axis effective areas of the single detectors were determined. Additional calibration measurements were performed for NaI detectors at PTB/BESSY below 60 keV and for BGO detectors at SLAC above 5 MeV, thus covering the whole GBM energy domain. As already mentioned in the introduction, further calibration measurements at system level and after integration onto the spacecraft were carried out (see Table 4). All those measurements crucially contribute to the validation of Monte Carlo simulations of the direct GBM detector response. These incorporate detailed models of the Fermi observatory, including the GBM detectors, instruments, and all in-flight spacecraft components, plus the scattering of gamma-rays from a burst in the spacecraft and in the Earth’s atmosphere [14]. The response as a function of photon energy and direction is finally captured in a Direct Response Matrix (DRM) database, allowing the determination of the true gamma-ray spectrum from the measured data.

The results reported in this paper directly contribute to the DRM final determination, and they fully follow physical expectations [18]. Measurements and fit results for two sample detectors (NaI FM 04 and BGO FM 02) are given in Tables 5 and 6, respectively (see Appendix). Fit parameters for the energy-channel relations and energy resolutions of those detectors are always reported in the captions of the corresponding plots (see Figs. 18, 22 and 23). It is worth noting that these parameters reflect the characteristics of all other NaI and BGO detectors not reported in this paper, thus showing that all detectors behave the same within statistics.

The channel-energy relation parameters obtained throughout this analysis are not directly used for in-flight calculations because of the different electronic setup which was used during detector level calibration and in-flight (see Section 3.1). The same analysis method described in Section 4.1.1 and the same fitting procedures of Section 4.2 were adopted to analyze data collected during system level calibration. These results confirm that the systematic uncertainties in the channel-energy conversions arise from the discussed sources, i.e. fitting procedure, limited statistics in the case of high-energy lines of BGOs, electronics and non-uniform responses of detectors, and are fully consistent with measurements presented in this paper.

The GBM detectors will play an important role in the GRB field in the next decade. The unprecedented synergy between the GBM and the LAT will allow burst spectra to be observed over ~7 decades in energy. Moreover, simultaneous observations by the large number of gamma-ray burst detectors operating in the Fermi era will complement each other. An overview of the currently operating space missions can be found in Table 1 of [3], where instrument characteristics such as FOVs, effective areas, localization uncertainties and energy bands are compared. The GBM detectors fit in this overall picture by providing a higher trigger energy range (50–300 keV) than e.g. Swift-BAT [9] (15–150 keV) and a spectral coverage up to 40 MeV, an energy limit which can only be investigated with the LAT and the Mini-Calorimeter on-board AGILE [32]. New insights into the GRB properties are therefore expected from GBM, thus advancing the study of GRB physics.

Notes

The temporal resolution of CTIME and CSPEC data is adjustable: nominal integration times are decreased when a trigger occurs.

Detectors were delivered to MPE for detector level calibration in batches of four, and shortly thereafter shipped to the US for system level calibration. Therefore, as the PTB/BESSY facility was only available for a short time, only one batch of NaIs could be calibrated there.

The BGO flight modules were not available for calibration at the time of measurements, since they had already been shipped for system integration.

An important argument driving the decision not to use a collimator for measurements with radioactive sources was the fact that the simulation of the laboratory environment with all its scattering represented a necessary and critical test for the simulation software, which later had to include the spacecraft [34].

In this case, simulations were not based on measurements performed with detector FM 04, because at that time detector FM 12 was the first to have a complete set of collected spectra.

Calibrated radioactive sources were delivered by AEA Technology QSA GmbH (Braunschweig, Germany) together with a calibration certificate from the Deutscher Kalibrierdienst (DKD, Calibration laboratory for measurements of radioactivity, Germany).

In this case, the double line was fitted with a single Gaussian, since the response drops dramatically above 90° and the fit algorithm cannot identify two separate components.

References

Agostinelli, S., et al.: Geant4 − a simulation toolkit. Nucl. Instrum. Methods A 506, 250 (2003)

Atwood, W.B.: Gamma Large Area Silicon Telescope (GLAST): applying silicon strip detector technology to the detection of gamma rays in space. Nucl. Instrum. Methods A 342, 302 (1994)

Band, D.L.: The synergy of gamma-ray burst detectors in the GLAST era. AIPC 1000, 121 (2008)

Thompson, A., Vaughan, D. (eds.): X-ray data booklet. Lawrence Berkeley National Laboratory, Berkeley, CA. http://xdb.lbl.gov (2001). Accessed 3 December 2008

Dermer, C.D., Böttcher, M., Chiang, J.: Spectral energy distributions of gamma-ray bursts energized by external shocks. ApJ 513, 656 (1999)

Dingus, B.L.: Observations of the highest energy gamma-rays from gamma-ray bursts. AIPC 558, 383 (2001)

Engelkemeir, D.: Nonlinear response of NaI(Tl) to photons. Rev. Sci. Instrum. 27, 589 (1956)

GBM Proposal, vol. I. Science Investigation and Technical Description. http://gammaray.msfc.nasa.gov/gbm/publications/proposal/ (1999). Accessed 3 December 2008

Gehrels, N., et al.: The Swift gamma-ray burst mission. ApJ 611, 1005–1020 (2004)

Giuliani, A., et al.: AGILE detection of delayed gamma-ray emission from GRB 080514B. A&A 491, 25 (2008)

GLAST Science Brochure: Exploring Nature’s Highest Energy Processes with the Gamma-Ray Large Area Space Telescope, Document ID: 20010070290; Report Number: NAS 1.83:9-107-GSFC, NASA/NP-2000-9-107-GSFC (2001). Accessed 3 December 2008

Gonzalez, M.M., et al.: A γ-ray burst with a high-energy spectral component inconsistent with the synchrotron shock model. Nature 424, 751 (2003)

Görner, W., et al.: BAMline: the first hard X-ray beamline at BESSY II. Nucl. Instrum. Methods A 467–468, 703–706 (2001)

Hoover, A.S., et al.: GLAST burst monitor instrument simulation and modeling. AIPC 1000, 565 (2008)

Hurley, K., et al.: Detection of a γ-ray burst of very long duration and very high-energy. Nature 372, 652 (1994)

Iredale, P.: The non-proportional response of NaI(Tl) crystals to γ-rays. Nucl. Instrum. Methods 11, 336 (1961)

Kaneko, Y., et al.: Broadband spectral properties of bright high-energy gamma-ray bursts observed with BATSE and EGRET. ApJ 677, 1168 (2008)

von Kienlin, A., et al.: The GLAST burst monitor. SPIE 5488, 763 (2004)

von Kienlin, A., et al.: High-energy calibration of a BGO detector of the GLAST burst monitor. AIPC 921, 576–577 (2007)

Kippen, R.M.: The GEANT low-energy Compton scattering (GLECS) package for use in simulating advanced Compton telescopes. New Astron. Rev. 48, 221 (2004)

Kippen, R.M., et al.: Instrument response modeling and simulation for the GLAST burst monitor. AIPC 921, 590 (2007)

Knoll, G.F.: Radiation Detection and Measurement, 3rd edn. Wiley, New York (1989)

Krumrey, M., Gerlach, M., Scholze, F., Ulm, G.: Calibration and characterization of semiconductor X-ray detectors with synchrotron radiation. Nucl. Instrum. Methods A 568, 364–368 (2006)

Meegan, C.A., et al.: The GLAST burst monitor. AIPC 921, 13 (2007)

Mészáros, P., Rees, M.J., Papathanassiou, H.: Spectral properties of blast-wave models of gamma-ray burst sources. ApJ 432, 181 (1994)

Michelson, P., et al.: GLAST: a detector for high-energy gamma rays. Proc. SPIE 2806, 31 (1996)

Moszyński, M., et al.: Intrinsic energy resolution of NaI(Tl). Nucl. Instrum. Methods A 484, 259 (2002)

Prescott, J.R., Narayan, G.H.: Electron response and intrinsic line-widths in NaI(Tl). Nucl. Instrum. Methods 75, 51 (1969)

Riesemeier, H., et al.: Layout and first XRF applications of the BAMline at BESSY II. X-Ray Spectrom. 34, 160–163 (2005)

Sari, R., Esin, A.A.: On the synchrotron self-Compton emission from relativistic shocks and its implications for gamma-ray burst afterglows. ApJ 548, 787 (2001)

Schötzig, U., Schrader, H.: Halbwertszeiten und Photonen-Emissionswahrscheinlichkeiten von häufig verwendeten Radionukliden, PTB-Bericht PTB-Ra-16/5, 5th edition (1998)

Tavani, M., et al.: The AGILE mission and its scientific instrument. SPIE 6266, 2 (2006)

Thompson, D.J., et al.: Calibration of the Energetic Gamma-Ray Experiment Telescope (EGRET) for the Compton Gamma-Ray Observatory. ApJS 86, 629 (1993)

Wallace, M., et al.: Full spacecraft source modeling and validation for the GLAST burst monitor. AIPC 921, 58–59 (2007)

Waxman, E.: Gamma-ray-burst afterglow: supporting the cosmological fireball model, constraining parameters, and making predictions. ApJ 485, 5 (1997)

Open Access

This article is distributed under the terms of the Creative Commons Attribution Noncommercial License which permits any noncommercial use, distribution, and reproduction in any medium, provided the original author(s) and source are credited.

About this article

Cite this article

Bissaldi, E., von Kienlin, A., Lichti, G. et al. Ground-based calibration and characterization of the Fermi gamma-ray burst monitor detectors. Exp Astron 24, 47–88 (2009). https://doi.org/10.1007/s10686-008-9135-4
