Abstract

Given the vast uncertainty surrounding climate impacts, meta-analyses of global climate damage estimates are a key tool for determining the relationship between temperature and climate damages. Due to limited data availability, previous meta-analyses of global climate damages potentially suffered from multiple sources of coefficient and standard error bias: duplicate estimates, omitted variables, measurement error, overreliance on published estimates, dependent errors, and heteroskedasticity. To address and test for these biases, we expand on previous datasets to obtain sufficient degrees of freedom to make the necessary model adjustments, including dropping duplicate estimates and including methodological variables. Estimating the relationship between temperature and climate damages using weighted least squares with cluster-robust standard errors, we find strong evidence that duplicate and omitted variable biases flatten the relationship. However, the magnitude of the bias greatly depends on the treatment of speculative high-temperature (>4 \(^{\circ }\)C) damage estimates. Replacing the DICE-2013R damage function with our preferred estimate of the temperature–damage relationship, we find a three- to four-fold increase in the 2015 SCC relative to DICE, depending on the treatment of productivity. When catastrophic impacts are also factored in, the SCC increases by four- to five-fold.


1 Introduction

Climate change is one of the preeminent policy issues of our day, and the Paris Agreement signals some international consensus that action is urgently needed. The social cost of carbon (SCC) is one of the primary tools for calibrating the socially optimal policy response, particularly in the U.S. under the Obama Administration. The SCC is estimated using integrated assessment models (IAMs), which capture the various steps in the climate and economic processes that translate a marginal unit of \(\hbox {CO}_{2}\) emissions into a measurement of economic damage. While accuracy within each of these steps is necessary to precisely estimate the SCC, accurately calibrating the climate damage function, which we define as translating a temperature change into a percentage change in GDP, is critical.Footnote 1 Given the considerable uncertainty in climate impacts, meta-analyses of climate damage estimates are a key tool for depicting the relationship between temperature and climate damages, so as to communicate the current state of knowledge to model developers (Van den Bergh and Button 1999). By clarifying this relationship and the uncertainty underlying it, meta-analyses also give policymakers what is arguably the best available assessment of the risks that climate change poses to the global economic system and to human well-being.Footnote 2

Due to a dearth of global damage estimates, past meta-analyses (Newbold and Marten 2014; Nordhaus 2013; Tol 2009, 2014) conducted relatively limited analyses of the temperature–damage relationship. It is fair to say that the first damage studies made the very reasonable choice of using simple “back of the envelope” estimates for damages. Gradually, the number of studies increased as methodologies improved, and authors were able to gather small datasets varying from 13 to 43 damage estimates (acquired from 13 to 17 early studies) as a basis for assigning damage values. However, we believe that these earlier analyses are quite limited and potentially biased because these datasets were characterized by non-independent observations (Aldy et al. 2010; Van den Bergh and Botzen 2015). Many observations were estimated using similar methods and models, and very often undertaken by the same authors and thus had some element of duplication.

The idea of using meta-analyses to summarize the state of knowledge is well established. However, it becomes problematic to work with averages of earlier values if those estimates come from only a very limited number of pioneering authors who have published several estimates each. In fact, 5 of 13 studies used in the regression by Nordhaus (2013) were Nordhaus’ own earlier estimates, and an additional four were from the other two main modelers in the IAM literature. Given the small sample sizes available in the literature, authors of these prior meta-analyses simply regressed global climate damages (measured as a percentage of global GDP) on global temperature change (assumed to be relative to the pre-industrial period) and calculated basic ordinary least squares (OLS) standard errors. In doing so, they did not account for duplication or dependency of damage estimates. The consequence is an exaggerated sense of knowledge about the temperature–damage relationship and a greater weighting of estimates from established authors in the field (who tend toward the enumerative strategy) and away from new authors such as Burke et al. (2015) (who tend toward statistical-based strategies); see also Sterner (2015).

These earlier meta-analyses find that global climate damages increase at an increasing rate, though they differ in the exact rate of change; see Fig. 1. Specifically, the models predict a wide range of potential damage estimates from 1.9 to 17.3% of GDP for a 3 \(^{\circ }\hbox {C}\) increase in global average surface temperature. While these earlier estimates represent strong first steps in understanding the temperature–damage relationship, they are potentially biased due to these data limitations and the limiting effect of the estimation techniques employed. This is particularly true when they are used to predict the damages from higher temperatures, since there is a large sensitivity to the choice of functional form.

Fig. 1 Temperature–damage relationship for previous meta-analyses and the preferred regression [regression (4) in Table 2] from our study. This figure compares damage functions corresponding to previous meta-analyses to damage functions corresponding to the preferred regression [i.e., regression (4) in Table 2]. Following Nordhaus (2013), we multiply the coefficients of the preferred regression specification corresponding to non-catastrophic impacts (t2 and prod_t2) by 25% when constructing the damage functions to account for potential omitted non-catastrophic impacts of climate change.

There are several sources of bias in previous empirical estimates of the temperature–damage relationship. First, because the data are dependent on one another due to similar underlying estimation methods, authors, and models (Tol 2009; Aldy et al. 2010; Dell et al. 2014; Van den Bergh and Botzen 2015; National Academies of Sciences, Engineering, and Medicine 2017), OLS is inefficient and the resulting standard errors are biased. More importantly, as indicated above, due to the common practice of citing earlier estimates and updating previous estimates in the literature, the resulting estimates suffer from multiple (duplicate) publication bias. Ours is, to the best of our knowledge, the first paper to apply this rigorous meta-analysis concept fully in climate economics.Footnote 3 Given that older (i.e., less up-to-date) damage estimates tend to be cited multiple times (Van den Bergh and Botzen 2015), we expect this to bias downward the temperature–damage relationship. Second, earlier estimates also suffer from omitted variable bias due to a failure to account for whether the underlying damage estimates captured non-market and catastrophic climate impacts in addition to market impacts (Van den Bergh and Botzen 2015; JEP 2015) and to control for whether market impact estimates included potential impacts on economic productivity. Third, earlier studies potentially suffer from publication bias by relying exclusively on published studies (Ackerman and Stanton 2010; Havranek et al. 2015). Fourth, there are several citation errors with respect to damage estimates (JEP 2015),Footnote 4 in particular with respect to the measurement of temperature (Gelman 2014). 
Last, high-temperature damage estimates (corresponding to temperature changes above 4 \(^{\circ }\)C) have an undue impact on the estimated temperature–damage relationship over the critical time period of the twenty-first century, despite these estimates’ empirical shakiness and overreliance on extrapolation (Van den Bergh and Botzen 2015); for example, Tol (2014)’s results are extremely sensitive to the inclusion of its sole high-temperature damage estimate (see later discussion). By dealing systematically with these biases, this paper identifies a significant downward bias in the literature of the effect of temperature change on global climate damages.

Our aim is thus to improve understanding of the temperature–damage relationship by advancing the meta-analysis techniques pioneered by Tol and Nordhaus. A meta-analysis is not necessarily synonymous with “truth,” but if one is to be conducted, every effort should be made to do it as well as possible. By conducting an exhaustive search of the published and grey literature to assemble a larger dataset, we are able to conduct a meta-analysis using more data-intensive techniques that can more accurately capture this relationship and the uncertainty underlying it. Specifically, using weighted least squares and calculating cluster-robust standard errors at the model level, we regress climate damages on an adjusted measurement of temperature change squared and its interaction with indicator variables for whether the model captures non-market, productivity, and catastrophic climate impacts. By dropping duplicate damage estimates and high-temperature damage estimates, we explore the impact of duplicate publication and other biases on the temperature–damage relationship. In doing so, we are able to better characterize the current state of knowledge with respect to the temperature–damage relationship. To understand the potential effect of the previous bias on the SCC, we re-calibrate the Dynamic Integrated Climate-Economy (DICE) model, which is generally seen as the first and most respected IAM, by replacing its damage function with the preferred specification of the temperature–damage relationship and re-estimating the SCC.

Our paper is structured as follows. First, we discuss the history of global climate damage estimates. Second, we discuss the relevant meta-analysis literature in the context of global climate damages. Third, we describe the creation of our datasets. Fourth, we present the econometric model used for our meta-analysis of willingness to pay. Fifth, we present our regression results with respect to the temperature–damage relationship. Sixth, we provide a short sensitivity analysis. Seventh, we discuss the interpretation of our results as a damage function. Finally, we conclude.

2 History of Global Climate Damage Estimates

Since 1994, when the first serious attempts at empirically estimating global damages from climate change began (Tol 2009), analysts have developed multiple methodologies to identify the monetary value of climate impacts. These methodologies, each with relative strengths and weaknesses, were introduced in essentially four waves. Each methodology continues to be employed, often by the same author(s).

The first wave of serious empirical estimates consists of applications of the enumerative strategy and expert elicitation. Fankhauser (1995) is credited with the first extensive application of the enumerative strategy (i.e., the bottom-up approach) to estimate global climate damages (Tol 2009). This quickly became the go-to methodology for IAM modelers to calibrate their climate damage function(s), most notably DICE (Nordhaus 1994a), the Framework for Uncertainty, Negotiation and Distribution (FUND) (Tol 1995), and Policy Analysis of the Greenhouse Effect (PAGE) (Plambeck and Hope 1996). Relying heavily on author discretion (Pindyck 2013), IAM modelers assemble and construct regional-sector damage estimates (for example, estimates of damage to U.S. agriculture), citing economic impact studies, valuing physical impact estimates from the sciences, or applying benefit-transfer (Tol 2009; Van den Bergh and Botzen 2015).Footnote 5 While the enumerative strategy can capture market, non-market, and catastrophic damages from climate change, enumerative studies often omit significant impacts ignored by the modelers (Revesz et al. 2014; Howard 2014; Interagency Working Group on Social Cost of Carbon 2016) and general equilibrium impacts (Hoel and Sterner 2007). The range of enumerative damage estimates is 2.3 to −11.5% of GDP for temperature increases of 1–3 \(^{\circ }\)C.

Also early on in the development of IAMs, model developers (Nordhaus 1994b; Schauer 1995) conducted several small-sample expert elicitation studies on global damages with the aim of calibrating their model’s climate damage function(s). Recent work (Howard 2015) extends this methodology to a large sample of objectively selected experts. Given this method’s reliance on subjective opinion, the failure of these studies to assign weights based on accuracy of predictions (using seed questions) is an ongoing shortcoming (Cooke 2013; Oppenheimer et al. 2016). Expert elicitation captures a range of market and non-market impacts with an estimate range of 0 to −10.2% of GDP for temperature increases of 1–6 \(^{\circ }\)C.

Starting in the early 2000s, authors introduced cross-sectional regression to identify the impact of the current climate on national GDP and well-being, and then projected future impacts by inputting future climate scenarios. Authors used several regression techniques, including Ricardian analysis (Mendelsohn et al. 2000b),Footnote 6 regressing GDP directly on climate variables (e.g., Choiniere and Horowitz 2006; Nordhaus 2006), regressing happiness on climate variables and income (Rehdanz and Maddison 2005; Maddison and Rehdanz 2011), and the household production function approach (Maddison 2003). While the statistical approach is advantageous in that it is data-driven and captures adaptation using current technologies (Mendelsohn et al. 2000b; Burke et al. 2015), omitted variable bias is potentially a serious problem; all but one study (Choiniere and Horowitz 2006) attempt to address this issue using unique identification strategies. Cross-sectional analyses, however, capture only a narrow set of climate impacts. The first two regression techniques omit non-market impacts, while the latter two omit market impacts. Additionally, these studies omit impacts that vary only a little spatially (e.g., \(\hbox {CO}_{2}\) fertilization and sea level rise), have not yet occurred (e.g., tipping points), or are not captured by variation in average temperature (e.g., extreme weather) (Tol 2009; Van den Bergh and Botzen 2015). The range of cross-sectional climate damage estimates is 0.1 to −16.3% of GDP for temperature increases of 0.7–3.2 \(^{\circ }\)C.

Starting around 2010, climate economists employed computable general equilibrium (CGE) analysis for the first time to produce global damage estimates from climate change. As in enumerative studies, modelers employed region-sector impact estimates from the economics literature to calibrate their climate-economic models; the difference from enumerative studies is that they used estimated impacts to the supply of production inputs and demand for outputs in their calibration, instead of estimated impacts to output produced. Examples of CGE models include the Inter-temporal Computable Equilibrium System (ICES) (Bosello et al. 2012) and Environmental Impact and Sustainability Applied General Equilibrium Model (ENVISAGE) (Roson and Van der Mensbrugghe 2012). Unlike traditional IAMs, CGE models capture the impacts of climate change on economic growth through changes in the stock of inputs (capital and land), factor productivity (capital, labor, land, and/or total), and demand for services (energy, health, and/or tourism) (Eboli et al. 2010; Roson and Van der Mensbrugghe 2012). While the CGE model framework allows climate impacts to interact, it comes with the shortcomings of the enumerative approach. The methodology omits non-marketFootnote 7 and catastrophic impacts, in addition to the transitional costs of climate change.Footnote 8 The range of CGE climate damage estimates is −0.2 to −4.6% of GDP for temperature increases of 1.5–4.8 \(^{\circ }\)C.

More recently, climate economists introduced panel regression and scientific estimates. To avoid omitted variable bias, Dell et al. (2012) and Burke et al. (2015) exploited annual variation in weather data to develop panel data of GDP and weather over time. By including country and time fixed effects to control for time and spatial varying unobservables, these studies analyzed the impact of changes in weather on changes in GDP assuming that impacts of weather and climate are identical (Dell et al. 2014; Burke et al. 2015). While there is an ongoing debate over the underlying identification strategy, we avoid the role of arbiter to prevent the introduction of selection bias (instead we test the sensitivity of our results to the exclusion of this and other estimation strategies).Footnote 9 The mechanisms for climate’s impact on economic growth are unclear, though likely pathways include declines in input (capital, labor, and land) and total factor productivity (Dell et al. 2014; Howard 2014; Burke et al. 2015). Panel estimates omit non-market, tipping point, and general equilibrium impacts (Dell et al. 2014; Burke et al. 2015), and have a range of −0.3 to −23% of GDP for temperature increases of 3.4–4.3 \(^{\circ }\)C.

Following the lead of Nordhaus (1994b), who included scientists in his landmark survey on climate change to capture a wider range of future possibilities, we also include science-based damage estimates. Scientific estimates use physical thresholds—limits of human physical adaptability to heat stress (Weitzman 2012) and the 2 \(^{\circ }\)C limit on global warming recommended by a large body of scientists and international organizations (Nordhaus 2014)—to derive global damage estimates from climate change.Footnote 10 The methodology potentially captures market, non-market, and tipping point impacts (Nordhaus 2015). Its range of damage estimates is −4.9 to −99% of GDP for temperature increases of 3–12 \(^{\circ }\)C.

With each wave of estimates, IAM and CGE modelers released updated damage estimates based on their own or others’ previous work. This has led to many duplicate and interdependent estimates in the literature. Given that enumerative and CGE studies together represent the majority of studies in previous meta-analyses (e.g., Tol 2014), these inter-relationships are serious problems; see Fig. 2. A further consequence is that the data underlying even the most up-to-date enumerative damage estimates date back to the 1990s (Revesz et al. 2014).

Fig. 2 Connections of papers in Tol (2014), excluding Nordhaus (2013). Interpreting the figure: squares are estimates; a blue square is an estimate based on the previous paper(s) (i.e., uses it as an input); a red square is an updated estimate of the previous paper; a green square is an estimate that is both an update and based on previous paper(s); a purple square means that the estimate is an additional data point from the same study; a dotted line means a somewhat related (though not directly related) estimate. With respect to the sole use of dotted lines in this figure, the catastrophic component of Nordhaus and Boyer (2000)’s damage estimate is constructed from the question about the probability of catastrophic damages (i.e., a 25% or more loss of GDP) for a 3 \(^{\circ }\)C increase by 2090 as reported in the Nordhaus (1994b) survey. The Nordhaus (1994b) damage estimate cited by Tol (2009) is calculated from a related question: the most likely (i.e., the 50th percentile) climate impact (measured as a % of GDP) of a 3 \(^{\circ }\)C increase by 2090. Finally, we exclude the Nordhaus (2013) study cited in Tol (2014) on the grounds that, as an earlier meta-analysis, it is a linear combination of the Tol (2009) estimates (a subset of Tol (2014)).

While predicting the magnitude of climate damages is fraught with uncertainty, climate damage estimates become even more speculative above 3–4 \(^{\circ }\)C. If we look at a scatter plot of the estimates (see Figure A1 in Appendix A), a 3 \(^{\circ }\)C increase—the most likely value of the climate sensitivity parameter (IPCC 2007)—is a natural break in the data; only eight estimates correspond to temperature changes exceeding 3 \(^{\circ }\)C. Similarly, 4 \(^{\circ }\)C is another natural cutoff, as it represents the approximate increase in temperature by the end of the century under Business As Usual [see Scenario A2 in IPCC (2007) and RCP 8.5 in IPCC 2013] and most climate research focuses on impacts occurring during this century. While uncertainty in climate damage estimates increases with temperature regardless of the estimation strategy,Footnote 11 estimates become highly uncertain and contentious above this temperature threshold of 3–4 \(^{\circ }\)C.Footnote 12 In fact, our limited knowledge of impacts may still be overstated, with three of the five estimates above 4 \(^{\circ }\)C being potential extrapolations of other damage estimates.

3 Conducting Meta-analyses of Global Climate Damage Estimates

Meta-analysis (and specifically meta-regression) has become a common tool in environmental economics. Nelson and Kennedy (2009) provide a thorough review of this literature up until 2009, and find a wide range of quality in the literature. Meta-regression is commonly used to study climate damages to a particular economic sector (e.g., Challinor et al. (2014) analyze global agricultural production) and/or region (e.g., Houser et al. 2014 analyze U.S. climate damages). Some of these meta-analyses reveal complex relationships between climate change and society, including Hsiang et al. (2013), who find that climate change will lead to more human conflicts. Only recently has meta-regression been employed at the macro-scale (Tol 2009, 2014; Nordhaus 2013; Newbold and Marten 2014), though none have met the standards laid out in Nelson and Kennedy (2009).

In this study, we are interested in the factual and methodological determinants of global willingness to pay to avoid total impacts of climate change, measured as a percentage of global GDP (D). As in previous meta-analyses, increase in global average surface temperature (T) is included as the sole factual cause of observed heterogeneity. The idea behind this decision is not that temperature is the singular climate-related driver of impacts, but that many of the other drivers, such as precipitation change and increased storm intensity, are strongly correlated with temperature change. Unlike previous meta-analyses of global climate damage estimates, we also control for methodological causes of the observed heterogeneity in the damage impact estimates, in order to avoid omitted variable bias. We divide these methodological variables into those that directly (R) and indirectly (W) impact the relationship between temperature and damages.

Generally, the meta-analysis regression is

where \(\varepsilon \) is the error term. This error term can be subdivided into unobservables (\(\mu \)) and measurement errors (e) from studies where \(\mu \sim \,N\left( {0,\sigma _\mu ^2 } \right) \) and \(e\sim N\left( {0,\sigma _e^2 } \right) \).Footnote 13 While \(\sigma _e^2 \) is often reported in statistical studies (Rhodes 2012), this is unobserved for non-statistical damage estimates. Following the common assumption of a linear function, we assume that the temperature–damage relationship becomes

where Z and X are the unobserved factual and methodological causes of the unobserved heterogeneity of damages.

Heteroskedasticity and dependent errors are common issues in meta-analyses (Nelson and Kennedy 2009; Stanley et al. 2013; U.S. Environmental Protection Agency (EPA) 2006). Unaddressed, these estimation issues result in inconsistent and inefficient coefficient estimates—which are particularly important given the small sample sizes of most meta-analyses—and biased estimates of standard errors. Heteroskedasticity arises because of significant unobserved heterogeneity in the damage estimates. Although weighted least squares (WLS) is the preferred estimator for small sample sizes, WLS as traditionally specified (i.e., using \(\frac{1}{\sigma _e^2 }\) as weights) cannot be used,Footnote 14 because the standard errors (i.e., \(\sigma _e^2 )\) are unobserved for non-statistical global climate damage estimates. Instead, Davidson and MacKinnon (1993) or other heteroskedasticity robust standard errors are recommended (U.S. Environmental Protection Agency (EPA) 2006; Nelson and Kennedy 2009; Rhodes 2012). Dependence of the error terms arises because of correlations in the underlying damage estimate—including similar authorship, estimation method, or model—which result in clustering of error terms. Due to small sample size, the use of the preferred estimators to address dependence—generalized least squares (GLS) and panel estimators—is limited (Cameron and Trivedi 2010, pp. 274–275; Nelson and Kennedy 2009; Van den Bergh and Button 1999), and a combination of cluster-robust standard errors, carefully chosen control variables, and the use of only one estimate per study are recommended (Nelson and Kennedy 2009; Cameron and Trivedi 2010).

Outlier estimates constitute another key econometric issue in meta-analysis due to their potentially outsized influence on study findings (Nelson and Kennedy 2009). The meta-analysis literature recommends analyzing sensitivity to outliers by dropping potential outliers and using alternative estimators that are robust to outliers (e.g., Huber weights) (U.S. Environmental Protection Agency (EPA) 2006; Nelson and Kennedy 2009).
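To illustrate the outlier-robust alternative mentioned above, the following Python sketch fits a no-intercept line by iteratively reweighted least squares with Huber weights. It is a minimal sketch rather than the procedure used in this study: the function names `ols_slope` and `huber_slope`, the tuning constant 1.345, the scale estimate based on the median absolute residual, and the data are all assumptions chosen for illustration.

```python
import statistics


def ols_slope(x, y, w=None):
    """Weighted least-squares slope for y = beta * x (no intercept)."""
    w = w or [1.0] * len(x)
    num = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))
    den = sum(wi * xi * xi for wi, xi in zip(w, x))
    return num / den


def huber_slope(x, y, c=1.345, max_iter=100, tol=1e-10):
    """Huber-weighted slope via iteratively reweighted least squares.

    c is an assumed default tuning constant; the residual scale is the
    median absolute residual divided by 0.6745.
    """
    beta = ols_slope(x, y)
    for _ in range(max_iter):
        resid = [yi - beta * xi for xi, yi in zip(x, y)]
        scale = statistics.median(abs(r) for r in resid) / 0.6745
        cutoff = c * max(scale, 1e-12)  # guard against a zero scale
        # Full weight inside the cutoff, shrinking weight outside it.
        weights = [1.0 if abs(r) <= cutoff else cutoff / abs(r) for r in resid]
        new_beta = ols_slope(x, y, weights)
        if abs(new_beta - beta) < tol:
            return new_beta
        beta = new_beta
    return beta


# Hypothetical data: seven points on the line y = 0.5 * x plus one outlier.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
y = [0.5, 1.0, 1.5, 12.0, 2.5, 3.0, 3.5, 4.0]

beta_ols = ols_slope(x, y)
beta_huber = huber_slope(x, y)
print(f"OLS slope: {beta_ols:.3f}, Huber slope: {beta_huber:.3f}")
```

In this toy example the single outlier pulls the unweighted slope away from 0.5, while the Huber-weighted slope stays close to it. Huber weights downweight large residuals only, so they offer less protection against outliers that also have high leverage, such as estimates at very high temperature changes.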

Duplicate publication is an identification issue, raised in the medical literature (Tramèr et al. 1997; Gøtzsche 1989), that is currently unaddressed in the climate economics literature. Unlike the medical literature, which discusses the potential bias from overt and covert re-publication of scientific results (e.g., with and without cross-references to original reports or data), we are concerned with potential bias from the common practices of updating climate-damage estimates over time and calibrating climate-model damage functions based on previous estimates in the climate damage literature. Tramèr et al. (1997) address duplication by excluding duplicate estimates.

4 Data

For our study’s dataset, we combine the studies included in the most recent analyses by Tol (2014, 2015b)Footnote 15 and Newbold and Marten (2014). We double-check estimates—including re-aggregating damage estimates using GDP weights (see Appendix A1)—and correct errors when present (see Appendix A2). We also searched for new damage estimates by: (1) including updates of previous damage estimates; (2) reviewing publications of already-included authors; (3) reviewing the damage estimates underlying the studies cited in the most up-to-date meta-analysis of the SCC (Havranek et al. 2015); and (4) searching Google Scholar and Econlit.Footnote 16 We include an estimate if it meets the following conditions: global, unique (i.e., not a re-running of a previously cited IAM or an IAM copying a previously cited IAM), and dating after 1993 [the start of modern climate damage estimates (Tol 2009)] and before 2016. Following the U.S. Environmental Protection Agency’s (EPA) (2006) recommendation, we include only one estimate per study,Footnote 17 unless multiple estimates were deemed potentially independent.Footnote 18 We assembled 49 damage estimates from 41 studies, using six estimation strategies; 24 primary authors (including ten estimates from Nordhaus); and 22–27 models (depending on the definition). Of these estimates, 11 are drawn from the gray literature as a means of addressing publication bias.Footnote 19

After assembling the data, we drop estimates that do not meet our a priori selection criteria. We are interested solely in global willingness to pay to avoid climate change, and drop all studies that measure impacts in terms of compensating surplus (CS): Maddison (2003), Rehdanz and Maddison (2005), and Maddison and Rehdanz (2011); we conduct sensitivity analysis to this assumption in Appendix C in the Supplementary Material.Footnote 20 In the case of Meyer and Cooper (1995), who apply the value of statistical life from OECD nations and use parity unit damage valuation to derive an estimate of the amount society is willing to accept for climate damages, we undo these adjustments to derive a corresponding willingness to pay estimate; see Appendix A3. Additionally, we exclude all estimates that arbitrarily cap damages based on author discretion: Nordhaus (1994a) and Nordhaus and Yang (1996).Footnote 21 Finally, we drop cross-sectional studies at the national level that do not address omitted variable bias: Choiniere and Horowitz (2006). This leaves 43 data points from 35 studies.

Given that many of the estimates are updates or citations of previous estimates—giving a false sense of precision—we further limit our observations to prevent duplication bias (i.e., double counting) (Gøtzsche 1989; Tramèr et al. 1997; Nelson and Kennedy 2009). We define a study as a duplicate if it is not the most up-to-date estimate by an author utilizing a particular method or if the estimate cites already-included estimates.Footnote 22 After dropping estimates that correspond to this definition, 26 non-duplicate estimates from 20 studies remain.
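The duplicate definition above can be read as a two-step filter. The Python sketch below shows one possible reading, not the procedure actually applied to the dataset: the `Estimate` record, its field names, the processing order (oldest first), and the sample records are hypothetical, and "cites already-included estimates" is interpreted as "builds on any estimate that has survived the filter so far".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    study: str            # hypothetical study label
    author: str
    method: str
    year: int
    based_on: tuple = ()  # labels of studies this estimate uses as inputs


def drop_duplicates(estimates):
    """Keep the latest estimate per (author, method), then drop any estimate
    that builds on a study already kept."""
    latest = {}
    for e in estimates:
        key = (e.author, e.method)
        if key not in latest or e.year > latest[key].year:
            latest[key] = e

    kept, included = [], set()
    for e in sorted(latest.values(), key=lambda e: e.year):
        if any(s in included for s in e.based_on):
            continue  # derived from an estimate that is already included
        kept.append(e)
        included.add(e.study)
    return kept


# Hypothetical records, for illustration only.
records = [
    Estimate("a1", "A", "enumerative", 1995),
    Estimate("a2", "A", "enumerative", 2008),             # update of a1
    Estimate("b1", "B", "cge", 2012, based_on=("a2",)),  # calibrated on a2
    Estimate("c1", "C", "panel", 2015),
]

kept = drop_duplicates(records)
print([e.study for e in kept])
```

Here `a1` is dropped because `a2` supersedes it, and `b1` is dropped because it builds on the already-included `a2`.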

We code multiple damage, temperature, and methodological variables. D_new is the damage estimate cited in the reviewed paper. T_new is global average mean surface temperature increase in degrees Celsius relative to the pre-industrial or current time. For studies that estimate the impact of an increase in global land temperature, we multiply their temperature change by the ratio between global land and surface temperatures to convert to global surface temperature; see Appendix A2. We take care to distinguish studies that include non-market damages or catastrophic damages or allow for an effect through changes in productivity.Footnote 23 Similarly, we distinguish between cross-sectional and panel data (i.e., the inclusion of country fixed effects) and we account for the type and date of each study. Finally, we classify studies by author, estimation method, and model type. See Table 1 for a summary of the data before and after we remove duplicate estimates.

The mean damage estimate increases from 6.3% of GDP to 9.5% when we remove duplicate estimates (a similar increase occurs for the median and temperature-weighted mean damage estimates). Looking at Figure A1 in Appendix A, we can also see that the bulk of estimates for both datasets are below 4 \(^{\circ }\)C even after eliminating duplicate estimates, highlighting the potential for high-temperature estimates to have a disproportionate effect on meta-regression results. In particular, local polynomial smoothing of damages on temperature change (see Figure A2 in Appendix A) shows that the impact of duplicate estimates is potentially dependent on the treatment of high-temperature damage estimates.

Damage estimates also differ by author, estimation method, and model. In particular, damage estimates derived using science-based and survey methodologies tend to be higher, though there are a handful of high enumerative and statistical damage estimates. Furthermore, damage estimates derived by Tol using the enumerative strategy appear to be lower than other enumerative estimates. Given that the estimates from the early waves tend to be lower than estimates from later waves, it is unsurprising that—contrary to the findings of Tol (2009)—damage estimates appear to be increasing over time, though the results are generally insignificant.Footnote 24

5 Econometric Specification

Following historical precedent (of climate damages increasing at an increasing rate in temperature), and to preserve degrees of freedom, we assume a quadratic damage function with no initial benefits from climate change, such that \(g\left( T \right) =T^{2}\) in Eq. (2). Unlike previous papers, we control for whether temperature is measured relative to pre-industrial or current temperatures by including an adjustment term \(\theta _j \)—as measured by the difference in temperature in the base year (or period) of the study relative to the pre-industrial periodFootnote 25—such that Eq. (2) simplifies to

where we assume that there are no climate damages for a 0 \(^{\circ }\)C increase [a standard assumption implying \(\gamma =0\) in Eq. (2)] and t2 is our new temperature-squared variable that accounts for its corresponding estimate’s reference period. If we relax the implicit assumption in Eq. (3) that there are no methodological variables, and if we account for clustering (i.e., dependence), we can rewrite this expression as

where k is the cluster level (author, estimation method, and model) and \(X_{j,k,l}\) is an indicator variable for estimate j and category \(l \in \left\{ mkt, cat, prod, cross \right\}\), which is equal to one if estimate j captures only market impacts (i.e., omits non-market impacts), includes catastrophic impacts, accounts for productivity, and corresponds to a cross-sectional estimate without country fixed effects, respectively.Footnote 26 This expression simplifies to

where \(\varepsilon _{j,k} \sim N\left( {0,\sigma ^{2}} \right) \).
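To make the regressors concrete, the following is a minimal sketch of how one row of explanatory variables could be assembled from the indicator variables described above. The record layout and function name are hypothetical, and the column order (t2, mkt_t2, cat_t2, prod_t2, cross) is only our reading of the variable names used in the text; it is not the authors' code.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Estimate:
    """One damage estimate. Field names are illustrative, not the authors' schema."""
    t2: float   # reference-period-adjusted temperature-squared term
    mkt: int    # 1 if only market impacts are captured
    cat: int    # 1 if catastrophic impacts are included
    prod: int   # 1 if productivity effects are accounted for
    cross: int  # 1 if cross-sectional without country fixed effects


def design_row(e: Estimate, extended: bool = True) -> List[float]:
    """Return [t2, mkt_t2, cat_t2] and, if extended, also [prod_t2, cross]."""
    row = [e.t2, e.mkt * e.t2, e.cat * e.t2]
    if extended:
        # Assumption: productivity enters as an interaction, cross in levels.
        row += [e.prod * e.t2, float(e.cross)]
    return row


# Hypothetical estimate: market-only, with productivity, cross-sectional.
example = Estimate(t2=9.0, mkt=1, cat=0, prod=1, cross=1)
print(design_row(example))
print(design_row(example, extended=False))
```

The `extended` switch mirrors the distinction between the smaller and the extended sets of explanatory variables.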

Given our study’s small sample size, we estimate Eq. (4) using WLS with clustered standard errors assuming that we reject the null hypotheses of homoskedasticity and independent observations. In terms of addressing heteroskedasticity, we do not observe measurement error or the number of observations for many global climate damage estimates. Since the literature finds that damage estimates are more uncertain for higher temperature changes (Tol 2009, 2014; Burke et al. 2015), we instead weight estimates by the inverse of temperature change to place lower weight on the more uncertain damage estimates (Day 1999). In terms of addressing dependence of estimates, there are multiple scales of potential dependence: author, estimation method, and model. Therefore, we cluster at the scale for which there is the strongest empirical evidence of dependence, in addition to carefully choosing control variables and selecting only one estimate per study (except when potential independence cannot be rejected).
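The estimator described above can be illustrated with a deliberately reduced sketch: a single regressor (temperature squared) through the origin, weights equal to the inverse of the temperature change, and a cluster-robust sandwich standard error. All data values and the function name are hypothetical, and no small-sample correction is applied, so the output will not match any particular statistical package.

```python
from collections import defaultdict
from math import sqrt


def wls_cluster(x, y, w, groups):
    """WLS slope through the origin with a cluster-robust standard error.

    x, y, w, groups are equal-length sequences; w holds the weights and
    groups holds a cluster label (for example, the model) per estimate.
    """
    sxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    beta = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y)) / sxx
    # Sum the weighted scores w * x * residual within each cluster,
    # then square and add across clusters (the "meat" of the sandwich).
    score = defaultdict(float)
    for xi, yi, wi, g in zip(x, y, w, groups):
        score[g] += wi * xi * (yi - beta * xi)
    meat = sum(s * s for s in score.values())
    return beta, sqrt(meat) / sxx


# Hypothetical inputs: temperature change (deg C), damages (% of GDP), model label.
temps = [1.0, 2.5, 2.5, 3.0, 3.2]
damages = [0.3, 1.5, 2.1, 2.5, 5.1]
models = ["A", "A", "B", "B", "C"]

t2 = [t ** 2 for t in temps]
weights = [1.0 / t for t in temps]  # inverse-temperature weights
beta, se = wls_cluster(t2, damages, weights, models)
print(f"slope on t2: {beta:.4f}, cluster-robust s.e.: {se:.4f}")
```

Summing scores within clusters before squaring is what allows errors to be correlated inside a cluster; with one estimate per cluster the formula collapses to the usual heteroskedasticity-robust variance.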

To analyze the impacts of duplication and high-temperature damage estimates, we estimate expression (4) including and excluding duplicate and high-temperature damage estimates. Given our hypothesis of duplication bias, regressions that include duplicate data are used for purposes of producing a counterfactual; it should be assumed that we are referring to estimates that exclude duplicate damage estimates unless explicitly stated. Given the evidence that high-temperature damage estimates are speculative and potentially extrapolations of already included estimates, we explore the impact of a 4 \(^{\circ }\)C increase as a cut-off for lower temperature damage estimates. For analyzing the potential impact of climate change in this century, the preferred dataset excludes duplicate and high-temperature damage estimates.
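As an illustration of the four samples implied by this design, the sketch below filters a list of estimates by a duplicate flag and the 4 °C cut-off. The records, field names, and values are hypothetical; how an estimate is flagged as a duplicate is the authors' judgment and is not reproduced here.

```python
HIGH_TEMP_CUTOFF_C = 4.0  # low-temperature estimates are those at 4 deg C or less


def select(estimates, drop_duplicates, drop_high_temp):
    """Return the estimates that survive the requested exclusions."""
    kept = []
    for e in estimates:
        if drop_duplicates and e["duplicate"]:
            continue
        if drop_high_temp and e["temp_c"] > HIGH_TEMP_CUTOFF_C:
            continue
        kept.append(e)
    return kept


# Hypothetical records for illustration only.
estimates = [
    {"study": "S1", "temp_c": 2.5, "duplicate": False},
    {"study": "S1-update", "temp_c": 2.5, "duplicate": True},
    {"study": "S2", "temp_c": 3.0, "duplicate": False},
    {"study": "S3", "temp_c": 6.0, "duplicate": False},
    {"study": "S3-extrapolated", "temp_c": 12.0, "duplicate": True},
]

for dup in (False, True):
    for high in (False, True):
        n = len(select(estimates, drop_duplicates=dup, drop_high_temp=high))
        print(f"drop duplicates={dup}, drop high-temp={high}: {n} estimates")
```

In this layout the preferred dataset corresponds to both flags set to `True`, and the counterfactual samples are obtained by relaxing one flag at a time.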

Finally, given concerns about overfitting our model due to its small sample size—particularly in the preferred dataset consisting of 21 observations (excluding duplicate and high-temperature damage estimates)—we conduct our analysis using two sets of exogenous variables: a smaller set that includes only temperature squared and its interaction with the market and catastrophic indicator variables and an extended set that also includes the indicator variable for cross-sectional studies without country fixed effects and the interaction of temperature squared with the productivity indicator variable. The latter two variables are chosen for exclusion because both variables have lower correlation with damages across all datasets—particularly the preferred dataset—and productivity is strongly correlated with the market indicator variable.Footnote 27

6 Results

We test for heteroskedasticity and dependent error terms using Cameron and Trivedi’s decomposition of the IM test (see Table B1 in Appendix B) and the Breusch–Pagan test of independence (see Table B2), respectively. We consistently fail to reject the null hypothesis of homoskedasticity when we exclude high-temperature estimates and consistently reject the null hypothesis when we include them. Cameron and Trivedi’s decomposition of the IM test also tests for non-normal kurtosis and skewness, and we consistently find no evidence of either (the only exception is skewness when we include duplicate estimates and drop high-temperature damage estimates).Footnote 28 For purposes of simplicity, we present results using WLS regardless of whether we exclude high-temperature estimates; these results differ only slightly from the OLS results. We also consistently reject the null hypothesis of independent damage estimates, including at the model scale, where we reject at the 1% significance level.Footnote 29 For all datasets, we cluster error terms at the model scale.

When we regress climate damages on temperature squared and its interaction with indicator variables, the results indicate a strong relationship between temperature and climate damages; see Table 2. In particular, the regressions are all jointly significant at the 1% significance level, and the coefficient corresponding to temperature squared (i.e., non-catastrophic climate damages) is positive and significant at the 5% significance level. Additionally, catastrophic impacts are positive, but only significant at the 1% level when damage estimates for high-temperature increases (i.e., above 4 \(^{\circ }\)C) are included. As expected, we generally find that the exclusion of non-market impacts has a negative and sometimes significant effect on damages. In the extended specifications, we find that productivity has an insignificant, positive effect on climate damages, as well as strong evidence of cross-sectional bias.

Our results appear split with respect to our other two main statistical concerns: over-specification and high-temperature estimates. On the one hand, over-specification appears not to be of particular concern, given the small change in coefficients corresponding to temperature-squared and catastrophic impacts that occurs with the inclusion of the productivity and cross-sectional controls. While there are some significant changes in the coefficient corresponding to the market indicator variable, this is due to the strong correlation between the market and productivity indicator variables that results from the exclusion of non-market impacts and productivity modeling in all CGE and panel models. On the other hand, high-temperature damage estimates appear to be a critical issue, given the large change in coefficient magnitudes between Specifications (4) and (8), though only the coefficients corresponding to temperature-squared and its interaction with the market indicator variable are statistically different; this highlights a common theme in this paper that total damages are robust.Footnote 30 The signs of coefficients are almost identical when we include and exclude high-temperature estimates; again, the only difference is the coefficient corresponding to the market indicator variable when productivity is excluded, due to the high level of correlation between the market variable and productivity. As a consequence of this correlation between the market and productivity impacts of climate change in the underlying damage estimates and an increase in adjusted R-squared with the inclusion of productivity and cross-sectional controls, we choose the extended specification as the preferred specification [i.e., Specifications (4) and (8) in Table 2].

Our damage estimates are statistically higher than those of Nordhaus (2013). We reject the null hypothesis that non-catastrophic damages (the coefficient corresponding to t2) and total damages (the sum of the coefficients corresponding to temperature-squared and its interaction with the catastrophic dummy) are equivalent to the Nordhaus (2013) damage estimate of 0.2136; the only exception is for non-catastrophic damages when we include high-temperature estimates. If we also consider climate change’s impact on productivity jointly with non-catastrophic and total impacts (i.e., add the coefficient corresponding to the interaction of temperature-squared and productivity), this difference remains statistically significant.Footnote 31

In support of the main hypothesis of our paper, we find strong evidence of duplication bias when we exclude damage estimates above 4 \(^{\circ }\)C. Compared to the counterfactual dataset of low-temperature damage estimates that includes duplicate estimates, the damage coefficient corresponding to temperature-squared increases and the coefficient corresponding to catastrophic impacts declines when we drop duplicate estimates. As expected, the standard errors corresponding to these variables also increase, so that catastrophic impacts become statistically insignificant, which underscores the false precision from duplication. Though less substantial, we find similar changes for the larger dataset that includes high-temperature estimates. Using seemingly unrelated regression (SUR) to test for duplicate publication bias (a test of whether the exclusion restriction for duplicate estimates significantly impacts coefficients), we reject the null of no duplication bias at the 1% significance level for the preferred dataset that considers only damage estimates corresponding to low-temperature increases (i.e., 4 \(^{\circ }\)C or less) and fail to reject the null for the dataset containing high-temperature damage estimates.Footnote 32 Interestingly, while we find strong evidence of duplication bias, we find no evidence of publication bias.Footnote 33

We also find evidence of omitted variable bias. In particular, we consistently find the previously omitted variables (mkt_t2, cat_t2, prod_t2, and cross, as defined above in Eq. (4)) jointly significant. The adjusted R-squared statistic also increases when we include these previously omitted variables. Furthermore, we reject the null of no omitted variable bias using the Ramsey regression specification-error test for omitted variables when we include only temperature-squared and the shorter set of explanatory variables (t2, mkt_t2, and cat_t2) at the 5 and 10% significance levels. We fail to reject the null hypothesis when we use the extended set of variables (including prod_t2 and cross) and when we exclude high-temperature estimates, though not when we include high-temperature estimates. Finally, documentation on the construction of estimates also indicates that these indicator variables should be included based on theory (see Sect. 2).

For the preferred regression of impacts this century (i.e., Regression (4) in Table 2, corresponding to the regression of damages on the extended set of variables excluding high-temperature estimates), we have shown that the coefficient corresponding to our current best estimate of non-catastrophic impacts (i.e., temperature-squared) significantly increases (almost doubling) when we address duplication bias and that the resulting estimates differ from Nordhaus (2013). Given that our results demonstrate that duplication bias can significantly affect the temperature–damage relationship, it is important to determine the relative importance of duplication bias in driving the difference between our results and those of Nordhaus (2013) relative to the other forms of bias discussed earlier—omitted variable bias and measurement error—and relative to obtaining a larger dataset and improving the estimation technique.

To address this issue, we run the Nordhaus (2013) model over five datasets and sets of variables to identify the relative impact of each adjustment made from the Nordhaus (2013) estimate [Specification (1) in Table 3] to the preferred estimate [Specification (5) in Table 3]. Each step captures the impact of one particular change that we introduced: (2) correcting data and improving the estimation technique, (3) introducing additional damage estimates, (4) accounting for omitted variable bias, and (5) accounting for duplication bias. Considering non-catastrophic impacts, we find that duplication bias and introducing new data have the most significant impact on the percent change in the coefficient corresponding to temperature-squared, at 81 and 55%, respectively.Footnote 34

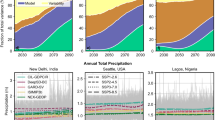

If we assume that the relationship captured in our meta-analysis represents a damage function (Tol 2009; Nordhaus 2013), we may be interested in understanding which factors (including sources of bias) have the most influence on the SCC. Using DICE, the sole IAM to currently calibrate its damage function using meta-analysis and one of three models used to calculate the official U.S. SCC (Interagency Working Group on Social Cost of Carbon 2016), we estimate the SCC and repeat the above analysis. If we consider only non-catastrophic impacts (as in the DICE-2013R damage function), addressing the above biases increases the SCC (in 2015) by three- to four-fold from Specification (1) to Specification (5), depending on whether we account for the impact of climate change on economic productivity. If we consider total climate impacts (both non-catastrophic and catastrophic), addressing the above biases increases the SCC (in 2015) by four- to five-fold depending on the treatment of productivity; see Fig. 3. When considering the percent change in the SCC from specification to specification, we find nearly identical results to the previous paragraph, indicating that duplication is one of the most significant sources of bias in previous estimates. Finally, we find that uncertainty over the SCC (as measured by the difference between the 5th and 95th percentile SCC estimates, accounting for non-catastrophic impacts only) increases from Specification (1) to Specification (5).

Fig. 3. Social cost of carbon over time calculated using Nordhaus (2013) and the preferred regression [regression (4) in Table 2], including climate impacts on economic productivity in non-catastrophic climate impacts. This figure compares the social cost of carbon over time from baseline runs of DICE-2013R (Nordhaus 2013) to runs replacing the DICE damage function with the preferred regression [i.e., Regression (4) in Table 2] with productivity impacts included. Following Nordhaus (2013), we multiply the coefficients corresponding to non-catastrophic impacts (t2 plus prod_t2) by 1.25 (a 25% upward adjustment) when constructing the damage functions to account for potential omitted non-catastrophic impacts of climate change. Excluding productivity impacts from the figure would shift down the damage functions corresponding to the preferred specification and narrow the corresponding 95% confidence interval.

Unsurprisingly given the insignificance of duplicate publication bias found earlier when considering data corresponding to all temperature increases, we find that accounting for duplication bias has a similarly small effect on the temperature–damage relationship and the SCC when including high-temperature damage estimates; see Table B3 in Appendix B. However, the corresponding 2015 SCC estimates are still far above the original DICE-2013R model, with an increase in the range of one-half to fourfold when considering only non-catastrophic climate impacts and three- to six-fold when considering total climate impacts, where the range is dependent on the inclusion of the impact of climate change on productivity. When we consider the climate impacts on productivity jointly with the non-catastrophic or total impacts of climate change, our final SCC estimates—Specification (5)—are almost identical, regardless of whether we account for high-temperature damage estimates, and considerably higher and more uncertain than the original DICE-2013R estimate.

7 Sensitivity Analysis

We conducted additional sensitivity analyses (alternative functional forms, data and variable definitions, clustering scales, and estimators; treatment of outliers; and dropping estimates, studies, and estimation methodologies one by one) for the preferred specifications [i.e., regressions (4) and (8) in Table 2]. This section discusses notable results; see Appendix C in the Supplementary Material for an in-depth discussion.

We use the Nordhaus (2013) damage functional form in our main text, despite some alternative damage functional forms (Weitzman 2012; Tol 2014, 2015b) that fit the data slightly better. These alternative functional forms increase the number of explanatory variables, running the risk of overfitting the model. Thus, they are less useful for damage predictions. Even so, we redo our primary analysis using the Tol (2014) functional form, and find strong evidence that duplication bias led to downward bias in the original Tol (2014) temperature–damage relationship; see Appendices C1 and C2.

Our primary estimates of climate impacts are quite robust to these sensitivity analyses, particularly for non-catastrophic impacts when excluding high-temperature estimates and total impacts in general. Total climate impacts tend to remain relatively constant as alternative assumptions shift impacts between the non-catastrophic and catastrophic components. A more restrictive definition of duplication that limits our choice of data to only one estimate per study (to exclude extrapolated estimates) shifts catastrophic impacts to non-catastrophic impacts (see Appendix C4) when including high-temperature estimates, while alternative estimators shift non-catastrophic impacts to catastrophic impacts (Appendix C5). While we find little evidence of non-normal error distributions, regressions that are robust to outliers (including those due to non-normal error distributions) cause small shifts from non-catastrophic to catastrophic impacts—such that total impacts remain relatively constant—as would be expected under a right-skewed distribution; see tables in Appendices C6 and C7.

Finally, earlier estimation methodologies (enumerative, CGE, cross-sectional) predict a flatter temperature–damage relationship relative to more recent methodologies (science, panel regression) and surveys; see Appendix C8. As a consequence, older methodologies tend to reduce non-catastrophic impacts and increase catastrophic coefficients; newer methodologies tend to increase non-catastrophic impacts and reduce catastrophic coefficients. Dropping the currently debated panel methodology has no effect on the results (nor does dropping cross-sectional studies), though dropping all statistical estimates results in the positive impact of productivity becoming statistically significant. Like the newer methodologies, CS estimates imply a steeper temperature–damage relationship, despite capturing only climate impacts on non-market goods and services. Even so, including these CS estimates in the preferred specifications has little impact on key results; see Appendix C9. As theorized, we find evidence that CS estimates are significantly higher than willingness to pay (WTP) estimates.

In summary, considering the large number of alternatives tested and the fact that the overall effects of the sensitivity tests are small (mostly shifting impacts from non-catastrophic to catastrophic), the results should be seen as robust.

8 Discussion

Like Nordhaus (2013), we take the extra step of interpreting the temperature–damage relationship captured by the above meta-analysis as a climate damage function. To include these meta-analysis results in DICE-2013R, we followed Nordhaus in multiplying the sum of the coefficients corresponding to non-catastrophic impacts (temperature-squared and its potential interaction with productivity) by 1.25 to account for omitted non-catastrophic climate impacts.Footnote 35 Given that we use data including and excluding high-temperature damage estimates (i.e., above 4 \(^{\circ }\)C) and two sets of control variables when implementing our estimation strategy, it is important to clarify which of our estimates is the appropriate relationship to use in modifying the DICE-2013R damage function.
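The arithmetic behind this adjustment can be checked with a short sketch. It assumes, as our reading of the text, that the coefficient is expressed in percent of GDP per degree Celsius squared and that the quadratic damage function has no intercept or linear term; the function name is hypothetical.

```python
def damage_pct_gdp(coef_pct, temp_c, omitted_adj=1.25):
    """Non-catastrophic damages (% of GDP) for a quadratic damage function.

    coef_pct    : coefficient on temperature-squared, in % of GDP per deg C^2
    temp_c      : temperature increase in deg C
    omitted_adj : multiplier for omitted non-catastrophic impacts (1.25 here)
    """
    return omitted_adj * coef_pct * temp_c ** 2


# Nordhaus (2013) coefficient cited in the text, evaluated at 3 deg C.
nordhaus_3c = damage_pct_gdp(0.2136, 3.0)
print(f"{nordhaus_3c:.2f}% of GDP")  # approximately 2.40
```

The result is consistent with the 2.4% of GDP that the paper attributes to DICE-2013R for a 3 °C increase, which suggests the units assumed in the sketch are the intended ones.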

When re-estimating the DICE-2013R damage function, it is appropriate on several grounds to exclude high-temperature estimates (i.e., above 4 \(^{\circ }\)C). First, high-temperature estimates are highly speculative in nature, given that uncertainty in climate damage estimates increases with temperature, yet including these five estimates (19% of estimates) significantly decreases the impact of temperature-squared on non-catastrophic damages by half and shifts catastrophic impacts from statistical insignificance to significance at the 1% level (see Table 2); this is despite the inclusion of weights to partially mitigate this undue influence. In contrast to the outsized impact of these estimates, the majority (approximately two-thirds) of the 2015 SCC estimate for DICE-2013R corresponds to impacts occurring this century; this result is relatively robust to the magnitude of the damage coefficient. Most high-temperature estimates are also potentially duplicates of damage estimates corresponding to temperature increases below 4 \(^{\circ }\)C, whose exclusion results in a non-catastrophic damage function more stable to the definition of duplication; see Appendix C4 in the Supplementary Material. Thus, excluding damage estimates for temperature changes above 4 \(^{\circ }\)C prevents high-temperature estimates from unduly influencing the estimated temperature–damage relationship relevant for the twenty-first century, for which estimates for approximately 4 \(^{\circ }\)C or less are more germane.

Following Nordhaus (2013), we specify a damage function corresponding to non-catastrophic damages from Regression (4) in Table 2, excluding productivity, such that the quadratic damage function parameter is equivalent to the coefficient corresponding to temperature-squared. Thus, while we control for catastrophic damages and productivity in the preferred regression specification, we exclude the corresponding estimated coefficients in the base specification of the damage function because of their mixed significance and volatility across the various specifications. Instead, given the debate over the impact of climate change on productivity and economic growth (Dell et al. 2012; Burke et al. 2015; Howard 2015), we recommend conducting an analysis of sensitivity to the inclusion of the productivity impact. Replacing the DICE-2013R damage function with our estimates of non-catastrophic damages, we find a mean 2015 SCC estimate of $68 (2015 USD) with a 95% confidence interval from $16 to $132 using our base specification, and a mean of $83 with a range of −$2 to $206 when including productivity. To put this range in context, the higher end of this SCC range implies that the United Nations’ 2 \(^{\circ }\)C limit is potentially too high from a social welfare perspective. Despite these wide confidence intervals, which highlight the extreme risk of climate change, it should be noted that these ranges underestimate uncertainty by ignoring the uncertainty underlying the data estimates themselves (due to insufficient data).

One potential solution to addressing this risk is factoring in catastrophic damages. To account for catastrophic impacts, we would ideally run a Monte Carlo simulation whereby catastrophic impacts are captured by the variance of the damage coefficient (hence the importance of improved estimation of standard errors). However, given that we do not reject the normal distribution (nor could we know the “true” distribution even if we did), we choose a normal distribution (a symmetric, thin-tailed distribution) to model error terms. If this assumption fails (due to too little data to detect it or our inability to capture the uncertainty underlying most estimates), a Monte Carlo simulation may fail to truly highlight the underlying climate risks, as highlighted by the Dismal Theorem of Weitzman (2009), and may provide little more information than the 95% confidence interval reported earlier. Furthermore, given that DICE is often run as a deterministic model, we recommend also conducting a sensitivity analysis with respect to the inclusion of catastrophic damages. Replacing the DICE-2013R damage function with our estimate of total damages (non-catastrophic and catastrophic), we find a mean 2015 SCC estimate of $97 (2015 USD) and $113 when we exclude and include productivity, respectively. Using these estimates for policy would put a higher premium on risk and keep the optimal temperature level at 2.1 \(^{\circ }\)C or lower.
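The Monte Carlo idea described here can be sketched as follows, under strong simplifications: the damage coefficient is drawn from a normal distribution and pushed through a quadratic damage function at a fixed temperature, rather than through DICE to obtain an SCC. The mean, standard error, and function names are hypothetical placeholders, not estimates from this paper.

```python
import random


def simulate_damages(mean_coef, se_coef, temp_c, n=10000, seed=0):
    """Draw damage coefficients from N(mean, se^2) and return sorted damages.

    Damages are coef * temp_c**2, in the same units as the coefficient.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    return sorted(rng.gauss(mean_coef, se_coef) * temp_c ** 2 for _ in range(n))


def percentile(sorted_vals, q):
    """Simple empirical percentile (q in [0, 1]) of an already sorted list."""
    idx = min(len(sorted_vals) - 1, int(q * len(sorted_vals)))
    return sorted_vals[idx]


# Hypothetical coefficient and standard error (% of GDP per deg C^2).
draws = simulate_damages(mean_coef=0.6, se_coef=0.2, temp_c=3.0)
low, high = percentile(draws, 0.05), percentile(draws, 0.95)
mean = sum(draws) / len(draws)
print(f"mean {mean:.2f}, 5th pct {low:.2f}, 95th pct {high:.2f} (% of GDP)")
```

Because the normal distribution is symmetric and thin-tailed, the simulated interval is roughly symmetric around the mean; swapping `rng.gauss` for a fat-tailed distribution is one way to probe the concern about tail risk raised in the text.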

Some have questioned whether this type of top-down analysis is appropriate, and thus question the validity of SCC estimates such as those derived above. For example, Tol (2009) argues that these studies should not be treated as time-series data, and that any analysis attempting to estimate a damage function should be interpreted cautiously. Citing the Intergovernmental Panel on Climate Change (2014), JEP (2015) argues against this methodology because estimates use different estimation methods and models to capture overlapping sets of impacts. In this paper, we attempt to address these shortcomings by including methodological variables that capture differences in overlapping estimates and by accounting for different estimation methods and models. While this type of meta-analysis is fraught with challenges, as critics suggest, we believe that analysts should continue to work to improve these estimates because such analyses provide valuable information about the temperature–damage relationship; in fact, some analysts have already chosen to embrace the technique (Nordhaus 2013; National Academies of Sciences, Engineering, and Medicine 2017).Footnote 36 However, analysts should also be clear about the limitations of the methodology. Furthermore, as more data become available, we should expect meta-analysis estimates to improve as analysts are able to control for a more refined set of differences between estimates (including which sectors are included, such as agriculture, sea-level rise, etc.).

Recently, there has been a new wave of advanced statistical estimates of climate damages, including bottom-up estimates (Schlenker and Roberts 2009; Hsiang et al. 2011) and global market impact estimates (e.g., Dell et al. 2012; Burke et al. 2015), that some economists may argue make the top-down approach to estimating a damage function unnecessary. However, the above techniques are also key to the bottom-up approach, given that most of these studies focus on particular sectors in developed nations only, and will require benefit-cost transfer and meta-analysis methods, as developed in this paper, to assemble global climate damage estimates. For example, there are a multitude of regional agricultural studies on the costs of climate change, and meta-analyses are necessary to estimate regional and global agricultural damage functions.Footnote 37 In the meantime, as statistical methods and data improve, the top-down approach (meta-analysis and surveys) is necessary. Climate policy cannot wait while statistical studies are perfected. Instead, we should utilize the best available estimates of total climate damages, and should update these estimates as new data become available. We believe that top-down and bottom-up approaches should converge over time, as methods improve and more data become available. Future work should aim to improve global climate damage estimates (the top-down approach) and region-sector climate damage estimates (a bottom-up approach), and utilize meta-analyses to determine whether these approaches are converging in magnitude.

9 Conclusion

This paper contributes to the literature on IAMs and climate change more generally, by offering an improved estimation of the temperature–damage relationship. Using a larger dataset than previously assembled, this paper highlights several methodological improvements that address bias present in previous meta-analyses. Specifically, due to restricted analyses resulting from limited availability of data, previous meta-analyses of climate damages suffered from multiple sources of coefficient and standard error bias: duplication bias, dependence, heteroskedasticity, measurement error, and omitted variable bias. By expanding our dataset (to include additional published and grey literature estimates), including methodological variables, clustering standard errors, and correcting the specification of temperature (to account for different reference periods), we are able to address and test for these biases. While it turns out that publication bias and the measurement error in temperature were not very significant in most cases, duplication bias and omitted variable bias significantly impact the temperature–damage relationship. According to the preferred specification, non-catastrophic damages are likely between 7 and 8% of GDP for a 3 \(^{\circ }\)C increase (from the pre-industrial period), compared to the 2.4% predicted by DICE-2013R (Nordhaus 2013), and are between 9 and 10% when factoring in catastrophic risks.

We also demonstrate that decisions regarding the treatment of high-temperature estimates (exceeding 4 \(^{\circ }\)C) are critical in determining the temperature–damage relationship. When we include these high-temperature estimates, our range of non-catastrophic damage estimates shifts to 4–8% for a 3 \(^{\circ }\)C increase. The range of total damage estimates shifts to 7–11% for a 3 \(^{\circ }\)C increase when we include these high-temperature estimates. In presenting these findings, we highlight the need to carefully consider the relevant time period over which the temperature–damage relationship is identified.

We can interpret the temperature–damage relationship estimated above as a damage function (as done in DICE-2013R). Using the preferred specification, we find that the 2015 SCC increases by approximately three- to four-fold depending on the treatment of productivity. Applying a SCC of this magnitude globally implies that aggressive climate action that limits the maximum temperature increase to below 2.5 \(^{\circ }\)C is optimal. Factoring in catastrophic risks increases the SCC range and further restricts the optimal maximum temperature increase to 2.1 \(^{\circ }\)C or lower.

DICE-2013R has yet to be integrated into the U.S. government’s analysis, which currently stops at DICE-2010 (Interagency Working Group on Social Cost of Carbon 2016) and thus relies on the previously employed enumerative calibration strategy. This paper highlights how the U.S. government could re-estimate the DICE-2013R damage function to meet previously set government standards for meta-analyses (U.S. Environmental Protection Agency (EPA) 2006). Making this sole change would increase the resulting SCC of the Interagency Working Group on Social Cost of Carbon by one-and-a-half to twofold. While the Trump Administration disbanded the Interagency Working Group in 2017, U.S. agencies will still need to calculate the cost of greenhouse gas emissions, and our above analysis still applies.

Notes

While recent papers investigate other potential forms of climate damage functions—for example, Dietz and Stern (2015) include direct effects of temperature change on capital stock and total factor productivity—for purposes of clarity we restrict ourselves to the dominant form as stated.

There are authors who find the difficulty of estimating damage from unprecedented climate change so daunting that they lean towards seeing it as fundamentally unknowable. Examples of this view include: Pindyck (2013)’s statement that “more than temperature change itself, economic impact may be in the realm of the ‘unknowable’”; Heal and Millner (2014)’s view that “we also have to recognize, however, that many of the most important empirical uncertainties, such as...the adaptability of future societies to an altered climate, are fundamentally unknowable”; and Wagner and Weitzman (2015)’s questioning of “how can we know the relevant probabilities of rare extreme scenarios that we have not previously observed and whose dynamics we understand only crudely at best? The true numbers are largely unknown and may simply be unknowable.” We greatly respect these positions, but naturally we still believe, as researchers conducting a meta-analysis, that it is worth trying to quantify despite the daunting challenges.

While duplication bias is not explicitly cited in Ackerman and Stanton (2010), they recognize that a similar selection procedure for Tol (2008)’s meta-analysis of SCC estimates may result in bias because approximately 50% of the estimates were drawn from his own papers, which tend toward the lower end of the range (Van den Bergh and Botzen 2015).

Mendelsohn et al. (2000b) produce two estimates using statistical and experimental methodologies, though we classify the latter as statistical when clustering due to likely dependence. See Supplementary Material.

Because some CGE models capture the impact of lost ecosystem services on GDP (e.g., Bosello and Parrado 2014), we conduct analysis of sensitivity to this assumption.

Unlike other CGE model estimates, Bosello and Parrado (2014) attempt to capture transitional costs.

The ongoing debate over whether the impacts of weather capture changes in the climate boils down to whether short-run and long-run adaptation are similar and whether impacts intensify over time (Dell et al. 2014; Burke et al. 2015; Nordhaus 2015; Hsiang 2016). Just as we do not question the various identification strategies employed in cross-sectional regressions to address omitted variable bias, we include panel estimates without questioning the method’s identification strategy. Instead, we analyze sensitivity to their exclusion in Appendix C in the Supplementary Material, and find that the exclusion of panel estimates has little effect on our primary results.

In the former methodology, Weitzman (2012) estimates impacts of 50 and 99% of global GDP for 6 and 12 \(^{\circ }\)C increases (Ackerman et al. 2012), respectively, based on the share of human habitat that will be inhospitable. While the 12 \(^{\circ }\)C estimate is based on a global limit from Sherwood and Huber (2010), the 6 \(^{\circ }\)C estimate internalizes regional limits, such as the Middle East becoming inhospitable by the end of the century under a Business As Usual scenario (Pal and Eltahir 2015). In the latter methodology, Nordhaus (2014) calibrates the DICE-2013R’s damage function assuming that the 2 \(^{\circ }\)C limit is optimal under alternative constraints: a strict 2 \(^{\circ }\)C limit and an average increase of 2 \(^{\circ }\)C over the 2050–2250 period.

In general, global damage estimates become less accurate (i.e., have higher variance) the farther out into the future that they predict (Van den Bergh and Botzen 2015). While there are no enumerative-based estimates for above 3 \(^{\circ }\)C, an enumerative estimate is likely to become less accurate as the climate scenario for which it makes a prediction moves farther and farther from the studies that underlie it; this is also the case for the similarly calibrated CGE studies. Surveys rely on expert elicitation, which is likely to become more unreliable as experts are asked to make predictions for future scenarios farther away from current observations (i.e., time periods and warming levels). Statistical (cross-sectional and panel) estimates are based on observed variation, and become less accurate (i.e., there is an increase in variance) the larger the out-of-sample prediction that is required. In cross-sectional studies, the higher the temperature change predicted, the more nations enter unobserved temperature levels. Similar issues also arise in out-of-sample prediction using panel regression, where “average annual temperatures in a country are almost never more than 2 \(^{\circ }\)C from their long-run historical mean” (Dell et al. 2014). Finally, while scientific estimates seem the most likely to be accurate for high-temperature increases, the predictions are only as accurate as the climate models upon which they are based (see disparity between Sherwood and Huber 2010; Pal and Eltahir 2015 at 6 \(^{\circ }\)C), the extent to which other limits to human adaptation occur earlier, and/or the extent to which human ingenuity is able to overcome the limitations of heat stress.
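As a textbook illustration of why variance grows with the distance of an out-of-sample prediction, consider a simple linear regression of damages on a single regressor \(x\) with homoskedastic error variance \(\sigma ^2\). This setup, and the symbols \(x_0\), \(\bar{x}\), \(n\) and \(\sigma ^2\), are assumptions made for exposition and are not the specification estimated in this paper. Under those assumptions, the variance of the fitted mean at a prediction point \(x_0\) is

\[ \operatorname{Var}\left( \hat{y}_0 \right) = \sigma ^2 \left[ \frac{1}{n} + \frac{\left( x_0 - \bar{x} \right) ^2}{\sum _{i=1}^{n} \left( x_i - \bar{x} \right) ^2} \right] \]

so the second term, and hence the variance, increases with the squared distance between \(x_0\) and the sample mean \(\bar{x}\) of the observed data.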

Some of these damage estimates corresponding to high-temperature increases are potential leverage points that may confound OLS regression and other low-breakdown-point estimators (Verardi and Croux 2009; Alma 2011). See the Supplementary Material for a more thorough discussion of leverage points and their identification.

The normality assumption may not hold, causing inefficient coefficient estimates and biased standard errors, if error terms are right-skewed due to more negative than positive climate surprises (Tol 2009). We estimate robust regressions partially to address this issue; see the Supplementary Material.

When measurement error is unobserved, some meta-analyses use the sample size of the study (n) to construct a weight \(\left( {\sqrt{n}} \right) \) because studies with larger sample sizes tend to be more accurate (Day 1999; Nelson and Kennedy 2009). In the case of climate change, the number of observations is unobserved for non-statistical based estimates.
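The sample-size weighting described in this note can be sketched as follows. This is a minimal illustration, not the estimation code used in this paper: the function name `wls_fit`, the toy numbers, and the no-intercept quadratic form are all assumptions, and we assume one reading of the weight, namely that \(\sqrt{n}\) multiplies each observation (so each squared residual is effectively weighted by \(n\)).

```python
import numpy as np

def wls_fit(X, y, row_weight):
    """Weighted least squares by row scaling (hypothetical helper).

    Each row of X and y is multiplied by row_weight[i]; ordinary least
    squares on the scaled data then minimises
    sum_i row_weight[i]**2 * (y_i - x_i @ beta)**2.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    w = np.asarray(row_weight, dtype=float)
    beta, *_ = np.linalg.lstsq(X * w[:, None], y * w, rcond=None)
    return beta

# Invented numbers for illustration only; not taken from any study.
temp = np.array([1.0, 2.0, 2.5, 3.0])      # warming in degrees C
damage = np.array([0.2, 1.0, 1.4, 2.3])    # damages in % of GDP
n_obs = np.array([30, 120, 60, 200])       # study sample sizes

row_weight = np.sqrt(n_obs)                # the sqrt(n) weight
X = (temp ** 2)[:, None]                   # assumed form: damage = b * T^2
beta = wls_fit(X, damage, row_weight)
```

Studies with larger `n_obs` pull the fitted coefficient toward their own estimates. For the non-statistical estimates, `n_obs` does not exist, which is why this weight cannot be constructed for them.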

The search terms were: “percentage of GDP”, “climate change”, & global; “percent of GDP”, “climate change”, & global; “percent GDP”, “climate change”, & global; “% of GDP”, “climate change”, & global; “% GDP”, “climate change”, & global; “climate change” & “world output”; “Estimated impact of global warming on world output”; “climate change”, “economic impact”, & global; “climate change” & “global impact”.

When it was necessary to select only one estimate, we chose the estimate that utilizes the Business As Usual scenario, the most climate information available (e.g., temperature and precipitation changes), and GDP weights.

There are eight studies that present two potentially independent estimates using alternative temperatures or methodologies. We conduct a sensitivity analysis in which we retain one data point from each of these studies instead of two.

While testing for publication bias would be ideal, it is not possible in this context because many estimates do not provide standard errors. Thus, the Egger and Begg test statistics for publication bias cannot be constructed.

Standards for meta-analysis recommend either studying comparable estimates only or controlling for the underlying differences using explanatory variables. We choose the former approach in this case. Given that the vast majority of damage estimates are willingness to pay (WTP) estimates, we analyze WTP to avoid climate damages and drop CS welfare measures (which combine how much climate losers are willing to accept for their worsening climate and how much climate winners are WTP for their improving climate). Given that CS is the appropriate welfare measure “assuming rights to the existing climate” (Maddison and Rehdanz 2011) and implies higher damages per temperature increase than WTP (hence, Tol (2015a) finds that Maddison and Rehdanz (2011) is an outlier), our study represents a lower bound estimate of the effects of climate on wellbeing. While it is tempting to choose the latter approach by including an indicator variable equal to one if the estimate measures CS, a confounding factor is that these three estimates are also the sole estimates to exclude market impacts of climate change; this makes the interpretation of the indicator variable difficult. Even so, we conduct sensitivity analysis to this approach and show that it has little impact on the final results; see Appendix C in the Supplementary Material.

With respect to dropping estimates based on already included estimates, we make exceptions for Hanemann (2008), as adjusted by Ackerman et al. (2012), Meyer and Cooper (1995), Roson and Van der Mensbrugghe (2012) and Bosello and Parrado (2014), where we believe that the authors used the original estimates (Nordhaus 2008; Fankhauser 1995; Tol 2002) as a starting point for their own estimates, such that the final estimates represent a significant departure from the original estimates. We conduct sensitivity analysis only for the first two exceptions, given that ENVISAGE (Roson and Van der Mensbrugghe 2012) and ICES (Bosello and Parrado 2014) overlap with FUND only in minor ways.

We assume that (1) all science-based damage estimates account for catastrophic impacts, and (2) panel estimates of the temperature–damage relationship (Dell et al. 2012; Burke et al. 2015) capture the effects of climate change on GDP via productivity because they measure the effect of climate change on economic growth.

Following Tol (2009), we regress damages on Time, finding that damage estimates (insignificantly) increase over time. To check our results for robustness, we regress damages on time and time squared with and without controlling for temperature. In general, the time variables are insignificant, except when we include only the linear time and temperature terms; in this case, damage estimates significantly increase in magnitude over time.

In our dataset, this adjustment is captured using a 5-year average of NASA’s global annual mean land-ocean temperature index relative to 1880 (http://data.giss.nasa.gov/gistemp/graphs_v3/Fig.A2.txt).
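The smoothing and re-baselining described in this note can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure used to build the dataset: the function names are hypothetical, the 5-year window is assumed to be centered on the year of interest, the baseline is assumed to be the unsmoothed 1880 annual value, and the toy series is invented rather than read from the NASA file.

```python
def five_year_mean(anomaly_by_year, year):
    """Centered 5-year mean of annual anomalies (window choice is an assumption)."""
    window = [anomaly_by_year[y] for y in range(year - 2, year + 3)]
    return sum(window) / len(window)

def warming_since_baseline(anomaly_by_year, year, baseline_year=1880):
    """Smoothed anomaly for `year`, expressed relative to the baseline year's annual value."""
    return five_year_mean(anomaly_by_year, year) - anomaly_by_year[baseline_year]

# Invented anomalies (degrees C) for illustration only.
toy_index = {1880: -0.20, 1998: 0.60, 1999: 0.40, 2000: 0.40, 2001: 0.50, 2002: 0.60}
adjustment = warming_since_baseline(toy_index, 2000)
```

A trailing window or a smoothed baseline would be equally easy to substitute; the note itself does not specify which variant applies.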

Of the cross-sectional studies, the studies that analyze the G-Econ dataset (e.g., Nordhaus 2006)—which consists of GDP and climate data at the sub-national scale—include country fixed effects.

Furthermore, the indicator variable for productivity is also perfectly correlated with CGE and panel models, though not with statistical models in general.

Cameron and Trivedi’s decomposition of the IM test also tests for homoskedastic normal error terms. While we consistently fail to reject the null of normal kurtosis and skewness, we reject the null of a normal error distribution, except when we exclude high-temperature damage estimates and include the full set of explanatory variables (i.e., the preferred set of assumptions). Given that we fail to reject normal kurtosis and skewness, we assume that this rejection is primarily driven by heteroskedasticity.

At the author scale, we reject the null hypothesis of independence at the 5 and 10% significance levels when we include or exclude high-temperature damage estimates, respectively. At the method scale, we reject the null hypothesis at the 5% significance level.

We utilize seemingly unrelated regression (SUR) to test for statistical difference in the climate damage impacts when we account for high-temperature estimates. In terms of statistical significance, only the coefficients corresponding to temperature-squared and its interaction with the market indicator variables significantly differ between the inclusion and exclusion of high-temperature damage estimates, such that only non-catastrophic impacts differ, but total impacts do not.

Using SUR, we reject the null that the coefficients corresponding to temperature squared and its interactions are equal when we do and do not account for duplication at the 1% significance level when considering only damage estimates for low-temperature increases. At the 5% significance level, the difference is also significant when we consider only the coefficient corresponding to non-catastrophic damages (i.e., temperature squared) and total damages (i.e., temperature squared and its interaction with the catastrophic indicator variable), regardless of whether we consider productivity jointly. When we include high-temperature estimates, we fail to reject the null of no duplication bias for non-catastrophic damage and total damages.

Including an indicator variable for grey literature and/or its interaction with temperature-squared in all specifications in Table 2, we fail to reject the null of no publication bias. Of the twenty-four different specifications, we find only one instance where we reject the null of no publication bias at the 10% significance level.

We can check whether the order of adjustment matters. When we reverse the order of the last two adjustments, such that (4) is duplication bias and (5) is omitted variable bias, the ranking changes slightly. Instead of duplication bias being the primary change, it is now of similar magnitude to new data, with an impact of approximately 50%. Omitted variable bias goes from 10% to 34% to become the third most important adjustment.

Most of the underlying estimates systematically omit key climate impacts that could significantly increase climate damages, including socially-contingent climate impacts (migration, social and political conflict, and violence), ocean acidification, etc. (Howard 2014; Revesz et al. 2014). Given that some of the climate damage estimates in this study are over two decades old, and considering the positive coefficient corresponding to Time, we should expect climate damage estimates to continue to increase over time as currently omitted climate impacts are captured in future damage estimates. Given this bias, an adjustment upward of the temperature–damage relationship—such as the 25% adjustment employed by Nordhaus (2013)—may be justifiable when using meta-analysis results to calibrate an IAM damage function.

The National Academies of Sciences, Engineering, and Medicine (2017) recognizes meta-analysis of global damage estimates as one of three methods that the Interagency Working Group on the Social Cost of Carbon can use to calibrate an IAM’s damage function, with the caveat that such analyses must address the dependence of damage estimates.

Another alternative, laid out by Kopp et al. (2013), is to develop an infrastructure that uses statistical (for example, Bayesian) methods to update damage functions as new estimates become available.

Abbreviations

- BAU: Business As Usual
- \(\hbox {CO}_{2}\): Carbon dioxide
- CS: Compensating surplus
- CGE: Computable general equilibrium
- DICE: Dynamic Integrated Climate-Economy model
- ENVISAGE: Environmental Impact and Sustainability Applied General Equilibrium Model
- EPA: Environmental Protection Agency of the U.S.
- FUND: Framework for Uncertainty, Negotiation and Distribution
- GLS: Generalized least squares
- IAMs: Integrated assessment models
- IWG: Interagency Working Group on Social Cost of Carbon
- IPCC: Intergovernmental Panel on Climate Change
- ICES: Inter-temporal Computable Equilibrium System
- JEP: Journal of Economic Perspectives editors

- NAS:

-