Abstract

The pupillographic sleepiness test (PST) is an accurate predictor of alertness failure and performance impairment across sleep deprivation. At 11 min in duration, the task is considered too long to be used in occupational or roadside settings. We therefore investigated the predictive capacity of the PST at seven shortened test durations. Eighteen healthy young adults (aged 21.4 ± 3.2 years, 10 men) underwent 40 h of continuous wakefulness, completing an 11-min PST and a 10-min psychomotor vigilance task (PVT) every 2 h. Waking electroencephalography was recorded and scored for microsleeps during PVTs. The PST was divided into eight equal 82.5-s blocks and the predictive capacity of the pupillary unrest index (PUI) calculated at descending PST durations by systematically removing blocks. PUI increased significantly with time awake for all test durations (p < .0001), with a similar amplitude of PUI observed for test durations of 5.5 min and longer. While all test durations accurately predicted PVT impairment (AUC: 0.72–0.86, p < .001) and microsleep (AUC: 0.74–0.84, p < .0001), 5.5 min was the shortest duration where accuracy remained high across level and type of impairment (AUC: 0.79–0.86). For the 5.5-min duration, the positive predictive value (PPV) and negative predictive value (NPV) were on average 50.1% and 89.4%, respectively, and were comparable to the full 11-min task (PPV: 49.2%; NPV: 91%). The PST can be shortened to 5.5 min without compromising accuracy in detecting performance impairment or physiological drowsiness. The PST is an ideal candidate for fitness-for-duty or fitness-to-drive testing, and future studies should examine its predictive capacity, at shorter durations, against operationally relevant outcomes.

Introduction

Drowsiness is a leading cause of motor vehicle crashes (MVCs), alongside alcohol intoxication and speeding (Australian Transport Council, 2011; Connor et al., 2002). Worldwide estimates suggest approximately 20% of serious MVCs are caused by drowsiness (Blanco, Biever, Gallagher, & Dingus, 2006; Connor et al., 2002), with insufficient sleep resulting in a two- to fifteen-fold increase in crash risk (Tefft, 2018). Occupations that require individuals to work shifts, particularly night shifts, are of major concern due to the synergistic impact of sleep loss and circadian misalignment on alertness (Anderson et al., 2012). Accordingly, shift workers are at a heightened risk of drowsiness-related MVCs (Crummy, Cameron, Swann, Kossmann, & Naughton, 2008; Lee et al., 2016), particularly professional drivers (Stevenson et al., 2014) or those working in healthcare settings (Anderson et al., 2018; Barger et al., 2005; Ftouni et al., 2012; Mulhall et al., 2019). A clear strategy for alleviating drowsiness-related MVCs is the development of tools to monitor and identify the drowsy state (Wolkow, Rajaratnam, Anderson, Howard, & Mansfield, 2019). While a number of devices have been developed and validated in recent years (Dawson, Searle, & Paterson, 2014), many of these are continuous monitoring devices providing a warning of the increasing drowsy state (Anderson, Chang, Sullivan, Ronda, & Czeisler, 2013; Anderson et al., 2018; Ftouni et al., 2013; Ftouni et al., 2012; Mulhall et al., 2019).

Single-time-point predictions of drowsiness-related crash risk, such as tests designed for fitness to drive or fitness for duty, would allow for early interventions. One such test is the pupillographic sleepiness test (PST), an 11-min test of pupil diameter fluctuations (Wilhelm, Wilhelm, Lüdtke, Streicher, & Adler, 1998b). In total darkness, spontaneous oscillations of pupil diameter reflect changes in autonomic nervous system activity (Oken, Salinsky, & Elsas, 2006). In the alert state, the pupil diameter is largely stable, becoming more unstable with increasing drowsiness due to fluctuations between sympathetic and parasympathetic control (Oken et al., 2006). This instability results in oscillations in pupil size, which can be captured with the pupillary unrest index (PUI) (Lüdtke, Wilhelm, Adler, Schaeffel, & Wilhelm, 1998). We and others have shown that the PUI is sensitive to increased time awake (Maccora, Manousakis, & Anderson, 2018; Regen, Dorn, & Danker-Hopfe, 2013; Wilhelm, Rühle, Widmaier, Lüdtke, & Wilhelm, 1998a), and is predictive of subsequent performance failure (attentional lapses) and increased physiological sleepiness (microsleeps and slow eye movements) (Maccora et al., 2018). Although the PUI metric within the PST is a reliable indicator of drowsiness, and therefore offers the potential for a fitness-for-duty/fitness-to-drive test, the duration of the task (11 min) is too long for a roadside test, and may not be feasible within demanding, time-constrained work environments such as healthcare settings, mining operations, and aircraft flight decks.

The feasibility of shortening an alertness test has been demonstrated previously with the psychomotor vigilance task (PVT), a sustained reaction-time test. While the full 10-min test duration is optimal for capturing sleepiness-induced performance failure, shortened test durations of as little as 3 min can adequately capture impairments following total sleep deprivation and sleep restriction (Basner & Dinges, 2011; Loh, Lamond, Dorrian, Roach, & Dawson, 2004; Roach, Dawson, & Lamond, 2006). This has subsequently led to the successful implementation of shortened test durations in healthcare settings to monitor alertness across successive night shifts (Ganesan et al., 2019), and the development of tablet-based apps to measure PVT performance in operational settings (e.g. Joggle Research, USA).

While it has been shown previously that the PST exhibits a time-on-task effect (like the PVT; Doran, Van Dongen, & Dinges, 2001), both the first and second halves of the test show separate increases across a night of sleep deprivation (Wilhelm, Wilhelm, et al., 1998b). The extent to which the PST can be shortened to make it more operationally practical and feasible remains unknown. We therefore examined whether the PST, a valid and reliable automated test of drowsiness, can be reduced in duration while retaining high accuracy for predicting performance impairment and physiological sleepiness.

Method

Participants

Eighteen healthy young adults (10 men, 8 women) aged 18–29 years (M = 21.4 ± 3.2 years) took part in the study. Participants were non-smokers, consumed less than 300 mg of caffeine per day, and had a body mass index (BMI) within the healthy range [18–35 (M = 23.45, SD = 4.31)]. They reported a habitual sleep duration of 7–9 h; habitual sleep times between 22:00 and 01:00 and wake times between 06:00 and 09:00; did not nap more than once a week; had no history of medical, psychiatric, or sleep conditions; were free of medications or substances known to affect the central nervous system; did not have any visual impairments, eye conditions, or vision corrected by surgery or corrective lenses; and had not worked shift work or travelled across two time zones in the past 3 months. Female participants were not currently pregnant or using hormonal contraception.

The sample size was based on previous sleep deprivation protocols testing the utility of ocular metrics to predict impairment. Our previous work demonstrated significant results with strong effect sizes (Ns = 10–29) (Anderson et al., 2013; Ftouni et al., 2013). With N = 18, we demonstrate 99% power to detect a medium effect size for linear mixed-model analysis. The present study was approved by Monash University Human Research Ethics Committee. Written informed consent was provided by participants prior to participation in the study, and participants were reimbursed for their time.

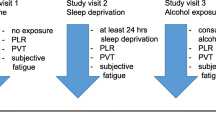

Procedure

To ensure all participants were sleep-satiated prior to admission to the study, all participants maintained a self-selected fixed 9:15 sleep/wake schedule at home for one week. Compliance with this schedule was monitored with Actiwatch-2 activity monitors (Philips Respironics, USA) and time- and date-stamped call-ins at bed and wake time. During this week, participants were required to abstain from the consumption of caffeine, alcohol, nicotine, medication, and recreational drugs, as confirmed by urine toxicology upon arrival at the laboratory. Participants were admitted to a time-isolated, temperature- (21 °C ± 1 °C) and light-controlled private room with ensuite, underwent a baseline night of sleep (8 h) scheduled at their habitual bedtime, and woke to a 40-h period of extended wakefulness under modified constant routine conditions. Here, participants were seated upright under dim light conditions (< 3 lux) and provided with hourly isocaloric snacks to equally distribute nutritional intake. Participants were provided 5 min of free movement every hour to stretch and use the bathroom. Alertness testing began 2 h post-wake, and was conducted bi-hourly thereafter. A night of recovery sleep in the laboratory was provided at the end of the 40 h.

Alertness testing battery

Bi-hourly alertness testing consisted of the pupillographic sleepiness test (PST), followed by the psychomotor vigilance task (PVT), with continuous monitoring of brain activity with electroencephalography (EEG).

Spontaneous oscillations of the pupil were monitored using the F2D2 portable PST (AMTech Pupilknowlogy, Dossenheim, Germany) [see Peters, Grüner, Durst, Hütter, and Wilhelm (2014) for a full device description]. Under conditions of complete darkness, participants fixated on a small light-emitting diode housed within a set of portable goggles, while pupil diameter was measured using an infrared video pupillometer. Test duration was 11 min, pupil diameter was sampled every 40 ms (sampling rate = 25 Hz), and the pupillary unrest index (PUI) was automatically calculated in eight 82.5-s blocks. During the test, participants were asked to open their eyes if the eyes remained shut for more than 5 s; no other conversation was permitted. The test was terminated after a minimum of 5.5 min (four blocks) if the participant was consistently unable to open their eyes long enough for the pupil to be detected.

Vigilant attention was assessed using a 10-min visual PVT (Dinges & Powell, 1985). Participants were required to respond as quickly as possible to an ascending millisecond stopwatch appearing at random intervals (2–10 s). Following a response, the participant’s reaction time was displayed on the screen before the next trial commenced. Failure to respond within 10,000 ms sounded an audible tone. Electroencephalogram (EEG: F3, F4, C3, C4, P3, P4) and electrooculogram (EOG) linked to the contralateral mastoids were recorded continuously using Compumedics Profusion 4 software (Compumedics Limited, Melbourne, Australia) and gold cup electrodes. Data was sampled at 512 Hz, with a low-pass filter at 30 Hz, a high-pass filter at 0.3 Hz, and a notch filter at 50 Hz.

Data analysis

Data cleaning

PVT responses < 100 ms were removed. Due to non-normal distribution of the data, PVT lapses (responses > 500 ms) were transformed using √n + √(n + 1), where n is the number of lapses (Basner & Dinges, 2009). EEG data during the PVT was visually scored for microsleeps, defined as an intrusion of theta or delta activity > 3 s in the absence of eye blinks. For each PST, the pupillary unrest index (PUI; changes in pupil diameter in mm/min) was automatically calculated using AMTech F2D2 software in eight bins of 82.5 s (Peters et al., 2014). Briefly, PUI is the sum of absolute changes in pupil diameter: data was reduced by calculating the average for periods of 16 consecutive values, and the absolute values of the differences from one 16-value segment to the next are summed for each 82.5-s bin. The PUI is the normalised value over a 1-min window, which is averaged for each complete 82.5-s bin [see Lüdtke et al. (1998) for full methods]. Every possible test duration was examined by systematically removing bins and manually calculating the mean PUI for the remaining bins (see Table 1). To be included in the calculation of the PUI, at least 50% of the available blocks had to contain valid data. Those tests that contained less than 50% valid data were marked as a ‘failed PST’. For all analyses of PUI metrics, failed PSTs were regarded as missing data; however, for the final analysis (pass/fail PST), they were regarded as a ‘failed’ PST due to inability to complete the test.
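The PUI steps described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the AMTech F2D2 implementation: the function names are hypothetical, the per-minute normalisation simply divides by the recorded time of the segments used, and bins are removed from the end of the test so that each shortened duration keeps the first k bins.

```python
from statistics import mean

SAMPLE_RATE_HZ = 25   # from the text: one sample every 40 ms
SEGMENT_LEN = 16      # from the text: averages over 16 consecutive values
BIN_SECONDS = 82.5    # from the text: eight bins per 11-min test


def pui_for_bin(diameters_mm, sample_rate_hz=SAMPLE_RATE_HZ, segment_len=SEGMENT_LEN):
    """Approximate PUI (mm/min) for one bin of pupil-diameter samples."""
    n_segments = len(diameters_mm) // segment_len
    if n_segments < 2:
        raise ValueError("need at least two full segments")
    # Reduce the data: one mean per block of `segment_len` consecutive samples.
    seg_means = [
        mean(diameters_mm[i * segment_len:(i + 1) * segment_len])
        for i in range(n_segments)
    ]
    # Sum the absolute change from each segment mean to the next.
    total_change = sum(abs(b - a) for a, b in zip(seg_means, seg_means[1:]))
    # Assumption: normalise to mm/min by the duration of the segments used.
    minutes = n_segments * segment_len / sample_rate_hz / 60.0
    return total_change / minutes


def mean_pui_by_duration(bin_puis):
    """Mean PUI over the first k bins for k = 1..len(bin_puis).

    `bin_puis` holds one PUI per bin, or None for a bin without valid data.
    A duration is reported as None (a 'failed PST') when fewer than 50% of
    its bins are valid. Keys are test durations in minutes.
    """
    results = {}
    for k in range(1, len(bin_puis) + 1):
        valid = [p for p in bin_puis[:k] if p is not None]
        minutes = k * BIN_SECONDS / 60
        results[minutes] = mean(valid) if 2 * len(valid) >= k else None
    return results
```

For eight bins, the dictionary keys are 1.375, 2.75, 4.125, 5.5, 6.875, 8.25, 9.625, and 11 min, which correspond to the durations reported in the text as 1.4 to 11 min.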

Time course of changes in alertness

For each PST test duration, PUI data was log-transformed, and data from the first 16 h was averaged to form a baseline measurement of rested wake performance (Anderson et al., 2013; Ftouni et al., 2013). Subsequent time points (hours 18–38) were then compared to the baseline value using linear mixed-model analysis to account for inter-individual variability and missing data. Time spent awake was modelled as a fixed factor, and participant was modelled as a random factor. A compound symmetry covariance type was used for all models, as this provided the lowest Schwarz Bayesian criterion (BIC) (Schwarz, 1978). To compare the different duration tests, a linear mixed model was run, with test duration and time spent awake modelled as fixed factors, and participant modelled as a random factor. Here, the main effect of test duration and the interaction with time spent awake were examined. An auto-regressive covariance structure was used. Post hoc pairwise comparisons were conducted within each model as required. A false discovery rate adjustment was applied to control for type I error (Benjamini & Hochberg, 1995). FDR adjusted p values (padj) are provided for all post hoc tests conducted. All statistical analyses were conducted in SPSS version 24 software (IBM, Armonk, NY).
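The false discovery rate adjustment mentioned above follows the Benjamini and Hochberg step-up procedure. The sketch below is a plain-Python illustration of that procedure, not the SPSS routine used in the study; the function name is hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p values in the input order."""
    m = len(p_values)
    # Indices of the p values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted
```

Each raw p value is scaled by m / rank, and the running minimum keeps the adjusted values from decreasing as the raw values increase, so a post hoc comparison is declared significant when its adjusted value falls below the chosen alpha.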

Receiver operating characteristic (ROC) analysis

ROC analysis was conducted using SigmaPlot version 13 (ROC Curves Module; Systat Software, Inc., San Jose, CA) to assess the accuracy of the PUI score at different PST durations in predicting performance impairment, defined here as the number of PVT lapses, and physiological sleepiness, defined as the number of microsleeps during the PVT. Three threshold increases from ‘baseline’ were defined in order to assess mild to severe impairment: 25%, 50%, and 75%. Thresholds were calculated according to previous work (Anderson et al., 2013; Chua et al., 2012), and a given time point was classified as either ‘alert’ or ‘drowsy’, depending on whether the impairment criteria (performance or physiological) fell below or above the threshold (Chua et al., 2012). Here, sensitivity is defined as the percentage of ‘drowsy’ time points that were correctly assigned a high PUI score (i.e., the percentage of times the test correctly detected a drowsy individual based on PVT lapses and microsleeps), and specificity is the percentage of times the test correctly detected an alert individual. Positive predictive value (PPV) refers to the percentage of high PUI scores that were genuinely drowsy points (i.e., the percentage of positive PSTs that were also considered impaired on PVT or EEG), and negative predictive value (NPV) refers to the percentage of low PUI scores that were genuinely alert time points. Optimal cut-off values were determined using two criteria: (1) balanced specificity and sensitivity using Youden’s J index (Youden, 1950), and (2) minimum specificity of 85% to reduce the number of false positives, which is important for roadside testing and in line with recommendations for roadside drug testing (Verstraete, 2005). Only PSTs with > 50% valid data were included in this analysis.
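The definitions above map directly onto a 2×2 confusion table. The following sketch shows how sensitivity, specificity, PPV, NPV, and the two cut-off criteria could be computed from paired PUI scores and alert/drowsy labels. It is an illustration only, not the SigmaPlot procedure: the function names are hypothetical, a PUI equal to the cut-off is assumed to count as positive, and ties between candidate cut-offs are resolved in favour of the lowest one.

```python
def confusion_counts(pui_scores, is_drowsy, cutoff):
    """Count (TP, FP, TN, FN) when a PUI at or above `cutoff` is flagged drowsy."""
    tp = fp = tn = fn = 0
    for score, drowsy in zip(pui_scores, is_drowsy):
        flagged = score >= cutoff  # assumption: ties count as positive
        if flagged and drowsy:
            tp += 1
        elif flagged:
            fp += 1
        elif drowsy:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def _ratio(numerator, denominator):
    # None signals an undefined proportion (empty denominator).
    return numerator / denominator if denominator else None


def diagnostic_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity, PPV, and NPV as proportions."""
    return {
        "sensitivity": _ratio(tp, tp + fn),
        "specificity": _ratio(tn, tn + fp),
        "ppv": _ratio(tp, tp + fp),
        "npv": _ratio(tn, tn + fn),
    }


def choose_cutoffs(pui_scores, is_drowsy, min_specificity=0.85):
    """Return (Youden's J cut-off, minimum-specificity cut-off)."""
    if not any(is_drowsy) or all(is_drowsy):
        raise ValueError("both alert and drowsy time points are required")
    best_j = youden_cutoff = None
    best_sens = spec_cutoff = None
    for cutoff in sorted(set(pui_scores)):
        m = diagnostic_metrics(*confusion_counts(pui_scores, is_drowsy, cutoff))
        sens, spec = m["sensitivity"], m["specificity"]
        j = sens + spec - 1  # Youden's J index
        if best_j is None or j > best_j:
            best_j, youden_cutoff = j, cutoff
        # Among cut-offs meeting the specificity floor, keep the most sensitive.
        if spec >= min_specificity and (best_sens is None or sens > best_sens):
            best_sens, spec_cutoff = sens, cutoff
    return youden_cutoff, spec_cutoff
```

The second cut-off is never lower than needed to reach the specificity floor, which is why the maximised-specificity cut-offs reported in the Results sit above the Youden's J cut-offs.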

Chi-square analysis of PST pass/fail

Finally, to examine the impact of failing to complete the PST due to ocular occlusion and falling asleep, each PST was dichotomised as a pass/fail. First, we utilised the cut-off scores developed in the ROC curve analysis to predict moderate impairment (performance and physiological) whilst maximising specificity (> 85%), and a ‘failed’ PST was defined as all PSTs where the PUI was above the cut-off score, or there was < 50% valid data due to eye closure. A ‘passed’ PST was any complete PST with a PUI below the cut-off score. Pearson chi-square analysis was conducted to examine the predictive capacity of each PST duration to predict moderate impairment, and sensitivity, specificity, PPV, NPV, and odds ratios were calculated. Second, adjusted cut-off scores were proposed to re-establish maximised specificity (> 85%), and adjusted values reported.
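The pass/fail rule and the 2×2 statistics described above can be illustrated as follows. This is a sketch under assumptions rather than the analysis code used in the study: the function names are hypothetical, a PUI exactly equal to the cut-off is treated as a pass, and the chi-square statistic is the uncorrected Pearson form for a 2×2 table.

```python
def classify_pst(pui, valid_fraction, cutoff):
    """Return 'fail' for an incomplete test or a PUI above the cut-off, else 'pass'."""
    if pui is None or valid_fraction < 0.5:
        return "fail"  # fewer than 50% valid data, e.g. due to eye closure
    return "fail" if pui > cutoff else "pass"


def two_by_two_stats(tp, fp, tn, fn):
    """Pearson chi-square (no continuity correction) and odds ratio for a 2x2 table.

    tp: failed PST and impaired;  fp: failed PST but not impaired;
    tn: passed PST and not impaired;  fn: passed PST but impaired.
    """
    n = tp + fp + tn + fn
    margins = (tp + fp) * (fn + tn) * (tp + fn) * (fp + tn)
    chi2 = n * (tp * tn - fp * fn) ** 2 / margins if margins else None
    # The odds ratio is undefined when an off-diagonal cell is empty.
    odds_ratio = (tp * tn) / (fp * fn) if fp * fn else None
    return {
        "chi2": chi2,
        "odds_ratio": odds_ratio,
        "sensitivity": tp / (tp + fn) if tp + fn else None,
        "specificity": tn / (tn + fp) if tn + fp else None,
    }
```

Counting incomplete tests as failures adds cases to the positive row of the table, which is consistent with the slight rise in sensitivity and slight fall in specificity reported for this analysis.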

Results

Data were obtained from 18 participants who each completed the 40 h extended wake protocol. Data from one participant were excluded due to binocular miosis in total darkness (pupil diameter < 3 mm; in addition to other data abnormalities). In total, 323 ‘alertness testing’ sessions (PVT + PST) were completed (17 participants × 19 test sessions). Of these, 322 (99.7%) PVT sessions and 322 PST sessions were included (one PST session was lost due to an inability to calibrate the device due to excessive sleepiness; 0.3% data loss). Of the 322 PST sessions, a total of 32 tests were terminated early due to interference from sleepiness-related ocular occlusions, and two tests were terminated early due to the device no longer tracking the pupil, potentially due to poor calibration. EEG was recorded from 321 (99.4%) PVT sessions, with only two excluded due to technical difficulties (0.6% data loss). As per Maccora et al. (2018), PVT lapses [F(11,176) = 15.6, p < .0001] and number of microsleeps [F(16,174) = 6.69, p < .0001] both showed significant increases from the ‘baseline’ well-rested day. Consistent with PUI, both outcomes showed peak impairment at 26 h post-wake (see Fig. 1).

Impact of test duration on time course of PUI

Table 2 shows mean PUI and number of data points included at each time point for each test duration. As can be seen, shorter test durations resulted in less data loss, as the most impaired participants who fell asleep part way through the PST were included. PUI increased significantly across time awake for all test durations (p < .0001; see Fig. 2). Post hoc comparisons revealed that PUI was higher for all time points (hours 18–38) relative to baseline (hours 2–16) for all test durations (padj < .01) except for the one-block (1.4 min) test duration: here, relative to baseline, the one-block PST exhibited higher PUI values for all time points (padj < .05) except for hour 34 (padj = .074).

Fig. 2 Bi-hourly mean (± standard error) pupillary unrest index (PUI; mm/min) for each PST duration. Panel a shows the standard 11-min test duration, and panels b–h show each shortened duration, with the 11-min duration as a comparison. Black triangles represent a significant increase from baseline (hours 2–16; padj < .01); white triangles represent a significant increase from baseline (padj < .05). Grey shaded area represents habitual sleep period.

There was a main effect of test duration on PUI score [F(7,352.1) = 13.48, p < .0001], such that compared to the full 11-min test, all test durations ≤ 5.5 min resulted in lower PUI scores (padj < .023). Additionally, the 1.4-min duration resulted in lower PUI scores than all test durations (padj < .046), the 2.8-min duration resulted in lower PUI scores than all test durations ≥ 5.5 min (padj < .023), and the 4.1-min duration resulted in lower PUI scores than all test durations ≥ 6.9 min (padj < .030). There was no interaction between test duration and time since wake [F(77,933.5) = 0.21, p = 1.00].

Impact of test duration on predictive capacity of the PUI

Ability to predict performance impairment

On average, mild performance impairment was classified as 10.27 ± 1.05 lapses (43% of tests classified as impaired), moderate impairment was classified as 19.03 ± 1.83 lapses (35% of tests classified as impaired), and severe impairment was classified as 27.78 ± 2.64 lapses (22% of tests classified as impaired). At the group level, these were comparable to 16 h, 20–22 h, and 24 h of wakefulness, respectively. All PST durations successfully predicted performance impairment at all impairment levels (p < .0001; see Table 3). For mild impairment, the area under the curve (AUC) remained constant at 0.86 for all blocks until the 4.1-min duration task. For moderate and severe impairment, the AUC was relatively stable until shorter test durations (4.1 min and 2.8 min for moderate and severe impairment, respectively). Associated recommended cut-off scores (using Youden’s J) appeared to be at approximately 9 mm/min, dropping below 8 for shorter test durations (less than 4.1 min when identifying mild impairment, but less than 2.8 min when identifying moderate performance impairment). When maximising specificity (to > 85%), cut-off scores for identifying impairment were higher, at approximately 11 mm/min (see Table 3), and were relatively stable for all test durations longer than 4.1 min (where they dropped below 10 mm/min for identifying mild impairment). Figure 3 shows ROC curves and scatter plots for four different test durations. As seen in panels d–f, optimal cut-off points remained relatively stable until the 2.8 min test duration, although there was a high number of false positives across all test durations (i.e., a PUI score above the cut-off indicating drowsiness, but no performance failure in the alert column).

Fig. 3 Receiver operating characteristic curve analysis for mild (upper panels), moderate (middle panels), and severe (lower panels) performance impairment. Panels a–c show ROC curves for four PST durations, and panels d–f show the mean PUI score for different test durations dichotomised as alert or drowsy based on PVT lapses. Solid bars represent mean PUI for each dichotomisation. Dashed horizontal line represents optimal cut-off score for each test duration (red = Youden’s J; blue = 85% specificity). Alert tests above the dashed line represent false positives (FP); alert tests below the line represent true negatives (TN); drowsy tests above the line represent true positives (TP); and drowsy tests below each line represent false negatives (FN).

When specificity was maximised, sensitivity was highest for the 11-min test duration (66.7%) for predicting mild impairment, with the highest values also reported for PPV and NPV (75.9% and 79%, respectively). The difference across all test durations 4.1 min and longer, however, was minimal, with PPV ranging from 75.2% to 75.9% and NPV ranging from 75% to 79%. This was also observed for identifying moderate and severe impairment (see Table 3 and Fig. 3).

Ability to predict physiological sleepiness

On average, mild physiological sleepiness was classified as 4.87 ± 0.75 microsleeps (29% of tests classified as impaired), moderate impairment was classified as 9.74 ± 1.50 microsleeps (20% of tests classified as impaired), and severe impairment was classified as 14.60 ± 2.24 microsleeps (12% of tests classified as impaired). At the group level, these were equivalent to 16–18 h, 22–24 h, and 26–28 h of wakefulness, respectively. Similar to performance impairment, ROC curve analysis revealed that all PST durations significantly predicted physiological impairment at all impairment levels (p < .0001; see Table 4). For mild impairment, AUC remained constant at ~0.80 for all test durations 5.5 min and longer. Using Youden’s J, recommended cut-off scores remained stable at ~10 mm/min until the 4.1-min duration, where they consistently decreased for all levels of impairment. When maximising specificity (to > 85%), cut-off scores were higher. Table 4 presents the sensitivity, specificity, and positive and negative predictive values for all test durations, and Fig. 4 shows ROC curves and scatter plots for four different test durations. As seen in Table 4, sensitivity was highest for the 5.5-min test duration (59%) for predicting mild impairment. Accordingly, the PPV and NPV were also highest (at 61% and 84%, respectively), and were improved relative to the full 11-min duration test (55% and 83%, respectively). This was not observed, however, for moderate and severe impairment, where the 11-min test appeared to perform ‘best’, although, similar to that described for performance impairment (see above), the difference between all test durations 5.5–11 min was minimal for PPV (moderate impairment, range 47.2–47.9%; severe impairment, range 30.4–31%) and NPV (moderate impairment, range 89.7–92.2%; severe impairment, range 92.5–93.8%). Reduced accuracy across test durations 4.1 min and shorter was consistent across all levels of impairment (see Table 4 and Fig. 4).

Fig. 4 Receiver operating characteristic curve analysis for mild–severe physiological sleepiness. Panels a–c show ROC curves for four PST durations, and panels d–f show the mean PUI score for individual tests dichotomised as alert or drowsy based on microsleeps. Solid bars represent mean PUI for each dichotomisation. Dashed horizontal line represents optimal cut-off score for each test duration (red = Youden’s J; blue = 85% specificity). Alert tests above the line represent false positives (FP); alert tests below the line represent true negatives (TN); drowsy tests above the line represent true positives (TP); and drowsy tests below each line represent false negatives (FN).

Ability to predict moderate impairment using a pass/fail criterion

Chi-square analysis of the dichotomised PSTs based on the ‘maximised specificity’ cut-off scores presented in Tables 3 and 4 showed that all PST durations significantly predicted moderate performance impairment and physiological impairment (p < .001). As seen in Table 5, using these cut-off scores but including ‘failed’ PSTs, sensitivity increased slightly and specificity decreased slightly, with similar odds ratios. Adjustment of cut-off scores to improve specificity back to at least 85% resulted in minor increases in cut-off scores (increases of less than 1.12) and minor changes to sensitivity and specificity.

Discussion

This paper is the first to systematically investigate the sensitivity of shortened PST durations to sleep loss, as well as the associated predictive capacity to detect performance and physiological impairment at shorter test durations. Taking into consideration various levels of impairment (mild, moderate, and severe) and various types of impairment (behavioural and physiological), our data suggest that a shortened test duration of 5.5 min has the same level of accuracy as (and in some cases better accuracy than) the full 11-min test duration, and is thus considered optimal. For operational settings where time is critical, the test may be employed at 4.1 min with relatively little compromise on accuracy, although we do not recommend test durations less than this unless impairment is severe.

The development of a short, objective, and predictive test of drowsiness is critical for early intervention, and optimal for fitness-for-duty tests and fitness-to-drive tests, including roadside testing. The PST is one of the few commercially available tests that allow for a single-time-point assessment of drowsiness, and has been previously shown to predict subsequent performance impairment and physiological sleepiness (Maccora et al., 2018). Demonstrating that the device and PUI metric accurately predict performance impairment and physiological sleepiness at shorter test durations makes this test more operationally practical for field, clinical, and roadside settings, either in experimental research studies or within an operational fatigue risk management strategy.

We observed clear time-on-task effects which were consistent with previous data showing a small time-on-task effect of the PST (Wilhelm, Wilhelm, et al., 1998b). Here, we showed a marked reduction in PUI scores for the three shortest PST durations (1.4 min to 4.1 min), as well as a reduction for the 5.5-min duration relative to the 11-min task. This suggests that longer test durations yield higher PUI scores, although no significant interaction with time awake was found. These results highlight the importance of developing individualised cut-offs for each test duration. As we observed a clear time-on-task effect on PUI, it is worth noting that a comparison of the predictive capacity of the first 1.4 min (as we have done) with the final 1.4 min of the full 11-min PST would very likely result in different predictive values and different PUI cut-off scores, as the final 1.4 min would likely yield higher mean PUI scores. However, as this lacks operational utility, we have not explored this possibility within this manuscript.

Our study provides optimal cut-off scores and ROC curve results for all test durations (see Tables 3 and 4) for any future study or operational setting that may wish to employ a shorter test duration. As all test durations, even the 1.4-min test duration, significantly predicted performance and physiological impairment above chance (all AUCs above 0.70), the test may be adjusted relative to operational needs, and our cut-off points used for decision-making (e.g., employ countermeasure, proceed to rest break, etc.). As the cut-off for accurately detecting impairment changes with decreasing test duration, it is critical that impairment thresholds are modified accordingly, akin to the changing threshold for a PVT lapse at shorter PVT test durations (Basner, Mollicone, & Dinges, 2011). Consistent with the full-duration PST, shorter duration values resulted in better predictive capacity for performance impairment than physiological impairment, and reduced predictive capacity for severe levels of impairment compared to the mild and moderate levels of impairment, with a noticeable reduction in sensitivity. The severe impairment thresholds were equivalent to approximately 24–26 h of sleep deprivation at the group level, which represented peak levels of impairment due to the additive effect of homeostatic sleep pressure and the circadian nadir in alertness (Maccora et al., 2018). Therefore, the reduced sensitivity suggests that the PUI score increases prior to reaching peak impairment and is therefore better at predicting impairment that includes earlier, milder forms of drowsiness (i.e., extended wakefulness of 16–20 h).

Although ocular metrics are highly sensitive to detecting the drowsy state (Cori, Anderson, Shekari Soleimanloo, Jackson, & Howard, 2019), the PST does rely on the eye remaining open to capture an accurate recording of the pupil and its changing diameter. As drowsiness increases, data loss on the PST increases due to the onset of microsleeps, possibly reducing its efficacy. A shortened test duration of 5.5 min therefore also results in a greater level of data retention. For instance, for the 5.5-min duration, only 2.2% (7/323) of tests were excluded from analysis, compared to 6.2% (20/323) for the full 11-min test duration. This allows for analysis of sleepier individuals who were physically unable to complete the full 11-min test without falling asleep (although operationally this would indicate a ‘failed’ test). It is worth noting that in the real world, an inability to complete the PST would typically be considered a ‘failed’ PST. Analysis of the PST using a pass/fail criterion showed that the predictive efficacy was mostly unaffected, with comparable levels of sensitivity and specificity for detecting moderate impairment. Therefore, we suggest that, when utilising a 50% data inclusion criterion, marking a PST as either ‘failed’ or ‘impaired’ results in equal predictive value, and could be used in operational or research settings.

The PVT is the most widely used tool for detecting drowsiness, largely due to its sensitivity and lack of learning effects (Balkin et al., 2004; Lim & Dinges, 2008). While considered a reliable candidate for predicting operator fatigue-related deficits, the 10-min duration was considered too long to be acceptable in operational settings, particularly when repeated administration was required (Basner et al., 2011; Dinges & Mallis, 1998). Interestingly, and somewhat consistent with our test duration outcomes, a 5-min task has been consistently shown to yield similar levels of sensitivity to sleep loss as the standard 10-min PVT (Lamond, Dawson, & Roach, 2005; Lamond et al., 2008; Roach et al., 2006), as has a modified 3-min version (Basner et al., 2011), while test durations of less than 2 min were considered too brief (Loh et al., 2004; Roach et al., 2006).

Our analysis was designed to make the PST more operationally feasible and practical. When evaluating the validity of a drowsiness detection device for fitness for duty or fitness to drive, it is essential to examine not only the capacity to detect drowsiness (i.e., time awake, or during the circadian nadir), but also performance on a different task, and ideally a task that relates most closely to job performance, particularly when job performance represents a serious risk (e.g., driving, ICU monitoring, control room operation) (Gilliland & Schlegel, 1993). While we have shown that a shortened 5.5-min PST accurately detects time awake, performance impairment, and physiological indices of drowsiness, it remains unclear whether the PST accurately predicts real-world performance outcomes such as driving or other operationally relevant outcomes. The portable nature of the F2D2 device, the objective and physiological nature of data recording, and the flexible task duration make the PST an ideal candidate for fitness-for-duty/roadside testing of drowsiness. Future field-based studies are needed, however, to demonstrate its utility in predicting operationally relevant drowsiness-related risk.

References

Anderson, C., Chang, A. M., Sullivan, J. P., Ronda, J. M., & Czeisler, C. A. (2013). Assessment of drowsiness based on ocular parameters detected by infrared reflectance oculography. Journal of Clinical Sleep Medicine, 9(9), 907-920. https://doi.org/10.5664/jcsm.2992

Anderson, C., Ftouni, S., Ronda, J. M., Rajaratnam, S. M. W., Czeisler, C. A., & Lockley, S. W. (2018). Self-reported drowsiness and safety outcomes while driving after an extended duration work shift in trainee physicians. Sleep, 41(2). https://doi.org/10.1093/sleep/zsx195

Anderson, C., Sullivan, J. P., Flynn-Evans, E. E., Cade, B. E., Czeisler, C. A., & Lockley, S. W. (2012). Deterioration of neurobehavioral performance in resident physicians during repeated exposure to extended duration work shifts. Sleep, 35(8), 1137-1146. https://doi.org/10.5665/sleep.2004

Australian Transport Council. (2011). National Road Safety Strategy, 2011-2020. Retrieved from

Balkin, T. J., Bliese, P. D., Belenky, G., Sing, H., Thorne, D. R., Thomas, M., … Wesensten, N. J. (2004). Comparative utility of instruments for monitoring sleepiness-related performance decrements in the operational environment. Journal of Sleep Research, 13(3), 219-227. https://doi.org/10.1111/j.1365-2869.2004.00407.x

Barger, L. K., Cade, B. E., Ayas, N. T., Cronin, J. W., Rosner, B., Speizer, F. E., … Harvard Work Hours, Health, and Safety Group. (2005). Extended work shifts and the risk of motor vehicle crashes among interns. N Engl J Med, 352(2), 125-134. https://doi.org/10.1056/NEJMoa041401

Basner, M., & Dinges, D. F. (2009). Dubious bargain: trading sleep for Leno and Letterman. Sleep, 32(6), 747-752. https://doi.org/10.1093/sleep/32.6.747

Basner, M., & Dinges, D. F. (2011). Maximizing sensitivity of the psychomotor vigilance test (PVT) to sleep loss. Sleep, 34(5), 581-591.

Basner, M., Mollicone, D., & Dinges, D. F. (2011). Validity and sensitivity of a brief psychomotor vigilance test (PVT-B) to total and partial sleep deprivation. Acta Astronautica, 69(11-12), 949-959. https://doi.org/10.1016/j.actaastro.2011.07.015

Benjamini, Y., & Hochberg, Y. (1995). Controlling the false discovery rate: a practical and powerful approach to multiple testing. Journal of the Royal Statistical Society. Series B (Methodological), 57(1), 289-300.

Blanco, M., Biever, W. J., Gallagher, J. P., & Dingus, T. A. (2006). The impact of secondary task cognitive processing demand on driving performance. Accident Analysis and Prevention, 38(5), 895-906. https://doi.org/10.1016/j.aap.2006.02.015

Chua, E. C. P., Tan, W. Q., Yeo, S. C., Lau, P., Lee, I., Mien, I. H., … Gooley, J. J. (2012). Heart rate variability can be used to estimate sleepiness-related decrements in psychomotor vigilance during total sleep deprivation. Sleep, 35(3), 325-334. https://doi.org/10.5665/sleep.1688

Connor, J., Norton, R., Ameratunga, S., Robinson, E., Civil, I., Dunn, R., … Jackson, R. (2002). Driver sleepiness and risk of serious injury to car occupants: Population based case control study. British Medical Journal, 324(7346), 1125-1128.

Cori, J. M., Anderson, C., Shekari Soleimanloo, S., Jackson, M. L., & Howard, M. E. (2019). Narrative review: Do spontaneous eye blink parameters provide a useful assessment of state drowsiness? Sleep Medicine Reviews, 45, 95-104. https://doi.org/10.1016/j.smrv.2019.03.004

Crummy, F., Cameron, P. A., Swann, P., Kossmann, T., & Naughton, M. (2008). Prevalence of sleepiness in surviving drivers of motor vehicle collisions. Internal Medicine Journal, 38(10), 769-775.

Dawson, D., Searle, A. K., & Paterson, J. L. (2014). Look before you (s)leep: Evaluating the use of fatigue detection technologies within a fatigue risk management system for the road transport industry. Sleep Medicine Reviews, 18(2), 141-152. https://doi.org/10.1016/j.smrv.2013.03.003

Dinges, D. F., & Mallis, M. M. (1998). Managing fatigue by drowsiness detection: can technological promises be realized?. Retrieved from Pergamon.

Dinges, D. F., & Powell, J. W. (1985). Microcomputer analyses of performance on a portable, simple visual RT task during sustained operations. Behavior Research Methods, 17(6), 652-655. https://doi.org/10.3758/bf03200977

Doran, S. M., Van Dongen, H. P. A., & Dinges, D. F. (2001). Sustained attention performance during sleep deprivation: Evidence of state instability. Archives Italiennes de Biologie, 139(3), 253-267.

Ftouni, S., Rahman, S. A., Crowley, K. E., Anderson, C., Rajaratnam, S. M. W., & Lockley, S. W. (2013). Temporal dynamics of ocular indicators of sleepiness across sleep restriction. Journal of Biological Rhythms, 28(6), 412-424. https://doi.org/10.1177/0748730413512257

Ftouni, S., Sletten, T. L., Howard, M. E., Anderson, C., Lenné, M. G., Lockley, S. W., & Rajaratnam, S. M. W. (2012). Objective and subjective measures of sleepiness, and their associations with on-road driving events in shift workers. Journal of Sleep Research, no-no. https://doi.org/10.1111/j.1365-2869.2012.01038.x

Ganesan, S., Magee, M., Stone, J. E., Mulhall, M. D., Collins, A., Howard, M. E., … Sletten, T. L. (2019). The Impact of Shift Work on Sleep, Alertness and Performance in Healthcare Workers. Scientific Reports, 9(1). https://doi.org/10.1038/s41598-019-40914-x

Gilliland, K., & Schlegel, R. E. (1993). Readiness to perform: A critical analysis of the concept and current practices. Retrieved from

Lamond, N., Dawson, D., & Roach, G. D. (2005). Fatigue assessment in the field: validation of a hand-held electronic psychomotor vigilance task. Aviat Space Environ Med, 76(5), 486-489.

Lamond, N., Jay, S. M., Dorrian, J., Ferguson, S. A., Roach, G. D., & Dawson, D. (2008). The sensitivity of a palm-based psychomotor vigilance task to severe sleep loss. Behav Res Methods, 40(1), 347-352. https://doi.org/10.3758/brm.40.1.347

Lee, M. L., Howard, M. E., Horrey, W. J., Liang, Y., Anderson, C., Shreeve, M. S., … Czeisler, C. A. (2016). High risk of near-crash driving events following night-shift work. Proc Natl Acad Sci U S A, 113(1), 176-181. https://doi.org/10.1073/pnas.1510383112

Lim, J., & Dinges, D. F. (2008). Sleep deprivation and vigilant attention. Annals of the New York Academy of Sciences, 1129, 305-322. https://doi.org/10.1196/annals.1417.002

Loh, S., Lamond, N., Dorrian, J., Roach, G. D., & Dawson, D. (2004). The validity of psychomotor vigilance tasks of less than 10-minute duration. Behavior Research Methods, 36(2), 339-346. https://doi.org/10.3758/bf03195580

Lüdtke, H., Wilhelm, B., Adler, M., Schaeffel, F., & Wilhelm, H. (1998). Mathematical procedures in data recording and processing of pupillary fatigue waves. Vision Research, 38(19), 2889-2896. https://doi.org/10.1016/S0042-6989(98)00081-9

Maccora, J., Manousakis, J. E., & Anderson, C. (2018). Pupillary instability as an accurate, objective marker of alertness failure and performance impairment. Journal of Sleep Research, 28(2), e12739. https://doi.org/10.1111/jsr.12739

Manousakis, J. E., Maccora, J., & Anderson, C. (2020). Shortened PST Prediction - Laboratory Data. Retrieved from: https://bridges.monash.edu/articles/Shortened_PST_Prediction_-_Laboratory_Data/12577679

Mulhall, M. D., Sletten, T. L., Magee, M., Stone, J. E., Ganesan, S., Collins, A., … Rajaratnam, S. M. W. (2019). Sleepiness and driving events in shift workers: the impact of circadian and homeostatic factors. Sleep, 42(6). https://doi.org/10.1093/sleep/zsz074

Oken, B. S., Salinsky, M. C., & Elsas, S. M. (2006). Vigilance, alertness, or sustained attention: physiological basis and measurement. Clinical Neurophysiology, 117(9), 1885-1901. https://doi.org/10.1016/j.clinph.2006.01.017

Peters, T., Grüner, C., Durst, W., Hütter, C., & Wilhelm, B. (2014). Sleepiness in professional truck drivers measured with an objective alertness test during routine traffic controls. International Archives of Occupational and Environmental Health, 87(8), 881-888. https://doi.org/10.1007/s00420-014-0929-6

Regen, F., Dorn, H., & Danker-Hopfe, H. (2013). Association between pupillary unrest index and waking electroencephalogram activity in sleep-deprived healthy adults. Sleep Medicine, 14(9), 902-912. https://doi.org/10.1016/j.sleep.2013.02.003

Roach, G. D., Dawson, D., & Lamond, N. (2006). Can a shorter psychomotor vigilance task be used as a reasonable substitute for the ten-minute psychomotor vigilance task? Chronobiol Int, 23(6), 1379-1387. https://doi.org/10.1080/07420520601067931

Schwarz, G. (1978). Estimating the dimension of a model. The Annals of Statistics, 6(2), 461-464.

Stevenson, M. R., Elkington, J., Sharwood, L., Meuleners, L., Ivers, R., Boufous, S., … Wong, K. (2014). The role of sleepiness, sleep disorders, and the work environment on heavy-vehicle crashes in 2 Australian states. Am J Epidemiol, 179(5), 594-601. https://doi.org/10.1093/aje/kwt305

Tefft, B. C. (2018). Acute sleep deprivation and culpable motor vehicle crash involvement. Sleep, 41(10). https://doi.org/10.1093/sleep/zsy144

Verstraete, A. G. (2005). Oral fluid testing for driving under the influence of drugs: history, recent progress and remaining challenges. Forensic Sci Int, 150(2-3), 143-150. https://doi.org/10.1016/j.forsciint.2004.11.023

Wilhelm, B., Rühle, K. H., Widmaier, D., Lüdtke, H., & Wilhelm, H. (1998a). Objective measurement of severity and therapeutic effect in obstructive sleep apnea syndrome by the pupillographic sleepiness test. Somnologie, 2(2), 51-57. https://doi.org/10.1007/s11818-998-0008-x

Wilhelm, B., Wilhelm, H., Lüdtke, H., Streicher, P., & Adler, M. (1998b). Pupillographic assessment of sleepiness in sleep-deprived healthy subjects. Sleep, 21(3), 258-265.

Wolkow, A. P., Rajaratnam, S. M. W., Anderson, C., Howard, M. E., & Mansfield, D. (2019). Recommendations for current and future countermeasures against sleep disorders and sleep loss to improve road safety in Australia. Intern Med J, 49(9), 1181-1184. https://doi.org/10.1111/imj.14423

Youden, W. J. (1950). Index for rating diagnostic tests. Cancer, 3(1), 32-35. https://doi.org/10.1002/1097-0142(1950)3:1<32::AID-CNCR2820030106>3.0.CO;2-3

Acknowledgements

The authors thank the study participants, and the technical staff at the Monash Sleep and Circadian Medicine Laboratory—in particular, Rebecca Khan and Mulia Marzuki for assistance in collecting data, and Andrew Laing for sleep scoring. This study was funded by VicRoads. The content is solely the responsibility of the authors and does not necessarily represent the official views of VicRoads.

Open practices statement

The data used in this manuscript is publicly available at https://bridges.monash.edu/articles/Shortened_PST_Prediction_-_Laboratory_Data/12577679. DOI: 10.26180/5ef6c4f217d2e (Manousakis, Maccora, & Anderson, 2020)

Author information

Contributions

CA designed the protocol, and JEM and JM collected the data. CA and JEM developed the data analysis plan, JEM and JM completed data cleaning and analysis, and JEM and CA interpreted the data. JEM drafted the manuscript and CA and JM provided edits.

Ethics declarations

Conflict of interest

All authors report no conflicts of interest with data as described in this manuscript. In the interest of full disclosure, CA reports receiving a research award/prize from Sanofi-Aventis; research support from VicRoads; speaker fees from Brown Medical School/Rhode Island Hospital, Ausmed, Healthed, and Teva Pharmaceuticals; and contract research funding from Pacific Brands and Rio Tinto Coal Australia; she has served as consultant to the Rail, Bus and Tram Union, National Transport Commission, and the Transport Accident Commission on issues related to fatigue in transportation; and has served as an expert witness on sleep-related motor vehicle crashes. Dr Anderson leads a team within the Cooperative Research Centre for Alertness, Safety and Productivity.

About this article

Cite this article

Manousakis, J.E., Maccora, J. & Anderson, C. The validity of the pupillographic sleepiness test at shorter task durations. Behav Res 53, 1488–1501 (2021). https://doi.org/10.3758/s13428-020-01509-x

DOI: https://doi.org/10.3758/s13428-020-01509-x