Abstract

Large calorimetric neutrino mass experiments using thermal detectors may play a crucial role in the effort to determine the neutrino mass. This paper describes a tool based on Monte Carlo methods, developed to estimate the statistical sensitivity of calorimetric direct neutrino mass measurements based on the electron capture decay of \(^{163}\)Ho. The tool is applied to investigate the effect of various experimental parameters, and I report the results useful for designing an experiment with sub-eV sensitivity.

1 Introduction

One of the challenges for particle physics in the next decade will be to probe the absolute neutrino mass down to at least the lower bound of the inverted hierarchy region, i.e. about 0.05 eV [1].

The present best limits on the absolute neutrino mass, about 2 eV, have been set using MAC-E filter spectrometers to analyze the end-point of the \(^3\)H beta decay spectrum [2, 3]. In a couple of years the new large MAC-E filter spectrometer of the KATRIN experiment will become operational, with the aim of pushing the sensitivity on the absolute neutrino mass down to about 0.2 eV [4]. With KATRIN, this experimental approach reaches its technical limits. It is therefore mandatory for the neutrino physics community to develop alternative and complementary experimental methods to extend the reach of direct neutrino mass measurements.

The calorimetric measurement of nuclear decays with a low end-point is a promising alternative, which has already been applied to the \(^{187}\)Re beta decay by exploiting the powerful technique of low temperature calorimetry [5–9]. More recently, interest in \(^{163}\)Ho has been widely revived. Presently there are at least two projects working toward high sensitivity experiments with \(^{163}\)Ho: ECHo [10] and HOLMES, which is a follow-up of the MARE project and was recently funded by the European Research Council [9, 11]. As one of the promoters of the HOLMES experiment, I developed a Monte Carlo code for assessing the statistical sensitivity of calorimetric neutrino mass measurements based on the \(^{163}\)Ho electron capture (EC) decay. In this paper I collect the most relevant results to share them with the growing community of scientists engaged in this type of experiment.

2 Calorimetric measurement of \(^{163}\)Ho electron capture decay

De Rujula and Lusignoli [12] discussed the calorimetric measurement of the \(^{163}\)Ho spectrum as a means of directly measuring the electron neutrino mass \(m_\nu \). \(^{163}\)Ho decays by electron capture (EC) to \(^{163}\)Dy with a half-life of about 4570 years and with the lowest known \(Q\)-value, which allows captures only from the M shell or higher. The decay \(Q\)-value has been determined experimentally only from the ratios of the capture probabilities from different atomic shells. The various determinations span from 2200 to 2800 eV, with a recommended value of \(2555\pm 16\) eV [13]; the uncertainty is largely due to systematic effects, such as the errors on the theoretical atomic physics factors involved.

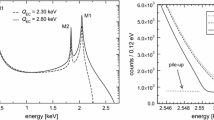

In a calorimetric EC experiment all the de-excitation energy is recorded. The de-excitation energy \(E_c\) is the energy released by all the atomic radiation emitted in the process of filling the vacancy left by the EC decay, mostly electrons with energies up to about 2 keV [12] (the fluorescence yield is less than \(10^{-3}\)). The calorimetric spectrum appears as a series of lines at the ionization energies \(E_i\) of the captured electrons. These lines have a natural width \(\Gamma _i\) of a few eV and therefore the actual spectrum is a continuum with marked peaks with Breit–Wigner shapes (Fig. 1). The spectral end-point is shaped by the same neutrino phase space factor \((Q-E_e)[(Q-E_e)^2-m_\nu ^2]^{1/2}\) that appears in a beta decay spectrum, with the total de-excitation energy \(E_c\) replacing the electron kinetic energy \(E_e\). For a non-zero \(m_\nu \), the de-excitation (calorimetric) energy \(E_c\) distribution is expected to be
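
\[
N_\mathrm{EC}(E_c,m_\nu) = \frac{G_\beta^2}{4\pi^2}\,(Q-E_c)\sqrt{(Q-E_c)^2-m_\nu^2}\;\sum_i n_i C_i \beta_i^2 B_i\,\frac{\Gamma_i}{2\pi}\,\frac{1}{(E_c-E_i)^2+\Gamma_i^2/4} \tag{1}
\]
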
where \(G_{\beta } = G_F \cos \theta _C\) (with the Fermi constant \(G_F\) and the Cabibbo angle \(\theta _C\)), \(E_i\) is the binding energy of the \(i\)th atomic shell, \(\Gamma _i\) is the natural width, \(n_i\) is the fraction of occupancy, \(C_i \) is the nuclear shape factor, \(\beta _i\) is the Coulomb amplitude of the electron radial wave function (essentially, the modulus of the wave function at the origin) and \(B_i\) is an atomic correction for electron exchange and overlap.
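Equation (1) can be evaluated numerically as in the following minimal Python sketch. It assumes NumPy, drops the constant prefactor \(G_\beta^2/4\pi^2\), and lumps \(n_i C_i \beta_i^2 B_i\) into a single weight per shell. The function name is hypothetical, and the shell energies, widths and weights in the usage lines are illustrative placeholders, not the parameters of [16].

```python
import numpy as np


def ho163_spectrum(E_c, Q, m_nu, E_i, Gamma_i, w_i):
    """Unnormalized calorimetric EC spectrum in the form of Eq. (1).

    E_c     : de-excitation energies (eV), array-like
    Q       : decay Q-value (eV)
    m_nu    : neutrino mass (eV)
    E_i     : binding energies of the captured electrons (eV)
    Gamma_i : natural widths of the lines (eV)
    w_i     : lumped weights n_i * C_i * beta_i**2 * B_i
    The constant prefactor G_beta**2 / (4 pi**2) is omitted.
    """
    E_c = np.asarray(E_c, dtype=float)
    x = Q - E_c
    # Neutrino phase space (Q - E_c) * sqrt((Q - E_c)**2 - m_nu**2),
    # set to zero where the decay is kinematically forbidden.
    arg = x**2 - m_nu**2
    allowed = (x > 0) & (arg > 0)
    phase_space = np.where(allowed, x * np.sqrt(np.clip(arg, 0.0, None)), 0.0)
    # Sum of Breit-Wigner lines centred at the binding energies E_i.
    E_i, Gamma_i, w_i = (np.asarray(a, dtype=float)[:, None] for a in (E_i, Gamma_i, w_i))
    lines = w_i * (Gamma_i / (2 * np.pi)) / ((E_c[None, :] - E_i) ** 2 + Gamma_i**2 / 4)
    return phase_space * lines.sum(axis=0)


# Illustrative placeholder shells (roughly M1, M2, N1, N2); weights are arbitrary.
E = np.linspace(0.0, 3000.0, 3001)
s = ho163_spectrum(E, Q=2600.0, m_nu=0.0,
                   E_i=[2047.0, 1842.0, 414.0, 333.0],
                   Gamma_i=[13.2, 6.0, 5.4, 5.3],
                   w_i=[1.0, 0.05, 0.25, 0.01])
```

A non-zero \(m_\nu\) only suppresses the phase space factor, so the spectrum vanishes for \(E_c \ge Q - m_\nu\).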

As in beta decay experiments, the neutrino mass sensitivity depends on the fraction of events close to the end-point, which in turn depends on the decay \(Q\)-value. In particular, the closer the \(Q\)-value is to the highest accessible binding energy \(E_i\) (that of the M1 shell), the larger the resonant enhancement of the rate near the end-point, where the neutrino mass effects are relevant.

Because of the high specific activity of \(^{163}\)Ho (about \(2\times 10^{11}\) \(^{163}\)Ho nuclei give one decay per second), calorimetric measurements can be performed by introducing relatively small amounts of \(^{163}\)Ho into detectors whose design and physical characteristics (i.e. material and size) are driven almost exclusively by the detector performance requirements and by the containment of the de-excitation radiation [10, 11].
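The figure of about \(2\times 10^{11}\) nuclei per decay per second follows from \(N = A\,t_{1/2}/\ln 2\). The short Python sketch below reproduces it and converts an activity into a mass of \(^{163}\)Ho; the function names, the rounded molar mass of 163 g/mol and the 300 decays/s example are assumptions chosen for illustration.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year in seconds
AVOGADRO = 6.02214076e23               # 1/mol
MOLAR_MASS_HO163 = 163.0               # g/mol, rounded (assumption)


def nuclei_for_activity(activity_bq, half_life_years=4570.0):
    """Number of nuclei N giving activity A: N = A * t_1/2 / ln 2."""
    return activity_bq * half_life_years * SECONDS_PER_YEAR / math.log(2)


def mass_for_activity(activity_bq, half_life_years=4570.0):
    """Corresponding mass of 163Ho in grams."""
    return nuclei_for_activity(activity_bq, half_life_years) * MOLAR_MASS_HO163 / AVOGADRO


print(f"{nuclei_for_activity(1.0):.2e} nuclei per decay/s")
print(f"{mass_for_activity(300.0) * 1e9:.1f} ng for 300 decays/s")
```

For 300 decays/s per detector this amounts to roughly 17 ng of \(^{163}\)Ho, which illustrates why the embedded isotope need not drive the detector size.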

3 Monte Carlo simulation

In this section we describe a frequentist Monte Carlo code developed to estimate the statistical neutrino mass sensitivity of a calorimetric \(^{163}\)Ho EC measurement (see Note 1). The approach replicates the one outlined in [15] for beta decay calorimetric experiments. It consists of simulating the spectra that would be measured by a large number of experiments carried out in a given configuration: the spectra are then fitted as real ones would be, and the statistical sensitivity is deduced from the distribution of the fitted \(m^2_\nu \) parameters.

This method has proved extremely powerful, since it allows including all relevant experimental effects, such as energy resolution, pile-up and background, and also estimating systematic uncertainties [15]. In this paper, however, we limit the discussion to the statistical sensitivity, since the systematic effects in this kind of measurement are not yet fully known.

The parameters describing the experimental configuration are the total number of \(^{163}\)Ho decays \(N_{ev}\), the FWHM of the Gaussian energy resolution \(\Delta E_{\mathrm {FWHM}}\), the fraction of unresolved pile-up events \(f_{pp}\), and the radioactive background \(B(E)\).

The total number of events is given by \(N_{ev} = N_{det}A_\mathrm {EC}t_M\), where \( N_{det}\), \(A_\mathrm {EC}\) and \(t_M\) are the total number of detectors, the EC decay rate in each detector and the measuring time, respectively.

Pile-up occurs when two decays in one detector are too close in time and are mistaken for a single one with an apparent energy equal to the sum of the two energies. To a first approximation, this happens with a probability \(f_{pp}= \tau _R A_\mathrm {EC}\), where \(\tau _R\) is the detector time resolution. The energy spectrum of pile-up events is given by the self-convolution of the calorimetric EC decay spectrum and extends up to \(2Q\), thereby producing a background that impairs the ability to identify the neutrino mass effect at the decay spectrum end-point \(Q\). In the case of \(^{163}\)Ho decay, the pile-up spectrum is quite complex and presents a number of peaks right at the end-point of the decay spectrum (Fig. 1).
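The pile-up bookkeeping described above can be sketched in Python with NumPy, assuming a binned spectrum on a uniform grid that starts at \(E=0\): the first-order pile-up fraction is \(\tau_R A_\mathrm{EC}\), and the pile-up spectrum is the discrete self-convolution, which extends to twice the maximum energy. The function name and the values of \(\tau_R\) and \(A_\mathrm{EC}\) are hypothetical.

```python
import numpy as np


def pileup_spectrum(spectrum, dE):
    """Normalized self-convolution of a binned spectrum.

    spectrum : counts per bin on a grid E = 0, dE, 2*dE, ...
    Returns (energies, pileup) where pileup sums to one and the grid
    extends to twice the highest input energy (E1 + E2).
    """
    p = np.asarray(spectrum, dtype=float)
    p = p / p.sum()             # unit normalization of the single-decay spectrum
    pp = np.convolve(p, p)      # bin k collects all pairs with i + j = k
    energies = np.arange(pp.size) * dE
    return energies, pp


# Hypothetical detector parameters.
tau_R = 1e-6          # time resolution (s)
A_EC = 300.0          # single-detector activity (decays/s)
f_pp = tau_R * A_EC   # first-order fraction of unresolved pile-up events
```

Multiplying the normalized pile-up spectrum by \(f_{pp}N_{ev}\) gives the expected number of pile-up counts per bin.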

The \(B(E)\) function is usually taken as a constant, \(B(E)=bT\), where \(b\) is the average background count rate per unit energy and per detector, and \(T=N_{det}\times t_M\) is the experimental exposure.
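The quantities \(N_{ev}=N_{det}A_\mathrm{EC}t_M\), \(T=N_{det}t_M\) and \(B=bT\) are simple products. The following Python sketch evaluates them for a hypothetical configuration (1000 detectors, 300 decays/s each, 3 years of measurement, and \(b=10^{-4}\) count/eV/day/detector converted to a per-second rate); the function names and numbers are illustrative, not taken from the simulations of this paper.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year in seconds
SECONDS_PER_DAY = 86400.0


def total_decays(n_det, a_ec, t_m):
    """N_ev = N_det * A_EC * t_M, with A_EC in decays/s and t_M in s."""
    return n_det * a_ec * t_m


def exposure(n_det, t_m):
    """T = N_det * t_M, in detector * s."""
    return n_det * t_m


def background_level(b, n_det, t_m):
    """Flat background B = b * T in counts/eV, with b in counts/eV/s/detector."""
    return b * exposure(n_det, t_m)


# Hypothetical configuration: 1000 detectors, 300 decays/s each, 3 years.
t_m = 3 * SECONDS_PER_YEAR
n_ev = total_decays(1000, 300.0, t_m)
b = 1e-4 / SECONDS_PER_DAY          # 1e-4 count/eV/day/detector -> per second
B = background_level(b, 1000, t_m)  # counts per eV over the whole measurement
print(f"N_ev = {n_ev:.2e}, T = {exposure(1000, t_m):.2e} detector*s, B = {B:.0f} counts/eV")
```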

The theoretical spectrum \(S(E_c)\) which is measured by the simulated toy experiments is then given by (Fig. 1):
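
\[
S(E_c) = \left[N_{ev}\left(N_\mathrm{EC}(E_c,m_\nu) + f_{pp}\,N_\mathrm{EC}(E_c,0)\otimes N_\mathrm{EC}(E_c,0)\right) + B(E_c)\right]\otimes R_{\Delta E}(E_c) \tag{2}
\]
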
where \(N_\mathrm {EC}(E_c,m_\nu )\) is the \(^{163}\)Ho spectrum described by (1), normalized to unity, \(B(E)\) is the background energy spectrum, and the detector energy response function is given by
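
\[
R_{\Delta E}(E_c) = \frac{1}{\sigma\sqrt{2\pi}}\exp\left(-\frac{E_c^2}{2\sigma^2}\right) \tag{3}
\]
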
with standard deviation \(\sigma = \Delta E_{\mathrm {FWHM}}/2.35\) (see Note 2). For the simulations, the parameters \(E_i\), \(\Gamma _i\), \(n_i\), \(C_i\), \(B_i\), and \(\beta _i\) in (1) are taken from [16].
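A minimal sketch of how the expected spectrum \(S(E_c)\) can be assembled on a discrete energy grid, assuming NumPy, a grid starting at \(E=0\) and extending to at least \(2Q\), unit-normalized input spectra, and a constant background per bin. As assumptions of this sketch, the pile-up term is built from the \(m_\nu=0\) spectrum, and the energy-independent Gaussian response uses \(\sigma=\Delta E_\mathrm{FWHM}/2.35\) truncated at \(\pm5\sigma\). The function names are hypothetical.

```python
import numpy as np


def gaussian_response(dE, fwhm):
    """Unit-area Gaussian kernel sampled on the grid, sigma = FWHM / 2.35, cut at 5 sigma."""
    sigma = fwhm / 2.35
    half = int(np.ceil(5.0 * sigma / dE))
    e = np.arange(-half, half + 1) * dE
    r = np.exp(-(e**2) / (2.0 * sigma**2))
    return r / r.sum()


def expected_spectrum(n_ec, n_ec0, dE, n_ev, f_pp, b_per_bin, fwhm):
    """Expected counts per bin for S(E_c) on a grid E = 0, dE, ... reaching at least 2Q.

    n_ec      : unit-normalized EC spectrum for the trial m_nu (per bin)
    n_ec0     : unit-normalized EC spectrum for m_nu = 0, used for pile-up (assumption)
    b_per_bin : constant background counts per bin (B = b*T times the bin width)
    """
    n_ec = np.asarray(n_ec, dtype=float)
    n_ec0 = np.asarray(n_ec0, dtype=float)
    pileup = np.convolve(n_ec0, n_ec0)[: n_ec.size]  # truncate to the same grid
    s = n_ev * (n_ec + f_pp * pileup) + b_per_bin
    # Smear with the detector response; mode="same" keeps the grid aligned,
    # so a small fraction of counts is lost at the two ends of the grid.
    return np.convolve(s, gaussian_response(dE, fwhm), mode="same")


# Usage with a flat toy spectrum up to 2600 eV (placeholder for Eq. (1)).
E = np.arange(0.0, 5200.0, 1.0)
flat = np.where(E < 2600.0, 1.0, 0.0)
flat /= flat.sum()
S = expected_spectrum(flat, flat, 1.0, 1e6, 1e-3, 0.0, 5.0)
```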

The set of experimental spectra (typically between 100 and 1000) is obtained by fluctuating the spectrum \(S(E_c)\) of (2) according to Poisson statistics. Each simulated spectrum is then fitted using (2), leaving \(m^2_\nu \), \(Q\), \(N_{ev}\), \(f_{pp}\) and \(b\) as free parameters. In a real experiment the atomic parameters describing the Breit–Wigner peaks will be determined from high statistics measurements. Still, a correct neutrino mass analysis of the experimental spectrum may require leaving some of them as free parameters in the fit, in particular those of the M1 peak. A modified version of the code, with a much longer computing time, has been developed to investigate the effect of this approach. Some tests have been carried out for a few sample experimental configurations, leaving the three additional M1 peak parameters free (i.e. position \(E_{M1}\), width \(\Gamma _{M1}\), and relative intensity): the results show a worsening of the sensitivity, which however always stays well below 10 %. The simulated experimental spectra are generated on an energy interval smaller than the full 0–\(2Q\) interval, and the fits are performed on sub-intervals of it. For most of the simulations presented in this paper, the fitting interval is 1500–3500 eV. However, tests show that the results worsen by less than 10 % when the lower end of the fitting interval is shifted up to 2100 eV, i.e. to the right side of the M1 peak.
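The Poisson fluctuation and fit step can be illustrated with a deliberately reduced toy in Python with NumPy. It is not the code used for this paper: as assumptions, it keeps \(Q\) fixed, omits pile-up and background, models the region below \(Q\) as the tail of a single illustrative M1-like Breit–Wigner line times the phase space, and linearizes the phase space as \((Q-E)^2-m_\nu^2/2\), so that each fit becomes a weighted linear least-squares problem in an amplitude and \(m_\nu^2\). All names and numerical values are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2014)  # fixed seed for reproducibility

# Hypothetical toy parameters, not the configurations of this paper.
Q = 2600.0                     # eV, true and fixed Q-value
E_M1, G_M1 = 2047.0, 13.2      # eV, illustrative M1-like line position and width
N_WINDOW = 1e8                 # expected events in the fit window
E = np.arange(2300.0, Q, 1.0)  # 1 eV bins strictly below Q

line = 1.0 / ((E - E_M1) ** 2 + G_M1**2 / 4)   # Breit-Wigner tail (unnormalized)
f1 = line * (Q - E) ** 2                        # shape for m_nu^2 = 0
f2 = line                                       # multiplies -m_nu^2 / 2 (linearized)
X = np.column_stack([f1, f2])
mu_true = N_WINDOW * f1 / f1.sum()              # expected counts, true m_nu^2 = 0
w = 1.0 / np.sqrt(mu_true)                      # weights from the generating spectrum (toy shortcut)


def fit_m2(counts):
    """Fit counts = alpha*f1 + beta*f2 by weighted linear least squares.

    Since alpha*f1 + beta*f2 = alpha*line*((Q - E)**2 - m2/2), m2 = -2*beta/alpha.
    """
    (alpha, beta), *_ = np.linalg.lstsq(X * w[:, None], counts * w, rcond=None)
    return -2.0 * beta / alpha


n_exp = 200
m2_fits = np.array([fit_m2(rng.poisson(mu_true)) for _ in range(n_exp)])
print(f"mean = {m2_fits.mean():.3g} eV^2, std = {m2_fits.std(ddof=1):.3g} eV^2")
```

Because the linearized model is not constrained to \(m_\nu^2\ge 0\), individual fits return negative values about half of the time, which is the situation addressed in the note on the frequentist approach.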

The 90 % CL statistical sensitivity \(\Sigma _{90}(m_\nu )\) on \(m_\nu \) for the simulated experimental configuration can be obtained from the distribution of the \(m^2_\nu \) values found by fitting the spectra. For a Gaussian distribution, such as the one found in the present work, the sensitivity is given by \(\Sigma _{90}(m_\nu ) = \sqrt{1.64 \sigma _{m_\nu ^2}}\), where \(\sigma _{m_\nu ^2}\) is the standard deviation of the distribution:
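
\[
\sigma_{m_\nu^2} = \sqrt{\frac{1}{N-1}\sum_{i=1}^{N}\left(m_{\nu_i}^2-\overline{m_\nu^2}\right)^2} \tag{4}
\]
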
where \(N\) is the number of generated spectra, \(m_{\nu _i}^2\) are the values of the \(m^2_\nu \) parameter found in each fit, and \(\overline{m_\nu ^2}\) is their mean.
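Given the fitted values, \(\sigma_{m_\nu^2}\) and \(\Sigma_{90}(m_\nu)\) follow directly. A minimal Python sketch with NumPy, using the \(N-1\) denominator of the sample standard deviation and the factor 1.64 quoted above; the function name and the synthetic ensemble in the usage lines are illustrative.

```python
import numpy as np


def sigma_90(m2_fits):
    """90% CL statistical sensitivity on m_nu from an ensemble of fitted m_nu^2.

    sigma_m2 is the sample standard deviation with the N - 1 denominator,
    and Sigma_90 = sqrt(1.64 * sigma_m2); units are eV if m2 is in eV^2.
    """
    m2 = np.asarray(m2_fits, dtype=float)
    n = m2.size
    sigma_m2 = np.sqrt(np.sum((m2 - m2.mean()) ** 2) / (n - 1))
    return float(np.sqrt(1.64 * sigma_m2))


# Usage with a synthetic Gaussian ensemble standing in for real fit results.
rng = np.random.default_rng(1)
print(sigma_90(rng.normal(0.0, 0.01, size=1000)))
```

For a spread of 0.01 eV\(^2\) in the fitted \(m_\nu^2\), the result is close to \(\sqrt{1.64\times 0.01}\approx 0.13\) eV.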

The statistical error on the sensitivity \(\Sigma _{90}(m_\nu )\) is given by (see [15] for details)
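
\[
\sigma_{\Sigma_{90}} = \frac{\Sigma_{90}(m_\nu)}{4\,\overline{y}}\sqrt{\frac{\sum_{i=1}^{N}\left(y_i-\overline{y}\right)^2}{N(N-1)}} \tag{5}
\]
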
where \(y_i=(m_{\nu _i}^2 - \overline{m_\nu ^2})^2\) and \(\overline{y} \approx \sigma _{m_\nu ^2}^2\). Using Eq. (5), one finds that the statistical error on the Monte Carlo results is about 3 % and 1 % for about 100 and 1000 simulated experiments, respectively.
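One way to obtain an error estimate consistent with these numbers is to propagate the standard error of \(\overline{y}\) through \(\Sigma_{90}\propto\overline{y}^{1/4}\). The Python sketch below does this under that assumption; the function names are hypothetical. For Gaussian-distributed fits, \(\mathrm{var}(y)=2\sigma_{m_\nu^2}^4\), so the relative error tends to \(1/\sqrt{8N}\), i.e. about 3.5 % for \(N=100\) and 1.1 % for \(N=1000\).

```python
import numpy as np


def sigma_90_with_error(m2_fits):
    """Return (Sigma_90, statistical error on Sigma_90) from fitted m_nu^2 values.

    Assumption: the error is propagated from the standard error of
    ybar = mean(y_i), y_i = (m2_i - mean)**2 ~ sigma_m2**2, using
    Sigma_90 = sqrt(1.64) * ybar**(1/4)  =>  dS/S = d(ybar) / (4 * ybar).
    ybar uses a 1/N mean, so for small N it differs slightly from the N - 1 estimate.
    """
    m2 = np.asarray(m2_fits, dtype=float)
    n = m2.size
    y = (m2 - m2.mean()) ** 2
    ybar = y.mean()
    err_ybar = np.sqrt(np.sum((y - ybar) ** 2) / (n * (n - 1)))
    s90 = np.sqrt(1.64 * np.sqrt(ybar))
    return float(s90), float(s90 * err_ybar / (4.0 * ybar))


def gaussian_relative_error(n):
    """Limit for Gaussian fits: var(y) = 2 sigma^4, so dS/S = sqrt(2/n)/4 = 1/sqrt(8n)."""
    return 1.0 / np.sqrt(8.0 * n)


for n in (100, 1000):
    print(n, f"{100 * gaussian_relative_error(n):.1f} %")
```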

3.1 Results

Given the large uncertainty on the \(^{163}\)Ho EC \(Q\)-value, all the simulations have been performed for a few \(Q\)-values picked in the interval 2200–2800 eV.

First of all, it is instructive to compare how the statistical sensitivity for a given statistics \(N_{ev}\) depends on the \(Q\)-value for \(^{163}\)Ho and for a low energy beta decay with a spectral shape similar to that of \(^{187}\)Re [15]. Figure 2 shows that the \(^{163}\)Ho experimental sensitivity depends on the \(Q\)-value more steeply than the \(Q^{3/4}\) dependence of beta decays (dashed line in Fig. 2) and that, for \(Q\)-values smaller than about 2400 eV, \(^{163}\)Ho experiments are more favorable than beta decay ones. The details of the simulation are given in the caption of Fig. 2. The steeper behavior observed for \(^{163}\)Ho decay results from the resonant enhancement caused by the proximity of the end-point to the M1 capture peak.

Monte Carlo simulations confirm that, as for beta decay experiments [15], the total statistics \(N_{ev}\) is crucial to reach a sub-eV statistical sensitivity on the neutrino mass (Fig. 3; see the caption for the simulation details). In particular, the sensitivity scales as \(N_{ev}^{-1/4}\) (dashed line in Fig. 3), as naively expected for an \(m_\nu ^2\) sensitivity determined purely by statistical fluctuations: the standard deviation of the fitted \(m_\nu ^2\) scales as \(N_{ev}^{-1/2}\), and \(\Sigma _{90}(m_\nu )\propto \sigma _{m_\nu ^2}^{1/2}\). The uncertainty on the \(Q\)-value translates into a factor of about 3–4 in the achievable neutrino mass sensitivity.
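The \(N_{ev}^{-1/4}\) scaling makes it easy to rescale a reference result. The hypothetical helpers below (plain Python) do this in both directions; the reference value of 1 eV at \(10^{14}\) decays in the usage lines is a placeholder, not a result of this paper.

```python
def scale_sensitivity(sigma90_ref, n_ref, n_new):
    """Rescale Sigma_90 to new statistics, assuming Sigma_90 proportional to N_ev**(-1/4)."""
    return sigma90_ref * (n_ref / n_new) ** 0.25


def statistics_needed(sigma90_ref, n_ref, sigma90_target):
    """Number of decays needed to reach sigma90_target (inverse of the rule above)."""
    return n_ref * (sigma90_ref / sigma90_target) ** 4


# Placeholder reference: 1 eV at 1e14 decays.
print(scale_sensitivity(1.0, 1e14, 1e16))   # 100 times more decays
print(statistics_needed(1.0, 1e14, 0.5))    # halve Sigma_90
```

A 100-fold increase in statistics improves the sensitivity only by \(100^{1/4}\approx 3.2\), and halving \(\Sigma_{90}\) requires 16 times more decays.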

3.1.1 Effect of experimental parameters

Figure 4 helps in understanding the role of the detector performance in terms of time and energy resolution. The experimental sensitivity is in fact not directly related to the time resolution, but only to the combination \(f_{pp}=\tau _R\times A_\mathrm {EC}\), i.e. the amount of pile-up. In Fig. 4 the sensitivity is plotted for constant energy resolution \(\Delta E_{\mathrm {FWHM}}\) (left) and for constant \(f_{pp}\) (right), with the other experimental parameter varying in an interval of interest for typical detector configurations (see caption for more details). The plots suggest that the energy resolution has a smaller impact on the sensitivity than pile-up. Moreover, in the presence of a high level of pile-up, the experiment is less sensitive to the energy resolution. Qualitatively, this latter effect can be understood as follows: the more the pile-up hides the signal at \(Q\), the wider the energy interval below \(Q\) that weighs in the fit, and the less the energy resolution matters. It is worth noting, however, that the time resolution depends on the detector signal-to-noise ratio at high frequency; therefore, at constant bandwidth (that is, at constant detector rise time), a deterioration of the energy resolution unavoidably results in a worse time resolution. This effect is not considered in the simulation.

Fig. 4 Dependence of the statistical sensitivity of \(^{163}\)Ho decay experiments on the pile-up fraction \(f_{pp}\) and the energy resolution \(\Delta E_{\mathrm {FWHM}}\) for \(Q=2600\) eV, \(N_{ev}=10^{14}\), and \(b=0\) count/eV/s/detector. Left: energy resolution fixed to \(\Delta E_{\mathrm {FWHM}}=1\) eV. Right: pile-up fraction taken as (from bottom to top) \(f_{pp}=10^{-7}\), \(10^{-5}\), \(10^{-4}\), and \(10^{-3}\).

3.1.2 Trade-off between activity and pile-up

Given the strong dependence of the sensitivity on the total statistics, for a fixed experimental exposure \(T\) (that is, for a fixed measuring time and experiment size) and for fixed detector performance \(\Delta E_{\mathrm {FWHM}}\) and \(\tau _R\), it always pays off to increase the single detector activity \(A_\mathrm {EC}\) as much as technically possible, even at the expense of an increasing pile-up level. This is exemplified in Fig. 5 (see caption for the simulation details). Of course, there may be several limitations to the achievable activity \(A_\mathrm {EC}\), such as the effect of the \(^{163}\)Ho nuclei on the detector performance, detector cross-talk, or dead time considerations. It is worth noting that in calorimetric measurements of the type considered here, to a first approximation, increasing the single detector activity \(A_\mathrm {EC}\) does not require increasing the detector size (see Sect. 2), which would translate into a performance degradation. Figure 6 displays the statistical sensitivity achievable with a single detector activity of about 100 decays/s under the same assumptions on detector performance and exposure as in Fig. 5.
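At fixed exposure \(T\) and time resolution \(\tau_R\), both \(N_{ev}=A_\mathrm{EC}T\) and \(f_{pp}=\tau_R A_\mathrm{EC}\) grow linearly with the activity. The Python sketch below only tabulates this bookkeeping for hypothetical values (1000 detector-years, \(\tau_R=1\) μs); the resulting sensitivity requires the full Monte Carlo (Fig. 5) and is not computed here.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year in seconds


def tradeoff(activities, exposure_det_s, tau_r):
    """Return (A_EC, N_ev, f_pp) for each activity at fixed exposure and tau_R.

    N_ev = A_EC * T  and  f_pp = tau_R * A_EC  (first-order pile-up fraction).
    """
    return [(a, a * exposure_det_s, tau_r * a) for a in activities]


# Hypothetical: exposure of 1000 detector-years, tau_R = 1 microsecond.
rows = tradeoff([1.0, 10.0, 100.0, 1000.0], 1000 * SECONDS_PER_YEAR, 1e-6)
for a, n_ev, f_pp in rows:
    print(f"A_EC = {a:6.0f} decays/s   N_ev = {n_ev:.1e}   f_pp = {f_pp:.0e}")
```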

Fig. 6 A slice of the data plotted in Fig. 5, taken for \(A_\mathrm {EC}=100\) decays/s

3.1.3 Effect of background

Because of the very low fraction of decays in the region of interest close to \(Q\), the background may be a critical issue in end-point neutrino mass measurements. The Monte Carlo simulations presented here assume a constant background \(b\). A constant background is negligible as long as it is much smaller than the pile-up spectrum, that is when \(b \ll A_\mathrm {EC}f_{pp}/2Q\). Figure 7 confirms this simple consideration and shows that it is another good reason to have detectors with the highest possible activity. For large activities, and correspondingly large pile-up rates, experiments should be relatively insensitive to cosmic rays and to environmental radioactivity. In a typical experiment with low temperature microcalorimeters, the detectors have a sensitive area exposed to cosmic rays of the order of \(10^{-8}\) m\(^2\) and a thickness of a few micrometers: at sea level this translates into a cosmic ray interaction rate of about one per day with an average energy deposition of 10 keV, which in turn means \(b\lesssim 10^{-4}\) count/eV/day/detector. The flat background observed in the AgReO\(_4\) microcalorimeters of the MIBETA experiment [7, 8] was indeed measured to be about \(1.5\times 10^{-4}\) count/eV/day/detector, although a comparison with \(^{163}\)Ho decay experiments is difficult because of the different detector geometry and composition. All the above considerations should be complemented with an analysis of the effect of contaminations in the bulk of the detector, especially \(\beta \) and EC decaying isotopes, and of the fluorescence X-ray and Auger emission from the material closely surrounding the detectors.
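The criterion \(b\ll A_\mathrm{EC}f_{pp}/2Q\) and the cosmic-ray estimate above can be checked with a few lines of Python. The sketch uses the two configurations quoted for Fig. 7, assumes \(Q=2600\) eV (the caption does not state the \(Q\)-value), and expresses everything in count/eV/day/detector; the function names are hypothetical.

```python
SECONDS_PER_DAY = 86400.0


def pileup_level(a_ec, f_pp, q):
    """Average pile-up density A_EC * f_pp / (2 Q) in counts/eV/day/detector."""
    return a_ec * f_pp / (2.0 * q) * SECONDS_PER_DAY


def cosmic_background(rate_per_day=1.0, mean_deposit_ev=1.0e4):
    """Order-of-magnitude flat background: about one interaction per day spread
    over an energy range comparable to the mean deposit, in counts/eV/day/detector."""
    return rate_per_day / mean_deposit_ev


Q = 2600.0  # eV, assumed; the Fig. 7 caption does not state the Q-value
for a_ec, f_pp in [(3.0, 3e-6), (300.0, 3e-3)]:
    ratio = pileup_level(a_ec, f_pp, Q) / cosmic_background()
    print(f"A_EC = {a_ec:5.0f} Hz: pile-up level / cosmic background = {ratio:.1e}")
```

For 3 Hz and \(f_{pp}=3\times10^{-6}\) the pile-up level is about \(1.5\times10^{-4}\) count/eV/day/detector, comparable to the cosmic-ray estimate, whereas for 300 Hz and \(f_{pp}=3\times10^{-3}\) it is about 15 count/eV/day/detector, five orders of magnitude higher.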
The \(^{163}\)Ho isotope production and its embedding in the detector are also likely to add radioactive contaminants internal to the detector: one example of a dangerous isotope is \(^{166m}\)Ho (\(\beta \) decay, \(Q_\beta =1854\) keV, \(\tau _{1/2}=1200\) years), which is produced together with \(^{163}\)Ho in many of the proposed production routes [17]. A detailed analysis of the possible contaminations and their effects on the sensitivity is beyond the scope of the present work.

Fig. 7 Effect of background on the statistical sensitivity for \(N_{ev}=10^{14}\) and \(\Delta E_{\mathrm {FWHM}}=1\) eV. Left: \(A_\mathrm {EC}=3\) Hz/det and \(f_{pp}= 3\times 10^{-6}\); right: \(A_\mathrm {EC}=300\) Hz/det and \(f_{pp}= 3\times 10^{-3}\). The background levels in the boxes are in count/eV/day/detector units.

3.1.4 Required experimental exposure

Table 1 gives the exposure \(T\) required for a target statistical sensitivity of 0.1 eV on the neutrino mass, for three \(Q\)-values and for a range of meaningful experimental parameters \(\Delta E\) and \(f_{pp}\). The exposures in the table are obtained by scaling the results of Monte Carlo simulations run for these parameter pairs with a statistics of \(10^{14}\) decays. Exposures are given for a single detector activity of 1 decay/s; exposures for a different activity \(A^\prime _\mathrm {EC}\) can be obtained by simply dividing the given ones by \(A^\prime _\mathrm {EC}\) (in decays/s). For a different target sensitivity \(\Sigma ^\prime _{90}(m_\nu )\), the exposure \(T^\prime \) can again be obtained by scaling \(T\) in Table 1 as follows

\[
T^\prime = T\left(\frac{\Sigma_{90}(m_\nu)}{\Sigma^\prime_{90}(m_\nu)}\right)^4 \tag{6}
\]

where \(\Sigma _{90}(m_\nu )\) is the target sensitivity of the table (0.1 eV). For a given pile-up fraction \(f_{pp}\) and single detector activity \(A_\mathrm {EC}\), the corresponding detector time resolution is \(\tau _R=f_{pp}/A_\mathrm {EC}\).
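The two scaling rules above can be combined in a small helper. This plain Python sketch takes a tabulated exposure (quoted for 1 decay/s per detector and \(\Sigma_{90}=0.1\) eV) and returns the exposure for another target sensitivity and activity, together with the implied time resolution. The function names are hypothetical, and the tabulated exposure in the usage lines is a placeholder of 1 in arbitrary units.

```python
def scaled_exposure(t_table, sigma_table, sigma_target, a_ec=1.0):
    """Exposure for a new target sensitivity and single-detector activity.

    T' = T * (Sigma_90 / Sigma'_90)**4, then divided by A_EC (decays/s)
    because the tabulated exposures refer to 1 decay/s per detector.
    """
    return t_table * (sigma_table / sigma_target) ** 4 / a_ec


def time_resolution(f_pp, a_ec):
    """Detector time resolution implied by a pile-up fraction: tau_R = f_pp / A_EC."""
    return f_pp / a_ec


# Placeholder tabulated exposure of 1 (arbitrary units) at 0.1 eV.
print(scaled_exposure(1.0, 0.1, 0.2))            # relaxing the target to 0.2 eV
print(scaled_exposure(1.0, 0.1, 0.1, a_ec=100))  # 100 decays/s per detector
print(time_resolution(1e-4, 100.0))              # tau_R in seconds
```

Relaxing the target from 0.1 to 0.2 eV reduces the required exposure by a factor of 16, while raising the activity to 100 decays/s reduces it by a factor of 100 at the price of a 100 times shorter \(\tau_R\) for the same \(f_{pp}\).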

3.2 Conclusions

In this paper I have discussed the statistical sensitivity of calorimetric \(^{163}\)Ho electron capture neutrino mass experiments by means of Monte Carlo simulations. Although assessing the real reach of this type of measurement also requires an extensive analysis of the systematic effects, the results reported here may be useful for designing the first generation of high statistics experiments aiming at sub-eV sensitivity.

Notes

1. This is a frequentist Monte Carlo in the sense that, without making any a priori hypothesis on the probability distribution of the measurement results (the squared neutrino mass), a large number of toy experiments is performed and the frequency distribution of the results is considered. Since there is no true signal in the toy experiments, the sample mean is about 0, as expected, and the sample standard deviation gives the instrumental statistical uncertainty, which is defined as the instrumental sensitivity. This approach has been checked against the sensitivity definition proposed in [14], i.e. the average upper limit one would get from an ensemble of experiments with the expected background and no true signal. The two approaches indeed give similar, though not identical, results. However, the definition in [14] runs into problems when dealing with non-physical results (i.e. negative squared neutrino masses). Fits of individual toy experiments may in fact return a negative squared neutrino mass, and estimating the upper limit then requires either a Bayesian or a frequentist approach as described in [14]. On the contrary, the approach used for the results reported in this paper does not require any further statistical "trick" to deal with the unavoidable non-physical results, and it is therefore intrinsically robust.

2. In actual experiments \(R_{\Delta E}(E_c)\) may depend explicitly on the energy \(E_c\): usually the energy resolution \(\Delta E_{\mathrm {FWHM}}\) worsens with increasing energy. This behaviour has not been included in the present investigation because it does not affect the experimental sensitivity, although it has to be considered when analysing real data to avoid systematic errors.

References

[1] G.L. Fogli et al., Phys. Rev. D 86, 013012 (2012)

[2] Ch. Kraus et al., Eur. Phys. J. C 73, 2323 (2013)

[3] V.N. Aseev et al., Phys. Rev. D 84, 112003 (2011)

[4] KATRIN Design Report, FZKA7090 (2004)

[5] M. Galeazzi et al., Phys. Rev. C 63, 014302 (2001)

[6] M.F. Gatti et al., Nucl. Phys. B (Proc. Suppl.) 91, 293 (2001)

[7] C. Arnaboldi et al., Phys. Rev. Lett. 91, 161802 (2003)

[8] M. Sisti et al., Nucl. Instrum. Methods A 520, 125 (2004)

[9] A. Nucciotti, Nucl. Phys. B (Proc. Suppl.) 229–232, 155 (2012)

[10] L. Gastaldo et al., Nucl. Instrum. Methods A 711, 150–159 (2013)

[11] M. Galeazzi et al., Phys. Rev. D, arXiv:1202.4763v2

[12] A. De Rujula, M. Lusignoli, Phys. Lett. B 118, 429 (1982)

[13] C.W. Reich, B. Singh, Nucl. Data Sheets 111, 1211 (2010)

[14] G.J. Feldman, R.D. Cousins, Phys. Rev. D 57, 3873 (1998)

[15] A. Nucciotti et al., Astropart. Phys. 34, 80 (2010)

[16] M. Lusignoli et al., Phys. Lett. B 697, 11–14 (2011)

[17] J.W. Engle et al., Nucl. Instrum. Methods B 311, 131–138 (2013)

Acknowledgments

I would like to thank Joe Formaggio, Maurizio Lusignoli, Alvaro de Rujula, and the HOLMES collaboration members for useful discussions.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

Funded by SCOAP3 / License Version CC BY 4.0.

About this article

Cite this article

Nucciotti, A. Statistical sensitivity of \(^{163}\)Ho electron capture neutrino mass experiments. Eur. Phys. J. C 74, 3161 (2014). https://doi.org/10.1140/epjc/s10052-014-3161-3

DOI: https://doi.org/10.1140/epjc/s10052-014-3161-3