Abstract

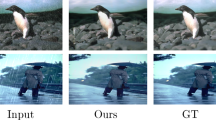

Severe weather conditions such as rain, haze, and sandstorms significantly degrade image quality and therefore severely restrict many computer vision tasks, including object recognition, object detection, and object tracking. Although there are plenty of deraining algorithms, methods for single-image deraining are relatively rare. In real-world scenarios, removing rain from a single image is a difficult task, and existing methods often give poor results. We present a multi-scale attention generative adversarial network, called MSA-GAN, for single-image rain removal, which applies an attentive generative network trained adversarially. The generative network adopts multi-scale attention mechanisms that use a spatial pyramid to capture features from different receptive fields and guide the fine fusion of relevant information at different scales. Extensive experimental results on synthetic and real-world rainy data sets show that our method performs better than most state-of-the-art methods. The proposed method also suggests a new research direction for vision tasks. Our source code will be available soon.
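As a rough illustration of the multi-scale idea described in the abstract, the sketch below pools a single-channel feature map at several pyramid scales, brings each pooled view back to full resolution, and fuses the views with per-pixel softmax weights. It is not the MSA-GAN architecture: the function names, the pooling scales (1, 2, 4), and the use of the raw response as the attention score are all assumptions made for illustration, and a trained network would learn its attention weights instead.

```python
import math


def avg_pool(img, k):
    """Average-pool a 2D list with a k x k window and stride k.

    Assumes the height and width of img are divisible by k.
    """
    h, w = len(img), len(img[0])
    return [
        [
            sum(img[i + di][j + dj] for di in range(k) for dj in range(k)) / (k * k)
            for j in range(0, w, k)
        ]
        for i in range(0, h, k)
    ]


def upsample(img, k):
    """Nearest-neighbour upsampling of a 2D list by an integer factor k."""
    h, w = len(img), len(img[0])
    return [[img[i // k][j // k] for j in range(w * k)] for i in range(h * k)]


def pyramid_attention_fuse(feat, scales=(1, 2, 4)):
    """Fuse pooled views of feat using per-pixel softmax weights over scales.

    Hypothetical helper: the scales and the scoring rule are assumptions.
    """
    h, w = len(feat), len(feat[0])
    # One full-resolution view per pyramid level; a larger k averages over
    # a larger neighbourhood, i.e. a larger receptive field.
    views = [upsample(avg_pool(feat, k), k) for k in scales]
    fused = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            vals = [view[i][j] for view in views]
            # Assumed attention score: the response itself (not learned).
            peak = max(vals)
            exps = [math.exp(v - peak) for v in vals]  # numerically stable softmax
            total = sum(exps)
            fused[i][j] = sum(e / total * v for e, v in zip(exps, vals))
    return fused


if __name__ == "__main__":
    feat = [[0.0] * 4 for _ in range(4)]
    feat[0][0] = 4.0  # a single bright response, e.g. a thin streak
    for row in pyramid_attention_fuse(feat):
        print([round(v, 3) for v in row])
```

Because the softmax weights are non-negative and sum to one, each fused value is a convex combination of the responses at the different scales, so it always lies between the smallest and largest response at that pixel.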

Funding

This work was supported in part by the Fundamental Research Funds for the Central Universities under grant no. 3122013D020 of Civil Aviation University of China.

This work was supported in part by the Open Fund of Tianjin Key Laboratory for Advanced Signal Processing under grant no. 2021ASP-TJ03.

Ethics declarations

COMPLIANCE WITH ETHICAL STANDARDS

This article is a completely original work of its authors; it has not been published before and will not be sent to other publications until the PRIA Editorial Board decides not to accept it for publication.

Conflict of Interest

The author declares that he has no conflicts of interest.

Additional information

Wanwei Wang received the M.S. degree in signal and information processing from Civil Aviation University of China in 2010. He is the Deputy Director of the Flight Tracking and Surveillance Technology Research Center and Assistant Director of the Tianjin Key Laboratory for Advanced Signal Processing of Civil Aviation University of China. Since 2010, he has been a Lecturer with the Institute of College of Electronic Information and Automation, Civil Aviation University of China. His current research interests include signal and image processing, computer vision, and machine learning.

Cite this article

Wanwei Wang, “Multi-Scale Attention Generative Adversarial Network for Single Image Rain Removal,” Pattern Recognit. Image Anal. 32, 436–447 (2022). https://doi.org/10.1134/S1054661822020201

DOI: https://doi.org/10.1134/S1054661822020201