Abstract

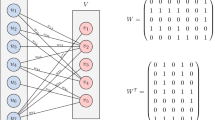

Fully-connected tensor network (FCTN) decomposition generalizes the popular tensor train and tensor ring decompositions and has been applied successfully in various fields. The standard method for computing it is alternating least squares (ALS), which is expensive, especially for large-scale tensors. To reduce the cost, we propose an ALS-based randomized algorithm. Specifically, by defining a new tensor product, called the subnetwork product, and suitably adjusting the sizes of the FCTN factors, we first determine the structure of the coefficient matrices of the ALS subproblems arising in FCTN decomposition. Using this structure and the properties of the subnetwork product, we then devise a randomized algorithm based on leverage sampling. The algorithm samples directly on the FCTN factors and hence avoids forming the full coefficient matrices of the ALS subproblems. We present its computational complexity and numerical performance. Experimental results show that it requires much less computation time than the deterministic ALS method to achieve similar accuracy. Further, we apply the algorithm to four well-known problems, namely tensor-on-vector regression, multi-view subspace clustering, nonnegative tensor approximation, and tensor completion, where it performs well.
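The leverage sampling idea that the abstract builds on can be illustrated on a plain overdetermined least-squares problem. The following is a minimal NumPy sketch under stated assumptions, not the FCTN algorithm of the paper: it forms the full coefficient matrix `A` and computes exact leverage scores from a QR factorization, which is precisely the cost the proposed method avoids by sampling on the FCTN factors. The function names, problem sizes, and sample count are hypothetical and chosen only for illustration.

```python
import numpy as np

def leverage_scores(A):
    """Exact leverage scores: squared row norms of an orthonormal basis of range(A).

    Assumes A has full column rank.
    """
    Q, _ = np.linalg.qr(A, mode="reduced")
    return np.sum(Q**2, axis=1)

def sampled_least_squares(A, b, num_samples, rng):
    """Solve min ||Ax - b||_2 approximately on rows sampled by leverage scores."""
    scores = leverage_scores(A)
    probs = scores / scores.sum()
    # Draw row indices with replacement, proportionally to the leverage scores.
    idx = rng.choice(A.shape[0], size=num_samples, replace=True, p=probs)
    # Rescale sampled rows so the sketched normal equations are unbiased.
    scale = 1.0 / np.sqrt(num_samples * probs[idx])
    SA = A[idx] * scale[:, None]
    Sb = b[idx] * scale
    x, *_ = np.linalg.lstsq(SA, Sb, rcond=None)
    return x

# Illustrative problem (hypothetical sizes): 20000 equations, 10 unknowns.
rng = np.random.default_rng(0)
A = rng.standard_normal((20000, 10))
x_true = rng.standard_normal(10)
b = A @ x_true + 0.01 * rng.standard_normal(20000)

x_full, *_ = np.linalg.lstsq(A, b, rcond=None)   # deterministic solve
x_samp = sampled_least_squares(A, b, 500, rng)   # solve on 500 sampled rows
rel_diff = np.linalg.norm(x_samp - x_full) / np.linalg.norm(x_full)
print(f"relative difference between sampled and full solutions: {rel_diff:.2e}")
```

In this toy setting the sampled problem has 500 rows instead of 20000, so the solve itself is much smaller; the score computation is what remains expensive here, which is why estimating or sampling the scores without forming the full matrix matters for large tensors.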

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Notes

The convergence conditions for Algorithm 5 are \(\max _k \left\| \mathbf{X}_k - \mathbf{X}_k \mathbf{Z}_k - \mathbf{E}_k \right\| _\infty \le \varepsilon \) and \(\max _k \left\| \mathbf{Z}_k^t - \mathbf{Y}_k^t \right\| _\infty \le \varepsilon \), where the maximum is taken over \(k=1,2,\cdots ,K\) and \(\varepsilon = 10^{-6}\).
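The stopping test in this note can be sketched in a few lines of NumPy. Two readings are assumptions made here, not statements from the paper: the norm \(\Vert \cdot \Vert _\infty \) is taken as the largest absolute entry of the matrix, and the superscript \(t\) is read as the iteration index, so `Zs` and `Ys` hold the current iterates. The function name `has_converged` and the toy data are hypothetical.

```python
import numpy as np

def max_abs(M):
    """Largest absolute entry of M (the reading of the infinity norm assumed here)."""
    return np.max(np.abs(M))

def has_converged(Xs, Zs, Es, Ys, eps=1e-6):
    """Check both conditions over all K views; each argument is a list of K matrices."""
    # First condition: residual of X_k = X_k Z_k + E_k, maximized over k.
    residual = max(max_abs(X - X @ Z - E) for X, Z, E in zip(Xs, Zs, Es))
    # Second condition: gap between Z_k and Y_k at the current iterate, maximized over k.
    gap = max(max_abs(Z - Y) for Z, Y in zip(Zs, Ys))
    return bool(residual <= eps and gap <= eps)

# Toy data (hypothetical): K = 3 views, each X_k is 5 x 8, with Z_k = I and E_k = 0.
rng = np.random.default_rng(0)
Xs = [rng.standard_normal((5, 8)) for _ in range(3)]
Zs = [np.eye(8) for _ in range(3)]
Es = [np.zeros((5, 8)) for _ in range(3)]
Ys = [Z.copy() for Z in Zs]
print(has_converged(Xs, Zs, Es, Ys))
```

If the paper intends the induced matrix infinity norm (maximum absolute row sum) instead, `max_abs` could be swapped for `np.linalg.norm(M, np.inf)` without changing the rest of the check.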

Acknowledgements

The authors would like to thank the editor and the anonymous reviewers for their detailed comments and helpful suggestions, which helped considerably to improve the quality of the paper.

Funding

The work is supported by the National Natural Science Foundation of China (No. 11671060) and the Natural Science Foundation of Chongqing, China (No. cstc2019jcyj-msxmX0267).

Author information

Contributions

All the authors contributed to the study conception and design, and read and approved the final manuscript.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical approval

Not applicable.

About this article

Cite this article

Wang, M., Cui, H. & Li, H. A random sampling algorithm for fully-connected tensor network decomposition with applications. Comp. Appl. Math. 43, 226 (2024). https://doi.org/10.1007/s40314-024-02751-1

DOI: https://doi.org/10.1007/s40314-024-02751-1

Keywords

- Fully-connected tensor network decomposition

- Alternating least squares

- Randomized algorithm

- Leverage sampling

- Tensor-on-vector regression

- Multi-view subspace clustering

- Nonnegative tensor approximation

- Tensor completion