Abstract

Best-practice economic evaluation methods for health-related decision making at a national level in England are well established, and as a first principle generally involve attempting to maximise the amount of health generated from the health system’s budget. Such methods are applied in ways that are broadly transparent and accountable, often at arm’s length from explicit political pressures. At local levels, however, decision making is arguably less likely to follow established overarching principles, is less transparent and is more subject to political pressures. This may be owing to a multiplicity of reasons, for example, the undesirability or inappropriateness of such methods, or a failure to make the methods clear to local decision makers. We outline principles for economic evaluations and break down these methods into their component parts, considering their relevance in the English local context. These include taxonomies of decision-making frameworks, budgets, costs, outcomes and characterisations of cost effectiveness. We also explore the role of broader factors, including the relevance of assuming a single fixed budget, pressures resulting from political and budgetary cycles, and affordability. We consider the data requirements to inform such deliberations. By setting out principles for economic evaluation methods in clear language aimed at local decision making, a potential role for such methods can be established, which to date has failed to emerge. While the extent to which these methods can and should be applied is a matter for continued debate, the establishment of such a mutual understanding may assist in the improvement of methods for such decision making and the outcomes resulting from their application.

Existing methods for economic evaluations are aimed at national decision making and are much less likely to be applied at a local level.

We suggest some potential reasons for the relative lack of the codification and application of such methods.

We also suggest that methods for economic evaluations may be applicable in many respects at a local level, and propose that there is a potential for an improvement in local decision making if health economists are able to set out the underlying principles for economic evaluations behind their methods.

1 Introduction

The effective commissioning of health and social care services requires appropriate methods to determine which services should be funded, given competing demands within a finite budget: economic evaluation (EE) is widely used for such an appraisal. An EE involves the assessment of costs and outcomes of alternatives within a single evaluation framework [1], for example, costs falling on the healthcare budget and health-related outcomes in the case of an EE for health technologies. The use of EE evidence is an integral part of health technology assessment (HTA) processes carried out by national agencies such as the National Institute for Health and Care Excellence (NICE) in England and Wales [2]. However, at a local level, where a significant proportion of health and social care funding decisions are made, EE evidence traditionally plays a less integral role in the decision-making process [3, 4]. Within an English local decision-maker’s commissioning cycle, new interventions are considered within strategic planning processes based on needs assessments and reviews of current service provisions alongside contemporary policy objectives [5]. The costs associated with such interventions are often considered within an accountancy framework to ensure any spending remains within budgetary restrictions, with outcomes and costs potentially considered sequentially rather than simultaneously as within an EE approach.

A disconnect therefore exists between the nature and use of economic evidence to inform national decision making and to inform English local commissioning. While agencies such as NICE use HTA processes to provide policymakers with evidence and guidance to inform decision making, they have no direct commissioning or budgetary responsibilities themselves, with the financial impact of their guidance often falling on local commissioners’ budgets [6]. Under such conditions, NICE guidance based on EE evidence may determine a new intervention to be cost effective from a national perspective, but local commissioners may be reluctant to conform to such guidance [7]. In an English setting, this lack of alignment is likely to be widespread, with the majority of commissioning decisions and budgetary responsibility held at a local rather than national level; for example, by local clinical commissioning groups (CCGs) and local authorities (LAs). Local authorities are responsible for commissioning publicly funded social care and some public health services, as well as for funding a variety of other non-care and non-health services such as education and waste disposal [8]. Clinical commissioning groups fund the majority of National Health Service (NHS) healthcare services, constituting approximately two-thirds of the NHS budget [5], a responsibility that will be transferred to Integrated Care Systems (ICSs) during 2022 [9].

This article describes the disconnect between current EE frameworks and associated evidence often considered at the national level, and how local decision makers in England operationalise outcome and cost-related information within local commissioning processes. To achieve this aim, we explore the basic principles of resource-allocation decision making that EE evidence is intended to inform. While the specifics of outcomes and outcomes-based commissioning form a necessary discussion point in the context of EE, we restrict the bulk of our discussion of outcomes to the scope of which outcomes are relevant, and the perspective that defines this scope. Our primary focus, however, is on cost-related considerations given the importance of budgetary restrictions at the local level: this includes types of costs, budgets, expenditure, affordability and cost effectiveness. We consider three specific categories of EE frameworks, their respective decision-making criteria and potential suitability within a local decision-making context. We additionally reflect on the availability of routinely collected care data within England to quantify resource use and costs. Finally, we discuss possible ways to bridge the disconnect so EE evidence that is relevant to the decision maker can be generated and better operationalised within local resource-allocation decision-making processes. While we focus on the situation in England, local and national, much of our discussion of local decision making in England may be generalisable to other contexts in which health-related decisions are made without systematised use of EE methods.

2 Principles for Constrained Healthcare Decision Making

Economics frequently characterises decision making as attempting to make an optimal decision given the existence of one or more constraints. In the context of an extra-welfarist approach to an EE of health, the decision is often conceptualised as seeking to maximise some health-related outcome given constraints relevant to the health service [1]. The relevant constraints in this resource-allocation dilemma are both budgetary (e.g. the amount of money available to the health service) and technical (i.e. what is practically possible given the available inputs and the state of technology). A simplified application of these principles is that when a new technology becomes available, there is a need to generate evidence informing whether the new treatment is worth financing (i.e. whether it represents value for money) for which information is needed on the relevant outcome, the financial cost incurred to achieve this outcome and the opportunity cost implications of these financial costs.

3 How Have These Principles Been Implemented at a National Level by NICE?

While not a perfect realisation of a full solution to a maximisation problem in a first-best world [10, 11], the process implemented at a national level by NICE draws on the concepts of the extra-welfarist approach of health maximisation. The approach outlined by NICE [2] for technology appraisal focuses on the use of EE evidence in the form of a cost-effectiveness analysis (CEA), which assesses one or more new health technologies in terms of their incremental costs and health outcomes compared to existing practice. Health outcomes are operationalised as quality-adjusted life-years (QALYs), a metric capturing both quality and quantity of life. In implementing these methods, NICE adopts a health, personal and social care perspective, and thus deems relevant costs to be only those “under the control of the NHS and personal and social services” [2]. Similarly, the outcomes perspective incorporates consideration of all direct health effects, measured in QALYs, and preferably using the EQ-5D measure and an associated preference-based value set to capture health-related quality of life [2].

This information on incremental costs and health gains is combined to produce an incremental cost-effectiveness ratio (ICER), which represents the additional cost of generating one extra QALY using the new technology. Cost effectiveness is then judged relative to a threshold: the ICER must be lower than the threshold, or judged to be more likely than not to be lower [12], for the technology to be deemed cost effective [1]. The threshold is often characterised as representing the cost effectiveness of those healthcare treatments that would be given up should the proposed intervention be introduced [10, 14], under the assumption that such a new intervention takes up only a small proportion of existing spending [15], although in practice the threshold employed by NICE may substantially deviate from this [13]. Under such circumstances, a comparison of the ICER of some new technology with the threshold assesses whether the new technology generates more QALYs from a given amount of money than would existing practice, and thus whether the new technology should be deemed cost effective. Where the budgetary impact is large, such a comparison, which implicitly assumes that the opportunity cost at the margin is constant with respect to the scale of what is displaced in practice, may be inappropriate. Consequently, the budget impact test requires that new interventions with a budgetary impact of over £20 million in the first 3 years of implementation trigger an additional period of negotiation between NICE, NHS England and Improvement and the manufacturer, such that the new intervention becomes affordable to the NHS [16, 17].
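As a minimal sketch of the decision rule described above, the following Python snippet computes an ICER and compares it with a threshold. The function names, the per-patient figures and the threshold values are illustrative assumptions only; they are not taken from NICE guidance or from the analysis in this article.

```python
def icer(cost_new, cost_old, qalys_new, qalys_old):
    """Incremental cost per additional QALY of the new technology."""
    delta_cost = cost_new - cost_old
    delta_qalys = qalys_new - qalys_old
    if delta_qalys <= 0:
        # A zero or negative QALY gain needs separate handling (e.g. dominance),
        # which this sketch does not attempt.
        raise ValueError("ICER is only interpreted here for a positive QALY gain")
    return delta_cost / delta_qalys


def is_cost_effective(icer_value, threshold):
    """Decision rule: cost effective if the ICER lies below the threshold."""
    return icer_value < threshold


# Hypothetical per-patient costs (GBP) and QALYs, for illustration only.
example_icer = icer(cost_new=12_000, cost_old=8_000, qalys_new=6.5, qalys_old=6.25)
print(example_icer)                             # 16000.0
print(is_cost_effective(example_icer, 20_000))  # True
print(is_cost_effective(example_icer, 15_000))  # False
```

The same ICER passes one illustrative threshold and fails a lower one, which is why the choice of threshold, and what it is taken to represent, matters for the decision.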

4 What Aspects of Local Decision-Making Reality are Failed by the Approach Associated with NICE?

Decision making at a local level, however, is likely to differ substantially from the NICE approach in a number of key ways that test the relevance and appropriateness of that approach. While the national context for healthcare decision making is relatively straightforward and thus can be summarised in brief, important differences are likely to exist at a local level. Notably, the use of a single QALY-based outcome is unlikely to be deemed sufficient or even relevant by local decision makers [18]. We discuss a multiplicity of potential interlocking reasons for this, including the broader scope of responsibilities at the local level compared to the narrower and more health-focused national context of NICE, as well as differences between the two contexts in the availability of relevant information and in perceptions of the role for EE methods. This section introduces the context for local decision making, and discusses potential important differences from the NICE approach that may exist for decision making at such sub-national levels.

4.1 Funding and Budgets

While decisions made by NICE generally involve the use of a series of yearly budgets from which allocations must be made, the picture at a local level is likely to differ, with responsibility covering multiple budgets with a diverse set of aims, often beyond health. Although this may differ from the context in which most health economists conducting EEs may operate, EE methods still remain potentially applicable. While primary consideration is generally given to the impact on the budget for the remit of most direct relevance (most commonly, the healthcare budget in the case of healthcare decisions), decision making can account for alternative or additional budgets, both private (e.g. out-of-pocket patient expenditures) and public (e.g. education), and divisions of budgets within a remit (e.g. separate budgets for primary and secondary healthcare). While national decision making by NICE in issuing statutorily binding technology appraisal guidance [19], or the issuing of other non-statutorily binding guidance in other areas, is also a degree of separation away from its implementation (generally by CCGs), at the LA level there is greater fusion in this sense.

Clinical commissioning groups and LAs are both allocated funding from central government, with LAs also able to raise funds from local taxation, service fees and other sources of income such as investment returns [20]. Each body is given statutory responsibilities, involving some discretionary decision making, to provide certain services: CCGs are responsible for the joint commissioning of healthcare, while LAs have statutory responsibilities for, inter alia, public health [5, 20, 21, 22]. A number of definitions and distinctions regarding budgets are important at this stage, both to illustrate the local context and also to provide a suggested comprehensive framework with which local decision makers could consider the applicability of EE methods to their responsibilities.

4.1.1 Soft Versus Hard Budgets

Budgets—the relevant monetary amount available for that service—that cannot be exceeded are hard; budgets that can be exceeded are soft. In reality, such distinctions are not always so clear nor binary. Components of individual budgets might be variously hard and soft: for instance, funds for health might be ring fenced in particular areas or up to a particular level. This is particularly true in LA budgeting in England, where some funding is statutorily ring fenced (e.g. public health grants from central government), and other components of the budget must be committed to the provision of particular statutory services (e.g. waste collection) [20, 21]. This relates to a distinction between organisation-level budgets and programme-level budgets.

4.1.2 Organisation-Level Budgets and Programme Budgets

Organisation-level budgets refer to those at the highest level possible and would generally be assumed to be hard. For example, at a council level in England, this requirement is enshrined in statutory obligations placed on LAs to prevent budgetary overspend by the Local Government Finance Act 1988 and successive Local Government Acts [20, 23].

Programme budgets are budgets allocated to a particular service or programme delivered by the organisation; for example, for health overall or a particular health service. Local decision makers may be able to reallocate funds from one programme to another through a process known as virement. Costs resulting from the same decision may ultimately fall on multiple programme budgets (e.g. public health and patient-facing health) or multiple organisations (e.g. central vs local government, CCGs vs councils). Pooled budgets that form part of the Health and Care Bill 2021 and of other initiatives such as the Better Care Fund are intended to enable cross-sector commissioning objectives to be completed (e.g. commissioning end-of-life care and falls prevention services); they are attempts to remove sector-specific budgeting issues when the initial and future costs fall on multiple sectors (i.e. CCGs/ICSs and LAs in this example).

4.1.3 Time Horizons in Budgeting

Concerns regarding the timing of spending can arise either from legal or accounting requirements [20] or from the preferences of relevant actors involved in decision making. Statutory obligations are placed on councils regarding budgetary overspend, and further obligations enforce the submission of a medium-term financial strategy covering expectations and plans for revenue and expenditure over at least the following 3 years [20, 23]. While over the short term some use may be made of reserve funds, a persistent overspend that results in depletion of such reserves is unsustainable [23]. Similarly, local healthcare providers (CCGs, trusts and expected ICSs) are mandated to produce annual accounting reports that are submitted to national bodies such as the Department of Health and Social Care [24]. Related issues are considered in a later section on affordability concerns.

Further non-statutorily induced time preferences may arise out of political budget cycles: components of a public body’s spending that are affected by the electoral cycle [25]. These may influence decision making in ways such as creating greater short-term discretionary spending around electorally sensitive times, or causing the discounting of spending beyond time periods in which decision makers may no longer be in office.

4.2 Costs

Health economists conventionally assume that costs faced by the commissioner from any new care option, as well as those currently borne from current services, are completely flexible and portable. For the purposes of public sector decision making and local budgets, generally the costs of interest are those borne by the relevant organisation(s) or programme(s) financing the services. Like budgets, specific cost aspects need careful consideration. While costs are presented here grouped by type, other logical groupings exist, for example across budgets or time, and depending on circumstances may be more appropriate for the decision at hand [26, 27].

4.2.1 Fixed vs Variable Costs

Fixed costs are those that are associated with the start-up or running of an activity even if the amount of that activity turns out to be zero. Consider the example of a vaccination centre. The rental cost of this centre—even if nobody attends the centre to be vaccinated—must still be paid, and constitutes a fixed cost.

Variable costs are those that increase with usage: for instance, the cost of a single vaccine. Marginal costs are closely related and consist of the increase in variable costs attributable to a one-unit increase in output: in the case of a vaccination programme, this would be the extra cost attributable to the provision of one extra vaccine. As in the distinction between hard and soft budgets, these distinctions are not always clear or binary, and whether something is a fixed or variable cost may depend on the exact context of the decision being made.
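The vaccination-centre example can be sketched in a few lines of Python. The rent and per-dose figures below are hypothetical, and the linear cost function is a simplifying assumption; the point is only to show how fixed, variable, marginal and average costs relate.

```python
def total_cost(fixed_cost, cost_per_dose, doses):
    """Fixed cost (e.g. centre rental) plus variable cost (doses given)."""
    return fixed_cost + cost_per_dose * doses


def marginal_cost(fixed_cost, cost_per_dose, doses):
    """Extra cost of providing one more dose at the current level of activity."""
    return (total_cost(fixed_cost, cost_per_dose, doses + 1)
            - total_cost(fixed_cost, cost_per_dose, doses))


RENT = 5_000   # hypothetical rental cost of the centre (GBP)
DOSE = 15      # hypothetical cost of one vaccine dose (GBP)

print(total_cost(RENT, DOSE, 0))            # 5000: the fixed cost is paid even with no attendees
print(total_cost(RENT, DOSE, 1_000))        # 20000
print(marginal_cost(RENT, DOSE, 1_000))     # 15: the fixed cost does not enter the marginal cost
print(total_cost(RENT, DOSE, 1_000) / 1_000)  # 20.0: the average cost per dose exceeds the marginal cost
```

Under these assumptions the average cost per dose overstates what would be saved by giving one fewer dose, which is one reason why costs cannot always be treated as fully flexible.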

4.2.2 Sunk Costs

Sunk costs are costs that are irrecoverable once incurred. In the health sector, we might think about something like developing a new bespoke IT software platform. Once developed, these platforms cannot generally be repurposed for other uses. This contrasts with (for instance) constructing a new vaccination centre, assuming the building or its component materials could be repurposed. Transaction or “friction” costs are generally a type of sunk cost, referring to costs arising separately from the mere production of a good but which are incurred in order to effect the transaction [28, 29].

4.3 Expenditure, Affordability and Cost Effectiveness

Affordability is a concept related to cost effectiveness [15, 30]. For example, consider a new programme costing £4 million given a discretionary health budget of £1 million (i.e. the budget remaining after all mandated services are funded). This programme would, owing to its cost exceeding the entire budget, be simply unaffordable.

In practice, most new programmes are not wholly unaffordable. In the aforementioned example, implementing a quarter of the £4 million programme is possible within the existing budget of £1 million, but every other programme funded from the discretionary budget would have to be entirely displaced. As this example implies, the opportunity cost is likely to increase disproportionately with the scale of the proposed commitment relative to the available discretionary budget, as more and more cost-effective programmes will need to be displaced. In a NICE context, such a situation motivates the use of the budget impact test [17]. While such circumstances may be relatively rare in a national decision-making context where budgets are large, they are likely to be more prevalent in the context of local decision making where budgets are smaller and harder. This is likely to apply to a greater extent in LAs with smaller per capita discretionary budgets. Research in the context of national decision making has sought to relax the assumption of a constant opportunity cost at the margin [11, 15, 30] and, although similar research in the context of local decision making is currently absent, the implications of this are nevertheless likely to be relevant to informing such decisions. Relatedly, while research at a national level has sought to establish the likely cost effectiveness of treatments displaced in practice, both at the margin and with large degrees of budgetary impact [13, 31], such estimates are likely to be absent at a local level.
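The way in which opportunity cost rises with the scale of a commitment can be illustrated with a short Python sketch. It assumes a fully committed £1 million discretionary budget split across four hypothetical programmes, that programmes are divisible, and that the least cost-effective programmes are displaced first; none of these figures or assumptions comes from the studies cited above.

```python
def qalys_forgone(existing, spend):
    """QALYs lost when `spend` is released by defunding existing programmes,
    least cost-effective first. Programmes are assumed to be divisible."""
    # Rank by QALYs per pound, ascending: the worst value is displaced first.
    ranked = sorted(existing, key=lambda p: p["qalys"] / p["cost"])
    remaining, lost = spend, 0.0
    for programme in ranked:
        if remaining <= 0:
            break
        released = min(programme["cost"], remaining)
        lost += programme["qalys"] * released / programme["cost"]
        remaining -= released
    if remaining > 0:
        raise ValueError("unaffordable: spend exceeds the discretionary budget")
    return lost


# Hypothetical programmes funded from a GBP 1 million discretionary budget.
existing = [
    {"name": "A", "cost": 250_000, "qalys": 10.0},  # GBP 25,000 per QALY
    {"name": "B", "cost": 250_000, "qalys": 12.5},  # GBP 20,000 per QALY
    {"name": "C", "cost": 250_000, "qalys": 25.0},  # GBP 10,000 per QALY
    {"name": "D", "cost": 250_000, "qalys": 50.0},  # GBP 5,000 per QALY
]

print(qalys_forgone(existing, 250_000))    # 10.0
print(qalys_forgone(existing, 500_000))    # 22.5
print(qalys_forgone(existing, 1_000_000))  # 97.5
```

In this sketch, quadrupling the spend from £250,000 to £1 million increases the health forgone almost tenfold, and a call for £4 million raises an error because it exceeds the whole discretionary budget.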

Affordability concerns are also likely to be linked to relevant time horizons in budgeting. When considering relevant expenditure, an implicit assumption often made is that the timing of expenditure commitments is largely unimportant and that high impacts can be smoothed over time. This means that questions on the merits of capital expenditure are largely judged according to the implied rate of return of the programme, rather than the outlay amount. Because of constraints on capital expenditure, local decision makers cannot always borrow money to pay for something that appears cost effective when there is a substantial up-front cost even if future rates of return would exceed rates of interest on borrowing.
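A minimal Python sketch of this tension follows. It contrasts a net-present-value test, which ignores the size of the initial outlay, with an additional check against a cap on up-front capital. The cash flows, the 3.5% discount rate and the capital limits are assumptions chosen purely for illustration.

```python
def net_present_value(cash_flows, rate):
    """cash_flows[t] is the net flow in year t; year 0 is not discounted."""
    return sum(flow / (1 + rate) ** t for t, flow in enumerate(cash_flows))


def fundable(cash_flows, rate, capital_limit):
    """A programme is funded only if it is worthwhile in present-value terms
    AND its up-front outlay fits within the capital available now."""
    worthwhile = net_present_value(cash_flows, rate) > 0
    affordable_now = -cash_flows[0] <= capital_limit
    return worthwhile and affordable_now


# Hypothetical programme: GBP 500,000 outlay, then GBP 200,000 net savings a year.
flows = [-500_000, 200_000, 200_000, 200_000]
RATE = 0.035  # illustrative annual discount rate

npv = net_present_value(flows, RATE)
print(npv > 0)                          # True: positive, roughly GBP 60,000
print(fundable(flows, RATE, 600_000))   # True: outlay fits within the capital limit
print(fundable(flows, RATE, 300_000))   # False: worthwhile, but not affordable up front
```

The third case is the one described in the text: the programme would pay for itself, but a hard constraint on capital expenditure prevents it from being funded.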

Furthermore, uncertainty regarding expenditure is likely to pose greater problems at local levels. National decision makers deliberating on commissioning recommendations may be able to tolerate high levels of uncertainty regarding the cost of any given individual new programme because of both the large number of funded programmes, each of which takes up only a small proportion of total spending, and the separation from the consequences of budgetary overspends. Approaches that shift the burden of risk away from the payer do exist. One is outcomes-based commissioning, an approach based on the principle that funding and reimbursement decisions should be made based on providers delivering certain outcomes, rather than paying for volumes of processes. Such approaches are nevertheless generally not applied at a local level and may impose onerous data-gathering requirements. Examples of similar commissioning methods exist across various areas of public policy, both at a national level in areas other than health [32, 33], and at a local level in health-related commissioning [34], as detailed in [35]. Such an approach, however, remains far from fully adopted for all commissioning decisions. Further examples of innovative payment structures, such as a lump-sum payment for as much of a treatment as transpires to be required (a ‘Netflix model’, which shifts risk onto the pharmaceutical provider [36]), have emerged in the USA, but remain largely untrialled in the UK.

5 Role of Different Decision-Making Frameworks and Criteria

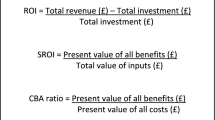

To conduct a full EE, analysts require a decision-making framework to judge and quantify both costs and outcomes of relevance, and criteria to determine the optimal decision. While other frameworks exist that fail to compare multiple courses of action across both costs and outcomes, these are generally considered to be partial evaluations: for example, budget impact analyses, involving the consideration only of costs [27, 37]. For descriptive purposes, we focus on three potentially local decision-maker-relevant frameworks: HTA, multi-criteria decision analysis (MCDA) and programme budgeting and marginal analysis (PBMA). While in principle a cost-benefit analysis method could be applied, the commonly cited prerequisites for this mean that its wide application is unlikely in the contexts we consider. These prerequisites are optimally set budgets based on a consumer’s willingness to pay, or the ability of decision makers to operate beyond fixed budgets, together with a comprehensive definition and consideration of all objects of value [38, 39].

5.1 HTA

Health technology assessment-associated decision-making frameworks and criteria are most commonly associated with reimbursement agencies and related processes, such as the focus in NICE’s reference case [40] on comparison of the relevant ICER with some threshold value or range. Such methods are not commonly used nor fully accepted by local decision makers to guide their commissioning cycle [7, 18, 41].

Health technology assessment as a framework can incorporate a range of other conceptualisations of the maximisation problem beyond QALYs, for which outcomes could be either natural units (e.g. life-years) or monetary values [1, 27]. Fundamentally, however, approaches associated with HTA processes tend to seek to explicitly maximise a defined outcome subject to some budgetary constraint. As an approximation to this, use is commonly made of ICERs (and their comparison to some threshold value or range), or return-on-investment ratios, where some new candidate programme is compared to existing programmes that could be defunded.

5.2 MCDA

An MCDA allows for a range of outcomes and costs, decided upon through stakeholder engagement, to be accounted for within the same framework, whereby the decision problem is subject to a range of criteria for consideration. Although it is possible to compare costs related to the achievement of multiple outcomes within HTA frameworks (e.g. by producing multiple ICERs based on various outcomes), weighting and trading off between the outcomes requires an MCDA-type framework. Guidance for an MCDA has been developed [42, 43] with a guide focussing on determining, weighting and assessing the criteria by which any results are compared, in eight steps: defining the decision problem; selecting and structuring criteria; measuring performance; scoring alternatives; weighting criteria; calculating aggregate scores; dealing with uncertainty; interpretation and reporting results. While allowing for the existence of and providing a framework for trade-offs between multiple criteria may be seen as a benefit, it also represents a potential limitation of the MCDA. While several methods for weighting criteria have been proposed, any such preference weighting is subjective, and carries all of the problems inherent in the aggregation of individual preferences described in Arrow’s impossibility theorem [44]. Establishing such a preference-based weighting risks, for instance, a ‘town hall’-type approach, in which the stakeholder with greatest influence is able to determine the outcome.
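The scoring, weighting and aggregation steps can be sketched in Python using a simple additive (weighted-sum) model, which is only one of several possible aggregation rules and is an assumption here rather than the method prescribed by the cited guidance. The criteria, scores (0 to 100, higher is better on every criterion) and weights are all hypothetical.

```python
def aggregate_scores(performance, weights):
    """Weighted-average score per option under an additive MCDA model."""
    total_weight = sum(weights.values())
    return {
        option: sum(weights[c] * scores[c] for c in weights) / total_weight
        for option, scores in performance.items()
    }


# Hypothetical 0-100 scores; for "cost", a higher score means a lower cost.
performance = {
    "Programme X": {"health": 80, "equity": 40, "cost": 60},
    "Programme Y": {"health": 60, "equity": 90, "cost": 50},
}

# Two hypothetical sets of stakeholder weights over the same criteria.
weights_a = {"health": 5, "equity": 3, "cost": 2}
weights_b = {"health": 7, "equity": 1, "cost": 2}

print(aggregate_scores(performance, weights_a))  # {'Programme X': 64.0, 'Programme Y': 67.0}
print(aggregate_scores(performance, weights_b))  # {'Programme X': 72.0, 'Programme Y': 61.0}
```

The ranking of the two programmes reverses when the weights change, which illustrates why the subjectivity of preference weighting, and whose preferences determine the weights, is a central concern.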

5.3 PBMA

A PBMA places a focus on reviewing resources allocated to specific programmes with a subsequent assessment of added/forgone benefits and costs from alternative programme(s) and associated budgets. Similar to an MCDA, a PBMA has eight steps defined [45]: choose a set of meaningful programmes; identify current activity and expenditure in those programmes; think of improvement; weigh up incremental costs and incremental benefits and prioritise a list; consult widely; decide on changes; effect the changes; evaluate progress. These PBMA steps reflect the commissioning cycle from strategic planning, to procuring services, then monitoring and evaluation; as such, PBMA has been suggested as an aid to investment and disinvestment decisions. The consideration of disinvestment and accounting for budgetary restrictions for specific programmes is important for local decision makers, thus making the PBMA approach a potentially attractive option. A key limitation is that PBMA does not focus on any specific criterion or range of criteria (e.g. as within HTA and MCDA) and thus the scope of the decision problem both in terms of relevant outcomes and associated costs (and budgets) can be difficult to conceptualise and operationalise.
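The marginal-analysis step, weighing incremental costs against incremental benefits for a package of investments and disinvestments, can be sketched as follows. This is a simplified illustration under stated assumptions: the benefit units are a hypothetical locally agreed score, the services and figures are invented, and the rule (no net increase in spend and a positive net benefit) is one possible reading rather than a prescribed PBMA criterion.

```python
def appraise_package(changes, extra_budget=0):
    """Sum incremental costs and benefits across proposed changes.
    Investments have positive changes; disinvestments have negative ones."""
    net_cost = sum(change["cost_change"] for change in changes)
    net_benefit = sum(change["benefit_change"] for change in changes)
    within_budget = net_cost <= extra_budget
    return {
        "net_cost": net_cost,
        "net_benefit": net_benefit,
        "within_budget": within_budget,
        "recommended": within_budget and net_benefit > 0,
    }


# Hypothetical package: expand one service, scale back another.
package = [
    {"name": "Expand falls prevention", "cost_change": 120_000, "benefit_change": 30},
    {"name": "Scale back low-value service", "cost_change": -150_000, "benefit_change": -12},
]

print(appraise_package(package))
# {'net_cost': -30000, 'net_benefit': 18, 'within_budget': True, 'recommended': True}
```

Because the disinvestment releases more than the investment requires, the package fits within the programme budget while increasing the benefit score; how benefit is defined and measured remains the difficult part, as noted above.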

5.4 Overview of Different Decision-Making Frameworks and Criteria

The relevance of our three specified overarching decision-making frameworks (HTA, MCDA and PBMA) for guiding commissioning-based decision making is dependent on their components (e.g. inputs to include and outcomes presented), processes (e.g. stakeholder engagement and discussions), relevance (e.g. QALYs compared to other outcomes) and data requirements. Arguably, each approach has both benefits and limitations to guide resource-allocation decision making. Whereas there are benefits to having a single criterion for cross-comparability of results and a common goal, beyond the healthcare system (where health is a natural outcome for consideration) it is likely to frequently be the case that health is not the primary, sole or even relevant outcome of interest; neither is the maximisation of any individual outcome likely to be deemed appropriate. The ability to account for and then trade off more than one criterion (e.g. within an MCDA) and to account for explicit budgetary restrictions (e.g. within a PBMA) are arguably important and even necessary considerations for local decision makers dealing with cross-sector and explicit budget restrictions. Whatever the approach, use of EE evidence to inform decision making is further complicated because of tight timescales to produce business cases, and a lack of, or constraints on, necessary data, capacity and skill sets. This complication applies all the more when methods carrying greater informational requirements and greater stakeholder engagement (such as MCDAs and PBMAs) are employed.

6 Steps Towards Implementation Including Data Requirements

All of these quantifiable aspects of spending and decision making—costs, budgets and expenditure—require relevant and accurate data regardless of the evaluation framework applied. While the NICE reference case imposes clear and strict information requirements on those submitting new technologies for appraisal [40], no such statutory structure exists at a local level. Relatedly, estimates of relevant thresholds to be used for local decision making are both absent and likely to be less appropriate to a local context, making characterisation of the opportunity cost of any decision unachievable.

Although some routine data do exist (noting local decision makers seldom have the time or funds for primary data collection), they are not as extensive, accessible, reliable or indeed timely as local commissioners often need [46]. In terms of budgets, this information may be known only to those in specific job roles (e.g. finance managers) and may at any given time not be wholly known with certainty: this is an issue both for commissioners and researchers seeking to aid the commissioning processes. In terms of costs and expenditure as part of the NHS, NHS Digital, working on behalf of the Department of Health and NHS England, has mandated the need for minimum datasets across NHS services that reflect both activity data (e.g. hospital spells via Hospital Episode Statistics data) and reimbursement cost codes (e.g. Healthcare Resource Grouper version 4) [47].

For local NHS decision makers such as the current CCGs (and forthcoming ICSs), use can be made of local data flows from any service within their geographical jurisdiction, as well as of datasets such as the Secondary Uses Service [48]. Access to and use of these data can inform evaluation activities that support the commissioning processes. Beyond the NHS and even within specific NHS services (e.g. general practitioner data), access to and knowledge of such data are more complicated. For example, non-NHS services such as social care have no mandated metadata equivalent to, for example, the NHS Data Model and Dictionary for England [49]. As a result, knowledge of data in many relevant areas is often limited to those who use the data regularly, which could lead to issues when supporting commissioning decisions across sectors. The importance of metadata to enable knowledge and transparency for commissioners and researchers forms the motivation for efforts to improve the clarity and accessibility of such datasets, such as Health Data Research UK’s Innovation Gateway (www.healthdatagateway.org/), which has been the focus of National Institute for Health and Care Research-funded research to unlock data for public health policy and practice [50].

7 Discussion

Any EE involves joint consideration of both costs and outcomes, where what is considered a relevant outcome is ultimately to be defined by the decision maker but informed by the stakeholders on whose behalf they act. In health economics, the most common generic measure of health is the QALY, which attempts to capture both morbidity and mortality. The outcome measure can, however, also be life-years, infections avoided or any other outcome deemed to be relevant. A key issue is that, whereas NICE has taken a stance on the QALY as its preferred outcome, deciding on and then quantifying what are considered relevant outcomes within and across sectors is complicated.

The interest in accounting (and perhaps the need to account) for outcomes alongside cost considerations at the local level makes it difficult to suggest any one EE framework to develop the evidence needed to inform resource allocation at both the national and local level. While this paper has identified many ways in which the conventional NICE approach fails to reflect the reality of local decision making, we do not consider these issues necessarily to reflect a fundamental flaw in the existing frameworks, but rather inflexibility in how they are conventionally perceived and/or applied. While issues regarding uncertainty and timing are typically collapsed into a single net present value in the headline results (e.g. ICERs) within a CEA, disaggregated analysis and presentation of results can help to inform decision makers on these specific issues. Uncertainty is less often included, but features in some types of analysis. Scale is not typically incorporated explicitly within a CEA, but information on prevalent and incident populations and budget impact analyses can help to address this. A simple and practical alternative approach would be to consider estimates of the cost effectiveness of new programmes against more stringent benchmarks of value than are typically used (i.e. a lower cost-effectiveness threshold) when there are significant affordability issues at hand. When this is appropriate, and to what extent, remains unclear and requires further research in the context of local decision making.
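To illustrate the arithmetic behind judging a programme against a more stringent benchmark, the following is a minimal sketch only. All figures (incremental cost and QALYs per person, population size and both thresholds) are hypothetical values chosen for illustration, and the function names are our own rather than part of any established tool. The sketch reports the ICER, the net health benefit per person at a conventional and at a lower threshold, and the total budget impact as a separate, disaggregated quantity.

```python
def icer(delta_cost, delta_qaly):
    """Incremental cost-effectiveness ratio: additional cost per additional QALY."""
    if delta_qaly <= 0:
        raise ValueError("this sketch assumes a positive incremental QALY gain")
    return delta_cost / delta_qaly


def net_health_benefit(delta_cost, delta_qaly, threshold):
    """QALYs gained minus the QALYs assumed to be forgone elsewhere,
    where the threshold is the cost per QALY of displaced activity."""
    return delta_qaly - delta_cost / threshold


def budget_impact(delta_cost, population):
    """Total additional spend if the whole eligible population is treated."""
    return delta_cost * population


# Hypothetical programme (illustrative values, not taken from any study).
delta_cost = 1500.0      # additional cost per person, GBP
delta_qaly = 0.125       # additional QALYs per person
population = 2000        # eligible local population

# Hypothetical benchmarks of value, GBP per QALY.
conventional_threshold = 20000.0
stringent_threshold = 10000.0

programme_icer = icer(delta_cost, delta_qaly)
nhb_conventional = net_health_benefit(delta_cost, delta_qaly, conventional_threshold)
nhb_stringent = net_health_benefit(delta_cost, delta_qaly, stringent_threshold)
total_budget_impact = budget_impact(delta_cost, population)

print(f"ICER: {programme_icer:.0f} GBP per QALY")
print(f"Net health benefit per person, conventional threshold: {nhb_conventional:.3f} QALYs")
print(f"Net health benefit per person, stringent threshold: {nhb_stringent:.3f} QALYs")
print(f"Budget impact: {total_budget_impact:.0f} GBP")
```

Under these assumed values the same programme has a positive net health benefit at the conventional threshold and a negative one at the lower threshold, which is the sense in which a more stringent benchmark can reverse a recommendation when affordability is a concern. Which threshold is appropriate locally is precisely the open question noted above.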

In principle, CEA can reflect all of these issues within the opportunity cost [15]. However, as we have discussed, in practice, the likelihood of this being the case in local decision-making contexts is small. An MCDA could be useful in the sense that cost effectiveness judged next to a conventional benchmark of value (but not fully reflecting issues of affordability within the opportunity cost) could be looked at alongside the affordability issue itself. However, it is not clear that decision makers would have enough information to weigh these two components against each other appropriately, the rationale for this approach is weak, and there is not necessarily agreement on this issue among health economists [51]. A PBMA represents perhaps the best option for local decision makers, as this approach would allow them to identify matching disinvestments to fund the new programme and therefore provide evidence on the corresponding opportunity cost. It does, however, require detailed budgetary information and knowledge, and has other limitations (e.g. no common objective to inform the necessary trade-offs).
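The idea of identifying matching disinvestments can be sketched as follows. This is an illustration of the underlying opportunity-cost logic only, not a description of how a PBMA panel works in practice: it assumes, contrary to the limitation noted above, that all services can be valued on a single common outcome scale, and every service name and figure is hypothetical. Candidate services are ranked by outcome lost per pound released and withdrawn in that order until the new programme is funded.

```python
from dataclasses import dataclass


@dataclass
class Service:
    name: str
    saving: float        # budget released if the service is withdrawn, GBP
    health_lost: float   # outcome units forgone if the service is withdrawn


def match_disinvestments(candidates, cost_to_fund):
    """Greedy sketch: withdraw the services that lose the least outcome per
    pound released until enough budget is freed for the new programme.
    Returns the chosen services, the budget released and the outcome forgone."""
    ranked = sorted(candidates, key=lambda s: s.health_lost / s.saving)
    chosen, released, forgone = [], 0.0, 0.0
    for service in ranked:
        if released >= cost_to_fund:
            break
        chosen.append(service)
        released += service.saving
        forgone += service.health_lost
    if released < cost_to_fund:
        raise ValueError("candidate disinvestments do not release enough budget")
    return chosen, released, forgone


# Hypothetical candidates and new programme (illustrative values only).
candidates = [
    Service("Service A", saving=40000.0, health_lost=2.0),
    Service("Service B", saving=30000.0, health_lost=3.0),
    Service("Service C", saving=50000.0, health_lost=10.0),
]
new_programme_cost = 60000.0
new_programme_gain = 6.0   # outcome units gained by the new programme

chosen, released, forgone = match_disinvestments(candidates, new_programme_cost)
net_gain = new_programme_gain - forgone

print("Disinvest from:", [s.name for s in chosen])
print(f"Budget released: {released:.0f} GBP; outcome forgone: {forgone:.1f}")
print(f"Net outcome change from the reallocation: {net_gain:.1f}")
```

The outcome forgone from the selected disinvestments is the opportunity cost of the new programme in this sketch. In practice the required inputs (savings and outcomes lost per service) are exactly the detailed budgetary information that is often unavailable, and services in different sectors may not share a common outcome measure.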

8 Conclusions

This paper has outlined principles and component parts of EEs, and considered their application in a national context by NICE. The exact nature of local decision making in England may mean that such methods cannot simply be translated in toto to local English contexts, and we have detailed potential issues with the application of EE methods in these contexts. Nevertheless, components of and principles involved in such methods may be of greater interest and relevance in more contexts than has previously been commonly assumed. Scope exists for greater application of EE methods and involvement of health economists, and such greater application may well produce results deemed to be preferable by English local decision makers.

References

Drummond MF, Sculpher MJ, Claxton K, Stoddart GL, Torrance GW. Methods for the economic evaluation of health care programmes. Oxford: Oxford University Press; 2015.

National Institute for Health and Care Excellence. Guide to the methods of technology appraisal 2013. London: National Institute for Health and Care Excellence (NICE); 2013. http://www.ncbi.nlm.nih.gov/books/NBK395867/. Accessed 12 Sep 2018.

Eddama O, Coast J. A systematic review of the use of economic evaluation in local decision-making. Health Policy. 2008;86:129–41.

Eddama O, Coast J. Use of economic evaluation in local health care decision-making in England: a qualitative investigation. Health Policy. 2009;89:261–70.

Wenzel L, Robertson R. What is commissioning and how is it changing. King’s Fund. 2019. https://www.kingsfund.org.uk/publications/what-commissioning-and-how-itchanging

Joore M, Grimm S, Boonen A, de Wit M, Guillemin F, Fautrel B. Health technology assessment: a framework. RMD Open. 2020;6: e001289.

Hinde S, Horsfield L, Bojke L, Richardson G. The relevant perspective of economic evaluations informing local decision makers: an exploration in weight loss services. Appl Health Econ Health Policy. 2020;18:351–6.

Sandford M. Local government in England: structures. House Commons Libr Lond. 2022. https://commonslibrary.parliament.uk/researchbriefings/sn07104

UK Parliament. Health and Care Bill 2021/22. 2022. https://bills.parliament.uk/bills/3022. Accessed 13 Aug 2022.

Culyer AJ. Cost-effectiveness thresholds in health care: a bookshelf guide to their meaning and use. Health Econ Policy Law. 2016;11:415–32.

Howdon D, Lomas J, Paulden M. Implications of nonmarginal budgetary impacts in health technology assessment: a conceptual model. Value Health. 2019;22:891–7.

Claxton K. The irrelevance of inference: a decision-making approach to the stochastic evaluation of health care technologies. J Health Econ. 1999;18:341–64.

Claxton K, Martin S, Soares M, Rice N, Spackman E, Hinde S, et al. Methods for the estimation of the National Institute for Health and Care Excellence cost-effectiveness threshold. Health Technol Assess. 2015;19(1–503):v–vi.

McCabe C, Claxton K, Culyer AJ. The NICE cost-effectiveness threshold: what it is and what that means. Pharmacoeconomics. 2008;26:733–44.

Lomas J, Claxton K, Martin S, Soares M. Resolving the “cost-effective but unaffordable” paradox: estimating the health opportunity costs of nonmarginal budget impacts. Value Health. 2018;21:266–75.

National Institute for Health and Care Excellence. Procedure for varying the funding requirement to take account of net budget impact. 2017. https://www.nice.org.uk/Media/Default/About/what-we-do/NICE-guidance/NICE-technology-appraisals/TA-HST-procedure-varying-the-funding-direction.pdf. Accessed 13 Aug 2022.

National Institute for Health and Care Excellence. Budget impact test. NICE; 2020. https://www.nice.org.uk/about/what-we-do/our-programmes/nice-guidance/nice-technology-appraisal-guidance/budget-impact-test. Accessed 2 Jun 2020.

Frew E, Breheny K. Health economics methods for public health resource allocation: a qualitative interview study of decision makers from an English local authority. Health Econ Policy Law. 2020;15:128–40.

National Institute for Health and Care Excellence. Achieving and demonstrating compliance with NICE TA and HST guidance. NICE; 2022. https://www.nice.org.uk/about/what-we-do/our-programmes/nice-guidance/nice-technology-appraisal-guidance/achieving-and-demonstrating-compliance. Accessed 1 Aug 2022.

Local Government Association. Local government finance. 2019. https://www.local.gov.uk/publications/councillor-workbook-local-government-finance. Accessed 19 Jan 2022.

Goddard J. Local authority provision of essential services. House of Lords. UK; 2019.

NHS Confederation. What are clinical commissioning groups? 2021. https://www.nhsconfed.org/articles/what-are-clinical-commissioning-groups. Accessed 26 Jan 2022.

Nolan WS, Pitt J. Balancing local authority budgets. CIPFA. 2016;16.

NHS England. NHS trusts: requirements for annual governance statements and other year-end material 2021/22. 2022. https://www.england.nhs.uk/wp-content/uploads/2022/03/NHS-trusts-AGS-and-year-end-requirements-2021-22.pdf. Accessed 13 Aug 2022.

Drazen A. Political budget cycles. The new Palgrave dictionary of economics. London: Palgrave Macmillan UK; 2018: p. 10379–86. https://doi.org/10.1057/978-1-349-95189-5_2415. Accessed 19 Jan 2022.

Franklin M, Lomas J, Walker S, Young T. An educational review about using cost data for the purpose of cost-effectiveness analysis. Pharmacoeconomics. 2019;37:631–43.

Franklin M, Lomas J, Richardson G. Conducting value for money analyses for non-randomised interventional studies including service evaluations: an educational review with recommendations. Pharmacoeconomics. 2020;38:665–81.

Niehans J. Transaction costs. In: Eatwell J, Milgate M, Newman P, editors. Money. The New Palgrave. London: Palgrave Macmillan; 1989. https://doi.org/10.1007/978-1-349-19804-7_41.

Klaes M. Transaction costs, history of. The new Palgrave dictionary of economics. London: Palgrave Macmillan UK; 2008.

Lomas J. Incorporating affordability concerns within cost-effectiveness analysis for health technology assessment. Value Health. 2019;22:898–905.

Lomas J, Martin S, Claxton KP. Estimating the marginal productivity of the English National Health Service from 2003/04 to 2012/13. York: Centre for Health Economics, University of York; 2018.

National Audit Office. The troubled families programme: update. London: National Audit Office; 2016.

National Audit Office. The work programme. London: National Audit Office; 2014.

Taunt R, Allcock C, Lockwood A. Need to nurture: outcomes-based commissioning in the NHS. London: The Health Foundation; 2015. https://www.health.org.uk/sites/default/files/NeedToNurture_1.

Robertson R, Ewbank L. Thinking differently about commissioning: learning from new approaches to local planning. London: The King’s Fund; 2020. www.kingsfund.org.uk/publications. Accessed 13 Aug 2022.

Liu H, Mulcahy A. Why states’ ‘Netflix model’ prescription drug arrangements are no silver bullet. Health Aff (Millwood). 2020. https://doi.org/10.1377/forefront.20200629.430545/full/. Accessed 26 Jan 2022.

Sullivan SD, Mauskopf JA, Augustovski F, Jaime Caro J, Lee KM, Minchin M, et al. Budget impact analysis-principles of good practice: report of the ISPOR 2012 Budget Impact Analysis Good Practice II Task Force. Value Health. 2014;17:5–14.

Claxton K, Sculpher M, Culyer T. Mark versus Luke? Appropriate Methods for the Evaluation of Public Health Interventions. Centre for Health Economics, University of York; 2007 Nov. Report No.: 031cherp. https://ideas.repec.org/p/chy/respap/31cherp.html. Accessed 13 Aug 2022.

Sculpher M, Claxton K. Real economics needs to reflect real decisions. Pharmacoeconomics. 2012;30:133–6.

National Institute for Clinical Excellence. Guide to the methods of technology appraisal. 2004. https://webarchive.nationalarchives.gov.uk/20080611223138/http://www.nice.org.uk/niceMedia/pdf/TAP_Methods.pdf. Accessed 13 Aug 2022.

Frew E, Breheny K. Methods for public health economic evaluation: a Delphi survey of decision makers in English and Welsh local government. Health Econ. 2019;28:1052–63.

Marsh K, IJzerman M, Thokala P, Baltussen R, Boysen M, Kaló Z, et al. Multiple criteria decision analysis for health care decision making—emerging good practices: report 2 of the ISPOR MCDA Emerging Good Practices Task Force. Value Health. 2016;19:125–37.

Thokala P, Devlin N, Marsh K, Baltussen R, Boysen M, Kalo Z, et al. Multiple criteria decision analysis for health care decision making—an introduction: report 1 of the ISPOR MCDA Emerging Good Practices Task Force. Value Health. 2016;19:1–13.

De Montis A, De Toro P, Droste-Franke B, Omann I, Stagl S. Assessing the quality of different MCDA methods. In: Getzner M, Spash C, Stagl S, editors. Alternatives for environmental valuation. London: Routledge; 2004. p. 115–49.

Edwards RT, McIntosh E, editors. Applied health economics for public health practice and research. 1st ed. New York: Oxford University Press; 2019.

Franklin M, Thorn J. Self-reported and routinely collected electronic healthcare resource-use data for trial-based economic evaluations: the current state of play in England and considerations for the future. BMC Med Res Methodol. 2019;19:8.

NHS Digital. Data sets. 2022. https://digital.nhs.uk/data-and-information/data-collections-and-data-sets/data-sets. Accessed 14 Jan 2022.

NHS Digital. Secondary Uses Service (SUS). 2022. https://digital.nhs.uk/services/secondary-uses-service-sus. Accessed 14 Jan 2022.

NHS. NHS data model and dictionary. 2021. https://www.datadictionary.nhs.uk/. Accessed 14 Jan 2022.

Franklin M. Unlocking real-world data to promote and protect health and prevent ill-health in the Yorkshire and Humber region. The University of Sheffield; 2021. https://figshare.shef.ac.uk/articles/report/Unlocking_real-world_data_to_promote_and_protect_health_and_prevent_ill-health_in_the_Yorkshire_and_Humber_region/14723685/1. Accessed 13 Aug 2022.

Bilinski A, MacKay E, Salomon JA, Pandya A. Affordability and value in decision rules for cost-effectiveness: a survey of health economists. Value Health. 2022;25:1141–7.

Acknowledgements

We thank the other co-applicants as part of the “Unlocking Data to Inform Public Health Policy and Practice” project, which included: Tony Stone, Susan Baxter, Annette Haywood, Monica Jones, Anthea Sutton, Mark Clowes, Suzanne Mason, Louise Brewins, Philip Truby, Michelle Horspool, Kamil Sterniczuk, Jennifer Saunders and Christopher Gibbons. We also thank the Study Steering Committee for providing valuable insight and guiding the study throughout: Steven Senior (Chair), Gerry Richardson (Deputy Chair), Katherine Brown, William Whittaker, Emily Tweed, Shane Mullen, Vanessa Powell-Hoyland, Barbara Coyle and Abbygail Jaccard. We thank Judith Town and Jennie Milner from NHS Sheffield CCG for important insights into local budgeting. We also thank our Patient and Public Involvement group for making sure the public has a voice when guiding our study; our Patient and Public Involvement group included Sarah Markham, Della Ogunleye and Terry Lock, among other members who preferred to remain anonymous. We thank Lauren Hartley, Emma Bennett and Amanda Lane for providing valuable administrative support to the project. We also thank all staff across the Sheffield City Council, City of York Council and Sheffield Clinical Commissioning Group who took part in our workshops and provided us with their insight, knowledge and experience that made the project possible. Any outstanding errors are of course our own. Special acknowledgement: Louise Brewins sadly passed away during the conduct of this research study. We especially thank Louise for her input and insight during the study, as well as for her professionalism but also friendly and upbeat attitude throughout. On behalf of the research team and colleagues across the city councils, CCGs and universities, Louise will be sadly missed.

Ethics declarations

Funding

This study/project is funded by the National Institute for Health and Care Research (NIHR) Public Health Research programme (NIHR award identifier: 133634) with in-kind support provided by the NIHR Applied Research Collaboration Yorkshire and Humber (ARC-YH; NIHR award identifier: 200166). The NIHR had no role in the design and conduct of the study; collection, management, analysis and interpretation of the data; preparation, review or approval of the manuscript; and decision to submit the manuscript for publication. The views expressed are those of the author(s) and not necessarily those of the NIHR or the Department of Health and Social Care. The funding agreement ensured the authors’ independence in developing the purview of the manuscript, writing and publishing the manuscript.

Conflicts of interest/competing interests

Daniel Howdon, Sebastian Hinde, James Lomas and Matthew Franklin have no conflicts of interest that are directly relevant to the content of this article.

Ethics approval

Not applicable.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Availability of data and materials

Not applicable.

Code availability

Not applicable.

Authors’ contributions

SH, JL, MF and DH were responsible for the conceptualisation of the research. DH wrote the original draft with review, editing and additional input from SH, MF and JL. All authors agreed on the final draft.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License, which permits any non-commercial use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc/4.0/.

About this article

Cite this article

Howdon, D., Hinde, S., Lomas, J. et al. Economic Evaluation Evidence for Resource-Allocation Decision Making: Bridging the Gap for Local Decision Makers Using English Case Studies. Appl Health Econ Health Policy 20, 783–792 (2022). https://doi.org/10.1007/s40258-022-00756-7

DOI: https://doi.org/10.1007/s40258-022-00756-7