Abstract

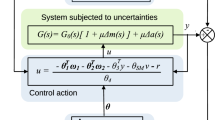

Path integral control integrated with backstepping control is proposed to address the practical regulation problem for systems whose dynamics are represented as stochastic differential equations. Path integral control computes the optimal control input from sampled trajectories of the uncontrolled dynamics. However, the probability of generating a low-cost trajectory from the uncontrolled dynamics is low, so path integral control requires an excessive number of trajectory samples to approximate the optimal control input adequately. Therefore, we propose an integrated method of backstepping and path integral control that provides a systematic approach for sampling stabilized trajectories close to the optimal one. The proposed method requires fewer samples than path integral control alone and uses a terminal set to further reduce the computational load. In simulation studies, the proposed method is demonstrated on a single-input single-output example and a continuous stirred-tank reactor. The results show the advantages of integrating backstepping control with path integral control.
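The sampling issue described in the abstract can be illustrated with a minimal Python sketch. It is not the authors' algorithm: it assumes a toy unstable scalar system dx = (x + u) dt + sigma dW, a quadratic cost, and a plain linear feedback u_s = -k x standing in for a backstepping controller. All names and parameter values (`rollout_costs`, `weights_and_ess`, `K_GAIN`, and so on) are hypothetical. Trajectories drawn from the stabilized dynamics are reweighted with the standard importance-sampling (Girsanov) correction, and the effective sample size (ESS) of the path weights is compared with that of uncontrolled sampling.

```python
import numpy as np

# Hypothetical toy setup (assumed values, not taken from the paper).
SIGMA, R, DT, N_STEPS, N_SAMPLES, X0 = 0.5, 1.0, 0.02, 50, 500, 1.0
LAM = R * SIGMA**2   # temperature linking noise level and control cost
K_GAIN = 3.0         # linear feedback gain standing in for backstepping


def rollout_costs(k_gain, seed=0):
    """Simulate dx = (x + u_s) dt + d_xi with u_s = -k_gain * x.

    Returns the importance-corrected path cost of every sample and the
    noise increment of the first time step.
    """
    rng = np.random.default_rng(seed)
    x = np.full(N_SAMPLES, X0)
    cost = np.zeros(N_SAMPLES)
    first_noise = None
    for step in range(N_STEPS):
        u_s = -k_gain * x
        d_xi = SIGMA * np.sqrt(DT) * rng.standard_normal(N_SAMPLES)
        if step == 0:
            first_noise = d_xi.copy()
        # State cost + sampler control cost + Girsanov correction term.
        cost += x**2 * DT + 0.5 * R * u_s**2 * DT + R * u_s * d_xi
        x = x + (x + u_s) * DT + d_xi  # Euler-Maruyama step
    cost += x**2                       # terminal cost
    return cost, first_noise


def weights_and_ess(cost):
    """Normalized path weights exp(-cost / LAM) and their effective sample size."""
    w = np.exp(-(cost - cost.min()) / LAM)  # shift by the minimum for stability
    w /= w.sum()
    return w, 1.0 / np.sum(w**2)


cost_unc, _ = rollout_costs(0.0)          # uncontrolled sampling
cost_stab, noise0 = rollout_costs(K_GAIN)  # stabilized sampling
_, ess_unc = weights_and_ess(cost_unc)
w_stab, ess_stab = weights_and_ess(cost_stab)

# Control estimate at x0: sampler input plus the weighted first noise increment.
u_est = -K_GAIN * X0 + np.sum(w_stab * noise0) / DT

print(f"ESS uncontrolled: {ess_unc:.1f} of {N_SAMPLES}")
print(f"ESS stabilized:   {ess_stab:.1f} of {N_SAMPLES}")
print(f"estimated control at x0: {u_est:.2f}")
```

In this toy setting the uncontrolled trajectories drift away from the origin, so a handful of samples carry almost all of the weight, whereas the stabilized sampler spreads the weight over many more trajectories. This is the effect the abstract appeals to when arguing that fewer samples are needed.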




Conflict of Interest

The authors declare that there is no competing financial interest or personal relationship that could have appeared to influence the work reported in this paper.

Shinyoung Bae received his B.S. and M.S. degrees in chemical and biological engineering from Sogang University, Seoul, Korea, in 2017 and 2019, respectively. He is currently working toward a Ph.D. degree in chemical and biological engineering at Seoul National University, Seoul, Korea. His current research interests include control, machine learning, and design of experiments through model-based optimization.

Tae Hoon Oh received his Ph.D. degree in chemical and biological engineering from Seoul National University, Korea, in 2022. He is currently an assistant professor in the Department of Chemical Engineering, Kyoto University, Kyoto, Japan. His research interests are process modeling, optimization, and control, with a particular interest in developing stochastic model predictive control algorithms and integrating them with reinforcement learning.

Jong Woo Kim is an Assistant Professor in the Department of Energy and Chemical Engineering at Incheon National University (Incheon, Korea). He obtained his B.Sc. and Ph.D. degrees from the School of Chemical and Biological Engineering at Seoul National University (Seoul, Korea) in 2014 and 2020, respectively. He was a postdoctoral researcher at the Chair of Bioprocess Engineering, Technische Universität Berlin (Berlin, Germany) from 2020 to 2022. His research interests are in the areas of process automation and development, including reinforcement learning, stochastic optimal control, and high-throughput bioprocess development.

Yeonsoo Kim received her B.S. and Ph.D. degrees in chemical and biological engineering from Seoul National University, Korea, in 2013 and 2019, respectively. Sponsored by the NRF of Korea, she spent a year as a visiting postdoctoral researcher at Carnegie Mellon University developing a computationally efficient model predictive controller. In 2020, she joined the Department of Chemical Engineering, Kwangwoon University, as an Assistant Professor. Her current research interests include modeling, stochastic control, and safe reinforcement learning. She received the best paper award at ICCAS 2018 and the outstanding young researcher award in ICROS 2021.

Jong Min Lee is a Professor in the School of Chemical and Biological Engineering and the Director of the Engineering Development Research Center (EDRC) at Seoul National University (SNU) (Seoul, Korea). From September 2016 to August 2017, he was a Visiting Associate Professor in the Department of Chemical Engineering at MIT. He also held the Samwha Motors Chaired Professorship from 2015 to 2017. He obtained his B.Sc. degree in chemical engineering from SNU in 1996 and completed his Ph.D. degree in chemical engineering at Georgia Institute of Technology (Atlanta, United States) in 2004. He also held a research associate position in biomedical engineering at the University of Virginia (Charlottesville, United States) from 2005 to 2006. He was an assistant professor of chemical and materials engineering at the University of Alberta (Edmonton, Canada) from 2006 until joining SNU in 2010. He is also a registered professional engineer with APEGA in Alberta, Canada. His current research interests include modeling, control, and optimization of large-scale chemical process, energy, and biological systems with uncertainty, as well as reinforcement learning-based control.

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Ministry of Science and ICT (MSIT) of the Korean government (No. 2020R1A2C100550311). The Institute of Engineering Research at Seoul National University provided research facilities for this work. Shinyoung Bae and Tae Hoon Oh contributed equally to this paper.


Cite this article

Bae, S., Oh, T.H., Kim, J.W. et al. Integrating Path Integral Control With Backstepping Control to Regulate Stochastic System. Int. J. Control Autom. Syst. 21, 2124–2138 (2023). https://doi.org/10.1007/s12555-022-0799-8


DOI: https://doi.org/10.1007/s12555-022-0799-8