Abstract

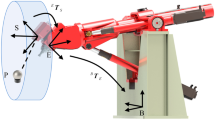

Alignment tasks in precision electronics manufacturing require both high accuracy and short cycle times. In current industrial practice, however, several servo alignment iterations are often needed to reach the required accuracy, which is time-consuming. This paper proposes a high-precision, fast alignment method based on binocular vision that moves the workpiece accurately to the target position in a single alignment operation, without requiring a standard calibration board. First, a calibration method for the telecentric lens camera, based on an improved nonlinear damped least-squares method, is proposed to establish the relationship between the image coordinate system and the local world coordinate system in the binocular vision system. Second, an angle-constraint-based rotation center calibration method is proposed to transform coordinates from the local world coordinate system into a unified coordinate system whose origin is the platform's rotation center. Third, a two-stage feature point detection method based on shape matching is proposed to detect the feature points of the workpiece, from which the position and pose of the workpiece are obtained. The alignment commands are then calculated from the current and target positions and poses of the workpiece, so that accurate alignment is accomplished in one operation. Finally, calibration and alignment experiments were carried out on a mobile phone cover glass alignment task. The results show that the alignment errors are within ±0.020 mm and that calculating the alignment commands takes less than 20 ms, demonstrating the effectiveness of the proposed method.
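The abstract does not state the camera model or the details of the improved damped least-squares solver. As a rough illustration only, the sketch below assumes that a telecentric lens maps image points to the local world plane by an affine transform (matrix A, offset t) applied after a single radial distortion term k1, that image coordinates are already centered and scaled to roughly [-1, 1], and that correspondences come from moving the platform to known positions while the camera observes a mark. It fits these seven parameters with a plain Levenberg–Marquardt loop (Marquardt's diagonal scaling, with λ decreased on accepted steps and increased on rejected ones), not the authors' improved variant. The function names `image_to_world` and `levenberg_marquardt` are hypothetical.

```python
import numpy as np


def image_to_world(params, uv):
    """Map normalized image points (N, 2) to local world points (N, 2).

    Assumed telecentric model: radial distortion k1, then affine map A, t.
    """
    a11, a12, a21, a22, tx, ty, k1 = params
    r2 = np.sum(uv ** 2, axis=1, keepdims=True)
    uv_d = uv * (1.0 + k1 * r2)
    A = np.array([[a11, a12], [a21, a22]])
    return uv_d @ A.T + np.array([tx, ty])


def residuals(params, uv, xy):
    """Stacked x/y differences between predicted and known world points."""
    return (image_to_world(params, uv) - xy).ravel()


def jacobian(params, uv, xy, eps=1e-7):
    """Forward-difference Jacobian of the residual vector."""
    r0 = residuals(params, uv, xy)
    J = np.empty((r0.size, params.size))
    for j in range(params.size):
        p = params.copy()
        p[j] += eps
        J[:, j] = (residuals(p, uv, xy) - r0) / eps
    return J


def levenberg_marquardt(params0, uv, xy, lam=1e-3, max_iter=100, tol=1e-14):
    """Plain damped least squares; returns (params, sum of squared residuals)."""
    params = np.asarray(params0, dtype=float).copy()
    cost = np.sum(residuals(params, uv, xy) ** 2)
    for _ in range(max_iter):
        r = residuals(params, uv, xy)
        J = jacobian(params, uv, xy)
        JTJ = J.T @ J
        # Marquardt damping: scale lambda by diag(J^T J), floored to avoid a singular system.
        D = np.diag(np.maximum(np.diag(JTJ), 1e-12))
        step = np.linalg.solve(JTJ + lam * D, -J.T @ r)
        if np.linalg.norm(step) < 1e-12:
            break
        trial = params + step
        trial_cost = np.sum(residuals(trial, uv, xy) ** 2)
        if trial_cost < cost:
            # Accepted step: reduce damping (closer to Gauss-Newton).
            improvement = cost - trial_cost
            params, cost, lam = trial, trial_cost, lam / 10.0
            if improvement < tol:
                break
        else:
            # Rejected step: increase damping (closer to gradient descent).
            lam *= 10.0
    return params, cost
```

Because the assumed model is linear in the affine terms, an ordinary linear least-squares fit with k1 = 0 would be a reasonable starting point for `params0` in practice.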
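The abstract outlines how calibrated coordinates become a one-shot alignment command but does not give the formulas. The following is a minimal sketch under several assumptions that are not taken from the paper: (1) the platform is rotated through known angles θ_i while a camera tracks a mark, and those angles constrain the center c through p_i − c = R(θ_i)(p_0 − c), which is linear in c (a stand-in for the paper's angle-constraint-based method, not its exact formulation); (2) each camera's local world axes are parallel to the platform axes, so moving to the unified frame is a subtraction of the center; (3) the workpiece pose is taken from two feature points, one seen by each camera; (4) the platform rotates about its rotation center first and then translates. All function names are hypothetical.

```python
import numpy as np


def rot(theta):
    """2x2 counter-clockwise rotation matrix."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])


def rotation_center(p0, points, angles):
    """Least-squares rotation center from a mark seen at p0 and after known rotations.

    Each observation satisfies p_i - c = R(theta_i) (p0 - c), i.e.
    (I - R(theta_i)) c = p_i - R(theta_i) p0, which is linear in c.
    """
    p0 = np.asarray(p0, dtype=float)
    A_rows, b_rows = [], []
    for p, theta in zip(points, angles):
        R = rot(theta)
        A_rows.append(np.eye(2) - R)
        b_rows.append(np.asarray(p, dtype=float) - R @ p0)
    c, *_ = np.linalg.lstsq(np.vstack(A_rows), np.concatenate(b_rows), rcond=None)
    return c


def to_unified(p_local, center_local):
    """Local world coordinates -> unified frame with the rotation center as origin.

    Assumes the local world axes are parallel to the platform axes.
    """
    return np.asarray(p_local, dtype=float) - np.asarray(center_local, dtype=float)


def workpiece_pose(f1, f2):
    """Pose (x, y, theta): midpoint of two feature points and direction of f1 -> f2."""
    f1, f2 = np.asarray(f1, dtype=float), np.asarray(f2, dtype=float)
    mid = (f1 + f2) / 2.0
    d = f2 - f1
    return np.array([mid[0], mid[1], np.arctan2(d[1], d[0])])


def alignment_command(current, target):
    """(dx, dy, dtheta) for a platform that rotates about the origin, then translates."""
    current = np.asarray(current, dtype=float)
    target = np.asarray(target, dtype=float)
    dtheta = (target[2] - current[2] + np.pi) % (2.0 * np.pi) - np.pi  # wrap to [-pi, pi)
    rotated = rot(dtheta) @ current[:2]
    dx, dy = target[:2] - rotated
    return dx, dy, dtheta
```

With noisy observations, larger calibration rotations help: the matrix I − R(θ_i) shrinks toward zero as θ_i → 0, so small angles make the estimate of c poorly conditioned.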

Acknowledgements

This work was supported by the Youth Innovation Promotion Association, CAS (2020139).

Cite this article

Gao, H., Shen, F., Zhang, F. et al. A High Precision and Fast Alignment Method Based on Binocular Vision. Int. J. Precis. Eng. Manuf. 23, 969–984 (2022). https://doi.org/10.1007/s12541-022-00674-7