Abstract

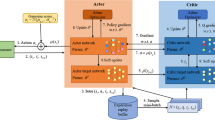

Local planning is a critical and difficult task for intelligent vehicles in dynamic transportation environments. This paper proposes Suppress Q Deep Q Network (SQDQN), a new method for local planning in autonomous driving that combines the traditional deep reinforcement learning algorithm Deep Q Network (DQN) with information entropy. In the proposed approach, the local planning strategy for complex traffic environments is established by an actor–critic network based on DQN, which explores the optimal strategy through a cycle of executing an action, evaluating the action, and updating the network. The strategy does not rely on accurate modeling of the scene, so it is suitable for complex and changeable traffic scenes. In addition, information entropy is used to evaluate the update process and determine the update range, which addresses a common problem in such networks: overestimation of action values degrades the performance of the learned strategy. The trained local planning strategy is evaluated in three simulation scenarios: overtaking, following, and driving in hazardous situations. The results illustrate the advantages of the proposed SQDQN method in solving the local planning problem.
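The abstract does not state the SQDQN update rule, so the following Python sketch is only an illustration of the general idea of letting information entropy set the size of a Q-value update. It is not the authors' method. Every name in it (`softmax`, `normalized_entropy`, `entropy_scaled_update`) and the specific choice of scaling the step by one minus the normalized entropy of a softmax over next-state Q-values are assumptions made for this example.

```python
import math


def softmax(values, temperature=1.0):
    """Convert Q-values into a probability distribution (numerically stable)."""
    m = max(values)
    exps = [math.exp((v - m) / temperature) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def normalized_entropy(probs):
    """Shannon entropy of probs divided by log(n), so the result lies in [0, 1]."""
    n = len(probs)
    if n < 2:
        return 0.0
    h = -sum(p * math.log(p) for p in probs if p > 0.0)
    return h / math.log(n)


def entropy_scaled_update(q_sa, reward, next_q, gamma=0.99, lr=0.1):
    """One tabular Q-learning step whose size is reduced when entropy is high.

    Hypothetical rule (an assumption, not taken from the paper): when the
    next-state Q-values barely distinguish the actions, the max operator is
    more likely to pick up estimation noise, so the step is shrunk.
    """
    td_target = reward + gamma * max(next_q)
    td_error = td_target - q_sa
    scale = 1.0 - normalized_entropy(softmax(next_q))
    return q_sa + lr * scale * td_error
```

Under this assumed rule, identical next-state Q-values give a normalized entropy of 1 and therefore no update, while a strongly peaked set of Q-values leaves the ordinary Q-learning step almost unchanged.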

Data availability

The data used in this study are available and can be provided upon reasonable request.

Acknowledgements

The authors are grateful to the Chinese government for supporting this work through the National Natural Science Foundation Program (52372407), the Jilin Provincial Science and Technology Development Plan Project (20230402064GH), and the Natural Science Foundation of Jilin Province (20220101236JC).

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

About this article

Cite this article

Han, L., Wang, Y., Chi, R. et al. Local Planning Strategy Based on Deep Reinforcement Learning Over Estimation Suppression. Int.J Automot. Technol. (2024). https://doi.org/10.1007/s12239-024-00076-w

DOI: https://doi.org/10.1007/s12239-024-00076-w