Abstract

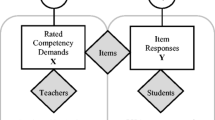

The Program for International Student Assessment (PISA), which integrates Mathematical Key Competencies (MKCs) into the process of solving situational problems, has become a typical example of MKC assessment. Cognitive Diagnostic Assessment, a new generation of measurement theory, integrates the measurement objectives into a cognitive process model through cognitive analysis and helps reveal students’ mastery of fine-grained knowledge points. This paper analyzed 12 PISA test items and calibrated their attributes, forming a cognitive model based on six MKCs from PISA: Mathematical Abstraction, Logical Reasoning, Intuitive Imagination, Mathematical Modeling, Mathematical Operation and Data Analysis. After comparing the model fit of DINA, DINO, RRUM, ACDM, GDM, LCDM, LLM, G-DINA and the Mixed Model, the LCDM, which showed good model fit, was selected to analyze the data of 19,454 students in eight countries, and the six MKCs were compared across these countries. By analyzing the knowledge states, sorting out the prerequisite relationships between the attributes, and exploring the learning trajectories of students’ MKCs in different countries, we found that students in China performed best among all countries on each of the six MKCs. In all other countries, students’ performance in Logical Reasoning and Intuitive Imagination was weaker than in the other attributes. An analysis of learning trajectories found that Russia, Singapore, Australia and Finland had very similar main learning trajectories (highlighted in red), and that the main learning trajectories of Russia and Singapore were the same. The learning trajectories of China and the United States, however, differed distinctly: those in China were complicated, with varied branches, while those in the United States were relatively simple, with fewer branches.
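To make the notions of attributes and knowledge states concrete, the following minimal sketch shows how a cognitive diagnosis model maps an attribute-mastery pattern to a response probability. It uses DINA, the simplest of the models compared above, not the LCDM that the study selected. The function name `dina_prob`, the three-attribute item and the slip and guess values are all hypothetical illustrations, not quantities from the paper.

```python
from itertools import product


def dina_prob(alpha, q_row, slip, guess):
    """Probability of a correct response under the DINA model.

    alpha : tuple of 0/1 flags, the student's attribute mastery pattern
    q_row : tuple of 0/1 flags, the attributes the item requires
    slip  : probability that a student with all required attributes errs
    guess : probability that a student lacking a required attribute succeeds
    """
    # The ideal response is 1 only if every required attribute is mastered.
    has_all_required = all(a >= q for a, q in zip(alpha, q_row))
    return 1.0 - slip if has_all_required else guess


# Hypothetical item requiring the first and third of three attributes.
q_row = (1, 0, 1)
slip, guess = 0.1, 0.2  # illustrative values, not estimates from the study

# Enumerate every knowledge state (2**3 = 8 patterns for three attributes).
for alpha in product((0, 1), repeat=3):
    print(alpha, dina_prob(alpha, q_row, slip, guess))
```

With the six MKCs treated as binary attributes, the same enumeration would yield 2^6 = 64 possible knowledge states per student.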

Funding

This work was supported by the China Scholarship Council (No. 201906140104), the 2020 Academic Innovation Ability Enhancement Plan for Outstanding Doctoral Students of East China Normal University (No. YBNLTS2020–003) and the Guizhou Philosophy and Social Science Planning Youth Fund (No. 19GZQN29).

Ethics declarations

Ethics Statements

Ethical review and approval were not required for the study on human participants in accordance with the local legislation and institutional requirements. Written informed consent from the participants’ legal guardian/next of kin was not required to participate in this study in accordance with the national legislation and the institutional requirements. No animal studies are presented in this manuscript. No potentially identifiable human images or data are presented in this study.

Cite this article

Wu, X., Zhang, Y., Wu, R. et al. A comparative study on cognitive diagnostic assessment of mathematical key competencies and learning trajectories. Curr Psychol 41, 7854–7866 (2022). https://doi.org/10.1007/s12144-020-01230-0

DOI: https://doi.org/10.1007/s12144-020-01230-0