Abstract

Covariate-adjusted treatment effects are commonly estimated in non-randomized studies. Measurement error in covariates has been shown to bias treatment effect estimates when it is not appropriately accounted for. So far, these analyses have primarily assumed a true data-generating model with a single covariate. It is, however, more plausible that the true model includes more than one covariate. We evaluate when an additional covariate may reduce bias due to measurement error in another covariate and when including an additional covariate is not recommended. We analytically derive the amount of bias as a function of the fallible covariate’s reliability and systematically disentangle bias compensation and amplification due to an additional covariate. With a fallible covariate, it is not always beneficial to include an additional covariate for adjustment, as the additional covariate can substantially increase the bias. The mechanisms for an increased bias due to an additional covariate can be complex, even in a simple setting of just two covariates. A high reliability of the fallible covariate or a high correlation between the covariates cannot, in general, prevent substantial bias. We show distorting effects of a fallible covariate in an empirical example and discuss adjustment for latent covariates as a possible solution.

Notes

The variance of the treatment regression in Eq. 1 is \({\text {Var}}(E(X{|}\xi ,W))= {\text {Var}}(\alpha _{0} + \alpha _{\xi }\cdot \xi + \alpha _{W}\cdot W)\), which simplifies to \(\alpha _{\xi } ^{2}{\text {Var}}(\xi ) + \alpha _{W}^{2}{\text {Var}}(W) + 2\alpha _{\xi }\alpha _{W}{\text {Cov}}(\xi ,W) = \alpha _{\xi }^{2} + \alpha _{W}^{2} + 2\alpha _{\xi }\alpha _{W}\rho _{{\xi W}}\) according to the calculation rules for conditional expectations and because all variables have a variance of one. The explained variance of X cannot exceed the total variance of X, that is, \({\text {Var}}(E(X{|}\xi ,W)) \le {\text {Var}}(X)\). Thus, only parameter combinations that satisfy \(\alpha _\xi ^2 + \alpha _W^2+2\alpha _{\xi }\alpha _{W}\rho _{{\xi W}} \le 1\) are possible.
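The constraint in this note can be checked numerically. The following is a minimal sketch, assuming unit variances for \(\xi\), W, and X as stated above; the function names `explained_variance` and `is_admissible` are illustrative and do not come from the article.

```python
def explained_variance(alpha_xi, alpha_w, rho_xi_w):
    """Var(E(X | xi, W)) when xi and W both have a variance of one.

    alpha_xi, alpha_w: coefficients of xi and W in the treatment regression.
    rho_xi_w: correlation between xi and W.
    """
    return alpha_xi**2 + alpha_w**2 + 2.0 * alpha_xi * alpha_w * rho_xi_w


def is_admissible(alpha_xi, alpha_w, rho_xi_w, tol=1e-12):
    """True if the explained variance does not exceed Var(X) = 1.

    tol is a small numerical tolerance (an assumption of this sketch).
    """
    return explained_variance(alpha_xi, alpha_w, rho_xi_w) <= 1.0 + tol


if __name__ == "__main__":
    # Moderate coefficients with positively correlated covariates.
    print(is_admissible(0.5, 0.5, 0.5))
    # Large coefficients with positively correlated covariates.
    print(is_admissible(0.8, 0.8, 0.5))
    # The same large coefficients with strongly negatively correlated covariates.
    print(is_admissible(0.8, 0.8, -0.9))
```

A check of this kind is useful before evaluating bias formulas over a grid of parameter values, because combinations that violate the inequality do not correspond to any valid model with standardized variables.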

In our derivation, all variables have a variance of one. Accordingly, when we use the standardized regression coefficients from our empirical data in the formulas, we directly obtain a bias difference in standard deviation units of the outcome variable. In a second step, we standardized the estimated bias in the empirical data using the standard deviation of the outcome variable in the control group.

References

Aiken, L. S., & West, S. G. (1991). Multiple regression: Testing and interpreting interactions. Newbury Park: Sage.

Clarke, K. A. (2005). The phantom menace: Omitted variable bias in econometric research. Conflict Management and Peace Science, 22, 341–352. https://doi.org/10.1080/07388940500339183.

Cohen, J., Cohen, P., West, S. G., & Aiken, L. S. (2003). Applied multiple regression/correlation analyses for the behavioral sciences. Hillsdale, NJ: Erlbaum.

Cole, S. R., Platt, R. W., Schisterman, E. F., Chu, H., Westreich, D., Richardson, D., et al. (2010). Illustrating bias due to conditioning on a collider. International Journal of Epidemiology, 39(2), 417–420. https://doi.org/10.1093/ije/dyp334.

Cook, T. D., Steiner, P. M., & Pohl, S. (2009). How bias reduction is affected by covariate choice, unreliability, and mode of data analysis: Results from two types of within-study comparisons. Multivariate Behavioral Research, 44(6), 828–847. https://doi.org/10.1080/00273170903333673.

Ding, P., & Miratrix, L. W. (2015). To adjust or not to adjust? Sensitivity analysis of M-bias and butterfly-bias. Journal of Causal Inference, 3(1), 41–57. https://doi.org/10.1515/jci-2013-0021.

Duncan, O. D. (1975). Introduction to structural equation models. New York: Academic.

Fritz, M. S., Kenny, D. A., & MacKinnon, D. P. (2016). The combined effects of measurement error and omitted confounding in the single-mediator model. Multivariate Behavioral Research, 51(5), 681–697. https://doi.org/10.1080/00273171.2016.1224154.

Greenland, S. (2003). Quantifying biases in causal models: Classical confounding vs. collider-stratification bias. Epidemiology, 14(3), 300–306. https://doi.org/10.1097/01.EDE.0000042804.12056.6C.

Hong, H., Rudolph, K. E., & Stuart, E. A. (2017). Bayesian approach for addressing differential covariate measurement error in propensity score methods. Psychometrika, 82(4), 1078–1096. https://doi.org/10.1007/s11336-016-9533-x.

Kenny, D. A. (1979). Correlation and causation. New York: Wiley.

Kuroki, M., & Pearl, J. (2014). Measurement bias and effect restoration in causal inference. Biometrika, 101(2), 423–437. https://doi.org/10.1093/biomet/ast066.

Lockwood, J., & McCaffrey, D. (2014). Correcting for test score measurement error in ANCOVA models for estimating treatment effects. Journal of Educational and Behavioral Statistics, 39(1), 22–51. https://doi.org/10.3102/1076998613509405.

Lockwood, J., & McCaffrey, D. (2015). Simulation-extrapolation for estimating means and causal effects with mismeasured covariates. Observational Studies, 1, 241–290.

Lockwood, J., & McCaffrey, D. (2016). Matching and weighting with functions of error-prone covariates for causal inference. Journal of the American Statistical Association, 111, 1831–1839. https://doi.org/10.1080/01621459.2015.1122601.

Lockwood, J., & McCaffrey, D. (2018). Hidden information, wobbly surfaces, and test score measurement error: Unpacking student-teacher selection mechanisms. Manuscript under review.

Lockwood, J., & McCaffrey, D. (2017). Simulation-extrapolation with latent heteroskedastic error variance. Psychometrika, 82(3), 717–736. https://doi.org/10.1007/s11336-017-9556-y.

Mayer, A., Dietzfelbinger, L., & Rosseel, Y. (2016). The EffectLiteR approach for analyzing average and conditional effects. Multivariate Behavioral Research, 51(2–3), 374–391. https://doi.org/10.1080/00273171.2016.1151334.

Myers, J. A., Rassen, J. A., Gagne, J. J., Huybrechts, K. F., Schneeweiss, S., Rothman, K. J., et al. (2011). Effects of adjusting for instrumental variables on bias and precision of effect estimates. American Journal of Epidemiology, 174(11), 1213–1222. https://doi.org/10.1093/aje/kwr364.

McCaffrey, D., Lockwood, J., & Setodji, C. (2013). Inverse probability weighting with error-prone covariates. Biometrika, 100(3), 671–680. https://doi.org/10.1093/biomet/ast022.

Pearl, J. (2009). Causality: Models, reasoning, and inference (2nd ed.). Cambridge: Cambridge University Press.

Pearl, J. (2011). Understanding bias amplification (Invited commentary). American Journal of Epidemiology, 174(11), 1223–1227. https://doi.org/10.1093/aje/kwr352.

Pearl, J. (2013). Linear models: A useful "microscope" for causal analysis. Journal of Causal Inference, 1(1), 155–170. https://doi.org/10.1515/jci-2013-0003.

Pearl, J. (2014). A short note on the virtues of graphical tools. Technical report. Los Angeles, CA: Computer Science Department, University of California Los Angeles.

Pohl, S., Sengewald, M.-A., & Steyer, R. (2016). Adjustment when covariates are fallible. In W. Wiedermann & A. von Eye (Eds.), Statistics and causality: Methods for applied empirical research. Hoboken: Wiley.

Pohl, S., Steiner, P. M., Eisermann, J., Soellner, R., & Cook, T. D. (2009). Unbiased causal inference from an observational study: Results of a within-study comparison. Educational Evaluation and Policy Analysis, 31(4), 463–479. https://doi.org/10.3102/0162373709343964.

Raykov, T. (2012). Propensity score analysis with fallible covariates: A note on a latent variable modeling approach. Educational and Psychological Measurement, 72, 715–733. https://doi.org/10.1177/0013164412440999.

Rosseel, Y. (2012). Lavaan: an R package for structural equation modeling. Journal of Statistical Software, 48, 1–36. Retrieved from http://www.jstatsoft.org/v48/i02/.

Rubin, D. B. (2005). Causal inference using potential outcomes: Design, modeling, decisions. Journal of the American Statistical Association, 100, 322–331. https://doi.org/10.1198/016214504000001880.

Sengewald, M.-A., Steiner, P. M., & Pohl, S. (2018). When does measurement error in covariates impact causal effect estimates? Analytical derivations of different scenarios and an empirical illustration. British Journal of Mathematical and Statistical Psychology. https://doi.org/10.1111/bmsp.12146.

Shadish, W. R., Cook, T. D., & Campbell, D. T. (2002). Experimental and quasi-experimental designs for generalized causal inference. Boston: Houghton Mifflin.

Skrondal, A., & Laake, P. (2001). Regression among factor scores. Psychometrika, 66, 563–575. https://doi.org/10.1007/BF02296196.

Steiner, P. M., Cook, T. D., Li, W., & Clark, M. H. (2015). Bias reduction in quasi-experiments with little selection theory but many covariates. Journal of Research on Educational Effectiveness, 8(4), 552–576. https://doi.org/10.1080/19345747.2014.978058.

Steiner, P. M., Cook, T. D., & Shadish, W. R. (2011). On the importance of reliable covariate measurement in selection bias adjustments using propensity scores. Journal of Educational and Behavioral Statistics, 36(2), 213–236. https://doi.org/10.3102/1076998610375835.

Steiner, P. M., & Kim, Y. (2016). The mechanics of omitted variable bias: Bias amplification and cancellation of offsetting biases. Journal of Causal Inference. https://doi.org/10.1515/jci-2016-0009.

Steyer, R., Mayer, A., & Fiege, C. (2014). Causal inference on total, direct, and indirect effects. In A. C. Michalos (Ed.), Encyclopedia of quality of life and well-being research (pp. 606–631). Dordrecht: Springer.

Thoemmes, F., & Rose, N. (2014). A cautious note on auxiliary variables that can increase bias in missing data problems. Multivariate Behavioral Research, 49, 443–459. https://doi.org/10.1080/00273171.2014.931799.

Wright, S. (1922). Coefficients of inbreeding and relationship. The American Naturalist, 56, 330–338.

Yi, G., Ma, Y., & Carroll, R. (2012). A functional generalized method of moments approach for longitudinal studies with missing responses and covariate measurement error. Biometrika, 99(1), 151–165. https://doi.org/10.1093/biomet/asr076.

Additional information

We thank Peter M. Steiner and Rolf Steyer for valuable discussions as well as Renate Soellner and Jens Eisermann who provided the empirical data. Furthermore, we thank the anonymous reviewers for insightful comments and constructive suggestions.

Appendix: Proof that W is an Irrelevant Covariate in 1a, 1b, 1c, 1d

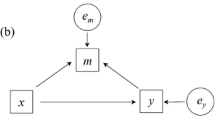

We are interested in \(\beta _X ={\text {ATE}}\), that is, the regression coefficient of treatment X in the outcome regression \(E\left( {Y{|}X,\xi ,W} \right) =\beta _0 +\beta _X \cdot X+\beta _\xi \cdot \xi +\beta _W \cdot W\). Omitting W and using the outcome regression \(E\left( {Y{|}X,\xi } \right) =\beta _0^\xi +\beta _X^\xi \cdot X+\beta _\xi ^\xi \cdot \xi \) for ATE estimation results in the ATE estimate \(\beta _X^\xi =\frac{\rho _{XY} -\rho _{X\xi } \rho _{Y\xi } }{1-(\rho _{X\xi })^{2}}\), where \(\rho \) denotes the bivariate correlations among X, Y, and \(\xi \). The bias in this ATE estimate, \(\beta _X^\xi -\beta _X\), can be quantified in our formal framework following Steiner & Kim (2016).
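As a sketch of how this bias can be written out, assume the standardized linear model used throughout: all variables have a variance of one, the treatment regression is \(E(X{|}\xi ,W)=\alpha _0+\alpha _\xi \cdot \xi +\alpha _W\cdot W\) as in Eq. 1, and \(\rho _{\xi W}\) is the correlation between the covariates. The expression below follows from the standard omitted-variable formula under these assumptions; it is a derivation from the stated model and not necessarily the exact form printed in the article.

```latex
\[
\beta_X^{\xi} - \beta_X
  = \beta_W \cdot \frac{\rho_{XW} - \rho_{X\xi}\,\rho_{\xi W}}{1 - \rho_{X\xi}^{2}}
  = \frac{\alpha_W\,\beta_W\,\bigl(1 - \rho_{\xi W}^{2}\bigr)}{1 - \rho_{X\xi}^{2}},
\qquad
\rho_{XW} = \alpha_W + \alpha_\xi\,\rho_{\xi W},
\quad
\rho_{X\xi} = \alpha_\xi + \alpha_W\,\rho_{\xi W}.
\]
```

The second equality uses \(\rho _{XW}-\rho _{X\xi }\rho _{\xi W}=\alpha _W(1-\rho _{\xi W}^2)\), so the bias is proportional to the product \(\alpha _W\beta _W\) and vanishes whenever either parameter is zero.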

In 1a, 1b, 1c, and 1d, the bias is always zero, because either \(\alpha _W\), \(\beta _W\), or both parameters are fixed to zero. Thus, using \(E\left( {Y{|}X,\xi } \right) \) instead of \(E\left( {Y{|}X,\xi ,W} \right) \) yields the same ATE estimate: W has no impact on ATE estimation beyond \(\xi \). This is not the case in 2a and 2b, where using \(E\left( {Y{|}X,\xi } \right) \) instead of \(E\left( {Y{|}X,\xi ,W} \right) \) can result in different ATE estimates. Note that different causal frameworks provide more general conditions [backdoor criterion (Pearl 2009), strong ignorability condition (Rubin 2005), causality conditions in the theory of causal effects (Steyer et al. 2014)], which lead to the same conclusions about the relevance of W in addition to \(\xi \).
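The argument above can be illustrated with a small population-level calculation. The following is a minimal sketch, assuming the standardized linear model (unit variances, linear treatment and outcome regressions, outcome residual uncorrelated with X, \(\xi\), and W); the function names and the parameter values are hypothetical and chosen only for illustration. It computes the coefficient of X in the regression of Y on X and \(\xi\) from the implied covariances and compares it with \(\beta _X\).

```python
def short_regression_ate(alpha_xi, alpha_w, beta_x, beta_xi, beta_w, rho_xi_w):
    """Coefficient of X when Y is regressed on X and xi only (W omitted).

    All quantities are population values implied by the linear model with
    unit variances; with Var(Y) = 1 the covariances below are correlations.
    """
    # Correlations of X with the covariates implied by the treatment regression.
    rho_x_xi = alpha_xi + alpha_w * rho_xi_w
    rho_x_w = alpha_w + alpha_xi * rho_xi_w
    # Covariances of the outcome with X and xi implied by the outcome regression.
    cov_x_y = beta_x + beta_xi * rho_x_xi + beta_w * rho_x_w
    cov_xi_y = beta_x * rho_x_xi + beta_xi + beta_w * rho_xi_w
    # Two-predictor regression coefficient of X.
    return (cov_x_y - rho_x_xi * cov_xi_y) / (1.0 - rho_x_xi**2)


def omission_bias(alpha_xi, alpha_w, beta_x, beta_xi, beta_w, rho_xi_w):
    """Bias in the ATE estimate caused by omitting W."""
    estimate = short_regression_ate(
        alpha_xi, alpha_w, beta_x, beta_xi, beta_w, rho_xi_w
    )
    return estimate - beta_x


if __name__ == "__main__":
    # Hypothetical parameter values, not taken from the article.
    common = dict(alpha_xi=0.4, beta_x=0.2, beta_xi=0.3, rho_xi_w=0.5)
    # W affects neither treatment selection nor is it dropped from the outcome.
    print(omission_bias(alpha_w=0.0, beta_w=0.5, **common))  # alpha_W = 0
    print(omission_bias(alpha_w=0.3, beta_w=0.0, **common))  # beta_W = 0
    print(omission_bias(alpha_w=0.3, beta_w=0.5, **common))  # both nonzero
```

In the first two calls the bias is zero up to floating-point rounding, matching the cases in which \(\alpha _W\) or \(\beta _W\) is fixed to zero; in the third call, where both parameters are nonzero, omitting W changes the ATE estimate.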

Cite this article

Sengewald, MA., Pohl, S. Compensation and Amplification of Attenuation Bias in Causal Effect Estimates. Psychometrika 84, 589–610 (2019). https://doi.org/10.1007/s11336-019-09665-6

DOI: https://doi.org/10.1007/s11336-019-09665-6