Abstract

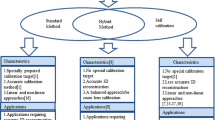

This paper surveys adaptive cameras, i.e. any camera device able to change its geometric settings. We classify them into four categories: lensless, dioptric, catadioptric and polydioptric cameras. In each category, we report and describe all the existing adaptive cameras. We then summarize the known applications of these devices. Finally, we discuss open research lines for new camera adaptations and their promising uses.

Data Availability

Data sharing not applicable to this article as no datasets were generated or analysed during the current study.

Notes

the brightness loss can reach \(90\%\) in the case of a Canon 50 mm f/1.2 lens mounted on a Canon 1DS Mark III body (Guenter et al., 2017).

A monocentric lens consists of spherical optical elements arranged concentrically around a central point. Its FOV can reach more than 120\(^{\circ }\) (Ford et al., 2018).

but simpler anti-shake modes do exist in some cameras, for instance the Panasonic Lumix G Vario Lens® with a MegaOIS® system (Panasonic®, 2022).

in stereovision in general, the gap between two distinct cameras

e.g. manufactured by VStone® (Vstone®, 2010).

e.g. made by Asphericon® (Asphericon®, 2023).

generally provided by Texas Instruments® (Texas Instruments®, 2018).

supplied by Microgate® (Microgate®, 2011).

continuous MOEMS provided by ALPAO (ALPAO®, 2023) have a 90-micrometer stroke.

e.g. GoPro 360® (GoPro®, 2015) camera array.

available as Ricoh Theta® (Ricoh®, 2019) products, for instance.

References

Adelson, E. H., & Bergen, J. R. (1991). The plenoptic function and the elements of early vision (Vol. 2). Vision and Modeling Group, Media Laboratory, Massachusetts Institute of Technology.

Afshari, H., Jacques, L., Bagnato, L., Schmid, A., Vandergheynst, P., & Leblebici, Y. (2013). The panoptic camera: A plenoptic sensor with real-time omnidirectional capability. Journal of Signal Processing Systems, 70(3), 305–328.

Allen, E., & Triantaphillidou, S. (2012). The manual of photography. CRC Press.

Alterman, M., Swirski, Y., & Schechner, Y. Y. (2014). STELLA MARIS: Stellar marine refractive imaging sensor. In IEEE international conference on computational photography (ICCP) (pp. 1–10). IEEE.

Asif, M. S., Ayremlou, A., Sankaranarayanan, A., Veeraraghavan, A., & Baraniuk, R. G. (2016). Flatcam: Thin, lensless cameras using coded aperture and computation. IEEE Transactions on Computational Imaging, 3(3), 384–397.

Baker, H. H., Tanguay, D., & Papadas, C. (2005). Multi-viewpoint uncompressed capture and mosaicking with a high-bandwidth PC camera array. In Proceedings of workshop on omnidirectional vision (OMNIVIS 2005).

Baker, P., Ogale, A. S., & Fermuller, C. (2004). The argus eye: A new imaging system designed to facilitate robotic tasks of motion. IEEE Robotics & Automation Magazine, 11(4), 31–38.

Baker, S., & Nayar, S. K. (1999). A theory of single-viewpoint catadioptric image formation. International Journal of Computer Vision, 35(2), 175–196.

Bakstein, H., & Pajdla, T. (2001). An overview of non-central cameras. In Computer vision winter workshop (Vol. 2).

Bettonvil, F. (2005). Fisheye lenses. WGN, Journal of the International Meteor Organization, 33, 9–14.

Boominathan, V., Adams, J. K., Asif, M. S., Avants, B. W., Robinson, J. T., Baraniuk, R. G., Sankaranarayanan, A. C., & Veeraraghavan, A. (2016). Lensless imaging: A computational renaissance. IEEE Signal Processing Magazine, 33(5), 23–35.

Boominathan, V., Adams, J. K., Robinson, J. T., & Veeraraghavan, A. (2020). Phlatcam: Designed phase-mask based thin lensless camera. IEEE Transactions on Pattern Analysis and Machine Intelligence, 42(7), 1618–1629.

Borra, E. F. (1982). The liquid-mirror telescope as a viable astronomical tool. Journal of the Royal Astronomical Society of Canada, 76, 245–256.

Brady, D. J., Gehm, M. E., Stack, R. A., Marks, D. L., Kittle, D. S., Golish, D. R., Vera, E., & Feller, S. D. (2012). Multiscale gigapixel photography. Nature, 486(7403), 386–389.

Brousseau, D., Borra, E. F., & Thibault, S. (2007). Wavefront correction with a 37-actuator ferrofluid deformable mirror. Optics Express, 15(26), 18190–18199.

Cao, J. J., Hou, Z. S., Tian, Z. N., Hua, J. G., Zhang, Y. L., & Chen, Q. D. (2020). Bioinspired zoom compound eyes enable variable-focus imaging. ACS Applied Materials & Interfaces, 12(9), 10107–10117.

Caron, G., & Eynard, D. (2011). Multiple camera types simultaneous stereo calibration. In International conference on robotics and automation (pp. 2933–2938).

Chan, W. S., Lam, E. Y., & Ng, M. K. (2006). Extending the depth of field in a compound-eye imaging system with super-resolution reconstruction. In 18th International conference on pattern recognition (ICPR’06) (Vol. 3, pp. 623–626). IEEE.

Cheng, Y., Cao, J., Zhang, Y., & Hao, Q. (2019). Review of state-of-the-art artificial compound eye imaging systems. Bioinspiration & Biomimetics, 14, 3.

Clark, A. D., & Wright, W. (1973). Zoom lenses. 7, Hilger.

Conti, C., Soares, L. D., & Nunes, P. (2020). Dense light field coding: A survey. IEEE Access, 8, 49244–49284.

Corke, P. (2017). Robotics, vision and control: Fundamental algorithms in MATLAB® (2nd, completely revised ed., Vol. 118). Springer.

Cossairt, O. S., Miau, D., & Nayar, S. K. (2011). Gigapixel computational imaging. In IEEE international conference on computational photography (ICCP) (pp. 1–8).

Davies, R., & Kasper, M. (2012). Adaptive optics for astronomy. Annual Review of Astronomy and Astrophysics, 50, 305–351.

Demenikov, M., Findlay, E., & Harvey, A. R. (2009). Miniaturization of zoom lenses with a single moving element. Optics Express, 17(8), 6118–6127.

Dickie, C., Fellion, N., & Vertegaal, R. (2012). Flexcam: Using thin-film flexible OLED color prints as a camera array. In CHI ’12 extended abstracts on human factors in computing systems (CHI EA ’12, pp. 1051–1054). Association for Computing Machinery. https://doi.org/10.1145/2212776.2212383

Evens, L. (2008). View camera geometry.

Ewing, J. (2016). Follow the sun: A field guide to architectural photography in the digital age. CRC Press.

Falahati, M., Zhou, W., Yi, A., & Li, L. (2020). Development of an adjustable-focus ferrogel mirror. Optics & Laser Technology, 125, 52.

Fayman, J. A., Sudarsky, O., & Rivlin, E. (1998). Zoom tracking. In Proceedings. IEEE international conference on robotics and automation (Cat. No. 98CH36146) (Vol. 4, pp. 2783–2788). IEEE.

Fenimore, E. E., & Cannon, T. M. (1978). Coded aperture imaging with uniformly redundant arrays. Applied Optics, 17(3), 337–347.

Flaugher, B., Diehl, H., Honscheid, K., Abbott, T., Alvarez, O., Angstadt, R., Annis, J., Antonik, M., Ballester, O., Beaufore, L., et al. (2015). The dark energy camera. The Astronomical Journal, 150, 5.

Floreano, D., Pericet-Camara, R., Viollet, S., Ruffier, F., Brückner, A., Leitel, R., Buss, W., Menouni, M., Expert, F., Juston, R., et al. (2013). Miniature curved artificial compound eyes. Proceedings of the National Academy of Sciences, 110(23), 9267–9272.

Ford, J. E., Agurok, I., & Stamenov, I. (2018). Monocentric lens designs and associated imaging systems having wide field of view and high resolution. US Patent 9,860,443.

Forutanpour, B., Le Nguyen, P. H., & Bi, N. (2019). Dual fisheye image stitching for spherical image content. US Patent 10,275,928.

Geyer, C., & Daniilidis, K. (2001). Catadioptric projective geometry. International Journal of Computer Vision, 45(3), 223–243.

Ghorayeb, A., Potelle, A., Devendeville, L., & Mouaddib, E. M. (2010). Optimal omnidirectional sensor for urban traffic diagnosis in crossroads. In IEEE intelligent vehicles symposium (pp. 597–602).

Gill, J. S., Moosajee, M., & Dubis, A. M. (2019). Cellular imaging of inherited retinal diseases using adaptive optics. Eye, 33(11), 1683–1698.

Gluckman, J., & Nayar, S. K. (1999). Planar catadioptric stereo: Geometry and calibration. In Proceedings of the IEEE computer society conference on computer vision and pattern recognition (CVPR) (Vol. 1, pp. 22–28).

Gottesman, S. R., & Fenimore, E. E. (1989). New family of binary arrays for coded aperture imaging. Applied Optics, 28(20), 4344–4352.

Goy, J., Courtois, B., Karam, J., & Pressecq, F. (2001). Design of an APS CMOS image sensor for low light level applications using standard CMOS technology. Analog Integrated Circuits and Signal Processing, 29(1), 95–104.

Guenter, B., Joshi, N., Stoakley, R., Keefe, A., Geary, K., Freeman, R., Hundley, J., Patterson, P., Hammon, D., Herrera, G., et al. (2017). Highly curved image sensors: A practical approach for improved optical performance. Optics Express, 25(12), 13010–13023.

Hamanaka, K., & Koshi, H. (1996). An artificial compound eye using a microlens array and its application to scale-invariant processing. Optical Review, 3(4), 264–268.

Held, R. T., Cooper, E. A., O’Brien, J. F., & Banks, M. S. (2010). Using blur to affect perceived distance and size. ACM Transactions on Graphics, 29, 3.

Herbert, G. (1960). Apparatus for orienting photographic images. US Patent 2,931,268.

Hicks, R. A., & Perline, R. K. (2001). Geometric distributions for catadioptric sensor design. In Proceedings of the IEEE computer society conference on computer vision and pattern recognition (CVPR) (Vol. 1).

Hornbeck, L. J. (1983). 128 \(\times \) 128 deformable mirror device. IEEE Transactions on Electron Devices, 30(5), 539–545.

Hsu, C. H., Cheng, W. H., Wu, Y. L., Huang, W. S., Mei, T., & Hua, K. L. (2017). Crossbowcam: A handheld adjustable multi-camera system. Multimedia Tools and Applications, 76(23), 24961–24981.

Hua, Y., Nakamura, S., Asif, S., & Sankaranarayanan, A. (2020). Sweepcam: Depth-aware lensless imaging using programmable masks. IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI).

Huang, G., Jiang, H., Matthews, K., & Wilford, P. (2013). Lensless imaging by compressive sensing. In 2013 IEEE international conference on image processing (pp. 2101–2105). IEEE.

Huisman, R., Bruijn, M., Damerio, S., Eggens, M., Kazmi, S., Schmerbauch, A., Smit, H., Vasquez-Beltran, M., van der Veer, E., Acuautla, M. (2020). High pixel number deformable mirror concept utilizing piezoelectric hysteresis for stable shape configurations. arXiv preprint arXiv:2008.09338

Ihrke, I., Restrepo, J., & Mignard-Debise, L. (2016). Principles of light field imaging: Briefly revisiting 25 years of research. IEEE Signal Processing Magazine, 33(5), 59–69.

Indebetouw, G., & Bai, H. (1984). Imaging with fresnel zone pupil masks: Extended depth of field. Applied Optics, 23(23), 4299–4302.

Ishihara, K. (2015). Imaging apparatus having a curved image surface. US Patent 9,104,018.

Jeon, H. G., Park, J., Choe, G., Park, J., Bok, Y., Tai, Y. W., & So Kweon, I. (2015). Accurate depth map estimation from a lenslet light field camera. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 1547–1555).

Joo, H., Liu, H., Tan, L., Gui, L., Nabbe, B., Matthews, I., Kanade, T., Nobuhara, S., & Sheikh, Y. (2015). Panoptic studio: A massively multiview system for social motion capture. In Proceedings of the IEEE international conference on computer vision (pp. 3334–3342).

Jung, G. S., & Won, Y. H. (2020). Simple and fast field curvature measurement by depth from defocus using electrowetting liquid lens. Applied Optics, 59(18), 5527–5531.

Jung, I., Xiao, J., Malyarchuk, V., Lu, C., Li, M., Liu, Z., Yoon, J., Huang, Y., & Rogers, J. A. (2011). Dynamically tunable hemispherical electronic eye camera system with adjustable zoom capability. Proceedings of the National Academy of Sciences, 108(5), 1788–1793.

Kahn, S., Kurita, N., Gilmore, K., Nordby, M., O’Connor, P., Schindler, R., Oliver, J., Van Berg, R., Olivier, S., Riot, V. (2010). Design and development of the 3.2 gigapixel camera for the large synoptic survey telescope. In Ground-based and airborne instrumentation for astronomy III (Vol. 7735). International Society for Optics and Photonics.

Kang, S., Duocastella, M., & Arnold, C. B. (2020). Variable optical elements for fast focus control. Nature Photonics, 14(9), 533–542.

Kim, D. H., Kim, Y. S., Wu, J., Liu, Z., Song, J., Kim, H. S., Huang, Y. Y., Hwang, K. C., & Rogers, J. A. (2009). Ultrathin silicon circuits with strain-isolation layers and mesh layouts for high-performance electronics on fabric, vinyl, leather, and paper. Advanced Materials, 21(36), 3703–3707.

Kim, J. J., Liu, H., Ashtiani, A. O., & Jiang, H. (2020). Biologically inspired artificial eyes and photonics. Reports on Progress in Physics, 83(4), 047101.

Kim, W. Y., Seo, H. T., Kim, S., & Kim, K. S. (2019). Practical approach for controlling optical image stabilization system. International Journal of Control, Automation and Systems, 8, 1–10.

Kingslake, R. (1992). Optics in photography. SPIE Press.

Ko, H. C., Stoykovich, M. P., Song, J., Malyarchuk, V., Choi, W. M., Yu, C. J., Geddes Iii, J. B., Xiao, J., Wang, S., Huang, Y., et al. (2008). A hemispherical electronic eye camera based on compressible silicon optoelectronics. Nature, 454(7205), 748–753.

Koppelhuber, A., & Bimber, O. (2013). Towards a transparent, flexible, scalable and disposable image sensor using thin-film luminescent concentrators. Optics Express, 21(4), 4796–4810.

Koppelhuber, A., & Bimber, O. (2017). Thin-film camera using luminescent concentrators and an optical Söller collimator. Optics Express, 25(16), 18526–18536.

Korneliussen, J. T., & Hirakawa, K. (2014). Camera processing with chromatic aberration. IEEE Transactions on Image Processing, 23(10), 4539–4552.

Krishnan, G., & Nayar, S. K. (2008). Cata-fisheye camera for panoramic imaging. In IEEE workshop on applications of computer vision (pp. 1–8).

Krishnan, G., & Nayar, S. K. (2009). Towards a true spherical camera. In Human vision and electronic imaging XIV (Vol. 7240). International Society for Optics and Photonics.

Kurmi, I., Schedl, D. C., & Bimber, O. (2018). Micro-lens aperture array for enhanced thin-film imaging using luminescent concentrators. Optics Express, 26(22), 29253–29261.

Kuthirummal, S., & Nayar, S. K. (2006). Multiview radial catadioptric imaging for scene capture. In ACM SIGGRAPH 2006 papers (SIGGRAPH ’06, pp. 916–923). Association for Computing Machinery. https://doi.org/10.1145/1179352.1141975

Kuthirummal, S., & Nayar, S. K. (2007). Flexible mirror imaging. In IEEE 11th international conference on computer vision (pp. 1–8).

Kuthirummal, S., Nagahara, H., Zhou, C., & Nayar, S. K. (2010). Flexible depth of field photography. IEEE Transactions on Pattern Analysis and Machine Intelligence, 33(1), 58–71.

Langford, M. (2000). Basic photography. Taylor & Francis.

Layerle, J. F., Savatier, X., Mouaddib, E., & Ertaud, J. Y. (2008). Catadioptric sensor for a simultaneous tracking of the driver’s face and the road scene. In OMNIVIS’2008, the eighth workshop on omnidirectional vision, camera networks and non-classical cameras, in conjunction with ECCV 2008.

Lee, G. J., Nam, W. I., & Song, Y. M. (2017). Robustness of an artificially tailored fisheye imaging system with a curvilinear image surface. Optics & Laser Technology, 96, 50–57.

Lee, J., Wu, J., Shi, M., Yoon, J., Park, S. I., Li, M., Liu, Z., Huang, Y., & Rogers, J. A. (2011). Stretchable GaAs photovoltaics with designs that enable high areal coverage. Advanced Materials, 23(8), 986–991.

Lee, J. H., You, B. G., Park, S. W., & Kim, H. (2020). Motion-free TSOM using a deformable mirror. Optics Express, 28(11), 16352–16362.

Lenk, L., Mitschunas, B., & Sinzinger, S. (2019). Zoom systems with tuneable lenses and linear lens movements. Journal of the European Optical Society-Rapid Publications, 15(1), 1–10.

Levoy, M. (2006). Light fields and computational imaging. Computer, 39(8), 46–55.

Li, L., Wang, D., Liu, C., & Wang, Q. H. (2016). Zoom microscope objective using electrowetting lenses. Optics Express, 24(3), 2931–2940.

Li, L., Hao, Y., Xu, J., Liu, F., & Lu, J. (2018). The design and positioning method of a flexible zoom artificial compound eye. Micromachines, 9, 7.

Liang, C. K., Lin, T. H., Wong, B. Y., Liu, C., & Chen, H. H. (2008). Programmable aperture photography: Multiplexed light field acquisition. In ACM SIGGRAPH 2008 papers (SIGGRAPH ’08). Association for Computing Machinery. https://doi.org/10.1145/1399504.1360654

Liang, J. (2020). Punching holes in light: Recent progress in single-shot coded-aperture optical imaging. Reports on Progress in Physics, 83, 11.

Lin, Y. H., Chen, M. S., & Lin, H. C. (2011). An electrically tunable optical zoom system using two composite liquid crystal lenses with a large zoom ratio. Optics Express, 19(5), 4714–4721.

Lin, Y. H., Liu, Y. L., & Su, G. D. J. (2012). Optical zoom module based on two deformable mirrors for mobile device applications. Applied Optics, 51(11), 1804–1810.

Lipton, L., & Meyer, L. D. (1992). Stereoscopic video camera with image sensors having variable effective position. US Patent 5,142,357.

Madec, P. Y. (2012). Overview of deformable mirror technologies for adaptive optics and astronomy. In Adaptive optics systems III (Vol. 8447). International Society for Optics and Photonics.

Maître, H. (2017). From photon to pixel: The digital camera handbook. Wiley.

Marchand, E., & Chaumette, F. (2017). Visual servoing through mirror reflection. In 2017 IEEE international conference on robotics and automation (ICRA) (pp. 3798–3804). IEEE.

Martin, C. B. (2004). Design issues of a hyperfield fisheye lens. In Novel optical systems design and optimization VII (Vol. 5524, pp. 84–92). International Society for Optics and Photonics.

McLeod, B., Geary, J., Conroy, M., Fabricant, D., Ordway, M., Szentgyorgyi, A., Amato, S., Ashby, M., Caldwell, N., Curley, D., et al. (2015). Megacam: A wide-field CCD imager for the MMT and Magellan. Publications of the Astronomical Society of the Pacific, 127(950), 366.

Merklinger, H. M. (1996). Focusing the view camera (p. 5). Seaboard Printing Limited.

Moon, S., Keles, H. O., Ozcan, A., Khademhosseini, A., Hæggstrom, E., Kuritzkes, D., & Demirci, U. (2009). Integrating microfluidics and lensless imaging for point-of-care testing. Biosensors and Bioelectronics, 24(11), 3208–3214.

Motamedi, M. E. (2005). MOEMS: Micro-opto-electro-mechanical systems (Vol. 126). SPIE Press.

Mouaddib, E. M., Sagawa, R., Echigo, T., & Yagi, Y. (2005). Stereovision with a single camera and multiple mirrors. In Proceedings of the IEEE international conference on robotics and automation (pp. 800–805).

Mutze, U. (2000). Electronic camera for the realization of the imaging properties of a studio bellow camera. US Patent 6,072,529.

Nagahara, H., Zhou, C., Watanabe, T., Ishiguro, H., & Nayar, S. K. (2010). Programmable aperture camera using LCoS. In European conference on computer vision (pp. 337–350). Springer.

Nakamura, T., Horisaki, R., & Tanida, J. (2015). Compact wide-field-of-view imager with a designed disordered medium. Optical Review, 22(1), 19–24.

Nakamura, T., Kagawa, K., Torashima, S., & Yamaguchi, M. (2019). Super field-of-view lensless camera by coded image sensors. Sensors, 19, 6.

Nanjo, Y., & Sueyoshi, M. (2011). Tilt lens system and image pickup apparatus. US Patent 7,880,797.

Nayar, S. K. (1997). Catadioptric omnidirectional camera. In Proceedings of the IEEE computer society conference on computer vision and pattern recognition (CVPR) (pp. 482–488).

Nayar, S. K., Branzoi, V., & Boult, T. E. (2006). Programmable imaging: Towards a flexible camera. International Journal of Computer Vision, 70(1), 7–22.

Newman, P. A., & Rible, V. E. (1966). Pinhole array camera for integrated circuits. Applied Optics, 5(7), 1225–1228.

Ng, R., Levoy, M., Brédif, M., Duval, G., Horowitz, M., & Hanrahan, P. (2005). Light field photography with a hand-held plenoptic camera. PhD thesis, Stanford University.

Ng, T. N., Wong, W. S., Chabinyc, M. L., Sambandan, S., & Street, R. A. (2008). Flexible image sensor array with bulk heterojunction organic photodiode. Applied Physics Letters, 92(21), 191.

Nomura, Y., Zhang, L., & Nayar, S. K. (2007). Scene collages and flexible camera arrays. In Proceedings of the 18th eurographics conference on rendering techniques (pp. 127–138).

Okumura, M. (2017). Panoramic-imaging digital camera, and panoramic imaging system. US Patent 9,756,244.

Olagoke, A. S., Ibrahim, H., & Teoh, S. S. (2020). Literature survey on multi-camera system and its application. IEEE Access, 8, 172892–172922.

Papadopoulos, I. N., Farahi, S., Moser, C., & Psaltis, D. (2013). High-resolution, lensless endoscope based on digital scanning through a multimode optical fiber. Biomedical Optics Express, 4(2), 260–270.

Phillips, S. J., Kelley, D. L., & Prassas, S. G. (1984). Accuracy of a perspective control lens. Research Quarterly for Exercise and Sport, 55(2), 197–200.

Poling, B. (2015). A tutorial on camera models (pp. 1–10). University of Minnesota.

Potmesil, M., & Chakravarty, I. (1982). Synthetic image generation with a lens and aperture camera model. ACM Transactions on Graphics (TOG), 1(2), 85–108.

Poulsen, A. (2011). Tilt and shift adaptor, camera and image correction method. US Patent App. 13/000,591.

Qaisar, S., Bilal, R. M., Iqbal, W., Naureen, M., & Lee, S. (2013). Compressive sensing: From theory to applications, a survey. Journal of Communications and Networks, 15(5), 443–456.

Qian, K., & Miura, K. (2019). The roles of color lightness and saturation in inducing the perception of miniature faking. In 2019 11th International conference on knowledge and smart technology (KST) (pp. 194–198). IEEE.

Ritcey, A. M., & Borra, E. (2010). Magnetically deformable liquid mirrors from surface films of silver nanoparticles. ChemPhysChem, 11(5), 981–986.

Roberts, G. (1995). A real-time response of vigilance behaviour to changes in group size. Animal Behaviour, 50(5), 1371–1374. https://doi.org/10.1016/0003-3472(95)80052-2

Roddier, F. (1999). Adaptive optics in astronomy. Cambridge University Press.

Sadlo, F., & Dachsbacher, C. (2011). Auto-tilt photography. In P. Eisert, J. Hornegger, & K. Polthier (Eds.), Vision, modeling, and visualization. The Eurographics Association. https://doi.org/10.2312/PE/VMV/VMV11/239-246

Sahin, F. E., & Laroia, R. (2017). Light L16 computational camera. In Applied industrial optics: Spectroscopy, imaging and metrology (pp. JTu5A–20). Optical Society of America.

Saito, H., Hoshino, K., Matsumoto, K., & Shimoyama, I. (2005). Compound eye shaped flexible organic image sensor with a tunable visual field. In 18th IEEE international conference on micro electro mechanical systems, MEMS 2005 (pp. 96–99). IEEE.

Sako, S., Osawa, R., Takahashi, H., Kikuchi, Y., Doi, M., Kobayashi, N., Aoki, T., Arimatsu, K., Ichiki, M., Ikeda, S. (2016). Development of a prototype of the Tomo-e Gozen wide-field CMOS camera. In Ground-based and airborne instrumentation for astronomy VI (Vol. 9908). International Society for Optics and Photonics.

Scheimpflug, T. (1904). Improved method and apparatus for the systematic alteration or distortion of plane pictures and images by means of lenses and mirrors for photography and for other purposes. GB patent 1196.

Scholz, E., Bittner, W. A. A., & Caldwell, J. B. (2014). Tilt shift lens adapter. US Patent 8,678,676.

Schulz, A. (2015). Architectural photography: Composition, capture, and digital image processing. Rocky Nook Inc.

Schwarz, A., Wang, J., Shemer, A., Zalevsky, Z., & Javidi, B. (2015). Lensless three-dimensional integral imaging using variable and time multiplexed pinhole array. Optics Letters, 40(8), 1814–1817.

Schwarz, A., Wang, J., Shemer, A., Zalevsky, Z., & Javidi, B. (2016). Time multiplexed pinhole array based lensless three-dimensional imager. In Three-dimensional imaging, visualization, and display 2016 (Vol. 9867, p. 98670R). International Society for Optics and Photonics.

Sims, D. C., Yue, Y., & Nayar, S. K. (2016). Towards flexible sheet cameras: Deformable lens arrays with intrinsic optical adaptation. In IEEE international conference on computational photography (ICCP) (pp. 1–11).

Sims, D. C., Cossairt, O., Yu, Y., & Nayar, S. K. (2018). Stretchcam: Zooming using thin, elastic optics. arXiv preprint arXiv:1804.07052

Someya, T., Kato, Y., Iba, S., Noguchi, Y., Sekitani, T., Kawaguchi, H., & Sakurai, T. (2005). Integration of organic fets with organic photodiodes for a large area, flexible, and lightweight sheet image scanners. IEEE Transactions on Electron Devices, 52(11), 2502–2511.

Stroebel, L. (1999). View camera technique. CRC Press.

Strom, S. E., Stepp, L. M., & Gregory, B. (2003). Giant segmented mirror telescope: A point design based on science drivers. In Future giant telescopes (Vol. 4840, pp. 116–128). International Society for Optics and Photonics.

Tan, K. H., Hua, H., & Ahuja, N. (2004). Multiview panoramic cameras using mirror pyramids. IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), 26(7), 941–946.

Tilmon, B., Jain, E., Ferrari, S., & Koppal, S. (2020). FoveaCam: A MEMS mirror-enabled foveating camera. In IEEE international conference on computational photography (ICCP) (pp. 1–11).

Tyson, R. K. (2015). Principles of adaptive optics. CRC Press.

Wang, D., Pan, Q., Zhao, C., Hu, J., Xu, Z., Yang, F., & Zhou, Y. (2017). A study on camera array and its applications. IFAC-PapersOnLine, 50(1), 10323–10328.

Wang, X., Ji, J., & Zhu, Y. (2021). A zoom tracking algorithm based on deep learning. Multimedia Tools and Applications, 7, 1–19.

Wilburn, B., Joshi, N., Vaish, V., Talvala, E. V., Antunez, E., Barth, A., Adams, A., Horowitz, M., & Levoy, M. (2005). High performance imaging using large camera arrays. In ACM SIGGRAPH 2005 papers (SIGGRAPH ’05, pp. 765–776). Association for Computing Machinery. https://doi.org/10.1145/1186822.1073259

Wu, S., Jiang, T., Zhang, G., Schoenemann, B., Neri, F., Zhu, M., Bu, C., Han, J., & Kuhnert, K. D. (2017). Artificial compound eye: A survey of the state-of-the-art. Artificial Intelligence Review, 48(4), 573–603.

Xie, S., Wang, P., Sang, X., & Li, C. (2016). Augmented reality three-dimensional display with light field fusion. Optics Express, 24(11), 11483–11494.

Young, M. (1971). Pinhole optics. Applied Optics, 10(12), 2763–2767.

Yu, G., Wang, J., McElvain, J., & Heeger, A. J. (1998). Large-area, full-color image sensors made with semiconducting polymers. Advanced Materials, 10(17), 1431–1434.

Zamkotsian, F., & Dohlen, K. (2002). Prospects for MOEMS-based adaptive optical systems on extremely large telescopes. In European southern observatory conference and workshop proceedings (Vol. 58, p. 293).

Zhang, C., & Chen, T. (2004). A self-reconfigurable camera array. In Proceedings of the fifteenth eurographics conference on rendering techniques (pp. 243–254).

Zhang, K., Jung, Y. H., Mikael, S., Seo, J. H., Kim, M., Mi, H., Zhou, H., Xia, Z., Zhou, W., Gong, S., et al. (2017). Origami silicon optoelectronics for hemispherical electronic eye systems. Nature Communications, 8(1), 1–8.

Zhao, H., Fan, X., Zou, G., Pang, Z., Wang, W., Ren, G., Du, Y., & Su, Y. (2013). All-reflective optical bifocal zooming system without moving elements based on deformable mirror for space camera application. Applied Optics, 52(6), 1192–1210.

Zhao, J., Zhang, Y., Li, X., & Shi, M. (2019). An improved design of the substrate of stretchable gallium arsenide photovoltaics. Journal of Applied Mechanics, 86(3), 031009.

Zheng, Y., Lin, S., Kambhamettu, C., Yu, J., & Kang, S. B. (2008). Single-image vignetting correction. IEEE Transactions on Pattern Analysis and Machine Intelligence, 31(12), 2243–2256.

Zhou, C., & Nayar, S. (2009). What are good apertures for defocus deblurring? In IEEE international conference on computational photography (ICCP) (pp. 1–8).

Zhou, C., & Nayar, S. K. (2011). Computational cameras: Convergence of optics and processing. IEEE Transactions on Image Processing, 20(12), 3322–3340.

Zhou, C., Lin, S., & Nayar, S. (2009). Coded aperture pairs for depth from defocus. In IEEE 12th international conference on computer vision (ICCV) (pp. 325–332).

Zomet, A., & Nayar, S. K. (2006). Lensless imaging with a controllable aperture. In IEEE computer society conference on computer vision and pattern recognition (CVPR) (Vol. 1, pp. 339–346). IEEE.

Zou, T., Tang, X., Song, B., Wang, J., & Chen, J. (2012). Robust feedback zoom tracking for digital video surveillance. Sensors, 12(6), 8073–8099.

ALPAO®. (2023). Deformable mirrors. https://www.alpao.com/adaptive-optics/deformable-mirrors.html

Asphericon®. (2023). Parabolic mirror. https://www.asphericon.com/en/products/parabolic-mirror?gclid=CjwKCAiA2O39BRBjEiwApB2Iks5rpILOEag23Q_Qd5UR2ENSYIrzqCO3xrHG-w_b7dQeAxyqg0s0RBoCrecQAvD_BwE

Boston Micromachines®. (2023). Standard deformable mirrors. https://bostonmicromachines.com/standard-deformable-mirrors/

Canon®. (2021). EF-S 10-18mm f/4.5-5.6 IS STM. https://www.usa.canon.com/internet/portal/us/home/products/details/lenses/ef/ultra-wide-zoom/ef-s-10-18mm-f-4-5-5-6-is-stm

Canon®. (2022). Tilt-shift lenses. https://www.canon-europe.com/lenses/tilt-and-shift-lenses/

Canon®. (2023). RF24-105mm F4-7.1 IS STM. https://www.usa.canon.com/internet/portal/us/home/products/details/lenses/ef/standard-zoom/rf24-105mm-f4-7-1-is-stm

Cilas®. (2023). Cilas, expert in laser and optronics. https://www.cilas.com/

Carnegie Mellon University. (2009). Virtualizing engine. http://www.cs.cmu.edu/~VirtualizedR/page_Data.html

Carnegie Mellon University. (2015). The panoptic studio: A massively multiview system for social motion capture. In ICCV. https://www.cs.cmu.edu/~hanbyulj/panoptic-studio/

Dxomark®. (2021). All camera tests. https://www.dxomark.com/category/mobile-reviews/#tabs

Dyson®. (2020). Dyson 360 Heurist™ robot vacuum. https://www.dyson.co.uk/vacuum-cleaners/robot-vacuums/dyson-360-heurist/dyson-360-heurist-overview

Edmund Optics®. (2021). Precision parabolic mirror. https://www.edmundoptics.com/f/precision-parabolic-mirrors/11895/

European Southern Observatory (ESO). (2018). The European extremely large telescope (“ELT”) project. http://www.eso.org/sci/facilities/eelt/

Edmund Optics®. (2021). Cone mirrors. https://www.edmundoptics.com.au/f/cone-mirrors/11556/

Entaniya®. (2021). Industrial fisheye lens M12 280. https://products.entaniya.co.jp/en/products/entaniya-fisheye-m12-280/

GoPro®. (2015). Happy sweet sixteen: Introducing GoPro’s 360\(^{\circ }\) camera array. https://gopro.com/en/us/news/happy-sweet-sixteen-introducing-gopros-360-camera-array

Hitachi®. (2017). Lensless camera—Innovative camera supporting the evolution of IoT. https://www.hitachi.com/rd/sc/story/lensless/index.html

Imagine Eyes®. (2021). rtx1 adaptive optics retinal camera. https://www.imagine-eyes.com/products/rtx1/

Immersive Media®. (2010). Dodeca® 2360 camera system. http://www.360worlds.gr/portal/sites/default/files/IMC_Dodeca_2360.pdf

IrisAO®. (2002). DOWN. http://www.irisao.com/products.html

Insta 360®. (2021). Insta 360 Pro 2. https://www.insta360.com/product/insta360-pro2

Insta 360®. (2021). Autori’s road maintenance software integrates Insta360 Pro 2 & Street View. https://blog.insta360.com/autoris-road-maintenance-software-integrates-insta360-pro-2-street-view/

Lightfield Forum®. (2023). Lytro LightField camera. http://lightfield-forum.com/lytro/lytro-lightfield-camera/

Light®. (2019). Multi view depth perception. https://light.co/technology

Light®. (2019). The technology behind the L16 camera Pt. 1. https://support.light.co/l16-photography/l16-tech-part-1

Lawrence Livermore National Laboratory. (2019). World’s largest optical lens shipped to SLAC. https://www.llnl.gov/news/world%E2%80%99s-largest-optical-lens-shipped-slac

Microgate®. (2011). Adaptive deformable mirrors. https://engineering.microgate.it/en/adaptive-deformable-mirrors/concept

NASA. (2023). James Webb space telescope. https://www.jwst.nasa.gov/

Nikon®. (2019). Nikon releases the NIKKOR Z 58mm f/0.95 S. https://www.nikon.com/news/2019/1010_lens_02.htm

Nikon®. (2022). Coolpix P1000. https://www.nikonusa.com/en/nikon-products/product/compact-digital-cameras/coolpix-p1000.html

Nikkor®. (2023). AF-S Fisheye NIKKOR 8-15mm f/3.5-4.5E ED. https://www.nikon.co.in/af-s-fisheye-nikkor-8-15mm-f-3-5-4-5e-ed

Nikkor®. (2022). PC Nikkor 35mm f/2.8. http://cdn-10.nikon-cdn.com/pdf/manuals/archive/PC-Nikkor%2035%20mm%20f-2.8.pdf

Nikon®. (2023). PC Nikkor 19mm f/4E ED. https://www.nikonusa.com/en/nikon-products/product/camera-lenses/pc-nikkor-19mm-f%252f4e-ed.html

Nikon®. (2020). The PC lens advantage: What you see is what you’ll get. https://www.nikonusa.com/en/learn-and-explore/a/tips-and-techniques/the-pc-lens-advantage-what-you-see-is-what-youll-get.html

Nikon®. (2020). AF-S NIKKOR 200mm f/2G ED VR II. https://www.nikonusa.com/en/nikon-products/product/camera-lenses/af-s-nikkor-200mm-f%252f2g-ed-vr-ii.html#tab-ProductDetail-ProductTabs-TechSpecs

Panasonic®. (2023). LUMIX compact system (Mirrorless) camera DC-GX9 body only. https://www.panasonic.com/uk/consumer/cameras-camcorders/lumix-mirrorless-cameras/lumix-g-cameras/dc-gx9.specs.html

Panasonic®. (2022). G series 14-42mm F3.5-5.6 ASPH X Vario lens. https://shop.panasonic.com/products/g-series-14-42mm-f3-5-5-6-asph-x-vario-lens

Raytrix®. (2021). 3D light-field cameras. https://raytrix.de/products/

Ricoh®. (2019). RICOH THETA lineup comparison. https://theta360.com/en/about/theta/

Samsung®. (2023). WB2200F 16MP digital camera with 60x optical zoom-black. https://www.samsung.com/uk/support/model/EC-WB2200BPBGB/

Sony®. (2023). FE C 16-35 MM T3.1: full specifications and features. https://pro.sony/ue_US/products/camera-lenses/selc1635g#ProductSpecificationsBlock-selc1635g

Stanford. (2020). Sensors of world’s largest digital camera snap first 3,200-megapixel images at SLAC. https://www6.slac.stanford.edu/news/2020-09-08-sensors-world-largest-digital-camera-snap-first-3200-megapixel-images-slac.aspx

TekVoka®. (2020). HD/1080p auto-tracking camera-10x zoom. https://www.tekvox.com/product/79068-auto10/

Texas Instruments®. (2021). TI DLP® technology. https://www.ti.com/dlp-chip/overview.html

Texas Instruments®. (2018). Introduction to \(\pm 12\) degree orthogonal digital micromirror devices (DMDs). https://www.ti.com/lit/an/dlpa008b/dlpa008b.pdf?ts

Vstone®. (2010). Omni directional sensor. https://www.vstone.co.jp/english/products/sensor_camera/

W. M. Keck Observatory. (2023). Keck I and Keck II telescopes. https://keckobservatory.org/about/telescopes-instrumentation/

Zeiss®. (2021). Making sense of sensors—Full frame versus APS-C. https://lenspire.zeiss.com/photo/en/article/making-sense-of-sensors-full-frame-vs-aps-c

Funding

Funding is provided by Amiens Métropole (FR) and Ministère de l’Enseignement Supérieur et de la Recherche.

Additional information

Communicated by Seon Joo Kim.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Ducrocq, J., Caron, G. A Survey on Adaptive Cameras. Int J Comput Vis (2024). https://doi.org/10.1007/s11263-024-02025-7

DOI: https://doi.org/10.1007/s11263-024-02025-7