Abstract

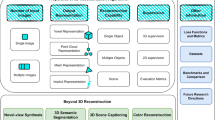

Single image based three-dimensional (3D) scene reconstruction has become an important research topic in computer vision and computer graphics, with the aim of giving machine vision systems near-human visual perception. Previous approaches to 3D scene reconstruction and depth estimation from single images relied on many cues, including motion parallax, stereoscopic parallax, and various monocular depth cues derived from known geometric priors. Deep learning based techniques have advanced single-image depth estimation by aggregating information at various levels of complexity from RGB-depth training datasets. This paper proposes an effective 3D scene estimation methodology that automatically extracts the vanishing point and semantic information, including 3D geometric characteristics, without prior assumptions. The vanishing point is extracted from line segments, with minimum spanning tree clustering used to remove spurious noisy edges. Geometric and semantic information is extracted from a given image by a generative adversarial network trained on the created training set. We verified the efficiency and effectiveness of the proposed approach experimentally on a large database created by directly recovering 3D scenes from an input image.

Acknowledgements

G.-J. Yoon is supported by the National Institute for Mathematical Sciences grant funded by the Korean government (No. NIMS-B21810000). J. Song is supported by the National Research Foundation of Korea (No. 2021R1F1A1059202). S.M. Yoon is supported by the Institute of Information communications Technology Planning and Evaluation (IITP) (No. 2020-0-00457) and by the National Research Foundation of Korea (No. NRF-2021R1A2C1008555).

Appendix: Geometric Quantities and Image Transformations

In this appendix, we derive several geometric quantities, including a representation of the vanishing point and several transformations determined by the camera settings. As seen in Fig. 4, we take a 2D image from an optical center \(C_h(0,c_h, 0)\) with angle \(\theta \), focusing at \(F_c(0,b,c)\) in 3D space. We identify the focusing point \(F_c\) with the origin \(O_I\) of the image plane \(I_{2D}\), which carries an orthogonal coordinate system. Since \(\overrightarrow{C_hF_c}=(0,b-c_h,c)\), the normal vector \(\mathbf {n}\) to the image plane \(I_{2D}\) lying in 3D space is given as
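For reference, one form of \(\mathbf {n}\) that is consistent with the definitions above is sketched here. The unit normalization is an assumption on our part; the plane equation \(\mathbf {n}\cdot (\mathbf{x}-F_c)=0\) is unaffected by the scale of \(\mathbf {n}\), so an unnormalized direction would serve equally well.

\[
\mathbf {n}=(n_1,n_2,n_3)=\frac{\overrightarrow{C_hF_c}}{\Vert \overrightarrow{C_hF_c}\Vert }=\frac{(0,\,b-c_h,\,c)}{\sqrt{(b-c_h)^2+c^2}}.
\]

In particular \(n_1=0\), which reflects the fact that the camera is tilted only about the x-axis.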

1.1 Geometric Quantities and Vanishing Point Representation

Now, we calculate the 3D coordinates of several points related to the 2D image obtained from the projection. Given the vanishing point \((0, v_h)\) in the image, we can calculate the camera focusing angle \(\theta \) using the focal and focusing points as

From the projection given in Fig. 8, we obtain the angle relation

and

Applying the relations to the trigonometric values, we can obtain the z coordinate of the vanishing point \(v=(0,c_h, v_3)\) in 3D space as
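As a hedged sketch of what these quantities look like, the following is inferred from the definitions in this appendix and should not be read as the original displayed equations. It assumes \(c>0\), uses the shorthand \(D=\Vert \overrightarrow{C_hF_c}\Vert \) (our notation), requires \(\mathbf {e}_2=\cos \theta \,\mathbf {e}_y+\sin \theta \,\mathbf {e}_z\) to be orthogonal to \(\overrightarrow{C_hF_c}\), and assumes that the vanishing point lies on the plane \(I_{3D}\) at camera height \(y=c_h\).

\[
D=\sqrt{(b-c_h)^2+c^2},\qquad \sin \theta =\frac{c_h-b}{D},\qquad \cos \theta =\frac{c}{D},
\]

\[
(b-c_h)(c_h-b)+c\,(v_3-c)=0\ \Longrightarrow \ v_3=c+\frac{(c_h-b)^2}{c},\qquad v_h=(c_h-b)\cos \theta +(v_3-c)\sin \theta =D\tan \theta .
\]

For example, with \(c_h=3\), \(b=0\), \(c=4\) these give \(D=5\), \(\sin \theta =0.6\), \(\cos \theta =0.8\), \(v_3=6.25\) and \(v_h=3.75\).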

1.2 3D Representation of Points in the Two-Dimensional Image Plane \(I_{2D}\)

In Sect. 2.4, we proposed a depth estimation method for the 2D image \(I_{2D}\) using the vanishing point. For the depth estimation, it is convenient to have a transform that maps the 2D image onto the plane in 3D that contains it. The plane \(I_{3D}\) containing the image is given as \(I_{3D}=\{\mathbf{x}\in \mathbb {R}^3: \mathbf {n}\cdot (\mathbf{x}-F_c)=n_1 x +n_2(y-b)+n_3(z-c)=0\}\) with normal vector \(\mathbf {n}\) in (1). Although the proof given for the projection into the 2D image plane already hints at this transform, we now state it in concrete form.

Let Q(u, v) be a point in \(I_{2D}.\) From the orthogonal coordinate system given to \(I_{2D},\) this means \(\overrightarrow{O_IQ}=u\mathbf {e}_1+ v\mathbf {e}_2.\)

In 3D, the point Q is represented in vector sum from Fig. 9 as

On the other hand, we have found the relations between \(\{\mathbf {e}_1, \mathbf {e}_2\}\) and \(\{\mathbf {e}_x, \mathbf {e}_y, \mathbf {e}_z\}\) in (11) as \( \mathbf {e}_1=\mathbf {e}_x\quad \text{ and }\quad \mathbf {e}_2=\cos \theta \mathbf {e}_y+\sin \theta \mathbf {e}_z. \) Applying these relations to (9) and using the fact \( x=\overrightarrow{OQ}\cdot \mathbf {e}_x,\quad y=\overrightarrow{OQ}\cdot \mathbf {e}_y, \quad z=\overrightarrow{OQ}\cdot \mathbf {e}_z, \) we find the 3D coordinate (x, y, z) for the point Q as

Here \(\cos \theta \) and \(\sin \theta \) are given in (7) and (6), respectively. Thus, the transform \(T_{I_{2D}\rightarrow I_{3D}}: I_{2D}\rightarrow I_{3D}\) is found to be

Conversely, suppose we are given the 3D coordinates (x, y, z) of the point Q. From Fig. 9, the vector sum in (9) gives the relation

Applying the relations (10) and the orthogonality gives the inverse transform \(T_{I_{3D}\rightarrow I_{2D}}: I_{3D}\rightarrow I_{2D}\) as

We note that it is not difficult to show that

and

by using the fact that \((x, y-b, z-c)\cdot \mathbf {n}=0\) for (x, y, z) in \(I_{3D}\) with the normal vector \(\mathbf {n}\) given in (4).
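The pair of transforms in this subsection can be sketched numerically as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`tilt`, `image_to_plane`, `plane_to_image`) are hypothetical, and the closed forms for \(\cos \theta \) and \(\sin \theta \) are inferred from requiring \(\mathbf {e}_2\) to be orthogonal to \(\overrightarrow{C_hF_c}\) with \(c>0\). The forward map uses \(\overrightarrow{OQ}=\overrightarrow{OF_c}+u\mathbf {e}_1+v\mathbf {e}_2\), and the inverse projects \(\overrightarrow{F_cQ}\) onto \(\mathbf {e}_1\) and \(\mathbf {e}_2\).

```python
import math


def tilt(c_h, b, c):
    """Return (cos_theta, sin_theta).

    Assumption: inferred from e_2 = cos(theta) e_y + sin(theta) e_z being
    orthogonal to C_h F_c = (0, b - c_h, c), with c > 0.
    """
    d = math.hypot(b - c_h, c)  # distance from C_h to F_c
    return c / d, (c_h - b) / d


def image_to_plane(u, v, c_h, b, c):
    """Map image coordinates (u, v) to the 3D point F_c + u e_1 + v e_2."""
    cos_t, sin_t = tilt(c_h, b, c)
    return (u, b + v * cos_t, c + v * sin_t)


def plane_to_image(x, y, z, c_h, b, c):
    """Map a 3D point on the plane I_3D back to (u, v) by projecting
    F_c Q = (x, y - b, z - c) onto e_1 and e_2."""
    cos_t, sin_t = tilt(c_h, b, c)
    return (x, (y - b) * cos_t + (z - c) * sin_t)
```

With the sample camera \(c_h=3\), \(b=0\), \(c=4\), the image point \((2, 5)\) maps to a 3D point that satisfies the plane equation, and applying `plane_to_image` to that point recovers \((2, 5)\) up to rounding.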

1.3 Projection Onto a Two-Dimensional Image \(I_{2D}\)

The 3D plane \(I_{3D}\) corresponding to the image plane \(I_{2D}\) is the set of all points \(\mathbf{x}=(x,y,z)\) such that \( \mathbf {n}\cdot (\mathbf{x}-F_c)=n_1 x +n_2(y-b)+n_3(z-c)=0.\) In this last section, we find the projection of 3D points in \(\mathbb {R}^3\) into the image plane \(I_{2D}.\) Let P(x, y, z) be a 3D point in \(\{\mathbf{x}=(x,y,z): \mathbf {n}\cdot (\mathbf{x}-C_h)\ne 0\}\); we seek the coordinates (u, v) of the corresponding point Q in the image plane \(I_{2D}\). We find u and v using the relation

\[
\overrightarrow{O_IQ}=u\mathbf {e}_1+v\mathbf {e}_2. \tag{10}
\]

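To do this, we need to represent the two unit vectors \(\mathbf {e}_1\) and \(\mathbf {e}_2\) in terms of \(\mathbf {e}_x, \mathbf {e}_y, \mathbf {e}_z.\) Using the angle \(\theta ,\) we see that

\[
\mathbf {e}_1=\mathbf {e}_x\quad \text{ and }\quad \mathbf {e}_2=\cos \theta \,\mathbf {e}_y+\sin \theta \,\mathbf {e}_z. \tag{11}
\]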

On the other hand, the two vectors \(\overrightarrow{C_hQ}\) and \(\overrightarrow{C_hP}\) are parallel, so there exists a constant \(\alpha \) such that \(\overrightarrow{C_hQ}=\alpha \overrightarrow{C_hP}.\) Moreover, the point Q lies in the plane \(I_{3D}\), so it satisfies \(\mathbf {n}\cdot (\overrightarrow{OQ}-\overrightarrow{OF_c})=0.\) We also have \(\overrightarrow{OQ}=\overrightarrow{OC_h}+\alpha \overrightarrow{C_hP}\) and \(\overrightarrow{C_hP}=\overrightarrow{OP}-\overrightarrow{OC_h}=(x,y-c_h,z).\) Combining these relations, we get

Also, since \(O_I\) corresponds to \(F_c\), the vector \(\overrightarrow{O_IQ}\) is represented as the vector sum \( \overrightarrow{F_cQ}=\overrightarrow{C_hQ}+\overrightarrow{F_cC_h}=\alpha (x,y-c_h,z)+(0, c_h-b,-c) =(\alpha x, \alpha (y-c_h)+c_h-b, \alpha z-c). \) Applying this vector sum and (11) to (10), we obtain \( u=\alpha x\) and \(v=(\alpha (y-c_h)+c_h-b)\cos \theta +(\alpha z-c)\sin \theta .\) Finally, we obtain the projection mapping \(T_{{3D}\rightarrow I_{2D}}: \mathbb {R}^3\rightarrow I_{2D}\) as

\[
T_{{3D}\rightarrow I_{2D}}(x,y,z)=\bigl (\alpha x,\ (\alpha (y-c_h)+c_h-b)\cos \theta +(\alpha z-c)\sin \theta \bigr ),
\]

where the parameters \(\alpha \), \(\sin \theta ,\) and \(\cos \theta \) are given in (12), (6) and (7), respectively.
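The projection can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not the authors' code: the function name `project` is hypothetical, the expression for \(\alpha \) is obtained here by substituting \(\overrightarrow{OQ}=\overrightarrow{OC_h}+\alpha \overrightarrow{C_hP}\) into \(\mathbf {n}\cdot (\overrightarrow{OQ}-\overrightarrow{OF_c})=0\) with \(\mathbf {n}\) parallel to \((0,b-c_h,c)\), and \(\cos \theta \), \(\sin \theta \) are inferred from the orthogonality of \(\mathbf {e}_2\) and \(\mathbf {n}\) with \(c>0\).

```python
import math


def project(point, c_h, b, c):
    """Project a 3D point P onto the image plane; returns (u, v).

    Assumptions (not taken from the displayed equations):
      * n is parallel to C_h F_c = (0, b - c_h, c); its scale cancels in alpha.
      * cos(theta) = c / D and sin(theta) = (c_h - b) / D with D = |C_h F_c|.
    """
    x, y, z = point
    n2, n3 = b - c_h, c                    # direction of the plane normal
    denom = n2 * (y - c_h) + n3 * z        # n . (P - C_h)
    if denom == 0:
        raise ValueError("ray from C_h through P is parallel to the image plane")
    alpha = (n2 * n2 + n3 * n3) / denom    # n . (F_c - C_h) / n . (P - C_h)
    d = math.hypot(n2, n3)
    cos_t, sin_t = c / d, (c_h - b) / d
    u = alpha * x
    v = (alpha * (y - c_h) + c_h - b) * cos_t + (alpha * z - c) * sin_t
    return u, v
```

As a sanity check with \(c_h=3\), \(b=0\), \(c=4\): the focusing point \((0,0,4)\) maps to the image origin, and the points \((2,4,7)\) and \((4,5,14)\), which lie on the same ray from \(C_h\), map to the same image point.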

Cite this article

Yoon, GJ., Song, J., Hong, YJ. et al. Single Image Based Three-Dimensional Scene Reconstruction Using Semantic and Geometric Priors. Neural Process Lett 54, 3679–3694 (2022). https://doi.org/10.1007/s11063-022-10780-2

DOI: https://doi.org/10.1007/s11063-022-10780-2