Abstract

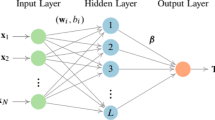

Extreme learning machine (ELM) is one of the most remarkable machine learning algorithms owing to its superior properties, particularly its speed. The ELM algorithm also has some drawbacks, such as instability and poor generalization performance in the presence of perturbation and multicollinearity. This paper introduces a novel algorithm based on the Liu regression estimator (L-ELM) to handle these drawbacks. Different selection approaches are used to determine an appropriate Liu biasing parameter. The new algorithm is compared with the basic ELM, RR-ELM, AUR-ELM and OP-ELM on nine well-known benchmark data sets, and statistical significance tests are carried out. Experimental results show that L-ELM, for at least one choice of the Liu biasing parameter, generally outperforms the basic ELM, RR-ELM, AUR-ELM and OP-ELM in terms of stability and generalization performance, at the cost of a small loss of speed. On the other hand, the training time of L-ELM is generally much longer than that of RR-ELM, AUR-ELM and OP-ELM. Consequently, the proposed algorithm can be considered a powerful alternative for avoiding loss of performance in regression studies.
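To make the idea concrete, here is a minimal sketch of an ELM whose output weights are computed with Liu's (1993) estimator instead of the plain Moore-Penrose solution. Writing \(H\) for the hidden-layer output matrix, \(T\) for the targets and \(\hat{\beta} = H^{+}T\) for the basic ELM weights, the sketch uses \(\hat{\beta}_d = (H^{\top}H + I)^{-1}(H^{\top}T + d\,\hat{\beta})\) with \(0 < d < 1\). In the limits, \(d = 1\) returns \(\hat{\beta}\) and \(d = 0\) gives ridge regression with \(k = 1\). The sigmoid activation, the uniform(-1, 1) random weights, the hypothetical helper names and the hold-out grid search for \(d\) are assumptions for illustration. They are not the paper's exact L-ELM formulation or its biasing-parameter selection approaches.

```python
import numpy as np


def random_hidden_layer(n_features, n_hidden, seed=0):
    """Draw ELM input weights and biases uniformly from (-1, 1) (assumed setup)."""
    rng = np.random.default_rng(seed)
    W = rng.uniform(-1.0, 1.0, size=(n_features, n_hidden))
    b = rng.uniform(-1.0, 1.0, size=n_hidden)
    return W, b


def hidden_output(X, W, b):
    """Hidden-layer output matrix H = g(XW + b), with a sigmoid g (assumed)."""
    return 1.0 / (1.0 + np.exp(-(X @ W + b)))


def liu_output_weights(H, T, d):
    """Liu (1993) estimator applied to the ELM output layer.

    beta_d = (H'H + I)^{-1} (H'T + d * beta_ls), where beta_ls = pinv(H) T is
    the basic ELM solution. d = 1 recovers beta_ls; d = 0 is ridge with k = 1.
    """
    beta_ls = np.linalg.pinv(H) @ T            # basic ELM (Moore-Penrose) weights
    A = H.T @ H + np.eye(H.shape[1])           # H'H + I is always well-posed
    return np.linalg.solve(A, H.T @ T + d * beta_ls)


def select_d_holdout(H_tr, T_tr, H_val, T_val, grid):
    """Pick d from a grid by lowest validation RMSE (a simple illustrative rule)."""
    best_d, best_rmse = None, np.inf
    for d in grid:
        beta = liu_output_weights(H_tr, T_tr, d)
        rmse = np.sqrt(np.mean((H_val @ beta - T_val) ** 2))
        if rmse < best_rmse:
            best_d, best_rmse = d, rmse
    return best_d, best_rmse
```

For example, compute `H = hidden_output(X, *random_hidden_layer(X.shape[1], 40))` once, then call `select_d_holdout` on a train/validation split of the rows of `H` and `T`. Reusing the same random hidden layer for every candidate \(d\) keeps the comparison between biasing parameters fair.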

Notes

For simplicity and brevity, only the main results of the statistical analysis are reported. Bold values in Table 3 indicate results that are statistically significant at the 0.05 level.

References

Allen DM (1974) The relationship between variable selection and data agumentation and a method for prediction. Technometrics 16(1):125–127. https://doi.org/10.1080/00401706.1974.10489157

Deng W, Zheng Q, Chen L (2009) Regularized extreme learning machine. In: 2009 IEEE symposium on computational intelligence and data mining. IEEE, Nashville, TN, USA, pp 389–395

Ding S, Zhao H, Zhang Y et al (2015) Extreme learning machine: algorithm, theory and applications. Artif Intell Rev 44:103–115. https://doi.org/10.1007/s10462-013-9405-z

Dua D, Taniskidou K (2018) UCI machine learning repository. University of California, School of Information and Computer Science, Irvine, CA. http://archive.ics.uci.edu/ml. Accessed 23 Dec 2018

Fakhr MW, Youssef E-NS, El-Mahallawy MS (2015) L1-regularized least squares sparse extreme learning machine for classification. In: 2015 International conference on information and communication technology research (ICTRC). IEEE, Abu Dhabi, United Arab Emirates, pp 222–225

Graybill FA (1983) Matrices with applications in statistics. Wadsworth, Belmont

He B, Sun T, Yan T et al (2017) A pruning ensemble model of extreme learning machine with \(L_{1/2}\) regularizer. Multidimens Syst Signal Process 28:1051–1069. https://doi.org/10.1007/s11045-016-0437-9

Hoerl AE, Kennard RW (1970) Ridge regression: applications to nonorthogonal problems. Technometrics 12:69–82. https://doi.org/10.1080/00401706.1970.10488635

Huang G, Huang GB, Song S, You K (2015) Trends in extreme learning machines: a review. Neural Netw 61:32–48. https://doi.org/10.1016/j.neunet.2014.10.001

Huang GB, Zhu QY, Siew CK (2004) Extreme learning machine: a new learning scheme of feedforward neural networks. In: 2004 IEEE international joint conference on neural networks (IEEE Cat. No.04CH37541). IEEE, Budapest, Hungary, pp 985–990

Huang GB, Zhu QY, Siew CK (2006) Extreme learning machine: theory and applications. Neurocomputing 70:489–501. https://doi.org/10.1016/j.neucom.2005.12.126

Huang GB, Wang DH, Lan Y (2011) Extreme learning machines: a survey. Int J Mach Learn Cybernet 2:107–122. https://doi.org/10.1007/s13042-011-0019-y

Huang GB, Zhou H, Ding X, Zhang R (2012) Extreme learning machine for regression and multiclass classification. IEEE Trans Syst Man Cybernet Part B (Cybernet) 42:513–529. https://doi.org/10.1109/TSMCB.2011.2168604

Huang GB (2019) Extreme learning machines. http://www.ntu.edu.sg/home/egbhuang. Accessed 18 Apr 2019

Huynh HT, Won Y, Kim JJ (2008) An improvement of extreme learning machine for compact single hidden layer feedforward neural networks. Int J Neural Syst 18:433–441. https://doi.org/10.1142/S0129065708001695

Li G, Niu P (2013) An enhanced extreme learning machine based on ridge regression for regression. Neural Comput Appl 22:803–810. https://doi.org/10.1007/s00521-011-0771-7

Liu K (1993) A new class of biased estimate in linear regression. Commun Stat Theory Methods 22:393–402. https://doi.org/10.1080/03610929308831027

Lu S, Wang X, Zhang G, Zhou X (2015) Effective algorithms of the Moore-Penrose inverse matrices for extreme learning machine. Intell Data Anal 19:743–760. https://doi.org/10.3233/IDA-150743

Luo X, Chang X, Ban X (2016) Regression and classification using extreme learning machine based on \(L_{1}\) norm and \(L_{2}\) norm. Neurocomputing 174:179–186. https://doi.org/10.1016/j.neucom.2015.03.112

Martínez-Martínez JM, Escandell-Montero P, Soria-Olivas E et al (2011) Regularized extreme learning machine for regression problems. Neurocomputing 74:3716–3721. https://doi.org/10.1016/j.neucom.2011.06.013

McCulloch WS, Pitts W (1943) A logical calculus of the ideas immanent in nervous activity. Bull Math Biophys 5(4):115–133. https://doi.org/10.1007/BF02478259

Miche Y, Sorjamaa A, Bas P et al (2010) OP-ELM: optimally pruned extreme learning machine. IEEE Trans Neural Netw 21:158–162. https://doi.org/10.1109/TNN.2009.2036259

Miche Y, Heeswijk MV, Bas P et al (2011) TROP-ELM: a double-regularized ELM using LARS and Tikhonov regularization. Neurocomputing 74:2413–2421. https://doi.org/10.1016/j.neucom.2010.12.042

Nomura M (1988) On the almost unbiased ridge regression estimator. Commun Stat Simul Comput 17:729–743. https://doi.org/10.1080/03610918808812690

Rao CR, Mitra SK (1971) Generalized inverse of matrices and its applications, vol 7. Wiley, New York

Schmidhuber J (2015) Deep learning in neural networks: an overview. Neural Netw 61:85–117. https://doi.org/10.1016/j.neunet.2014.09.003

Schott JR (2005) Matrix analysis for statistics. Wiley, Hoboken

Torgo L (2019) Regression datasets. http://www.dcc.fc.up.pt/~ltorgo/Regression/DataSets.html. Accessed 18 Apr 2019

Wang Y, Cao F, Yuan Y (2011) A study on effectiveness of extreme learning machine. Neurocomputing 74:2483–2490. https://doi.org/10.1016/j.neucom.2010.11.030

Yıldırım H, Özkale MR (2019) The performance of ELM based ridge regression via the regularization parameters. Expert Syst Appl 134:225–233. https://doi.org/10.1016/j.eswa.2019.05.039

Zhang H, Zhang S, Yin Y (2013) An improved ELM algorithm based on EM-ELM and ridge regression. In: 2013 International conference on intelligent science and big data engineering. Springer, Berlin, pp 756–763

Ethics declarations

Conflict of interest

All authors declare that they have no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Yıldırım, H., Özkale, M.R. An Enhanced Extreme Learning Machine Based on Liu Regression. Neural Process Lett 52, 421–442 (2020). https://doi.org/10.1007/s11063-020-10263-2

DOI: https://doi.org/10.1007/s11063-020-10263-2