Abstract

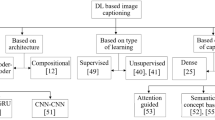

Images are now used extensively for communication. A single image can convey many different stories, depending on the perspective and thoughts of each viewer. Including image captions aids comprehension and is especially beneficial for individuals with visual impairments who read Braille or rely on audio descriptions. The purpose of this research is to create an automatic captioning system whose captions are easy to understand and quick to generate, and which can be applied to other related systems. The research applies a transformer-based approach to image captioning in place of the convolutional neural network (CNN) and recurrent neural network (RNN) approach, which has limitations in processing long-sequence data and managing data complexity. The transformer handles these limitations well and more efficiently. The image captioning system was trained on a dataset of 5,000 images from Instagram tagged with the hashtag "Phuket" (#Phuket). The researchers wrote captions themselves to serve as a dataset for testing the system. The experiments showed that the transformer can generate natural captions that are close to human language. The generated captions are also evaluated using the Bilingual Evaluation Understudy (BLEU) score and the Metric for Evaluation of Translation with Explicit Ordering (METEOR) score, two metrics that measure the similarity between machine-translated text and human-written text. This allows the resemblance between the researcher-written captions and the transformer-generated captions to be compared.
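To make the BLEU comparison concrete, the following is a minimal sketch of sentence-level BLEU in plain Python, following the general definition of clipped n-gram precision with a brevity penalty. It is not the authors' evaluation code: the function names (`ngrams`, `modified_precision`, `bleu`), the uniform weights over 1- to 4-grams, the absence of smoothing, and the example captions are all assumptions made for illustration.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of all n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def modified_precision(candidate, references, n):
    """Clipped n-gram precision: a candidate n-gram is credited at most as
    many times as it occurs in the single reference where it is most frequent."""
    cand_counts = ngrams(candidate, n)
    if not cand_counts:
        return 0.0
    max_ref_counts = Counter()
    for ref in references:
        for gram, count in ngrams(ref, n).items():
            max_ref_counts[gram] = max(max_ref_counts[gram], count)
    clipped = sum(min(count, max_ref_counts[gram])
                  for gram, count in cand_counts.items())
    return clipped / sum(cand_counts.values())


def bleu(candidate, references, max_n=4):
    """Sentence-level BLEU with uniform weights and no smoothing (assumed setup)."""
    precisions = [modified_precision(candidate, references, n)
                  for n in range(1, max_n + 1)]
    if min(precisions) == 0.0:
        return 0.0  # without smoothing, one empty n-gram order zeroes the score
    c = len(candidate)
    # Use the reference length closest to the candidate length (shorter on ties).
    r = min((len(ref) for ref in references),
            key=lambda length: (abs(length - c), length))
    brevity_penalty = 1.0 if c > r else math.exp(1.0 - r / c)
    log_mean = sum(math.log(p) for p in precisions) / max_n
    return brevity_penalty * math.exp(log_mean)


# Hypothetical captions, invented for illustration only.
human_caption = "a long tail boat on the beach in phuket".split()
generated_caption = "a long tail boat on the beach".split()
score = bleu(generated_caption, [human_caption])
```

In this example every n-gram of the generated caption also appears in the human caption, so the score is determined entirely by the brevity penalty for the shorter output. In practice, an established implementation with smoothing is usually preferable for short captions, since unsmoothed sentence-level BLEU drops to zero whenever a single n-gram order has no match.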

Data availability

The data that support the findings of this study are available from the authors, upon reasonable request.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

About this article

Cite this article

Dittakan, K., Prompitak, K., Thungklang, P. et al. Image caption generation using transformer learning methods: a case study on instagram image. Multimed Tools Appl 83, 46397–46417 (2024). https://doi.org/10.1007/s11042-023-17275-9

DOI: https://doi.org/10.1007/s11042-023-17275-9