Abstract

We prove a factorization formula for the point-to-point partition function associated with a model of directed polymers on the space-time lattice \(\mathbb {Z}^{d+1}\). The polymers are subject to a random potential induced by independent identically distributed random variables, and we consider the regime of weak disorder, where polymers behave diffusively. We write the quotient of the point-to-point partition function and the transition probability of the underlying random walk as the product of two point-to-line partition functions plus an error term. We show that, for large time intervals [0, t], the error term is small uniformly over starting points x and endpoints y in the sub-ballistic regime \(\Vert x - y \Vert \le t^{\sigma }\), where \(\sigma < 1\) can be arbitrarily close to 1. This extends a result of Sinai, who proved smallness of the error term in the diffusive regime \(\Vert x - y \Vert \le t^{1/2}\). We also derive asymptotics for spatial and temporal correlations of the field of limiting partition functions.

1 Introduction

The theory of directed polymers has been actively studied in the mathematical and physical literature in the last 30 years. From the point of view of probability theory and statistical mechanics, directed polymers are random walks in a random potential. The probability distribution for a random path \(\gamma \) of length t is given by the Gibbs distribution \(P^t_\omega (\gamma )= \frac{1}{Z^t_\omega }\exp {\left[ -\beta H^t_\omega (\gamma )\right] }\), where \(\beta \) is the inverse temperature, \(H^t_\omega (\gamma )\) is the total energy of the interaction between the path \(\gamma \) and a fixed realization of the external random potential, and the normalizing factor \(Z^t_\omega \) is the partition function. The random potential is a functional defined on some probability space, and a point \(\omega \) in this probability space completely characterizes a fixed realization of the potential. In this paper we are interested only in the case of non-stationary time-dependent random potentials. The simplest setting corresponds to the discrete space-time lattice \(\mathbb {Z}^{d+1}\), where d is the spatial dimension. In this case the random potential normally is assumed to be given by the i.i.d. field \(\omega =\{\xi (x,i): \, x\in \mathbb {Z}^d, i\in \mathbb {Z}\}\), and \(H_\omega ^t= -\sum _{i=0}^t {\xi (\gamma _i,i)}\). As usual one is interested in the asymptotic behavior of directed polymers as \(t\rightarrow \infty \).

The first rigorous results for directed polymers were obtained by Imbrie and Spencer [1], Bolthausen [2], and Sinai [3]. It was proved that in the case of weak disorder, namely when \(d \ge 3\) and \(|\beta |\) is small, the polymer almost surely has diffusive behavior with a non-random covariance matrix. It was later proved by Carmona and Hu [4], and Comets et al. [5] that in the cases \(d=1,2\), and \(d\ge 3\) with \(|\beta |\) large, the asymptotic behavior is very different. In this regime, called strong disorder, the directed polymers are not spreading as \(t\rightarrow \infty \) but remain concentrated in certain random places.

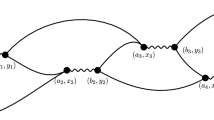

Sinai’s approach in [3] is based on the study of asymptotic properties of partition functions \(Z^t_\omega \) as \(t \rightarrow \infty \). It turns out that if the polymer starts at a point x at time s, then in the limit \(t\rightarrow \infty \) the properly normalized partition function converges almost surely to a random variable \(Z^\infty _{x,s}\). Here, in order to simplify notation, we are not indicating the dependence on \(\omega \). In a similar way one can consider backward in time partition functions, and prove that after the same normalization they also converge to limiting partition functions \(Z_{-\infty }^{y,t}\), where (y, t) is the endpoint of the polymer. The proof of the diffusive behavior follows from a factorization formula proved by Sinai. Namely, a bridging partition function \(Z_{x,s}^{y,t}\) corresponding to the random-walk bridge between points \((x,s), \, (y,t), \, t>s,\) satisfies the following asymptotic relation:

$$\begin{aligned} Z_{x,s}^{y,t} = q^{y-x}_{t-s} \left( Z^\infty _{x,s} \, Z_{-\infty }^{y,t} + \delta _{x,s}^{y,t} \right) , \end{aligned} \tag{1}$$

where \(q^{y-x}_{t-s}\) is the transition probability of the simple symmetric random walk, and a small error term \(\delta _{x,s}^{y,t}\) tends to zero as \(t-s \rightarrow \infty \), provided \(y-x\) belongs to the diffusive region: \(\Vert y-x\Vert =O(\sqrt{t-s})\). Later, Sinai’s formula was extended by Kifer [6] to the continuous setting.

The interest in the asymptotic behavior of directed polymers is largely motivated by the connection between directed polymers and the theory of the stochastic heat equation

and the random Hamilton-Jacobi equation

which is related to the stochastic heat equation through the Hopf-Cole transformation \(\Phi (x,t) = - \ln Z(x,t)\). The connection between directed polymers and the stochastic heat equation is a direct consequence of the Feynman-Kac formula, see, e.g., [7].

The main conjecture about the asymptotic behavior of the solutions to the random Hamilton-Jacobi equation can be formulated in the following way. For a fixed value of the average velocity \(b=\langle \nabla \Phi (x, \cdot )\rangle \), which is preserved by the equation, with probability one there exists a unique (up to an additive constant) global solution. This means that solutions starting from two different initial conditions \(\Phi _1(x,0)=b\cdot x +\Psi _1(x,0), \, \Phi _2(x,0)=b\cdot x +\Psi _2(x,0)\) approach each other up to an additive constant as \(t\rightarrow \infty \), provided \(\Psi _1(x,0)\) and \(\Psi _2(x,0)\) are functions of sublinear growth in \(\Vert x\Vert \) [7].

In terms of the stochastic heat equation, a similar uniqueness statement up to a multiplicative constant conjecturally holds for two initial conditions of the form \(Z_1(x,0)=\exp {[-b\cdot x -\Psi _1(x,0)]}\) and \(Z_2(x,0)=\exp {[-b\cdot x -\Psi _2(x,0)]}\).

In order to be able to prove the above conjecture in the weak-disorder case, one has to extend the factorization formula (1) to a much larger scale. This is the purpose of the present article: We prove that the factorization formula holds for \(\Vert x-y\Vert <(t-s)^\sigma \), where \(\sigma \) can be taken arbitrarily close to 1. Compared to [3], such an extension of the factorization formula requires very different analytical methods.

In this paper we restrict ourselves to the simplest discrete case, i.e., polymers live on the discrete space-time lattice \(\mathbb {Z}^{d+1}\) and the potential is induced by an i.i.d. field of random variables. This allows us to make the exposition more transparent. However, in order to prove the uniqueness conjecture for the stochastic heat equation in the weak-disorder regime, one needs to consider the parabolic Anderson model, which is discrete in space and continuous in time. The proof of the factorization formula in this semi-discrete setting, which is based on similar ideas but technically more involved, will be published elsewhere. The proof of the uniqueness conjecture itself will be published separately as well.

We conclude the introduction with several remarks:

1. The factorization formula can be extended to the full sub-ballistic regime \(\Vert x-y\Vert =o(t-s)\). We are considering a smaller region \(\Vert x-y\Vert <(t-s)^\sigma \), which allows for effective estimates of the smallness of the error term \(\delta _{x,s}^{y,t}\).

2. We believe that a similar factorization formula can be proved in the fully continuous case. For this, one would need to assume that the correlations of the disorder field \(\{\xi (x,t): x\in \mathbb {R}^d, t \in \mathbb {R}\}\) are decaying sufficiently fast.

3. It is interesting to study the probability distribution for the limiting partition function \(Z := Z_{x,s}^{\infty }\) and for \(\Phi =-\ln {Z}\). Although these probability distributions are not universal, we believe that the tail distributions have many universal features. We conjecture that in the case when the probability distribution of \(\xi \) has compact support, the left tail of the density for \(\Phi \) behaves like \(\exp {[-\Phi ^{1+d/2}]}\) and the right tail decays like \(\exp {[-\Phi ^{1+d}]}\). A related conjecture concerns the moments \(m(l) :=\langle Z^l\rangle \) of Z, which we conjecture to grow as \(\exp {[l^{1+2/d}]}\) in the limit \(l\rightarrow \infty \). If the disorder \(\xi \) is Gaussian, then for any \(\beta >0\) only finitely many moments of Z are finite. Thus, one can expect exponential decay of the left tail for \(\Phi \).

4. The uniqueness of global solutions to the stochastic heat equation and the random Hamilton-Jacobi equation was also proved in dimension \(d=1\) [8,9,10]. The mechanism leading to uniqueness in this case is completely different from our setting. We should also mention that the case \(d=1\) corresponds to the famous KPZ universality class.

The rest of this paper is organized as follows: In Sect. 2 we derive an expansion for the partition functions and convergence to limiting partition functions. This allows us to state our main result, the factorization formula for \(Z_{x,s}^{y,t}\). We also derive asymptotics for both spatial and temporal correlations of the field of limiting partition functions. In Sect. 3, we collect several estimates on transition probabilities for the simple symmetric random walk on \(\mathbb {Z}^d\). Sections 4 and 5 are devoted to the proof of the factorization formula. Finally, in the appendix we prove the estimates on transition probabilities from Sect. 3.

Notation: Throughout this article the Euclidean norm and inner product in \(\mathbb {R}^d\) are denoted by \(\Vert \cdot \Vert \) and \(\ \cdot \ \), respectively. The 1-norm in \(\mathbb {R}^d\) is denoted by \(\Vert \cdot \Vert _1\). We simply write \(a \equiv b\) to indicate that \(a \equiv b\) (mod 2). For functions A and B, potentially of several variables, we write \(A \lesssim B\) or A is dominated by B to denote that \(A \le c B\) for some constant \(c > 0\). The constant c may depend on the dimension d, the inverse temperature \(\beta \) and the law of the disorder (e.g., through \(\lambda \) defined in (6)), or on scaling parameters such as \(\sigma \) from Theorem 3 or \(\xi \) from Sect. 4.1. However, c is not allowed to depend on any time or space variables such as t and z. The same remark applies to every constant introduced in this paper. Finally, in order to simplify notation, we will write \(\sum _{\textbf{z}}\) to indicate that we are summing over all \(\textbf{z}= (z_1, \ldots , z_r) \in (\mathbb {Z}^d)^r\), where the value of r will be clear from the context.

2 Setting and Main Result

Let \(\gamma = (\gamma _n)_{n \in \mathbb {Z}}\) be a discrete-time simple symmetric random walk on \(\mathbb {Z}^d\), \(d \ge 3\), starting at point \(x \in \mathbb {Z}^d\) at time \(s \in \mathbb {Z}\), with corresponding probability measure \(\textbf{P}_{x,s}\) and corresponding expectation \(\textbf{E}_{x,s}\). As \(d \ge 3\), \(\gamma \) is transient. For integers \(t > s\) and \(y \in \mathbb {Z}^d\), we denote the probability measure obtained from \(\textbf{P}_{x,s}\) by conditioning on the event \(\{\gamma _t = y\}\) by \(\textbf{P}_{x,s}^{y,t}\). The corresponding expectation is denoted by \(\textbf{E}_{x,s}^{y,t}\). We also set

Let \((\xi (x, t))_{x \in \mathbb {Z}^d, t \in \mathbb {Z}}\) be a collection of i.i.d. random variables with corresponding probability measure Q and corresponding expectation \(\langle \,\cdot \, \rangle \). These constitute the random potential in our setting. We assume that

for \(\beta > 0\) sufficiently small. To a sample path of \(\gamma \) over a time interval [s, t], we assign the random action

For integers \(s < t\), \(x, y \in \mathbb {Z}^d\), and inverse temperature \(\beta > 0\), we define the random partition functions

Since \(c(\beta )^{-(t-s+1)} \langle e^{\beta \mathcal {A}_s^t} \rangle = 1\) for every realization of \(\gamma \), we have \(\langle Z_{x,s}^t \rangle = \langle Z_s^{y,t} \rangle = 1\). Notice that the law of the stochastic process \((Z_{x,s}^{s+\tau })_{\tau \in \mathbb {N}_0}\) with respect to Q does not depend on x or s. Besides, \((Z_{x,s}^{s+\tau })_{\tau \in \mathbb {N}_0}\) and \((Z_{t-\tau }^{y,t})_{\tau \in \mathbb {N}_0}\) have the same law.
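The normalization \(\langle Z_{x,s}^t \rangle = 1\) can be illustrated numerically. The sketch below is not taken from the source: it assumes that the point-to-line partition function has the form \(Z_{x,s}^t = c(\beta )^{-(t-s+1)} \textbf{E}_{x,s} \exp (\beta \sum _{i=s}^t \xi (\gamma _i,i))\) with \(c(\beta ) = \langle e^{\beta \xi } \rangle \), which is consistent with the identity above but whose defining display is not reproduced here. The function name, the choice of \(\pm 1\)-valued disorder, and the finite box are illustrative assumptions.

```python
import numpy as np

def point_to_line_partition_function(xi, beta, c_beta):
    """Assumed form: c(beta)^-(T+1) * E_{0,0}[exp(beta * sum_{i=0}^T xi(gamma_i, i))].

    xi has shape (T + 1, L, ..., L) with L = 2T + 1, and the walk starts at the
    centre of the box, so the periodic wrap-around of np.roll is never reached.
    """
    T = xi.shape[0] - 1
    d = xi.ndim - 1
    weights = np.exp(beta * xi) / c_beta           # one normalized factor per site visit
    W = np.zeros(xi.shape[1:])
    centre = tuple(side // 2 for side in W.shape)
    W[centre] = weights[0][centre]                 # time 0: the walk sits at its start
    for i in range(1, T + 1):
        # One step of the simple symmetric random walk: average over 2d neighbours.
        stepped = sum(np.roll(W, shift, axis=axis)
                      for axis in range(d) for shift in (-1, 1)) / (2 * d)
        W = weights[i] * stepped
    return W.sum()                                 # free endpoint: sum over all sites

# Illustrative disorder: i.i.d. signs, for which c(beta) = cosh(beta).
rng = np.random.default_rng(0)
T, d = 6, 3
L = 2 * T + 1
xi = rng.choice([-1.0, 1.0], size=(T + 1,) + (L,) * d)
beta = 0.3
Z = point_to_line_partition_function(xi, beta, np.cosh(beta))
print(Z)
```

At \(\beta = 0\) every weight equals 1 and the function returns 1 for any disorder sample, and averaging the output over many independent samples of `xi` should give values close to 1 for \(\beta > 0\) as well.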

Remark 1

This is essentially the model considered by Sinai, where F(x, t) in [3] corresponds to \(\beta \xi (x,t)\) in our setting. Furthermore, the partition function \(Z_{x,k}^{y,n}\) from [3] becomes \(c(\beta )^{n-k+1} Z_{x,k}^{y,n}\) in our notation.

Given \(z \in \mathbb {Z}^d\) and \(s \in \mathbb {Z}\), define

As shown in the proof of Theorem 2 in [3], \(Z_{x,s}^{y,t}\) can be written as

Similarly, one obtains the expansions

and

2.1 Convergence to Limiting Partition Functions

As in [3], define

It is well known that \(q_t^z \lesssim t^{-\frac{d}{2}}\) (see, e.g., [11] or Lemma 7). Therefore, as \(d \ge 3\), there is a constant \(C > 0\) such that

We also define

The following convergence statement for partition functions corresponds to Theorem 1 in [3].

Theorem 1

For \(\beta \) so small that \(\alpha _d \lambda < 1\), the following holds: As \(t \rightarrow \infty \), \(Z_{x,s}^t\) converges in \(L^2(Q)\) to a limiting partition function \(Z_{x,s}^{\infty }\).

Remark 2

Due to symmetry, we also have that

exists in the sense of \(L^2(Q)\) for all \(y \in \mathbb {Z}^d\) and \(t \in \mathbb {Z}\).

Remark 3

As pointed out by Bolthausen [2], \((Z_{x,s}^t)_{t \ge s}\) is a martingale with respect to the filtration \(\mathcal {F}_t := \sigma (\xi (y,u): s \le u \le t, y \in \mathbb {Z}^d)\), so convergence to the limiting partition functions also holds Q-almost surely by the Martingale Convergence Theorem.

Proof of Theorem 1

We follow the approach in [3]. The right-hand side of (4) has an orthogonality structure which we will exploit. Since h(z, s) and \(h(z',s')\) are independent if \(z \ne z'\) or if \(s \ne s'\), and since \(\langle h(z,s) \rangle = 0\), we have with Jensen’s Inequality and Fubini’s Theorem that \(\langle (Z^t_{x,s})^2 \rangle \) is bounded from above by

Since \(\langle h(z,s)^2 \rangle = c(\beta )^{-2} c(2 \beta ) - 1\), we find

Since \(\alpha _d \lambda < 1\), one has \(\sum _{r=1}^{\infty } (\alpha _d \lambda )^r < \infty \), so the expression in (7) is finite. As a result,

which yields \(L^2\)-convergence by the Martingale Convergence Theorem. \(\square \)
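To get a feeling for the smallness condition \(\alpha _d \lambda < 1\), one can evaluate the second moment \(\langle h(z,s)^2 \rangle = c(\beta )^{-2} c(2 \beta ) - 1\) for concrete disorder laws. The sketch below assumes that \(\lambda \) denotes this second moment (its defining display (6) is not reproduced here) and treats \(\alpha _d\) as a user-supplied number, since its definition (5) is also missing from this excerpt. All function names and the two disorder laws are illustrative choices, not part of the source.

```python
import math

def second_moment_lambda(c, beta):
    """c(2*beta) / c(beta)**2 - 1, the second moment of h quoted in the proof."""
    return c(2 * beta) / c(beta) ** 2 - 1

def c_gaussian(beta):
    # Standard Gaussian disorder: <exp(beta * xi)> = exp(beta^2 / 2).
    return math.exp(beta ** 2 / 2)

def c_rademacher(beta):
    # Disorder equal to +1 or -1 with probability 1/2 each: <exp(beta * xi)> = cosh(beta).
    return math.cosh(beta)

def geometric_tail(x):
    """sum_{r >= 1} x**r, which is finite only if 0 <= x < 1."""
    if not 0 <= x < 1:
        raise ValueError("need 0 <= x < 1")
    return x / (1 - x)

beta = 0.5
lam_gauss = second_moment_lambda(c_gaussian, beta)    # equals exp(beta^2) - 1
lam_sign = second_moment_lambda(c_rademacher, beta)   # equals tanh(beta)^2
alpha = 0.6  # hypothetical placeholder for alpha_d, NOT a value from the source
print(lam_gauss, lam_sign, geometric_tail(alpha * lam_gauss))
```

In both examples \(\lambda \) tends to 0 as \(\beta \rightarrow 0\), so the condition \(\alpha _d \lambda < 1\) is indeed a small-\(\beta \) condition once \(\alpha _d\) is finite.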

The following theorem gives a rate of convergence to the limiting partition function \(Z_{x,s}^{\infty }\), which is needed to prove the factorization formula in Theorem 3.

Theorem 2

For \(\beta \) so small that \(\alpha _d \lambda < 1\) and for \(\theta \in (0, \min \{\tfrac{d}{2}-1,-\ln (\alpha _d \lambda )\})\), one has

Proof

For an integer \(t \ge s\), let

which is monotone increasing in t. Set

Then, for \(t > s\),

The expression in (11) is dominated by

and

The expression in (10) is dominated by

and

\(\square \)

2.2 Factorization Formula

The following factorization formula for the partition function \(Z_{x,s}^{y,t}\) with fixed starting and endpoint is the main result of this article.

Theorem 3

Let \(\beta \) be so small that \(\alpha _d \lambda < 1\). For every \(\sigma \in (0,1)\), no matter how close to 1, there exists \(\theta = \theta (\sigma ) > 0\) such that for all \(x,y\in \mathbb {Z}^d\) and \(s < t\) with \(\Vert x - y \Vert < (t - s)^\sigma \), the partition function \(Z_{x,s}^{y,t}\) has the representation

$$\begin{aligned} Z_{x,s}^{y,t} = q^{y-x}_{t-s} \left( Z_{x,s}^{\infty } \, Z_{-\infty }^{y,t} + \delta _{x,s}^{y,t} \right) , \end{aligned} \tag{12}$$

where the error term \(\delta _{x,s}^{y,t}\) defined by the formula above satisfies

Theorem 3 is proved in Sect. 4. Notice that the formula is similar to the ones obtained by Sinai in [3, Theorem 2] and Kifer in [6, Theorem 6.1]. However, we show that the error term is small not only within the diffusive regime \(\Vert x-y\Vert = O((t-s)^{\frac{1}{2}})\), but also for \(\Vert x-y\Vert < (t-s)^{\sigma }\) with \(\sigma \) arbitrarily close to 1. This extension beyond the diffusive regime is nontrivial because the error term in (12) is multiplied by the random-walk transition probability \(q_{t-s}^{y-x}\), which is itself extremely small when \(\Vert x-y \Vert \) is much larger than \((t-s)^{\frac{1}{2}}\). In a forthcoming publication, we rely heavily on a continuous-time version of Theorem 3 to prove a uniqueness statement for global solutions to the semi-discrete stochastic heat equation.
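The size of \(q_{t-s}^{y-x}\) beyond the diffusive scale can be gauged from the Gaussian approximation suggested by the local central limit theorem. The display below is a heuristic sketch and not a statement from the source: the Gaussian form is only accurate for moderate deviations, and the rigorous estimate used in the paper is Lemma 6, whose display is not reproduced in this excerpt. For simplicity we take \(s = 0\) and \(x = 0\).

```latex
% Heuristic only: Gaussian (local CLT) approximation for the simple symmetric
% random walk on Z^d, whose one-step covariance matrix is (1/d) I.
q_t^y \approx 2 \left( \frac{d}{2 \pi t} \right)^{d/2}
      \exp\!\left( - \frac{d \, \Vert y \Vert^2}{2t} \right)
      \qquad (q_t^y > 0),
% so at the edge of the sub-ballistic window, \Vert y \Vert = t^{\sigma}:
q_t^y \approx 2 \left( \frac{d}{2 \pi t} \right)^{d/2}
      \exp\!\left( - \tfrac{d}{2} \, t^{2 \sigma - 1} \right).
```

For \(\sigma > \tfrac{1}{2}\) the exponential factor decays faster than any power of t, so an error that is merely polynomially small in absolute terms would carry no information. The error has to be small relative to \(q_t^y\), which is what the factorization formula asserts.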

2.3 Correlations for the Field of Limiting Partition Functions

As mentioned in Sect. 1, the distribution for the field of limiting partition functions \((Z_{x,s}^{\infty })_{x \in \mathbb {Z}^d, s \in \mathbb {Z}}\) is an interesting object to study, with several important questions still open. Below, we state asymptotics for the spatial and temporal correlations of this field.

Theorem 4

Let \(\beta \) be so small that \(\alpha _d \lambda < 1\). Then the spatial and temporal correlations for the field of limiting partition functions \((Z_{x,s}^{\infty })_{x \in \mathbb {Z}^d, s \in \mathbb {Z}}\) have the following asymptotics.

1. \(\displaystyle \lim _{\begin{array}{c} \Vert y\Vert \rightarrow \infty , \\ \Vert y\Vert _1 \equiv 0 \end{array}} \Vert y\Vert ^{d-2} \left( \langle Z_{0,0}^{\infty } Z_{y,0}^{\infty } \rangle - \langle Z_{0,0}^{\infty } \rangle \langle Z_{y,0}^{\infty } \rangle \right) \in (0,\infty )\);

2. \(\displaystyle \lim _{\begin{array}{c} |s |\rightarrow \infty , \\ s \equiv 0 \end{array}} |s |^{\frac{d}{2} - 1} \left( \langle Z_{0,0}^{\infty } Z_{0,s}^{\infty } \rangle - \langle Z_{0,0}^{\infty } \rangle \langle Z_{0,s}^{\infty } \rangle \right) \in (0, \infty )\).

It is necessary to take the limit in part (1) along sequences \((y_n)\) such that \(\Vert y_n\Vert _1 \equiv 0\) for all n, as \(Z_{0,0}^{\infty }\) and \(Z_{y,0}^{\infty }\) are independent if \(\Vert y\Vert _1 \equiv 1\). A similar observation applies to the limit in part (2). The proof of Theorem 4 relies on the following estimates for simple symmetric random walk on \({\mathbb {Z}}^d\), \(d \ge 3\).

Lemma 5

The following statements hold:

1. \(\displaystyle \lim _{\begin{array}{c} \Vert y\Vert \rightarrow \infty , \\ \Vert y\Vert _1 \equiv 0 \end{array}} \Vert y\Vert ^{d-2} \sum _{t=0}^{\infty } \sum _{x \in \mathbb {Z}^d} q_t^x q_t^{y-x} \in (0,\infty )\);

2. \(\displaystyle \lim _{\begin{array}{c} s \rightarrow \infty , \\ s \equiv 0 \end{array}} s^{\frac{d}{2}-1} \sum _{t=0}^{\infty } \sum _{x \in \mathbb {Z}^d} q_t^x q_{s+t}^x \in (0, \infty )\).

Proof

For \(y \in \mathbb {Z}^d\) whose 1-norm is even,

where G denotes the Green’s function for simple symmetric random walk on \(\mathbb {Z}^d\). Theorem 4.3.1 in [11] implies that

so (1) follows. To prove (2), first notice that for every even \(s \in \mathbb {N}_0\),

It is well known (see, e.g., [12, Chapter 1]) that

Let \(\epsilon > 0\). Then there exists \(T \in \mathbb {N}\) such that

Thus, for s even and \(\ge 2T\),

and

Hence,

Since \(\epsilon \) was arbitrarily chosen, we obtain (2). \(\square \)
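The displayed identities of this proof are not reproduced in this excerpt. The following sketch records the standard computations they presumably rest on, under the assumption that \(q_t^x\) is the t-step transition probability from the origin and \(G(y) = \sum _{n \ge 0} q_n^y\). The explicit constants are the classical ones for the simple symmetric random walk and are given as background, not as the paper's own formulas.

```latex
% Part (1): symmetry of the walk and the Chapman-Kolmogorov equation give
\sum_{x \in \mathbb{Z}^d} q_t^x \, q_t^{y-x} = q_{2t}^{y},
\qquad\text{hence}\qquad
\sum_{t=0}^{\infty} \sum_{x \in \mathbb{Z}^d} q_t^x \, q_t^{y-x}
  = \sum_{t=0}^{\infty} q_{2t}^{y} = G(y)
  \quad\text{if } \Vert y \Vert_1 \equiv 0,
% because q_n^y = 0 for odd n when \Vert y \Vert_1 is even.
% Classical Green's function asymptotics for d >= 3:
G(y) \sim \frac{d \, \Gamma(d/2)}{(d-2) \, \pi^{d/2}} \, \Vert y \Vert^{2-d}
  \quad\text{as } \Vert y \Vert \to \infty .

% Part (2): for even s, the same argument gives
\sum_{x \in \mathbb{Z}^d} q_t^x \, q_{s+t}^{x} = q_{2t+s}^{0},
\qquad
q_{2n}^{0} \sim 2 \left( \frac{d}{4 \pi n} \right)^{d/2}
  \quad\text{as } n \to \infty .
```

Summing the last asymptotic over \(t \ge 0\) with \(2n = 2t + s\) produces a tail of order \(s^{1 - \frac{d}{2}}\), which matches the normalization \(s^{\frac{d}{2} - 1}\) in part (2).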

Proof of Theorem 4

For \(y \in \mathbb {Z}^d\) such that \(\Vert y\Vert _1 \equiv 0\) and \(t \in \mathbb {N}\), the expansion in (4) along with the properties of h(z, s) yield

Along the lines of the proof of Theorem 2, one can easily show that, as \(t \rightarrow \infty \), the expression on the right-hand side converges to

Therefore,

and part (1) follows from Lemma 5. The proof of part (2) is similar and we omit it. \(\square \)

3 Transition Probabilities for the Simple Symmetric Random Walk

In this section we collect several estimates on transition probabilities for the discrete-time simple symmetric random walk on \(\mathbb {Z}^d\), some of which are presumably well known to specialists. Appendix A will be devoted to the proofs of the results presented here.

Let \((\gamma _n)_{n \in \mathbb {N}_0}\) be a discrete-time simple symmetric random walk on \(\mathbb {Z}^d\) starting at the origin.
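Before turning to the estimates, it may help to compute the transition probabilities exactly for small times. The sketch below assumes that \(q_t^z\) is the probability that the walk started at the origin is at z after t steps. It evaluates these probabilities by repeated averaging over nearest neighbours and illustrates both the parity constraint and the bound \(q_t^z \lesssim t^{-\frac{d}{2}}\). The function names are illustrative and do not come from the source.

```python
import numpy as np

def transition_probabilities(t, d=3):
    """Array q with q[t + z_1, ..., t + z_d] = P(gamma_t = z) for a walk from 0."""
    side = 2 * t + 1                      # the walk cannot leave [-t, t]^d in t steps
    q = np.zeros((side,) * d)
    q[(t,) * d] = 1.0                     # time 0: all mass at the origin
    for _ in range(t):
        # One step: move to one of the 2d nearest neighbours with equal probability.
        q = sum(np.roll(q, shift, axis=axis)
                for axis in range(d) for shift in (-1, 1)) / (2 * d)
    return q

def q_at(t, z):
    """P(gamma_t = z) for z with all |z_i| <= t."""
    q = transition_probabilities(t, d=len(z))
    return q[tuple(t + zi for zi in z)]

origin = (0, 0, 0)
# Rescaled return probabilities t^(d/2) * q_t^0 for even t in d = 3.
scaled = [t ** 1.5 * q_at(t, origin) for t in range(2, 12, 2)]
print(q_at(2, origin), q_at(3, origin), scaled)
```

The value at \(t = 3\) vanishes because the walk can only return to the origin at even times, and the rescaled return probabilities stay bounded, as the bound \(q_t^z \lesssim t^{-\frac{d}{2}}\) predicts.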

Lemma 6

There exist constants \(c_1, c_2 > 0\) such that the following holds: For every \(\sigma \in (\tfrac{3}{4},1)\) and \({\tilde{\sigma }} \in (\sigma , 1)\), there exists \(T \in \mathbb {N}\) such that for every \(t \ge T\) and \(y \in \mathbb {Z}^d\) with \(q^y_t > 0\) and \(\Vert y\Vert \le t^{\sigma }\),

Lemma 7

There exists \(c_1 > 0\) such that for every \(y \in \mathbb {Z}^d\) and for every linear functional \(\varphi \) on \(\mathbb {R}^d\) with \(|\varphi (x) |\le \Vert x\Vert \), \(x \in \mathbb {R}^d\), we have

In particular,

Fix a linear functional \(\varphi \) on \(\mathbb {R}^d\) such that \(|\varphi (x) |\le \Vert x\Vert \) for every \(x \in \mathbb {R}^d\). To simplify notation, we set \(\varphi _j {:}=\varphi (e_j)\) for \(1 \le j \le d\), where \(\{ e_j \}\) is the standard basis in \(\mathbb {R}^d\). Define for every \(\theta = (\theta ^1, \ldots , \theta ^d) \in \mathbb {R}^d\)

where i is the imaginary unit. Notice that for every \(\theta \in \mathbb {R}^d\),

where 0 is the zero vector in \(\mathbb {R}^d\). Furthermore,

Notice also that \(\Phi \) is \(2\pi \)-periodic in every argument, so it will be convenient to work with the cube \(\mathcal {C}{:}=(-\tfrac{\pi }{2}, \tfrac{3 \pi }{2}]^d\). It is not hard to see that the inequality (16) is strict for all \(\theta \in \mathcal {C}\) except for \(\theta ^0 {:}=(0, \ldots , 0)\) and \(\theta ^1 {:}=(\pi , \ldots , \pi )\).

Lemma 8

There exist \(\rho _1, \rho _2 > 0\) such that the following holds: For every \(t \in \mathbb {N}\) and for every \(z \in \mathbb {Z}^d\) such that \(\Vert z\Vert \le \rho _1 t\) and \(q^z_t > 0\), there exists a linear functional \(\varphi \) on \(\mathbb {R}^d\) of norm \(\Vert \varphi \Vert \le \rho _2 \tfrac{\Vert z\Vert }{t}\) which satisfies

and

Lemma 9

There exist constants \(\rho , c > 0\) such that for every \(t, t' \in \mathbb {N}\) and \(z, z' \in \mathbb {Z}^d\) with \(\Vert z\Vert \le \rho t\) and \(q^z_t > 0\), one has

4 Proof of Theorem 3

The main idea behind the factorization formula, which goes back at least to [3], is that there is strong averaging for times neither too close to s nor too close to t.

Consider the representation of \(Z_{x,s}^{y,t}\) in (2). For fixed r, \(i_1, \ldots , i_r\), and \(z_1, \ldots , z_r\), the random walk is pinned to the points \(z_1, \ldots , z_r\) at the corresponding times \(i_1, \ldots , i_r\). The proof of Theorem 1 suggests that the contribution to \(Z_{x,s}^{y,t}\) from r on the order of \((t-s)\) is negligible. If r is not on the order of \((t-s)\), at least one of the gaps \(i_j - i_{j-1}\) must be in some sense large (see Sect. 4.1). In Sect. 4.2.2, we show that the contribution to \(Z_{x,s}^{y,t}\) coming from two or more large gaps is negligible as well. Thus, the main contribution comes from having exactly one large gap \(i_j - i_{j-1}\), which is then on the order of \((t-s)\). In order for \(q_{i_j - i_{j-1}}^{z_j - z_{j-1}}\) to be positive, \(z_{j-1}\) must be close to x and \(z_j\) must be close to y. The transition probability \(q_{i_j - i_{j-1}}^{z_j - z_{j-1}}\) is then close to \(q_{t-s}^{y-x}\).

Notice that to prove Theorem 3, it is enough to show that if \(\alpha _d \lambda < 1\), then for every \(\sigma \in (0,1)\) there exists \(\theta > 0\) such that

This is because for a fixed realization \(\omega \) of the disorder, \(\delta _{x,s}^{y,t}(\omega )\) can be written as \(\delta _{0,0}^{y-x,t-s}({{\hat{\omega }}})\), where \({{\hat{\omega }}}\) is obtained by shifting \(\omega \) in space and time. The distribution of the disorder is invariant under such shifts.

For \(t \in \mathbb {N}_0\) and \(r \in \{1, \ldots , t+1\}\), let

For \(\textbf{i}\in I(t,r)\) and \(\textbf{z}= (z_1, \ldots , z_r) \in (\mathbb {Z}^d)^r\), define

With this notation, the expansion in (2) becomes

where one should recall from Sect. 1 the notational shorthand \(\sum _{\textbf{z}}\) for summation over all \(\textbf{z}= (z_1, \ldots , z_r) \in (\mathbb {Z}^d)^r\). The first step is to split the double sum into terms according to the size of the largest gap between indices, as discussed in Sect. 4.1.

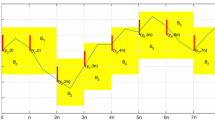

4.1 Large and Huge Gaps

If there exist \(\sigma \in (0,1)\) and \(\theta > 0\) such that (13) (the convergence of the error term in the factorization formula) holds, then (13) also holds for \({{\tilde{\sigma }}}\) and \(\theta \), where \({{\tilde{\sigma }}}\) can be any value in \((0, \sigma )\). There is then no loss of generality in assuming that \(\sigma > 3/4\), and one may even think of \(\sigma \) as being very close to 1. For a collection of indices \(0 ={:}i_0 \le i_1< \cdots < i_r \le i_{r+1} {:}=t\), the gaps are the differences between consecutive indices, i.e., \(i_1 - i_0, i_2 - i_1, \ldots , i_{r+1} - i_r\). To quantify what it means to have many gaps, we fix positive constants \(\kappa _1\), \(\kappa _2 \in (\tfrac{1}{2} (3 \sigma - 1), \sigma )\) such that \(\kappa _1 < \kappa _2\). Let \(T_{\kappa _2} \in \mathbb {N}\) be so large that \( 2 (t - t^{\kappa _2}) > t \) for all \(t \ge T_{\kappa _2}\). Then define

Note that k(t) grows with t like \(t^{\kappa _1}\). We say that a collection of indices \(0 \le i_1< \cdots < i_r \le t\) has many gaps if \(r > k(t)\).

To classify the size of a gap between indices, fix another constant \(\xi \) such that \(0< \xi < \min \big \{ 1-\sigma , \kappa _2 - \kappa _1 \big \}\). One should think of \(\xi \) as being very close to 0. Note that \(\xi + \kappa _1 < 1\) and that \(\xi < \kappa _1\), the latter because of \(\xi< 1 - \sigma< 1/4< \tfrac{1}{2} (\tfrac{9}{4} - 1) < \kappa _1\). Let \(t \in \mathbb {N}\) such that \(k(t) \ge 1\), r such that \(1 \le r \le k(t)\), and consider a sequence of indices \(0 = i_0 \le i_1< \cdots < i_r \le i_{r+1} = t\). We say that the gap between two consecutive indices \(i_{j-1}\) and \(i_j\) is

- large if \(i_j - i_{j-1} \ge t^{\xi }\);

- huge if \(i_j - i_{j-1} \ge t - r t^{\xi }\).

Observe that the size of the largest gap is necessarily at least \(t / (r+1) \ge t^{1-\kappa _1} \ge t^{\xi }\), so there is at least one large gap. A huge gap is necessarily large. If there is only one large gap, then all other gaps are of size less than \(t^{\xi }\), so this large gap is even huge. Thus, if there is no huge gap, there are at least two large ones. Since t must be greater than \(T_{\kappa _2}\) in order for \(k (t) \ge 1\) to hold, we have \( 2(t-r t^{\xi })> 2 (t-t^{\kappa _1+\xi })> 2 (t-t^{\kappa _2}) > t, \) so there can be at most one huge gap. Note, however, that a huge gap is not necessarily the only large one.
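The classification of gaps can be made concrete with a short sketch. The function below takes indices \(0 \le i_1< \cdots < i_r \le t\), pads them with \(i_0 = 0\) and \(i_{r+1} = t\), and lists which gaps are large and which are huge according to the two definitions above. The function name, the parameter name `xi_exp` for the exponent \(\xi \), and the numerical values are illustrative assumptions. In particular, the sketch does not check the constraint \(r \le k(t)\), because the definition of k(t) is not reproduced in this excerpt.

```python
def classify_gaps(indices, t, xi_exp):
    """Return (gaps, large, huge); large and huge list gap positions j = 1..r+1."""
    r = len(indices)
    padded = [0, *indices, t]                     # i_0 = 0 and i_{r+1} = t
    gaps = [b - a for a, b in zip(padded, padded[1:])]
    large_threshold = t ** xi_exp                 # large: gap >= t^xi
    huge_threshold = t - r * t ** xi_exp          # huge:  gap >= t - r * t^xi
    large = [j for j, g in enumerate(gaps, start=1) if g >= large_threshold]
    huge = [j for j, g in enumerate(gaps, start=1) if g >= huge_threshold]
    return gaps, large, huge

# One dominant gap: it is the only large gap and therefore huge.
one_huge = classify_gaps((3, 5, 9995), 10_000, 0.25)
# Three comparable gaps: all are large, none is huge.
no_huge = classify_gaps((4000, 8000), 10_000, 0.25)
print(one_huge)
print(no_huge)
```

The two calls illustrate the dichotomy used in the decomposition: either exactly one gap is huge, or there are at least two large gaps and no huge one.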

Let us introduce some more notation. Fix \(r \in \mathbb {N}\) and \(t \in \mathbb {N}_0\). For \(m \in \mathbb {N}\) such that \(1 \le m \le r+1\), define the following set of r-tuples:

Also define

For t so large that \(k(t) \ge 1\), we decompose the expansion of \(Z^{y,t}_{0,0}\) in (18) as follows:

where

With this decomposition in hand, Theorem 3 follows immediately from the following lemma.

Lemma 10

(Central Lemma) Let \(\beta >0\) be so small that \(\alpha _d \lambda < 1\), and let \(\sigma \in (0,1)\).

1. For every \(\theta > 0\),

$$\begin{aligned} \lim _{t \rightarrow \infty } t^{\theta } \sup _{y: \Vert y\Vert \le t^{\sigma }, q^y_t > 0} \frac{\langle |B_1^{y,t} |\rangle }{q_t^y} = 0. \end{aligned} \tag{19}$$

2. There exists \(\theta > 0\) such that

$$\begin{aligned} \lim _{t \rightarrow \infty } t^{\theta } \sup _{y: \Vert y\Vert \le t^{\sigma }, q^y_t > 0} \frac{\langle |B^{y,t}_2 |\rangle }{q_t^y} = 0. \end{aligned} \tag{20}$$

3. There exists \(\theta > 0\) such that

$$\begin{aligned} \lim _{t \rightarrow \infty } t^{\theta } \sup _{y: \Vert y\Vert \le t^{\sigma }, q_t^y > 0} \left\langle \left|1 + \frac{B_3^{y,t}}{q_t^y} - Z_{0,0}^{\infty } Z_{-\infty }^{y,t} \right|\right\rangle = 0. \end{aligned} \tag{21}$$

Sections 4.2 and 4.3 are devoted to the proof of this lemma.

4.2 Proof of the Central Lemma, Parts 1 and 2: Small Contributions

In this section, we show that the contributions of the terms \(B_1^{y,t}\) and \(B_2^{y,t}\) to \(Z_{0,0}^{y,t}\) are negligible. We start with the observation that, by Jensen’s inequality,

4.2.1 Proof of Part 1: Many Gaps

Let \(t \in \mathbb {N}\) be so large that \(k(t) \ge 1\). Since \(\alpha _d \lambda < 1\), (8) and the definition (5) of \(\alpha _d\) let us estimate \(\langle (B_1^{y,t})^2 \rangle \) as follows:

Recall our assumption that \(\sigma > \tfrac{3}{4}\). To estimate \(1/(q_t^y)^2\) on the right-hand side of (22), fix \({\tilde{\sigma }} \in (\sigma , 1)\) such that \(4 {\tilde{\sigma }} - 3 < 2 \sigma - 1\). By Lemma 6, there exist constants \(c_1, c_2 > 0\) (independent of \(\sigma , {\tilde{\sigma }}\)) and \(T \in \mathbb {N}\) (depending on \(\sigma ,{\tilde{\sigma }}\)) such that for every integer \(t \ge T\) and \(y \in \mathbb {Z}^d\) with \(q_t^y > 0\) and \(\Vert y \Vert \le t^{\sigma }\),

for some constant \(c > 0\). Therefore,

Since \(\kappa _1> \tfrac{1}{2} (3 \sigma -1) > 2 \sigma -1\), we have \(t^{2 \sigma -1} / k(t) \rightarrow 0\) as \(t \rightarrow \infty \), and therefore, for all \(\theta > 0\),

4.2.2 Proof of Part 2: No Huge Gaps

Let \(t \in \mathbb {N}\) be so large that \(k(t) \ge 1\). Then

where

Now we estimate \(M_{t,r}(y)\). Let \(r \in \mathbb {N}\) such that \(1 \le r \le k(t)\), and \(y \in \mathbb {Z}^d\) such that \(\Vert y \Vert \le t^{\sigma }\) and \(q^y_t > 0\). Given \(\textbf{i}= (i_1, \ldots , i_r) \in I_2(t,r)\) such that \(i_1 \ne 0\) and \(i_r \ne t\), set \(t_1 {:}=i_1, t_2 {:}=i_2 - i_1, \ldots , t_r {:}=i_r - i_{r-1}, t_{r+1} {:}=t - i_r\). And given \(\textbf{z}= (z_1, \ldots , z_r) \in (\mathbb {Z}^d)^r\), set \(x_1 {:}=z_1, x_2 {:}=z_2 - z_1, \ldots , x_r {:}=z_r - z_{r-1}, x_{r+1} {:}=y - z_r\). This change of variables yields

For \(\textbf{i}\in I_2(t,r)\), there is no huge gap and hence there are at least two large ones. Let

i.e., \((l+1)\) gives the number of large gaps in \(\textbf{i}\). There are \((r+1)\) possible slots for the largest gap (which is then also a large gap), and \(\left( {\begin{array}{c}r\\ l\end{array}}\right) \) possible slots for the other l large gaps once the largest gap has been fixed. Together with (24), this yields the estimate

where

The sum on the right-hand side is taken over all \(t_1, \ldots , t_{r+1} \in \mathbb {N}\) and \(x_1, \ldots , x_{r+1} \in \mathbb {Z}^d\) that satisfy the four conditions under the summation sign. In the special case \(l=r\), the fourth condition is void.

For given positive integers \(t_{l+1}, \ldots , t_r\) strictly less than \(t^{\xi }\), set

and for \(x_{l+1}, \ldots , x_r \in \mathbb {Z}^d\), set

If \(l < r\), this lets us write

We now search for a bound for \(M_{t,r,l}^{t'} (x')\) when t is sufficiently large.

Claim 4.1

There exist constants \(C, C', T > 0\) such that for every integer \(t \ge T\) and for all \(r, l, t', x'\) as above,

and, in the special case \(l=r\),

We use Claim 4.1 to estimate \(M_{t,r,l} (y)\) from (26) as follows:

Then we combine the above estimate with (25) to obtain

Finally, combining this estimate with (22) and (23), we obtain

Since \(d \ge 3\) and since \(\xi < 1- \sigma \), one has

as long as \(\theta < \xi / 4\) and hence (20) for every \(\theta < \xi / 8\). To complete the proof of Part 2, it remains to prove Claim 4.1.

Proof of Claim 4.1

By Lemma 8, there exist constants \(\rho _1, \rho _2 > 0\) such that for every \(t \in \mathbb {N}\) and \(y \in \mathbb {Z}^d\) with \(\Vert y \Vert \le \rho _1 t\) and \(q^y_t > 0\), there exists a linear functional \(\varphi \) on \(\mathbb {R}^d\) of norm \(\Vert \varphi \Vert \le \rho _2 \Vert y \Vert / t\) which satisfies

Fix \(t \in \mathbb {N}\) so large that \(k(t) \ge 1\) as well as \(t^{\sigma } \le \rho _1 t\) and \(\rho _2 t^{\sigma - 1} \le 1\). Let \(y \in \mathbb {Z}^d\) such that \(\Vert y \Vert \le t^{\sigma }\) and \(q^y_t > 0\). The conditions \(\rho _2 t^{\sigma - 1} \le 1\) and \(\Vert y \Vert \le t^{\sigma }\) imply in particular that \(\Vert \varphi \Vert \le 1\) for the linear functional \(\varphi \) corresponding to t and y. Let \(t_1, \ldots , t_l, t_{r+1} \in \mathbb {N}\) and \(x_1, \ldots , x_l, x_{r+1} \in \mathbb {Z}^d\) such that the conditions under the summation sign in (27) hold. In the special case \(l=r\), replace \(t'\) and \(x'\) with t and y, respectively, here and in the remainder of the proof. By Lemma 7, there exists a constant \(c_1 > 0\) such that

where in the third line we used the fact that \(\varphi \) is a linear functional, and in the fourth line we used (17), where \(\Phi \) was defined in (15). Since \(t' < t\) and \(\Phi (0) \ge 1\), it follows from (28) that \(\Phi (0)^{t'} \le \Phi (0)^t \lesssim t^{d/2} q_t^y e^{\varphi (y)}\). As a result, for all positive integers \(t_1, \ldots , t_l, t_{r+1}\) such that \(t_1 + \cdots + t_l + t_{r+1} = t'\) and \(t_{r+1} \ge t_1, \ldots , t_l \ge t^{\xi }\), one has

Furthermore, the sum \(\sum q_{t_1}^{x_1} \cdots q_{t_l}^{x_l} q_{t_{r+1}}^{x_{r+1}}\) over all tuples \((x_1, \ldots , x_l, x_{r+1})\) such that \(x_1 + \cdots + x_l + x_{r+1} = x'\) equals \(q_{t'}^{x'}\), and by Lemma 9 there exist constants \(c, \rho > 0\) such that

for t so large that \(t^{\sigma } \le \rho t\). Therefore,

where \(P (t) {:}=\exp \bigg ( \dfrac{c'}{t} \Big ( 2 \Vert y\Vert \Vert y - x' \Vert + \Vert y\Vert (t-t') + \ln (t) (t-t') \Big ) \bigg )\) for a constant \(c' > 0\). In the second line of the estimate above, we also used that \(\Vert \varphi \Vert \le \rho _2 \Vert y\Vert /t\).
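The convolution identity used in this step, namely that the sum of products of transition probabilities over all tuples with a fixed total displacement \(x'\) equals \(q_{t'}^{x'}\), can be checked by brute force on a small instance. The following is a minimal sketch, assuming that \(q_t^x\) is the t-step transition probability of the simple random walk on \(\mathbb {Z}^d\); the helper names `step_kernel`, `convolve`, `transition`, and `tuple_sum` are hypothetical and do not appear in the paper.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product


def step_kernel(d):
    """One-step law of the simple random walk on Z^d (assumed model)."""
    kernel = {}
    for k in range(d):
        for sign in (1, -1):
            e = tuple(sign if j == k else 0 for j in range(d))
            kernel[e] = Fraction(1, 2 * d)
    return kernel


def convolve(p, q):
    """Law of the sum of two independent displacements with laws p and q."""
    out = defaultdict(Fraction)
    for (x, px), (z, qz) in product(p.items(), q.items()):
        out[tuple(a + b for a, b in zip(x, z))] += px * qz
    return dict(out)


def transition(d, t):
    """Dictionary x -> q_t^x, obtained by t-fold convolution (t >= 1)."""
    law = step_kernel(d)
    for _ in range(t - 1):
        law = convolve(law, step_kernel(d))
    return law


def tuple_sum(laws, target):
    """Sum of products q_{t_1}^{x_1} ... q_{t_n}^{x_n} over x_1 + ... + x_n = target."""
    total = Fraction(0)
    for combo in product(*(law.items() for law in laws)):
        position = tuple(sum(c) for c in zip(*(x for x, _ in combo)))
        if position == target:
            weight = Fraction(1)
            for _, p in combo:
                weight *= p
            total += weight
    return total


# Hypothetical small instance: d = 2, times (2, 3, 4), so t' = 9.
laws = [transition(2, s) for s in (2, 3, 4)]
print(tuple_sum(laws, (1, 0)) == transition(2, 9)[(1, 0)])
```

Exact rational arithmetic is used so that the comparison is an equality rather than a floating-point approximation.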

Together with Lemma 14 from the appendix, we obtain the following estimate on \(M^{t'}_{t,r,l} (x')\):

where \(C > 0\) is a constant. It remains to bound \((t / t')^{d/2} P(t)\). We estimate the expressions appearing in \((t / t')^{d/2} P(t)\) as follows:

where \(\sum _{j=l+1}^r \Vert x_j \Vert \le \sum _{j=l+1}^r t_j\) is valid under the assumption that \(q_{t_1}^{x_1} \ldots q_{t_{r+1}}^{x_{r+1}} > 0\). Then, using \(\Vert y\Vert \le t^{\sigma }\), we obtain

for some constant \(C' > 0\). This completes the proof of Claim 4.1. \(\square \)

4.3 Proof of the Central Lemma, Part 3: The Main Contribution

Let t be so large that \(k(t) \ge 1\) and let \(y \in \mathbb {Z}^d\) such that \(\Vert y \Vert \le t^{\sigma }\) and \(q^y_t > 0\). For \(\textbf{i}\in I_1(t,r,m)\) and \(\textbf{z}\in (\mathbb {Z}^d)^r\), define

where the factor with the hat is absent; in other words, we set the transition probability corresponding to the huge gap equal to 1.

Now decompose \(B_3^{y,t}\) further, depending on whether the huge gap is located (1) at the beginning, (2) in the middle, or (3) at the end, as follows:

where

and the error terms are given by

Notice that \(F_1^{y,t}, F_2^{y,t}, F_3^{y,t}\) are well-defined even if \(q^y_t = 0\). We first show that the contribution from each error term is negligible.

Lemma 11

There exists \(\theta > 0\) such that

Proof

It is enough to show that there exists \(\theta > 0\) such that for \(i \in \{1,2,3\}\),

For t so large that \(k(t) \ge 1\) and for \(y \in \mathbb {Z}^d\) such that \(\Vert y \Vert \le t^{\sigma }\) and \(q^y_t > 0\), one has

where \(a_i(r) {:}=1\) if \(i=1,3\), \(a_2(r) {:}=(r-1) \mathbbm {1}_{r \ge 2}\),

and where the sum \(\sum _{\textbf{x}}\) is taken over all \(\textbf{x}= (x_1, \ldots , x_r) \in (\mathbb {Z}^d)^r\).

The convergence in (31) relies on \(q_{t-t_1 -\cdots -t_r}^{y-x_1 -\cdots - x_r}\) being close to \(q_t^y\) in the following sense: Let \(\rho > 0\) be the constant from Lemma 9, and assume that t is so large that \(t^{\sigma } \le \rho t\). Let \(1 \le r \le k(t)\), \(t_1, t_r \in \mathbb {N}_0\), \(t_2, \ldots , t_{r-1} \in \mathbb {N}\) with \(t_1 + \cdots + t_r \le r t^{\xi }\). Without loss of generality, let \(x_1, \ldots , x_r \in \mathbb {Z}^d\) such that \(q_{t_1}^{x_1} \ldots q_{t_r}^{x_r} > 0\), as otherwise the contribution to \(\langle (L_i^{y,t})^2 \rangle \) is zero.

Claim 4.2

There exists a constant \(c_3 > 0\) such that

Using this claim we can bound \(\sup _{y: \Vert y\Vert \le t^{\sigma }, q^y_t > 0} \langle (L_i^{y,t})^2 \rangle \) by

Let \(\theta \in (0, \min \{2/5, 1 - \sigma - \xi \})\). The definition of the Landau symbol \(O(t^{-\frac{2}{5}})\) implies that there exist constants \(C, T > 0\) such that for every \(t > T\),

where, in the third line, we used that \((e^x - 1)/x \le e^x\) for every \(x > 0\). Hence,

For \(\varphi \in (\alpha _d \lambda , 1)\) and t so large that \(\alpha _d \lambda \exp (c_3 t^{\sigma + \xi -1}) \le \varphi \), the series on the right is dominated by the convergent series \(\sum \varphi ^r (c_3 r^2 + Cr)\). Dominated convergence and (32) then imply (31) for \(\theta \in (0, \min \{2/5, 1 - \sigma - \xi \})\).
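Convergence of the dominating series \(\sum \varphi ^r (c_3 r^2 + Cr)\) for \(\varphi < 1\) follows from the standard closed forms \(\sum _{r \ge 1} r \varphi ^r = \varphi /(1-\varphi )^2\) and \(\sum _{r \ge 1} r^2 \varphi ^r = \varphi (1+\varphi )/(1-\varphi )^3\). The sketch below compares a long partial sum with the closed form; the numerical values of \(\varphi \), \(c_3\), and C are hypothetical placeholders, and the summation is assumed to start at \(r = 1\).

```python
import math


def dominating_series(phi, c3, C, terms=10_000):
    """Partial sum of sum_{r>=1} phi^r (c3 r^2 + C r)."""
    return sum(phi**r * (c3 * r * r + C * r) for r in range(1, terms + 1))


def closed_form(phi, c3, C):
    """Value of the full series for 0 <= phi < 1."""
    return c3 * phi * (1 + phi) / (1 - phi) ** 3 + C * phi / (1 - phi) ** 2


# Hypothetical parameter values, chosen only for illustration.
phi, c3, C = 0.9, 2.0, 3.0
print(math.isclose(dominating_series(phi, c3, C), closed_form(phi, c3, C), rel_tol=1e-9))
```

The closed form blows up like \((1-\varphi )^{-3}\) as \(\varphi \rightarrow 1\), which is why \(\varphi \) has to be fixed strictly below 1 before t is sent to infinity.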

To complete the proof of Lemma 11, it remains to prove Claim 4.2.

Proof of Claim 4.2

Let \(x' {:}=x_1+\cdots + x_r\) and \(t' {:}=t_1 + \cdots + t_r\). Observe that \(q_{t - t'}^{y - x'} > 0\): Indeed, notice first that \(t - t' \ge t - k(t) t^{\xi }\) since \(t' \le r t^\xi \). As k(t) is of order \(t^{\kappa _1}\) and \(\kappa _1 + \xi < 1\), the term \(t - t'\) is of order t. Moreover,

which is of smaller order than \(t - t'\). Finally, \(t - t'\) and \(\Vert y - x' \Vert _1\) have the same parity because \(q^y_t > 0\) and \(q_{t_1}^{x_1} \ldots q_{t_r}^{x_r} > 0\).
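The parity statement can be spelled out as follows. This is a short worked computation, assuming a nearest-neighbour walk, so that \(q_s^z > 0\) forces \(\Vert z \Vert _1 \equiv s\) modulo 2 (with \(x_j = 0\) whenever \(t_j = 0\)).

```latex
% For an integer vector v one has |v_k| \equiv v_k (mod 2),
% hence \Vert v \Vert_1 \equiv \sum_k v_k (mod 2); also -a \equiv a (mod 2).
\begin{align*}
  \Vert y - x' \Vert_1
    &\equiv \sum_{k=1}^d (y_k - x'_k)
     \equiv \sum_{k=1}^d y_k + \sum_{j=1}^r \sum_{k=1}^d (x_j)_k \\
    &\equiv \Vert y \Vert_1 + \sum_{j=1}^r \Vert x_j \Vert_1
     \equiv t + \sum_{j=1}^r t_j
     \equiv t - t' \pmod 2 .
\end{align*}
```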

Now, we derive an upper bound on \(|q_{t-t'}^{y-x'} - q_t^y |/{q_t^y}\). If \(q_{t-t'}^{y-x'} \ge q_t^y\), then combining Lemma 9 with the estimate \(\Vert x' \Vert \le t' \le r t^{\xi }\) gives

for some constant \(c_1 > 0\). If \(q_t^y > q_{t-t'}^{y-x'}\), we argue as follows: Let \(t \in \mathbb {N}\) be so large that \( t^{\sigma } + k(t) t^{\xi } \le \rho (t-t'). \) Then

and Lemma 9 with the estimate \(k(t) t^{\xi } \lesssim t^{\kappa _1 + \xi } \le t^{\sigma }\) (coming from \(\xi< \kappa _2 - \kappa _1 < \sigma - \kappa _1\)) yields

Using that \((a-1)^2 \le a^2 - 1\) for every \(a \ge 1\), in either case (33) or (34), we have the following bound:

where \(c_3 > 0\) is a constant. \(\square \)
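For completeness, the elementary inequality \((a-1)^2 \le a^2 - 1\) invoked in the last step of this proof follows from a one-line computation:

```latex
% Difference of the two sides, valid for every real a >= 1.
\[
  (a^2 - 1) - (a - 1)^2 = 2a - 2 = 2(a - 1) \ge 0
  \qquad \text{for } a \ge 1,
\]
% with equality exactly when a = 1.
```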

In order to deal with the \(F_i\)’s defined in (30), the strategy is to first define suitable truncations of the partition functions. Fix \(\xi _1, \xi _2\) satisfying

and notice that since \(\xi + \sigma < 1\), we have \(\xi _1 + \sigma < 1\). Now set

and

where \(q^y_{t, \widehat{r+1}}(\textbf{i}, \textbf{z})\) and \(q^y_{t, {\hat{1}}}(\textbf{i}, \textbf{z})\) are defined according to (29), with \(q^y_{t, \widehat{r+1}}(\textbf{i}, \textbf{z})\) not depending on y. Notice that \(T_{0,0}^t\) and \(T_0^{y,t}\) are truncations of the partition functions \(Z_{0,0}^t\) and \(Z_0^{y,t}\), respectively (see (4) and (3)). The convergence statement in (21) will follow from the lemmas below.

Lemma 12

There exists \(\theta > 0\) such that

Lemma 13

There exists \(\theta > 0\) such that

The convergence statement in (35) is shown in Sect. 5.1. We show the convergence statements in (36) and (37) in Sect. 5.2, and the one in (38) in Sect. 5.3.

5 Main Contribution: Proofs of Lemmas 12 and 13

5.1 Proof of Lemma 12, (35): Convergence for One Huge Gap in the Middle

One has

Define the set

and its complement in \(I_1(t,r,m)\)

Recall the notation \(q^{y}_{t,{{\hat{m}}}}(\textbf{i},\textbf{z})\) from (29). Making the change of summation indices \(r{:}=r+s\) and \(m{:}=r+1\) in (39), one has

The identity in (40) allows us to rewrite \(F_2^{y,t} - (T_{0,0}^t - 1) (T_0^{y,t} - 1)\) as \( f^{y,t}_{2;1} + f^{y,t}_{2;2} + f^{y,t}_{2;3}, \) where

\(R^1 {:}=\{r \in \mathbb {N}: \ 2 \le r \le t^{\xi _1} + 2\}\), \(R^2 {:}=\{r \in \mathbb {N}: \ t^{\xi _1} + 2 < r \le 2 t^{\xi _1} + 2\}\), and \(R^3 {:}=\{r \in \mathbb {N}: \ 2 t^{\xi _1} + 2 < r \le k(t)\}\).

In order to prove (35), it is then enough to show existence of \(\theta > 0\) such that for \(i=1,2,3\),

For \(i = 1, 3\), one has

where \(H^1(t,r,m) {:}=W(t,r,m)\) and \(H^3(t,r,m) {:}=I_1(t,r,m)\). Notice furthermore that \(\langle (f_{2;2}^{y,t})^2 \rangle \) is bounded by (42) with \(i=2\) and \(H^2(t,r,m) {:}=I_1(t,r,m)\). Now, we take up cases \(i=1,2,3\) separately.

Case \(i = 1\). Since for \(\textbf{i}\in W(t,r,m)\),

the expression in (42) is dominated by

This implies (41) for \(\theta < (\xi _2-\xi _1) (\tfrac{d}{2}-1)\).

Case \(i=2\). The expression in (42) is dominated by

From this estimate we deduce (41) for every \(\theta > 0\).

Case \(i = 3\). The expression in (42) is dominated by

which converges to 0 as \(t\rightarrow \infty \) faster than any polynomial by the same argument as in the case \(i=2\).

5.2 Proof of Lemma 12, (36) and (37): Convergence for One Huge Gap at the Start or the End

We only show the convergence statement in (37) as the proof of (36) is analogous. Write

where for \(i= 1,2\),

and \(R^1 {:}=\{r \in \mathbb {N}: \ 1 \le r \le t^{\xi _1}+1\}\), \(R^2 {:}=\{r \in \mathbb {N}: \ t^{\xi _1}+1 < r \le k(t) \}\),

\(H^1(t,r) {:}=\left\{ \textbf{i}= (i_1, \ldots , i_r) ~:~ \begin{array}{c} \displaystyle 0 \le i_1< \cdots < i_{r} \le r t^{\xi } \\ \displaystyle i_r > t^{\xi _2} \end{array} \right\} , \) and \( H^2(t,r) {:}=I_1(t,r,r+1). \)

For \(i = 1,2\), one has

Convergence in the cases \(i=1\) and \(i=2\) then follows as in the proof of (35).

5.3 Proof of Lemma 13: Convergence to Limiting Partition Functions

Let us first show that the truncated partition function \(T_{0,0}^t\) converges to the limiting partition function \(Z_{0,0}^\infty \) in the \(L^2\) sense and obtain a rate of convergence. We will prove that there exists \(\theta > 0\) such that

One has

where

It is then enough to show existence of \(\theta > 0\) such that

We have

so (44) holds for \(i=2\) and for every \(\theta > 0\). Moreover,

so (44) holds for \(i=1\) and \(\theta \in (0, (\xi _2 - \xi _1) (\tfrac{d}{2}-1))\). This implies (43). Combining (43) with Theorem 2, one obtains in particular that there exists \(\theta > 0\) such that

To complete the proof of Lemma 13, notice that

Therefore, we obtain the desired result by applying the Cauchy-Schwarz Inequality to the two summands on the right-hand side, and using (45) together with

Data Availability

Data sharing does not apply to this article as no datasets were generated or analyzed during the current study.

References

Imbrie, J.Z., Spencer, T.: Diffusion of directed polymers in a random environment. J. Stat. Phys. 52(3), 609–626 (1988)

Bolthausen, E.: A note on the diffusion of directed polymers in a random environment. Commun. Math. Phys. 123(4), 529–534 (1989)

Sinai, Ya.G.: A remark concerning random walks with random potentials. Fundamenta Mathematicae 147(2), 173–180 (1995)

Carmona, P., Hu, Y.: On the partition function of a directed polymer in a Gaussian random environment. Probab. Theory Relat. Fields 124(3), 431–457 (2002)

Comets, F., Shiga, T., Yoshida, N.: Directed polymers in a random environment: path localization and strong disorder. Bernoulli 9(4), 705–723 (2003)

Kifer, Yu.: The Burgers equation with a random force and a general model for directed polymers in random environments. Probab. Theory Relat. Fields 108(1), 29–65 (1997)

Bec, J., Khanin, K.M.: Burgers turbulence. Phys. Rep. 447(1), 1–66 (2007)

Bakhtin, Yu., Cator, E., Khanin, K.M.: Space-time stationary solutions for the Burgers equation. J. Am. Math. Soc. 27, 193–238 (2014)

Bakhtin, Yu., Li, L.: Thermodynamic limit for directed polymers and stationary solutions of the Burgers equation. Commun. Pure Appl. Math. 72(3), 536–619 (2019)

Bakhtin, Yu., Li, L.: Zero temperature limit for directed polymers and inviscid limit for stationary solutions of stochastic Burgers equation. J. Stat. Phys. 172(5), 1358–1397 (2018)

Lawler, G.F., Limic, V.: Random Walk: A Modern Introduction. Cambridge Studies in Advanced Mathematics, Cambridge University Press, Cambridge (2010)

Lawler, G.F.: Random Walk and the Heat Equation. Student Mathematical Library, American Mathematical Society, Providence (2010)

Acknowledgements

Part of this paper was written during two-week stays at Mathematisches Forschungszentrum Oberwolfach, in 2018, and Centre International de Rencontres Mathématiques, in 2019, as part of their respective research in pairs programs. We thank both institutions for their kind hospitality. TH gratefully acknowledges support from the Einstein Foundation through grant IPF-2021-651 and, until 2020, from the Swiss National Science Foundation through grant 200021 – 175728/1.

Funding

Open Access funding enabled and organized by Projekt DEAL.

Contributions

All authors contributed equally to the present work. The authors are listed in alphabetical order. In particular, the order does not in any way reflect the extent to which the authors contributed to the present work.

Ethics declarations

Financial or Non-financial

The authors of this article declare that they have no relevant financial or non-financial interests related to the work submitted for publication.

Additional information

Communicated by Eric A. Carlen.

Appendices

Appendix A Proofs of Estimates for Transition Probabilities

A.1 Proof of Lemma 6

For \(t \in \mathbb {N}_0\), set \(\gamma _t^* {:}=\gamma _{2t}\). Then \(\gamma ^*\) is a random walk on the lattice \((\mathbb {Z}^d)_{\text {ev}}\) consisting of those points in \(\mathbb {Z}^d\) whose coordinate sum is even. If \(\{ e_j \}_{1 \le j \le d}\) is the standard basis for \({\mathbb {R}}^d\), then \(\{e_1 + e_j: 1 \le j \le d\}\) is a basis for \((\mathbb {Z}^d)_{\text {ev}}\). Let \(L : {\mathbb {R}}^d \rightarrow {\mathbb {R}}^d\) be the linear transformation mapping \(e_1 + e_j\) to \(e_j\) for \(1 \le j \le d\), and define \({\tilde{\gamma }}_t {:}=L \gamma _t^*\). Then, \({{\tilde{\gamma }}}\) is an aperiodic, irreducible, symmetric random walk on \(\mathbb {Z}^d\) with bounded increments, so it satisfies the conditions of Theorem 2.3.11 in [11]. Thus, there exists \(\rho > 0\) such that for every \(i \in \mathbb {N}\) and \(z \in \mathbb {Z}^d\) satisfying \(\Vert z\Vert < \rho i\), we have

Now, fix \(\sigma \in (\tfrac{3}{4}, 1)\), \({\tilde{\sigma }} \in (\sigma ,1)\), and let \(T \in \mathbb {N}\) be so large that \(1 + t^{\sigma } < (t-1)^{{{\tilde{\sigma }}}}\) and \(t^{{{\tilde{\sigma }}}} < \tfrac{\rho }{2 |\!|\!| L |\!|\!|} t\) for every \(t \ge T\), where \(|\!|\!| L |\!|\!|\) is the operator norm of L. We distinguish between two cases: t is either even or odd.
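The change of lattice at the start of this proof can be made concrete. In coordinates, the linear map sending \(e_1 + e_j\) to \(e_j\) for \(1 \le j \le d\) is \(L(x) = \big ( (x_1 - x_2 - \cdots - x_d)/2, x_2, \ldots , x_d \big )\), which is integer-valued on points with even coordinate sum. The sketch below, which assumes that \(\gamma \) is the simple random walk and uses hypothetical helper names, lists the increments of \({\tilde{\gamma }}\) and checks that they include 0 and all \(\pm e_k\) and are symmetric under negation, consistent with \({\tilde{\gamma }}\) being aperiodic, irreducible, and symmetric.

```python
from itertools import product


def L(x):
    """Coordinate form of the linear map sending e_1 + e_j to e_j (1 <= j <= d)."""
    first = x[0] - sum(x[1:])
    assert first % 2 == 0, "x must have even coordinate sum"
    return (first // 2,) + tuple(x[1:])


def unit_steps(d):
    """The 2d vectors +e_k and -e_k."""
    return [tuple(s if j == k else 0 for j in range(d))
            for k in range(d) for s in (1, -1)]


def two_step_increments(d):
    """Possible increments of gamma*_t = gamma_{2t} (simple random walk assumed)."""
    return {tuple(a + b for a, b in zip(e, f))
            for e, f in product(unit_steps(d), repeat=2)}


d = 3
image = {L(x) for x in two_step_increments(d)}
print((0,) * d in image)                                  # zero increment: aperiodicity
print(all(e in image for e in unit_steps(d)))             # unit steps: irreducibility
print(all(tuple(-c for c in v) in image for v in image))  # closed under negation
```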

Even case. If \(t=2m\) for some \(m \in \mathbb {N}\), then we can prove a slightly stronger statement:

Claim A.1

There exist constants \(c_1, c_2 > 0\), independent of \(\sigma \) and \({\tilde{\sigma }}\), such that (14) holds for every even \(t \ge T\) and \(y \in \mathbb {Z}^d\) with \(q^y_{t} > 0\) and \(\Vert y\Vert \le {t}^{{{\tilde{\sigma }}}}\).

The difference from the conclusion of Lemma 6 is that the estimate holds for \(\Vert y \Vert \le t^{{{\tilde{\sigma }}}}\) and not just for \(\Vert y\Vert \le t^{\sigma }\). To prove this claim, fix \(t=2m \ge T\) and \(y \in \mathbb {Z}^d\) such that \(q^y_{2m} > 0\) and \(\Vert y\Vert \le (2m)^{{{\tilde{\sigma }}}}\). Then \(q^y_{2m} = {{\tilde{q}}}^{Ly}_m\). Since \( \Vert Ly \Vert \le |\!|\!| L |\!|\!| \, \Vert y\Vert \le |\!|\!| L |\!|\!| \, t^{{{\tilde{\sigma }}}} < \rho m, \) one has

for some constants \(c_1, c_2 > 0\).

Odd case. Now, suppose \(t = 2m+1 \ge T\) for some \(m \in \mathbb {N}\). Fix \(y \in \mathbb {Z}^d\) such that \(q^y_{2m+1} > 0\) and \(\Vert y\Vert \le (2m+1)^{\sigma }\). Let E be the set of standard unit vectors in \(\mathbb {R}^d\) and their additive inverses. Then

Since \(\Vert y-z\Vert \le 1 + t^{\sigma } < (t-1)^{{{\tilde{\sigma }}}} = (2m)^{{{\tilde{\sigma }}}}\) and \(q^{y-z}_{2m} > 0\) for every \(z \in E\), we can use Claim A.1 to bound \(q^y_{2m+1}\) from below as follows: There exist \(c'_1, c'_2 > 0\) such that

In addition to \(t \ge T\), assume that t is so large that

Since \( \Vert y - e_1 \Vert ^2 = \Vert y\Vert ^2 + 1 - 2 y \cdot e_1 \le \Vert y\Vert ^2 + 1 + 2 \Vert y\Vert , \) it follows that

Plugging this into the right-hand side of (A1), we obtain the desired estimate.

A.2 Proof of Lemma 7

Recall from Sect. 3 that \(\theta ^0 = (0,\ldots ,0)\) and \(\theta ^1 = (\pi ,\ldots ,\pi )\). For \(\varepsilon > 0\) and \(j\in \{0,1\}\), let \({\mathcal {D}}_j^\varepsilon {:}=\{ \theta \in \mathbb {R}^d ~:~ \Vert \theta - \theta ^j \Vert < \varepsilon \}\). Let \(\varphi \) be a linear functional on \(\mathbb {R}^d\) such that \(|\varphi (x) |\le \Vert x \Vert , x \in \mathbb {R}^d\), and let \(\Phi \) be the corresponding function defined in (15).

Claim A.2

There exist \(\varepsilon , \delta > 0\) such that, for \(j \in \{0,1\}\),

Proof of Claim A.2

For \(j \in \{0,1\}\), define scaled versions of the gradient vector and the Hessian matrix of \(\Phi \) at \(\theta ^j\):

A simple computation shows that the matrix \(H_j\) is diagonal, and that for every \(l \in \{ 1, \ldots , d \}\), the l-th component of \(G_j\) and the (l, l)-entry of \(H_j\) are, respectively,

If we Taylor expand \(\Phi \) around \(\theta ^j\), we obtain

Here and in the sequel, \(g(\theta ) = O(f(\theta ))\) means there exists a constant \(c > 0\), independent of \(\varphi \), such that \(|g(\theta ) |\le c f(\theta )\). In the Taylor expansion above, the constant c corresponding to the error term \(O\big ( \Vert \theta - \theta ^j \Vert ^3 \big )\) may be chosen independently of \(\varphi \) because of the assumption that \(\Vert \varphi \Vert \le 1\). Notice from (A2) that \(G_0 = G_1\) and \(H_0 = H_1\), so in order to prove Claim A.2, it is enough to consider the case \(j = 0\), where \(\theta ^j = (0, \ldots , 0)\). If we write \(\theta = (\theta _1, \ldots , \theta _d)\), then, using Jensen’s Inequality for sums,

Using the expression for \(H_0\) in (A2) as well as \(\Vert \varphi \Vert \le 1\), we obtain

Thus, there exist \(\varepsilon > 0\) and a constant \(c > 0\) such that for every \(\theta \) with \(\Vert \theta \Vert \le \varepsilon \),

Since the map \(\theta \mapsto \big |\Phi (\theta ) / \Phi (0) \big |\) is continuous and strictly less than 1 for every \(\theta \in \mathcal {C}\) except \(\theta ^0, \theta ^1\), it follows that

In fact, one even has \(\sup _{\Vert \varphi \Vert \le 1} {\mathcalligra{s}}(\varphi ) < 1\). Hence, if we choose \({\tilde{c}} \in (0, c)\) so small that \(\big ( 1 - {\tilde{c}} \Vert \theta \Vert ^2 \big ) \ge (\sup _{\Vert \varphi \Vert \le 1} {\mathcalligra{s}}(\varphi ))^2\) for every \(\theta \in \mathcal {C}\), then Claim A.2 follows with \(\delta {:}={\tilde{c}} / 2\).

For \(t \in \mathbb {N}\), let \(\widehat{\Phi ^t}\) be the Fourier transform of \(\Phi ^t\); i.e.,

Since \(\Phi (\theta )^t = \textbf{E}\left[ e^{i \theta \cdot \gamma _t} e^{\varphi (\gamma _t)} \right] ,\) one has

Now, we estimate with the help of (17) and Claim A.2:

Finally, for some constant \(C > 0\),

A.3 Proof of Lemma 8

For \(j \in \{0,1\}\), let \({\mathcal {B}}_j {:}=\{ \theta \in \mathcal {C}~:~ \Vert \theta - \theta ^j \Vert \le t^{-2/5} \}\). Recall from (A3) that for every \(z \in \mathbb {Z}^d\), \(t \in \mathbb {N}\), and for every linear functional \(\varphi \) on \(\mathbb {R}^d\) satisfying \(\Vert \varphi \Vert \le 1\), one has

where

Then we find

We now estimate the expression on the right-hand side. First, we show that the linear functional \(\varphi \) can be chosen in such a way that for \(j \in \{0,1\}\),

The idea is to choose \(\varphi \) as a function of z and t in such a way that the linear term in the Taylor expansion of \(\Phi (\theta ) e^{- i z \cdot \theta /t}\) around \(\theta ^{j}\) vanishes, i.e.,

If we denote the kth component of z by \(z_k\), this is equivalent to

by virtue of (A2). Let \(F:\mathbb {R}^d \rightarrow \mathbb {R}^d\) be given by

For \(r > 0\) and \(x \in \mathbb {R}^d\), let \(B_r(x)\) denote the open Euclidean ball of radius r centered at x. Since \(F(0) = 0\) and

the Inverse Function Theorem yields existence of \(\rho _1 > 0\) and an open neighborhood U of 0 such that \(F: U \rightarrow B_{\rho _1}(0)\) is a diffeomorphism. Therefore, for every \(t \in \mathbb {N}\) and \(z \in \mathbb {Z}^d\) with \(\Vert z\Vert < \rho _1 t\), there exists \(\varphi \in U\) such that \(F(\varphi ) = z/t\). Since \(F^{-1}\) is differentiable and \(F^{-1} (0) = 0\), there exists \(\rho _2 >0\) such that

Without loss of generality, we may assume that \(\rho _1 \rho _2 \le 1\) so that \(\Vert \varphi \Vert \le 1\).

Fix \(t \in \mathbb {N}\), \(z \in \mathbb {Z}^d\) such that \(\Vert z\Vert \le \rho _1 t\) and \(q^z_t > 0\), and the corresponding \(\varphi \in \mathbb {R}^d\) such that \(F(\varphi ) = z/t\). We identify \(\varphi \) with the linear functional mapping \(e_k\) to \(\varphi _k\) for \(1 \le k \le d\). For this choice of \(\varphi \), the linear term in the Taylor expansion of \(\Phi (\theta ) e^{-i z \cdot \theta /t}\) vanishes, so we have for \(j \in \{0,1\}\) and \(\theta \in {\mathcal {B}}_j\) (\(\Vert \theta - \theta ^j \Vert \le t^{-\frac{2}{5}}\))

where \(A_j\) is the quadratic form in the Taylor expansion of \(\Phi (\theta ) e^{-i z \cdot \theta /t}\). The error term \(O(t^{-6/5})\) is complex-valued, whereas the entries of \(A_j \big / \Phi (\theta ^j) e^{-i z \cdot \theta ^j/t}\) are real numbers. Let \(x_j(\theta )\) and \(y_j(\theta )\) denote respectively the real and imaginary part of

Then the left-hand side of (A5) can be written as follows:

Here, in the case \(j=1\), we used the assumption that \(q^z_t > 0\), which implies that t and \(\Vert z\Vert _1\) have the same parity: as \(t \equiv \Vert z \Vert _1 \pmod 2\), one has \(\Phi (\theta ^1)^t e^{-i z \cdot \theta ^1} = \Phi (0)^t (-1)^t e^{- i \pi \Vert z \Vert _1} = \Phi (0)^t\). If we represent \(x_j(\theta ) + i y_j(\theta )\) in polar form, then the modulus is \(\big |\Phi (\theta ) / \Phi (0) \big |\) and the argument is of order \(O (t^{-6/5})\). As a result, the integrand in (A7) can be written as

which yields (A5).
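The parity identity \(\Phi (\theta ^1)^t e^{-i z \cdot \theta ^1} = \Phi (0)^t\) used in the case \(j=1\) can be checked numerically. The sketch below rests on an assumption: it takes \(\Phi (\theta ) = \textbf{E}\big [ e^{i \theta \cdot \gamma _1} e^{\varphi (\gamma _1)} \big ]\) for the simple random walk, which gives \(\Phi (\theta ) = \tfrac{1}{d} \sum _k \cosh (\varphi _k + i \theta _k)\); the definition (15) itself is not reproduced here, and the values of \(\varphi \), z, and t are hypothetical.

```python
import cmath
import math


def Phi(theta, phi):
    """Assumed form of Phi: E[exp(i theta.gamma_1 + phi(gamma_1))] for the simple random walk."""
    d = len(theta)
    return sum(cmath.cosh(p + 1j * th) for p, th in zip(phi, theta)) / d


def left_side(phi, z, t):
    """Phi(theta^1)^t * exp(-i z . theta^1) with theta^1 = (pi, ..., pi)."""
    theta1 = (math.pi,) * len(phi)
    return Phi(theta1, phi) ** t * cmath.exp(-1j * math.pi * sum(z))


def right_side(phi, t):
    """Phi(0)^t."""
    return Phi((0.0,) * len(phi), phi) ** t


# Hypothetical data: ||z||_1 = 7 and t = 9 have the same parity.
phi, z, t = (0.3, -0.2, 0.1), (2, -1, 4), 9
print(cmath.isclose(left_side(phi, z, t), right_side(phi, t), rel_tol=1e-9))
```

Under the same assumption, \(\Phi (0) = \tfrac{1}{d} \sum _k \cosh (\varphi _k) \ge 1\), and with t and \(\Vert z \Vert _1\) of opposite parity the two sides would differ by a sign.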

We continue estimating the expression on the right-hand side of (A4) by showing that for \(F(\varphi ) = z/t\), one also has

By Claim A.2, there exist \(\varepsilon , \delta > 0\) such that the left-hand side of (A8) is dominated by

Here we used that

As \(e^{-\delta t^{1/5}} \lesssim t^{-2/5} t^{-d/2}\), the estimate in (A8) follows once we show that

We have

where we used (A6). For \(\theta \in {\mathcal {B}}_0\), one has \(x_0 (\theta ) = \exp \big ((\theta \cdot A_0 \theta ) / \Phi (0) \big ) \big (1 + O (t^{-6/5}) \big )\), so we can continue the above chain of inequalities as follows:

where \(c > 0\) is some constant. Combining (A4), (A5), and (A8) yields

and hence

To show that

one simply combines (A10) with (A9) and (17).

A.4 Proof of Lemma 9

Let \(\rho {:}=\rho _1\) and \(\rho _2\) be as in Lemma 8, and let \(t, t' \in \mathbb {N}\), \(z, z' \in \mathbb {Z}^d\) such that \(\Vert z\Vert \le \rho t\) and \(q^z_t > 0\). Let \(\varphi \) be the linear functional from Lemma 8 that corresponds to t and z, and for which \(\Vert \varphi \Vert \le \rho _2 \Vert z\Vert /t\) and

We consider two cases: \(t' > t\) and \(t' \le t\).

Case “\(t' > t\)”. By (A3) and (16), one has

Furthermore,

The estimate in (A11) then implies

Case “\(t' \le t\)”. If \(t' \le t\), the function \(x \mapsto x^{t/ t'}\) is convex, and Jensen’s Inequality implies

where \(J_t\) was defined in (A9). Since \(J_t \gtrsim t^{-d/2}\),

for some constants \(c_2, c_3>0\). Combining (A12) and (A13), we obtain

Together with (A11), this yields

for some constant \(c > 0\).

Appendix B A Calculus Estimate

Lemma 14

There exists \(c > 0\) such that for every \(t \in \mathbb {N}\), \(l \in \mathbb {N}_0\), and \(M > 0\),

The sum on the left-hand side is taken over all positive integers \(t_1, \ldots , t_{l+1}\) that satisfy the two conditions under the summation sign.

Proof

We choose

where \(\zeta \) is the Riemann Zeta Function, and prove the statement by induction. In the base case \(l=0\), the left-hand side of (B14) is either zero (if \(t < M\)), or becomes

In the induction step, suppose that (B14) holds for some \(l \in \mathbb {N}_0\). Then,

For every \(t'\),

by induction hypothesis. Hence, the right-hand side of (B15) is bounded from above by

We have

If \(t' + t_{l+2} = t\) and \(t' \ge t_{l+2}\), it follows that \(t' \ge \tfrac{t}{2}\), so the expression on the right-hand side is bounded from above by

If \(M \ge 2\), we have

If \(M < 2\),

The expression in (B17) is therefore less than \(c M^{1-\frac{d}{2}} t^{- \frac{d}{2}}\). Combining this estimate with (B16) yields

\(\square \)

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Hurth, T., Khanin, K., Navarro Lameda, B. et al. On a Factorization Formula for the Partition Function of Directed Polymers. J Stat Phys 190, 165 (2023). https://doi.org/10.1007/s10955-023-03172-w

DOI: https://doi.org/10.1007/s10955-023-03172-w