Abstract

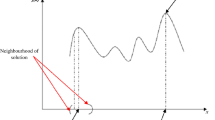

A general class of non-monotone line search algorithms has been proposed by Sachs and Sachs (Control Cybern 40:1059–1075, 2011) for smooth unconstrained optimization, generalizing various non-monotone step size rules such as the modified Armijo rule of Zhang and Hager (SIAM J Optim 14:1043–1056, 2004). In this paper, the worst-case complexity of this class of non-monotone algorithms is studied. The analysis is carried out in the context of non-convex, convex and strongly convex objectives with Lipschitz continuous gradients. Despite the nonmonotonicity in the decrease of function values, the complexity bounds obtained agree in order with the bounds already established for monotone algorithms.
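
To make the kind of step size rule discussed here concrete, the following is a minimal sketch of a gradient method that uses the nonmonotone Armijo rule of Zhang and Hager, in which a trial step is accepted when the new function value lies sufficiently below a weighted average `c` of past function values rather than below the current value. The function name, the steepest-descent direction, the backtracking factor and the default values of `eta`, `delta` and `shrink` are assumptions chosen for illustration; this is not the authors' code and it does not reproduce the general class analyzed in the paper.

```python
import math


def nonmonotone_gradient_descent(f, grad, x0, eta=0.85, delta=1e-4,
                                 shrink=0.5, tol=1e-6, max_iter=10_000):
    """Illustrative steepest descent with a Zhang-Hager style nonmonotone
    Armijo rule. All names and default values are hypothetical choices."""
    x = list(x0)
    fx = f(x)
    c, q = fx, 1.0  # reference value C_0 = f(x_0) and weight Q_0 = 1
    for k in range(max_iter):
        g = grad(x)
        gnorm2 = sum(gi * gi for gi in g)
        if math.sqrt(gnorm2) <= tol:
            return x, k
        d = [-gi for gi in g]   # steepest-descent direction (an assumption)
        slope = -gnorm2         # directional derivative g^T d < 0
        alpha = 1.0
        while True:
            x_new = [xi + alpha * di for xi, di in zip(x, d)]
            f_new = f(x_new)
            # Nonmonotone Armijo test: compare against the average c,
            # not against the current function value fx.
            if f_new <= c + delta * alpha * slope:
                break
            alpha *= shrink     # backtrack
        x, fx = x_new, f_new
        # Update the weighted average of function values.
        q_next = eta * q + 1.0
        c = (eta * q * c + fx) / q_next
        q = q_next
    return x, max_iter


if __name__ == "__main__":
    # Hypothetical test problem: an ill-conditioned convex quadratic.
    f = lambda x: 0.5 * (x[0] ** 2 + 10.0 * x[1] ** 2)
    grad = lambda x: [x[0], 10.0 * x[1]]
    x_star, iters = nonmonotone_gradient_descent(f, grad, [3.0, -2.0])
    print(x_star, iters)
```

In this sketch, setting `eta=0` makes `c` equal to the current function value after every iteration, so the rule reduces to the usual monotone Armijo condition; larger values of `eta` allow occasional increases in the function value.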

Notes

The MATLAB codes of all test problems considered are freely available at http://www.mat.univie.ac.at/~neum/glopt/moretest/.

The performance profiles were generated using the MATLAB code perf.m, freely available at http://www.mcs.anl.gov/~more/cops/.

References

Ahookhosh, M., Amini, K., Bahrami, S.: A class of nonmonotone Armijo-type line search method for unconstrained optimization. Optimization 61, 387–404 (2012)

Birgin, E.G., Martínez, J.M., Raydan, M.: Spectral projected gradient methods: review and perspectives. J. Stat. Softw. 60, 1–21 (2014)

Birgin, E.G., Gardenghi, J.L., Martínez, J.M., Santos, S.A., Toint, P.L.: Worst-case evaluation complexity for unconstrained nonlinear optimization using high-order regularized models. Math. Program. 163, 359–368 (2017)

Birgin, E.G., Martínez, J.M.: The use of quadratic regularization with a cubic descent condition for unconstrained optimization. SIAM J. Optim. 27, 1049–1074 (2017)

Cartis, C., Gould, N.I.M., Toint, P.L.: Adaptive cubic regularization methods for unconstrained optimization. Part II: worst-case function- and derivative-evaluation complexity. Math. Program. 130, 295–319 (2011)

Cartis, C., Gould, N.I.M., Toint, P.L.: Evaluation complexity of adaptive cubic regularization methods for convex unconstrained optimization. Optim. Methods Softw. 27, 197–219 (2012)

Cartis, C., Sampaio, P.R., Toint, P.L.: Worst-case evaluation complexity of first-order non-monotone gradient-related algorithms for unconstrained optimization. Optimization 64, 1349–1361 (2015)

Curtis, F.E., Robinson, D.P., Samadi, M.: A trust region algorithm with a worst-case iteration complexity of \(\mathcal{O}(\epsilon^{-3/2})\) for nonconvex optimization. Math. Program. doi:10.1007/s10107-016-1026-2 (2017)

Dodangeh, M., Vicente, L.N.: Worst case complexity of direct search under convexity. Math. Program. 155, 307–332 (2016)

Dolan, E., Moré, J.J.: Benchmarking optimization software with performance profiles. Math. Program. 91, 201–213 (2002)

Dussault, J.-P.: ARCq: a new adaptive regularization by cubics variant. Optim. Methods Softw. doi:10.1080/10556788.2017.1322080 (2017)

Grapiglia, G.N., Nesterov, Y.: Regularized Newton methods for minimizing functions with Hölder continuous Hessians. SIAM J. Optim. 27, 478–506 (2017)

Grapiglia, G.N., Yuan, J., Yuan, Y.: On the convergence and worst-case complexity of trust-region and regularization methods for unconstrained optimization. Math. Program. 152, 491–520 (2015)

Grapiglia, G.N., Yuan, J., Yuan, Y.: On the worst-case complexity of nonlinear stepsize control algorithms for convex unconstrained optimization. Optim. Methods Softw. 31, 591–604 (2016)

Grapiglia, G.N., Yuan, J., Yuan, Y.: Nonlinear stepsize control algorithms: complexity bounds for first- and second-order optimality. J. Optim. Theory Appl. 171, 980–997 (2016)

Gratton, S., Sartenaer, A., Toint, P.L.: Recursive trust-region methods for multiscale nonlinear optimization. SIAM J. Optim. 19, 414–444 (2008)

Grippo, L., Lampariello, F., Lucidi, S.: A nonmonotone line search technique for Newton’s method. SIAM J. Numer. Anal. 23, 707–716 (1986)

Gu, N., Mo, J.: Incorporating nonmonotone strategies into the trust region method for unconstrained optimization. Comput. Math. Appl. 55, 2158–2172 (2008)

Martínez, J.M., Raydan, M.: Cubic-regularization counterpart of a variable-norm trust-region method for unconstrained minimization. J. Global Optim. 68, 367–385 (2017)

Mo, J., Liu, C., Yan, S.: A nonmonotone trust-region method based on nonincreasing technique of weighted average of the successive function value. J. Comput. Appl. Math. 209, 97–108 (2007)

Moré, J.J., Garbow, B.S., Hillstrom, K.E.: Testing unconstrained optimization software. ACM Trans. Math. Softw. 7, 17–41 (1981)

Nesterov, Y.: Introductory Lectures on Convex Optimization: A Basic Course. Kluwer Academic Publishers, Dordrecht (2004)

Nesterov, Y., Polyak, B.T.: Cubic regularization of Newton method and its global performance. Math. Program. 108, 177–205 (2006)

Sachs, E.W., Sachs, S.M.: Nonmonotone line searches for optimization algorithms. Control Cybern. 40, 1059–1075 (2011)

Sun, W., Yuan, Y.: Optimization Theory and Methods: Nonlinear Programming. Springer Optimization and Its Applications, vol. 1. Springer, New York (2006)

Vicente, L.N.: Worst case complexity of direct search. EURO J. Comput. Optim. 1, 143–153 (2013)

Zhang, H.C., Hager, W.W.: A nonmonotone line search technique for unconstrained optimization. SIAM J. Optim. 14, 1043–1056 (2004)

Additional information

G. N. Grapiglia was partially supported by CNPq - Brazil, Grant 401288/2014-5.

Cite this article

Grapiglia, G.N., Sachs, E.W. On the worst-case evaluation complexity of non-monotone line search algorithms. Comput Optim Appl 68, 555–577 (2017). https://doi.org/10.1007/s10589-017-9928-3

DOI: https://doi.org/10.1007/s10589-017-9928-3