Abstract

Networks of the brain are composed of a very large number of neurons connected through a random graph and interacting after random delays, both of which depend on the anatomical distance between cells. In order to understand the role of these random architectures in the dynamics of such networks, we analyze the mesoscopic and macroscopic limits of networks with random correlated connectivity weights and delays. We address both averaged and quenched limits, and show propagation of chaos and convergence to a complex integral McKean-Vlasov equation with distributed delays. We then instantiate a completely solvable model illustrating the role of such random architectures in the emerging macroscopic activity. We particularly focus on the role of connectivity levels in the emergence of periodic solutions.


Notes

Note that the whole sequence of weights \((w_{ij}; 1\le i,j\le N)\) as well as the delays \((\tau_{ij}; 1\le i,j\le N)\) might be correlated. When these are related to the distance \(r_{ij}\) between i and j, correlations may arise from symmetry (\(r_{ij}=r_{ji}\)) or from the triangle inequality \(r_{ij}\le r_{ik}+r_{kj}\). The independence assumption nevertheless remains valid in that setting provided that the locations of the different cells are independent and identically distributed random variables.
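A minimal Python sketch of this situation, under illustrative assumptions that are not taken from the paper: cells are placed at i.i.d. uniform locations in the unit square, the weight is a Gaussian function of distance (hypothetical parameters `w0` and `sigma`), and the delay is the distance divided by a hypothetical conduction speed `c`. The function name `distance_dependent_architecture` is ours. The checks confirm that weights and delays inherit the symmetry \(r_{ij}=r_{ji}\) and the triangle inequality, which is where the correlations mentioned above come from.

```python
import numpy as np

def distance_dependent_architecture(n, w0=1.0, sigma=0.2, c=2.0, seed=0):
    """Hypothetical example: i.i.d. locations in [0, 1]^2, with weights and
    delays that are both deterministic functions of the distance r_ij."""
    rng = np.random.default_rng(seed)
    loc = rng.uniform(0.0, 1.0, size=(n, 2))        # i.i.d. cell locations
    diff = loc[:, None, :] - loc[None, :, :]
    r = np.linalg.norm(diff, axis=-1)                # r_ij = |x_i - x_j|
    w = w0 * np.exp(-r**2 / (2 * sigma**2))          # assumed weight profile
    tau = r / c                                      # assumed delay: distance / speed
    return loc, r, w, tau

loc, r, w, tau = distance_dependent_architecture(50)
# Symmetry r_ij = r_ji correlates w_ij with w_ji (and tau_ij with tau_ji).
print(np.allclose(r, r.T), np.allclose(w, w.T))
# Triangle inequality r_ij <= r_ik + r_kj, checked on all triples (i, k, j).
print(np.all(r[:, None, :] <= r[:, :, None] + r[None, :, :] + 1e-12))
```

Because the locations are drawn independently, conditioning on the location of a given neuron i leaves the entries of row i (for j different from i) independent, which is the sense in which the independence assumption survives.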

The term invariant by translation is chosen in reference to random variables \(\tau_{ij}\) and \(w_{ij}\) that are functions of the distance \(r_{ij}\) between neurons i and j: the law of this distance does not depend on the particular choice of neuron i (or of its location) if the neural field is invariant by translation in the usual sense.

This is always the case when considering bounded neural fields.

More precisely, taking a finite set of neurons \(\{i_1,\ldots,i_k\}\), the law of the process \((X_{t}^{i_{1},N},\ldots ,X_{t}^{i_{k},N},t\in[-\tau,T])\) converges in probability towards the law of \((\bar{X}_{t}^{1},\ldots,\bar{X}_{t}^{k},t\in[-\tau,T])\), where the processes \(\bar{X}^{l}\) are independent and have the law of \(X^{p(i_{l})}\) given by (2).

If the initial condition is not Gaussian, the solution to the mean-field equation will nevertheless be attracted exponentially fast towards the Gaussian solution described.
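A minimal sketch of this attraction, assuming a scalar linear (Ornstein-Uhlenbeck) dynamics \(dX_t=-\theta X_t\,dt+\sigma\,dW_t\) instead of the full mean-field equation. Since \(X_t=e^{-\theta t}X_0+G_t\) with \(G_t\) Gaussian and independent of \(X_0\), every cumulant of order four or higher is that of \(e^{-\theta t}X_0\), so the excess kurtosis decays like \(e^{-4\theta t}\). The code evaluates this in closed form for a uniform initial condition on \([-a,a]\); `theta`, `sigma` and `a` are illustrative parameters.

```python
import numpy as np

def excess_kurtosis_ou(t, theta=1.0, sigma=1.0, a=1.0):
    """Excess kurtosis of X_t for dX = -theta X dt + sigma dW, X_0 ~ Uniform[-a, a].

    X_t = exp(-theta t) X_0 + G_t with G_t Gaussian and independent of X_0, so the
    fourth cumulant is exp(-4 theta t) * kappa4(X_0); the Gaussian part adds none.
    """
    var0 = a**2 / 3.0                      # variance of Uniform[-a, a]
    kappa4_0 = -2.0 * a**4 / 15.0          # fourth cumulant of Uniform[-a, a]
    decay = np.exp(-theta * np.asarray(t))
    var_t = decay**2 * var0 + sigma**2 * (1 - decay**2) / (2 * theta)
    kappa4_t = decay**4 * kappa4_0
    return kappa4_t / var_t**2             # zero for an exactly Gaussian law

for t in [0.0, 1.0, 2.0, 4.0]:
    print(t, excess_kurtosis_ou(t))
```

At \(t=0\) the value is the excess kurtosis \(-6/5\) of the uniform law, and it then shrinks exponentially towards the Gaussian value 0.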

References

Aradi, I., Soltesz, I.: Modulation of network behaviour by changes in variance in interneuronal properties. J. Physiol. 538(1), 227 (2002)

Babb, T., Pretorius, J., Kupfer, W., Crandall, P.: Glutamate decarboxylase-immunoreactive neurons are preserved in human epileptic hippocampus. J. Neurosci. 9(7), 2562–2574 (1989)

Bassett, D.S., Bullmore, E.: Small-world brain networks. Neuroscientist 12(6), 512–523 (2006)

Bettus, G., Wendling, F., Guye, M., Valton, L., Régis, J., Chauvel, P., Bartolomei, F.: Enhanced EEG functional connectivity in mesial temporal lobe epilepsy. Epilepsy Res. 81(1), 58–68 (2008)

Bosking, W., Zhang, Y., Schofield, B., Fitzpatrick, D.: Orientation selectivity and the arrangement of horizontal connections in tree shrew striate cortex. J. Neurosci. 17(6), 2112–2127 (1997)

Bressloff, P.C.: Spatiotemporal dynamics of continuum neural fields. J. Phys. A, Math. Theor. 45(3), 033001 (2012)

Bullmore, E., Sporns, O.: Complex brain networks: graph theoretical analysis of structural and functional systems. Nat. Rev. Neurosci. 10(3), 186–198 (2009)

Buzsaki, G.: Rhythms of the Brain. Oxford University Press, Oxford (2004)

Da Prato, G., Zabczyk, J.: Stochastic Equations in Infinite Dimensions. Cambridge University Press, Cambridge (1992)

Dobrushin, R.: Prescribing a system of random variables by conditional distributions. Theory Probab. Appl. 15, 458–486 (1970)

Ermentrout, G., Cowan, J.: Temporal oscillations in neuronal nets. J. Math. Biol. 7(3), 265–280 (1979)

Ermentrout, G., Terman, D.: Mathematical Foundations of Neuroscience (2010)

Ermentrout, G.B., Terman, D.: Mathematical Foundations of Neuroscience. Interdisciplinary Applied Mathematics. Springer, Berlin (2010)

FitzHugh, R.: Mathematical models of threshold phenomena in the nerve membrane. Bull. Math. Biol. 17(4), 257–278 (1955)

Gray, C.M., König, P., Engel, A.K., Singer, W., et al.: Oscillatory responses in cat visual cortex exhibit inter-columnar synchronization which reflects global stimulus properties. Nature 338(6213), 334–337 (1989)

Hodgkin, A., Huxley, A.: Action potentials recorded from inside a nerve fibre. Nature 144, 710–711 (1939)

Hodgkin, A., Huxley, A.: A quantitative description of membrane current and its application to conduction and excitation in nerve. J. Physiol. 117, 500–544 (1952)

Jansen, B.H., Rit, V.G.: Electroencephalogram and visual evoked potential generation in a mathematical model of coupled cortical columns. Biol. Cybern. 73, 357–366 (1995)

Mao, X.: Stochastic Differential Equations and Applications. Horwood, Chichester (2008)

Munoz, A., Mendez, P., DeFelipe, J., Alvarez-Leefmans, F.: Cation-chloride cotransporters and GABA-ergic innervation in the human epileptic hippocampus. Epilepsia 48(4), 663–673 (2007)

Noebels, J.: Targeting epilepsy genes. Neuron 16, 241–244 (1996)

Ohki, K., Chung, S., Ch’ng, Y., Kara, P., Reid, R.: Functional imaging with cellular resolution reveals precise micro-architecture in visual cortex. Nature 433, 597–603 (2005)

Schnitzler, A., Gross, J.: Normal and pathological oscillatory communication in the brain. Nat. Rev. Neurosci. 6(4), 285–296 (2005)

Shpiro, A., Curtu, R., Rinzel, J., Rubin, N.: Dynamical characteristics common to neuronal competition models. J. Neurophysiol. 97(1), 462–473 (2007)

Sznitman, A.: Nonlinear reflecting diffusion process, and the propagation of chaos and fluctuations associated. J. Funct. Anal. 56(3), 311–336 (1984)

Sznitman, A.: Topics in propagation of chaos. Ecole d’Eté de Probabilités de Saint-Flour XIX, pp. 165–251 (1989)

Tanaka, H.: Probabilistic treatment of the Boltzmann equation of Maxwellian molecules. Probab. Theory Relat. Fields 46(1), 67–105 (1978)

Touboul, J.: Limits and dynamics of stochastic neuronal networks with random delays. J. Stat. Phys. 149(4), 569–597 (2012)

Touboul, J.: The propagation of chaos in neural fields. Ann. Appl. Probab. 24, 1298–1327 (2014)

Touboul, J.: On the dynamics of mean-field equations for stochastic neural fields with delays

Touboul, J., Hermann, G., Faugeras, O.: Noise-induced behaviors in neural mean field dynamics. SIAM J. Appl. Dyn. Syst. 11, 49–81 (2011)

Wilson, H., Cowan, J.: Excitatory and inhibitory interactions in localized populations of model neurons. Biophys. J. 12, 1–24 (1972)

Wilson, H., Cowan, J.: A mathematical theory of the functional dynamics of cortical and thalamic nervous tissue. Biol. Cybern. 13(2), 55–80 (1973)


Appendix A: Randomly Connected Neural Fields

Appendix A: Randomly Connected Neural Fields

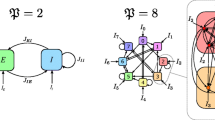

We now extend the above results to the mesoscopic case of spatially extended neural fields with random correlated connectivity weights and delays. In this case, following [29], we consider that the number of populations in a network of size N is P(N), and that this quantity diverges as N tends to infinity so that, in the limit N→∞, the populations cover a piece of cortical tissue Γ, which is a compact subset of \(\mathbb{R}^{\delta}\) (generally δ=1,2). In this interpretation, a population index α represents the location \(r_{\alpha}\in\varGamma\) of a microcolumn on the neural field; these locations are assumed to be independent random variables with distribution λ on Γ. For the sake of simplicity and consistency with other works on neural fields, we include the dependence on the neural populations in the drift and diffusion functions. We therefore introduce three maps:

- the measurable functions \(f: \varGamma\times \mathbb{R}\times E\mapsto E\) and \(g: \varGamma\times \mathbb{R}\times E \mapsto E^{m}\);
- the map \(b:\varGamma\times\varGamma\times \mathbb{R}\times E \times E\mapsto E\), which is assumed measurable,

and rewrite the network equations as:

These equations are well-defined, as proved in Proposition 1. As in the macroscopic framework of Section 2, for fixed \(i\in \mathbb{N}\) the pairs \((w_{ij},\tau_{ij})_{j}\) are independent, while for fixed (i,j), \(\tau_{ij}\) and \(w_{ij}\) may be correlated. Their joint law depends on the locations \(r_{\alpha}\) and \(r_{\gamma}\) of the microcolumns to which neurons i and j belong; we denote this law by \(\varLambda_{r_{\alpha},r_{\gamma}}\). We assume that this law is measurable with respect to the Borel algebra of Γ, i.e. for any \(A \in \mathcal{B}(\mathbb{R}\times \mathbb{R}_{+})\), the Borel algebra of \(\mathbb{R}\times \mathbb{R}_{+}\), the map \((r,r')\mapsto\varLambda_{r,r'}(A)\) is measurable with respect to \(\mathcal{B}(\varGamma\times\varGamma)\). We assume that assumptions (H1)–(H4) hold uniformly in the space variables, and consider the neural field limit given by the condition:

Elaborating on the proofs provided (i) in the finite-population case treated in the present manuscript and (ii) in the neural field limit for non-random synaptic weights and delays, we will show that the network equations converge towards a spatially extended McKean-Vlasov equation:

In these equations, the process \((W_t(r))\) is a chaotic Brownian motion (as defined in [29]), i.e. a stochastic process indexed by space \(r\in\varGamma\) such that for any \(r\in\varGamma\), \(W_t(r)\) is a standard m-dimensional Brownian motion, and for any \(r\neq r'\) in Γ, \(W_t(r)\) and \(W_t(r')\) are independent. These processes are singular functions of space and, in particular, are not measurable with respect to the Borel algebra \(\mathcal{B}(\varGamma)\) of Γ. Therefore, the solutions are themselves not measurable, which raises questions about the definition of the mean-field equation (16), in particular about the spatial integral in the mean-field term. However, as shown in [29], making sense of this equation amounts to showing that the law of the solution is \(\mathcal{B}(\varGamma)\)-measurable; once this is proved, the integral is well defined. In the spatial case, we make the following assumptions, which correspond directly to assumptions (H1)–(H4) of the finite-population case:

- (H1’) f and g are uniformly Lipschitz-continuous functions with respect to their last variable.
- (H2’) For almost all \(w\in \mathbb{R}\) and any \((r,r')\in\varGamma^{2}\), \(b(r,r',w,\cdot,\cdot)\) is L-Lipschitz-continuous, i.e. for any (x,y) and (x′,y′) in E×E, we have:

  $$|b(r,r',w,x,y)-b(r,r',w,x',y')|\leq L(|x-x'|+|y-y'|). $$
- (H3’) There exists a function \(\bar{K} : \mathbb{R}\mapsto \mathbb{R}^{+}\) such that for any \((r,r')\in\varGamma^{2}\),

  $$|b(r,r',w,x,y)|^2\leq\bar{K}(w)\quad\mbox{and}\quad \mathcal{E}_{\varLambda _{r,r'}}[\bar{K}(w)]\leq\bar{k}<\infty. $$
- (H4’) The drift and diffusion functions satisfy the monotone growth condition, uniformly in r:

  $$x^Tf(r,t,x)+\frac{1}{2}|g(r,t,x)|^2\leq K(1+|x|^2). $$
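These assumptions can be spot-checked numerically on a candidate model. The sketch below uses a hypothetical model that is not taken from the paper: Γ=[0,1], E=ℝ, m=1, a leaky drift \(f(r,t,x)=-x+\sin(2\pi r)\cos t\), constant noise \(g=\sigma\), and coupling \(b(r,r',w,x,y)=w\tanh(y)\) with weights bounded by the hypothetical constant `W_MAX`. For this model the bounds hold analytically with Lipschitz constants 1 and `W_MAX`, \(\bar K(w)=w^2\) and \(K=(1+\sigma^2)/2\); random sampling can only fail to find a violation, it cannot prove the assumptions.

```python
import numpy as np

# Hypothetical candidate model on Gamma = [0, 1], E = R, m = 1 (not the paper's model).
SIGMA, W_MAX = 0.5, 2.0

def f(r, t, x):
    return -x + np.sin(2 * np.pi * r) * np.cos(t)    # leak plus bounded input

def g(r, t, x):
    return SIGMA * np.ones_like(x)                    # additive noise

def b(r, rp, w, x, y):
    return w * np.tanh(y)                             # sigmoidal delayed coupling

def spot_check(n=100_000, seed=0):
    rng = np.random.default_rng(seed)
    r, rp = rng.uniform(0, 1, n), rng.uniform(0, 1, n)
    t = rng.uniform(0, 10, n)
    w = rng.uniform(-W_MAX, W_MAX, n)                 # bounded weights, so L = W_MAX
    x, y, x2, y2 = (rng.normal(0, 5, n) for _ in range(4))
    # (H1') f is 1-Lipschitz in x; g is constant, hence trivially Lipschitz.
    h1 = np.all(np.abs(f(r, t, x) - f(r, t, x2)) <= np.abs(x - x2) + 1e-12)
    # (H2') |b(x, y) - b(x', y')| <= L (|x - x'| + |y - y'|) with L = W_MAX.
    h2 = np.all(np.abs(b(r, rp, w, x, y) - b(r, rp, w, x2, y2))
                <= W_MAX * (np.abs(x - x2) + np.abs(y - y2)) + 1e-12)
    # (H3') |b|^2 <= K_bar(w) = w^2, whose mean under a bounded weight law is finite.
    h3 = np.all(b(r, rp, w, x, y) ** 2 <= w ** 2 + 1e-12)
    # (H4') x f + |g|^2 / 2 <= K (1 + x^2) with K = (1 + SIGMA^2) / 2.
    K = (1 + SIGMA**2) / 2
    h4 = np.all(x * f(r, t, x) + 0.5 * g(r, t, x) ** 2 <= K * (1 + x**2) + 1e-12)
    return h1, h2, h3, h4

print(spot_check())
```

The analytic bound for (H4’) follows from \(x\sin(2\pi r)\cos t\le (x^2+1)/2\), which gives \(xf+\sigma^2/2\le (1+\sigma^2)/2\).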

The initial conditions we consider for the mean-field equations are processes \((\zeta_{t}(r), t\in[-\tau,0])\) in \(\mathcal{X}_{0}\), the space of spatially chaotic, square-integrable processes with measurable law, i.e. processes satisfying the following regularity conditions:

- for any r∈Γ, the path \((\zeta_{t}(r), t\in[-\tau,0])\) is square integrable in \(\mathcal{C}_{\tau}\);
- for any r≠r′, the processes ζ(r) and ζ(r′) are independent;
- for fixed t∈[−τ,0], the law of \(\zeta_{t}(r)\) is measurable with respect to \(\mathcal{B}(\varGamma)\), i.e. for any \(A\in \mathcal{B}(E)\), the map \(r\mapsto p_{\zeta _{t}}(r)=\mathbb{P}(\zeta_{t}(r)\in A)\) is a measurable function from \((\varGamma,\mathcal{B}(\varGamma))\) to [0,1].

We denote by \(\mathcal{X}_{T}\) the set of processes \((\zeta_{t}(r),t\in[-\tau,T])\) satisfying the above regularity conditions on [−τ,T].
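As an illustration of an element of \(\mathcal{X}_{0}\), the following sketch samples, at finitely many distinct locations, the hypothetical initial condition \(\zeta_t(r)=\cos(2\pi r)+\sigma_0 B_{t+\tau}(r)\), where the Brownian paths \(B(r)\) are independent across locations. Each path is continuous and square integrable, distinct locations are independent, and the law of \(\zeta_t(r)\) is Gaussian with mean \(\cos(2\pi r)\) and variance \(\sigma_0^2(t+\tau)\), a continuous and hence measurable function of r. The function and parameter names are illustrative.

```python
import numpy as np

def chaotic_initial_condition(locations, tau=1.0, n_steps=100, sigma0=0.3, seed=0):
    """Sample zeta_t(r), t in [-tau, 0], at finitely many distinct locations r.

    Hypothetical example: zeta_t(r) = mu(r) + sigma0 * B_{t + tau}(r), where the
    Brownian paths B(r) are independent across locations (spatial chaos) and
    mu(r) = cos(2 pi r) is continuous, so the law of zeta_t(r) is measurable in r.
    """
    rng = np.random.default_rng(seed)
    locations = np.asarray(locations, dtype=float)
    if len(np.unique(locations)) != len(locations):
        # One process per location: a repeated r would be the same process.
        raise ValueError("locations must be distinct")
    dt = tau / n_steps
    times = np.linspace(-tau, 0.0, n_steps + 1)
    increments = rng.normal(0.0, np.sqrt(dt), size=(len(locations), n_steps))
    paths = np.concatenate([np.zeros((len(locations), 1)),
                            np.cumsum(increments, axis=1)], axis=1)
    mu = np.cos(2 * np.pi * locations)[:, None]
    return times, mu + sigma0 * paths       # one continuous path per location

times, zeta = chaotic_initial_condition(np.linspace(0, 1, 5, endpoint=False))
print(zeta.shape)   # (5, 101)
```

A finite grid only samples the field at finitely many points; the chaotic field itself is not a measurable function of r, which is why the conditions above are stated on its law.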

Proposition 6

Under assumptions (H1’)–(H4’), for any initial condition \(\zeta\in \mathcal{X}_{0}\), there exists a unique, well-defined strong solution to the mean-field equations (16).

As is classical, the proof starts by showing that any possible solution is square integrable, then writes equation (16) as a fixed-point equation \(X=\Phi(X)\) and shows that the iterates of the map Φ converge, starting from an arbitrary chaotic process \(X^{0}_{t}(r)\in\mathcal{X}_{T}\). It is easy to see that Φ maps \(\mathcal{X}_{T}\) into itself, so the sequence of processes \(X^{k}=\Phi^{k}(X^{0})\) is well-defined. Estimates similar to those proved in Proposition 1 and Theorem 2 then yield existence and uniqueness of solutions. Since the proof is classical, we leave it to the interested reader, who can extend the argument of [29, Theorem 2] to our random-environment setting.
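The fixed-point mechanism can be seen on a much simpler object. The sketch below is a noise-free, single-population toy for the hypothetical scalar equation \(x'(t)=-x(t)+a\tanh(x(t-\tau))\) with constant history; it keeps only the delayed drift and drops the stochastic integral and the mean-field term present in the actual map Φ. Successive differences \(\sup_t|X^{k+1}_t-X^{k}_t|\) decay factorially, reflecting the bound \((Lt)^k/k!\), with Lipschitz constant \(L=1+a\), that underlies classical Picard arguments.

```python
import numpy as np

def picard_iterates(a=1.0, tau=1.0, T=2.0, h=0.01, n_iter=30):
    """Picard iterates X^{k+1} = Phi(X^k) for x'(t) = -x(t) + a*tanh(x(t - tau)).

    Noise-free, single-population toy version of the fixed-point map:
      Phi(X)(t) = zeta(t)                                           on [-tau, 0],
      Phi(X)(t) = zeta(0) + int_0^t (-X(s) + a*tanh(X(s - tau))) ds  on (0, T].
    The time integral uses the trapezoidal rule on a grid of step h.
    """
    lag = int(round(tau / h))                        # delay measured in grid steps
    n = int(round(T / h))                            # number of steps on (0, T]
    times = np.linspace(-tau, T, lag + n + 1)
    zeta = np.ones(lag + 1)                          # constant history on [-tau, 0]
    X = np.concatenate([zeta, np.full(n, zeta[-1])])  # arbitrary initial guess X^0
    gaps = []                                        # sup-norm of X^{k+1} - X^k
    for _ in range(n_iter):
        drift = -X[lag:] + a * np.tanh(X[:n + 1])    # integrand at grid times t >= 0
        integral = np.concatenate(
            [[0.0], np.cumsum(0.5 * h * (drift[1:] + drift[:-1]))])
        X_new = np.concatenate([zeta, zeta[-1] + integral[1:]])
        gaps.append(np.max(np.abs(X_new - X)))
        X = X_new
    return times, X, gaps

times, X, gaps = picard_iterates()
print(gaps[:3], gaps[-1])
```

The history on \([-\tau,0]\) is reimposed at every iteration, exactly as the initial condition is in the fixed-point formulation of (16).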

The convergence result of the network equations towards the mean-field equations can be stated as follows:

Theorem 7

Let \(\zeta\in\mathcal{X}_{0}\) be a chaotic process. Consider the process \((X^{i,N}_{t}, t\in[-\tau,T])\), solution of the network equations (14) with independent initial conditions that are identically distributed for neurons in the same population located at r∈Γ, with law equal to that of \((\zeta_{t}(r),t\in[-\tau,0])\). Under assumptions (H1’)–(H4’) and the neural field limit assumption (15), the process \((X^{i,N}_{t}, t\in[-\tau,T])\) converges in law towards \((X_{t}(r),t\in[-\tau,T])\), the solution of the mean-field equations with initial condition ζ.

The proof of this result proceeds as that of [29, Theorem 3], incorporating the refinements introduced in the proof of Theorem 2 to account for random connectivities and delays.
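The random environment entering Theorem 7 can be sampled explicitly. The following sketch is a hypothetical instance, not the paper's model: \(P(N)=\lceil\sqrt{N}\rceil\) microcolumns at i.i.d. uniform locations on Γ=[0,1], neurons assigned to populations in round-robin fashion, and, given the locations, each pair \((w_{ij},\tau_{ij})\) drawn independently from a law \(\varLambda_{r,r'}\) in which a shared latent Gaussian variable makes longer delays go with weaker weights. The choice of P(N) and all parameter names are assumptions; in particular, this choice is not claimed to match condition (15).

```python
import numpy as np

def sample_environment(N, w0=1.0, ell=0.3, c0=1.0, s=0.5, seed=0):
    """Hypothetical random environment for a neural-field network of N neurons.

    P(N) = ceil(sqrt(N)) microcolumns sit at i.i.d. Uniform[0, 1] locations.
    Given the locations, each pair (w_ij, tau_ij) is drawn independently from a
    law Lambda_{r, r'} in which a shared latent Gaussian z_ij couples the two:
    a larger z_ij gives a longer delay and a weaker weight.
    """
    rng = np.random.default_rng(seed)
    P = int(np.ceil(np.sqrt(N)))
    r = rng.uniform(0.0, 1.0, P)                    # microcolumn locations r_alpha
    pop = np.arange(N) % P                          # population p(i) of neuron i
    d = np.abs(r[pop][:, None] - r[pop][None, :])   # distance between columns of i, j
    z = rng.standard_normal((N, N))                 # one latent variable per pair
    tau = (d / c0) * np.exp(s * z)                  # random delays, tau_ij >= 0
    w = w0 * np.exp(-d / ell) * np.exp(-s * z)      # random weights, decaying with d
    return r, pop, w, tau

r, pop, w, tau = sample_environment(400)
# For a fixed pair of columns, w and tau are negatively correlated.
mask = (pop[:, None] == 0) & (pop[None, :] == 1)
print(np.corrcoef(w[mask], tau[mask])[0, 1])
```

Under this construction the population correlation between weight and delay for a fixed pair of distinct columns is \(-e^{-s^2}\), so the empirical value printed above should be close to \(-0.78\) for the default `s`.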


Cite this article

Quiñinao, C., Touboul, J. Limits and Dynamics of Randomly Connected Neuronal Networks. Acta Appl Math 136, 167–192 (2015). https://doi.org/10.1007/s10440-014-9945-5


DOI: https://doi.org/10.1007/s10440-014-9945-5