Abstract

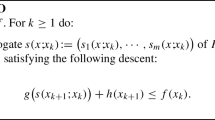

We introduce an inexact variant of stochastic mirror descent (SMD), called inexact stochastic mirror descent (ISMD), to solve nonlinear two-stage stochastic programs where the second stage problem has linear and nonlinear coupling constraints and a nonlinear objective function which depends on both first and second stage decisions. Given a candidate first stage solution and a realization of the second stage random vector, each iteration of ISMD combines a stochastic subgradient descent step using a prox-mapping with the computation of approximate (instead of exact, as in SMD) primal and dual second stage solutions. We provide two convergence analyses of ISMD, under two sets of assumptions. The first convergence analysis is based on the formulas for inexact cuts of value functions of convex optimization problems shown recently in Guigues (SIAM J. Optim. 30(1), 407–438, 2020). The second convergence analysis provides a convergence rate (the same as for SMD) and relies on new formulas that we derive for inexact cuts of value functions of convex optimization problems, assuming that the dual function of the second stage problem is strongly concave for every fixed first stage solution and realization of the second stage random vector. We show that this assumption of strong concavity is satisfied for some classes of problems. We also present the results of numerical experiments on two simple two-stage problems, which show that solving the second stage problem approximately for the first iterations of ISMD can help us obtain a good approximate first stage solution more quickly than with SMD.


Notes

Using the equivalence between norms in \(\mathbb {R}^n\), we can derive a valid constant of strong concavity for other norms, for instance \(\Vert \cdot \Vert _{\infty }\) and \(\Vert \cdot \Vert _1\).
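As an illustration of this remark, suppose (an assumption made here, since the note does not restate the base norm) that the constant of strong concavity \(\alpha > 0\) was obtained for the Euclidean norm \(\Vert \cdot \Vert _2\), so that the strong concavity inequality carries a quadratic term proportional to \(\alpha \Vert z \Vert _2^2\). Using the standard inequalities \(\Vert z \Vert _{\infty } \le \Vert z \Vert _2\) and \(\Vert z \Vert _1 \le \sqrt{n} \Vert z \Vert _2\) for \(z \in \mathbb {R}^n\), we get

\[
\alpha \Vert z \Vert _2^2 \;\ge \; \alpha \Vert z \Vert _{\infty }^2
\qquad \text{and} \qquad
\alpha \Vert z \Vert _2^2 \;\ge \; \frac{\alpha }{n} \Vert z \Vert _1^2 .
\]

Under this assumption, \(\alpha \) remains a valid constant for \(\Vert \cdot \Vert _{\infty }\) and \(\alpha / n\) is a valid constant for \(\Vert \cdot \Vert _1\).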

Note that we used (A4) to ensure that \(x(\lambda _*, \mu _*) = x_*\), which is also used in the proof of Theorem 10 in [19].

Note that (H1) and (H2) imply the convexity of \(\mathcal {Q}\) given by (3.1). Indeed, let \(x_1, x_2 \in X\), \(0 \le t \le 1\), and \(y_1 \in S( x_1), y_2 \in S( x_2),\) such that \(\mathcal {Q}( x_1)=f(y_1, x_1)\) and \(\mathcal {Q}( x_2)=f(y_2, x_2)\). By convexity of g and Y, we have \(ty_1 +(1-t)y_2 \in S(tx_1 + (1-t)x_2)\) and therefore \(\mathcal {Q}(tx_1 +(1-t)x_2) \le f(ty_1 + (1-t)y_2,t x_1 + (1-t)x_2) \le t f(y_1,x_1) +(1-t)f(y_2,x_2)=t\mathcal {Q}(x_1)+(1-t)\mathcal {Q}(x_2)\), where for the last inequality we have used the convexity of f.

The proof is similar to the proof of Proposition 4.6 in [6].

According to the current Mosek documentation, it is not possible to use absolute errors. Therefore, early termination of the solver can be obtained either by limiting the number of iterations or by defining relative errors.

Naturally, after running \(t-1\) of the \(N-1\) total iterations, the approximate optimal value computed by SMD is \(\displaystyle \frac{1}{\sum _{\tau =1}^t \gamma _\tau (N)} \sum \nolimits _{\tau =1}^t \gamma _\tau ( N) \Big ( f_1(x_1^{N,\tau }) + f_2( x_2^{N,\tau }, x_1^{N,\tau }, \xi _2^{N,\tau }) \Big )\) obtained on the basis of sample \(\xi _2^{N,1},\ldots ,\xi _2^{N,t}\) of \(\xi _2\).

Due to the increase in computational time when N increases, we do not take the largest sample size \(N=2000\) for all instances. However, for all instances and values of N chosen, we observe a stabilization of the approximate optimal value before stopping the algorithm, which indicates a good solution has been found at termination.

When SMD (and similarly ISMD) is run on samples of \(\xi _2\) of size N, we have seen how to compute at iteration \(t-1\) an estimate \(\displaystyle \frac{1}{\sum _{\tau =1}^t \gamma _\tau (N)} \sum \nolimits _{\tau =1}^t \gamma _\tau ( N) \Big ( f_1(x_1^{N,\tau }) + f_2( x_2^{N,\tau }, x_1^{N,\tau }, \xi _2^{N,\tau }) \Big )\) of the optimal value on the basis of the sample \(\xi _2^{N,1},\ldots ,\xi _2^{N,t}\) of \(\xi _2\). The mean approximate optimal value after \(t-1\) iterations is obtained by running SMD on 10 independent samples of \(\xi _2\) of size N and averaging the resulting estimates over these samples.
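The weighted average above, and its mean over independent runs, can be sketched in a few lines of Python. This is only an illustrative sketch, not the implementation used for the experiments: the function names `running_estimates` and `mean_over_runs`, the step sizes, and the toy cost values are assumptions, with `costs[tau]` standing for \(f_1(x_1^{N,\tau }) + f_2( x_2^{N,\tau }, x_1^{N,\tau }, \xi _2^{N,\tau })\) and `gammas[tau]` for \(\gamma _\tau (N)\).

```python
import numpy as np

def running_estimates(gammas, costs):
    """Return the step-size-weighted running averages of the sampled costs.

    Entry t-1 equals sum_{tau<=t} gamma_tau * cost_tau / sum_{tau<=t} gamma_tau.
    """
    gammas = np.asarray(gammas, dtype=float)
    costs = np.asarray(costs, dtype=float)
    return np.cumsum(gammas * costs) / np.cumsum(gammas)

def mean_over_runs(gammas, costs_per_run):
    """Average the running estimates over independent runs (one run per row)."""
    return np.mean([running_estimates(gammas, c) for c in costs_per_run], axis=0)

# Hypothetical toy data: 2 independent runs of 4 iterations, constant step size.
gammas = np.full(4, 0.5)
costs_per_run = np.array([[4.0, 2.0, 3.0, 1.0],
                          [2.0, 2.0, 5.0, 3.0]])
estimates = mean_over_runs(gammas, costs_per_run)
print(estimates)
```

With a constant step size the weights cancel and each estimate reduces to a plain running mean; with a varying step size the later or earlier iterates are weighted accordingly.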

References

Andersen, E.D., Andersen, K.D.: The MOSEK optimization toolbox for MATLAB manual. Version 7.0, (2013). https://www.mosek.com/

Birge, J., Louveaux, F.: Introduction to Stochastic Programming. Springer, New York (1997)

Dantzig, G.B., Glynn, P.W.: Parallel processors for planning under uncertainty. Ann. Oper. Res. 22, 1–21 (1990)

Guigues, V.: Convergence analysis of sampling-based decomposition methods for risk-averse multistage stochastic convex programs. SIAM J. Optim. 26, 2468–2494 (2016)

Guigues, V.: Multistep stochastic mirror descent for risk-averse convex stochastic programs based on extended polyhedral risk measures. Math. Program. 163, 169–212 (2017)

Guigues, V.: Inexact cuts in Stochastic Dual Dynamic Programming. SIAM J. Optim. 30(1), 407–438 (2020)

Hiriart-Urruty, J.-B., Lemaréchal, C.: Convex Analysis and Minimization Algorithms I. Springer, Berlin (1996)

Infanger, G.: Monte Carlo (importance) sampling within a Benders decomposition algorithm for stochastic linear programs. Ann. Oper. Res. 39, 69–95 (1992)

Juditsky, A., Nesterov, Y.: Primal–dual subgradient methods for minimizing uniformly convex functions. arXiv:1401.1792 (2010)

Lan, G., Nemirovski, A., Shapiro, A.: Validation analysis of mirror descent stochastic approximation method. Math. Program. 134, 425–458 (2012)

Lan, G., Zhou, Z.: Dynamic stochastic approximation for multi-stage stochastic optimization. arXiv (2017)

Lemaréchal, C., Nemirovski, A., Nesterov, Y.: New variants of bundle methods. Math. Program. 69, 111–148 (1995)

Nemirovski, A., Juditsky, A., Lan, G., Shapiro, A.: Robust stochastic approximation approach to stochastic programming. SIAM J. Optim. 19, 1574–1609 (2009)

Pereira, M.V.F., Pinto, L.M.V.G.: Multi-stage stochastic optimization applied to energy planning. Math. Program. 52, 359–375 (1991)

Polyak, B.T., Juditsky, A.: Acceleration of stochastic approximation by averaging. SIAM J. Control Optim. 30, 838–855 (1992)

Rockafellar, R.T., Wets, R.J.B.: Variational Analysis. Grundlehren der Mathematischen Wissenschaften. Springer, New York (1997)

Ruszczyński, A.: A multicut regularized decomposition method for minimizing a sum of polyhedral functions. Math. Program. 35, 309–333 (1986)

Shapiro, A., Dentcheva, D., Ruszczyński, A.: Lectures on Stochastic Programming: Modeling and Theory. SIAM, Philadelphia (2009)

Yu, H., Neely, J.: On the convergence time of the drift-plus-penalty algorithm for strongly convex programs. arXiv:1503.06235 (2015)

Acknowledgements

The author’s research was partially supported by an FGV Grant, CNPq Grant 311289/2016-9, and FAPERJ Grant E-26/201.599/2014. The author would like to thank Alberto Seeger for helpful discussions.



Appendix

Dual function \(\theta _{{\bar{x}}}\) of problem (3.30) for some \({\bar{x}}\) randomly drawn in ball \(\{x \in \mathbb {R}^n: \Vert x-x_0 \Vert _2 \le 1\}\), \(S=AA^T + \lambda I_{2 n}\) for some random matrix A with random entries in \([-20,20]\), and several values of the pair \((n,\lambda )\). The dual iterates are represented by red diamonds

Plots of \(\eta _{1}(\varepsilon _k, {\bar{x}})\) and \(\eta _2(\varepsilon _k, {\bar{x}})\) as a function of iteration k where \(\varepsilon _k\) is the duality gap at iteration k for problem (3.30) for some \({\bar{x}}\) randomly drawn in ball \(\{x \in \mathbb {R}^n: \Vert x-x_0 \Vert _2 \le 1\}\), \(S=AA^T + \lambda I_{2 n}\) for some random matrix A with random entries in \([-20,20]\), and several values of the pair \((n,\lambda )\)
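The matrix \(S=AA^T + \lambda I_{2 n}\) used in both figures is positive definite with smallest eigenvalue at least \(\lambda \), because \(AA^T\) is positive semidefinite. The sketch below checks this numerically. It is a minimal illustration under stated assumptions: the function name `build_S`, the square \(2n \times 2n\) shape of A, the uniform distribution of its entries on \([-20,20]\), and the values of n and \(\lambda \) are hypothetical choices, not taken from the experiments.

```python
import numpy as np

def build_S(n, lam, rng):
    """Build S = A A^T + lam * I_{2n} for a random matrix A.

    Assumption: A is square (2n x 2n) with i.i.d. uniform entries in [-20, 20].
    """
    A = rng.uniform(-20.0, 20.0, size=(2 * n, 2 * n))
    return A @ A.T + lam * np.eye(2 * n)

rng = np.random.default_rng(0)
lam = 1.0                      # hypothetical value of lambda
S = build_S(5, lam, rng)       # hypothetical value n = 5

# eigvalsh is the eigenvalue routine for symmetric matrices.
min_eig = np.linalg.eigvalsh(S).min()

# A A^T is positive semidefinite, so every eigenvalue of S is at least lam
# (up to floating-point rounding).
print(min_eig >= lam - 1e-8)
```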

Cite this article

Guigues, V. Inexact stochastic mirror descent for two-stage nonlinear stochastic programs. Math. Program. 187, 533–577 (2021). https://doi.org/10.1007/s10107-020-01490-5

DOI: https://doi.org/10.1007/s10107-020-01490-5

Keywords

- Inexact cuts for value functions

- Inexact stochastic mirror descent

- Strong concavity of the dual function

- Stochastic programming