Abstract

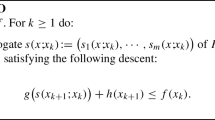

Composite minimization involves a collection of smooth functions which are aggregated in a nonsmooth manner. In the convex setting, we design an algorithm by linearizing each smooth component in accordance with its main curvature. The resulting method, called the Multiprox method, consists of successively solving simple problems (e.g., constrained quadratic problems) which can also feature some proximal operators. To study the complexity and the convergence of this method, we are led to prove a new type of qualification condition and to understand the impact of multipliers on the complexity bounds. We obtain explicit complexity results of the form \(O(\frac{1}{k})\) involving new types of constant terms. A distinctive feature of our approach is its ability to cope with oracles involving moving constraints. Our method is flexible enough to include the moving balls method, the proximal Gauss–Newton’s method, and forward–backward splitting, for which we recover known complexity results or establish new ones. We show through several numerical experiments how the use of multiple proximal terms can be decisive for problems with complex geometries.
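The abstract lists forward–backward splitting among the special cases of the method. For orientation only, the following Python sketch implements that classical iteration (not the Multiprox method itself) on a hypothetical instance, \(\min _x \frac{1}{2}\Vert Ax-b\Vert ^2 + \lambda \Vert x\Vert _1\): a gradient (forward) step on the smooth term followed by the proximal (backward) step of the \(\ell _1\) norm, i.e. soft-thresholding. The helper names, the pure-Python linear algebra and the fixed step size, assumed to satisfy \(0 < \text{step} \le 1/\Vert A\Vert ^2\), are our own choices.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(A: Matrix, x: Vector) -> Vector:
    """Compute A x."""
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in A]


def rmatvec(A: Matrix, r: Vector) -> Vector:
    """Compute A^T r."""
    return [sum(A[i][j] * r[i] for i in range(len(A))) for j in range(len(A[0]))]


def soft_threshold(v: Vector, tau: float) -> Vector:
    """Proximal operator of tau * ||.||_1, applied coordinatewise."""
    return [math.copysign(max(abs(v_j) - tau, 0.0), v_j) for v_j in v]


def forward_backward(A: Matrix, b: Vector, lam: float, step: float, iters: int = 500) -> Vector:
    """Forward-backward splitting for 0.5 * ||Ax - b||^2 + lam * ||x||_1.

    Assumes 0 < step <= 1 / ||A||^2 (the inverse Lipschitz constant of the gradient).
    """
    x = [0.0] * len(A[0])
    for _ in range(iters):
        residual = [ax_i - b_i for ax_i, b_i in zip(matvec(A, x), b)]
        grad = rmatvec(A, residual)  # forward (gradient) step direction
        trial = [x_j - step * g_j for x_j, g_j in zip(x, grad)]
        x = soft_threshold(trial, step * lam)  # backward (proximal) step
    return x


# Usage: here ||A||^2 = 4, so step = 0.25 is admissible.
x = forward_backward([[1.0, 0.0], [0.0, 2.0]], [3.0, 1.0], lam=1.0, step=0.25)
print(x)  # approximately [2.0, 0.25]
```

With this diagonal matrix, the second coordinate reaches its limit 0.25 after one iteration, while the first converges linearly to 2.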


Notes

Also known as the proximal Gauss–Newton’s method.

Indeed, PGNM is in some sense a “constant step size” method.

We refer to constants relative to the gradients.

For any such i and any m real numbers \(z_1, \ldots , z_m\), the function \(z \mapsto g(z_1,\ldots , z_{i-1}, z,z_{i+1}, \ldots , z_m)\) is nondecreasing. In particular, its domain is either the whole of \(\mathbb {R}\), a closed half-line \((-\infty , a]\) for some \(a\in \mathbb {R}\), or empty.

There is a slight shift in the indices of F.

Observe that the subproblems are simple convex quadratic problems.

For the original problem (28).

Lipschitz continuity is actually superfluous for Theorem 1 to hold.

Actually the inverse of our steps.

References

Auslender, A., Teboulle, M.: Interior gradient and proximal methods for convex and conic optimization. SIAM J. Optim. 16(3), 697–725 (2006)

Auslender, A., Shefi, R., Teboulle, M.: A moving balls approximation method for a class of smooth constrained minimization problems. SIAM J. Optim. 20(6), 3232–3259 (2010)

Auslender, A.: An extended sequential quadratically constrained quadratic programming algorithm for nonlinear, semidefinite, and second-order cone programming. J. Optim. Theory Appl. 156(2), 183–212 (2013)

Bauschke, H.H., Combettes, P.L.: Convex Analysis and Monotone Operator Theory in Hilbert Spaces. Springer, New York (2017)

Beck, A., Teboulle, M.: A fast iterative shrinkage-thresholding algorithm for linear inverse problems. SIAM J. Imaging Sci. 2(1), 183–202 (2009)

Bolte, J., Pauwels, E.: Majorization-minimization procedures and convergence of SQP methods for semi-algebraic and tame programs. Math. Oper. Res. 41(2), 442–465 (2016)

Burke, J.V.: Descent methods for composite nondifferentiable optimization problems. Math. Program. 33(3), 260–279 (1985)

Burke, J.V., Ferris, M.C.: A Gauss–Newton method for convex composite optimization. Math. Program. 71(2), 179–194 (1995)

Cartis, C., Gould, N.I., Toint, P.L.: On the evaluation complexity of composite function minimization with applications to nonconvex nonlinear programming. SIAM J. Optim. 21(4), 1721–1739 (2011)

Cartis, C., Gould, N., Toint, P.: On the complexity of finding first-order critical points in constrained nonlinear optimization. Math. Program. 144(1), 93–106 (2014)

Combettes, P.L., Wajs, V.R.: Signal recovery by proximal forward–backward splitting. Multiscale Model. Simul. 4(4), 1168–1200 (2005)

Combettes, P.L., Pesquet, J.-C.: Proximal splitting methods in signal processing. In: Fixed-Point Algorithms for Inverse Problems in Science and Engineering. Springer Optimization and Its Applications, pp. 185–212. Springer, New York (2011)

Combettes, P.L.: Systems of structured monotone inclusions: duality, algorithms, and applications. SIAM J. Optim. 23(4), 2420–2447 (2013)

Combettes, P.L., Eckstein, J.: Asynchronous block-iterative primal-dual decomposition methods for monotone inclusions. Math. Program. 168(1–2), 645–672 (2018). https://doi.org/10.1007/s10107-016-1044-0

Drusvyatskiy, D., Lewis, A.S.: Error bounds, quadratic growth, and linear convergence of proximal methods. Math. Oper. Res. 43(3), 919–948 (2018). https://doi.org/10.1287/moor.2017.0889

Drusvyatskiy, D., Paquette, C.: Efficiency of minimizing compositions of convex functions and smooth maps. Math. Program. (2018). https://doi.org/10.1007/s10107-018-1311-3

Eckstein, J.: Nonlinear proximal point algorithms using Bregman functions, with applications to convex programming. Math. Oper. Res. 18(1), 202–226 (1993)

Fletcher, R.: A model algorithm for composite nondifferentiable optimization problems. In: Sorensen, D.C., Wets, R.J.B. (eds.) Nondifferential and Variational Techniques in Optimization. Mathematical Programming Studies, vol. 17. Springer, Berlin, Heidelberg (1982)

Hiriart-Urruty, J.-B., Lemaréchal, C.: Convex Analysis and Minimization Algorithms I. Springer, New York (1993)

Hiriart-Urruty, J.-B.: A note on the Legendre–Fenchel transform of convex composite functions. In: Alart, P., Maisonneuve, O., Rockafellar, R.T. (eds.) Nonsmooth Mechanics and Analysis, pp. 35–46. Springer US (2006)

Le Roux, N., Schmidt, M., Bach, F.: A stochastic gradient method with an exponential convergence rate for finite training sets. Adv. Neural Inf. Process. Syst. 25, 2663–2671 (2012)

Levitin, E.S., Polyak, B.T.: Constrained minimization methods. USSR Comput. Math. Math. Phys. 6(5), 1–50 (1966)

Lewis, A.S., Wright, S.J.: A proximal method for composite minimization. Math. Program. 158(1–2), 501–546 (2016). https://doi.org/10.1007/s10107-015-0943-9

Li, C., Ng, K.F.: Majorizing functions and convergence of the Gauss–Newton method for convex composite optimization. SIAM J. Optim. 18(2), 613–642 (2007)

Li, C., Wang, X.: On convergence of the Gauss–Newton method for convex composite optimization. Math. Program. 91(2), 349–356 (2002)

Lions, P.-L., Mercier, B.: Splitting algorithms for the sum of two nonlinear operators. SIAM J. Numer. Anal. 16(6), 964–979 (1979)

Löfberg, J.: YALMIP: A toolbox for modeling and optimization in MATLAB. In: IEEE International Symposium on Computer Aided Control Systems Design (2004)

Martinet, B.: Brève communication. Régularisation d’inéquations variationnelles par approximations successives. Revue française d’informatique et de recherche opérationnelle, série rouge 4(3), 154–158 (1970)

Moreau, J.-J.: Proximité et dualité dans un espace hilbertien. Bulletin de la Société mathématique de France 93, 273–299 (1965)

Moreau, J.-J.: Evolution problem associated with a moving convex set in a Hilbert space. J. Differ. Equ. 26(3), 347–374 (1977)

MOSEK ApS: The MOSEK optimization toolbox for MATLAB manual. Version 7, 1 (2016). https://www.yumpu.com/en/document/view/54768342/the-mosek-optimization-toolbox-for-matlab-manual-version-70-revision-141/32

Nesterov, Y., Nemirovskii, A.: Interior-Point Polynomial Algorithms in Convex Programming. Society for Industrial and Applied Mathematics, Philadelphia (1994)

Nesterov, Y.: Introductory Lectures on Convex Programming, Volume I: Basic Course. Springer, New York (2004)

Nemirovskii, A., Yudin, D.: Problem Complexity and Method Efficiency in Optimization. Wiley, New York (1983)

Nocedal, J., Wright, S.: Numerical Optimization. Springer, New York (2006)

Ortega, J.M., Rheinboldt, W.C.: Iterative Solution of Nonlinear Equations in Several Variables. SIAM, Philadelphia (2000)

Passty, G.B.: Ergodic convergence to a zero of the sum of monotone operators in Hilbert space. J. Math. Anal. Appl. 72(2), 383–390 (1979)

Pauwels, E.: The value function approach to convergence analysis in composite optimization. Oper. Res. Lett. 44(6), 790–795 (2016)

Pshenichnyi, B.N.: The linearization method. Optimization 18(2), 179–196 (1987)

Rockafellar, R.T.: Augmented Lagrangians and applications of the proximal point algorithm in convex programming. Math. Oper. Res. 1(2), 97–116 (1976)

Rockafellar, R.T., Wets, R.: Variational Analysis. Springer, New York (1998)

Rosen, J.B.: The gradient projection method for nonlinear programming. Part I. Linear constraints. J. Soc. Ind. Appl. Math. 8(1), 181–217 (1960)

Rosen, J.B.: The gradient projection method for nonlinear programming. Part II. Nonlinear constraints. J. Soc. Ind. Appl. Math. 9(4), 514–532 (1961)

Salzo, S., Villa, S.: Convergence analysis of a proximal Gauss–Newton method. Comput. Optim. Appl. 53(2), 557–589 (2012)

Schmidt, M., Le Roux, N., Bach, F.: Minimizing finite sums with the stochastic average gradient. Math. Program. 162(1–2), 83–112 (2017)

Shefi, R., Teboulle, M.: A dual method for minimizing a nonsmooth objective over one smooth inequality constraint. Math. Program. 159(1–2), 137–164 (2016)

Solodov, M.V.: Global convergence of an SQP method without boundedness assumptions on any of the iterative sequences. Math. Program. 118(1), 1–12 (2009)

Tseng, P.: Applications of a splitting algorithm to decomposition in convex programming and variational inequalities. SIAM J. Control Optim. 29(1), 119–138 (1991)

Villa, S., Salzo, S., Baldassarre, L., Verri, A.: Accelerated and inexact forward–backward algorithms. SIAM J. Optim. 23(3), 1607–1633 (2013)

Ye, Y.: Interior Point Algorithms: Theory and Analysis. Wiley, New York (1997)

Acknowledgements

We thank Marc Teboulle for his suggestions and the anonymous referees for their very useful comments.


Additional information


This work is sponsored by the Air Force Office of Scientific Research under Grant FA9550-14-1-0500.

Appendices

Appendix A: Proof of Proposition 1

Let us recall a qualification condition from [41]. Given any \(x\in F^{-1}(\mathrm {dom}\,g)\), let

$$J(x,\omega ) = F(x) + \nabla F(x)\,\omega , \qquad \omega \in {\mathbb {R}}^n,$$

be the linearized mapping of F at x. Proposition 1 follows immediately from the classical chain rule given in [41, Theorem 10.6] and the following proposition.

Proposition 3

(Two equivalent qualification conditions) Under Assumption 1 on F and g, Assumption 4 holds if and only if

(QC) \(\mathrm {dom}\,g\) cannot be separated from \(J(x,{\mathbb {R}}^n)\) for any \(x\in F^{-1}(\mathrm {dom}\,g)\).

Proof

We first suppose that (QC) is true. We begin with a remark showing that this implies that \(\mathrm {dom}\,g\) is not empty. Let A and B be two subsets of \(\mathbb {R}^m\). The logical negation of the sentence “A and B can be separated” can be written as follows: for all \(a \in \mathbb {R}^m\setminus \{0\}\) and for all \(b \in \mathbb {R}\), there exists \(y \in A\) such that

$$a^Ty + b > 0,$$

or there exists \(z \in B\) such that

$$a^Tz + b < 0.$$

In particular, if A and B cannot be separated, then at least one of them is nonempty. Note that if \(\mathrm {dom}\,g\) is empty, then so is the set \(\{J(x, \mathbb {R}^n),\, x \in F^{-1}(\mathrm {dom}\,g)\}\). Hence (QC) implies that \(\mathrm {dom}\,g\) is nonempty. Pick a point \({\tilde{x}}\in F^{-1}(\mathrm {dom}\,g)\). If \(F({\tilde{x}})\in \text {int dom}(g)\), there is nothing to prove, so we may suppose that \(F({\tilde{x}})\in \text {bd dom}\,g\). Since \(F({\tilde{x}})\in \mathrm {dom}(g)\) and g is nondecreasing with respect to each argument, we have \(F({\tilde{x}}) - d\in \mathrm {dom}(g)\) for any \(d\in ({\mathbb {R}}_+^*)^m\), so that \(\mathrm {int}(\mathrm {dom}(g))\ne \emptyset \). If we had \([\text {int dom}(g)] \cap J({\tilde{x}},{\mathbb {R}}^n) = \emptyset \), then \(\mathrm {dom}\,g\) and \(J({\tilde{x}},{\mathbb {R}}^n)\) could be separated by the Hahn–Banach theorem, contradicting (QC). Hence, there exists \(\tilde{\omega }\in {\mathbb {R}}^n\) such that \(J({\tilde{x}},{\tilde{\omega }})\in \text {int dom}(g)\). Since \(\mathrm {dom}\,g\) is convex, a classical result yields

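On the other hand, F is differentiable, thus

$$F({\tilde{x}} + \lambda {\tilde{\omega }}) = J({\tilde{x}},\lambda {\tilde{\omega }}) + o(\lambda ),$$

where \(o(\lambda )/\lambda \) tends to zero as \(\lambda \) goes to zero.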

After these basic observations, let us recall an important property of the signed distance (see [19, p. 154]). Let \(D\subset {\mathbb {R}}^m\) be a nonempty closed convex set. Then, the function

is concave. Using this concavity property for \(D=\mathrm {dom}\,g\) and the fact that \(F({\tilde{x}}) = J({\tilde{x}},0)\), it holds that

Since \(\text {dist}[F({\tilde{x}}),\text {bd}(\mathrm {dom}\,g)] = 0\), it follows that

Note that \( \text {dist}[J({\tilde{x}},{\tilde{\omega }}),\text {bd}(\mathrm {dom}\,g)] > 0\) since \(J({\tilde{x}},{\tilde{\omega }})\in \text {int dom}(g)\). Hence, Eq. (44) indicates that there exists \(\epsilon > 0\) such that for any \(0 < \lambda \le \epsilon \), we have

Substituting this inequality into Eq. (45) indicates that for any \(0 < \lambda \le \epsilon \), we have

Using Eq. (43), for any \(0 <\lambda \le \epsilon \), we have \(F({\tilde{x}} + \lambda {\tilde{\omega }})\in \text {int dom}(g)\). This shows the first implication of the equivalence.
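As a sanity check of this first implication, consider a toy instance of our own choosing (not from the paper): m = 1, n = 2, \(F(x)=\Vert x\Vert ^2-1\), and any nondecreasing convex g with \(\mathrm {dom}\,g=(-\infty ,0]\) (for example, the indicator of that half-line). We assume the linearized mapping \(J(x,\omega )=F(x)+\nabla F(x)\,\omega \). The point \({\tilde{x}}=(1,0)\) satisfies \(F({\tilde{x}})=0\in \mathrm {bd\ dom}\,g\), the direction \({\tilde{\omega }}=(-1,0)\) gives \(J({\tilde{x}},{\tilde{\omega }})=-2\in \mathrm {int\ dom}\,g\), and the Python sketch below confirms that \(F({\tilde{x}}+\lambda {\tilde{\omega }})=\lambda ^2-2\lambda \) is negative for small \(\lambda >0\). The functions are hypothetical illustrations.

```python
from typing import List

Vector = List[float]


def F(x: Vector) -> float:
    """Toy convex map F(x) = ||x||^2 - 1 (m = 1, n = 2); hypothetical example."""
    return x[0] ** 2 + x[1] ** 2 - 1.0


def grad_F(x: Vector) -> Vector:
    return [2.0 * x[0], 2.0 * x[1]]


def J(x: Vector, w: Vector) -> float:
    """Linearization J(x, w) = F(x) + grad F(x)^T w."""
    g = grad_F(x)
    return F(x) + g[0] * w[0] + g[1] * w[1]


def in_int_dom(z: float) -> bool:
    """int dom g = (-inf, 0) for the assumed dom g = (-inf, 0]."""
    return z < 0.0


x_tilde = [1.0, 0.0]
omega = [-1.0, 0.0]

# F(x_tilde) lies on the boundary, while the linearization along omega is interior.
print(F(x_tilde), J(x_tilde, omega))  # 0.0 -2.0

# Small steps along omega produce points where F is in int dom g (Slater points).
lambdas = [10.0 ** (-k) for k in range(1, 8)]
print(all(in_int_dom(F([x_tilde[0] + lam * omega[0], x_tilde[1] + lam * omega[1]]))
          for lam in lambdas))  # True
```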

Let us prove the reverse implication by contraposition and assume that (QC) does not hold, that is, there exists a point \({\tilde{x}}\in F^{-1}(\mathrm {dom}\,g)\) such that \(\mathrm {dom}\,g\) can be separated from \(J({\tilde{x}},{\mathbb {R}}^n)\). In this case, there exist \(a \in {\mathbb {R}}^{m}\setminus \{0\}\) and \(b\in {\mathbb {R}}\) such that

$$a^Tz + b \le 0,\quad \forall z\in \mathrm {dom}\,g, \qquad a^TJ({\tilde{x}},\omega ) + b \ge 0,\quad \forall \omega \in {\mathbb {R}}^n.$$(46)

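Since \(J({\tilde{x}},0) = F({\tilde{x}}) \in \mathrm {dom}\,g\), it follows that

$$a^TF({\tilde{x}}) + b = 0.$$

By the coordinatewise convexity of F, for every \(i\in \{1,\ldots ,m\}\) one has

$$F_i({\tilde{x}}+\omega ) \ge F_i({\tilde{x}}) + \nabla F_i({\tilde{x}})^T\omega ,\quad \forall \omega \in {\mathbb {R}}^n.$$

We thus have the componentwise inequality

$$F({\tilde{x}}+\omega ) \ge J({\tilde{x}},\omega ),\quad \forall \omega \in {\mathbb {R}}^n.$$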

The monotonicity properties of g thus imply that

As a result, combining Eq. (46) with Eq. (47), one has

which reduces to \(a^T [F({\tilde{x}}+\omega ) - J({\tilde{x}},\omega ) ] \ge 0\) for all \(\omega \in {\mathbb {R}}^n\). Hence, for any \(\omega \in {\mathbb {R}}^n\) one has

$$a^TF({\tilde{x}}+\omega ) + b \ge a^TJ({\tilde{x}},\omega ) + b \ge 0,$$

where for the last inequality, Eq. (46) is used. This inequality, combined with the fact that \(a^T z + b < 0\) for all \(z\in \mathrm {int\ dom}\ g\) (which follows from the first condition in Eq. (46) since \(a\ne 0\)), shows that \(F({\tilde{x}}+\omega )\not \in \text {int dom}\ g\) for all \(\omega \in {\mathbb {R}}^n\). Thus \(F^{-1}(\text {int dom}\,g)= \emptyset \), that is, Assumption 4 does not hold. This provides the reverse implication and the proof is complete. \(\square \)
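The reverse implication rests on the componentwise inequality \(F({\tilde{x}}+\omega ) \ge J({\tilde{x}},\omega )\), a consequence of the convexity of each \(F_i\). The Python sketch below spot-checks it on random directions for a hypothetical convex map \(F:\mathbb {R}^2\to \mathbb {R}^2\) with components \(e^{x_1}+x_2^2\) and \((x_1-x_2)^2+3x_1\), assuming the linearized mapping \(J(x,\omega )=F(x)+\nabla F(x)\,\omega \). The function `min_gap` is our own helper; it returns the smallest observed value of \(F_i(x+\omega )-J_i(x,\omega )\).

```python
import math
import random
from typing import List

Vector = List[float]


def F(x: Vector) -> Vector:
    """Hypothetical map with two convex, smooth components."""
    return [math.exp(x[0]) + x[1] ** 2, (x[0] - x[1]) ** 2 + 3.0 * x[0]]


def jacobian(x: Vector) -> List[Vector]:
    """Rows are the gradients of the two components of F."""
    d = x[0] - x[1]
    return [[math.exp(x[0]), 2.0 * x[1]], [2.0 * d + 3.0, -2.0 * d]]


def J(x: Vector, w: Vector) -> Vector:
    """Linearized mapping J(x, w) = F(x) + jacobian(x) w."""
    Fx, Jx = F(x), jacobian(x)
    return [Fx[i] + Jx[i][0] * w[0] + Jx[i][1] * w[1] for i in range(2)]


def min_gap(x: Vector, trials: int = 1000, seed: int = 0) -> float:
    """Smallest observed value of F_i(x + w) - J_i(x, w) over random directions w."""
    rng = random.Random(seed)
    worst = math.inf
    for _ in range(trials):
        w = [rng.uniform(-3.0, 3.0), rng.uniform(-3.0, 3.0)]
        upper = F([x[0] + w[0], x[1] + w[1]])
        lower = J(x, w)
        worst = min(worst, min(u - l for u, l in zip(upper, lower)))
    return worst


print(min_gap([0.5, -1.0]) >= -1e-9)  # True: the linearization never exceeds F
```

For the second component the gap equals \((\omega _1-\omega _2)^2\) exactly, so only rounding can make it negative; the tolerance absorbs that.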

Appendix B: Proof of Lemma 4

In this section, we present an explicit estimate of the condition number appearing in our complexity result. Let us first introduce some notation. For any nonempty closed set \(D\subset {\mathbb {R}}^m\), we define a signed distance function as

It is worth recalling that, when D is convex, the signed distance function is concave (see [19, p. 154]). We begin with a lemma which describes a monotonicity property of the signed distance function.
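Because the defining formula (48) is not reproduced here, the following Python sketch assumes the usual convention in which the signed distance to the boundary of D is nonnegative on D and negative outside; this is consistent with how \(\mathrm {sdist}\) is used below, e.g. \((1-t)\,\mathrm {sdist}[F(x),\mathrm {bd\ dom}\,g]\ge 0\) when \(F(x)\in \mathrm {dom}\,g\). It computes this quantity for a box, a simple closed convex set chosen for illustration, and checks the concavity inequality along random segments. The helper names are hypothetical.

```python
import math
import random
from typing import Sequence


def sdist_box(z: Sequence[float], lo: Sequence[float], hi: Sequence[float]) -> float:
    """Signed distance from z to the boundary of the box [lo, hi].

    Assumed convention: nonnegative on the box, negative outside.
    """
    if all(l <= z_i <= h for z_i, l, h in zip(z, lo, hi)):
        # Inside: the nearest boundary point lies on the closest face.
        return min(min(z_i - l, h - z_i) for z_i, l, h in zip(z, lo, hi))
    # Outside: the nearest boundary point is the projection onto the box.
    proj = [min(max(z_i, l), h) for z_i, l, h in zip(z, lo, hi)]
    return -math.dist(z, proj)


def worst_concavity_gap(lo, hi, trials: int = 2000, seed: int = 1) -> float:
    """Largest violation of sdist((1-t)a + tb) >= (1-t) sdist(a) + t sdist(b)."""
    rng = random.Random(seed)
    worst = 0.0
    for _ in range(trials):
        a = [rng.uniform(-3.0, 3.0) for _ in lo]
        b = [rng.uniform(-3.0, 3.0) for _ in lo]
        t = rng.random()
        mid = [(1 - t) * a_i + t * b_i for a_i, b_i in zip(a, b)]
        chord = (1 - t) * sdist_box(a, lo, hi) + t * sdist_box(b, lo, hi)
        worst = max(worst, chord - sdist_box(mid, lo, hi))
    return worst


lo, hi = [-1.0, -1.0], [1.0, 1.0]
print(sdist_box([0.0, 0.0], lo, hi), sdist_box([2.0, 0.0], lo, hi))  # 1.0 -1.0
print(worst_concavity_gap(lo, hi) <= 1e-9)  # True
```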

Lemma 5

Assume that \(\mathrm {bd\ dom}\,g \ne \emptyset \). Given any \(z\in \mathrm {dom}\,g \) and any \(d=(d_1,\ldots ,d_m)\in {\mathbb {R}}_+^m\) with \(d_i =0\) whenever \(L_i = 0\), one has

Proof

Fix an arbitrary \(z\in \mathrm {dom}\,g \) and an arbitrary \(d=(d_1,\ldots ,d_m)\in {\mathbb {R}}_+^m\) such that \(d_i = 0\) whenever \(L_i = 0\). If \(z+d \not \in \mathrm {dom}\ g\), Eq. (49) holds by the definition in Eq. (48).

From now on, we suppose \(z+d \in \mathrm {dom}\ g\). Let \({\bar{z}} \in \mathrm {bd}\ \mathrm {dom}\,g \) be a point such that

Then, one has

Since \({\bar{z}}\) lies on the boundary of \(\mathrm {dom}\,g \), it follows that \({\bar{z}}+d \not \in \mathrm {int}\ \mathrm {dom}\,g \) because of the monotonicity property of g in Assumption 1. Hence, by the definition of \(\mathrm {sdist}\) in Eq. (48), we have

Combining this inequality with Eq. (50) completes the proof. \(\square \)

The following lemma shows that one can form a convex combination of the current point x and the Slater point \({\bar{x}}\) given in Assumption 4 that is a Slater point for the current subproblem, with uniform control over the “degree” of qualification.
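A short computation indicates why moving from x toward the Slater point helps. Assuming, as in the proof of Lemma 4 below, that the model is \(H(x,y)=F(x)+\nabla F(x)(y-x)+\frac{\varvec{L}}{2}\Vert y-x\Vert ^2\) componentwise, and setting \(y_t = x+t({\bar{x}}-x)\) with \(t\in (0,1]\), the gradient inequality \(F_i(x)+\nabla F_i(x)^T({\bar{x}}-x)\le F_i({\bar{x}})\) of each convex component and \(t^2\le t\) give, componentwise (a sketch of the type of estimate involved, not a restatement of the lemma):

$$\begin{aligned} H(x,y_t) &= F(x) + t\,\nabla F(x)({\bar{x}}-x) + \frac{t^2\varvec{L}}{2}\Vert {\bar{x}}-x\Vert ^2 \\ &\le (1-t)F(x) + t\,F({\bar{x}}) + \frac{t^2\varvec{L}}{2}\Vert {\bar{x}}-x\Vert ^2 \\ &\le (1-t)F(x) + t\left( F({\bar{x}}) + \frac{\varvec{L}}{2}\Vert {\bar{x}}-x\Vert ^2\right) . \end{aligned}$$

The point \(F({\bar{x}})+\varvec{L}\Vert x-{\bar{x}}\Vert ^2/2\) on the right is the same quantity that appears in inequality (54).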

Lemma 6

Let \({\bar{x}}\) be given as in Assumption 4, let \(x \in F^{-1}(\mathrm {dom}\ g)\), and assume that \(\mathrm {bd\ dom}g \ne \emptyset \). Set

Then,

Proof

Fix an arbitrary \(x\in K\). Then, for any \(t\in (0,1]\) one has

where the last inequality is obtained by applying the coordinatewise convexity of F. Therefore, for any \(t\in (0,1]\), we have

where for (a) we combine Eq. (52) with Lemma 5, for (b) we use the concavity of the signed distance function (see [19, p. 154]), for (c) we use the fact that \((1-t) \mathrm {sdist} [F(x),\mathrm {bd}\ \mathrm {dom}\,g ] \ge 0\), and for (d) we use the concavity of the signed distance function again.

It is easy to verify that \(\gamma ({\bar{x}},x)\in (0,1]\) is the maximizer of \(\delta (t)\) over the interval (0, 1]. We now consider the following inequality.

Inequality (54) holds: either \(F({\bar{x}})+\varvec{L} \Vert x - {\bar{x}}\Vert ^2/2 \in \mathrm {dom}\ g\), in which case the result is trivial, or it does not, in which case the result follows from the definition of the distance as an infimum. If \(\gamma ({\bar{x}},x) = 1\), by its definition, one immediately has

which implies

Substituting \(\gamma ({\bar{x}},x) = 1\) into Eq. (53) yields

where the first inequality is obtained by considering Eq. (55). As a result, Eq. (51) holds true if \(\gamma ({\bar{x}},x) = 1\).

From now on, let us consider \(\gamma ({\bar{x}},x)<1\). In this case, one has

Substituting into Eq. (53) and using (54) leads to

Finally, combining this equation with (53) and (56) completes the proof. \(\square \)

We are now ready to prove Lemma 4.

Proof of Lemma 4

(i) As the function g is \(L_g\)-Lipschitz continuous on its domain, an immediate application of the Cauchy–Schwarz inequality leads to \(\varvec{L}^T\nu \le L_g \Vert \varvec{L}\Vert \) (see also Sect. 3.2.1).

(ii) The claim is trivial if \(\mathrm {bd\ dom}(g) = \emptyset \); hence we assume that \(\mathrm {bd\ dom}(g) \ne \emptyset \), so that Lemmas 5 and 6 apply. Set \(w = x + \gamma ({\bar{x}},x)({\bar{x}} - x)\) with \(\gamma ({\bar{x}},x)\) given as in Lemma 6. By Lemma 6, one has \(w \in \mathrm {dom}(g\circ H(x,\cdot ))\). Then, one obtains

$$\begin{aligned} \frac{\varvec{L}^T\nu }{2}\Vert w - y\Vert ^2 &= [H(x,w) - H(x,y)]^T\nu \\ &\le g\circ H(x,w) - g\circ H(x,y)\\ &\le L_g \Vert H(x,w) - H(x,y)\Vert , \end{aligned}$$(57)

where the equality follows from Eq. (11), the first inequality is obtained by the convexity of g, and the last inequality is due to the assumption that g is \(L_g\)-Lipschitz continuous on its domain. On the other hand, a direct calculation yields

$$\begin{aligned} \Vert H(x,w) - H(x,y)\Vert =&\ \Vert \nabla F(x) (w - y) + \frac{\varvec{L}}{2}\Vert w - x\Vert ^2 - \frac{\varvec{L}}{2}\Vert y - x\Vert ^2 \Vert \\ =&\ \Vert \nabla F(x) (w - y) + \frac{\varvec{L}}{2} ( \Vert w - y\Vert ^2 + 2(w-y)^T(y-x) ) \Vert \\ \le&\ \Vert \nabla F(x)\Vert _{\mathrm{op}}\Vert w-y\Vert + \frac{\Vert \varvec{L}\Vert }{2} \Vert w - y\Vert ^2 + \Vert \varvec{L}\Vert \Vert w-y\Vert \Vert y - x\Vert \\ =&\ \Vert w - y\Vert \left[ \Vert \nabla F(x)\Vert _{\mathrm{op}} + \frac{\Vert \varvec{L}\Vert }{2}\Vert w - y\Vert + \Vert \varvec{L}\Vert \Vert y - x\Vert \right] \\ \le&\ \Vert w - y\Vert \left[ \Vert \nabla F(x)\Vert _{\mathrm{op}} + \frac{\Vert \varvec{L}\Vert }{2}\Vert x - y\Vert \right. \\&\left. \quad + \gamma ({\bar{x}},x)\frac{\Vert \varvec{L}\Vert }{2}\Vert {\bar{x}} - x\Vert + \Vert \varvec{L}\Vert \Vert y - x\Vert \right] \\ \le&\ \Vert w - y\Vert \left[ \Vert \nabla F(x)\Vert _{\mathrm{op}} + \frac{3\Vert \varvec{L}\Vert }{2}\Vert x - y\Vert + \frac{\Vert \varvec{L}\Vert }{2}\Vert {\bar{x}} - x\Vert \right] \end{aligned}$$

where the second inequality uses \(\Vert w - y\Vert \le \Vert x - y\Vert + \gamma ({\bar{x}},x)\Vert {\bar{x}} - x\Vert \) and the last one uses \(\gamma ({\bar{x}},x)\le 1\). Substituting this inequality into Eq. (57) leads to

$$\begin{aligned} \frac{\varvec{L}^T \nu }{2}\Vert H(x,w) - H(x,y)\Vert \le L_g \left[ \Vert \nabla F(x)\Vert _{\mathrm{op}} + \frac{3\Vert \varvec{L}\Vert }{2}\Vert x - y\Vert + \frac{\Vert \varvec{L}\Vert }{2}\Vert {\bar{x}} - x\Vert \right] ^2. \end{aligned}$$(58)

As H(x, y) is on the boundary of \(\mathrm {dom}\,g \), it follows that

$$\begin{aligned} \Vert H(x,w) - H(x,y)\Vert \ge \mathrm {sdist}[H(x,w),\mathrm {bd}\ \mathrm {dom}\,g ]. \end{aligned}$$(59)

Combining this inequality with (58) and Lemma 6 completes the proof. \(\square \)
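The chain of estimates bounding \(\Vert H(x,w) - H(x,y)\Vert \) in part (ii) can be spot-checked numerically. The Python sketch below assumes, as the first line of that chain indicates, that \(H(x,y) = F(x) + \nabla F(x)(y-x) + \frac{\varvec{L}}{2}\Vert y-x\Vert ^2\) componentwise. It draws random data (a Gaussian matrix standing in for \(\nabla F(x)\), a nonnegative vector for \(\varvec{L}\)) and an arbitrary \(\gamma \in (0,1]\) in place of \(\gamma ({\bar{x}},x)\), since the chain only uses \(\gamma \le 1\). The Frobenius norm replaces the operator norm; it is an upper bound, so the tested inequality is slightly weaker. All names are hypothetical.

```python
import math
import random
from typing import List

Vector = List[float]


def norm(v: Vector) -> float:
    return math.sqrt(sum(v_i * v_i for v_i in v))


def sub(u: Vector, v: Vector) -> Vector:
    return [u_i - v_i for u_i, v_i in zip(u, v)]


def H(Fx: Vector, M: List[Vector], L: Vector, x: Vector, y: Vector) -> Vector:
    """Assumed model H(x, y) = F(x) + M (y - x) + (L / 2) ||y - x||^2, M playing grad F(x)."""
    d = sub(y, x)
    half_sq = 0.5 * sum(d_j * d_j for d_j in d)
    return [Fx[i] + sum(M[i][j] * d[j] for j in range(len(d))) + L[i] * half_sq
            for i in range(len(Fx))]


def worst_ratio(m: int = 3, n: int = 4, trials: int = 500, seed: int = 2) -> float:
    """Max over random trials of ||H(x,w) - H(x,y)|| divided by the final bound of the chain."""
    rng = random.Random(seed)
    worst = 0.0
    for _ in range(trials):
        Fx = [rng.gauss(0.0, 1.0) for _ in range(m)]
        M = [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(m)]
        L = [rng.uniform(0.0, 2.0) for _ in range(m)]
        x = [rng.gauss(0.0, 1.0) for _ in range(n)]
        y = [rng.gauss(0.0, 1.0) for _ in range(n)]
        x_bar = [rng.gauss(0.0, 1.0) for _ in range(n)]
        gamma = rng.uniform(1e-3, 1.0)
        w = [x_j + gamma * (xb_j - x_j) for x_j, xb_j in zip(x, x_bar)]
        lhs = norm(sub(H(Fx, M, L, x, w), H(Fx, M, L, x, y)))
        frob = math.sqrt(sum(v * v for row in M for v in row))  # >= operator norm
        nL = norm(L)
        rhs = norm(sub(w, y)) * (frob + 1.5 * nL * norm(sub(x, y)) + 0.5 * nL * norm(sub(x_bar, x)))
        worst = max(worst, lhs / rhs)
    return worst


print(worst_ratio() <= 1.0 + 1e-12)  # True
```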


About this article

Cite this article

Bolte, J., Chen, Z. & Pauwels, E. The multiproximal linearization method for convex composite problems. Math. Program. 182, 1–36 (2020). https://doi.org/10.1007/s10107-019-01382-3


DOI: https://doi.org/10.1007/s10107-019-01382-3

Keywords

- Composite optimization

- Convex optimization

- Complexity

- First order methods

- Proximal Gauss–Newton’s method

- Prox-linear method