Abstract

Background

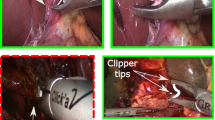

Direct optical trocar insertion is a common procedure in laparoscopic minimally invasive surgery. However, misinterpreting the abdominal wall anatomy during insertion can lead to severe complications. Artificial intelligence has shown promise in surgical endoscopy, particularly in the use of deep learning models to identify anatomical landmarks. This study aimed to integrate a deep learning model with an alarm system algorithm to detect the abdominal wall layers precisely during trocar placement.

Method

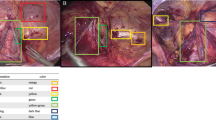

Bounding boxes were annotated and assigned to classes based on the six layers of the abdominal wall: subcutaneous, anterior rectus sheath, rectus muscle, posterior rectus sheath, peritoneum, and abdominal cavity. A YOLOv8-based deep learning detector was trained on the annotated dataset. The model was trained on still images and then run on laparoscopic videos to ensure real-time detection in the operating room. The alarm system was activated upon recognizing the peritoneum and abdominal cavity layers. We assessed the model’s performance using mean average precision (mAP), precision, and recall.
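The alarm rule described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the `Detection` type, the `should_alarm` function, and the 0.5 confidence threshold are hypothetical, and the sketch assumes that detecting either of the two deep layers in a frame is enough to trigger the alarm. Only the layer names come from the text.

```python
from dataclasses import dataclass
from typing import Iterable

# The six abdominal wall layers named in the Method section.
LAYERS = (
    "subcutaneous",
    "anterior rectus sheath",
    "rectus muscle",
    "posterior rectus sheath",
    "peritoneum",
    "abdominal cavity",
)

# Layers whose detection activates the alarm, per the text.
ALARM_LAYERS = frozenset({"peritoneum", "abdominal cavity"})


@dataclass(frozen=True)
class Detection:
    """One detected bounding box in a video frame (hypothetical type)."""
    layer: str
    confidence: float


def should_alarm(detections: Iterable[Detection], min_confidence: float = 0.5) -> bool:
    """Return True if any sufficiently confident detection is an alarm layer.

    The 0.5 threshold is an assumed value, not one reported by the study.
    """
    return any(
        d.layer in ALARM_LAYERS and d.confidence >= min_confidence
        for d in detections
    )


# Example frames: the first shows only superficial layers, the second the peritoneum.
superficial_frame = [Detection("subcutaneous", 0.91), Detection("rectus muscle", 0.84)]
deep_frame = [Detection("posterior rectus sheath", 0.77), Detection("peritoneum", 0.88)]
print(should_alarm(superficial_frame), should_alarm(deep_frame))
```

In a real pipeline the detections would come from the trained detector's per-frame output. A production alarm would probably also require the deep layer to persist over several consecutive frames before sounding, which this sketch leaves out.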

Results

A total of 3600 images were captured from 89 laparoscopic video cases. The proposed model was trained on 3000 images, validated on a set of 200 images, and tested on a separate set of 400 images. On the test set, the model achieved 95.8% mAP, 89.8% precision, and 91.7% recall. The alarm system was validated and accepted by experienced surgeons at our institute.
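As a quick consistency check on the reported split, the short Python sketch below confirms that the three subsets add up to the stated total and computes each subset's share. The variable names are illustrative, and the percentages are derived here rather than reported in the study.

```python
# Image counts as reported in the Results section.
TOTAL_IMAGES = 3600
split = {"train": 3000, "validation": 200, "test": 400}

# The three subsets should account for every captured image.
assert sum(split.values()) == TOTAL_IMAGES

# Share of the dataset in each subset, as a percentage rounded to one decimal.
shares = {name: round(100 * count / TOTAL_IMAGES, 1) for name, count in split.items()}
print(shares)
```

The split is therefore roughly 83% training, 6% validation, and 11% testing.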

Conclusion

We demonstrated that deep learning has the potential to assist surgeons during direct optical trocar insertion. During insertion, the proposed model detects the abdominal wall layers as landmark references in real time. Integrating the model with the alarm system gives surgeons timely reminders to tilt the scope accordingly. The framework therefore has the potential to mitigate complications associated with direct optical trocar placement, thereby enhancing surgical safety and outcomes.

Acknowledgements

We would like to thank the surgical team at Songklanagarind Hospital for their invaluable assistance in establishing the project set-up within the operating room and for their insightful feedback during laparoscopic surgery.

Funding

No funding was provided for this study.

Author information

Contributions

All authors contributed to the drafting of the manuscript.

Ethics declarations

Disclosures

Supakool Jearanai, Siripong Cheewatanakornkul, Piyanun Wangkulangkul, and Wannipa Sae-Lim affirm that they have no conflicts of interest to disclose.

About this article

Cite this article

Jearanai, S., Wangkulangkul, P., Sae-Lim, W. et al. Development of a deep learning model for safe direct optical trocar insertion in minimally invasive surgery: an innovative method to prevent trocar injuries. Surg Endosc 37, 7295–7304 (2023). https://doi.org/10.1007/s00464-023-10309-1

DOI: https://doi.org/10.1007/s00464-023-10309-1