Abstract

Objectives

To evaluate whether two commercial AI software products improve mammography interpretation by novice and experienced radiologists.

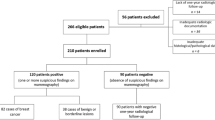

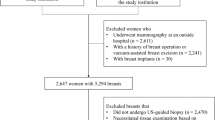

Methods

We compared the performance of two AI software products (AI-1 and AI-2) when used by two experienced and two novice readers to interpret 200 mammographic examinations (80 cancer cases). Two reading sessions were conducted within 4 weeks. The readers rated the likelihood of malignancy (range, 1–7) and the percentage probability of malignancy (range, 0–100%), with and without AI assistance. Differences in AUROC, sensitivity, and specificity were analyzed.
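As an illustration of the three metrics named above, the following is a minimal sketch, not the authors' analysis code. It computes AUROC from ordinal likelihood-of-malignancy ratings using the rank-based (Mann-Whitney) definition, and computes sensitivity and specificity at a cutoff. The function names, the toy ratings, and the cutoff of 4 are assumptions made for illustration only; the abstract does not state which cutoff or estimation method the study used.

```python
from typing import Sequence, Tuple


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUROC as the probability that a randomly chosen cancer case is
    rated higher than a randomly chosen non-cancer case (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def sensitivity_specificity(
    scores: Sequence[float], labels: Sequence[int], cutoff: float
) -> Tuple[float, float]:
    """Call a case positive when its rating is >= cutoff (hypothetical rule)."""
    tp = sum(1 for s, y in zip(scores, labels) if y == 1 and s >= cutoff)
    fn = sum(1 for s, y in zip(scores, labels) if y == 1 and s < cutoff)
    tn = sum(1 for s, y in zip(scores, labels) if y == 0 and s < cutoff)
    fp = sum(1 for s, y in zip(scores, labels) if y == 0 and s >= cutoff)
    return tp / (tp + fn), tn / (tn + fp)


# Toy data, invented for this sketch: 1 = cancer, 0 = no cancer,
# ratings on the 1-7 likelihood-of-malignancy scale.
labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
lom = [7, 6, 4, 2, 5, 3, 2, 1, 1, 1]

area = auroc(lom, labels)
sens, spec = sensitivity_specificity(lom, labels, cutoff=4)
print(f"AUROC={area:.3f} sensitivity={sens:.3f} specificity={spec:.3f}")
```

Running the same functions on ratings collected with and without AI assistance would give the paired metrics whose differences the study analyzed; the significance testing itself is outside the scope of this sketch.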

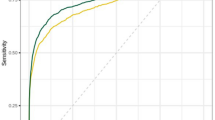

Results

Mean AUROC increased in both novice (0.86 to 0.90 with AI-1 [p = 0.005]; 0.91 with AI-2 [p < 0.001]) and experienced readers (0.87 to 0.92 with AI-1 [p < 0.001]; 0.90 with AI-2 [p = 0.004]). Sensitivity increased from 81.3 to 88.8% with AI-1 (p = 0.027) and to 91.3% with AI-2 (p = 0.005) in novice readers, and from 81.9 to 90.6% with AI-1 (p = 0.001) and to 87.5% with AI-2 (p = 0.016) in experienced readers. Specificity did not decrease significantly in either novice readers (p > 0.999 with both AI-1 and AI-2) or experienced readers (p > 0.999 with AI-1 and p = 0.282 with AI-2). The change in performance did not differ significantly between the two AI software products (p > 0.999).

Conclusion

Commercial AI software improved the diagnostic performance of both novice and experienced readers. The type of AI software used did not significantly impact performance changes. Further validation with a larger number of cases and readers is needed.

Clinical relevance statement

Commercial AI software effectively aided mammography interpretation irrespective of the experience level of human readers.

Key Points

• Mammography interpretation remains challenging and is subject to a wide range of interobserver variability.

• In this multi-reader study, two commercial AI software products improved the sensitivity of mammography interpretation by both novice and experienced readers. The type of AI software used did not significantly impact performance changes.

• Commercial AI software may effectively support mammography interpretation irrespective of the experience level of human readers.

Abbreviations

- AI: Artificial intelligence

- AUROC: Area under the receiver operating characteristics curve

- CAD: Computer-aided detection

- CI: Confidence interval

- FFDM: Full-field digital mammography

- GEE: Generalized estimating equation

- ICC: Intraclass correlation coefficient

- LOM: Likelihood of malignancy

- NPV: Negative predictive value

- PPV: Positive predictive value

- SD: Standard deviation

Acknowledgements

The authors thank Min-Ju Kim from the Department of Clinical Epidemiology and Biostatistics at Asan Medical Center for providing statistical consultation.

Funding

The authors state that this work has not received any funding.

Ethics declarations

Guarantor

The scientific guarantor of this publication is Woo Jung Choi.

Conflict of interest

All authors declare no competing interests.

Statistics and biometry

Min-Ju Kim from the Department of Clinical Epidemiology and Biostatistics at Asan Medical Center kindly provided statistical advice for this manuscript.

Informed consent

Written informed consent was waived by the Institutional Review Board.

Ethical approval

Institutional Review Board approval was obtained (Asan Medical Center, approval no. 2020–0281).

Study subjects or cohorts overlap

No study subjects or cohorts have been previously reported.

Methodology

• retrospective

• observational study

• performed at one institution

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The work originated at Asan Medical Center, University of Ulsan College of Medicine, 88, Olympic-ro, 43-gil, Songpa-gu, Seoul 05505, South Korea.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Kim, H.J., Choi, W.J., Gwon, H.Y. et al. Improving mammography interpretation for both novice and experienced readers: a comparative study of two commercial artificial intelligence software. Eur Radiol (2023). https://doi.org/10.1007/s00330-023-10422-8

DOI: https://doi.org/10.1007/s00330-023-10422-8