Abstract

Objectives

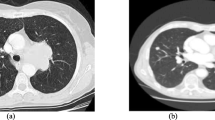

With the popularization of chest computed tomography (CT) screening, more sub-centimeter (≤ 1 cm) pulmonary nodules (SCPNs) require further diagnostic workup. This represents an important opportunity to optimize the SCPN management algorithm and avoid a “one-size-fits-all” approach. One critical problem is how to learn the discriminative multi-view characteristics and the unique context of each SCPN.

Methods

Here, we propose a multi-view coupled self-attention module (MVCS) that captures the global spatial context of the CT image by modeling spatial and dimensional correlations sequentially. Compared with existing self-attention methods, MVCS consumes less memory and has lower computational complexity, uncovers dimension correlations that previous methods did not capture, and is easy to integrate with other frameworks.
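To make the idea of modeling spatial and dimensional correlations one after the other more concrete, the following is a minimal, generic sketch. It is not the MVCS module itself, whose internal design is not described in this abstract. It assumes a feature map flattened to N positions by C channels, a plain dot-product attention over positions followed by a dot-product attention over channels, and residual connections; all function names are hypothetical.

```python
import math

def softmax_rows(m):
    """Row-wise softmax of a list-of-lists matrix."""
    out = []
    for row in m:
        peak = max(row)  # subtract the row maximum for numerical stability
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out

def matmul(a, b):
    """Plain matrix product of two list-of-lists matrices."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def spatial_attention(x):
    """Each position attends over all positions: softmax(X X^T / sqrt(C)) X."""
    scale = math.sqrt(len(x[0]))
    scores = [[v / scale for v in row] for row in matmul(x, transpose(x))]
    return matmul(softmax_rows(scores), x)

def channel_attention(x):
    """Each channel attends over all channels: X softmax(X^T X / sqrt(N))^T."""
    scale = math.sqrt(len(x))
    scores = [[v / scale for v in row] for row in matmul(transpose(x), x)]
    return matmul(x, transpose(softmax_rows(scores)))

def sequential_attention(x):
    """Spatial step first, then channel step, each added back as a residual."""
    s = spatial_attention(x)
    x = [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(x, s)]
    c = channel_attention(x)
    return [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(x, c)]

# Toy input (assumption): 4 flattened positions, 3 feature channels.
tokens = [[0.1, 0.2, 0.3],
          [0.0, 1.0, 0.5],
          [0.9, 0.1, 0.4],
          [0.3, 0.3, 0.3]]
out = sequential_attention(tokens)  # same shape as the input: 4 x 3
```

Because the output keeps the input shape, a block of this kind can be dropped between existing layers, which is one plausible reading of "easy to integrate with other frameworks".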

Results

In total, the public LUNA16 dataset from LIDC-IDRI, 1319 SCPNs from 1069 patients presenting to a major referral center, and 160 SCPNs from 137 patients from three other major centers were analyzed to pre-train, train, and validate the model. Experimental results showed that the model outperforms state-of-the-art models in accuracy and stability and is comparable to human experts in classifying precancerous lesions and invasive adenocarcinoma. We also provide a fusion MVCS network (MVCSN) that combines the CT image with the clinical characteristics and radiographic features of patients.
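One common way to combine an image representation with tabular patient features is to concatenate the two vectors and pass them to a shared output layer. The sketch below illustrates that pattern only. How the fusion MVCSN actually merges its inputs is not stated in this abstract, and the function name, feature values, and weights are all hypothetical placeholders rather than data from the study.

```python
import math

def fuse_and_score(image_embedding, clinical_features, weights, bias):
    """Concatenate both feature vectors and apply a logistic output layer."""
    fused = list(image_embedding) + list(clinical_features)
    if len(fused) != len(weights):
        raise ValueError("weights must match the fused feature length")
    logit = sum(w * f for w, f in zip(weights, fused)) + bias
    return 1.0 / (1.0 + math.exp(-logit))

# Hypothetical inputs: placeholder values, not measurements from the paper.
image_embedding = [0.8, -0.2, 0.5]     # e.g. pooled output of an image network
clinical_features = [0.6, 1.0]         # e.g. scaled clinical or radiographic variables
weights = [0.5, -0.3, 0.2, 0.7, 0.4]   # would be learned during training
probability = fuse_and_score(image_embedding, clinical_features, weights, bias=-0.5)
```

In practice the weights would be trained jointly with, or on top of, the image network. The sketch only shows where the two information sources meet.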

Conclusion

This tool may ultimately help expedite resection of malignant SCPNs and avoid over-diagnosis of benign ones, resulting in improved management outcomes.

Clinical relevance statement

In the diagnosis of sub-centimeter lung adenocarcinoma, fusion MVCSN can help doctors improve work efficiency and guide their treatment decisions to a certain extent.

Key Points

• Advances in computed tomography (CT) not only increase the number of nodules detected but also mean that the nodules identified are smaller, such as sub-centimeter pulmonary nodules (SCPNs).

• We propose a multi-view coupled self-attention module (MVCS) that models spatial and dimensional correlations sequentially to learn global spatial contexts and performs better than other attention mechanisms.

• MVCS requires less memory and computation than existing self-attention methods when dealing with 3D medical image data. Additionally, it reaches promising accuracy for SCPN malignancy evaluation and has a lower training cost than other models.
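The memory concern with self-attention on 3D data can be illustrated with simple counting. The sketch below compares the size of one attention map when every voxel attends to every other voxel against two cheaper layouts: attention restricted to lines along each axis, and attention between channels. These layouts are generic examples chosen for illustration, not a description of how MVCS reduces cost. The feature-map size, channel count, and float32 storage are assumptions.

```python
def full_attention_entries(depth, height, width):
    """Entries in one attention map when every voxel attends to every voxel."""
    n = depth * height * width
    return n * n

def axis_attention_entries(depth, height, width):
    """Entries when each voxel attends only along its own D, H, and W lines."""
    n = depth * height * width
    return n * (depth + height + width)

def channel_attention_entries(channels):
    """Entries in a channel-by-channel attention map."""
    return channels * channels

def to_mib(entries, bytes_per_entry=4):
    """Memory in MiB, assuming float32 entries by default."""
    return entries * bytes_per_entry / 2**20

d = h = w = 32  # hypothetical feature-map size
full = full_attention_entries(d, h, w)
axis = axis_attention_entries(d, h, w)
print(f"full 3D attention:   {full:,} entries, {to_mib(full):,.0f} MiB")
print(f"axis-wise attention: {axis:,} entries, {to_mib(axis):,.0f} MiB")
print(f"channel attention (64 channels): {channel_attention_entries(64):,} entries")
```

For a 32 × 32 × 32 feature map, the full map holds 2^30 entries (4096 MiB in float32), while the axis-wise layout holds about 3.1 million entries (12 MiB). The gap widens quickly as the volume grows because the full map scales with the square of the voxel count.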

Graphical abstract

Abbreviations

- AAH: Atypical adenomatous hyperplasia
- ADC: Invasive adenocarcinoma
- AIS: Adenocarcinoma in situ
- CBAM: Convolutional Block Attention Module
- CT: Computed tomography
- GGO: Ground-glass opacity
- MIA: Minimally invasive adenocarcinoma
- MVCS: Multi-View Coupled Self-Attention module
- SCPNs: Sub-centimeter pulmonary nodules
- SD: Standard deviation
- SE: Squeeze-and-Excitation


Acknowledgements

We thank our colleagues in the departments of radiology and pathology for their detailed diagnostic reports. We also thank Editage (www.editage.cn) for English language editing.

Funding

This work was supported by the National Natural Science Foundation of China (81872510); Guangdong Provincial People's Hospital Young Talent Project (GDPPHYTP201902); High-level Hospital Construction Project (DFJH201801); GDPH Scientific Research Funds for Leading Medical Talents and Distinguished Young Scholars in Guangdong Province (No. KJ012019449); Guangdong Basic and Applied Basic Research Foundation (No. 2019B1515130002); 2021 Maoming Science and Technology Special Fund Project (No. 2021186); Guangdong Medical Research Fund (B2022051); and Guangdong Provincial Key Laboratory of Artificial Intelligence in Medical Image Analysis and Application (No. 2022B1212010011).

Ethics declarations

Guarantor

The scientific guarantor of this publication is Wen-Zhao Zhong.

Conflict of interest

The authors of this manuscript declare no relationships with any companies whose products or services may be related to the subject matter of the article.

Statistics and biometry

No complex statistical methods were necessary for this paper.

Informed consent

Written informed consent was obtained from all subjects (patients) in this study.

Ethical approval

Institutional Review Board approval was obtained.

All procedures involving collection of tissue were in accordance with the ethical standards of the institutional and/or national research committee and with the 1964 Helsinki Declaration and its later amendments or comparable ethical standards. This study was approved by the ethics committees of Guangdong Provincial People’s Hospital, the Third Affiliated Hospital of Sun Yat-sen University, Maoming City People’s Hospital, and Zhongshan City People’s Hospital. Written informed consent was obtained from all individual participants or their guardians.

Study subjects or cohorts overlap

No study subjects or cohorts have been previously reported.

Methodology

• retrospective

• diagnostic or prognostic study

• multicenter study

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Below is the link to the electronic supplementary material.

330_2023_10026_MOESM1_ESM.pdf

Supplementary file1 (PDF 327 kb) Figure S1. Overview of the proposed fusion multi-view coupled self-attention network (Fusion MVCSN).

About this article

Cite this article

Yang, X., Chu, XP., Huang, S. et al. A novel image deep learning–based sub-centimeter pulmonary nodule management algorithm to expedite resection of the malignant and avoid over-diagnosis of the benign. Eur Radiol 34, 2048–2061 (2024). https://doi.org/10.1007/s00330-023-10026-2

DOI: https://doi.org/10.1007/s00330-023-10026-2