Abstract

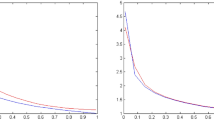

In the present article, we derive direct approximation results for the family of exponential sampling type neural network operators. We prove a Voronovskaja-type convergence theorem for these operators. Further, we establish Jackson-type inequalities for the order of approximation of this family of operators, using the logarithmic modulus of continuity of the functions involved and of their higher derivatives. To improve the order of convergence, we provide a constructive mechanism based on linear combinations of these operators. Finally, we discuss a few numerical examples based on the presented theory.
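For orientation, the logarithmic modulus of continuity mentioned above is usually defined in the Mellin setting as follows. This is a sketch of the standard convention, assumed here rather than quoted from the article; the symbols \(\omega\), \(f\), \(\delta\), \(u\) and \(v\) are illustrative notation.

```latex
% Assumed standard definition (Mellin setting), for f bounded and continuous on \mathbb{R}^+:
\[
  \omega(f,\delta) := \sup\bigl\{\, |f(u)-f(v)| \;:\; u,v\in\mathbb{R}^+,\ |\log u-\log v|\le\delta \,\bigr\},
  \qquad \delta>0 .
\]
% If f is log-uniformly continuous, then \omega(f,\delta)\to 0 as \delta\to 0^+.
```

Under this convention the distance between sample points is measured by \(|\log u-\log v|\) rather than \(|u-v|\), which matches the exponentially spaced nodes used in exponential sampling.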

Data Availability

Data sharing is not applicable to this article as no data sets were generated or analyzed during the current study.

Acknowledgements

The author is thankful to the anonymous reviewers for a careful reading and making valuable suggestions leading to a better presentation of the manuscript.

Funding

The author would like to acknowledge the financial support through project no. CRG/2019/002412 funded by the Department of Science and Technology, Government of India.

Ethics declarations

Conflict of interest

The author has no conflicts of interest to declare that are relevant to the content of this article.

About this article

Cite this article

Bajpeyi, S. Order of Approximation for Exponential Sampling Type Neural Network Operators. Results Math 78, 99 (2023). https://doi.org/10.1007/s00025-023-01879-6

DOI: https://doi.org/10.1007/s00025-023-01879-6

Keywords

- Exponential sampling

- Neural network operators

- Order of convergence

- Mellin transform

- Jackson type inequalities