Abstract

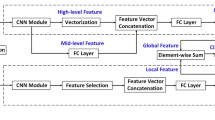

Accurately learning facial expression recognition (FER) features with convolutional neural networks (CNNs) is non-trivial because of significant intra-class variability, inter-class similarity, and the ambiguity of the expressions themselves. Deep metric learning (DML) methods, such as the joint optimization of the center loss and the softmax loss, have been adopted by many FER methods to improve the discriminative power of expression recognition models. However, supervising all feature elements equally with DML methods may also supervise irrelevant features, which ultimately reduces the generalization ability of the learning algorithm. We propose the Attentive Cascaded Network (ACD) method, which enhances discriminative power by adaptively selecting a subset of important feature elements. The proposed ACD integrates multiple feature extractors with a smooth center loss to extract discriminative features. The estimated weights are adapted to a sparse formulation of the center loss, so that intra-class compactness and inter-class separation are achieved selectively for the relevant information in the embedding space. The proposed ACD approach is superior to state-of-the-art methods.
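To make the idea of weighting the center loss per feature element concrete, the following is a minimal sketch in plain Python. It is not the paper's smooth center loss, whose exact form is not given in this abstract; it shows the generic pattern of a softmax cross-entropy term plus a center loss in which each embedding element is scaled by a non-negative weight. The function names (`softmax_cross_entropy`, `weighted_center_loss`, `update_centers`, `joint_loss`), the balancing factor `lam`, and the center learning rate `alpha` are hypothetical. The weights are simply passed in here, standing in for the output of an attention module. The center update follows the rule of Wen et al. (ECCV 2016).

```python
import math
from typing import List, Sequence

Vector = List[float]


def softmax_cross_entropy(logits: Sequence[float], label: int) -> float:
    """Numerically stable cross-entropy of one sample's logits against its label."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def weighted_center_loss(features: Sequence[Vector], labels: Sequence[int],
                         centers: Sequence[Vector], weights: Sequence[Vector]) -> float:
    """Hypothetical element-weighted center loss, averaged over the batch.

    weights[i][d] >= 0 scales the squared distance of element d of sample i
    to its class center; a zero weight removes that element from the penalty
    (a sparse selection). With all weights equal to 1 this is the standard
    center loss 0.5 * ||x_i - c_{y_i}||^2, averaged over samples.
    """
    total = 0.0
    for x, y, w in zip(features, labels, weights):
        c = centers[y]
        total += 0.5 * sum(wd * (xd - cd) ** 2 for xd, cd, wd in zip(x, c, w))
    return total / len(features)


def update_centers(features: Sequence[Vector], labels: Sequence[int],
                   centers: Sequence[Vector], alpha: float = 0.5) -> List[Vector]:
    """Center update of Wen et al.: c_j <- c_j - alpha * sum(c_j - x_i) / (1 + n_j)."""
    new_centers = [list(c) for c in centers]
    for j, c in enumerate(centers):
        members = [x for x, y in zip(features, labels) if y == j]
        if not members:
            continue  # classes absent from the batch keep their center
        denom = 1 + len(members)
        for d in range(len(c)):
            delta = sum(c[d] - x[d] for x in members) / denom
            new_centers[j][d] = c[d] - alpha * delta
    return new_centers


def joint_loss(logits: Sequence[Sequence[float]], features: Sequence[Vector],
               labels: Sequence[int], centers: Sequence[Vector],
               weights: Sequence[Vector], lam: float = 0.01) -> float:
    """Mean softmax cross-entropy plus lam times the weighted center loss."""
    ce = sum(softmax_cross_entropy(z, y) for z, y in zip(logits, labels)) / len(labels)
    return ce + lam * weighted_center_loss(features, labels, centers, weights)


if __name__ == "__main__":
    feats = [[1.0, 2.0], [3.0, 0.0]]
    labs = [0, 1]
    cents = [[0.0, 0.0], [1.0, 1.0]]
    dense = [[1.0, 1.0], [1.0, 1.0]]
    sparse = [[1.0, 0.0], [0.0, 1.0]]  # each sample keeps only one element
    print(weighted_center_loss(feats, labs, cents, dense))   # 2.5
    print(weighted_center_loss(feats, labs, cents, sparse))  # 0.5
    print(update_centers(feats, labs, cents))                # [[0.25, 0.5], [1.5, 0.75]]
```

Zeroing a weight lets the compactness term ignore that feature element for that sample, while the cross-entropy term still acts on the full logits; this is one simple way to read "selectively" achieving compactness, under the assumptions stated above.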

The authors would like to acknowledge the support from the National Natural Science Foundation of China (52075530), the AiBle project co-financed by the European Regional Development Fund, and the Zhejiang Provincial Natural Science Foundation of China (LQ23F030001).

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Chen, Y., Liu, Z., Wang, X., Xue, S., Yu, J., Ju, Z. (2023). Combating Label Ambiguity with Smooth Learning for Facial Expression Recognition. In: Yang, H., et al. Intelligent Robotics and Applications. ICIRA 2023. Lecture Notes in Computer Science, vol 14268. Springer, Singapore. https://doi.org/10.1007/978-981-99-6486-4_11

DOI: https://doi.org/10.1007/978-981-99-6486-4_11

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-6485-7

Online ISBN: 978-981-99-6486-4

eBook Packages: Computer Science, Computer Science (R0)