Abstract

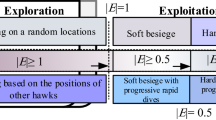

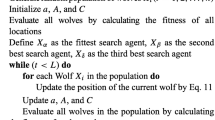

Feature selection is a preprocessing step that aims to eliminate features that may degrade the performance of machine learning techniques. Such degradation arises when a dataset contains many irrelevant and/or redundant features. In this chapter, a binary variant of the recent Harris hawks optimizer (HHO) is proposed to boost the efficacy of wrapper-based feature selection techniques. HHO is a fast and efficient swarm-based optimizer with several simple but effective exploratory and exploitative mechanisms (Lévy flight, greedy selection, etc.) and a dynamic structure. However, it was originally designed for continuous search spaces, so a binary HHO is proposed here to deal with binary feature spaces. The binary HHO is validated on a special type of feature selection dataset: high-dimensional data with a very large number of features but a low number of samples. Various experiments and comparisons reveal the improved stability of HHO in dealing with this type of dataset.
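To make the idea of binarizing a continuous optimizer more concrete, the following is a minimal sketch and not the chapter's actual method. The abstract does not state which transfer function, classifier, or fitness weighting the binary HHO uses, so everything here is an assumption for illustration: an S-shaped (sigmoid) transfer function of the kind commonly used in binary swarm optimizers, a leave-one-out 1-nearest-neighbour classifier as the wrapped learner, and a weight `alpha = 0.99` balancing error rate against subset size. All function names are hypothetical.

```python
import math
import random


def s_shaped_transfer(x):
    """Map a continuous position component to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def binarize(position, rng):
    """Turn a continuous position vector into a 0/1 feature mask."""
    return [1 if rng.random() < s_shaped_transfer(x) else 0 for x in position]


def loo_1nn_error(samples, labels, mask):
    """Leave-one-out error of a 1-NN classifier restricted to the selected features."""
    selected = [j for j, bit in enumerate(mask) if bit]
    if not selected:
        return 1.0  # assumption: an empty subset is treated as the worst case
    errors = 0
    for i, x in enumerate(samples):
        best_dist, best_label = float("inf"), None
        for k, y in enumerate(samples):
            if k == i:
                continue
            dist = sum((x[j] - y[j]) ** 2 for j in selected)
            if dist < best_dist:
                best_dist, best_label = dist, labels[k]
        errors += best_label != labels[i]
    return errors / len(samples)


def wrapper_fitness(samples, labels, mask, alpha=0.99):
    """Weighted sum of error rate and fraction of features kept (lower is better)."""
    ratio = sum(mask) / len(mask)
    return alpha * loo_1nn_error(samples, labels, mask) + (1 - alpha) * ratio


# Toy data (invented): feature 0 separates the classes, features 1 and 2 are noise.
samples = [[0.1, 5.0, 2.0], [0.2, 1.0, 9.0], [0.9, 4.8, 2.1], [1.0, 1.2, 8.7]]
labels = [0, 0, 1, 1]

rng = random.Random(0)
# A continuous "hawk position" that favours feature 0 and disfavours the rest.
mask = binarize([2.0, -2.0, -2.0], rng)
score = wrapper_fitness(samples, labels, mask)
```

In a full optimizer, each hawk's continuous position would be updated by the HHO rules, passed through `binarize`, and scored with `wrapper_fitness`. On the toy data, the mask `[1, 0, 0]` scores better than keeping all three features, because the noise features mislead the nearest-neighbour distance.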


Notes

- 1. The code of the HHO method can be publicly downloaded from: http://www.alimirjalili.com/HHO.html and http://www.evo-ml.com/2019/03/02/hho.

- 2. Please visit these home pages, which are publicly devoted to the HHO algorithm: http://www.alimirjalili.com/HHO.html and http://www.evo-ml.com/2019/03/02/hho.

- 3.

- 4. Please refer to https://www.nature.com/articles/d41586-019-00874-8.



Copyright information

© 2020 Springer Nature Singapore Pte Ltd.

About this chapter

Cite this chapter

Thaher, T., Heidari, A.A., Mafarja, M., Dong, J.S., Mirjalili, S. (2020). Binary Harris Hawks Optimizer for High-Dimensional, Low Sample Size Feature Selection. In: Mirjalili, S., Faris, H., Aljarah, I. (eds) Evolutionary Machine Learning Techniques. Algorithms for Intelligent Systems. Springer, Singapore. https://doi.org/10.1007/978-981-32-9990-0_12


DOI: https://doi.org/10.1007/978-981-32-9990-0_12

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-32-9989-4

Online ISBN: 978-981-32-9990-0

eBook Packages: Intelligent Technologies and Robotics; Intelligent Technologies and Robotics (R0)