Abstract

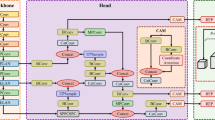

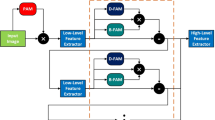

Object detection is a popular research topic in maritime computer vision, and most current work adopts region-based models built on convolutional neural networks (CNNs). The Swin-Transformer offers a new and effective alternative: its hierarchical structure and attention-based blocks compensate for the difficulty CNNs have in capturing global features. In this paper, we propose a method for applying the Swin-Transformer to maritime object detection and obtain significantly improved results on the well-known SeaShips dataset. Experiments demonstrate that the Swin-Transformer, after pre-training and fine-tuning, reaches a detection mean average precision of 96.61%, outperforming the CNN-based detectors it was compared against. Furthermore, the Swin-Transformer captures the features of irregular ship shapes against complex backgrounds, and its detection of general cargo ships and passenger ships is especially strong.
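For readers unfamiliar with the headline metric, the following is a minimal sketch of how a mean average precision figure can be computed. The abstract does not state the evaluation protocol, so the all-point interpolation used here and the function names `average_precision` and `mean_average_precision` are illustrative assumptions. The sketch also assumes that each detection has already been matched to ground truth (for example by an IoU threshold) and marked as a true or false positive.

```python
from typing import Dict, List, Tuple

Detection = Tuple[float, bool]  # (confidence score, is_true_positive)


def average_precision(detections: List[Detection], num_gt: int) -> float:
    """Area under the precision-recall curve for a single class.

    Assumption: IoU matching against ground truth was done beforehand,
    so each detection already carries a true/false-positive flag.
    """
    if num_gt == 0:
        return 0.0
    # Rank detections from most to least confident.
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    precisions: List[float] = []
    recalls: List[float] = []
    tp = fp = 0
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_gt)
    # All-point interpolation: make precision non-increasing in recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Sum precision times each increment in recall.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(per_class: Dict[str, Tuple[List[Detection], int]]) -> float:
    """Unweighted mean of the per-class average precisions."""
    aps = [average_precision(dets, n_gt) for dets, n_gt in per_class.values()]
    return sum(aps) / len(aps)


# Toy, made-up data for two hypothetical classes (not from the paper).
toy = {
    "general cargo ship": ([(0.9, True), (0.8, False), (0.7, True)], 2),
    "passenger ship": ([(0.9, True), (0.8, True)], 2),
}
toy_map = mean_average_precision(toy)
```

Published benchmarks differ in IoU threshold and interpolation scheme, so a number produced this way is only comparable to results computed under the same convention.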

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Sun, W., Gao, X. (2022). Object Detection in Maritime Scenarios Based on Swin-Transformer. In: Shmaliy, Y.S., Abdelnaby Zekry, A. (eds) 6th International Technical Conference on Advances in Computing, Control and Industrial Engineering (CCIE 2021). Lecture Notes in Electrical Engineering, vol 920. Springer, Singapore. https://doi.org/10.1007/978-981-19-3927-3_77

DOI: https://doi.org/10.1007/978-981-19-3927-3_77

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-3926-6

Online ISBN: 978-981-19-3927-3

eBook Packages: Engineering, Engineering (R0)